There is a quarterly review going on and the AI line item on the cloud bill is up forty percent over plan. The model is the same. The use case is the same. The volume is up, the context windows have grown, and nobody named an owner for cost shape. The CFO wants a number; engineering wants a quarter to fix it.

This is more than a cost-tracking gap. It is a failure of AI optimization discipline.

AI Velocity Blueprint

Measure and multiply engineering velocity using AI-powered diagnostics and sprint-aligned teams.

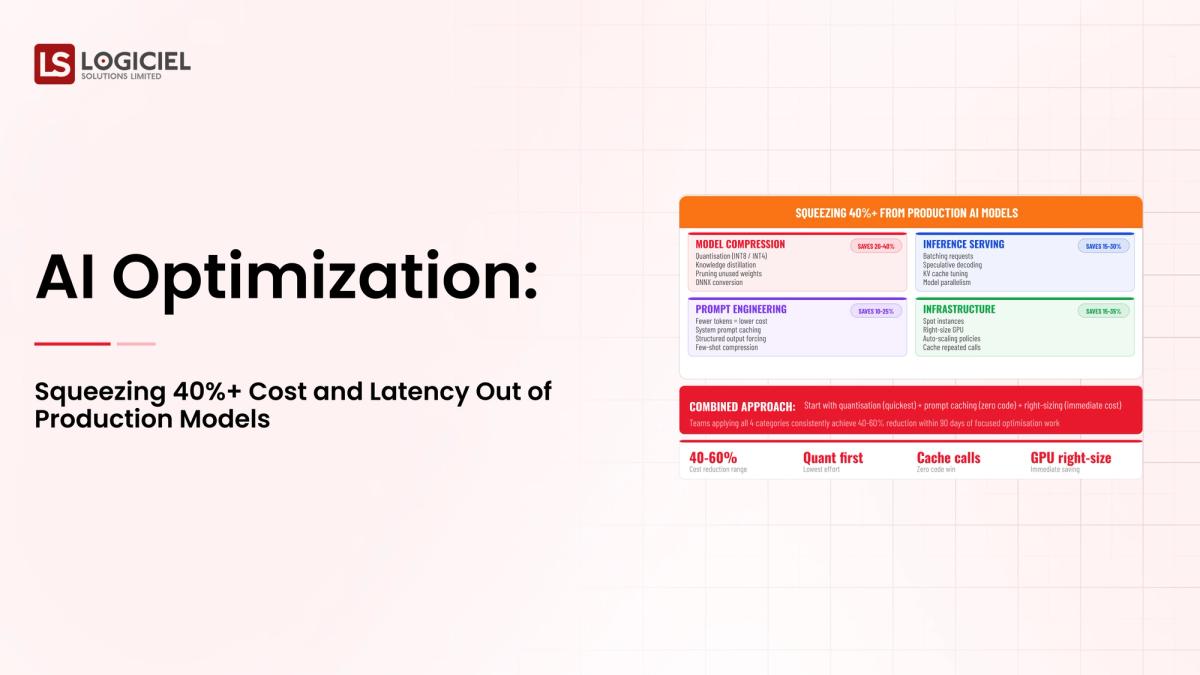

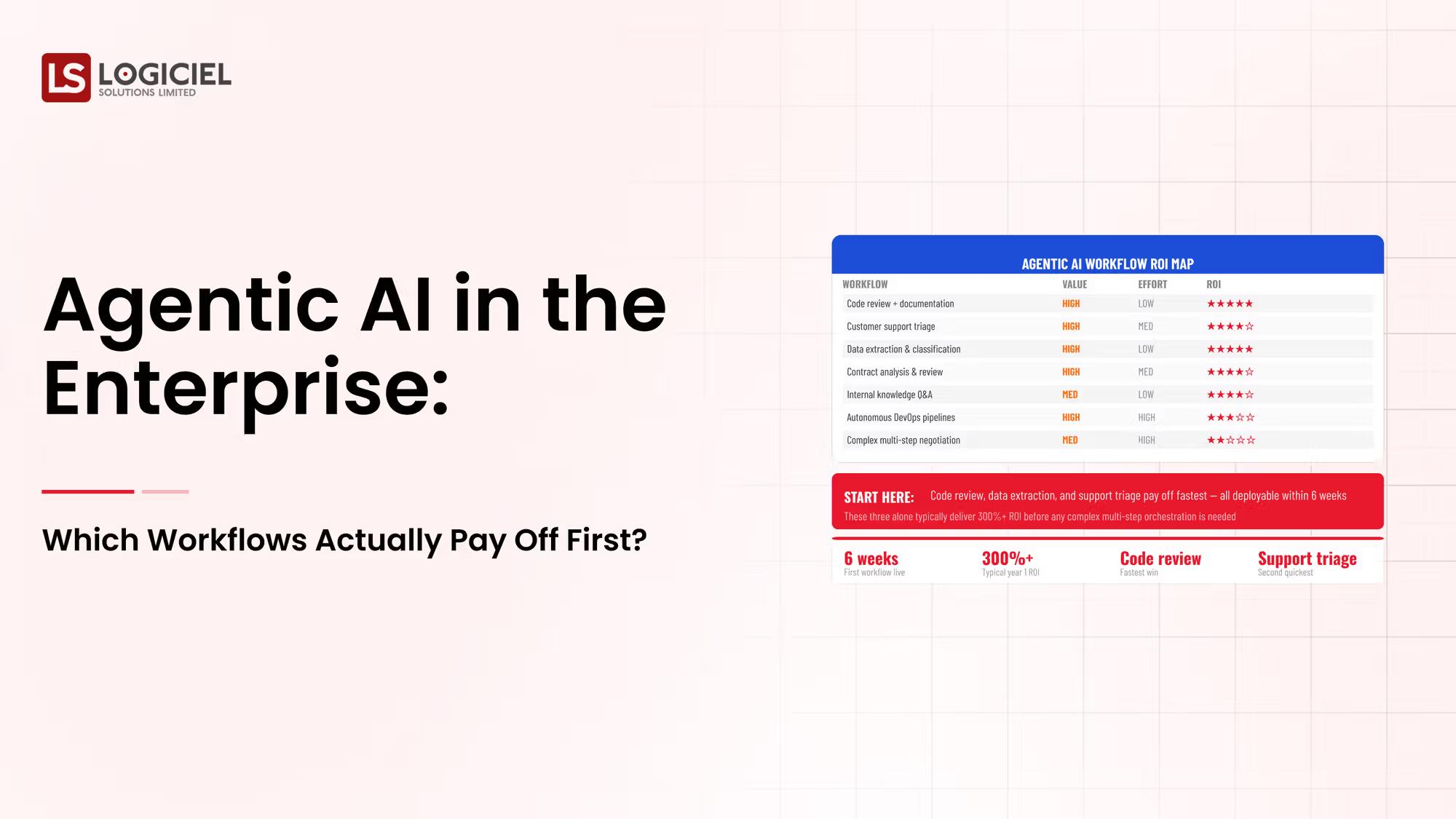

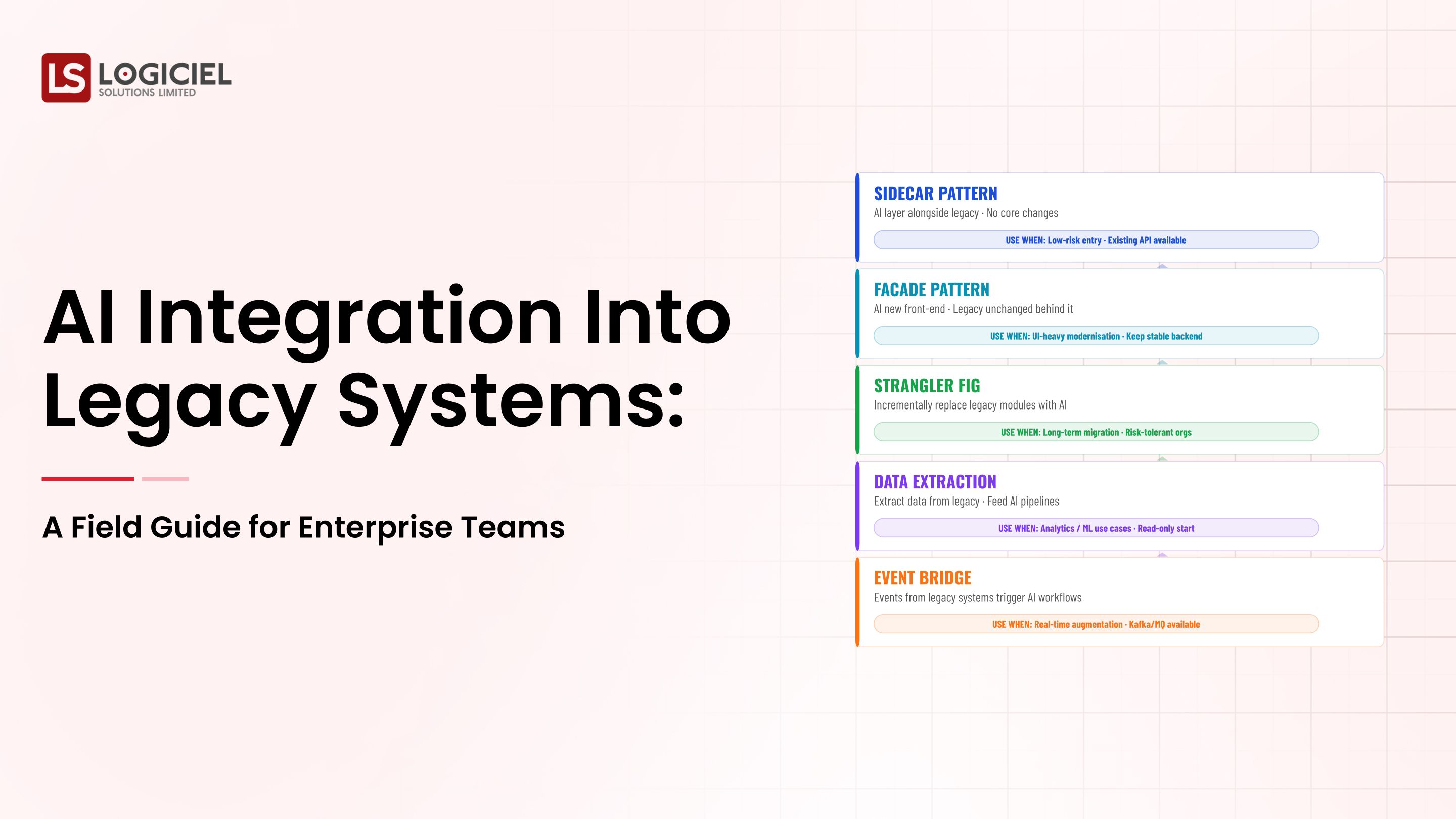

A modern AI optimization program is not a one-off rewrite. It is a layered set of levers across model selection, prompt design, retrieval shape, caching, batching, and routing, plus the operating cadence that keeps the gains in place.

However, many teams treat optimization as a project that happens after launch, and discover that retrofitting it is harder than building it in.

If you are a VP Engineering and are responsible for building or scaling your AI optimization program, the intent of this article is:

- Define what AI optimization actually means in production

- Walk through the cost and latency levers that move the needle

- Lay out the operating cadence that keeps gains from eroding

To do that, let's start with the basics.

What Is AI Optimization? The Basic Definition

At a high level,AI optimization is the discipline of reducing cost and latency in production AI systems while keeping quality inside an agreed envelope.

To compare:

If model selection is choosing the right car, AI optimization is the work of tuning the engine, picking the route, and learning to drive efficiently. The car alone does not deliver the savings.

Why Is AI Optimization Necessary?

Issues that AI Optimization addresses or resolves:

- Bringing AI cost shape back to plan as usage scales

- Meeting latency targets that the cloud round-trip alone cannot hit

- Sustaining gains over time so they do not erode silently

Resolved Issues by AI Optimization

- Surfaces the levers that actually move cost and latency

- Decouples quality from cost so tradeoffs are explicit

- Builds the cost dashboard the CFO needs before they ask

Core Components of AI Optimization

- Model selection across a tier ladder (small, medium, frontier)

- Prompt and context optimization including templating and caching

- Retrieval optimization (indexing, reranking, freshness budgets)

- Batching and request shaping at the gateway

- Tier routing that picks the cheapest sufficient model per request

Modern AI Optimization Tools

- Model gateways like LiteLLM, Portkey, and Vellum for routing and tiering

- Vector DBs (Pinecone, Weaviate, Qdrant, pgvector) with reranker pipelines

- Prompt caching and KV-cache reuse via vLLM and TGI

- FinOps platforms (Cloudability, Apptio, Kubecost) extended for AI

- Eval and quality monitors (LangSmith, Arize) to bound the optimization tradeoff

These tools are the building blocks; the discipline of using them on a cadence is the harder part.

Other Core Issues They Will Solve

- Provides defensible unit economics for board and CFO conversations

- Reduces vendor lock-in by enabling tier substitution at runtime

- Improves user experience through latency wins that compound across features

In Summary: AI optimization concepts are the discipline of squeezing cost and latency out of production AI without sacrificing the quality envelope.

Importance of AI Optimization in 2026

AI optimization has shifted from a nice-to-have to a board-level concern. Four reasons explain why it matters now.

1. Cost is the limiting factor for AI program scale.

Programs that cannot bring cost shape under control hit a ceiling that no model upgrade fixes.

2. Latency budgets keep tightening.

User-facing AI features have latency expectations measured in seconds, not minutes. Optimization is how you meet them.

3. Vendor pricing is volatile.

Per-token, per-request, and per-minute pricing has shifted multiple times in the last year. Optimization protects your unit economics from vendor moves.

4. Sustainability is becoming a board topic.

AI workloads are energy-intensive. Optimization reduces footprint as well as cost; both matter to ESG-aware boards.

Traditional vs. Modern AI Optimization Concepts

- Single-model deployment vs. tier ladder with runtime routing

- Static prompts vs. cached and templated prompts with retrieval discipline

- Annual cost reviews vs. weekly optimization cadence

- Quality measured at launch vs. quality monitored alongside cost continuously

In summary: AI optimization is the operating discipline that lets AI programs scale without breaking the budget.

Details About the Core Components of AI Optimization: What Are You Designing?

Let's go through each layer.

1. Model Tier Layer

Where you decide which model handles which class of request.

Tier decisions:

- Small models for simple, high-volume tasks

- Medium models for balanced quality-cost

- Frontier models reserved for complex or differentiating use

2. Prompt and Context Layer

Most quick wins live here.

Levers:

- Template prompts to enable caching

- Trim context to the minimum useful window

- Use system prompt caching where supported

3. Retrieval Layer

Right context beats more context.

Retrieval levers:

- Index design and chunking strategy

- Reranker pipelines for relevance

- Freshness budgets that match the use case

4. Request Shaping Layer

How requests reach the model.

Shaping levers:

- Batching where latency budgets allow

- Streaming responses to reduce perceived latency

- Backpressure under load to protect tail latency

5. Operating Cadence Layer

What keeps the gains from eroding.

Cadence components:

- Weekly cost and latency review

- Per-feature unit economics dashboard

- Quarterly tier ladder review and re-routing

Benefits Gained from Tier Routing and Prompt Caching

- Per-request cost reductions that compound across features

- Lower latency for the simple-but-frequent requests

- Defensible budget conversations with finance

How It All Works Together

Requests arrive at the gateway, get classified by complexity, and are routed to the cheapest sufficient model in the tier ladder. Prompts are templated and cached. Retrieval is bounded by freshness budget and reranker. The eval harness monitors quality alongside cost. The operating cadence reviews the dashboard weekly and adjusts levers when usage patterns shift.

Common Misconception

AI optimization is a one-time rewrite that delivers savings.

Optimization is a continuous practice. The first pass delivers the largest gains; the operating cadence keeps them in place.

Key Takeaway: Each layer has its own levers. Programs that pull on one layer and ignore the others see partial gains that erode quickly.

Real-World AI Optimization in Action

Let's take a look at how ai optimization operates with a real-world example.

We worked with a SaaS company whose AI feature was running thirty-five percent over budget by month four. The optimization audit surfaced:

- No tier ladder; every request hit the frontier model

- Context windows growing with no template discipline

- Retrieval with no reranker, returning low-relevance candidates

Step 1: Build the Cost and Latency Baseline

Per-feature unit economics, latency percentiles, current model and prompt shape.

- Per-request cost dashboard

- p50/p95/p99 latency by feature

- Current model and tier mapping

Step 2: Pull the Tier Routing Lever

Classify requests by complexity; route to the cheapest sufficient tier; verify quality with eval.

- Complexity classifier on request

- Tier ladder defined explicitly

- Quality eval blocking promotion of regressions

Step 3: Optimize Prompts and Context

Template prompts; cache where supported; trim context to the minimum useful window.

- Templating with deterministic structure

- Prompt caching enabled per provider

- Context trimming with eval guardrail

Step 4: Tune Retrieval

Indexing, chunking, rerankers, freshness budgets aligned to use case.

- Reranker added where missing

- Chunk size tuned per use case

- Freshness budget documented per query type

Step 5: Operate the Cadence

Weekly cost and latency review with named owner; quarterly tier ladder review.

- Named cost owner

- Weekly review on the dashboard

- Quarterly tier and architecture review

Where It Works Well

- Tier ladder enforced at runtime, not in design docs

- Eval harness running alongside cost dashboard

- Named cost owner who argues against drift

Where It Does Not Work Well

- Optimization without quality monitoring

- One-off rewrites with no operating cadence

- Single-lever fixes that ignore the rest of the stack

Key Takeaway: Forty percent cost reduction is achievable in a quarter when the levers are pulled in sequence and the cadence is sustained.

Common Pitfalls

i) Optimizing without quality bounds

Cuts that look like wins until quality regression shows up in customer complaints.

- Bound optimization with eval thresholds

- Block deploys that regress quality

- Treat quality as a co-equal metric to cost

ii) One-off rewrites with no cadence

Gains from one rewrite erode over the next two quarters as usage patterns drift.

iii) Single-lever fixes

Pulling on tier routing alone, or prompt caching alone, leaves gains on the table. Pull multiple levers in sequence.

iv) No named owner for cost

Without a named owner whose job is to argue against drift, cost grows back to baseline within two quarters.

Takeaway from these lessons: Most optimization erosion is operating-cadence failure, not technical regression. The cadence is the work.

AI Optimization Best Practices: What High-Performing Teams Do Differently

1. Build the cost dashboard before the optimization

You cannot optimize what you cannot measure. Per-feature unit economics, refreshed daily, owned by a named lead.

2. Operate a tier ladder

Multiple model tiers with explicit routing rules. The cheapest sufficient tier per request.

3. Cache and template prompts

Prompt caching cuts cost and latency on repeated patterns. Templating enables it.

4. Monitor quality alongside cost

Eval running on a schedule. Regression blocks deploy. Quality is a constraint, not an aspiration.

5. Review weekly; tune quarterly

Weekly cadence catches drift. Quarterly cadence addresses architecture-level changes.

Logiciel'svalue add is helping engineering leaders run AI optimization audits and build the operating cadence that turns one-time wins into sustained savings.

Takeaway for High-Performing Teams: High-performing teams operate AI optimization weekly. The discipline is the difference between cost spikes and cost stability.

Signals You Are Designing AI Optimization Correctly

How do you know the ai optimization program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the path from programs that look like progress.

- The team can describe failure modes without flinching. People who actually run ai optimization systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. AI Optimization depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, ai optimization shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Stakeholder Considerations and Communication

Different stakeholders ask different questions about ai optimization. The board wants to know about risk, ROI, and competitive position. The CFO wants unit economics and forecast. The CISO wants the threat model and the audit posture. Engineering wants to know what gets built and what gets bought. The line of business wants to know when the value lands and what the experience will look like for users.

Programs that anticipate these questions and prepare answers move faster than programs that improvise. Build a one-page brief for each major stakeholder. Update the briefs quarterly. The cost of preparing them is low; the cost of not preparing them is the meeting where the program loses sponsor confidence.

There is also a communication cadence question. Programs that update sponsors weekly during active delivery, monthly during steady-state operation, and at every incident or major change tend to maintain confidence. Programs that go quiet between milestones tend to surprise leadership. Decide the cadence at kickoff and protect it.

Metrics That Tell You AI Optimization Is Working

Beyond the success signals listed earlier, there are operational metrics worth tracking week over week. These are not vanity numbers; they are leading indicators that distinguish programs that are compounding from programs that are running in place.

Time from idea to production. How long does it take a new use case to go from concept to a customer-impacting deployment? Programs that compound see this number drop quarter over quarter; programs that do not see it grow.

Per-program cost trajectory. Are you spending less per unit of value delivered each quarter, or more? Cost trajectory is the cleanest leading indicator of whether the platform layer is paying back.

Incident severity over time. Severity ticks down as the operating model matures. If incident severity is flat or rising, the operating model has gaps that need attention before the next program ships.

Reuse rate across programs. What fraction of the platform layer is being reused by program two and program three? Reuse rate is the cleanest indicator that the investment in the first program is amortizing.

Stakeholder net promoter. Are your sponsors more or less likely to fund the next program than they were last quarter? Sponsor confidence is hard to measure directly; the trend in approved budget and strategic emphasis is the proxy.

Conclusion

AI optimization is the operating discipline that lets AI programs scale without breaking the budget. The levers are well known; the cadence is the work.

Key Takeaways:

- Optimization spans model, prompt, retrieval, request shaping, and cadence

- First-pass gains are large; cadence preserves them

- Quality is a constraint, not a tradeoff

When AI optimization is built into the operating model, the benefits compound:

- Cost shape that the CFO can defend

- Latency wins that improve user experience

- Vendor lock-in reduced through tier substitution

- Reusable optimization patterns across the AI portfolio

Evaluation Differnitator Framework

Why great CTOs don’t just build they evaluate. Use this framework to spot bottlenecks and benchmark performance.

Call to Action

If your AI cost or latency is drifting, the move this month is to build the unit-economics dashboard and run the optimization audit.

Learn More Here:

- AI Home Energy Optimization Cost Carbon 2025

- AI in Cloud Optimization Cost Savings Performance

- AI Finops Cloud Cost Optimization

At Logiciel Solutions, we run AI optimization audits and build the operating cadence that keeps gains in place across the AI portfolio.

Explore how to optimize your production AI.

Frequently Asked Questions

What is AI optimization?

The discipline of reducing cost and latency in production AI while keeping quality inside an agreed envelope.

Where do the biggest wins usually come from?

Tier routing and prompt caching usually deliver the largest first-pass gains. Retrieval tuning and request shaping deliver the next layer.

How much can we expect to save?

Forty percent cost reduction in the first quarter is realistic for programs without prior optimization. Subsequent quarters deliver smaller, sustained gains.

How do we keep gains from eroding?

A named cost owner, a weekly cadence on the dashboard, and a quarterly architecture review.

What is the biggest mistake in AI optimization?

Optimizing without bounds. Cuts that look like wins until quality regression shows up in customer complaints. Always optimize against an eval guardrail.