An AI feature is supposed to ship next quarter, and the team is now four weeks behind because a legacy schema changed without notice. The model is fine; the prompt is fine; the integration broke. The line of business is asking why a feature that depends on AI is delayed by something that is not AI.

This is more than a delivery hiccup. It is a failure of AI integration.

AI integration is the engineering work that connects modern AI systems to operational systems of record, including data plumbing, identity, change management, and failure handling.

However, many programs scope only the AI capability and discover the legacy reality the hard way.

If you are a VP of Engineering responsible for building or scaling an AI integration program, this article will:

- Define what AI integration into legacy systems actually means

- Walk through the four integration patterns that work

- Lay out the data plumbing, identity, and operating-model work that determines success

To do that, let's start with the basics.

What Is AI Integration? The Basic Definition

At a high level, AI integration into legacy systems is the engineering work that connects modern AI systems to operational systems of record. The work includes data plumbing, identity and access, change management, and failure handling.

To compare:

If launching a greenfield AI feature is a sprint, integrating AI into legacy is a relay race where you have to hand the baton to a runner who has been running a different race for fifteen years.

Why Is AI Integration Necessary?

Issues that AI integration addresses:

- Resolving schema, latency, and authentication mismatches between AI and legacy

- Building the identity layer that lets AI act on behalf of users with audit

- Absorbing AI's change rate so legacy systems do not have to

What AI Integration Provides

- Provides clean contracts between AI and legacy systems of record

- Translates AI-specific failure modes into something legacy systems can handle

- Builds an integration layer that survives vendor and model changes

Core Components of AI Integration

- Integration gateway for auth, rate limiting, protocol translation

- Context-assembly layer pulling from legacy with caching and freshness

- AI runtime with abstraction over model providers

- Output translation layer adapting AI outputs to legacy formats

- Audit pipeline capturing every AI-mediated interaction

Modern AI Integration Tools

- API gateways like Kong, Apigee, AWS API Gateway for protocol translation

- iPaaS platforms like MuleSoft, Boomi for legacy connectors

- Identity platforms (Okta, Auth0, AWS IAM) extended for AI agent identities

- Schema registries and contract testing platforms

- Internal abstraction layers built on top of these tools

These tools are the typical building blocks; the architecture is what makes them work together.

Other Core Issues AI Integration Solves

- Provides fallback paths so legacy operates when AI is degraded

- Captures audit trails for AI-mediated interactions in legacy systems

- Builds a reusable integration platform for future AI programs

In Summary: AI integration into legacy is the engineering layer that determines whether the AI capability actually reaches users.

Importance of AI Integration in 2026

Most enterprise AI programs in 2026 spend more time on integration than on the AI itself. Four reasons explain why integration discipline matters now.

1. Most enterprise data and identity still live in legacy systems.

Greenfield AI deployments are rare in enterprise. The integration layer is where most engineering time goes.

2. Legacy schemas change without notice.

Pipelines that ran clean for a year break the day a vendor pushes a schema change. The integration layer absorbs that risk.

3. Identity for AI agents is not a solved problem.

Existing identity systems were built for human users. The identity layer for AI agents requires deliberate design.

4. AI changes faster than legacy can absorb.

The integration layer is the buffer between AI's change rate and legacy's stability.

Traditional vs. Modern AI Integration Concepts

- Direct API integration vs. abstracted integration layer

- Identity treated as an afterthought vs. identity designed in phase four

- Schema assumptions vs. contract testing and schema validation

- AI failure modes ignored vs. AI failure modes handled at the integration layer

In summary: AI integration into legacy is the largest single engineering investment in most enterprise AI programs.

Details About the Core Components of AI Integration: What Are You Designing?

Let's go through each layer.

1. Data Plumbing Layer

Where AI gets context from and where outputs go.

Plumbing concerns:

- Schema validation and contract testing

- Latency and freshness budgets

- Error handling and retries
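
As a rough illustration of schema validation at this layer, the sketch below checks a legacy record against an explicit contract before it reaches the AI. The contract, field names, and the `validate_record` helper are all hypothetical; a real program would more likely lean on a schema registry or a contract-testing tool.

```python
from dataclasses import dataclass

# Hypothetical contract for a legacy customer record; field names are illustrative.
CUSTOMER_CONTRACT = {
    "customer_id": str,
    "balance_cents": int,
    "status": str,
}


@dataclass
class ContractViolation:
    field: str
    problem: str


def validate_record(record: dict, contract: dict) -> list[ContractViolation]:
    """Return every way the record departs from the contract (empty list = valid)."""
    violations = []
    for name, expected_type in contract.items():
        if name not in record:
            violations.append(ContractViolation(name, "missing"))
        elif not isinstance(record[name], expected_type):
            actual = type(record[name]).__name__
            violations.append(
                ContractViolation(name, f"expected {expected_type.__name__}, got {actual}")
            )
    # Unknown fields often signal an unannounced upstream schema change.
    for name in sorted(record.keys() - contract.keys()):
        violations.append(ContractViolation(name, "unexpected field"))
    return violations


good = {"customer_id": "C-1", "balance_cents": 1250, "status": "active"}
drifted = {"customer_id": "C-1", "balance": "12.50", "status": "active"}

print(validate_record(good, CUSTOMER_CONTRACT))
for violation in validate_record(drifted, CUSTOMER_CONTRACT):
    print(violation.field, "->", violation.problem)
```

The drifted record here stands in for a silent field rename: the check reports both the missing field and the unexpected one, so the break surfaces at the boundary rather than inside the AI's context.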

2. Identity and Access Layer

Who the AI acts on behalf of, what permissions it has, and how those are verified.

Identity concerns:

- Agent identity tied to user context

- Permission scope per agent action

- Audit trail for AI-mediated decisions
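
One way to picture these three concerns together is the minimal sketch below. It assumes a simple string-based scope model; `AgentIdentity`, `AuditLog`, `authorize`, and the scope names are hypothetical and not tied to any particular identity platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str           # the AI agent performing the action
    on_behalf_of: str       # the human user whose context the agent carries
    scopes: frozenset[str]  # actions this agent may take for this user


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, identity: AgentIdentity, action: str, allowed: bool) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": identity.agent_id,
            "user": identity.on_behalf_of,
            "action": action,
            "allowed": allowed,
        })


def authorize(identity: AgentIdentity, action: str, audit: AuditLog) -> bool:
    """Check the per-action scope and audit the decision either way."""
    allowed = action in identity.scopes
    audit.record(identity, action, allowed)
    return allowed


audit = AuditLog()
agent = AgentIdentity("support-assistant", "user-123", frozenset({"crm:read", "billing:read"}))
print(authorize(agent, "crm:read", audit))        # True: in scope
print(authorize(agent, "billing:refund", audit))  # False: out of scope, still audited
print(len(audit.entries))                         # 2
```

Denied attempts are logged as well as allowed ones, since an audit trail that only records successes cannot answer what the agent tried to do.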

3. Change Management Layer

How AI changes are absorbed by legacy without breaking it.

Change handling:

- Versioning of integration contracts

- Deprecation paths for legacy interfaces

- Rollback automation for both AI and integration changes
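
A minimal sketch of contract versioning, assuming each legacy payload carries a `contract_version` field (an assumption made here for illustration): one adapter per version maps the payload into a single canonical shape, so the AI side only ever sees one format. The payload shapes and adapter names are hypothetical.

```python
from typing import Callable

Adapter = Callable[[dict], dict]
ADAPTERS: dict[str, Adapter] = {}


def adapter(version: str):
    """Register a function that maps one contract version to the canonical shape."""
    def register(fn: Adapter) -> Adapter:
        ADAPTERS[version] = fn
        return fn
    return register


@adapter("v1")
def from_v1(payload: dict) -> dict:
    # Hypothetical older shape: flat fields, balance as a decimal amount.
    return {
        "customer_id": payload["cust_id"],
        "balance_cents": round(payload["balance"] * 100),
    }


@adapter("v2")
def from_v2(payload: dict) -> dict:
    # Hypothetical newer shape: nested customer object, balance already in cents.
    return {
        "customer_id": payload["customer"]["id"],
        "balance_cents": payload["balance_cents"],
    }


def to_canonical(payload: dict) -> dict:
    version = payload.get("contract_version")
    if version not in ADAPTERS:
        # Fail loudly on unknown versions instead of guessing at the shape.
        raise ValueError(f"unsupported contract version: {version!r}")
    return ADAPTERS[version](payload)


old = {"contract_version": "v1", "cust_id": "C-1", "balance": 12.5}
new = {"contract_version": "v2", "customer": {"id": "C-1"}, "balance_cents": 1250}
print(to_canonical(old) == to_canonical(new))  # True
```

Keeping the old adapter registered while the new one rolls out is what makes a deprecation path possible: a legacy interface can be retired by deleting one adapter once no payloads of that version arrive.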

4. Failure Handling Layer

What happens when the AI fails: timeouts, wrong answers, partial outputs, tool errors.

Failure paths:

- Timeout handling with graceful degradation

- Output validation before downstream calls

- Documented fallback paths per integration point
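
The sketch below shows one possible shape for these failure paths, using only the Python standard library. The label vocabulary, the `rule_based_fallback` behavior, and the timeout values are assumptions for illustration; the stand-in model functions simulate a healthy call, a slow call, and a malformed answer.

```python
import concurrent.futures as cf
import time

ALLOWED_LABELS = {"approve", "review", "reject"}  # hypothetical downstream vocabulary
_pool = cf.ThreadPoolExecutor(max_workers=4)


def rule_based_fallback(request: dict) -> dict:
    """Documented fallback for this integration point: send to human review."""
    return {"label": "review", "source": "fallback"}


def call_with_fallback(model_fn, request: dict, timeout_s: float) -> dict:
    future = _pool.submit(model_fn, request)
    try:
        output = future.result(timeout=timeout_s)
    except cf.TimeoutError:
        future.cancel()  # best effort; a call that is already running is not interrupted
        return rule_based_fallback(request)
    except Exception:
        return rule_based_fallback(request)  # tool errors degrade the same way
    # Validate before anything downstream sees the output.
    if not isinstance(output, dict) or output.get("label") not in ALLOWED_LABELS:
        return rule_based_fallback(request)
    return {"label": output["label"], "source": "ai"}


def fast_model(request):
    return {"label": "approve"}


def slow_model(request):
    time.sleep(0.3)
    return {"label": "approve"}


def confused_model(request):
    return {"label": "maybe"}  # not a label the legacy system understands


for model in (fast_model, slow_model, confused_model):
    print(model.__name__, call_with_fallback(model, {"id": 1}, timeout_s=0.05))
```

In each degraded case the caller receives a valid, legacy-compatible answer, which is the point: the legacy system never has to know that the AI timed out or returned something unusable.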

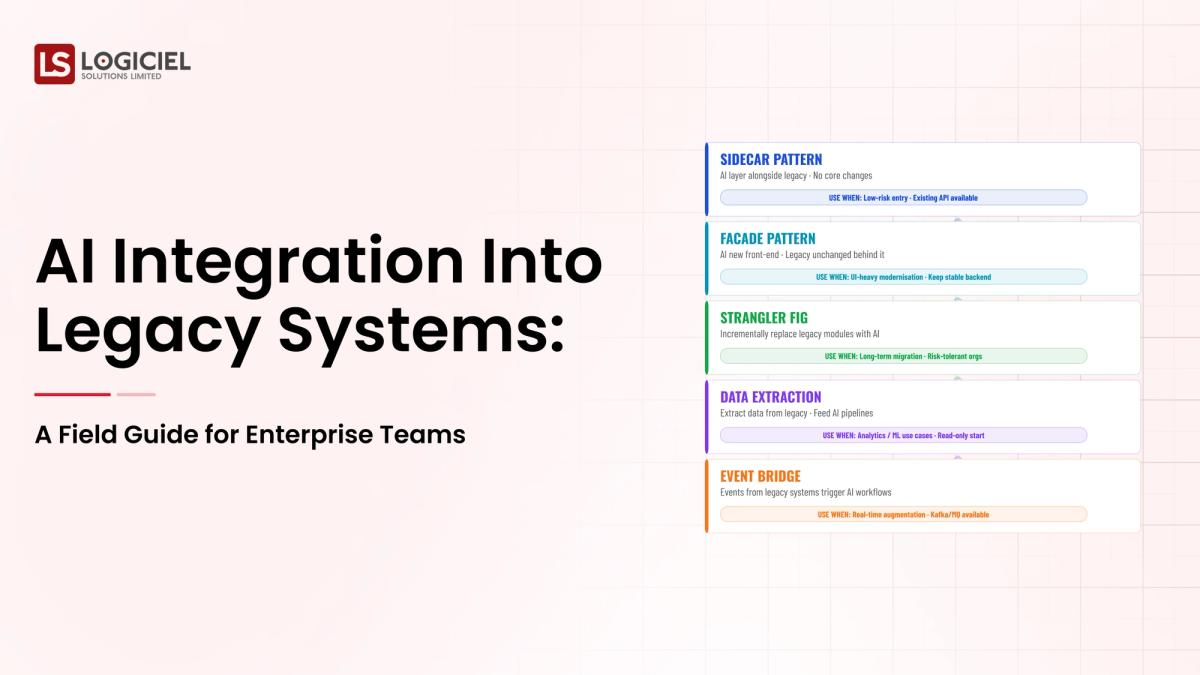

5. Integration Patterns

Four patterns dominate; pick deliberately based on use case.

Pattern choices:

- Proxy: AI sits in front of legacy, augmenting requests

- Companion: AI runs alongside, enriching outputs

- Orchestration: AI coordinates multiple legacy systems

- Replacement: AI replaces a legacy capability behind the same interface

Benefits of the Integration Layer and Failure Handling

- Legacy systems continue operating when AI is degraded

- Schema changes do not break AI integration silently

- Audit trails capture every AI-mediated interaction

How It All Works Together

Requests land at the integration gateway, context is pulled from legacy with appropriate caching and freshness, the AI runtime produces an output, the output is translated to legacy-compatible format, the integration layer captures the interaction in audit, and a fallback path is invoked if AI is unavailable or low-confidence.
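
The flow described above can be sketched as a single function with one pluggable stage per layer. Everything here is illustrative: the stage names, the `AIResult` type, the assumption that the runtime reports a confidence score, and the 0.7 threshold are not taken from any specific product.

```python
from dataclasses import dataclass


@dataclass
class AIResult:
    text: str
    confidence: float  # assumed to be supplied by the AI runtime


def handle_request(request, *, authenticate, assemble_context, run_model,
                   translate, fallback, audit, min_confidence=0.7):
    user = authenticate(request)                # integration gateway
    context = assemble_context(request, user)   # cached, freshness-checked legacy reads
    try:
        result = run_model(request, context)    # AI runtime
    except Exception:
        result = None                           # any runtime error counts as "AI unavailable"
    if result is None or result.confidence < min_confidence:
        response, source = fallback(request, context), "fallback"
    else:
        response, source = translate(result), "ai"  # legacy-compatible format
    audit({"user": user, "source": source, "response": response})
    return response


audit_log = []
stages = dict(
    authenticate=lambda req: req["user"],
    assemble_context=lambda req, user: {"plan": "basic"},
    translate=lambda result: {"NOTE_TXT": result.text.upper()},
    fallback=lambda req, ctx: {"NOTE_TXT": "ROUTED TO AGENT"},
    audit=audit_log.append,
)

confident = handle_request(
    {"user": "u-42"}, run_model=lambda r, c: AIResult("upgrade offered", 0.93), **stages
)
unsure = handle_request(
    {"user": "u-42"}, run_model=lambda r, c: AIResult("not sure", 0.31), **stages
)
print(confident, unsure, [entry["source"] for entry in audit_log])
```

Passing the stages in as parameters keeps the model provider and the legacy adapters swappable, and the audit call sits after the branch so that fallback responses are recorded alongside AI responses.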

Common Misconception

Misconception: AI integration is mostly about calling legacy APIs.

Reality: AI integration is mostly about absorbing the change rate and failure modes that legacy systems were not built to handle. The API call is the easy part.

Key Takeaway: Each layer of integration absorbs a specific class of failure; programs that skip layers ship brittle systems.

Real-World AI Integration in Action

Let's look at how AI integration operates in a real-world example.

We worked with an enterprise integrating AI into a customer-facing workflow that touched a CRM, a billing system, and a data warehouse, with these constraints:

- Legacy systems with documented but inconsistently followed contracts

- Identity model built for humans, not for AI agents

- Strict audit requirements for any customer-facing decision

Step 1: Map the Legacy Systems

Document the data shape, access pattern, change cadence, and failure modes for each legacy system involved.

- Per-system data shape documentation

- Access pattern and authentication mechanism

- Change cadence and known failure modes
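
The map does not need special tooling. A minimal sketch, assuming a small structured record per system, is shown below; the `LegacySystem` type and every value in the example entries are hypothetical, and the `gaps` helper simply lists which parts of a system are still undocumented.

```python
from dataclasses import dataclass, field, fields


@dataclass
class LegacySystem:
    name: str
    data_shape: str = ""
    access_pattern: str = ""
    auth_mechanism: str = ""
    change_cadence: str = ""
    known_failure_modes: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """Names of map entries that are still undocumented."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


systems = [
    LegacySystem(
        name="crm",
        data_shape="REST JSON, nested account objects",
        access_pattern="synchronous reads, batched writes",
        auth_mechanism="OAuth client credentials",
        change_cadence="vendor releases, sometimes unannounced",
        known_failure_modes=["field renamed without notice"],
    ),
    LegacySystem(name="billing", data_shape="SOAP XML", auth_mechanism="mutual TLS"),
    LegacySystem(name="warehouse"),
]

for system in systems:
    print(system.name, "undocumented:", system.gaps() or "none")
```

A record like this makes the scoping conversation concrete: a system with open gaps is one whose integration cost cannot yet be estimated.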

Step 2: Pick Integration Pattern Per Use Case

Proxy, companion, orchestration, or replacement. Pick deliberately based on the use case and the legacy system.

- Pattern decision documented per use case

- Tradeoffs explicit

- Reusable pattern definitions

Step 3: Design Data Plumbing

Schemas, latency budgets, freshness requirements, error handling, retries.

- Schema validation at integration boundaries

- Per-request freshness and latency targets

- Documented error handling per integration point
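
To make freshness targets and retries concrete, here is a small sketch using only the standard library. `FreshnessCache` and `with_retries` are hypothetical helpers, the 30-second budget and backoff values are placeholders, and the clock and sleep functions are injectable so the behavior can be exercised without waiting.

```python
import time


class FreshnessCache:
    """Serve cached legacy reads only while they are within the freshness budget."""

    def __init__(self, fetch, max_age_s, clock=time.monotonic):
        self._fetch = fetch
        self._max_age_s = max_age_s
        self._clock = clock
        self._entries = {}  # key -> (fetched_at, value)

    def get(self, key):
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] <= self._max_age_s:
            return hit[1]
        value = self._fetch(key)
        self._entries[key] = (now, value)
        return value


def with_retries(fn, attempts=3, base_delay_s=0.1, sleep=time.sleep):
    """Retry transient connection errors with exponential backoff, then re-raise."""
    for attempt in range(attempts):
        try:
            return fn()
        except ConnectionError:
            if attempt == attempts - 1:
                raise
            sleep(base_delay_s * 2 ** attempt)


calls = []

def fetch_balance(key):
    calls.append(key)  # stands in for a legacy system read
    return 1250

fake_now = [0.0]
cache = FreshnessCache(fetch_balance, max_age_s=30, clock=lambda: fake_now[0])
cache.get("C-1")
cache.get("C-1")      # within budget: served from cache
fake_now[0] = 31.0
cache.get("C-1")      # past budget: refetched from legacy
print(len(calls))     # 2
```

Only `ConnectionError` is retried here on the assumption that it is transient; validation failures and permission errors should surface immediately rather than be retried.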

Step 4: Design Identity and Access

AI agent identity, permission scope, verification, audit. Built with security and compliance from day one.

- Agent identity model defined

- Per-action permission scope

- Audit trail tied to user and agent identity

Step 5: Design Failure Handling

Every integration point handles AI failure modes gracefully. Timeouts, wrong answers, partial outputs, tool errors.

- Timeout handling with graceful degradation

- Output validation before downstream calls

- Documented fallback per integration point

Where It Works Well

- Abstracted integration layer that survives vendor and schema changes

- Identity model designed in phase four with security partner

- Failure handling that lets legacy operate without AI when needed

Where It Does Not Work Well

- Direct integration without an abstraction layer

- Identity addressed as an afterthought

- No fallback path; AI failure becomes legacy failure

Key Takeaway: The team that scopes integration honestly ships in twelve weeks. The team that scopes only the AI capability ships in twelve months.

Common Pitfalls

i) Direct integration without an abstraction layer

When AI calls legacy APIs directly, the two are tightly coupled. Vendor changes break the legacy system; schema changes break the AI.

- Build the abstraction layer

- Version the contracts

- Test changes through the abstraction

ii) Underscoping the data plumbing

Most programs underestimate data plumbing by half. Estimate honestly; the plumbing is most of the work.

iii) Identity afterthought

Building integration without addressing AI agent identity creates a security gap. Address in phase four, not month nine.

iv) No fallback path

Every legacy system has to operate without AI. Without a fallback, AI outages become legacy outages.

Takeaway from these lessons: Most integration failures are scoping failures. The work was always there; the plan did not include it.

AI Integration Best Practices: What High-Performing Teams Do Differently

1. Map legacy systems before scoping AI

Per-system data shape, access pattern, change cadence, failure modes. The map is the foundation.

2. Pick integration patterns deliberately

Proxy, companion, orchestration, replacement. Each has tradeoffs. Most programs use a mix across use cases.

3. Build the abstraction layer first

Versioned contracts, schema validation, abstracted vendor calls. The abstraction is the part that survives change.

4. Design identity in phase four

Agent identity, permission scope, audit. With security and compliance from day one. Retrofitting is expensive.

5. Plan fallback paths per integration point

Every legacy system has to operate without AI. Document the fallback; test it; treat it as part of the integration deliverable.

Logiciel's value add is helping engineering leaders scope and design AI integration into legacy environments, including the data plumbing, identity, and operating-model work that determines success.

Takeaway for High-Performing Teams: High-performing teams treat integration as the largest engineering investment in their AI program, because it is.

Signals You Are Designing AI Integration Correctly

How do you know the AI integration program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the path from programs that look like progress.

- The team can describe failure modes without flinching. People who actually run AI integration systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on AI integration and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. AI integration depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, AI integration shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

AI integration into legacy systems is the discipline that determines whether AI capability reaches users. The integration layer is most of the work, even when it is not the conversation.

Key Takeaways:

- AI integration is forty to sixty percent of an enterprise AI program

- Pick integration patterns deliberately per use case

- Design data plumbing, identity, and failure handling explicitly

When AI integration is scoped and designed correctly, the benefits compound:

- AI features that ship on time without legacy surprises

- Reusable integration platform for the next program

- Audit trails that capture AI-mediated interactions

- Legacy stability preserved even when AI is degraded

Call to Action

If you are integrating AI into legacy systems, the work this month is to map the legacy systems, pick the integration patterns, and scope the data plumbing realistically.

Learn More Here:

- Enterprise Data Architecture 2026 Guide

- Agentic Systems Multi Agent Architectures Autonomous AI Engineering Teams

- Enterprise AI Stack 2028 Autonomous Systems

At Logiciel Solutions, we partner with engineering leaders on AI integration into legacy enterprise environments, focusing on the data plumbing, identity, and operating model work that determines whether the program ships.

Explore how to integrate AI into your legacy stack.

Frequently Asked Questions

What is AI integration into legacy systems?

The engineering work that connects modern AI systems to operational systems of record, including data plumbing, identity and access, change management, and failure handling.

How much of an AI program is integration work?

Forty to sixty percent for first-time programs. Twenty to forty percent for subsequent programs that ride on the platform built in the first one.

Which integration pattern should we use?

Proxy for augmenting requests; companion for enriching outputs; orchestration for coordinating across systems; replacement for swapping a capability behind the same interface.

What about identity?

Build the identity layer that lets the AI act on behalf of users with the right permissions and audit trail. Existing identity often does not extend to AI use cases. Design with security and compliance from day one.

What is the most common integration mistake?

Underscoping the data plumbing. Most programs underestimate this work by half. Estimate honestly upfront, including the schema validation and error handling work that does not appear on the architecture diagram.