There is a customer support thread asking why the AI assistant gave a wrong answer about pricing. The model passed eval. The deployment was clean. The dashboard says 99.99% uptime. And yet the answer was wrong, the customer is unhappy, and your team is reconstructing what happened from incomplete traces.

This is more than an embarrassing incident. It is a failure of AI reliability.

A modern AI reliability program is more than uptime monitoring. It is the discipline of building systems that produce correct, useful outputs at acceptable rates, with detectable, recoverable failures, across the variability that production introduces.

Real Estate Marketing Attribution

A single attribution mistake led to a 22% pipeline drop. Here’s how real estate teams fix it with full-funnel visibility.

However, many teams measure availability, assume quality follows, and discover the gap during their first significant incident.

What follows is the version of AI reliability that holds up in a customer-facing incident, an executive review, and a regulator inquiry. It is structural, not aspirational, and it can be applied this quarter.

If you are a Head of AI and are responsible for building or scaling your AI reliability program, the intent of this article is:

- Define what AI reliability actually means in production

- Walk through the four reliability dimensions that matter

- Lay out the playbook that turns a fragile prototype into a platform

To do that, let's start with the basics.

What Is AI Reliability? The Basic Definition

At a high level, AI reliability is the discipline of building and operating AI systems that produce correct, useful outputs at acceptable rates, with detectable, recoverable failures, across the variability of production.

To compare:

If traditional reliability is keeping the lights on, AI reliability is keeping the lights on and ensuring the bulbs are still emitting the right color of light. Availability without quality is not reliability for AI.

Why Is AI Reliability Necessary?

Issues that AI Reliability addresses or resolves:

- Closing the gap between lab eval and production behavior

- Detecting quality regressions before customers do

- Recovering from failures without catastrophic disruption

Resolved Issues by AI Reliability

- Wraps stochastic model outputs in deterministic system behavior

- Provides instrumentation across availability, quality, detectability, and recoverability

- Establishes the operating model that turns reliability into muscle memory

Core Components of AI Reliability

- Eval harness running continuously in production

- Drift detection on input and output distributions

- Rollback automation for model and prompt changes

- Fallback paths for failed inferences

- Observability stack capturing the full request lifecycle

Modern AI Reliability Tools

- LangSmith, Arize, Helicone, Galileo for AI observability

- Promptfoo, Ragas, DeepEval for eval harness construction

- Feature flag platforms (LaunchDarkly, Statsig) for deployment control

- OpenTelemetry for unified tracing across AI and traditional services

- Internal eval case management built on top of these platforms

These tools form the typical AI reliability stack in 2026.

Other Core Issues They Will Solve

- Surfaces drift early so it does not compound silently

- Provides defensible evidence for incident reviews

- Supports continuous improvement through structured feedback loops

In Summary: AI reliability is the discipline that turns a model that passes eval into a system that operates predictably in production.

Importance of AI Reliability in 2026

AI reliability has shifted from a research concern to an operational discipline. Four reasons explain why it matters now.

1. Customers and regulators expect production reliability.

Wrong answers at scale generate complaints, churn, and audit attention. Lab-grade quality does not survive production exposure.

2. Failure modes are unfamiliar to traditional SRE.

Hallucinations, drift, prompt injection, partial outputs. Standard observability tooling does not catch them. New instrumentation is required.

3. Quality regressions compound silently.

Drift does not produce alerts unless you build them. Programs without continuous eval discover regressions when customers do.

4. Reuse depends on a reliable platform.

The eval harness, observability stack, and operating model built for the first program become the floor every future program runs on.

Traditional vs. Modern AI Reliability Concepts

- Uptime as the only reliability metric vs. availability + quality + detectability + recoverability

- Eval as a notebook activity vs. eval as production code

- Manual incident triage vs. structured incident response with runbooks

- Single-model deployment vs. abstracted layer with rollback automation

In summary: AI reliability is the discipline that separates a clever prototype from a system the business can run.

Details About the Core Components of AI Reliability: What Are You Designing?

Let's go through each layer.

1. Availability

Does the system respond inside the latency budget?

Standard SRE metrics:

- Error rates and latency percentiles

- Saturation across compute and memory

- Cross-region failover where applicable

2. Quality

Is the response correct, grounded, and useful?

Quality measurement:

- Per-request eval against expectations

- Sampling-based human review

- Known-failure-mode detection

3. Detectability

When quality drops, how quickly does the team know?

Detection mechanisms:

- Drift detection on inputs and outputs

- Regression detection in eval

- Anomaly alerts on output distribution

4. Recoverability

When the system fails, can it return to normal without catastrophic disruption?

Recovery mechanisms:

- Rollback for model changes

- Fallback paths for failed inferences

- Kill switches for systemic failures

5. Operating Layer

How the team sustains reliability over time.

Operating components:

- On-call rotation tuned for AI failure modes

- Postmortem cadence and action tracking

- Quarterly review of eval cases and runbooks

Benefits Gained from Continuous Eval and Recoverability

- Quality regressions caught before customers see them

- Faster recovery when something fails

- Evidence base for incident review and continuous improvement

How It All Works Together

Availability instrumentation tells you the system is up. Quality instrumentation tells you the responses are correct. Detectability tells you when quality drops. Recoverability tells you the system can return to normal. Operating layer keeps all four current. Together, they turn reliability from a property you hope for into a property you measure. The four dimensions are not a checklist; they are the foundation of how you operate the system. Programs that build instrumentation across all four ship into more sensitive workflows than programs that measure only availability. The capability is the system around the model, not the model itself.

Common Misconception

AI reliability is the same as system uptime.

A system that is up but returning wrong answers is not reliable. AI reliability covers four dimensions; uptime is one of four.

Key Takeaway: Each dimension requires its own instrumentation; programs that measure only availability discover the gap during incidents.

Real-World AI Reliability in Action

Let's take a look at how ai reliability operates with a real-world example.

We worked with a SaaS company that had shipped an AI feature that passed eval and was generating complaints in production. The seven-mode reliability diagnostic surfaced:

- Quality drift not detected because eval ran weekly

- No rollback automation; model swaps were manual

- Audit trail incomplete; failures could not be reproduced

Step 1: Define Reliability Targets

For each dimension, set a target: latency, quality score, time-to-detection, time-to-recovery.

- Latency budget and error budget

- Quality threshold with regression alerting

- Time-to-detection and time-to-recovery budgets

Step 2: Build the Eval Harness

Eval as production code; runs on schedule; results in a queryable place; regressions block deploys.

- Curated eval case set covering known failure modes

- Daily run with regression alerting

- Public dashboard reviewed daily

Step 3: Instrument the Four Dimensions

Standard SRE for availability; eval and sampling for quality; drift and anomaly for detectability; rollback and fallback for recoverability.

- Per-dimension instrumentation surface

- Alerting tuned to avoid fatigue

- Cross-dimensional dashboards

Step 4: Build Alerts, Runbooks, Rollback, Fallback

Alerts that fire when targets are breached; runbooks for each alert; rollback inside minutes; fallback paths documented.

- Per-alert runbook

- Rollback automation tested quarterly

- Fallback path verified per inference path

Step 5: Operate on a Cadence

Weekly reliability review, monthly eval review, quarterly operating-model review, incident-driven updates.

- Documented cadence

- Action tracking from postmortems

- Sunset criteria for capabilities not pulling weight

Where It Works Well

- Continuous eval running daily with regression alerting

- Rollback automation tested quarterly

- Operating cadence sustained without heroism

Where It Does Not Work Well

- Measuring uptime as the only reliability metric

- Eval that runs occasionally rather than continuously

- No drift detection on inputs and outputs

Key Takeaway: The team that measures all four dimensions ships reliable AI. The team that measures only uptime ships incidents.

Common Pitfalls

i) Measuring uptime, not quality

A system that is up and wrong is not reliable. Build quality instrumentation alongside availability.

- Eval as production code

- Sampling-based human review

- Known-failure-mode detection

ii) Eval that runs occasionally

Weekly eval catches weekly regressions. Real reliability requires daily or continuous eval.

iii) No drift detection

Drift is the slow-motion failure mode. Without detection, you discover failures when customers do.

iv) Untested rollback

A rollback path you have not tested is a rollback path you do not have. Test in production-like environments quarterly.

Takeaway from these lessons: Most reliability failures are operational gaps, not model gaps. Operating discipline is the difference between fragile and reliable. The team that builds the eval harness, instruments the four dimensions, tests rollback quarterly, and runs a quarterly operating-model review is the team whose AI program survives its first year and compounds in years two and three.

AI Reliability Best Practices: What High-Performing Teams Do Differently

1. Build continuous eval as the floor

Eval that runs daily, blocks regressions, and updates from production failures. The eval harness is production code.

2. Instrument all four dimensions

Availability, quality, detectability, recoverability. Each requires its own instrumentation. Skipping any creates a predictable gap.

3. Test rollback and fallback quarterly

In production-like environments. Document the test. Rollback you have not tested is rollback you do not have.

4. Tune alerts to avoid fatigue

Too many alerts that do not require action create blindness. Treat alert noise as a problem; tune ruthlessly.

5. Operate the program on a cadence

Weekly reliability review, monthly eval review, quarterly operating-model review. Without cadence, reliability erodes.

Logiciel's value add is helping AI and engineering leaders design and operate reliability programs, including eval harness setup, observability for AI systems, and operating model design.

Takeaway for High-Performing Teams: High-performing teams treat reliability as a daily practice, not a quarterly milestone.

Signals You Are Designing AI Reliability Correctly

How do you know the ai reliability program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the path from programs that look like progress.

- The team can describe failure modes without flinching. People who actually run ai reliability systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. AI Reliability depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, ai reliability shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

AI reliability is the discipline that turns a clever prototype into a platform the business can rely on. The four dimensions are the structure; the operating cadence is the muscle.

Key Takeaways:

- Reliability covers availability, quality, detectability, recoverability

- Continuous eval is the floor; rollback automation is the safety net

- Operating cadence keeps the program from eroding silently

When AI reliability is built and operated correctly, the benefits compound:

- Faster recovery when failures happen

- Customer trust that does not have to be rebuilt every quarter

- A reusable reliability platform for the next program

- Defensible posture in incident review and audit

Real Estate Identity Resolution

Duplicate records are hiding your best leads. Identity resolution reveals true buyer intent and fixes your pipeline.

Call to Action

If your AI program is feeling fragile, the move this month is to define reliability targets across the four dimensions and build the eval harness.

Learn More Here:

- AI Development Services Engagement Models

- AI Agent Deployment Models

- Hybrid Delivery Model Ctos AI First Engineering 2026

At Logiciel Solutions, we work with AI and engineering leaders on reliability programs, including eval harness design, observability for AI, and operating model setup.

Explore how to make your AI program reliable.

Frequently Asked Questions

What is AI reliability?

The discipline of building and operating AI systems that produce correct, useful outputs at acceptable rates, with detectable, recoverable failures, across the variability of production.

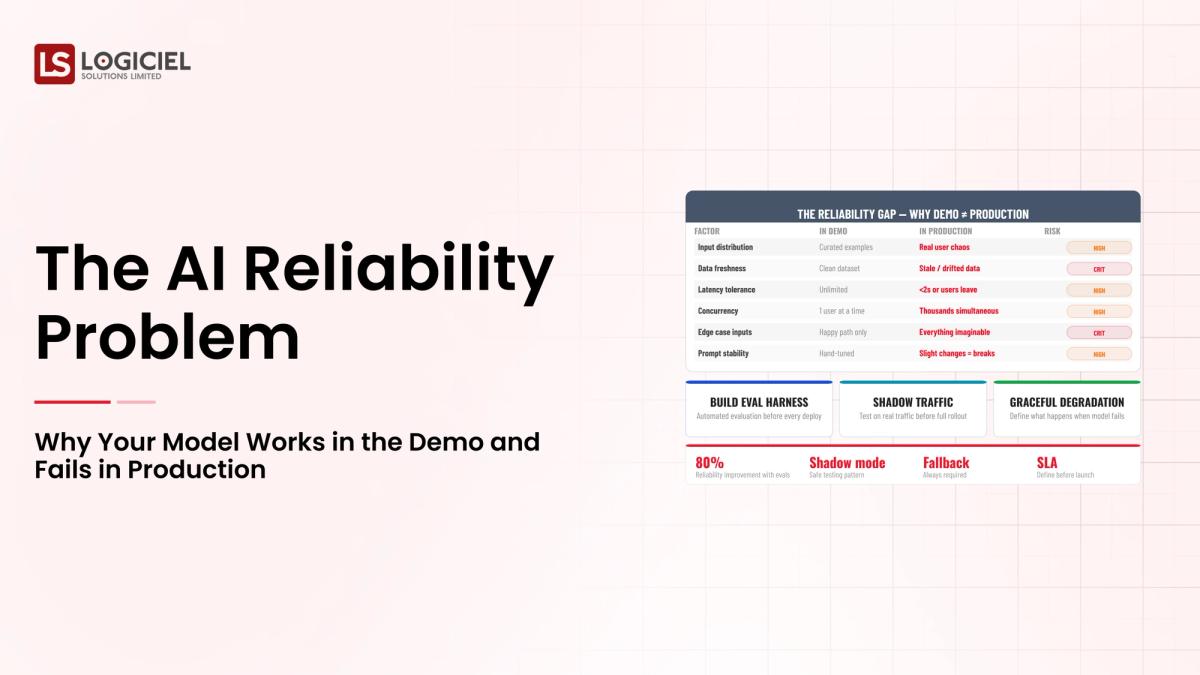

Why do models that pass eval fail in production?

Because eval covers what the team thought to test. Production introduces variability that eval did not cover. The fix is to expand eval continuously based on production failures.

Is reliability the same as uptime?

No. A system that is up but returning wrong answers is not reliable. Reliability covers availability, quality, detectability, recoverability.

What is drift detection?

Monitoring the distribution of model inputs and outputs to detect statistical changes that suggest the model is performing differently. Drift is the slow-motion failure mode.

What is the biggest mistake in AI reliability programs?

Measuring uptime and assuming quality follows. It does not. Build quality instrumentation alongside availability.