Once architecture, security, and governance foundations are clear, the next strategic question for CTOs is deployment.

Where do AI agents live?

How are reasoning systems integrated into existing infrastructure?

What boundaries define data exposure?

How does deployment choice influence latency, cost, compliance, and long-term scalability?

The deployment model is not an implementation detail. It defines operational risk, cost profile, and architectural flexibility. AI agents are not passive services. They reason, call tools, retrieve context, and modify state. Their physical and logical placement inside your infrastructure determines exposure.

This cluster examines deployment patterns, infrastructure trade-offs, SaaS scaling considerations, cost architecture, latency engineering, and long-term operational strategy for production-grade AI agents.

Agent-to-Agent Future Report

Understand how autonomous AI agents are reshaping engineering and DevOps workflows.

Why Deployment Strategy Is a Strategic Decision

In deterministic systems, deployment location primarily affects latency and scaling. In agentic systems, deployment affects security posture, data sovereignty, tool access pathways, and cost predictability.

An AI agent interacts with:

- Internal databases

- External APIs

- Monitoring systems

- Customer data

- CI/CD pipelines

- Infrastructure services

Each interaction crosses boundaries. Deployment architecture determines how those boundaries are protected.

For CTOs, deployment must align with three core considerations.

First, data exposure and regulatory compliance.

Second, operational scalability and performance.

Third, economic sustainability at scale.

A misaligned deployment model can undermine otherwise strong architectural design.

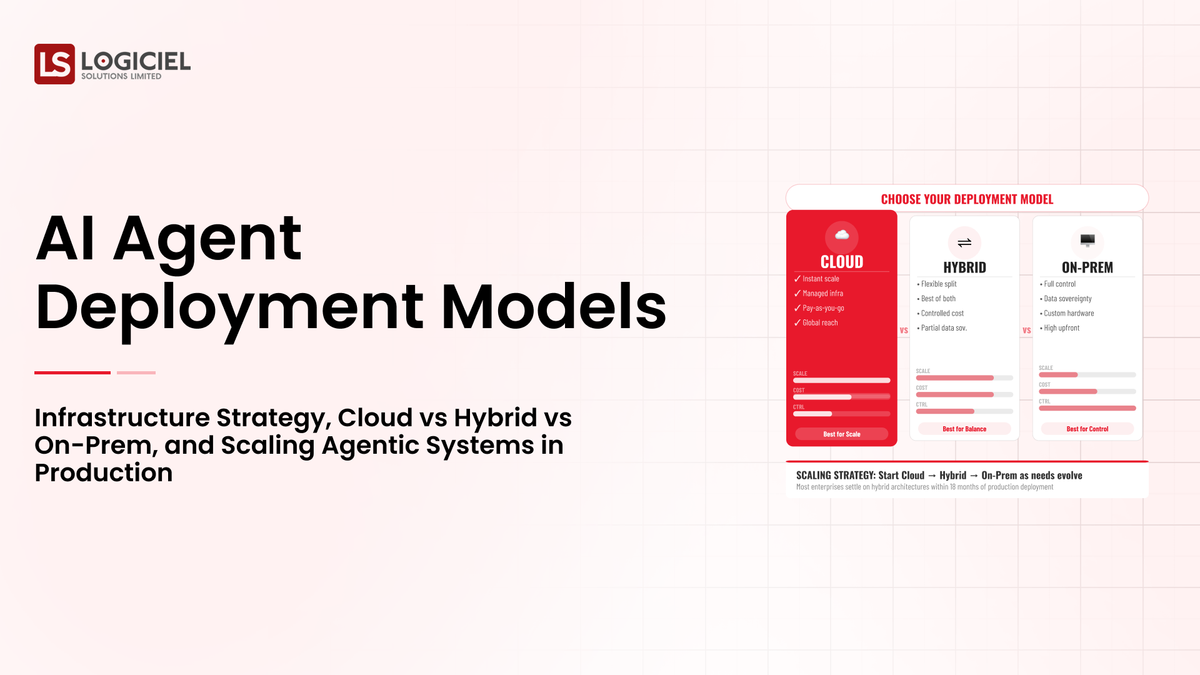

Cloud-Native Deployment Model

The most common starting point for AI agent deployment is cloud-native integration.

In this model, the reasoning engine is accessed through external APIs, orchestration runs in cloud infrastructure, and tool interfaces connect through secure endpoints.

The advantages are speed and scalability.

Cloud-native deployment allows rapid experimentation. Elastic scaling supports variable workloads. Managed services reduce DevOps overhead. Integration with SaaS ecosystems is straightforward.

However, this model introduces exposure considerations.

Sensitive data may traverse external networks. Vendor dependency increases. Token consumption costs scale with usage volume. Data residency regulations may restrict cross-border transfer.

For early-stage SaaS companies or internal productivity agents, cloud-native deployment often provides sufficient control. For regulated industries, additional constraints may apply.

Cloud-native is optimal for agility, but it requires strong boundary management.

Hybrid Deployment Model

Hybrid deployment separates reasoning access from sensitive data handling.

In this architecture, orchestration and tool execution remain inside private infrastructure. The reasoning engine may still use external model APIs, but interaction passes through a controlled gateway. Sensitive data is preprocessed internally before external calls.

Hybrid models reduce exposure surface while preserving scalability.

This approach allows organizations to enforce data minimization before context reaches the reasoning engine. It enables logging and validation layers to operate internally. It reduces compliance friction while retaining flexibility.

However, hybrid deployment increases integration complexity. Latency may increase due to additional routing. DevOps overhead expands.

For mid-market SaaS companies serving enterprise clients, hybrid deployment often becomes the long-term standard.

It balances speed with governance.

On-Premise and Air-Gapped Deployment

For highly regulated sectors such as finance, healthcare, or defense, on-premise deployment may be required.

In this model, reasoning engines are self-hosted within controlled infrastructure. Tooling, memory, and orchestration layers operate entirely inside the organizational network. External API dependency is minimized or eliminated.

The advantages are maximum data sovereignty and regulatory alignment.

The trade-offs are infrastructure cost, hardware scaling requirements, and operational complexity. Model updates must be managed internally. Performance optimization requires dedicated engineering effort.

On-premise deployment demands architectural maturity.

For most SaaS companies outside heavily regulated industries, hybrid models provide sufficient control without full on-premise overhead.

Latency Engineering Across Deployment Models

AI agents introduce multi-step reasoning loops. Deployment model directly affects latency.

Cloud-native systems benefit from high-performance managed infrastructure but may incur network round-trip delays.

Hybrid systems introduce additional routing layers for context preprocessing and validation.

On-premise systems may reduce external latency but introduce hardware bottlenecks if not provisioned correctly.

Latency compounds across reasoning cycles. Each perception, planning, execution, and evaluation loop adds delay.

CTOs must establish latency budgets per workflow.

Strategies to reduce latency include:

Limiting reflection depth Parallelizing independent tool calls Caching retrieval results Using deterministic shortcuts for known patterns Reducing context window size

Latency engineering becomes essential for maintaining acceptable user experience.

Cost Architecture and Economic Scaling

Deployment model influences cost structure.

Cloud-native systems incur token consumption charges and compute scaling costs. High-usage agents can amplify operating expense rapidly.

Hybrid models may reduce token cost through context minimization but increase infrastructure overhead.

On-premise systems shift cost from API consumption to hardware investment and operational management.

Cost must be modeled per completed workflow.

If an agent reduces pull request review time by twenty percent but requires multiple reflection loops per request, the economic benefit must outweigh token and infrastructure cost.

Cost governance should include:

Token usage monitoring Workflow cost ceilings Reflection loop limits Tool invocation caps

Economic sustainability determines scalability.

Infrastructure Isolation and Boundary Management

Regardless of deployment model, infrastructure isolation is critical.

Agents should operate in segmented environments with restricted access paths.

Execution environments should be sandboxed when possible. Temporary containers can isolate tool execution. Read-only views can restrict unintended modifications.

Network segmentation should separate reasoning layers from core production databases unless explicitly required.

Boundary management reduces blast radius if reasoning misinterprets context.

Deployment is not merely where the agent runs. It is how boundaries are enforced.

Memory Placement Strategy

Memory architecture interacts with deployment.

Short-term memory often resides in orchestration services. Retrieval memory may use vector databases hosted internally or externally. Structured state memory should ideally reside within internal deterministic storage layers.

For hybrid models, retrieval memory may remain internal to prevent sensitive embedding exposure.

CTOs must decide which memory components can reside externally and which must remain internal.

Memory placement affects both latency and compliance.

Scaling Agents Across SaaS Architectures

In SaaS environments, multi-tenant architecture introduces additional considerations.

Agents may operate across multiple customer datasets. Isolation between tenants must be preserved. Memory retrieval must respect tenant boundaries.

Token usage patterns may vary significantly between customers. Cost allocation models should reflect tenant-level consumption.

Agents embedded in SaaS platforms must integrate with existing observability stacks. Logging and reasoning traces should align with application monitoring systems.

Scaling across tenants requires strict isolation discipline.

CI/CD Integration and Deployment Lifecycle

AI agents themselves require deployment pipelines.

Model updates, prompt modifications, tool schema changes, and orchestration adjustments must follow controlled CI/CD processes.

Testing frameworks should include regression tests for reasoning stability.

Staging environments should simulate representative workflows before production rollout.

Deployment maturity reduces risk of behavioral drift.

Agents should not bypass existing DevOps discipline.

Vendor Dependency and Portability Considerations

Cloud-native deployments introduce vendor lock-in risk.

CTOs should evaluate portability strategies. Abstraction layers between reasoning engines and orchestration logic reduce switching cost.

Tool interfaces should remain independent of specific model providers where possible.

Hybrid and on-premise models provide greater control but increase engineering overhead.

Strategic planning should consider long-term vendor flexibility.

Observability Across Infrastructure Layers

Deployment strategy must integrate observability from the beginning.

Reasoning logs, tool call traces, and cost metrics should feed into centralized monitoring systems.

Anomaly detection should trigger alerts for unusual execution patterns.

Infrastructure metrics and reasoning metrics must be correlated.

Observability maturity determines whether autonomy scaling is safe.

Resilience and Fail-Safe Design

Agentic systems must fail safely.

If reasoning fails, deterministic fallback paths should activate. If tool execution errors occur repeatedly, workflows should abort gracefully.

Circuit breakers can prevent runaway loops. Escalation triggers can route uncertain tasks to human oversight.

Resilience design ensures that probabilistic variability does not cascade into systemic instability.

Strategic Deployment Roadmap for CTOs

Deployment should follow staged maturity.

Begin with low-risk internal workflows. Measure reliability and cost stability.

Introduce hybrid boundary controls as scale increases.

Implement tenant isolation and observability before expanding scope.

Evaluate on-premise options only when regulatory requirements demand.

Scale autonomy progressively as infrastructure maturity increases.

Deployment is not a one-time decision. It evolves with system complexity.

Long-Term Infrastructure Implications

Over time, agentic systems become embedded across development, operations, support, and product workflows.

Infrastructure must evolve to support continuous reasoning workloads.

Compute allocation must account for dynamic token usage. Logging storage must scale to accommodate reasoning traces. Security audits must incorporate AI-specific risk.

CTOs must treat AI agents as long-term infrastructure investments.

Short-term experimentation without long-term deployment planning leads to architectural debt.

Closing Perspective on Deployment Strategy

AI agent deployment is not about where the model runs. It is about how reasoning integrates with infrastructure boundaries.

Cloud-native provides speed. Hybrid provides balance. On-premise provides sovereignty.

Latency engineering ensures usability. Cost governance ensures sustainability. Boundary management ensures safety. Observability ensures control.

For CTOs, deployment strategy determines whether AI agents scale as structural assets or remain isolated experiments.

Architecture maturity, not model capability, defines long-term success.

Evaluation Differnitator Framework

Why great CTOs don’t just build they evaluate. Use this framework to spot bottlenecks and benchmark performance.