There is a production agent that just took an action your team did not expect. The action was within the model's tool surface but not within the policy your team thought was in force. The audit log exists, but the log does not show why the action was allowed. The conversation in the room is uncomfortable.

This is not a model failure. It is a guardrail failure.

A modern guardrail layer is not a policy document. It is the runtime controls that prevent the agent from taking actions outside an approved envelope, and the evidence trail that proves the controls worked.

Budget Approval Playbook

Inside a 5-step framework that won $500K of infrastructure budget in 14 days.

However, many programs ship guardrails as documentation and discover at the first incident that documentation is not enforcement.

If you are a VP AI and are responsible for building or scaling your agentic AI guardrail program, the intent of this article is:

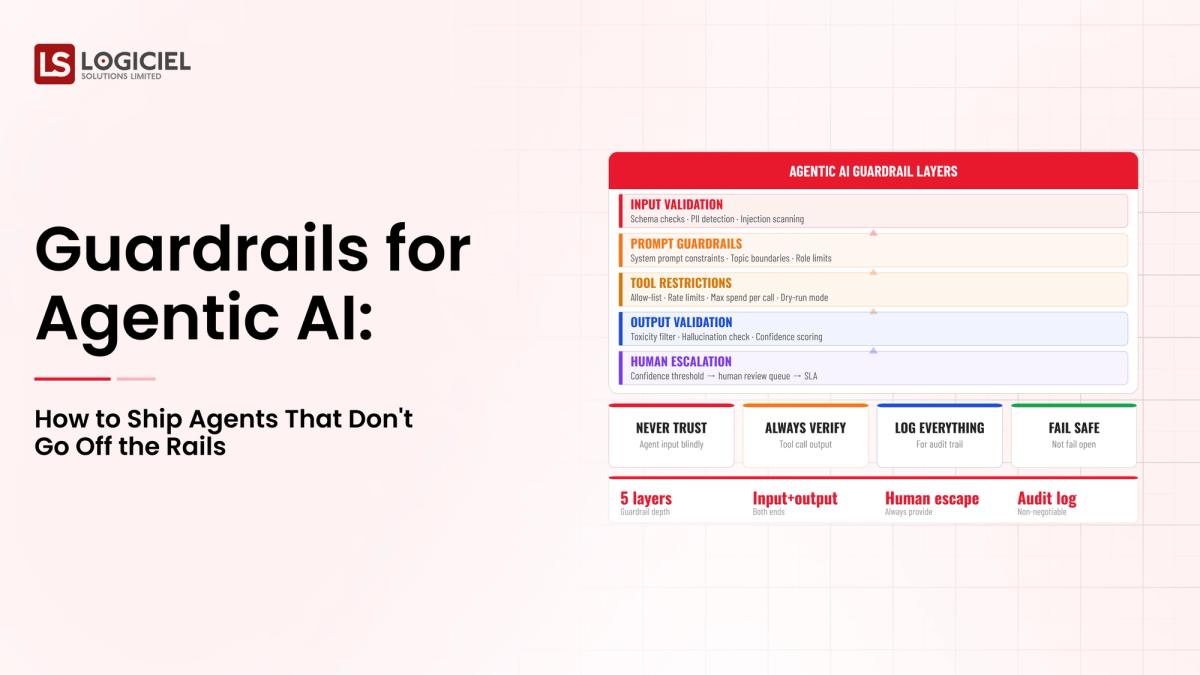

- Define what guardrails for agentic AI actually are

- Walk through the six categories of control every production agent needs

- Lay out the operating cadence that keeps guardrails effective over time

To do that, let's start with the basics.

What Is Guardrails for Agentic AI? The Basic Definition

At a high level, guardrails for agentic AI are the runtime controls that prevent the agent from taking actions outside an approved envelope. They are enforced; they produce evidence; they are operated on a cadence.

To compare:

If a policy document is the safety brochure on an airline, a guardrail is the cockpit door lock. Both matter; only one stops a bad outcome in the moment.

Why Is Guardrails for Agentic AI Necessary?

Issues that Guardrails for Agentic AI addresses or resolves:

- Closing the gap between policy intent and runtime behavior

- Producing audit evidence that the controls worked

- Bounding blast radius for actions the agent can take

Resolved Issues by Guardrails for Agentic AI

- Translates policy into enforced runtime checks

- Captures evidence of blocked attempts for audit

- Layers controls so single-point failures do not become incidents

Core Components of Guardrails for Agentic AI

- Tool-level controls (input validation, rate limits, authorization)

- Output validation (schema, policy, hallucination, citation checks)

- Kill switches with documented invocation criteria

- Blast-radius scoping that matches autonomy to risk

- Audit trail capturing plan, tool calls, intermediate state, final outcome

- Human-in-the-loop checkpoints for high-blast actions

Modern Guardrails for Agentic AI Tools

- Policy engines like Open Policy Agent for tool-level controls

- Guardrail libraries: NeMo Guardrails, Guardrails AI, Llama Guard

- Observability platforms: LangSmith, Arize, Galileo for guardrail evidence

- Runtime kill switches built on top of orchestrators or feature flags

- Audit-trail platforms: append-only stores like S3 with immutability

These tools are the building blocks; the discipline is the operating model around them.

Other Core Issues They Will Solve

- Surfaces blocked attempts for trend analysis and detection of attack patterns

- Provides a defensible evidence base for regulator and auditor questions

- Reduces incident severity by stopping the agent before harm propagates

In Summary: Guardrails for agentic AI are runtime controls plus evidence plus cadence; without all three, you have governance theater.

Importance of Guardrails for Agentic AI in 2026

Guardrails are no longer optional in 2026. Four reasons explain why.

1. Boards and regulators are asking specific guardrail questions.

Programs that cannot show evidence of runtime enforcement struggle in those conversations.

2. Agent capability is outpacing policy.

What an agent can do in 2026 is broader than a policy document written six months ago anticipated. The guardrail layer absorbs the gap.

3. Adversarial inputs are a real attack surface.

Prompt injection, tool poisoning, and context attacks are real failure modes. The guardrail layer is the defense.

4. Cost of incidents is rising.

Customer-impacting agentic incidents now make news. The cost of a single significant incident exceeds the cost of guardrail work several times over.

Traditional vs. Modern Guardrails for Agentic AI Concepts

- Policy document only vs. enforced runtime controls with evidence

- Single-layer control vs. defense in depth across six categories

- Annual review of guardrails vs. quarterly operating cadence

- Untested kill switches vs. tested kill switches with documented invocation criteria

In summary: Guardrails are the substance of AI governance in 2026, not the wrapper.

Details About the Core Components of Guardrails for Agentic AI: What Are You Designing?

Let's go through each layer.

1. Tool-Level Controls

Each tool enforces its own input validation, rate limits, and authorization checks.

Per-tool controls:

- Input validation against contract

- Rate limits and per-tenant quotas

- Authorization checks for role-bound actions

2. Output Validation

Before any agent output reaches a user or downstream system, validate it.

Validation categories:

- Schema and structural correctness

- Policy compliance and safety filters

- Hallucination and citation checks for grounded answers

3. Kill Switches

Documented conditions under which the agent stops, with a tested runtime mechanism.

Kill-switch design:

- Per-task and per-fleet stop conditions

- Tested quarterly in production-like environments

- Documented invocation criteria and operator authority

4. Blast-Radius Scoping

Autonomy is matched to the blast radius of each action.

Scoping rules:

- High-blast actions default to HITL

- Low-blast actions can be high autonomy

- Scoping is enforced at the tool layer, not the prompt layer

5. Audit Trail

Every agent run captures plan, tool calls, intermediate state, and final outcome.

Audit requirements:

- Queryable interface for auditors and risk reviewers

- Retention period that meets regulatory requirements

- Append-only storage with tamper-evidence

Benefits Gained from Layered Controls and Evidence Capture

- Defense in depth across multiple control categories

- Defensible posture in audit and regulator review

- Faster recovery and shorter incident timelines

How It All Works Together

Tool-level controls catch most issues at the input. Output validation catches what slips through. Kill switches stop the agent when systemic conditions are detected. Blast-radius scoping ensures that even allowed actions are appropriate to risk. Audit captures everything for review. HITL is a designed feature for the highest-risk steps. Defense in depth.

Common Misconception

Guardrails are documentation and policy work.

Guardrails are runtime controls. Documentation describes them after the fact. Without runtime enforcement, the policy is a hope.

Key Takeaway: Each control category addresses a different risk surface. Programs that pick two and skip four have predictable gaps.

Real-World Guardrails for Agentic AI in Action

Let's take a look at how guardrails for agentic ai operates with a real-world example.

We worked with a healthcare technology company shipping an agentic system that touches PHI, with these constraints:

- Tool-level controls for any action affecting patient records

- Output validation for any clinical text shown to users

- HITL approval for any action with patient-safety implications

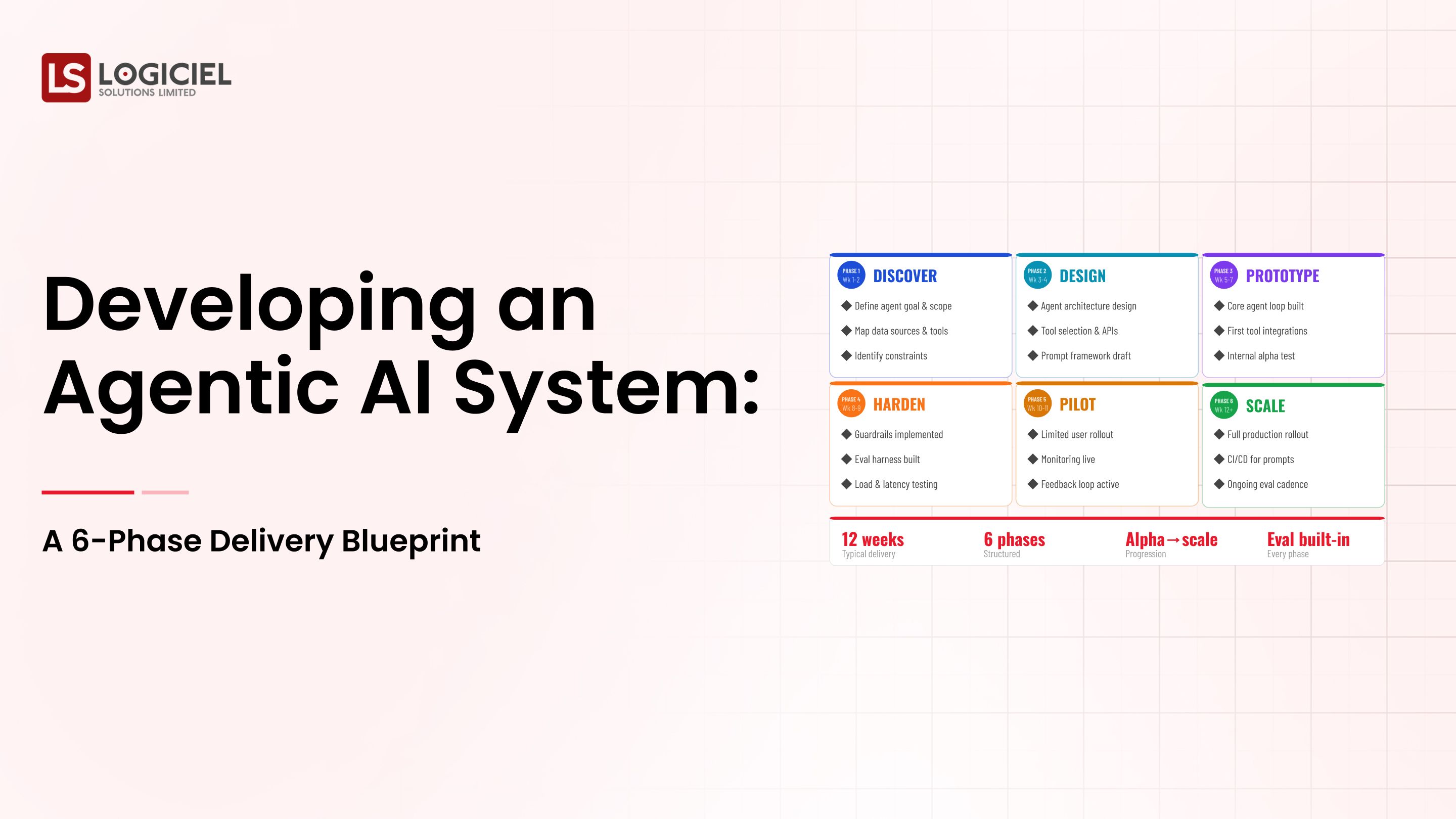

Step 1: Map the Action Surface

List every action the agent can take, organized by blast radius. The list is the foundation of the guardrail layer.

- Per-action blast-radius classification

- Required controls per action

- Owner per control category

Step 2: Design Tool-Level Controls

For each action, specify input validation, rate limits, authorization checks, and kill switches.

- Per-tool input contract

- Per-tenant rate limits

- Authorization checks tied to identity

Step 3: Design Output Validation

Schema, policy, hallucination, and citation checks before any output reaches a user.

- Block at validation layer when checks fail

- Capture blocked attempts in audit

- Surface trends for review

Step 4: Build the Audit Trail and HITL Surfaces

Capture plan, tool calls, intermediate state, and final outcome. Build queryable interfaces.

- Append-only audit store

- Queryable interface for auditors

- HITL approval UX for high-blast actions

Step 5: Operate the Guardrails on a Cadence

Quarterly review; new tools require new controls; autonomy changes require re-evaluation; kill switches tested quarterly.

- Quarterly guardrail review session

- Documented changes with rationale

- Tabletop exercise with risk function

Where It Works Well

- Defense in depth across all six control categories

- Evidence capture for every blocked attempt

- Quarterly operating cadence to prevent drift

Where It Does Not Work Well

- Prompt-only guardrails treated as enforced controls

- Single-layer guardrails creating one point of failure

- Static guardrails that drift as the system changes

Key Takeaway: Guardrails done well let you ship more capable agents into more sensitive workflows than your competitors. The capability is the system around the model, not the model itself.

Common Pitfalls

i) Prompt-only guardrails

Telling the model not to do something in a prompt is a request, not a guardrail. Build runtime controls.

- Prompts are advisory; controls are enforced

- Audit response to a regulator depends on enforcement

- Test by trying to bypass the guardrail in eval

ii) Single-layer guardrails

Putting all controls in one layer creates a single point of failure. Defense in depth across multiple categories.

iii) Untested kill switches

A kill switch you have not tested is a kill switch you do not have. Test quarterly. Document the test.

iv) Audit logs without queryability

Logs that exist but cannot be queried are not an audit trail. Build the queryable interface.

Takeaway from these lessons: Most guardrail failures are not technical; they are operational. The runtime layer exists; the cadence and evidence layer are missing.

Guardrails for Agentic AI Best Practices: What High-Performing Teams Do Differently

1. Build defense in depth

All six categories of control. Each addresses a different risk surface. Skipping any creates a predictable gap.

2. Capture evidence of every blocked attempt

The auditor's first question is what the guardrails prevented. Without evidence, the answer is unconvincing.

3. Test the kill switch quarterly

In production-like environments. With documented criteria. By the operator who would invoke it under real conditions.

4. Schedule quarterly guardrail reviews

New tools, new actions, new autonomy levels. Without review cadence, the guardrail layer erodes silently.

5. Treat guardrails as part of the product, not as overhead

Capable agents in sensitive environments require strong guardrails. The guardrails are the capability, not a constraint on it.

Logiciel's value add is helping teams design the guardrail layer alongside the agent itself, with the runtime controls, evidence capture, and operating cadence that turn policy into enforcement.

Takeaway for High-Performing Teams: High-performing teams treat guardrails as engineering, not as policy. The discipline is the difference between a program that scales and a program that incidents.

Signals You Are Designing Guardrails for Agentic AI Correctly

How do you know the guardrails for agentic ai program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the path from programs that look like progress.

- The team can describe failure modes without flinching. People who actually run guardrails for agentic ai systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. Guardrails for Agentic AI depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, guardrails for agentic ai shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

Guardrails for agentic AI are the substance of governance, not the wrapper. The runtime controls, the evidence, and the cadence together make the system safe.

Key Takeaways:

- Guardrails are runtime controls plus evidence plus cadence

- Defense in depth across six categories beats single-layer enforcement

- Test the kill switch; review the layer quarterly; capture every blocked attempt

When guardrails are designed and operated well, the benefits compound:

- Capability to ship agents into more sensitive workflows

- Defensible posture in audit and regulator review

- Faster recovery when incidents occur

- Reusable control patterns for the next agent

From Data Chaos to Data Confidence

Inside a 6-month plan that turned 47 fragile pipelines into 98.7% reliability.

Call to Action

If you are shipping agentic AI, the work this month is to map the action surface, design tool-level controls, and build the audit trail.

Learn More Here:

At Logiciel Solutions, we work with VPs of AI on guardrail design and the operating cadence that keeps guardrails effective over time.

Explore how to design your agentic AI guardrails.

Frequently Asked Questions

What are guardrails for agentic AI?

The runtime controls that prevent the agent from taking actions outside an approved envelope. Six categories: tool-level controls, output validation, kill switches, blast-radius scoping, audit trail, HITL checkpoints.

Are prompt instructions guardrails?

No. Prompt instructions are advisory; guardrails are enforced runtime checks. The audit response to a regulator is fundamentally different.

Which control category matters most?

Tool-level controls have the highest enforcement leverage. But single-category enforcement creates gaps. Use all six.

How do we audit-proof the system?

Capture plan, tool calls, intermediate state, final outcome, and blocked attempts. Build a queryable interface. Retain for the period regulation requires.

What is the biggest mistake in guardrail programs?

Treating guardrails as policy work instead of runtime engineering. Without enforcement, the policy is decoration.