There is an agentic AI initiative on your roadmap that the team is calling complex but exciting. Your job is to make sure the team treats it as a delivery problem, not a research problem. Excitement does not survive the first incident; structured delivery does.

This is not a piece on AI capability. It is a piece on the delivery discipline that determines whether the capability ships safely.

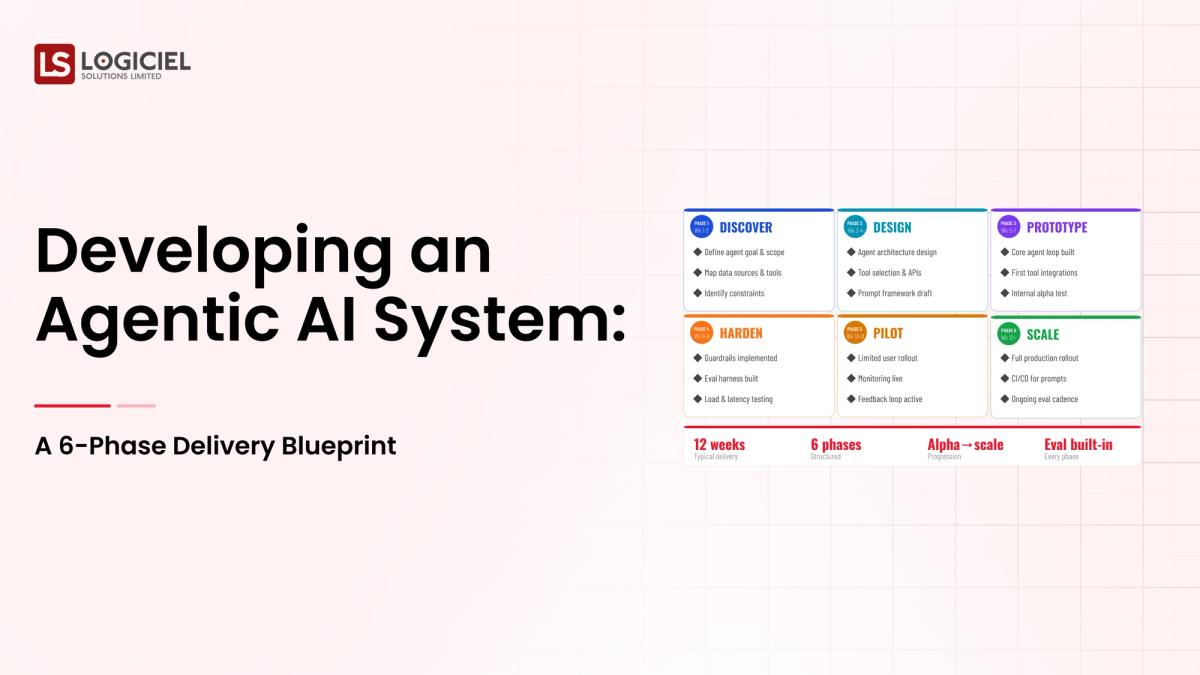

A modern agentic AI delivery follows a structured phase plan with clear deliverables, owners, and gates. It also resists the urge to skip phases that feel like overhead.

Reliability as Competitive Advantage

Inside a published-SLA program that turned silent reliability gains into a +42 NPS swing.

However, most teams new to agentic AI ship the agent before the controls and learn the hard way which phases were essential.

If you are a VP Engineering and are responsible for building or scaling your agentic AI delivery, the intent of this article is:

- Define what agentic AI delivery looks like end-to-end

- Walk through the six-phase blueprint with deliverables for each phase

- Lay out the team shape, timeline, and operating model that turn a build into a system

To do that, let's start with the basics.

What Is Developing an Agentic AI System? The Basic Definition

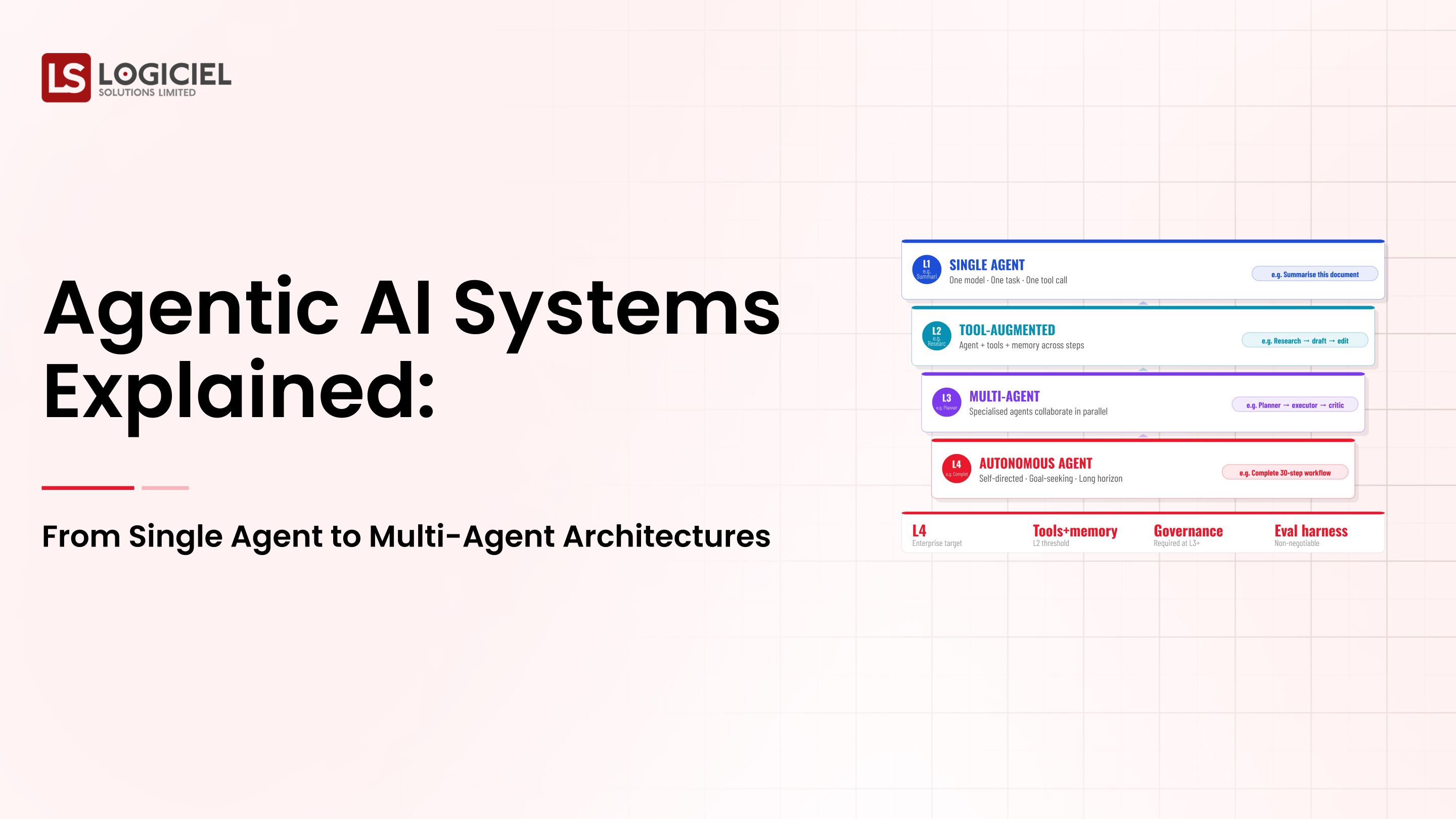

At a high level, developing an agentic AI system means designing, building, evaluating, deploying, and operating a system where a model plans actions, calls tools, observes outcomes, and decides what to do next, with the controls and operating model that let it run safely.

To compare:

If non-agentic AI delivery is shipping a feature, agentic AI delivery is shipping a service. The discipline required is closer to running a production database than launching a marketing page.

Why Is Developing an Agentic AI System Necessary?

Issues that Developing an Agentic AI System addresses or resolves:

- Avoiding the 'clever demo, fragile production' arc that wastes quarters

- Producing audit-grade evidence for risk and compliance reviews

- Building a platform layer that compounds across future agents

Resolved Issues by Developing an Agentic AI System

- Sequences the work so upstream decisions enable downstream ones

- Forces deliverables that prevent shortcut shipping

- Aligns engineering and risk at each phase gate

Core Components of Developing an Agentic AI System

- Phase 1. Workflow and outcome

- Phase 2. Blast radius and controls

- Phase 3. Tool layer design

- Phase 4. Agent design and eval harness

- Phase 5. Controlled rollout and operating model

- Phase 6. Scale and continuous improvement

Modern Developing an Agentic AI System Tools

- LangGraph, LangChain Agents, DSPy for agent orchestration

- Anthropic Claude, OpenAI Assistants, AWS Bedrock Agents for managed runtimes

- LangSmith, Helicone, Arize for agent observability

- Pinecone, Weaviate, pgvector for memory and retrieval

- Internal tool wrappers and policy engines for control

These tools form the typical platform stack for enterprise agentic delivery in 2026.

Other Core Issues They Will Solve

- Documents the operating model before the first incident

- Builds reusable tool surfaces and eval harnesses

- Creates audit-grade traces that survive regulator review

In Summary: Developing an agentic AI system is a six-phase delivery discipline that turns a clever agent into a safe production service.

Importance of Developing an Agentic AI System in 2026

Structured delivery for agentic AI matters more in 2026 than for any prior generation of AI work. Four reasons explain why.

1. Agents take real action.

The blast radius of agentic systems is larger than non-agentic AI. Wrong actions in production are not learning opportunities; they are incidents.

2. Operating models are still being invented.

Most enterprises do not have a runbook for agentic on-call. The team that ships first writes the runbook; the runbook is part of the deliverable.

3. Audit and regulatory surfaces are growing.

Risk committees and regulators are starting to ask agentic-specific questions. Programs without designed audit trails are programs that fail those reviews.

4. Reuse compounds the investment.

The platform you build for the first agent gets reused by the next. The first delivery is expensive; the second is fast; the fifth feels obvious.

Traditional vs. Modern Developing an Agentic AI System Concepts

- Code-first delivery vs. design-first phased delivery

- End-of-project eval vs. eval as a phase deliverable

- Operating model as afterthought vs. operating model as phase five deliverable

- Single-shot rollout vs. controlled population then scale

In summary: The phase blueprint exists to keep delivery disciplined when excitement and pressure conspire to skip steps.

Details About the Core Components of Developing an Agentic AI System: What Are You Designing?

Let's go through each layer.

1. Phase 1. Workflow and Outcome

Deliverable: a one-page workflow document with sign-off.

What goes in the document:

- Business outcome in one sentence

- Sequence of steps required to produce it

- Systems of record each step touches

- Sign-off from line-of-business owner

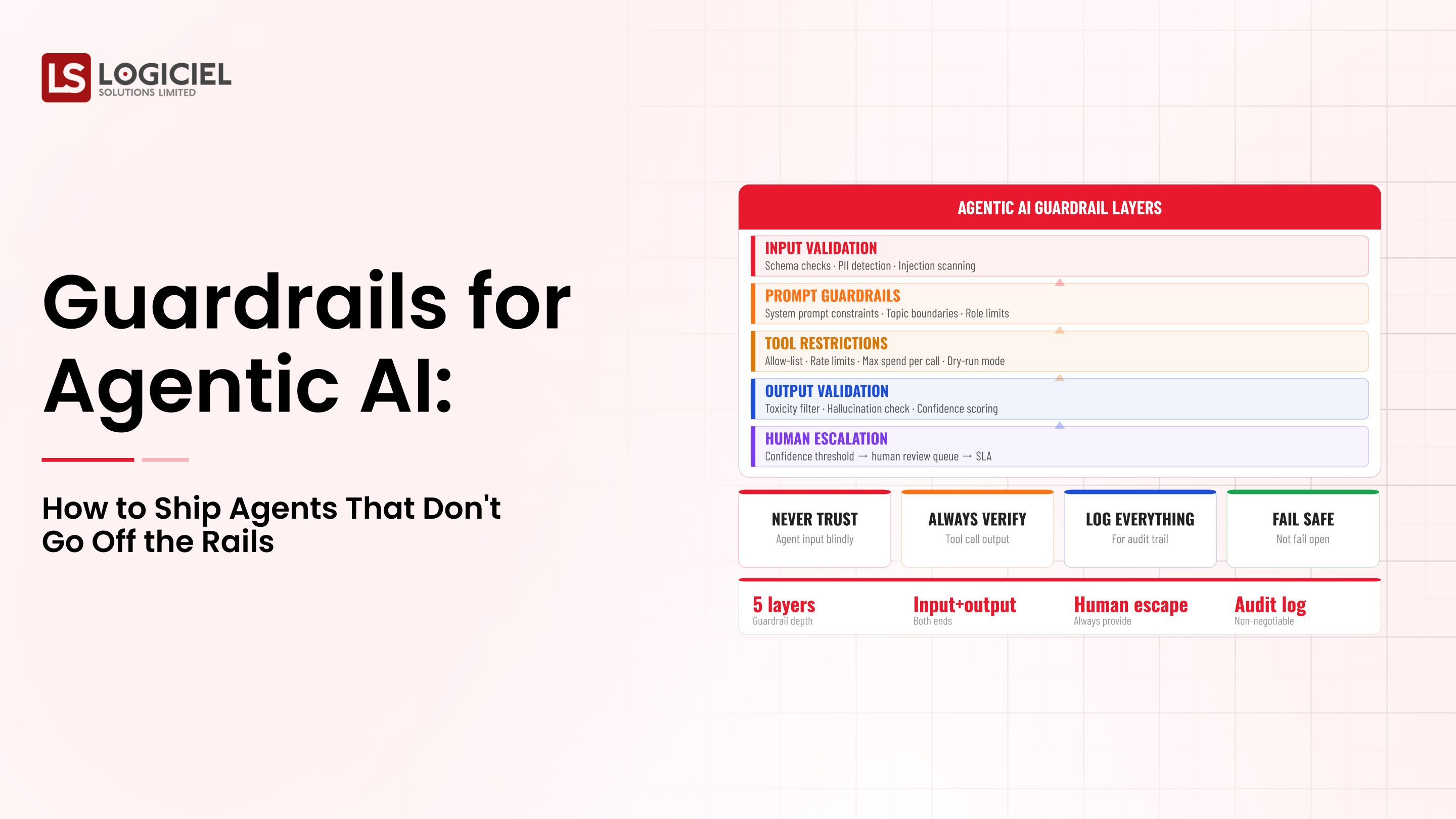

2. Phase 2. Blast Radius and Controls

Deliverable: a blast-radius register with autonomy levels.

What the register names:

- Worst-case impact of each step going wrong

- Required controls per step

- Autonomy level (HITL or autonomous) per step

3. Phase 3. Tool Layer Design

Deliverable: a tool surface specification.

Tool spec contents:

- Input contract per tool

- Output contract and failure modes

- Rate limits, authorization, kill-switch

4. Phase 4. Agent Design and Eval Harness

Deliverable: agent architecture and eval harness running on schedule.

What ships in this phase:

- Single vs. multi-agent decision documented

- Coordination shape and memory model decided

- Sequence eval covering happy, recoverable, unrecoverable, adversarial cases

5. Phase 5. Controlled Rollout and Operating Model

Deliverable: production deployment to a small population, plus operating model.

Operating model components:

- On-call rotation and runbooks

- Kill-switch test and incident playbook

- Audit-trail design and retention policy

Benefits Gained from Phased Delivery and Operating Discipline

- Predictable delivery without skipped foundations

- Reusable platform layer for the next agent

- Audit-grade evidence from day one of production

How It All Works Together

Each phase has explicit deliverables. A program is not in the next phase until the current phase has its deliverables. Skipping deliverables is the most reliable way to ensure rework later. Phase one feeds phase two; phase two constrains phase three; phase three enables phase four; phase four prepares phase five; phase five sustains phase six.

Common Misconception

Agentic AI delivery is mostly about prompt and model tuning.

Coding is roughly thirty percent of the program. Design, eval, controls, and operating model are the rest. Skipping the non-coding work is how programs ship demos and incidents.

Key Takeaway: Each phase has a specific deliverable that protects later phases. Skipped phases create rework, not speed.

Real-World Developing an Agentic AI System in Action

Let's take a look at how developing an agentic ai system operates with a real-world example.

We worked with a financial services company developing an agentic system for internal account operations, with these constraints:

- Strict audit and rollback requirements

- HITL approval for any high-blast action

- Reusable platform layer for future agents in the portfolio

Step 1: Workflow and Outcome Document

One-page document; sign-off from line of business; named outcome and system owners.

- Business outcome in one sentence

- Step-by-step workflow

- Two named owners and a sponsor

Step 2: Blast-Radius Register and Tool Surface

Each step categorized by blast radius; tool surface narrowed deliberately.

- Worst-case impact per step

- Autonomy level per step

- Tool surface spec with input/output contracts

Step 3: Eval Harness for Sequences

Eval cases cover sequences, not just turns; runs daily; regressions block deploys.

- Happy, recoverable, unrecoverable, adversarial cases

- Daily run with alerting

- Public quality dashboard

Step 4: Controlled Rollout to a Supervised Population

Internal users first; named feedback channels; daily review of agent traces.

- Pilot with named participants

- Feedback channel reviewed daily

- Documented promotion criteria

Step 5: Operating Model and Scale

On-call rotation, runbooks, kill-switch tests, quarterly reviews. Scale only after absorbing first-month learning.

- On-call rotation across engineering and risk

- Quarterly review of tool surface and autonomy

- Sunset criteria for tools that do not earn their keep

Where It Works Well

- Phase deliverables enforced before progressing

- Engineering and risk represented at every phase gate

- Operating model designed in phase five rather than improvised

Where It Does Not Work Well

- Skipping the workflow document because it feels like a formality

- Wide tool surface that grows opportunistically

- Scaling before absorbing pilot learning

Key Takeaway: Phased delivery feels slower in week one and is faster by month six. The discipline pays back twice over by the second program.

Common Pitfalls

i) Phase-skipping

Each phase has deliverables. Programs that ship without producing them rebuild the work later, more expensively.

- Skipping the workflow document is the most common

- Skipping eval is the most expensive

- Skipping the operating model is the slowest to recover from

ii) Tool sprawl

The tool surface grows because adding tools feels productive. Each tool is a control point. Audit quarterly; remove tools not pulling weight.

iii) Eval drift

The eval harness becomes stale because the workflow changed and nobody updated cases. Schedule quarterly eval review.

iv) Operating model drift

Runbooks become stale; rotations become unfair; kill switches become untested. Schedule quarterly operating-model review.

Takeaway from these lessons: Most agentic delivery failures are phase-skipping failures dressed up as engineering challenges.

Developing an Agentic AI System Best Practices: What High-Performing Teams Do Differently

1. Treat phase deliverables as gates

A program does not enter the next phase without producing the current phase's deliverables. Resist the urge to overlap phases for speed.

2. Build sequence eval as a first-class system

Eval that runs daily, blocks regressions, and exercises sequences not turns. The eval harness is production code.

3. Design operating model in phase five

Runbooks, kill-switch tests, on-call rotation, audit-trail retention. The operating model is the difference between a project and a service.

4. Pilot with a controlled population before scaling

First-month production data is the most valuable input you will get. Capture it; absorb it; update phase deliverables; then scale.

5. Schedule quarterly platform reviews

Tool surface, autonomy levels, eval cases, operating model. Without the cadence, the platform erodes silently.

Logiciel's value add is running phase delivery alongside enterprise teams, including the design, eval, and operating-model work that turns a build into a service.

Takeaway for High-Performing Teams: High-performing teams treat agentic delivery like database delivery: structured, reviewed, operated.

Signals You Are Designing Developing an Agentic AI System Correctly

How do you know the developing an agentic ai system program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the path from programs that look like progress.

- The team can describe failure modes without flinching. People who actually run developing an agentic ai system systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. Developing an Agentic AI System depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, developing an agentic ai system shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

Developing an agentic AI system is a delivery discipline. The phase blueprint exists to keep the discipline strong when excitement and pressure conspire against it.

Key Takeaways:

- Six phases with explicit deliverables; each phase gates the next

- Coding is thirty percent of the work; design, eval, and operating model are the rest

- Pilot with a controlled population; scale after absorbing learning

When agentic AI delivery follows the blueprint, the benefits compound:

- Predictable delivery without rework

- Reusable platform layer for the next agent

- Audit-grade evidence from day one of production

- Operating model that survives the first incident

6 Vendors to 1 Platform

Inside a 7-month consolidation that cut six tools to one and saved $1.4M.

Call to Action

If you are developing an agentic AI system, the move this month is to produce the workflow document, the blast-radius register, and the tool surface specification.

Learn More Here:

- Agentic Systems Multi Agent Architectures Autonomous AI Engineering Teams

- AI Observability and Metrics Agentic Teams

- Agentic AI Teams and Skills Startups

At Logiciel Solutions, we run agentic AI workshops with engineering and AI leaders, walking through the phase blueprint with your specific use case.

Explore how to develop your agentic AI system.

Frequently Asked Questions

What does developing an agentic AI system involve?

Workflow design, blast-radius analysis, tool layer design, agent design, eval harness construction, controlled rollout, and operating-model setup. Coding is about thirty percent of the program.

How long does a first agentic delivery take?

Twelve to twenty weeks to a controlled production pilot. Programs that promise faster are usually skipping eval or operating-model work.

What does the team look like?

Engineering lead, applied scientist or ML engineer, platform engineer for tools and observability, security partner, domain expert, operator. Six people for a first program.

Single agent or multi-agent for a first program?

Single agent. Premature multi-agent design is a common cause of debuggability problems. Decompose only if the workflow asks for it.

What is the biggest mistake in agentic AI development?

Phase-skipping. Programs that ship without producing phase deliverables rebuild the work later, more expensively.