An executive team is asking which agentic AI workflow to fund first. They have seen the demos, and the vendors are pitching everything. The honest answer is that some workflows pay off in two quarters and some pay off in eighteen months, and the difference is rarely about model capability.

Choosing the wrong one first is more than a prioritization gap. It is a failure of agentic AI portfolio discipline.

A modern enterprise agentic AI portfolio prioritizes workflows by ROI, blast radius, and time-to-value, not by which demo was most exciting last week.

However, many programs fund the most visible workflow first and discover the prioritization gap when the program runs out of credibility before the next funding cycle.

If you are a VP of Product responsible for building or scaling your enterprise agentic AI portfolio, this article aims to:

- Define what makes an agentic AI workflow pay off first

- Walk through the prioritization framework that separates wins from theater

- Lay out real examples of high-ROI early agentic workflows

To do that, let's start with the basics.

What Is Agentic AI in the Enterprise? The Basic Definition

At a high level, an agentic AI workflow that pays off first is one with bounded blast radius, clear automation value, available training and reference data, and a path from pilot to scale that does not require rebuilding the rest of the company.

An analogy:

If picking the right agentic workflow is choosing the right campsite, the criteria are not the view but the access road, the water source, and the weather forecast. Picking the prettiest spot rarely means picking the right one.

Why Is Agentic AI in the Enterprise Necessary?

Issues that Agentic AI in the Enterprise addresses or resolves:

- Funding the workflow that delivers ROI in months, not years

- Avoiding the credibility loss of a slow first program

- Building a reusable platform layer for the next agent

Issues Resolved by Agentic AI in the Enterprise

- Surfaces ROI, blast radius, and time-to-value as explicit criteria

- Provides the framework procurement and product teams can share

- Builds organizational pattern-matching for future portfolio decisions

Core Components of Agentic AI in the Enterprise

- ROI assessment per workflow (automation value, time saved, error reduction)

- Blast radius classification (high, medium, low)

- Time-to-value estimate per workflow

- Data and reference availability

- Operating model fit

Modern Agentic AI in the Enterprise Tools

- ROI scorecards calibrated for agentic workflows

- Time-to-value estimation frameworks

- Blast radius classification rubrics

- Data readiness assessments per workflow

- Operating model templates for agentic AI

Tools support the framework; the discipline of using it honestly is the differentiator.

Other Core Issues They Will Solve

- Reduces program failure rates through pre-flight prioritization

- Strengthens sponsor confidence through predictable wins

- Builds a reusable platform across future agentic programs

In Summary: Agentic AI in the enterprise pays off first on workflows where ROI, blast radius, and time-to-value align.

Importance of Agentic AI in the Enterprise in 2026

Workflow prioritization matters more in 2026 than at any prior point. Four reasons.

1. Agentic capability is finally enterprise-ready.

The bottleneck is no longer model quality. It is choosing the workflow with the highest payoff per quarter.

2. Sponsor confidence is fragile.

First agentic programs that take eighteen months erode sponsor confidence. Two-quarter wins build it.

3. Platform reuse compounds.

The platform built for the first agentic workflow gets reused by the next. Pick a workflow that builds the right platform.

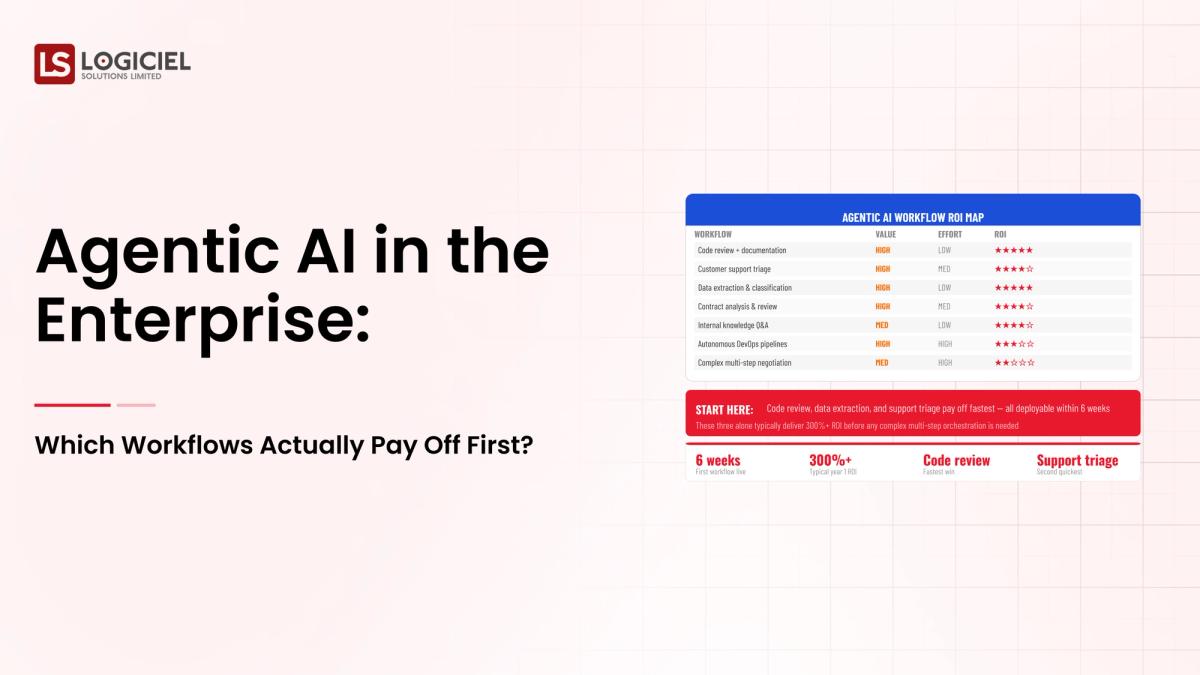

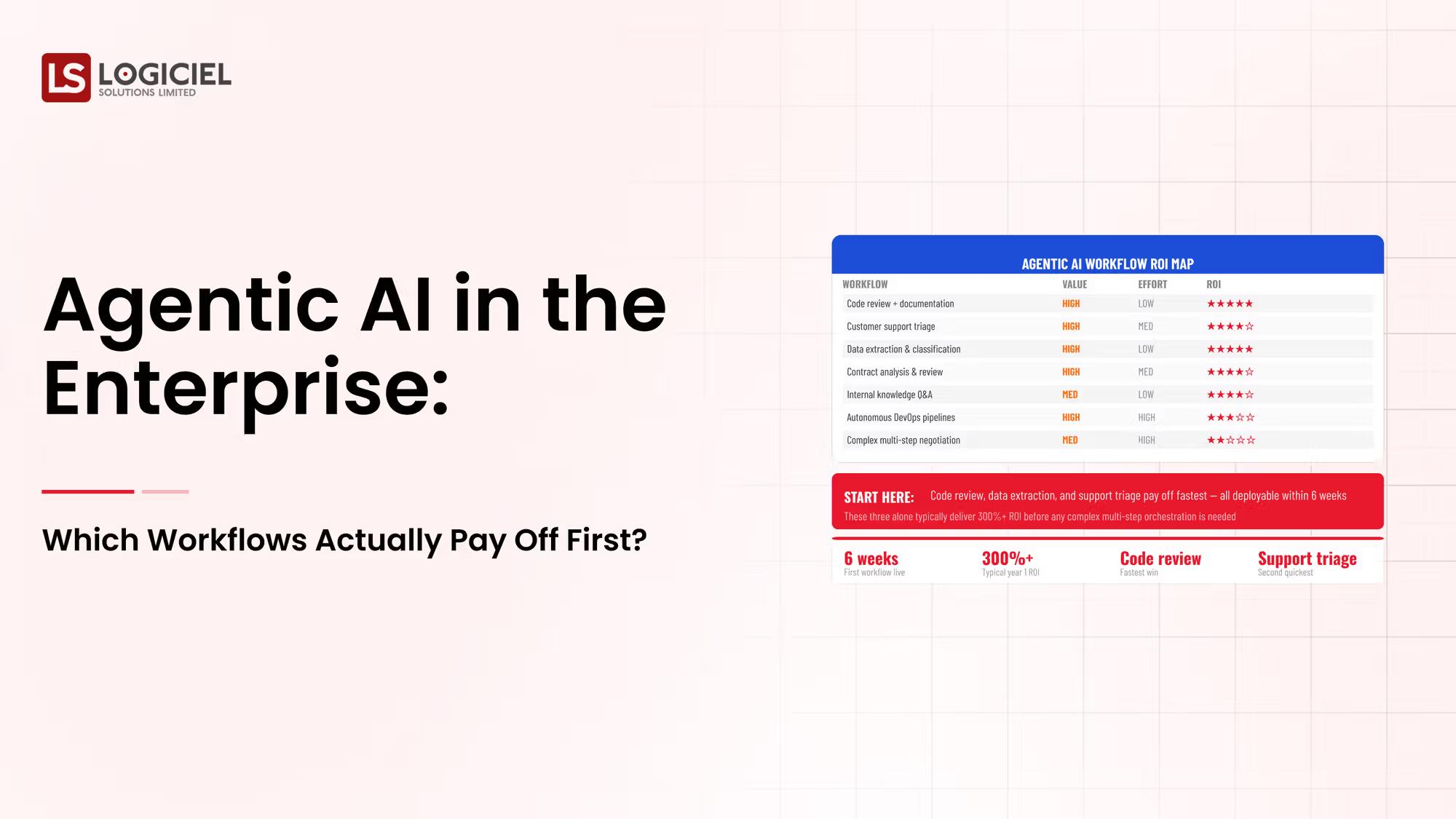

4. ROI patterns are now predictable.

Across hundreds of enterprise agentic programs, the workflows that pay off first share clear characteristics.

Traditional vs. Modern Agentic AI in the Enterprise Concepts

- Most-visible-workflow-first vs. ROI-prioritized portfolio

- Demo-led prioritization vs. framework-driven prioritization

- Single-workflow scope vs. portfolio with sequencing

- Annual reviews vs. quarterly portfolio rebalancing

In summary: The first agentic workflow shapes the trajectory of every program that follows.

Details About the Core Components of Agentic AI in the Enterprise: What Are You Designing?

Let's go through each layer.

1. ROI Layer

Measurable value of the workflow.

ROI components:

- Hours of human work automated

- Error reduction or quality improvement

- Revenue lift or cost reduction
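
The three ROI components above can be rolled into a single per-quarter figure. The following is a minimal sketch, not a prescribed formula: the `RoiInputs` fields, the inclusion of a run cost, and the payback calculation are assumptions added for illustration, and the numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class RoiInputs:
    """Hypothetical per-workflow estimates; these field names are assumptions."""
    hours_automated_per_quarter: float
    loaded_hourly_cost: float              # fully loaded cost of one human hour
    error_cost_avoided_per_quarter: float  # rework or quality cost no longer incurred
    revenue_lift_per_quarter: float
    run_cost_per_quarter: float            # inference, tooling, and reviewer time


def quarterly_net_value(r: RoiInputs) -> float:
    """Sum the three value drivers, then subtract the cost of running the agent."""
    labor_value = r.hours_automated_per_quarter * r.loaded_hourly_cost
    gross = labor_value + r.error_cost_avoided_per_quarter + r.revenue_lift_per_quarter
    return gross - r.run_cost_per_quarter


def payback_quarters(r: RoiInputs, build_cost: float) -> float:
    """Quarters needed to recover the build cost; infinity if net value is not positive."""
    net = quarterly_net_value(r)
    return float("inf") if net <= 0 else build_cost / net


# Illustrative numbers only.
example = RoiInputs(1000, 80.0, 10_000.0, 0.0, 30_000.0)
print(quarterly_net_value(example))        # 60000.0
print(payback_quarters(example, 120_000))  # 2.0
```

Keeping the value drivers as separate fields makes it easier to document which driver each estimate rests on when candidates are compared.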

2. Blast Radius Layer

What can go wrong if the agent fails.

Blast radius categories:

- Low: internal-only with reversible decisions

- Medium: customer-facing with reversible outcomes

- High: regulator or money-affecting actions
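
The categories above can be written down as a small rubric so that every candidate is classified the same way. This is a sketch under stated assumptions: the three boolean inputs are hypothetical, and treating any irreversible action as high is a conservative choice of this example, since the categories above do not cover that case.

```python
from enum import Enum


class BlastRadius(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


def classify_blast_radius(
    customer_facing: bool,
    reversible: bool,
    touches_money_or_regulator: bool,
) -> BlastRadius:
    """Map three yes/no questions onto the low, medium, and high categories."""
    if touches_money_or_regulator:
        return BlastRadius.HIGH
    if not reversible:
        # Assumption: irreversible actions are treated as high, to be conservative.
        return BlastRadius.HIGH
    if customer_facing:
        return BlastRadius.MEDIUM
    return BlastRadius.LOW


# An internal workflow whose decisions can be undone.
print(classify_blast_radius(False, True, False))  # BlastRadius.LOW
```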

3. Time-to-Value Layer

How long until the workflow delivers measurable value.

Time-to-value factors:

- Data and reference availability

- Tool surface complexity

- Operating model fit

4. Data and Reference Layer

What the agent needs to perform.

Data readiness:

- Available training and reference data

- Quality and freshness

- Access controls and audit posture

5. Operating Model Fit

Whether the organization can operate the workflow.

Fit factors:

- On-call and incident response capability

- Reviewer pool for human-in-the-loop (HITL) workflows

- Stakeholder alignment on outcomes

Benefits Gained from ROI Prioritization and Blast Radius Discipline

- First wins delivered in two quarters

- Sponsor confidence sustained for the next program

- Reusable platform layer that compounds across the portfolio

How It All Works Together

ROI surfaces value. Blast radius surfaces risk. Time-to-value surfaces feasibility. Data readiness surfaces prerequisites. Operating model fit surfaces sustainability. Together, the layers turn workflow prioritization from a vendor pitch into a portfolio decision.
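
One way to make the five layers work together is a scorecard in which data readiness and operating model fit act as gates, and the remaining three layers produce a comparable number. The sketch below is only one possible scheme: the scales, the `WorkflowScore` fields, and the formula are assumptions made for illustration, and a real program would calibrate its own.

```python
from dataclasses import dataclass


@dataclass
class WorkflowScore:
    """Hypothetical scorecard row; scales are assumptions of this sketch."""
    name: str
    roi: int                # 1 (low value) to 5 (high value)
    blast_radius: int       # 1 (low), 2 (medium), 3 (high)
    quarters_to_value: int  # estimated quarters until measurable value
    data_ready: bool
    operating_fit: bool


def priority(w: WorkflowScore) -> float:
    """Gate on prerequisites, then reward value and penalize risk and delay."""
    if not (w.data_ready and w.operating_fit):
        return 0.0
    return w.roi / (w.blast_radius * w.quarters_to_value)


# Invented candidates: a modest internal workflow and a flashier, riskier one.
internal = WorkflowScore("internal workflow", 4, 1, 2, True, True)
flashy = WorkflowScore("flashy demo workflow", 5, 3, 6, True, True)
print(priority(internal) > priority(flashy))  # True
```

The gate reflects the idea that a workflow without data or an operating model is not yet a candidate, however strong its ROI estimate looks.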

Common Misconception

The most exciting agentic demo is the right first workflow.

The most exciting demo and the highest-ROI first workflow are rarely the same. Prioritization is structural, not visual.

Key Takeaway: Each layer surfaces a different prioritization criterion. Programs that skip layers fund the wrong workflow.

Real-World Agentic AI in the Enterprise in Action

Let's look at how agentic AI in the enterprise operates, using a real-world example.

We worked with a VP of Product weighing five candidate agentic workflows for a Fortune 500 company, with these constraints:

- First program must deliver measurable ROI inside two quarters

- Reusable platform layer for future workflows

- Acceptable blast radius for first deployment

Step 1: Score Candidate Workflows on ROI

Hours automated, errors reduced, revenue lifted.

- Per-workflow ROI estimate

- Documented value drivers

- Comparison across candidates

Step 2: Classify Blast Radius

Low, medium, high based on what can go wrong.

- Per-workflow blast radius classification

- Required controls per classification

- Acceptable scope for first program

Step 3: Estimate Time-to-Value

Data and reference, tool surface, operating fit.

- Time-to-value per workflow

- Data availability assessment

- Tool surface complexity

Step 4: Sequence the Portfolio

First workflow optimizes for ROI plus low blast radius plus short time-to-value.

- First workflow chosen

- Sequence of next workflows

- Platform reuse plan
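
The sequencing step can be sketched as a filter followed by a sort. This is an illustration rather than the method used in the engagement: the `Candidate` fields, the two-quarter threshold taken from the constraints above, and the ordering of later workflows by risk, then delay, then ROI are assumptions of this example.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    """Hypothetical candidate record for one workflow."""
    name: str
    roi_estimate: float
    blast_radius: str       # "low", "medium", or "high"
    quarters_to_value: int


RISK_ORDER = {"low": 0, "medium": 1, "high": 2}


def sequence_portfolio(candidates: list[Candidate], max_first_quarters: int = 2) -> list[Candidate]:
    """Pick the first workflow under the constraints, then order the rest."""
    eligible = [
        c for c in candidates
        if c.blast_radius == "low" and c.quarters_to_value <= max_first_quarters
    ]
    if not eligible:
        raise ValueError("no candidate fits the first-program constraints")
    first = max(eligible, key=lambda c: c.roi_estimate)
    rest = sorted(
        (c for c in candidates if c is not first),
        key=lambda c: (RISK_ORDER[c.blast_radius], c.quarters_to_value, -c.roi_estimate),
    )
    return [first, *rest]


# Invented candidates; names and numbers are placeholders.
portfolio = [
    Candidate("a", 500, "high", 6),
    Candidate("b", 200, "low", 2),
    Candidate("c", 300, "low", 1),
    Candidate("d", 250, "medium", 3),
]
print([c.name for c in sequence_portfolio(portfolio)])  # ['c', 'b', 'd', 'a']
```

Note that the highest-ROI candidate lands last here because it fails the first-program constraints, which is the point of sequencing rather than ranking on ROI alone.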

Step 5: Operate the Cadence

Quarterly portfolio review; reprioritize as data emerges.

- Quarterly portfolio review

- Updated ROI per workflow

- Portfolio sequencing refresh

Where It Works Well

- First workflow with ROI in two quarters and low blast radius

- Platform layer designed for reuse across the portfolio

- Quarterly portfolio rebalancing

Where It Does Not Work Well

- First workflow chosen by demo excitement

- High-blast-radius first workflow without human-in-the-loop (HITL) review

- No platform reuse plan across workflows

Key Takeaway: The first agentic workflow shapes the trajectory of every program that follows. Pick deliberately.

Common Pitfalls

i) Demo-led prioritization

Demos are sales artifacts. ROI scoring is the prioritization criterion.

- Score on ROI, not on demo

- Document value drivers

- Compare across candidates

ii) High blast radius first

First programs benefit from low blast radius. Save high-blast workflows for after the operating model is proven.

iii) No platform reuse plan

First programs that do not build platform reuse leave value on the table for subsequent programs.

iv) Single-workflow scope

Treat agentic AI as a portfolio. Sequencing matters.

Takeaway from these lessons: Most agentic enterprise prioritization regret is demo-led prioritization regret.

Agentic AI in the Enterprise Best Practices: What High-Performing Teams Do Differently

1. Score on ROI, blast radius, time-to-value

Three criteria. Documented per workflow. Compared across candidates.

2. Pick low blast radius first

First programs benefit from low blast radius. Build the operating model on safer workflows first.

3. Plan platform reuse

First workflow builds the platform; subsequent workflows ride on it.

4. Sequence the portfolio

Treat agentic AI as a portfolio with sequencing, not a single program.

5. Operate a quarterly cadence

Reprioritize as data emerges; the portfolio is dynamic.

Logiciel's value add is helping VPs of Product run agentic AI portfolio prioritization with ROI, blast radius, and time-to-value scoring.

Takeaway for High-Performing Teams: High-performing product organizations treat agentic AI as a portfolio with sequenced wins.

Signals You Are Designing Agentic AI in the Enterprise Correctly

How do you know the agentic AI in the enterprise program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the path from programs that look like progress.

- The team can describe failure modes without flinching. People who actually run enterprise agentic AI systems will tell you the last three things that broke. People who have only read about them will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. Agentic AI in the Enterprise depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, agentic AI in the enterprise shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

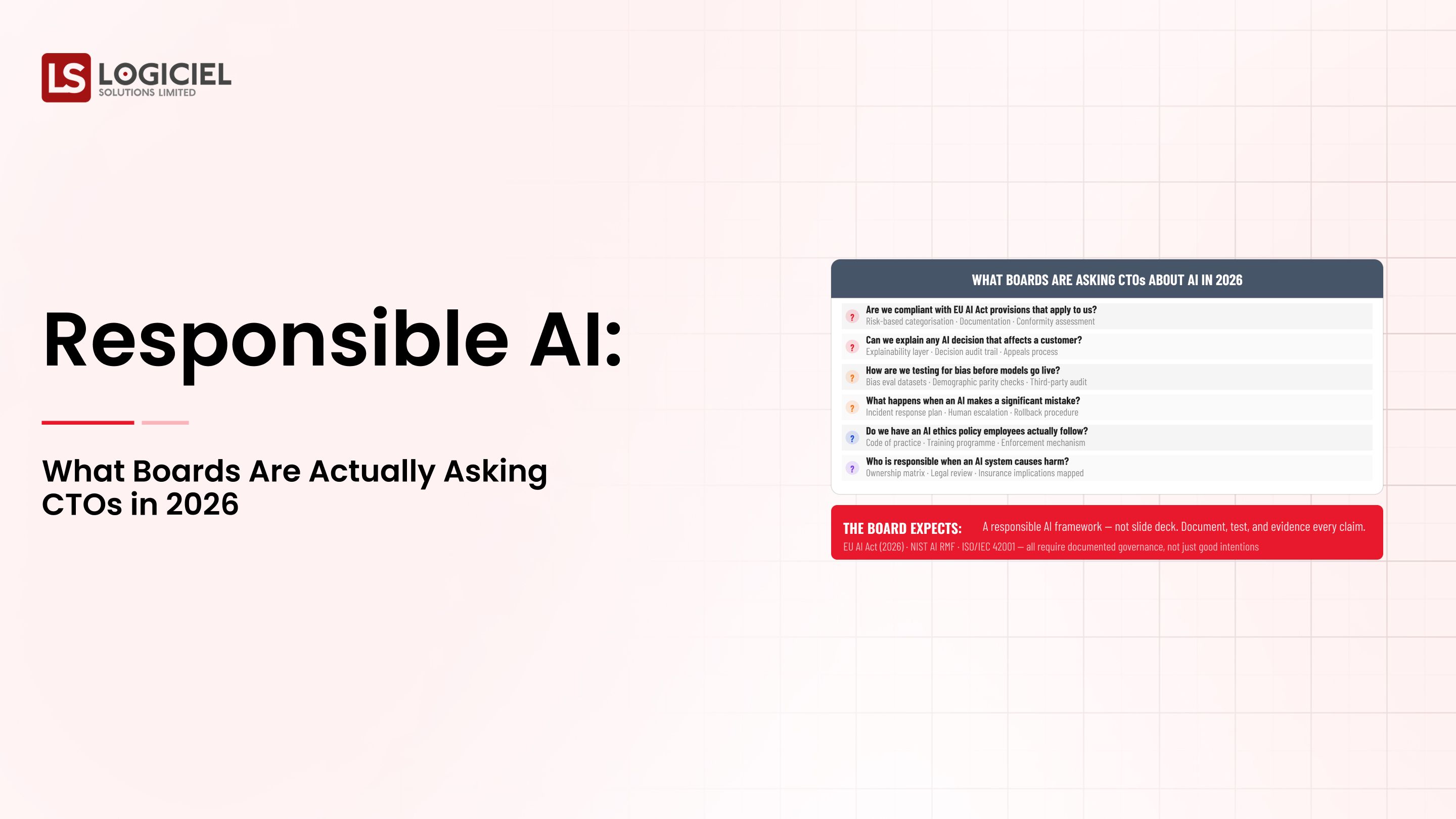

Stakeholder Considerations and Communication

Different stakeholders ask different questions about agentic AI in the enterprise. The board wants to know about risk, ROI, and competitive position. The CFO wants unit economics and a forecast. The CISO wants the threat model and the audit posture. Engineering wants to know what gets built and what gets bought. The line of business wants to know when the value lands and what the experience will look like for users.

Programs that anticipate these questions and prepare answers move faster than programs that improvise. Build a one-page brief for each major stakeholder. Update the briefs quarterly. The cost of preparing them is low; the cost of not preparing them is the meeting where the program loses sponsor confidence.

There is also a communication cadence question. Programs that update sponsors weekly during active delivery, monthly during steady-state operation, and at every incident or major change tend to maintain confidence. Programs that go quiet between milestones tend to surprise leadership. Decide the cadence at kickoff and protect it.

Metrics That Tell You Agentic AI in the Enterprise Is Working

Beyond the success signals listed earlier, there are operational metrics worth tracking week over week. These are not vanity numbers; they are leading indicators that distinguish programs that are compounding from programs that are running in place.

Time from idea to production. How long does it take a new use case to go from concept to a customer-impacting deployment? Programs that compound see this number drop quarter over quarter; programs that do not compound see it grow.

Per-program cost trajectory. Are you spending less per unit of value delivered each quarter, or more? Cost trajectory is the cleanest leading indicator of whether the platform layer is paying back.

Incident severity over time. Severity ticks down as the operating model matures. If incident severity is flat or rising, the operating model has gaps that need attention before the next program ships.

Reuse rate across programs. What fraction of the platform layer is being reused by program two and program three? Reuse rate is the cleanest indicator that the investment in the first program is amortizing.

Stakeholder net promoter. Are your sponsors more or less likely to fund the next program than they were last quarter? Sponsor confidence is hard to measure directly; the trend in approved budget and strategic emphasis is the proxy.
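
Two of the metrics above, reuse rate and cost trajectory, reduce to simple arithmetic once the inputs are tracked. The sketch below assumes a program keeps a list of platform components and a quarterly cost-per-unit-of-value series; the function names, component names, and figures are all hypothetical.

```python
def reuse_rate(platform_components: set[str], used_by_program: set[str]) -> float:
    """Fraction of the platform layer that a later program actually reuses."""
    if not platform_components:
        return 0.0
    return len(platform_components & used_by_program) / len(platform_components)


def cost_is_improving(cost_per_unit_by_quarter: list[float]) -> bool:
    """True when cost per unit of value falls in every consecutive quarter."""
    pairs = zip(cost_per_unit_by_quarter, cost_per_unit_by_quarter[1:])
    return all(later < earlier for earlier, later in pairs)


# Invented component names for a first program's platform layer.
platform = {"auth", "eval", "retrieval", "logging"}
program_two = {"auth", "eval", "billing"}
print(reuse_rate(platform, program_two))    # 0.5
print(cost_is_improving([10.0, 8.0, 7.0]))  # True
```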

Conclusion

Agentic AI in the enterprise pays off first on workflows where ROI, blast radius, and time-to-value align. The framework is well known; the discipline of using it honestly is the work.

Key Takeaways:

- Score on ROI, blast radius, time-to-value

- Pick low blast radius first

- Treat agentic AI as a portfolio with sequencing

When agentic AI portfolio prioritization is run with discipline, the benefits compound:

- First wins delivered in two quarters

- Sponsor confidence sustained for the next program

- Reusable platform layer that compounds

- Stronger procurement and partner conversations

Call to Action

If you are scoping agentic AI for the enterprise, the move this quarter is to score candidate workflows on ROI, blast radius, and time-to-value before any vendor conversation.

Learn More Here:

At Logiciel Solutions, we work with product and AI leaders on agentic portfolio prioritization, including the scoring framework that turns vendor pitches into portfolio decisions.

Explore which agentic AI workflow pays off first for your enterprise.

Frequently Asked Questions

What is an agentic AI workflow that pays off first?

One with bounded blast radius, clear automation value, available training and reference data, and a path from pilot to scale that does not require rebuilding the company.

What kinds of workflows tend to pay off first?

Internal automation with high time savings, customer-support deflection workflows with reversible outcomes, and document-heavy workflows where retrieval and grounding deliver clear value.

Who should own portfolio prioritization?

The VP of Product or Head of AI, with input from engineering, risk, and the line of business that owns each workflow's outcome.

How long should the first agentic program take?

Two quarters to first measurable ROI for low-blast-radius workflows. Programs that take longer almost always picked the wrong workflow first.

What is the biggest mistake in agentic enterprise prioritization?

Letting demo excitement drive prioritization. ROI, blast radius, and time-to-value are the structural criteria; demos are sales artifacts.