A production agent has just executed a tool call your team did not expect. The action completed, the audit log is incomplete, and the line-of-business owner is asking what happened. Your team is reconstructing the trace from fragments while leadership waits.

This is more than an unusual incident. It is a failure in how the agentic AI system was designed.

A modern agentic AI system is more than a model that calls tools. It is a designed combination of autonomy, tool surface, coordination shape, memory model, and control layer that lets the system act safely.

However, many teams ship agents before designing the controls, and discover what they should have built when something goes wrong.

If you are a Head of AI responsible for building or scaling an agentic AI program, this article will:

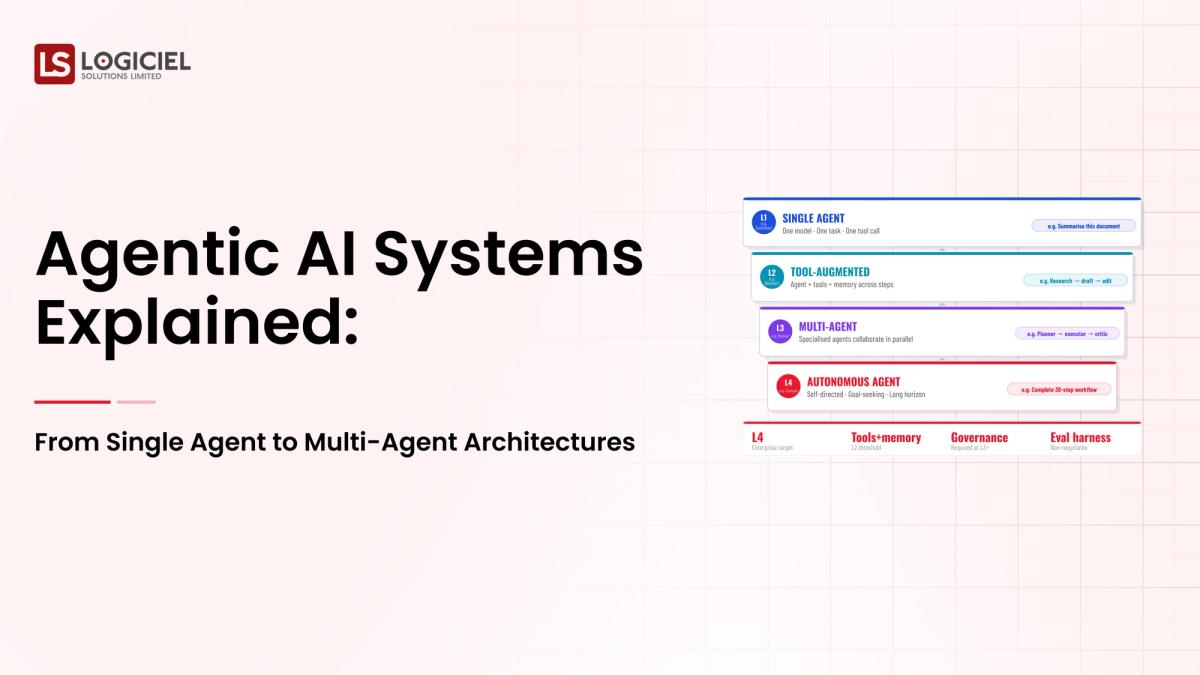

- Define what agentic AI systems actually are

- Walk through single-agent vs. multi-agent architectures and when each fits

- Lay out the controls every production agent needs

To do that, let's start with the basics.

What Are Agentic AI Systems? The Basic Definition

At a high level, an agentic AI system is a model that plans actions, calls tools, observes outcomes, and decides what to do next, possibly in a loop, possibly in coordination with other agents.

To compare:

If a chatbot is a librarian who answers questions, an agent is an assistant who books your travel and updates your calendar. Both are useful; one has a much wider blast radius.

Why Are Agentic AI Systems Necessary?

Problems that agentic AI systems address:

- Closing the gap between text generation and real action

- Automating workflows that decompose into steps with branching logic

- Enabling AI to operate where deterministic code is brittle

How Agentic AI Systems Address Them

- Wraps tool calls in a planning and validation loop

- Adds memory and reflection where pure prompting falls short

- Provides a structured pattern for multi-step automation

Core Components of Agentic AI Systems

- Planner that decomposes a goal into actionable steps

- Tool surface defining what actions the agent can take

- Memory layer (stateless, conversational, episodic, or persistent)

- Reflection and validation layer for self-checking

- Control layer with kill switches, rate limits, and HITL checkpoints

Modern Agentic AI Systems Tools

- LangGraph, LangChain Agents, and DSPy for agent orchestration

- OpenAI Assistants API, Anthropic Claude, AWS Bedrock Agents for managed agent runtimes

- Pinecone, Weaviate, pgvector for memory and retrieval

- LangSmith, Helicone, and Galileo for agent observability

- Custom tool wrappers built on top of internal APIs

These tools reflect the maturation of agentic AI from research demos to production systems.

Other Core Problems They Solve

- Enable controlled automation in workflows previously requiring humans

- Provide audit trails for AI-mediated decisions

- Allow specialization across multiple agents for complex workflows

In summary: Agentic AI system design turns a model that generates text into a system that takes safe, governed action.

Importance of Agentic AI Systems in 2026

Agentic AI has shifted from research curiosity to enterprise capability. Four reasons explain why it matters now.

1. Models are now capable enough to plan multi-step workflows.

Frontier models can decompose goals, choose tools, and recover from intermediate errors. The ceiling is now on the system around the model, not the model itself.

2. Tool ecosystems have matured.

Standardized tool-calling APIs and managed agent runtimes make production agentic systems accessible to enterprise teams without research budgets.

3. Workflow automation ROI is finally compelling.

Agents that automate real human work, not just answer questions, change the business case for AI investment.

4. Governance frameworks now address agentic systems specifically.

Boards, regulators, and auditors are starting to ask agentic-specific questions. Programs without designed controls are programs that struggle in those conversations.

Traditional AI Usage vs. Modern Agentic AI Systems

- Single-call inference vs. multi-step planning with tool use

- Stateless interactions vs. memory-aware long-horizon tasks

- Manual prompt engineering vs. designed agent architectures

- No formal controls vs. layered guardrails with audit

In summary: Agentic AI system design is the foundation of the next wave of enterprise AI work.

The Core Components of Agentic AI Systems in Detail: What Are You Designing?

Let's go through each layer.

1. Planning Layer

Where the agent decomposes a goal into steps.

Planning decisions:

- Single-shot plan vs. iterative replan

- Plan validation against policy

- Plan transparency for audit

2. Tool Surface Layer

The set of actions the agent can take.

Tool surface design:

- Narrow surface by default; expand deliberately

- Per-tool input validation, rate limits, and authorization

- Documented failure modes per tool
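One way to picture a narrow, validated tool surface is a small registry that every tool call must pass through. The sketch below is illustrative only: `Tool`, `ToolSurface`, the role names, and the 60-second sliding window are assumptions made for this example, not part of any specific framework.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Tool:
    """One entry on the tool surface (hypothetical structure)."""
    name: str
    func: Callable[..., Any]
    arg_types: dict[str, type]       # input contract: argument name -> expected type
    allowed_roles: set[str]          # authorization: agent roles allowed to call it
    max_calls_per_minute: int        # per-tool rate limit
    recent_calls: list[float] = field(default_factory=list)


class ToolSurface:
    """Only registered tools can be called, and every call is checked first."""

    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, name: str, role: str, args: dict[str, Any],
             now: float | None = None) -> Any:
        now = time.monotonic() if now is None else now
        tool = self._tools.get(name)
        if tool is None:
            raise PermissionError(f"tool {name!r} is not on the surface")
        if role not in tool.allowed_roles:
            raise PermissionError(f"role {role!r} may not call {name!r}")
        # Input validation: exact argument names, then expected types.
        if set(args) != set(tool.arg_types):
            raise ValueError(f"{name!r} expects arguments {sorted(tool.arg_types)}")
        for key, expected in tool.arg_types.items():
            if not isinstance(args[key], expected):
                raise TypeError(f"{key!r} must be {expected.__name__}")
        # Rate limit: count calls made in the last 60 seconds.
        tool.recent_calls = [t for t in tool.recent_calls if now - t < 60.0]
        if len(tool.recent_calls) >= tool.max_calls_per_minute:
            raise RuntimeError(f"rate limit reached for {name!r}")
        tool.recent_calls.append(now)
        return tool.func(**args)
```

Because unknown tools are rejected by default, expanding the surface requires an explicit `register` call, which keeps each expansion a deliberate and reviewable change.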

3. Memory Layer

What the agent remembers and for how long.

Memory choices:

- Stateless: simple, but limits long-horizon tasks

- Conversational: supports multi-turn interactions but not long-horizon tasks

- Episodic and persistent: enables long-horizon tasks at higher operational cost
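The difference between these memory choices is easier to see side by side. The classes below are a minimal sketch with hypothetical names; the in-memory dictionary stands in for whatever durable store a real deployment would use.

```python
from collections import deque


class StatelessMemory:
    """Remembers nothing: every call starts from an empty context."""

    def remember(self, role: str, text: str) -> None:
        pass

    def context(self) -> list:
        return []


class ConversationalMemory:
    """Keeps only the most recent turns of the current conversation."""

    def __init__(self, max_turns: int) -> None:
        self._turns = deque(maxlen=max_turns)  # older turns drop off automatically

    def remember(self, role: str, text: str) -> None:
        self._turns.append((role, text))

    def context(self) -> list:
        return list(self._turns)


class EpisodicMemory:
    """Keeps a summary per finished task so later tasks can recall it.

    Assumption: a dict stands in for a persistent store or vector index.
    """

    def __init__(self) -> None:
        self._episodes = {}

    def save_episode(self, task_id: str, summary: str) -> None:
        self._episodes[task_id] = summary

    def recall(self, keyword: str) -> list:
        # Naive keyword match; a real system would likely use retrieval.
        return [s for s in self._episodes.values() if keyword.lower() in s.lower()]
```

The operational cost grows down the list: the stateless option needs no storage, the conversational buffer needs a size limit, and the episodic store needs retention, access control, and deletion policies of its own.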

4. Reflection and Validation Layer

Where the agent self-checks before acting.

Validation checks:

- Schema and structural correctness of tool calls

- Policy compliance before execution

- Confidence-based escalation to HITL
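The three checks above can be sketched as a single gate that runs before any tool call executes. Everything specific here is an assumption for illustration: the `issue_refund` tool, the refund cap, the 0.8 confidence floor, and the idea that the planner supplies a confidence score with each proposed call.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    ESCALATE = "escalate to human"
    REJECT = "reject"


@dataclass(frozen=True)
class ProposedCall:
    tool: str
    args: dict
    confidence: float  # assumed to come from the planner, in the range 0..1


# Hypothetical schema and policy values for a support workflow.
SCHEMAS = {"issue_refund": {"ticket_id": int, "amount_cents": int}}
MAX_REFUND_CENTS = 5_000
CONFIDENCE_FLOOR = 0.8


def validate(call: ProposedCall) -> tuple[Decision, str]:
    # 1. Schema and structural correctness.
    schema = SCHEMAS.get(call.tool)
    if schema is None:
        return Decision.REJECT, "unknown tool"
    if set(call.args) != set(schema) or any(
        not isinstance(call.args[key], expected) for key, expected in schema.items()
    ):
        return Decision.REJECT, "arguments do not match schema"
    # 2. Policy compliance before execution.
    if call.tool == "issue_refund" and call.args["amount_cents"] > MAX_REFUND_CENTS:
        return Decision.REJECT, "refund exceeds policy limit"
    # 3. Confidence-based escalation to a human.
    if call.confidence < CONFIDENCE_FLOOR:
        return Decision.ESCALATE, "confidence below floor"
    return Decision.EXECUTE, "ok"
```

The order is a design choice in this sketch: structural and policy failures are rejected outright, and only well-formed, policy-compliant calls are eligible for human review.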

5. Control Layer

Kill switches, rate limits, blast-radius scoping, and audit.

Controls in production:

- Per-task budget enforced at runtime

- Documented kill-switch conditions and tested mechanism

- Audit trail capturing plan, tool calls, intermediate state, final outcome
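As a rough illustration of these controls, the sketch below combines a per-task budget, a kill switch, and an append-only audit trail in one object. The class and field names are hypothetical, and costs are tracked in integer cents purely to keep the arithmetic exact.

```python
import json
import time
from dataclasses import dataclass, field


class KillSwitchTripped(RuntimeError):
    pass


class BudgetExceeded(RuntimeError):
    pass


@dataclass
class ControlLayer:
    """Per-task controls (hypothetical sketch, one instance per task)."""
    max_tool_calls: int
    max_cost_cents: int
    killed: bool = False
    calls_made: int = 0
    cost_cents: int = 0
    audit: list = field(default_factory=list)

    def record(self, kind: str, **details) -> None:
        # The same method can log plans, intermediate state, and final outcomes.
        self.audit.append({"ts": time.time(), "kind": kind, **details})

    def kill(self, reason: str) -> None:
        self.killed = True
        self.record("kill_switch", reason=reason)

    def authorize(self, tool: str, est_cost_cents: int) -> None:
        """Call before every tool execution; raises if the call must not run."""
        if self.killed:
            self.record("blocked", tool=tool, reason="kill switch")
            raise KillSwitchTripped(tool)
        over_calls = self.calls_made + 1 > self.max_tool_calls
        over_cost = self.cost_cents + est_cost_cents > self.max_cost_cents
        if over_calls or over_cost:
            self.record("blocked", tool=tool, reason="budget")
            raise BudgetExceeded(tool)
        self.calls_made += 1
        self.cost_cents += est_cost_cents
        self.record("authorized", tool=tool, cost_cents=est_cost_cents)

    def export_audit(self) -> str:
        """One JSON object per line, suitable for shipping to a log store."""
        return "\n".join(json.dumps(entry) for entry in self.audit)
```

Blocked calls are recorded as well as authorized ones, so the trail shows what the agent attempted and not only what it completed.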

Benefits Gained from Tool Surface Discipline and Control Layer

- Bounded blast radius for production agents

- Defensible audit trail for risk and regulator review

- Faster recovery when agents misbehave

How It All Works Together

A goal arrives at the planner, which decomposes it into steps. Each step calls a tool through the validation layer. Memory persists across steps. The control layer enforces budget, blast radius, and kill-switch conditions. The audit layer captures everything. When the agent finishes or escalates, the trail is complete.
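The flow described above can be sketched as a short loop. This is a simplified illustration under stated assumptions: the planner is any function that returns a fixed list of `(tool_name, args)` steps, `validate` is any function that approves or refuses a step, and the only control enforced is a step budget. All names are hypothetical.

```python
from __future__ import annotations

from typing import Any, Callable


def _stop(status: str, reason: str, memory: dict, audit: list) -> dict:
    """Close the trail with a final event and return the run summary."""
    audit.append({"event": status, "reason": reason})
    return {"status": status, "memory": memory, "audit": audit}


def run_agent(
    goal: str,
    plan: Callable[[str], list[tuple[str, dict]]],
    tools: dict[str, Callable[..., Any]],
    validate: Callable[[str, dict], bool],
    max_steps: int,
) -> dict:
    audit: list = []
    memory: dict = {}          # persists across steps within this task
    steps = plan(goal)
    audit.append({"event": "plan", "goal": goal, "steps": [name for name, _ in steps]})
    for index, (tool_name, args) in enumerate(steps):
        # Control layer: stop and escalate once the step budget is spent.
        if index >= max_steps:
            return _stop("escalated", "step budget exhausted", memory, audit)
        # Validation layer: unknown or refused calls never execute.
        if tool_name not in tools or not validate(tool_name, args):
            return _stop("escalated", f"validation failed for {tool_name}", memory, audit)
        result = tools[tool_name](memory, **args)
        memory[tool_name] = result
        audit.append({"event": "tool_call", "tool": tool_name, "args": args, "result": result})
    return _stop("done", "all steps completed", memory, audit)
```

Whether the run finishes or escalates, the returned `audit` list ends with a final event, which is the property the paragraph above describes as a complete trail.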

Common Misconception

Misconception: Agentic AI is just a model that uses tools.

Reality: Agentic AI is a system with planning, memory, validation, and controls. The tool calls are the visible part; the control layer is what makes them safe.

Key Takeaway: Each layer has a specific job. Programs that skip layers ship clever demos and risky systems.

Real-World Agentic AI Systems in Action

Let's look at how an agentic AI system operates in a real-world example.

We worked with a SaaS company shipping an agentic feature for customer support automation, with these constraints:

- Resolve common ticket categories without human escalation

- Audit every action taken on a customer account

- Hard kill switch if quality regresses below a threshold

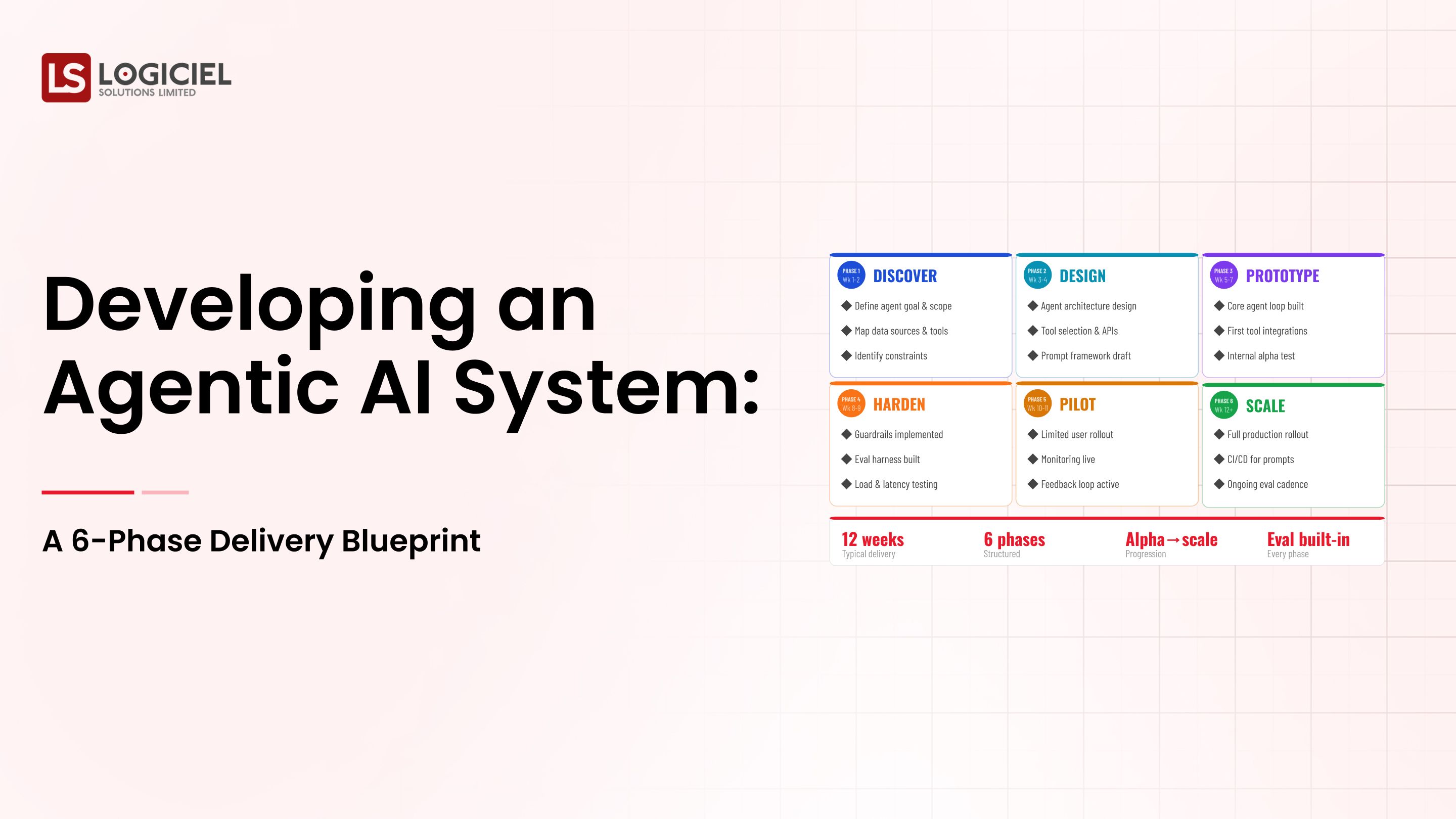

Step 1: Define the Workflow and Blast Radius

Write the workflow as a sequence of steps. For each step, name the system the agent touches and the worst-case impact.

- Step-by-step workflow document

- Blast-radius register

- Autonomy level per step based on blast radius
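A blast-radius register can be as simple as a typed list with a fixed mapping from blast radius to autonomy level. The sketch below is hypothetical: the three steps, the systems they touch, and the three autonomy levels are illustrative examples, not the actual register from the engagement described here.

```python
from dataclasses import dataclass
from enum import Enum, IntEnum


class BlastRadius(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Autonomy(Enum):
    AUTONOMOUS = "act without review"
    ACT_THEN_REVIEW = "act, then sample for human review"
    HUMAN_APPROVAL = "wait for human approval"


@dataclass(frozen=True)
class WorkflowStep:
    name: str
    system: str        # the system the agent touches
    worst_case: str    # worst-case impact if the step goes wrong
    blast_radius: BlastRadius


# Assumed mapping: autonomy shrinks as blast radius grows.
AUTONOMY_BY_RADIUS = {
    BlastRadius.LOW: Autonomy.AUTONOMOUS,
    BlastRadius.MEDIUM: Autonomy.ACT_THEN_REVIEW,
    BlastRadius.HIGH: Autonomy.HUMAN_APPROVAL,
}


def autonomy_for(step: WorkflowStep) -> Autonomy:
    return AUTONOMY_BY_RADIUS[step.blast_radius]


# Illustrative register entries for a support workflow.
REGISTER = [
    WorkflowStep("read ticket", "helpdesk", "none, read only", BlastRadius.LOW),
    WorkflowStep("send reply", "email", "wrong answer reaches a customer", BlastRadius.MEDIUM),
    WorkflowStep("issue refund", "billing", "money leaves the company", BlastRadius.HIGH),
]


def steps_needing_approval(register: list) -> list:
    return [s.name for s in register if autonomy_for(s) is Autonomy.HUMAN_APPROVAL]
```

Keeping the mapping in one table means any exception to it has to be written down as a change to the register, which supports documenting the rationale for each high-autonomy choice.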

Step 2: Pick Single vs. Multi-Agent

Single-agent for workflows that do not decompose cleanly. Multi-agent for workflows that decompose into specialized roles. Most first programs benefit from starting single.

- Default single-agent

- Decompose to multi-agent only when the workflow asks for it

- Document the coordination shape if multi-agent

Step 3: Design the Tool Surface

List the tools the agent can call. Define input contracts, output contracts, failure modes, rate limits, and kill switches.

- Narrow tool surface

- Per-tool input validation

- Per-tool rate limits and budget

Step 4: Build the Eval Harness for Sequences

Eval cases cover happy paths, recoverable failures, unrecoverable failures, and adversarial inputs.

- Sequence eval, not just turn eval

- Daily run with regression blocking

- Failure-mode-specific test cases
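To make the difference between sequence eval and turn eval concrete, the sketch below scores an agent on the full ordered list of tools it called plus its final status, then blocks a release if any category regresses against a stored baseline. The case format, the category names, and the `agent` callable signature are assumptions for this example.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SequenceCase:
    name: str
    category: str                      # e.g. happy, recoverable, unrecoverable, adversarial
    goal: str
    expected_tools: tuple[str, ...]    # the full ordered tool sequence
    expected_status: str               # e.g. "done" or "escalated"


def pass_rates(
    agent: Callable[[str], tuple[list[str], str]],
    cases: list[SequenceCase],
) -> dict[str, float]:
    """Run every case and return the pass rate per category."""
    passed: dict[str, int] = {}
    total: dict[str, int] = {}
    for case in cases:
        tools_called, status = agent(case.goal)
        ok = tuple(tools_called) == case.expected_tools and status == case.expected_status
        total[case.category] = total.get(case.category, 0) + 1
        passed[case.category] = passed.get(case.category, 0) + int(ok)
    return {category: passed[category] / total[category] for category in total}


def blocks_release(current: dict[str, float], baseline: dict[str, float]) -> bool:
    """True if any baseline category is missing or scores below its baseline."""
    return any(current.get(category, 0.0) < rate for category, rate in baseline.items())
```

A daily job could run `pass_rates` over the case suite and fail the pipeline when `blocks_release` returns `True`; exact sequence matching is strict, and teams may prefer a looser comparison for workflows with several valid paths.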

Step 5: Ship to a Controlled Population, Then Scale

First production exposure is a small, supervised population with named feedback channels.

- Internal users first

- Daily review of agent traces in the first month

- Scale only after absorbing first-month learning

Where It Works Well

- Narrow tool surface with documented failure modes per tool

- Sequence eval that runs daily and blocks regressions

- Tested kill switch with documented invocation criteria

Where It Does Not Work Well

- High autonomy on high blast-radius actions

- Wide tool surface that grows opportunistically

- Premature multi-agent design before workflow mapping

Key Takeaway: The agent that works in production is the agent whose controls were designed before the planning prompt was tuned.

Common Pitfalls

i) High autonomy on high blast-radius actions

Letting an agent act without human approval on actions that move money or change records of regulatory significance is the most common high-cost mistake.

- Match autonomy to blast radius

- Default high-blast actions to HITL

- Document the rationale for any high-autonomy choice

ii) Wide tool surface

Each tool is a control point and a risk. Add tools when use cases require, not because the framework supports them.

iii) Premature multi-agent

Designing a multi-agent system before mapping the workflow adds coordination overhead with no benefit. Pay that cost when the workflow needs it; avoid it when it does not.

iv) No tested kill switch

A kill switch you have not tested is a kill switch you do not have. Test quarterly. Document the test.

Takeaway from these lessons: Most agentic failures trace to control gaps, not model failures. Design the controls before the planning prompt.

Agentic AI Systems Best Practices: What High-Performing Teams Do Differently

1. Map the action surface before building

List every action the agent can take, organized by blast radius. The list is the foundation of the control layer.

2. Build sequence eval as a first-class system

Build eval that exercises sequences, not just turns. Cover happy, recoverable, unrecoverable, and adversarial cases.

3. Default narrow on tool surface

Add tools deliberately. Each tool requires its own validation, rate limit, and kill switch. Wide surfaces are debt.

4. Design HITL as a feature, not a fallback

Identify the specific points in the workflow where a human reviews and approves. HITL is a designed feature for high-blast actions.

5. Operate the agent like infrastructure

Set up an on-call rotation, runbooks, kill-switch tests, and a quarterly review of tool surface and autonomy. Treat the agent like a database, not a feature.

Logiciel's value add is helping teams design the workflow, blast-radius register, tool surface, and control layer alongside the agent itself, so the program ships an operable system rather than a clever demo.

Takeaway for High-Performing Teams: Focus on the control layer and the operating model. Capability without control is liability.

Signals You Are Designing Agentic AI Systems Correctly

How do you know the agentic AI program is set up to succeed? Not from a board deck or a celebration, but from the daily evidence the team produces. Below are the signals that distinguish programs that are on the right path from programs that only look like progress.

- The team can describe failure modes without flinching. People who actually run agentic AI systems will tell you the last three things that broke. People who have only read about them will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

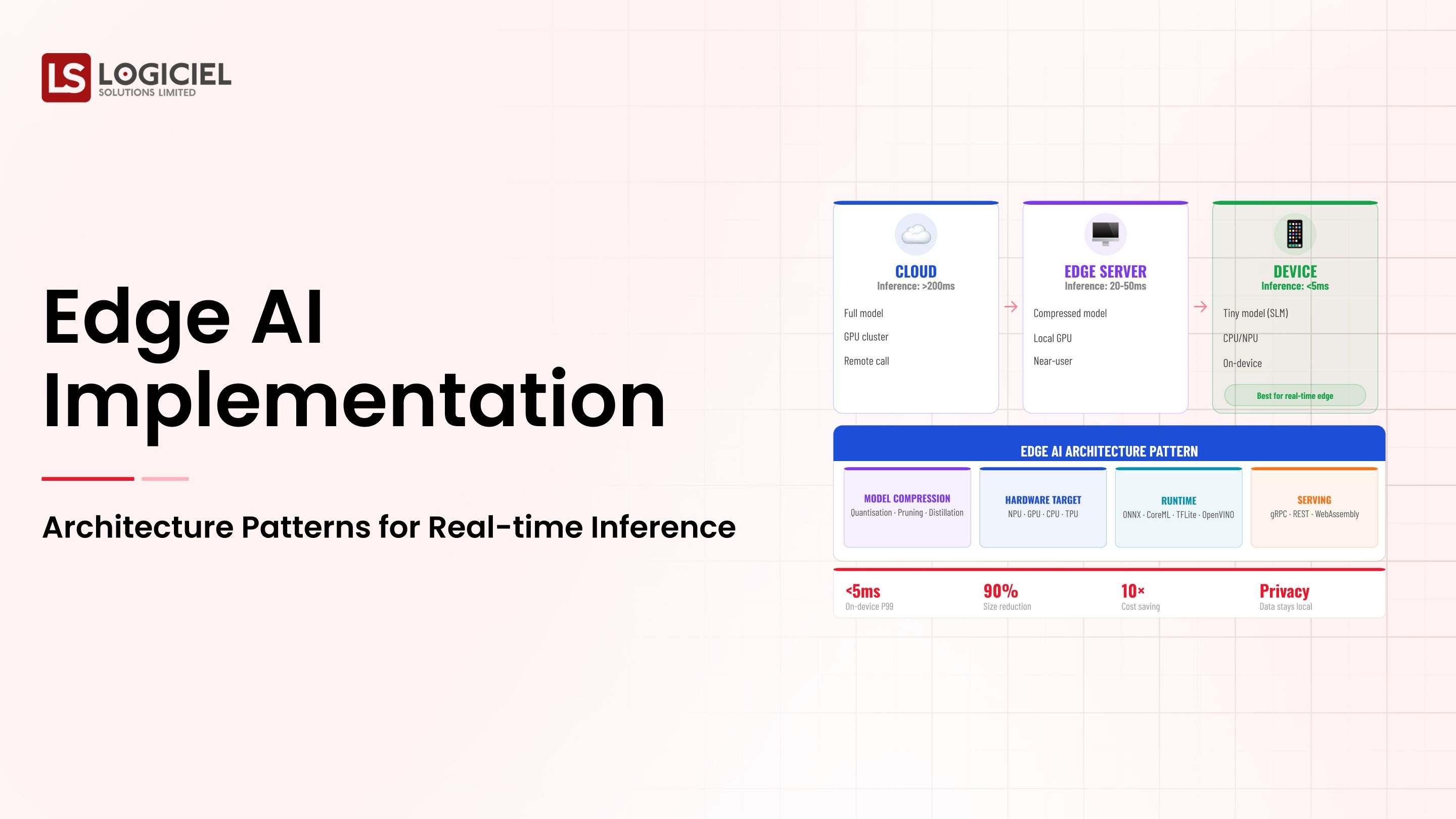

Adjacent Capabilities and Connected Work

This work does not exist in isolation. An agentic AI system depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, the agentic AI system shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever AI initiative is next on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

Agentic AI systems are the next wave of enterprise AI capability. The discipline that turns a clever agent into a safe one is the same discipline that turned models into systems: design, eval, and operate.

Key Takeaways:

- Agentic AI is a system, not a model

- Single-agent is the right default; decompose to multi-agent only when justified

- Match autonomy to blast radius, narrow the tool surface, build sequence eval

Building effective agentic AI requires planning, eval, and control discipline. When done correctly, it produces:

- Workflow automation that survives audit and scale

- Bounded blast radius across production actions

- Reusable control patterns for the next agent

- Defensible posture in board and regulator conversations

Call to Action

If you are scoping an agentic program, map the workflow, define blast radius, narrow the tool surface, and build sequence eval before tuning a single planning prompt.

Learn More Here:

- Agentic Systems Multi Agent Architectures Autonomous AI Engineering Teams

- Multi Agent Systems Orchestration Collaboration

- Agentic AI Product Models Agent As a Service

At Logiciel Solutions, we work with Heads of AI on agentic system design, control layer engineering, and operating model setup. Our reference patterns come from production agent deployments.

Explore how to design your agentic AI system.

Frequently Asked Questions

What are agentic AI systems?

An agentic AI system is one where a model plans actions, calls tools, observes results, and decides what to do next, possibly in a loop, possibly in coordination with other agents. Three properties separate agentic from non-agentic systems: planning, tool use, and loops.

When should we use single agent vs. multi-agent?

Single agent for workflows that do not decompose cleanly. Multi-agent for workflows with specialized roles. Most first programs should start single and decompose only if needed.

What is the difference between an agent and a chatbot?

A chatbot generates text. An agent takes actions through tool calls that affect external state. The threshold is tool use that touches systems of record.

How autonomous should the agent be?

As autonomous as the blast radius allows. Match autonomy to risk action by action. High-blast actions default to HITL.

What is the biggest mistake in agentic AI?

Building the agent before designing the controls. Programs that ship agents and then add controls discover what they needed at the worst possible moment.