There is a fleet of edge devices in the field running inference, and one model update has just bricked twelve percent of them. The lab tests passed. The canary fleet looked fine. Now there is a remediation team on a call, a status page going up, and a customer email being drafted.

This is more than a deploy mistake. It is a failure of the concept of edge AI implementation.

A modern edge AI implementation is more than a model on a device. It is fleet management, observability, security, update orchestration, and a graceful path to handle the moments your network is not cooperating.

ROI of AI-Ready Data Infrastructure

Inside an 8-month rebuild that turned three failed pilots into a 9:1 ROI model.

However, many teams scope edge AI as cloud AI minus the network, and discover the rest of the work the hard way.

If you are a Staff Engineer and are responsible for building or scaling your edge AI program, the intent of this article is:

- Define what edge AI implementation actually means in production

- Walk through the four reference architectures that work

- Lay out the fleet operations work that nobody scopes correctly the first time

To do that, let's start with the basics.

What Is Edge AI Implementation? The Basic Definition

At a high level, edge AI implementation is the practice of running AI inference on hardware physically close to data generation, with the fleet operations, observability, and security work that lets the system stay reliable in environments your team does not control.

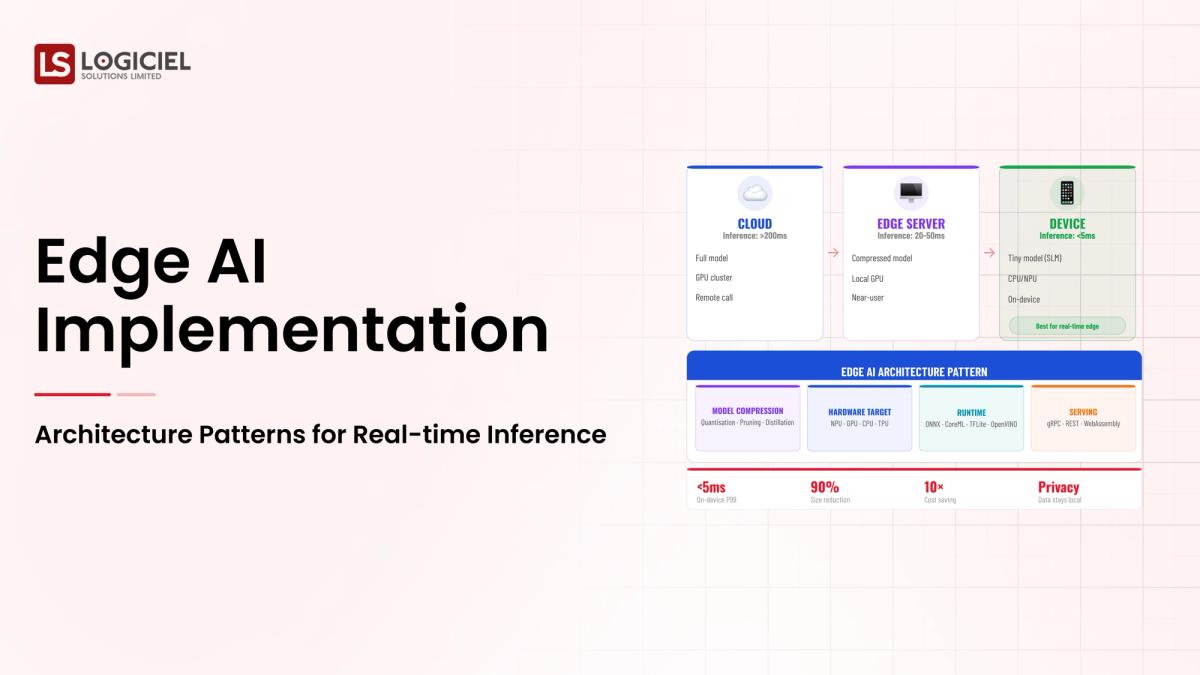

To compare:

If cloud AI is a kitchen in your house, edge AI is a food truck. Both can serve dinner. The food truck has to handle weather, fuel, regulation, and the fact that nobody is going to come fix it for you between services.

Why Is Edge AI Implementation Necessary?

Issues that Edge AI Implementation addresses or resolves:

- Solving the latency problem for use cases the cloud cannot meet

- Addressing privacy and connectivity constraints that rule out centralized inference

- Reducing bandwidth and cost for high-volume sensor data

Resolved Issues by Edge AI Implementation

- Brings inference to where data is generated, reducing round-trip cost

- Enables AI for environments without reliable connectivity

- Keeps sensitive data on-device rather than shipping it to the cloud

Core Components of Edge AI Implementation

- Device-side inference runtime (TFLite, ONNX Runtime, Core ML, TensorRT)

- Fleet management for registration, updates, and monitoring

- Observability and feedback loop streaming back to a central platform

- Security and identity for devices in untrusted environments

- Orchestration layer deciding what runs at edge vs. cloud

Modern Edge AI Implementation Tools

- ONNX Runtime, TFLite, Core ML, TensorRT for device-side inference

- AWS IoT Greengrass, Azure IoT Edge, Google Distributed Cloud for fleet platforms

- Balena, Mender, and Memfault for OTA updates and observability

- Edge-specific MLOps platforms like Edge Impulse

- OpenTelemetry for unified observability across edge and cloud

These platforms reflect the maturation of edge AI from one-off device deployments to managed fleets.

Other Core Issues They Will Solve

- Enables staged rollouts and canary fleets for safer model updates

- Provides device-level audit trails for regulated environments

- Supports federated learning where data cannot leave devices

In Summary: Edge AI implementation concepts turn a device-side model into a managed fleet that stays reliable in the messy real world.

Importance of Edge AI Implementation in 2026

Edge AI has shifted from a niche optimization to a mainstream architectural choice. Four reasons explain why it matters now.

1. Latency budgets keep tightening.

Real-time inference for autonomous systems, AR, and industrial control cannot tolerate cloud round-trip latency. Edge is the only way to meet the budget.

2. Privacy regulation favors on-device inference.

Keeping data on the device rather than shipping it to the cloud simplifies compliance posture for sensitive use cases.

3. Bandwidth and cost penalties scale with sensor volume.

High-frequency sensor streams shipped to the cloud cost more than the inference they enable. Edge prefiltering changes the math.

4. Model efficiency has caught up with edge constraints.

Quantization, distillation, and architecture-aware design make capable models fit edge hardware budgets that did not exist two years ago.

Traditional vs. Modern Edge AI Implementation Concepts

- One-off device deployment vs. managed fleet with OTA updates

- Cloud round-trip inference vs. on-device inference with cloud fallback

- Custom telemetry per project vs. unified edge-cloud observability

- Manual device provisioning vs. zero-touch onboarding with hardware identity

In summary: Edge AI implementation concepts are the foundation of every product that needs inference where the network or the cloud cannot reach.

Details About the Core Components of Edge AI Implementation: What Are You Designing?

Let's go through each layer.

1. Device Layer

What hardware is running inference. Pick the device after the model.

Device decisions:

- CPU, GPU, NPU, or FPGA

- Power and thermal envelope

- Connectivity options and offline operation

2. Runtime Layer

What inference runtime executes the model.

Runtime considerations:

- Model format support

- Performance under your latency budget

- Operational maturity and update path

3. Fleet Management Layer

How devices are registered, updated, monitored, and recovered.

What this layer handles:

- Device identity and authentication

- OTA model and firmware updates with staged rollouts

- Health monitoring and recovery automation

4. Observability and Feedback Layer

How model and device telemetry flow back to a central system.

What flows back:

- Inference outcomes and confidence scores

- Device health and resource utilization

- Anomaly signals for drift and adversarial inputs

5. Orchestration and Partitioning Layer

Decides what runs where, including fallback and offload paths.

Partitioning decisions:

- Full edge for privacy or zero-connectivity environments

- Hybrid with cloud fallback for graceful degradation

- Edge prefilter with cloud-deep for latency-sensitive heavy workloads

Benefits Gained from Fleet Management and Observability

- Safer model updates through staged rollouts and canary fleets

- Faster diagnosis when devices fail in environments you cannot reach

- Continuous improvement loop fed by real-world inference outcomes

How It All Works Together

Devices register with the fleet platform, receive a signed model update, run inference locally, stream telemetry back to observability, and fall back to cloud inference when local confidence is low. The orchestration layer decides what runs where; the fleet layer keeps it deployable; the observability layer keeps it debuggable.

Common Misconception

Edge AI is just running a smaller model on a device.

Edge AI is a fleet operations problem with an inference component. The model is roughly twenty percent of the work; fleet management, observability, security, and orchestration are the other eighty.

Key Takeaway: Each layer has its own engineering investment, and underbuilding any of them is the most common failure mode.

Real-World Edge AI Implementation in Action

Let's take a look at how edge ai implementation operates with a real-world example.

We worked with a manufacturing client deploying computer vision on production lines, with these constraints:

- Sub-100ms inference latency for defect detection

- Zero-connectivity tolerance for the production environment

- Centralized model management across hundreds of plant sites

Step 1: Define the Constraint That Wins

Latency, privacy, bandwidth, connectivity, or cost. Pick one. The winning constraint shapes every downstream decision.

- Document the constraint in writing

- Validate with the line-of-business owner

- Use it to score architecture options

Step 2: Select the Reference Architecture

Full-edge for strict privacy or zero-connectivity. Hybrid with fallback for cost and graceful degradation. Edge prefilter for latency-sensitive heavy compute. Federated learning for privacy-preserving training.

- Match architecture to the winning constraint

- Avoid full-edge by default; justify it deliberately

- Plan for hybrid as the realistic path for most enterprises

Step 3: Design the Fleet Management Layer First

Before model work, before device selection, design how devices are registered, updated, monitored, and recovered.

- Pick the fleet platform that fits your security and scale needs

- Design staged rollout and rollback flows

- Define device health metrics and alerting

Step 4: Pick the Device and Runtime Together

Run benchmarks on candidate device-runtime pairs against your model and your latency budget.

- Real benchmarks, not vendor claims

- Stress test under thermal and power constraints

- Verify the update and recovery path works

Step 5: Pilot, Observe, Iterate

Ship to a small fleet, capture everything, learn from production conditions before scaling.

- Pilot fleet of 10-100 devices in real conditions

- Daily review of inference outcomes and device health

- Update operating model before scaling fleet size

Where It Works Well

- Fleet management designed in phase three, not phase nine

- Observability that streams real telemetry, not just keep-alives

- Staged rollouts with documented rollback automation

Where It Does Not Work Well

- Trusting paper benchmarks instead of running your own

- Treating each device as identical when fleets are heterogeneous

- Picking the device before the model

Key Takeaway: The team that invests in fleet management before models ships in twelve weeks. The team that defers fleet management ships in twelve months.

Common Pitfalls

i) Defaulting to full-edge because it sounds modern

Full-edge is right for strict privacy and zero-connectivity. For everything else, hybrid is usually better.

- Justify full-edge when picked

- Default to hybrid

- Account for graceful degradation

ii) Skipping the fleet management investment

Fleet management is half the work. Teams that skip it ship the first version fast and spend the next year operating it badly.

iii) Picking the device before the model

The model determines the compute requirement. Picking the device first locks you into a model class that may not meet your accuracy bar.

iv) Bolt-on security

Edge devices are physical objects. Plan the security model in phase three, not after the first compromise.

Takeaway from these lessons: Most edge AI failures are operational, not algorithmic. Plan the operating model first.

Edge AI Implementation Best Practices: What High-Performing Teams Do Differently

1. Automate device fleet operations

Zero-touch onboarding, signed updates, staged rollouts, automated rollback. Manual fleet operations do not scale past dozens of devices.

2. Stream telemetry continuously

Inference outcomes, model confidence, device health, network state. The feedback loop is what makes the next model better.

3. Optimize the model for the device

Quantization, pruning, distillation, architecture-aware design. The model that fits the device cleanly is the model that ships.

4. Define service-level agreements per device class

Latency, accuracy, uptime, freshness. Without SLAs, the team negotiates the meaning of working every time something breaks.

5. Implement an AI-first edge operating model

On-call coverage that understands edge failure modes. Runbooks for fleet incidents. Quarterly reviews of fleet health and model performance.

Logiciel's value add is helping teams design the fleet management layer alongside the model layer, so edge AI ships as a managed system rather than a pile of devices.

Takeaway for High-Performing Teams: Focus on fleet operations and observability first. Models change; the operating platform compounds.

Signals You Are Designing Edge AI Implementation Correctly

How do you know the edge ai implementation program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the path from programs that look like progress.

- The team can describe failure modes without flinching. People who actually run edge ai implementation systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. Edge AI Implementation depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, edge ai implementation shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

Edge AI implementation concepts are the foundation of every product that needs inference where the cloud cannot reach. The discipline is what separates fleets that compound from fleets that age.

Key Takeaways:

- Edge AI is a fleet operations problem, not just an inference problem

- Pick the constraint that wins; pick the architecture that fits the constraint

- Design fleet management first; everything else rides on it

Building an effective edge AI implementation requires hardware, software, and operating-model alignment. When done correctly, it produces:

- Real-time inference where the cloud cannot reach

- Reliable model updates across thousands of devices

- Privacy-preserving AI that satisfies regulator and customer expectations

- A platform that compounds across edge AI use cases

Scaling Data Team Without Scaling Headcount

Inside a 12-week overhaul that doubled output and cancelled two senior data engineering hires.

Call to Action

If you are designing edge AI into a product, the move is to define the winning constraint, pick the reference architecture, and design the fleet management layer first.

Learn More Here:

- Data Warehouse Concepts Every Data Engineer Should Know in 2026

- Hybrid Delivery Model Ctos AI First Engineering 2026

- AI Implementation Construction Planning

At Logiciel Solutions, we partner with engineering and architecture teams on edge AI design, fleet operations, and observability. Our reference patterns are built from production deployments at scale.

Explore how to design your edge AI fleet.

Frequently Asked Questions

What are edge AI implementation concepts?

The discipline of running AI inference on hardware close to data generation, with the fleet operations, observability, and security work that lets the system stay reliable in environments your team does not control.

When does edge AI make sense over cloud AI?

When latency, privacy, bandwidth, or connectivity constraints rule out centralized inference. Pick the constraint that wins; the constraint shapes the architecture.

Which reference architecture should we pick?

Full-edge for strict privacy or zero-connectivity. Hybrid with fallback for cost and graceful degradation. Edge prefilter with cloud-deep for latency-sensitive heavy compute. Federated learning for privacy-preserving training.

What is the most common edge AI failure mode?

Underinvestment in fleet management and observability. The architecture diagram looks fine; the operating model is not designed; six months in, the team is operating instead of building.

What is the biggest mistake in edge AI implementation?

Picking the device before the model and trusting paper benchmarks. Real benchmarks on your model and your latency budget save the device-swap project later.