There is a corporate AI program in month nine that is supposed to be in production. The kickoff was confident, the proof of concept worked, the leadership readout went well. The dashboard says progress is on track. The team says progress is fine. And the program has not shipped a single customer-facing AI feature.

This is more than a delivery delay. It is a failure of corporate AI implementation discipline.

A successful corporate AI implementation reaches a state where it produces measurable business value at acceptable cost and with an acceptable operating burden. Anything short of that is a failure, regardless of whether the model technically works.

However, most companies still measure technical milestones and assume business success will follow. It does not follow automatically.

If you are a VP of Engineering responsible for building or scaling an AI program portfolio, this article aims to:

- Define what corporate AI implementation failure actually means

- Walk through the seven failure modes that show up most often

- Describe the remediation playbook for each failure mode

To do that, let's start with the basics.

What Is Corporate AI Implementation Failure? The Basic Definition

At a high level, corporate AI implementation failure is a program that fails to reach a production state where it produces business value at sustainable cost. The model may work. The business outcome may not.

Where a startup AI failure is usually a single dramatic event, corporate AI failure is usually a slow fade. The shape is different. The diagnostics are different. The remediation playbook is different.

Why Does Understanding Corporate AI Implementation Failure Matter?

Issues that a failure-mode framework addresses:

- Surfacing failure patterns that look like progress

- Diagnosing programs in trouble before sponsors lose confidence

- Recovering programs that have stalled rather than starting over

What the Failure-Mode Framework Provides

- Names the seven recurring failure modes so they can be diagnosed

- Provides early signals that distinguish trouble from normal turbulence

- Lays out the remediation order so upstream issues get fixed first

Core Components of Corporate AI Implementation Failure

- Outcome ambiguity (the most common single failure mode)

- Single-owner overload across outcome and system

- Vendor-led scope and procurement-first decisions

- Eval blindness and quality reported in vibes

- Cost drift without a named owner

- Operating debt that consumes the team that built the system

- Compliance surprise during the first audit

Modern Tools for Diagnosing Corporate AI Implementation Failure

- Diagnostic frameworks like the seven-mode review

- Eval harnesses such as LangSmith and Arize for catching quality regressions

- FinOps tooling for AI cost visibility

- Operating-model templates and runbooks adapted for AI workloads

- Audit logging and decision-trail capture for compliance evidence

These are the tools that mature programs use to detect, diagnose, and recover from each failure mode.

Other Issues Failure-Mode Diagnosis Solves

- Surfaces structural issues hidden under reasonable-sounding symptoms

- Makes recovery cheaper than restart in most cases

- Builds organizational pattern-matching for the next AI program

In Summary: Corporate AI implementation failure is a diagnosable, preventable, and recoverable condition.

Why Failure-Mode Diagnosis Matters in 2026

Diagnosing failure modes early is the highest-leverage skill a VP of Engineering can build for corporate AI work. Four reasons make 2026 the year to invest in this muscle.

1. Programs in trouble look just like programs making progress.

Status reports look fine until the day they don't. The fix is structural diagnostics, not better dashboards.

2. Failure modes compound silently.

Outcome ambiguity feeds eval blindness, which feeds cost drift, which feeds operating debt. Catch the upstream cause and several downstream symptoms resolve.

3. Remediation is cheaper than restart.

Programs that score on two or three modes can usually be recovered in a quarter. Restarting costs a year.

4. Pattern-matching is hard to do internally.

Every program looks unique from inside. An external reviewer who has seen many programs is the cheapest way to surface what your team cannot see.

Traditional vs. Modern Approaches to Corporate AI Implementation Failure

- Annual program review vs. quarterly seven-mode diagnostic

- Hoping issues self-correct vs. naming the failure mode and assigning the fix

- Replacing the team vs. remediating the structure

- Killing struggling programs vs. recovering them when the underlying outcome is still relevant

In summary: Failure-mode literacy is the operating-leadership equivalent of unit testing.

Details About the Core Components of Corporate AI Implementation Failure: What Are You Diagnosing?

Let's go through each mode.

Mode 1. Outcome Ambiguity

The most common single failure mode. Nobody can write the success metric in one sentence.

Early signals:

- Different team members give different answers to why the program exists

- Roadmap items keep getting added without success criteria

- The line-of-business sponsor is vague when asked what they want

Mode 2. Single-Owner Overload

One person is accountable for both the outcome and the system. They cannot make tradeoffs because every tradeoff lands on them.

Early signals:

- Decisions stall whenever the single owner is unavailable

- The owner cannot delegate without re-explaining context every time

- Burnout indicators in the lead role

Mode 3. Vendor-Led Scope

Scope is shaped by what a vendor can sell rather than what the business needs.

Early signals:

- The system roadmap mirrors the vendor product roadmap

- Scope expansion requests come from the vendor sales team

- The team cannot explain why specific features were prioritized

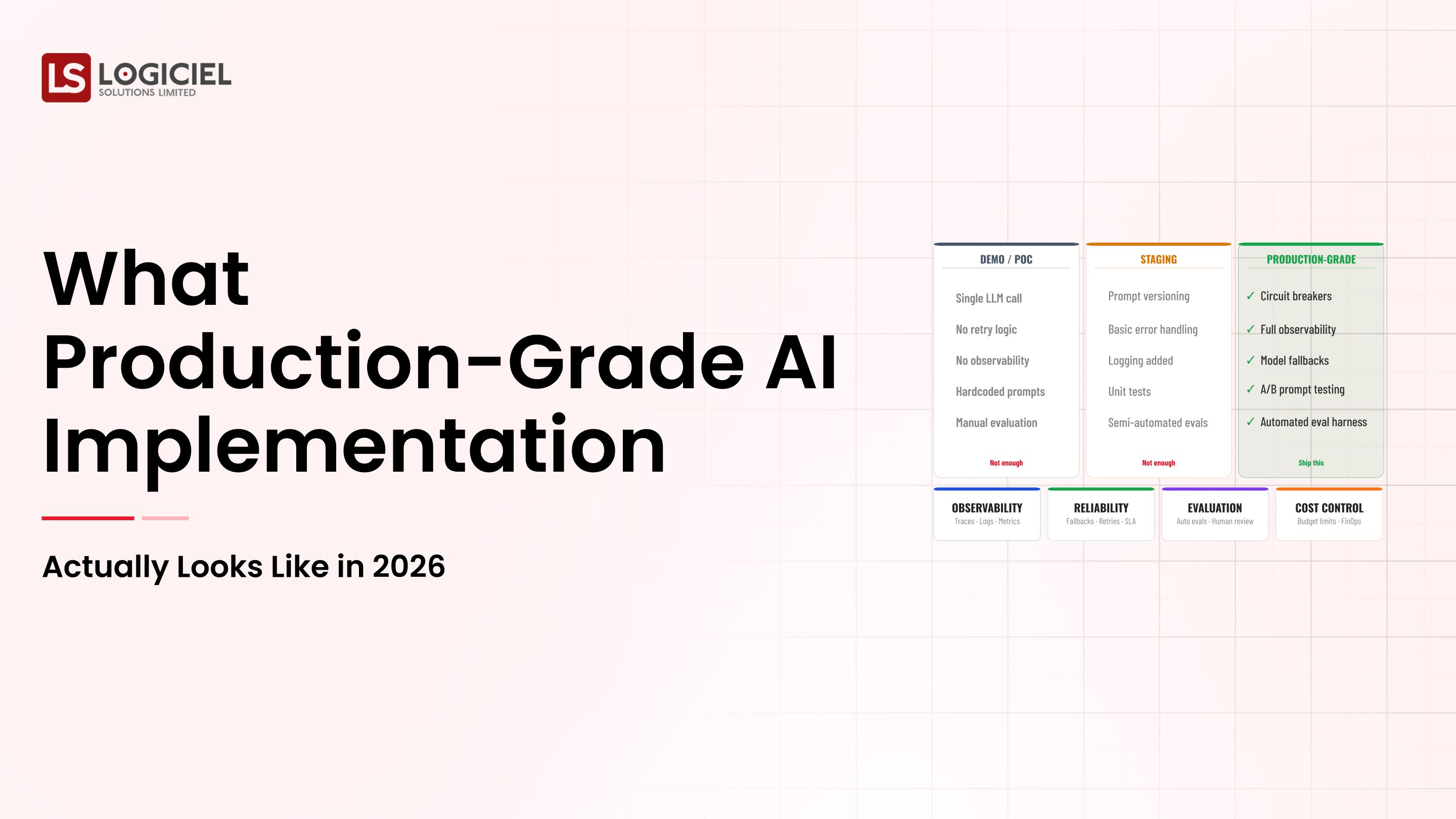

Mode 4. Eval Blindness

Quality is reported in vibes rather than numbers.

Early signals:

- Nobody can tell you the regression rate from last week

- Eval runs occasionally rather than continuously

- Quality discussions become opinion-based rather than data-based

Mode 5. Cost Drift

Inference cost grows faster than usage, and nobody is named as its owner.

Early signals:

- The CFO asks about AI spend and gets a hedged answer

- Per-request cost is not instrumented

- Cost-by-feature attribution is impossible
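Instrumenting per-request cost is what makes cost-by-feature attribution possible. The sketch below is a minimal illustration, assuming each request is logged with a feature tag plus its input and output token counts. The per-token prices, the `RequestRecord` type, and the function names are hypothetical placeholders, not any provider's actual rates or API.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical per-token prices in USD; replace with your provider's real rates.
PRICE_PER_INPUT_TOKEN = 0.000003
PRICE_PER_OUTPUT_TOKEN = 0.000015


@dataclass
class RequestRecord:
    feature: str        # product feature that issued the request
    input_tokens: int   # prompt tokens sent to the model
    output_tokens: int  # completion tokens returned


def request_cost(record: RequestRecord) -> float:
    """Cost of one inference request under the assumed prices."""
    return (record.input_tokens * PRICE_PER_INPUT_TOKEN
            + record.output_tokens * PRICE_PER_OUTPUT_TOKEN)


def cost_by_feature(records: list[RequestRecord]) -> dict[str, float]:
    """Total cost attributed to each feature tag."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[record.feature] += request_cost(record)
    return dict(totals)


if __name__ == "__main__":
    log = [
        RequestRecord("search", 1000, 200),
        RequestRecord("summarize", 4000, 800),
        RequestRecord("search", 500, 100),
    ]
    for feature, cost in sorted(cost_by_feature(log).items()):
        print(f"{feature}: ${cost:.4f}")
```

Once every request carries a feature tag, "what drove the change" becomes a query over the log rather than a guess.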

Benefits Gained from Diagnostic Discipline and Remediation Sequencing

- Faster recovery for programs already in trouble

- Cheaper kickoff for the next program because the lessons transfer

- Stronger sponsor relationships because issues surface earlier

How It All Works Together

Run the seven-mode diagnostic. Identify the modes that are present. Sequence the remediation: framing first, then ownership, then eval, then cost, then operating model, then audit. Each fix unlocks the next one. Skipping the sequence makes the work slower, not faster.
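The sequencing rule can be expressed as a fixed upstream-first ordering. This is a minimal sketch, assuming hypothetical snake_case labels for the modes. Because the sequence above does not place vendor-led scope, the sketch returns any such mode separately rather than guessing where it belongs.

```python
# Upstream-first order from the text: framing, ownership, eval, cost,
# operating model, audit. Each fix is paired with the mode it addresses.
REMEDIATION_ORDER = [
    ("framing", "outcome_ambiguity"),
    ("ownership", "single_owner_overload"),
    ("eval", "eval_blindness"),
    ("cost", "cost_drift"),
    ("operating_model", "operating_debt"),
    ("audit", "compliance_surprise"),
]


def remediation_plan(present_modes: set[str]) -> tuple[list[str], list[str]]:
    """Return (fixes in upstream-first order, present modes the order does not cover)."""
    sequenced = {mode for _, mode in REMEDIATION_ORDER}
    ordered = [fix for fix, mode in REMEDIATION_ORDER if mode in present_modes]
    uncovered = sorted(present_modes - sequenced)
    return ordered, uncovered


if __name__ == "__main__":
    plan, extra = remediation_plan({"cost_drift", "outcome_ambiguity", "vendor_led_scope"})
    print("fix in order:", plan)
    print("not in the sequence:", extra)
```

Given cost drift, outcome ambiguity, and vendor-led scope, the plan comes back as framing then cost, with vendor-led scope flagged for a separate decision.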

Common Misconception

AI programs fail because of model limitations or talent gaps.

In reality, programs fail because of structural issues that have nothing to do with the model. Talent and tools are usually fine. Framing, ownership, and operating model are usually broken.

Key Takeaway: Each failure mode has its own remediation, and the modes are diagnosable in a single working session.

Real-World Corporate AI Implementation Failure in Action

Let's look at how the seven-mode diagnostic works on a real-world program.

We worked with a Fortune 500 company whose AI program was nine months in and still not in production. The team was strong; the model was performing; the program was stalled. The seven-mode diagnostic surfaced:

- Outcome ambiguity, with two different definitions in active use

- Single-owner overload on the engineering lead

- Eval blindness because quality was reviewed monthly, not daily

Step 1: Run the Seven-Mode Diagnostic

Score the program against each mode. Be honest. Programs scoring above zero on three or more modes are in the danger zone.

- Use a structured one-hour session

- Include the engineering lead, the product owner, and the sponsor

- Document scores and qualitative evidence
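Once scores are documented, the threshold check is mechanical. A minimal sketch, assuming each mode gets a nonnegative integer score where 0 means absent (the scale itself is hypothetical; only "above zero" matters here), and reading the FAQ's "above five" rule as more than five active modes. `score_program` and the mode labels are illustrative names.

```python
from dataclasses import dataclass

# The seven failure modes, in the order the article lists them.
MODES = (
    "outcome_ambiguity",
    "single_owner_overload",
    "vendor_led_scope",
    "eval_blindness",
    "cost_drift",
    "operating_debt",
    "compliance_surprise",
)
DANGER_ZONE_MIN = 3  # three or more active modes: danger zone
PAUSE_ABOVE = 5      # more than five active modes: pause new work


@dataclass
class DiagnosticResult:
    active_modes: list[str]
    verdict: str


def score_program(scores: dict[str, int]) -> DiagnosticResult:
    """Classify a program from per-mode scores, where 0 means the mode is absent."""
    missing = set(MODES) - set(scores)
    if missing:
        # Require an explicit score for every mode so none is quietly skipped.
        raise ValueError(f"unscored modes: {sorted(missing)}")
    active = [mode for mode in MODES if scores[mode] > 0]
    if len(active) > PAUSE_ABOVE:
        verdict = "pause new work and remediate"
    elif len(active) >= DANGER_ZONE_MIN:
        verdict = "danger zone: write a formal remediation plan"
    else:
        verdict = "monitor"
    return DiagnosticResult(active, verdict)


if __name__ == "__main__":
    scores = {mode: 0 for mode in MODES}
    scores.update(outcome_ambiguity=2, single_owner_overload=1, eval_blindness=1)
    result = score_program(scores)
    print(result.verdict, result.active_modes)
```

Scoring the three modes surfaced in the Fortune 500 example above zero, with the rest at zero, lands that program in the danger zone. Rejecting unscored modes is a design choice that keeps the session from skipping uncomfortable ones.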

Step 2: Fix the Framing First

If outcome ambiguity is present, fix it before anything else. Every other fix depends on a clear outcome.

- Working session with outcome owner and system owner

- One-sentence success metric with a number

- Documented failure threshold and redress path

Step 3: Split Ownership

If single-owner overload is present, split the role into outcome owner and system owner.

- Two named roles with clear decision rights

- Sponsor with authority to settle disputes

- Documented escalation path

Step 4: Make Eval Public

If eval blindness is present, build the harness, publish the dashboard, treat regressions as release blockers.

- Continuous eval running daily

- Public regression dashboard

- Named owner for eval cases
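Treating regressions as release blockers needs a precise definition of a regression. A minimal sketch, assuming each eval run produces a pass/fail result per case ID: a regression is a case that passed in the baseline run and fails, or is missing, in the current run. The function names are hypothetical; eval harnesses such as LangSmith or Arize have their own APIs, which this does not model.

```python
def regressions(baseline: dict[str, bool], current: dict[str, bool]) -> list[str]:
    """Case IDs that passed in the baseline run but fail in the current run.

    A case missing from the current run counts as a failure, so dropping
    a test cannot hide a regression.
    """
    return sorted(
        case for case, passed in baseline.items()
        if passed and not current.get(case, False)
    )


def regression_rate(baseline: dict[str, bool], current: dict[str, bool]) -> float:
    """Share of previously passing cases that now fail."""
    previously_passing = sum(1 for passed in baseline.values() if passed)
    if previously_passing == 0:
        return 0.0
    return len(regressions(baseline, current)) / previously_passing


def release_allowed(baseline: dict[str, bool], current: dict[str, bool]) -> bool:
    """Any regression blocks the release."""
    return not regressions(baseline, current)


if __name__ == "__main__":
    last_week = {"refund_policy": True, "order_status": True, "tone_check": False}
    this_week = {"refund_policy": True, "order_status": False, "tone_check": True}
    print("regressions:", regressions(last_week, this_week))
    print(f"regression rate: {regression_rate(last_week, this_week):.0%}")
    print("release allowed:", release_allowed(last_week, this_week))
```

Publishing `regression_rate` daily is one way to make "the regression rate from last week" a number anyone can quote.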

Step 5: Sequence the Remaining Modes

Address cost drift, operating debt, and compliance surprise in that order. Each fix unlocks the next.

- Named cost owner with weekly visibility

- Documented operating model with on-call rotation

- Audit-trail design and tabletop exercise

Where It Works Well

- Honest scoring during the diagnostic

- Sequenced remediation that fixes upstream causes first

- Sponsor support for a one-quarter pause to remediate before resuming feature work

Where It Does Not Work Well

- Hiding the diagnosis from the sponsor

- Trying to fix all seven modes in parallel

- Bringing in a new vendor to fix structural issues

Key Takeaway: Most stalled corporate AI programs can be recovered in a quarter if the diagnosis is honest and the remediation is sequenced.

Common Pitfalls

i) Reluctance to admit the program needs fixing

Programs in trouble look like programs making progress. Admitting otherwise is a leadership move that takes courage.

- The longer you wait, the harder the fix

- Hiding the diagnosis from the sponsor turns a recoverable program into an unrecoverable one

- Sponsor reaction is shaped by how the news is delivered, not the news itself

ii) Trying to fix all seven modes in parallel

Sequence the work. Outcome before eval. Eval before cost. Operating model before audit. Skipping the sequence makes remediation slower, not faster.

iii) Hiring through the problem

Adding headcount to a program with structural issues makes the problems bigger, not smaller. Diagnose first. Hire second.

iv) Killing programs that needed remediation

Sometimes the right call is to kill. Often it is not. Distinguish between an obsolete outcome and a broken structure. The right move for the second is remediation.

Takeaway from these lessons: Most corporate AI failures are recoverable. The remediation requires honesty, sequencing, and sponsor support. None of the three is automatic.

Corporate AI Implementation Failure Best Practices: What High-Performing Teams Do Differently

1. Run the diagnostic on every active program quarterly

Schedule the seven-mode review as part of the quarterly business review. Programs scoring on three or more modes get formal remediation plans.

2. Start every program with the framing document

One-page outcome, success metric, owner, redress path, and operating-model commitment. Sign off before kickoff.
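The sign-off gate can be checked mechanically before kickoff. A minimal sketch with a hypothetical `FramingDocument` type mirroring the fields listed above. The "contains a number" test is a crude heuristic (it only looks for a digit), not a real judgment of metric quality.

```python
from dataclasses import dataclass, fields


@dataclass
class FramingDocument:
    outcome: str
    success_metric: str              # one sentence that includes a number
    owner: str
    redress_path: str
    operating_model_commitment: str
    signed_off: bool = False


def kickoff_blockers(doc: FramingDocument) -> list[str]:
    """List reasons the program is not ready to kick off (empty list means ready)."""
    blockers = []
    for item in fields(doc):
        value = getattr(doc, item.name)
        if isinstance(value, str) and not value.strip():
            blockers.append(f"missing {item.name}")
    # Crude heuristic: a metric "with a number" must contain at least one digit.
    if not any(ch.isdigit() for ch in doc.success_metric):
        blockers.append("success metric has no number")
    if not doc.signed_off:
        blockers.append("not signed off")
    return blockers


if __name__ == "__main__":
    draft = FramingDocument(
        outcome="Reduce support handle time",
        success_metric="Improve support quality",
        owner="",
        redress_path="Escalate to the support lead",
        operating_model_commitment="Named on-call rotation before launch",
    )
    print(kickoff_blockers(draft))
```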

3. Build the eval harness before the system

Phase three of every AI program is the eval harness. Regressions block deploys. Quality is a number, not a vibe.

4. Name the cost owner from day one

Unit economics from the start. Weekly visibility. Owner whose job is to argue against drift.
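Arguing against drift is easier with a weekly check that compares cost growth with usage growth. A minimal sketch, assuming weekly totals of request count and spend; the 5% tolerance and the `WeeklyUsage` type are hypothetical. A week is flagged when cost per request rises more than the tolerance over the prior week, which is the same as cost growing faster than usage.

```python
from dataclasses import dataclass


@dataclass
class WeeklyUsage:
    week: str
    requests: int
    cost_usd: float


def drift_weeks(history: list[WeeklyUsage], tolerance: float = 0.05) -> list[str]:
    """Weeks where cost per request rose more than `tolerance` over the prior week.

    A rising cost per request means cost is growing faster than usage.
    """
    flagged = []
    for prev, cur in zip(history, history[1:]):
        if prev.requests == 0 or cur.requests == 0 or prev.cost_usd == 0:
            continue  # no meaningful per-request comparison for this pair
        prev_unit = prev.cost_usd / prev.requests
        cur_unit = cur.cost_usd / cur.requests
        if cur_unit / prev_unit - 1 > tolerance:
            flagged.append(cur.week)
    return flagged


if __name__ == "__main__":
    history = [
        WeeklyUsage("2026-W01", 10_000, 1_000.0),
        WeeklyUsage("2026-W02", 11_000, 1_100.0),
        WeeklyUsage("2026-W03", 12_000, 1_500.0),
    ]
    print(drift_weeks(history))
```

In the sample history, usage and cost both grow 10% in week two, so nothing is flagged; in week three usage grows about 9% while cost grows about 36%, so that week is flagged.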

5. Treat the operating model as a deliverable, not an afterthought

On-call, runbooks, postmortems, sunset criteria. Designed in phase five, not invented during the first incident.

Logiciel's value add is running the diagnostic with engineering and risk leaders, then leading the remediation across whichever modes are active. Pattern-matching from many programs surfaces what teams cannot see from inside.

Takeaway for High-Performing Teams: High-performing organizations build diagnostic discipline as a quarterly practice, not a crisis response.

Signals Your Corporate AI Program Is Set Up Correctly

How do you know a corporate AI program is set up to succeed? Not from a board deck or a celebration, but from the daily evidence the team produces. Below are the signals that distinguish programs genuinely on the path to production from programs that only look like progress.

- The team can describe failure modes without flinching. People who actually run production AI systems will tell you the last three things that broke. People who have only read about them will not.

- Cost is observable in real time. The team can tell you, today, how much the system cost to run yesterday and what drove any change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. A corporate AI program depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprises, the AI program shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

Corporate AI implementation failure is preventable and, when it happens, recoverable. The discipline to do either is the same: name the failure modes, diagnose honestly, sequence the fix.

Key Takeaways:

- Failure is diagnosable in seven recurring modes

- Remediation is cheaper than restart in most cases

- Sequence the fix; do not parallelize

When the diagnostic discipline is built into how programs run, the benefits compound:

- Faster recovery for programs in trouble

- Stronger kickoff discipline for the next program

- Higher sponsor confidence over time

- A reusable diagnostic vocabulary across the engineering organization

Call to Action

If you suspect your AI program is stalling, the seven-mode diagnostic is the cheapest hour of work you can do this month.

At Logiciel Solutions, we work with engineering and AI leaders on program diagnostics, remediation, and operating model design. Pattern-matching across many programs is our differentiator.

Explore how to diagnose and recover your AI program.

Frequently Asked Questions

What is corporate AI implementation failure?

A program that fails to reach a state where it produces measurable business value at sustainable cost, regardless of whether the model technically works.

What is the most common failure mode?

Outcome ambiguity. Programs without a clear, agreed, documented success metric drift, and drift compounds. It is also the cheapest mode to fix when caught early.

How do we diagnose failure?

Run the seven-mode review. Programs scoring above zero on three or more modes are in the danger zone. Programs scoring on more than five modes should pause new work and remediate.

Can a stalled program be recovered?

Most can. Programs scoring on two or three modes typically recover in a quarter. The keys are honesty, sequencing, and sponsor support.

When should we kill a program rather than remediate?

When the underlying business outcome is no longer relevant. If the outcome still matters, remediate. The instinct to start over is usually more expensive than the work to fix.