There is a critical AI feature on a customer-facing surface that is returning a wrong answer at scale. The model passed eval. The deploy was clean. But the answer is wrong, and customers have already seen it. Your team scrambles to look at retrieval, then prompts, then the upstream data, and then begins the slow process of rebuilding faith with the line of business they support.

This is more than a model failure. It is a failure of the concept of AI implementation.

100 CTOs. Real Expectations

This report shows what actually predicts delivery success and what CTOs discover too late.

A modern AI implementation is more than a deployed model. It is the runtime, the eval harness, the observability, the governance, and the operating model that turn a clever capability into a system the business can rely on.

However, many teams are still using outdated concepts of how AI implementation actually works in production.

If you are a CTO/VP Engineering and are responsible for building or scaling your AI program, the intent of this article is:

- Define what we mean when we reference AI implementation

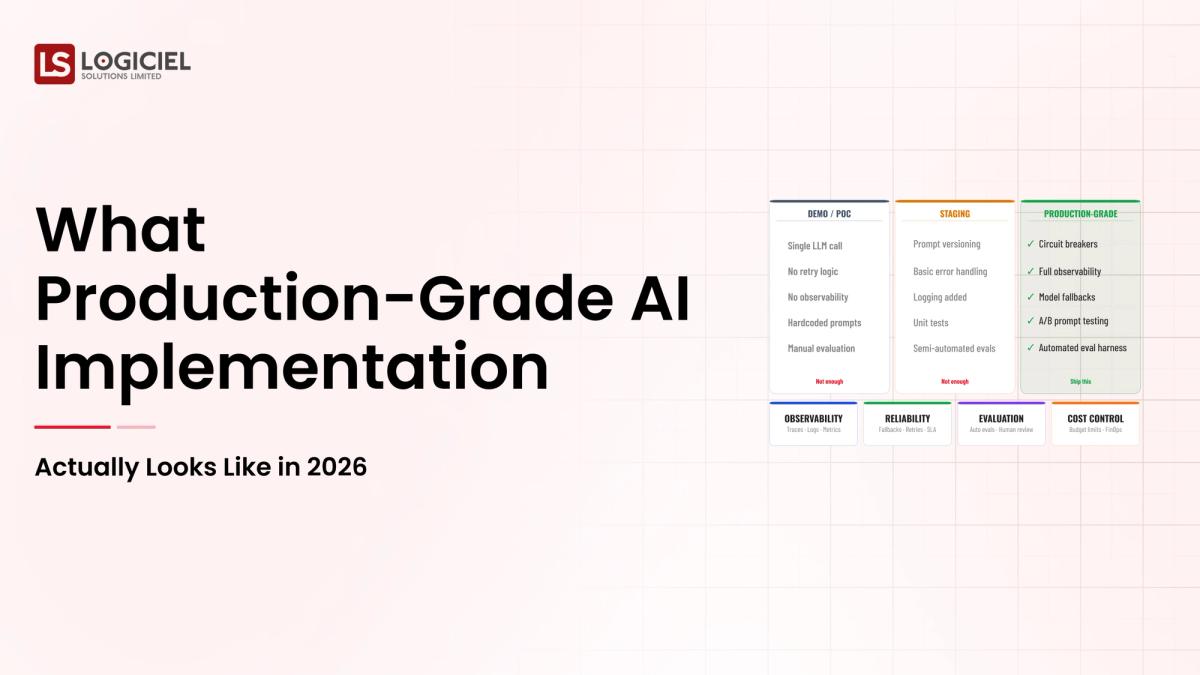

- Understand the differences between a lab-grade and a production-grade AI implementation

- Design systems that can scale with your company without burning out the team

To do that, let's start with the basics.

What Is AI Implementation? The Basic Definition

At a very high level, AI implementation is the discipline of turning a model into a system that produces correct, useful, observable, and governed outputs in production at acceptable cost.

To compare:

If a model is the engine, AI implementation is the car. The car is the part the customer experiences, the part that has to pass safety review, and the part that has to keep working when the road conditions change.

Why Is AI Implementation Necessary?

Issues that AI Implementation addresses or resolves:

- Solving the gap between lab eval and production behavior

- Providing audit trail and governance for regulated environments

- Making model swaps safe and rollbackable

Resolved Issues by AI Implementation

- Wraps unstructured model outputs into reliable system contracts

- Separates AI workloads from operational systems through clean integration layers

- Optimizes for cost, latency, and quality across the full request lifecycle

Core Components of AI Implementation

- Request gateway that handles auth, routing, and rate limits

- Context-assembly layer that grounds the model with retrieval and policy

- Model-serving layer that abstracts which model is in use

- Output validation layer that catches policy or schema violations

- Audit pipeline that records every request, response, and decision

Modern AI Implementation Tools

- OpenAI, Anthropic, and Bedrock for managed model APIs

- vLLM, TGI, and Ollama for self-hosted inference

- LangChain, LlamaIndex, and DSPy for orchestration patterns

- Weights & Biases, Arize, and LangSmith for AI observability

- Pinecone, Weaviate, and pgvector for retrieval

These platforms reflect the maturation of AI implementation from notebook to production system.

Other Core Issues They Will Solve

- Enable safe model upgrades through canary deploys and rollback

- Provide unit-economics visibility so cost grows with value, not noise

- Enable change-management discipline that keeps audit trails defensible

In Summary: AI implementation concepts convert a clever model into a reliable system the business can run.

Importance of AI Implementation in 2026

AI implementation has shifted from a research exercise to a board-level program. Four reasons explain why the discipline matters now.

1. Boards expect AI to ship, not just to be discussed.

If there is no production-grade implementation discipline, programs stall, sponsors lose confidence, and the next AI initiative does not get funded. The cost of weak implementation is rarely a single incident. It is a slow loss of organizational appetite.

2. Cost shape changes the unit economics of every AI feature.

Inference is cheap until it isn't. Teams that don't instrument unit economics on day one pay for the lesson later. Implementation discipline is what keeps cost grounded to value.

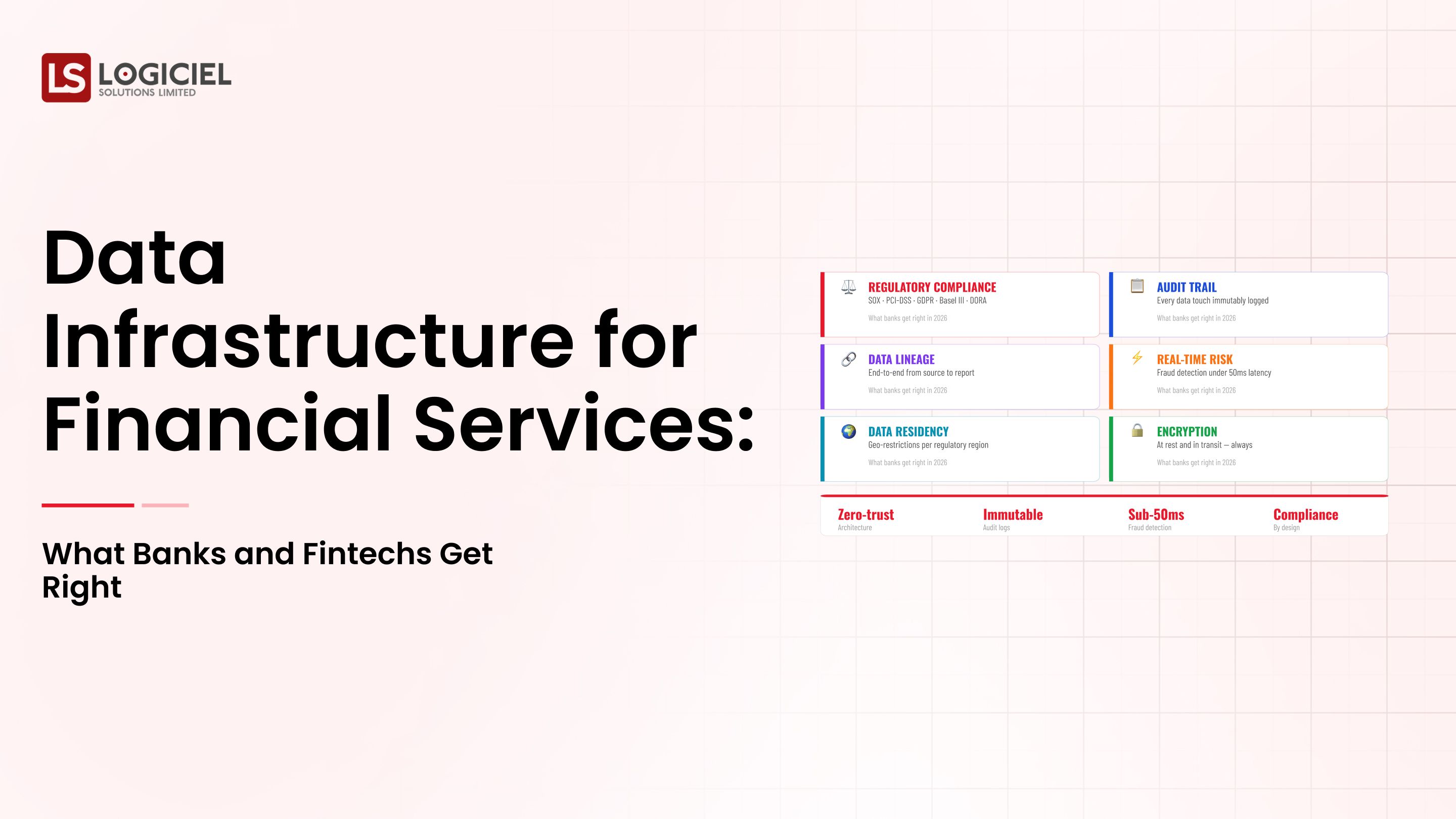

3. Regulation has caught up with the practice.

The EU AI Act, NIST AI RMF, and a wave of sector-specific rules now require evidence that controls work, not just policy claims that they exist. Implementation produces the evidence.

4. Talent is scarce; reuse is the multiplier.

The best teams design implementation as a platform, not a project. Each new use case rides on the same eval harness, governance layer, and observability stack. Reuse is the prize.

Traditional vs. Modern AI Implementation Concepts

- Single-model deployment vs. abstracted, swappable model layer

- Notebook eval vs. continuous eval harness running in production

- Manual incident triage vs. observability-first operating model

- Static governance documents vs. enforced runtime guardrails

In summary: AI implementation concepts are the foundation of every modern AI program that survives its first year.

Details About the Core Components of AI Implementation: What Are You Designing?

Let's go through each layer.

1. Request and Routing Layer

This is where every customer-facing request lands first.

Responsibilities of the Request Layer:

- Authentication and tenant isolation

- Rate limits and quota enforcement

- Routing to the right model and the right prompt template

2. Context-Assembly Layer

Most AI quality work happens here.

What this layer does:

- Pulls retrieval candidates from vector and SQL stores

- Applies redaction and policy rules to inputs

- Assembles the final prompt within a defended context budget

3. Model-Serving Layer

The substitutable part of the system.

What this layer abstracts:

- Vendor and model identity

- Failure handling and retry policy

- Cost-aware routing across model tiers

4. Output Validation Layer

The second line of defense after tool-level controls.

What gets validated here:

- Schema and structural correctness

- Policy compliance and safety filters

- Citation and grounding for retrieval-grounded answers

5. Audit and Feedback Layer

The boring part that survives audit.

What this layer captures:

- Plan, tool calls, intermediate state, final outcome

- Quality flags from users and from sampling-based human review

- Drift signals on input and output distributions

Benefits Gained from the Validation and Audit Layers

- Defensible evidence for regulators, auditors, and the board

- Faster debugging when production failures occur

- Continuous improvement loop that turns flagged outputs into eval cases

How It All Works Together

Requests land at the routing layer, context is assembled with retrieval and policy, the model layer produces an output, validation runs before the user sees it, and the audit layer captures every step. When something fails, the audit layer is the source of truth. When something improves, the feedback loop is the mechanism.

Common Misconception

AI implementation is just about picking the right model.

AI implementation is the system around the model. The model is roughly twenty percent of the work. The other eighty percent is the system, the eval, the observability, the governance, and the operating model.

Key Takeaway: Each layer contributes to the overall reliability and cost shape of your AI system.

Real-World AI Implementation in Action

Let's take a look at how ai implementation operates with a real-world example.

We have a client running a SaaS product with AI features that need to track:

- How users interact with AI-powered surfaces

- What outputs the model produced and what changed

- Where cost is being spent and what is driving the change

Step 1: Frame the Outcome

Write the success metric in one sentence with a number attached. Sign off with the line-of-business leader.

- Business outcome, not model accuracy

- Failure threshold and redress path

- Two named owners: outcome and system

Step 2: Map the Data and Access Paths

Document where data lives, who owns it, what the latency budget is, and how it is accessed.

- Source systems and update cadence

- Access controls and redaction rules

- Latency and freshness budget per request type

Step 3: Build the Eval Harness

Write the tests that would let you fire a vendor before you have a vendor.

- Happy paths, recoverable and unrecoverable failures

- Adversarial inputs

- Schedule and regression-blocking automation

Step 4: Ship the Smallest Useful Version

Deploy to a small, real audience and watch what happens. The metric you forgot to instrument is the one you will need.

- Internal users with named feedback channels

- Daily eval review for the first month

- Documented decision rules for promoting or rolling back

Step 5: Operate It Like Infrastructure

Treat the system like a database, not a feature shipment.

- On-call rotation and runbooks

- Quarterly cost and quality reviews

- Sunset criteria for capabilities that do not earn their keep

Where It Works Well

- Tight scope with one named owner per layer

- Eval harness that runs every day, not every quarter

- Cost dashboard that the team checks before the CFO does

Where It Does Not Work Well

- Vendor-led scope where the model dictates the outcome

- Wide tool surface without matching controls

- Operating model invented during the first incident

Key Takeaway: Overall performance is dictated by the design decisions made in week one, regardless of how capable the model is.

Common Pitfalls

i) Overengineering too early

Building elaborate abstractions before the first use case ships:

- Slow time-to-value

- High maintenance cost from day one

- Premature lock-in to design assumptions that will not survive contact with reality

ii) Ignoring observability

Without observability built into the implementation, issues go undetected and debugging is reactive instead of structured.

iii) Skipping documentation

If decisions and contracts are not documented, the next team to inherit the system will rebuild it instead of operating it.

iv) Treating the system as static

AI implementations are dynamic. They change to absorb new models, new use cases, and new regulatory expectations. Static designs become legacy fast.

Takeaway from these lessons: The majority of AI implementation failures come from gaps in the process, not from the choice of tools.

AI Implementation Best Practices: What High-Performing Teams Do Differently

1. Automate the eval pipeline

Continuous eval, regression detection, schema validation, and policy checks running on every change. Treat the eval pipeline as production code, not as a notebook.

2. Use a thin abstraction over model APIs

Direct vendor calls in product code create lock-in. A thin abstraction over the model layer lets you swap providers in a sprint, not a quarter.

3. Optimize the cost shape

Per-request budgets, model tier routing, prompt caching, and unit-economics dashboards. Cost discipline is a daily practice, not a quarterly review.

4. Define service-level agreements for AI

Latency targets, quality scores, availability budgets, and rollback timelines. Without explicit SLAs, the team negotiates the meaning of working every time something breaks.

5. Implement an AI-first engineering operating model

On-call rotation. Runbooks. Postmortems. Quarterly architecture and cost reviews. Programs that treat AI as infrastructure ship more capable systems with fewer incidents.

Logiciel's value add comes from helping teams move beyond a collection of point tools to an integrated AI implementation platform that scales across use cases without rebuilding from scratch each time.

Takeaway for High-Performing Teams: Focus on platform reuse, automation, and operating discipline. Models change. The system around them is what compounds.

Signals You Are Designing AI Implementation Correctly

How do you know the ai implementation program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the path from programs that look like progress.

- The team can describe failure modes without flinching. People who actually run ai implementation systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. AI Implementation depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, ai implementation shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

AI implementation concepts are the foundation of every modern AI program. The discipline is what separates programs that ship from programs that stall.

Key Takeaways:

- AI implementation is the system around the model, not the model itself

- Production discipline beats lab cleverness over the lifetime of any program

- The platform you build for the first use case is the asset every future program rides on

Building an effective AI implementation requires thoughtful design, continuous optimization, and alignment with business outcomes. When implementation is done correctly, it produces:

- Faster time-to-value for new AI use cases

- Reliable, governed AI behavior in production

- A scalable platform that compounds with each new program

- Improved trust with leadership, customers, and regulators

AI – Powered Product Development Playbook

How AI-first startups build MVPs faster, ship quicker, & impress investors without big teams.

Call to Action

If your AI program feels fragile, slow, or expensive to operate, now is the time to evaluate your AI implementation architecture before the next initiative starts.

Learn More Here:

- Data Warehouse Concepts Every Data Engineer Should Know in 2026

- Hybrid Delivery Model Ctos AI First Engineering 2026

- AI and Data Engineering Services

At Logiciel Solutions, we help engineering and AI leaders build AI-first implementation platforms that improve performance, reliability, and scalability. Our engineering approach focuses on system-level design and long-term operating efficiency.

Explore how to modernize your AI implementation.

Frequently Asked Questions

What are AI implementation concepts?

AI implementation concepts cover how to design, build, eval, deploy, and operate AI systems in production. They include request handling, context assembly, model serving, output validation, and audit.

What is the difference between a model and an AI implementation?

A model is the inference engine. An AI implementation is the system around it: orchestration, eval, observability, governance, and operating model. The model is roughly twenty percent of the work; the implementation is the rest.

Why is AI implementation moving to platform thinking?

Platforms are how teams scale across multiple AI use cases without rebuilding the eval, governance, and observability work each time. The first program is expensive; the second one rides on the platform; the fifth feels obvious.

What is an eval harness in AI implementation?

An eval harness is the system that measures AI quality continuously, against a curated set of cases, with regressions blocking deploys. It is production code, not a notebook.

What is the biggest mistake in AI implementation?

Treating it as a one-time project rather than infrastructure. Programs that operate AI like a database survive their first year. Programs that operate it like a feature shipment do not.