Your stakeholders are no longer confident in the data.

Risk reports don't reconcile, fraud alerts take too long to fire, and customer counts differ from one dashboard to the next. Your leadership is starting to doubt whether the systems you build can support AI.

This is not a pipeline problem; it's a data infrastructure for AI problem.

If you are a VP or Head of Data in financial services, you are responsible for one of the most complex data environments there is, one in which you must balance compliance, real-time transaction validation, and system reliability at the same time.

In this guide, you are going to learn:

- What makes up financial services data infrastructure.

- How leading banks and fintechs design scalable systems.

- What steps you can take to improve reliability and compliance.

To begin with, let’s examine the elements that make the financial services data environment uniquely difficult to manage.

The Financial Services Data Dilemma – What Makes It Different

The financial services industry is not just another data industry; it's a high-risk, highly complex data environment.

1. Sensitivity and Risk of Data

Financial services firms handle highly sensitive data, including:

- Transaction data

- Personally identifiable information (PII)

- Payment details

When financial data is processed inaccurately, the consequences go well beyond technical issues. Errors can lead to:

- Regulatory fines

- Financial loss

- Damage to reputation

2. Real-time requirements

- Fraud detection

- Payment processing

- Risk monitoring

These workloads need low-latency pipelines and event-driven systems; batch processing alone is not sufficient.

3. Compliance constraints

Data systems must meet legal requirements, audit requirements, and security standards.

4. Fragmented data sources

A typical financial services stack includes:

- Core banking systems

- CRM platforms

- Payment processors

- Risk systems

These systems use different schemas and often operate independently, without real-time integration.

Where current systems fall short

Most organizations still rely on legacy systems, batch pipelines, and manual reconciliation, which leads to delayed insights, data inconsistencies, and operational inefficiencies. The stakes are higher in financial services because data errors directly affect financial outcomes, regulatory violations result in fines, and customer trust is essential.


Financial services data infrastructure must therefore deliver scale, compliance, and real-time processing at the same time.

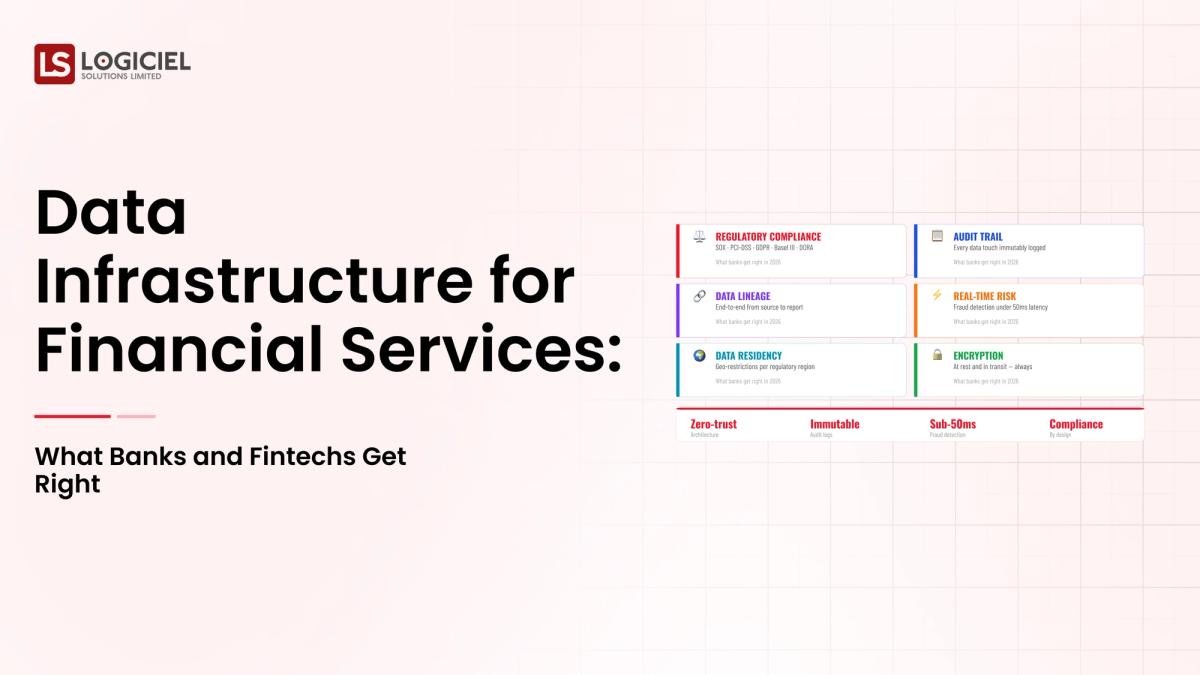

Regulatory and Compliance Considerations (SOC 2, GDPR, PCI-DSS, BCBS 239)

Compliance is not an add-on; it is a foundational design requirement.

Key frameworks:

- SOC 2: security and availability controls

- GDPR: data privacy and residency

- PCI-DSS: payment data security

- BCBS 239: risk data aggregation and reporting

What is required:

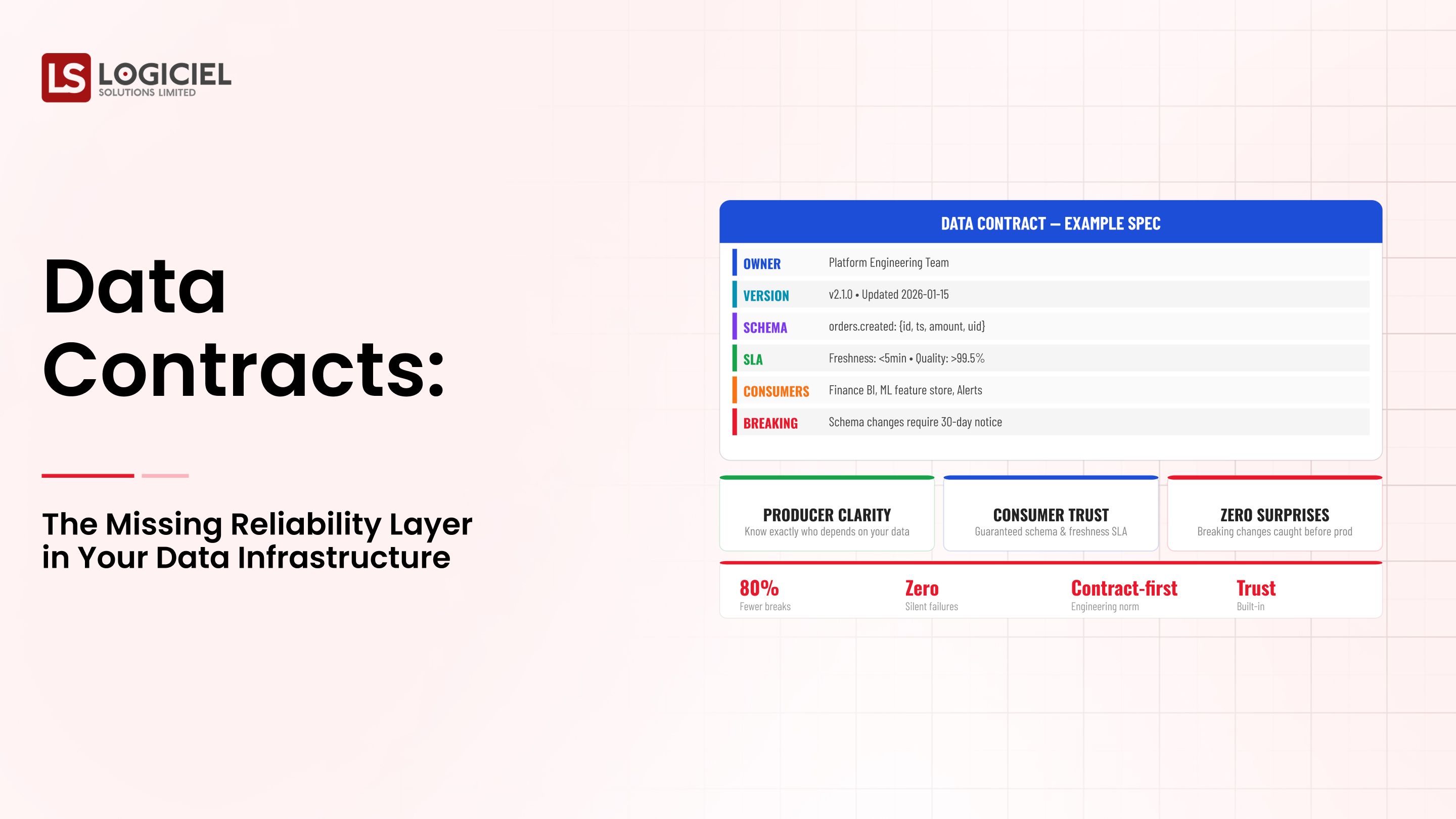

Data Lineage and Auditability

Track where data comes from, how it is transformed, and what outputs it produces.
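To make lineage concrete, here is a minimal Python sketch, assuming each pipeline step appends a record of its input datasets, the transformation it applied, and its output. The `LineageRecord` schema and the `record_step`, `content_hash`, and `upstream_of` helpers are hypothetical illustrations, not the API of any particular lineage tool. Hashing the output lets an auditor later confirm that a reported dataset has not changed since it was logged.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageRecord:
    """One audit entry: which sources fed which output, via which transformation (hypothetical schema)."""
    sources: tuple[str, ...]
    transformation: str
    output: str
    output_hash: str
    recorded_at: str


def content_hash(rows: list[dict]) -> str:
    """Deterministic SHA-256 over the rows (keys sorted), so later audits can detect changes."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def record_step(log: list[LineageRecord], sources: list[str], transformation: str,
                output: str, rows: list[dict]) -> LineageRecord:
    """Append a lineage entry for one pipeline step to an append-only log."""
    entry = LineageRecord(
        sources=tuple(sources),
        transformation=transformation,
        output=output,
        output_hash=content_hash(rows),
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(entry)
    return entry


def upstream_of(log: list[LineageRecord], dataset: str) -> set[str]:
    """Walk the log backwards to find every dataset that contributed to `dataset`."""
    found: set[str] = set()
    frontier = [dataset]
    while frontier:
        current = frontier.pop()
        for entry in log:
            if entry.output == current:
                for src in entry.sources:
                    if src not in found:
                        found.add(src)
                        frontier.append(src)
    return found


# Usage: two steps feeding a regulatory report.
log: list[LineageRecord] = []
record_step(log, ["core_banking.accounts", "payments.transactions"],
            "join on account_id", "staging.account_activity", [{"account_id": 1, "amount": 120}])
record_step(log, ["staging.account_activity"],
            "aggregate daily exposure", "reports.daily_exposure", [{"date": "2024-01-31", "exposure": 120}])
print(sorted(upstream_of(log, "reports.daily_exposure")))
# ['core_banking.accounts', 'payments.transactions', 'staging.account_activity']
```

Answering "where did this number come from?" then becomes a lookup over the log rather than a manual investigation.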

Data Residency

Data must stay within defined geographic boundaries and comply with regional legislation.
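One way to enforce residency is to check every write against a policy table before data moves. The sketch below assumes a hypothetical mapping from data classification to permitted storage regions; the classifications and region names are placeholders, not legal guidance. It fails closed, so data with no residency rule is rejected rather than written anywhere.

```python
from dataclasses import dataclass

# Hypothetical policy: which storage regions each data classification may be written to.
# Real region lists come from your own legal analysis, not from this table.
RESIDENCY_POLICY: dict[str, frozenset[str]] = {
    "eu_customer_pii": frozenset({"eu-west-1", "eu-central-1"}),
    "us_card_data": frozenset({"us-east-1"}),
    "aggregated_metrics": frozenset({"eu-west-1", "eu-central-1", "us-east-1"}),
}


class ResidencyViolation(Exception):
    """Raised when a write would move data outside its permitted regions."""


@dataclass(frozen=True)
class WriteRequest:
    dataset: str
    classification: str
    target_region: str


def check_residency(request: WriteRequest) -> None:
    """Reject the write unless the target region is allowed for this classification.

    Unknown classifications are rejected too: failing closed is safer than
    silently allowing data that has no residency rule.
    """
    allowed = RESIDENCY_POLICY.get(request.classification)
    if allowed is None:
        raise ResidencyViolation(f"no residency rule for classification {request.classification!r}")
    if request.target_region not in allowed:
        raise ResidencyViolation(
            f"{request.dataset} ({request.classification}) may not be written to {request.target_region}"
        )


# Usage
check_residency(WriteRequest("crm.contacts", "eu_customer_pii", "eu-central-1"))  # allowed
try:
    check_residency(WriteRequest("crm.contacts", "eu_customer_pii", "us-east-1"))
except ResidencyViolation as err:
    print(f"blocked: {err}")
```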

Retention Policy

Define how long each type of data is retained and how it is deleted when that period ends.
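A retention policy can be expressed as data and evaluated on a schedule. This sketch assumes hypothetical record types and retention periods. It only decides which records are due for deletion and leaves the deletion method itself to your policy. Records on legal hold, and record types with no defined period, are always kept.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical retention periods per record type; actual periods come from the
# regulations and internal policies that apply to your institution.
RETENTION_DAYS: dict[str, int] = {
    "transaction": 7 * 365,
    "marketing_consent": 2 * 365,
    "session_log": 90,
}


@dataclass(frozen=True)
class Record:
    record_id: str
    record_type: str
    created_on: date
    legal_hold: bool = False  # records under legal hold are never auto-deleted


def due_for_deletion(records: list[Record], today: date) -> list[str]:
    """Return IDs of records whose retention period has expired and that are not on legal hold."""
    expired = []
    for rec in records:
        days = RETENTION_DAYS.get(rec.record_type)
        if days is None or rec.legal_hold:
            # No defined policy, or held: keep the record and let a human decide.
            continue
        if rec.created_on + timedelta(days=days) < today:
            expired.append(rec.record_id)
    return expired


records = [
    Record("s-1", "session_log", date(2024, 1, 1)),
    Record("s-2", "session_log", date(2024, 5, 1)),
    Record("t-1", "transaction", date(2015, 1, 1), legal_hold=True),
    Record("t-2", "transaction", date(2015, 1, 1)),
]
print(due_for_deletion(records, today=date(2024, 6, 1)))  # ['s-1', 't-2']
```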

Access Controls

Ensure you have:

- Role-based access control

- Encryption

- Monitoring
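The sketch below shows role-based access control and monitoring working together, assuming hypothetical roles, permission strings, and PII field names. Callers without transaction access are denied, PII is masked for roles that lack `read:pii`, and every decision is written to an audit log. Encryption at rest and in transit is normally handled by the storage and transport layers and is not shown.

```python
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("access_audit")

# Hypothetical role-to-permission mapping for a transactions dataset.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "fraud_analyst": {"read:transactions", "read:pii"},
    "bi_analyst": {"read:transactions"},
    "support_agent": {"read:pii"},
}

PII_FIELDS = {"name", "email", "card_number"}


@dataclass(frozen=True)
class User:
    user_id: str
    role: str


def has_permission(user: User, permission: str) -> bool:
    """Unknown roles get no permissions."""
    return permission in ROLE_PERMISSIONS.get(user.role, set())


def read_transaction(user: User, row: dict) -> dict:
    """Return a view of `row` filtered by the caller's role, and log every access decision."""
    if not has_permission(user, "read:transactions"):
        audit_log.warning("DENY user=%s role=%s action=read:transactions", user.user_id, user.role)
        raise PermissionError(f"{user.user_id} may not read transactions")
    can_see_pii = has_permission(user, "read:pii")
    view = {k: (v if can_see_pii or k not in PII_FIELDS else "***") for k, v in row.items()}
    audit_log.info("ALLOW user=%s role=%s pii=%s", user.user_id, user.role, can_see_pii)
    return view


row = {"txn_id": "t-42", "amount": 250.0, "name": "A. Customer", "card_number": "4111111111111111"}
print(read_transaction(User("u1", "bi_analyst"), row))
# {'txn_id': 't-42', 'amount': 250.0, 'name': '***', 'card_number': '***'}
```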

Impact on Architecture

Compliance shapes:

- Storage

- Pipeline design

- Access layers

Build vs. Retrofit

Building compliance in early lowers long-term cost.

Retrofitting compliance increases complexity and risk.

According to reported industry data, organizations that retrofit compliance onto existing systems spend 30-50% more on compliance.

Primary takeaway:

Build compliance into your data infrastructure for AI from day one.

The Financial Services Data Architecture That Is Truly Scalable

Leading organizations are converging on similar architectures built from the following components.

Hybrid Processing (Batch and Real-Time)

- Batch for reporting

- Streaming for real-time decisions

Decoupled Storage and Compute

Benefits:

- Scalability

- Cost control

- Flexibility

Data Lakehouse

Combines the scalable storage of a data lake with the query performance of a data warehouse.

Strong Observability Layer

Tracks:

- Data quality

- Pipeline health

- Data latency
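Here is a minimal sketch of those three signals for one batch of records: null rates for data quality, row count as a basic pipeline-health signal, and the delay between event time and load time for latency. The `check_batch` function, its field names, and its thresholds are hypothetical; in practice these metrics would be emitted to a monitoring system rather than returned.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class BatchHealth:
    row_count: int
    null_rate: dict[str, float]   # share of rows missing each required field
    max_latency: timedelta        # largest gap between event time and load time
    issues: list[str]


def check_batch(rows: list[dict], required: list[str], loaded_at: datetime,
                min_rows: int, max_latency: timedelta, max_null_rate: float = 0.01) -> BatchHealth:
    """Compute simple quality, health, and latency signals for one pipeline batch.

    Thresholds are hypothetical; tune them per pipeline.
    """
    issues: list[str] = []
    n = len(rows)
    if n < min_rows:
        issues.append(f"row count {n} below expected minimum {min_rows}")

    null_rate: dict[str, float] = {}
    for col in required:
        missing = sum(1 for r in rows if r.get(col) is None)
        null_rate[col] = missing / n if n else 1.0
        if null_rate[col] > max_null_rate:
            issues.append(f"{col}: {null_rate[col]:.1%} null")

    latencies = [loaded_at - r["event_time"] for r in rows if r.get("event_time") is not None]
    worst = max(latencies, default=timedelta(0))
    if worst > max_latency:
        issues.append(f"latency {worst} exceeds {max_latency}")

    return BatchHealth(n, null_rate, worst, issues)


now = datetime(2024, 6, 1, 12, 0, tzinfo=timezone.utc)
rows = [
    {"txn_id": "a", "amount": 10.0, "event_time": now - timedelta(seconds=30)},
    {"txn_id": "b", "amount": None, "event_time": now - timedelta(minutes=20)},
]
health = check_batch(rows, required=["txn_id", "amount"], loaded_at=now,
                     min_rows=1, max_latency=timedelta(minutes=5))
for issue in health.issues:
    print(issue)
```

Running checks like these on every batch is what turns "the dashboards don't match" from a stakeholder complaint into an alert the data team sees first.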

Multi-System Integration

Connects:

- Core banking systems

- CRM

- Payment platforms

All of these systems require:

- Standardized schemas

- Normalized data

- Consistent pipelines
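Standardizing usually means mapping each source into one canonical schema at the edge of the platform. In this sketch the source field names (`TXN_REF`, `amount_minor`, and so on) and the `CanonicalTransaction` schema are hypothetical. The point is that amounts, currencies, customer IDs, and timestamps end up in the same form regardless of which system produced them.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal


@dataclass(frozen=True)
class CanonicalTransaction:
    """Shared schema every downstream consumer reads (field names are illustrative)."""
    transaction_id: str
    customer_id: str
    amount: Decimal          # major currency units, e.g. 12.50
    currency: str            # ISO 4217 code, upper case
    occurred_at: datetime    # always timezone-aware UTC
    source_system: str


def from_core_banking(rec: dict) -> CanonicalTransaction:
    # Hypothetical core-banking export: zero-padded customer numbers, decimal-string
    # amounts, and compact timestamps that are in UTC but carry no offset.
    return CanonicalTransaction(
        transaction_id=rec["TXN_REF"],
        customer_id=rec["CUST_NO"].lstrip("0"),
        amount=Decimal(rec["AMT"]),
        currency=rec["CCY"].upper(),
        occurred_at=datetime.strptime(rec["POST_DT"], "%Y%m%d%H%M%S").replace(tzinfo=timezone.utc),
        source_system="core_banking",
    )


def from_payment_processor(rec: dict) -> CanonicalTransaction:
    # Hypothetical processor payload: amounts in minor units (assumes a two-decimal
    # currency; currencies such as JPY need a per-currency exponent) and ISO 8601
    # timestamps with an offset.
    return CanonicalTransaction(
        transaction_id=rec["id"],
        customer_id=str(rec["customer"]),
        amount=Decimal(rec["amount_minor"]) / 100,
        currency=rec["currency"].upper(),
        occurred_at=datetime.fromisoformat(rec["created"]).astimezone(timezone.utc),
        source_system="payments",
    )


a = from_core_banking({"TXN_REF": "CB-1", "CUST_NO": "000123", "AMT": "12.50",
                       "CCY": "usd", "POST_DT": "20240601093000"})
b = from_payment_processor({"id": "pp-9", "customer": 123, "amount_minor": 1250,
                            "currency": "USD", "created": "2024-06-01T05:30:00-04:00"})
print(a.customer_id == b.customer_id, a.amount == b.amount, a.occurred_at == b.occurred_at)
# True True True
```

The two inputs describe the same payment in different shapes; after normalization they agree on customer, amount, currency, and time, which is what keeps totals and customer counts aligned across dashboards.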

Where You Need Real-Time Processing

- Fraud detection

- Payment validation

Where Batch Processing Still Works

- Regulatory reporting

- Historical analysis
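The split between real-time and batch can be sketched as a router that sends latency-critical event types straight to a streaming handler and buffers everything else for a scheduled job. This is an in-process illustration with hypothetical event type names. In production the two paths would typically be separate systems, such as a stream processor and a batch scheduler.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical event types that must be handled in real time
# (fraud detection and payment validation, per the split above).
REAL_TIME_EVENTS = {"card_authorization", "payment_submitted"}


class HybridRouter:
    """Send latency-critical events to a streaming handler immediately and
    buffer everything else for a scheduled batch job (e.g. regulatory reporting)."""

    def __init__(self, stream_handler: Callable[[dict], None]):
        self.stream_handler = stream_handler
        self.batch_buffer: dict[str, list[dict]] = defaultdict(list)

    def route(self, event: dict) -> str:
        if event["type"] in REAL_TIME_EVENTS:
            self.stream_handler(event)  # handled immediately, on the hot path
            return "stream"
        self.batch_buffer[event["type"]].append(event)
        return "batch"

    def flush_batch(self) -> dict[str, list[dict]]:
        """Hand buffered events to the batch job and start a fresh buffer."""
        ready, self.batch_buffer = dict(self.batch_buffer), defaultdict(list)
        return ready


seen: list[dict] = []
router = HybridRouter(stream_handler=seen.append)
for e in [{"type": "card_authorization", "id": 1},
          {"type": "account_statement", "id": 2},
          {"type": "payment_submitted", "id": 3}]:
    router.route(e)
batch = router.flush_batch()
print([e["id"] for e in seen], {k: len(v) for k, v in batch.items()})
# [1, 3] {'account_statement': 1}
```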

Key takeaway:

Scalable systems are built on real-time processing, observability, and modular design.

Common Use Cases for Financial Services AI Data Infrastructure

1. Real-Time Operational Analytics

Example: fraud detection systems

Requirements:

- Streaming pipelines

- Low latency

- High reliability
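A common building block for streaming fraud detection is a sliding-window velocity rule. The sketch below, with hypothetical thresholds, flags a card that makes more than three transactions within 60 seconds. It keeps only the timestamps still inside the window, so each event costs amortized constant time. Real fraud systems combine many such signals with machine learning models.

```python
from collections import defaultdict, deque


class VelocityCheck:
    """Flag a card when it makes more than `max_txns` transactions within `window_s` seconds.

    A toy sliding-window rule with hypothetical defaults.
    """

    def __init__(self, max_txns: int = 3, window_s: float = 60.0):
        self.max_txns = max_txns
        self.window_s = window_s
        self.recent: dict[str, deque] = defaultdict(deque)

    def observe(self, card_id: str, ts: float) -> bool:
        """Record a transaction at time `ts` (seconds) and return True if it should be flagged.

        Assumes events for a given card arrive in timestamp order.
        """
        window = self.recent[card_id]
        window.append(ts)
        # Evict timestamps that fell out of the window; each one is evicted once.
        while window and window[0] <= ts - self.window_s:
            window.popleft()
        return len(window) > self.max_txns


checker = VelocityCheck(max_txns=3, window_s=60)
events = [("card-A", 0), ("card-A", 10), ("card-A", 20), ("card-A", 30), ("card-B", 30), ("card-A", 95)]
for card, ts in events:
    if checker.observe(card, ts):
        print(f"ALERT {card} at t={ts}s")  # prints: ALERT card-A at t=30s
```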

2. Regulatory Reporting

Requirements:

- Data accuracy

- Audit trails

- Consistent outputs

3. 360-Degree Customer View

Combines:

- Transactions

- Behaviour

- Interactions

4. All Transactions In History Is Required

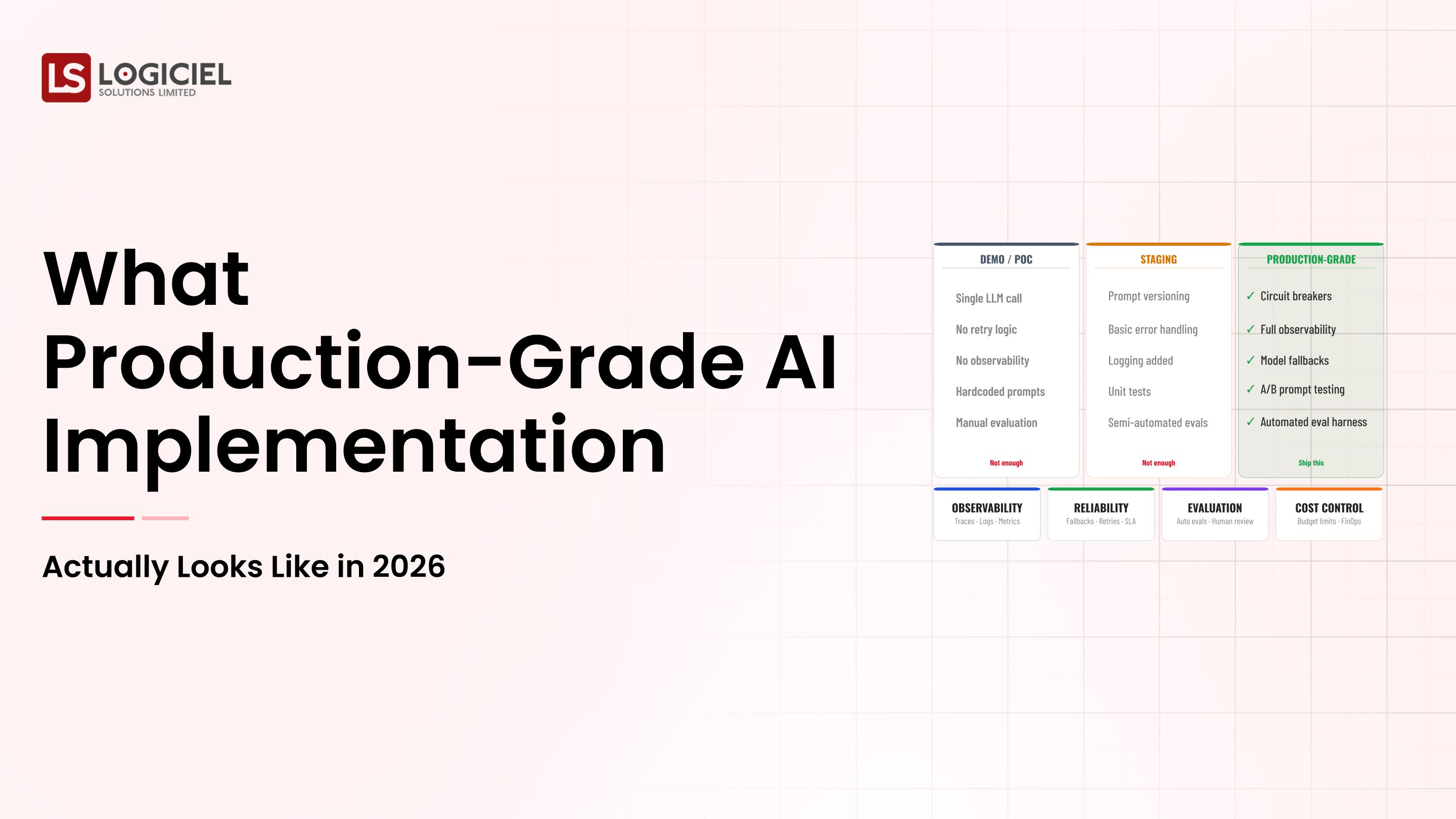

AI and Machine Learning Model Development

Requirements:

- Clean, high-quality data

- Historical data

- Data lineage

Why These Use Cases Matter

They directly affect:

- Revenue

- Risk management

- Customer experience

Conclusion:

Data infrastructure for AI is the foundation for these critical financial services use cases.

What Leading Financial Services Teams Do Differently

What leading teams do:

- Treat data as a regulated asset.

- Invest in observability ahead of time.

- Align engineering and compliance.

Where other teams go wrong:

- Treat data as a by-product of a process.

- Wait until problems arise before addressing quality issues.

- React to problems after they occur.

Before & After

Reactive teams vs. proactive teams:

- Fix issues late vs. prevent them before they occur

- Manual processes vs. automated processes

- Limited visibility vs. complete observability

Key Takeaway

This is not about tools.

It is about mindset and system design.

Conclusion:

High-performing teams optimize for reliability, compliance, and visibility.

Implementation Considerations: How to Get Started

- Start with high-risk data flows.

- Build a business case covering the cost of failure, compliance risk, and the ROI of reliability.

- Migrate incrementally: build parallel data pipelines, validate their outputs, and migrate gradually.

- Align engineering, compliance, and legal as one cross-functional team.

- Establish an AI-driven engineering system. Leading financial services companies are moving beyond traditional system design toward systems that predict failures, maintain compliance, and …
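For the parallel-run step of an incremental migration, a reconciliation check compares the legacy and new pipelines' outputs before any consumer is switched over. This sketch assumes both pipelines produce per-account balances keyed by a shared ID. The `reconcile` and `ready_to_cut_over` helpers and the one-cent tolerance are hypothetical choices, not a prescribed procedure.

```python
from decimal import Decimal


def reconcile(legacy: dict[str, Decimal], new: dict[str, Decimal],
              tolerance: Decimal = Decimal("0.01")) -> dict[str, list[str]]:
    """Compare per-key outputs (e.g. account balances) from the legacy and new pipelines.

    Returns keys missing from either side and keys whose values differ by more than `tolerance`.
    """
    missing_in_new = sorted(legacy.keys() - new.keys())
    unexpected_in_new = sorted(new.keys() - legacy.keys())
    mismatched = sorted(
        k for k in legacy.keys() & new.keys()
        if abs(legacy[k] - new[k]) > tolerance
    )
    return {"missing_in_new": missing_in_new,
            "unexpected_in_new": unexpected_in_new,
            "mismatched": mismatched}


def ready_to_cut_over(report: dict[str, list[str]]) -> bool:
    """Hypothetical gate: switch consumers only when a parallel run shows no discrepancies."""
    return not any(report.values())


legacy = {"acct-1": Decimal("100.00"), "acct-2": Decimal("55.10"), "acct-3": Decimal("9.99")}
new = {"acct-1": Decimal("100.00"), "acct-2": Decimal("55.40"), "acct-4": Decimal("1.00")}
report = reconcile(legacy, new)
print(report, ready_to_cut_over(report))
```

Running this on every parallel cycle, and cutting over only after a sustained clean streak, is one way to avoid the mismatched risk reports described at the start of this guide.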

Logiciel has been specifically designed to do three things:

- Support the regulatory environment

- Reduce the complexity of day-to-day operations

- Scale AI systems

To sustain success, we recommend starting small and expanding gradually, guided by a risk assessment of your current infrastructure.

In conclusion

Building data infrastructure (both hardware and software) for financial services is one of the most complex engineering challenges organizations face today.

Because financial institutions depend on consistent data more heavily than most other businesses, their data infrastructure must be reliable and compliant.

An architecture that handles both batch and real-time workloads is also more likely to be implemented successfully.

Finally, observability, governance, and system design will determine whether the infrastructure build-out succeeds.

This is not just an upgrade; it is a fundamental change.

Once a compliant data infrastructure is in place, it opens up possibilities that were previously out of reach:

- More reliable AI systems

- Faster decision-making

- Stronger compliance

- More trustworthy data

To evaluate whether your data infrastructure has reliability or compliance issues, explore the following resources:

- Data Infrastructure Design: How to Architect for Scale, Reliability, and AI Readiness

- Real-Time Data Infrastructure: Why Batch Processing Is No Longer a Viable Option for AI

- How to Construct a Data Infrastructure Roadmap

Logiciel Solutions can help you build a compliant, scalable AI-first data infrastructure. Contact us to learn more!


Call to Action

If your organization is struggling with reliability, compliance, or AI scalability challenges within financial services infrastructure, now is the time to evaluate your architecture.

Recommended Next Steps:

- Review your current real-time processing capabilities

- Audit compliance and observability gaps

- Evaluate whether your infrastructure can support AI workloads at scale


Next Step:

👉 Request a Financial Services Infrastructure Assessment from Logiciel Solutions.

Logiciel Solutions helps financial institutions build compliant, scalable, AI-first data infrastructure systems designed for reliability, governance, and real-time intelligence.

Frequently Asked Questions

What is data infrastructure for AI in financial services?

It consists of the systems and processes that collect, process, and deliver data for AI and analytics applications, reliably and in compliance with regulations.

Why is data infrastructure in financial services more complex than in most industries?

Because it must deliver real-time processing while maintaining strict compliance and protecting sensitive information, all at the same time.

What is the biggest challenge associated with the data infrastructure in financial services?

The greatest challenge is balancing scalability, compliance and performance while at the same time reducing complexity.

How can a company ensure compliance in its data systems?

By building auditability, access control, and data lineage into the design from the very beginning.

What is the best architecture for financial services?

A hybrid architecture that combines batch and real-time processing with strong observability and governance.