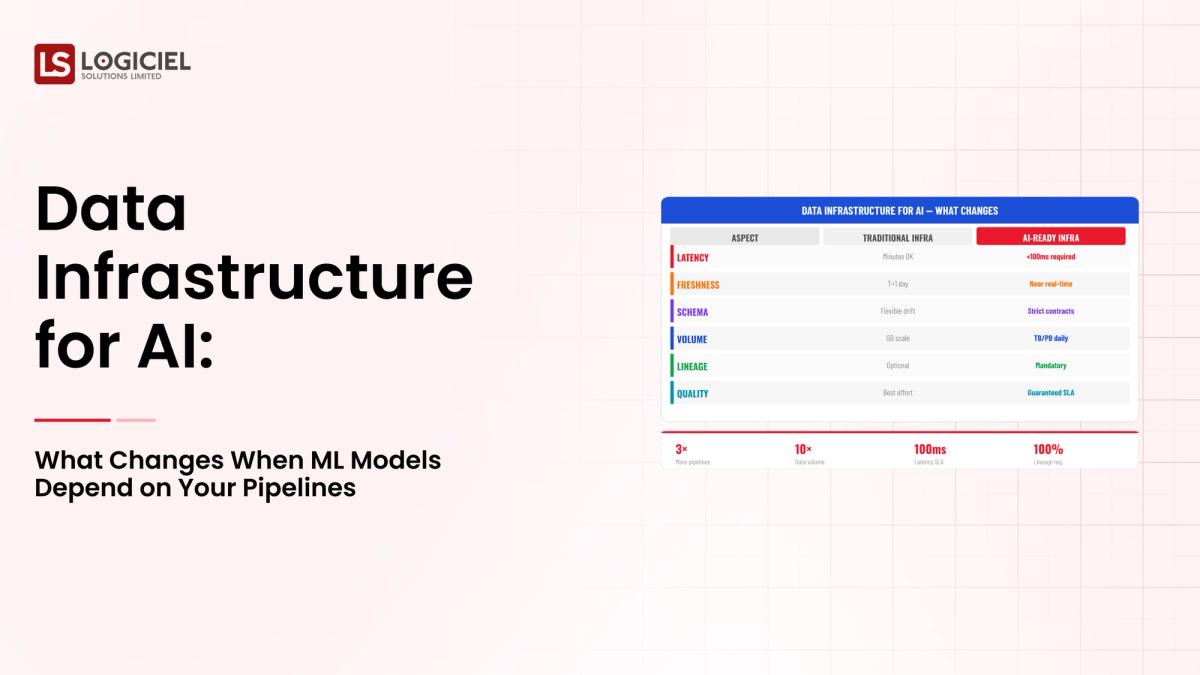

Three years ago, you had a reasonable architectural plan and built pipelines that were designed for analytics. You used batch processing, as well; and latency was not a problem.

Fast-forward to today; however, and now you are experiencing difficulty supporting machine learning workloads on those same systems.

You have silent failures, non-matching features, and unpredictable data freshness; and, all of a sudden, your data infrastructure is using up 30-40% of your sprints with debugging/rework.

This change is not by coincidence; it is a structural change.

ROI of AI-Ready Data Infrastructure

Inside an 8-month rebuild that turned three failed pilots into a 9:1 ROI model.

If you are the Staff/Principal Engineer responsible for building/scaling AI systems, the purpose of this article is to:

- Help you understand what has changed at a fundamental level when ML relies on your pipelines.

- Educate you on how to design data infrastructures for AI-ready systems.

- Help you avoid the most common mistakes made by teams when scaling AI workloads.

Let us now look at how the enterprise data challenge changes in this context.

What Makes Enterprise Data Challenges Different

Building data systems is already complex; however, building data infrastructures for AI elevates this complexity to a new level.

1. Scale Is Non-Linear

Traditional Systems:

- Process data in batches

- Handle predictable workloads

AI Systems:

- Ingest data continuously

- Require real-time, or near-real-time, updates

This results in:

- Increased throughput requirements

- Increased pressure on infrastructure

- Increased reliance on infrastructure reliability

The Significance of Data Privacy and Regulations

Data sensitivity and regulatory considerations will have a significant influence on how you build your AI system. Generally, AI systems rely on three types of data: customer data, behavioral data, and financial or regulated data.

As a result, AI systems must comply with strict regulations and auditing, have the ability to track data in a governed manner, and have many governance issues concerning data.

3. Real-Time Needs

Machine learning models often depend on almost instantaneous feature creation and inferencing, which means that batch pipeline technology is no longer adequate.

4. Complexity of Data Dependencies

AI pipelines depend on multiple upstream systems, feature engineering workflows, and training datasets for the ML model. If one component fails, this failure will propagate through the entire system.

Challenges with Existing Data Architectures

The majority of existing data systems were designed primarily for analytics, not for AI. Therefore, they often do not support real-time operations, have very limited observability, and lack end-to-end lineage on data.

The Importance of Rethinking Your Data Architecture

When an AI system fails, the system will give unreliable results, leading to poor quality decision-making and ultimately result in negative customer experiences. This is why it is critical to take a holistic and critical look at how you want to build your data engineering architecture.

Regulatory and compliance factors must be considered when implementing data-infrastructure for AI because they will shape architecture decisions from day one.

Key Compliance Considerations

The SOC 2, GDPR, and other regulations related to specific industries such as finance and healthcare will establish rules on how the data can be stored, moved, and processed, thus introducing restrictions on these in the design of the architecture.

1. Data Residency

There will be an expectation in the legal world when storing data that it will remain within a set geographic area. This will affect the design of how data will be stored, strategies to replicate data, and the architecture of the pipeline.

2. Retention Policy

AI systems will need a high volume of historical data for training, but the laws associated with the data may require that it be deleted after a certain period, thus restricting how long the data can be retained. This creates a challenging balance.

3. Audit Trails

Any data created or modified must be able to identify where the data came from, how it was transformed, and where it was used in order to create a complete lineage for the data, making it critical that lineage is included in your development process.

4. Impact of Pipeline Design

The regulatory compliance constraints will impact how data flows through your system, the types of storage you choose to use, and the levels of access you choose to provide.

When you build compliance into your systems at the beginning, it helps you:

- Prevent expensive retrofits down the road

- Create an environment that fosters innovation

Insight:

Compliance is not a hindrance; rather, it is a design constraint that should be part of your data infrastructure for AI.

For AI, an enterprise data architecture that is truly scalable must be designed deliberately.

A scalable data infrastructure consists of distinct core architecture layers:

Ingestion Layer

Where you ingest data from a variety of sources and process both streaming and batch inputs.

Storage Layer

Where you have your data stored in either data lakes or warehouses, with support for both structured and unstructured data.

Processing Layer

Where you transform your data and create features for modeling, and process both batch and real-time data.

Orchestration Layer

Where you manage your workflow and its dependencies, ensure dependable service, and provide observability of the system.

Observability Layer

Where you track the health of your pipeline and monitor the quality of your data.

Real-Time vs Batch Data Usage

Not all data must be processed on a real-time basis.

Use real-time for:

- User facing applications.

- Fraud detection.

- Recommendations.

Use batch for:

- Report generation.

- Historical analysis.

Interoperating with Multiple Systems of Record

Typical enterprise systems included in an enterprise architecture may include:

- Customer Relationship Management (CRM)

- Billing Systems

- Internal databases

When developing your architecture, you will want to make sure that you:

- Integrate with these systems,

- Maintain consistency, and

- Ensure reliability.

What do High Performing Teams Typically Do

High performing teams typically build modular systems, ensure that design modularity is preserved across layers, and develop systems that are scalable from day one.

Insight:

A scalable architecture is not dependent on the tools you use, but rather on a solid separation of responsibilities and design principles.

Common Enterprise Use Cases of Data Infrastructure Used for AI

Understanding real-life use cases will help clarify the requirements.

1. Real-time Operational Analytics

Examples of real-time operational analytics include:

- Fraud detection.

- Inventory management.

- Patient monitoring.

Real-time operational analytics requires:

- Low latency (or near real-time processing).

- A high degree of reliability.

2. Regulatory Reporting

AI systems must provide for:

- Accurate report generation.

- Audit trails for all activity.

Regulatory reporting requires:

- Effective data governance.

- Complete data lineage.

3. Customer 360 View

Combines

- Behavioral Data

- Transaction Data

- Interaction Data

Requires

- Data Integration

- Consistency

4. ML Model Training

Models Depend on

- High-Quality Data

- Consistent Features

- Historical Datasets

Requires

- Reliable Pipelines

- Versioned Datasets

Key Insight:

Every use case has a unique requirement that can/does shape and inform pipeline and infrastructure design.

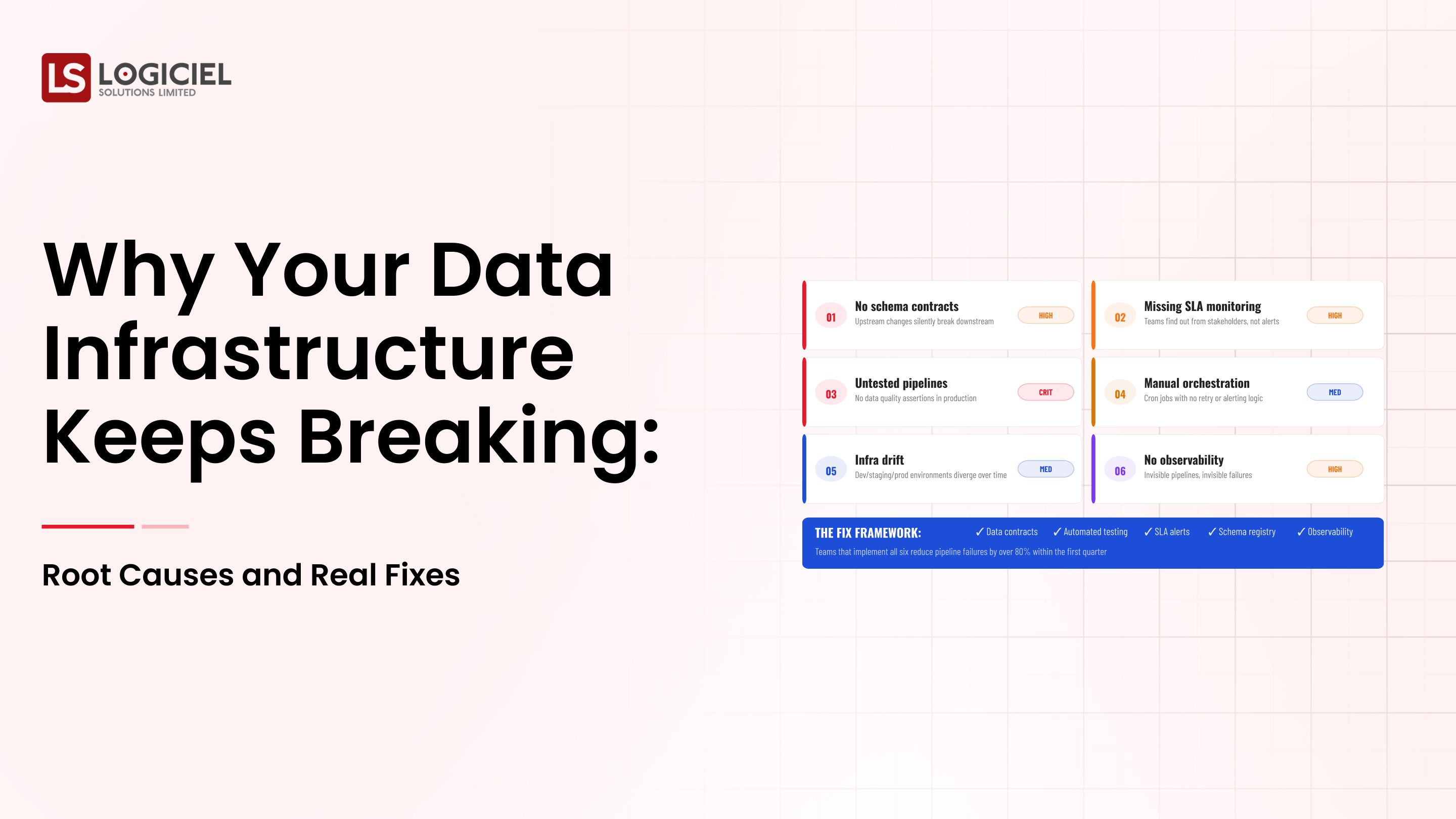

What Enterprise Leaders Are Doing Right Vs Others

There is a real distinction between high performing teams and low performing teams.

What Leaders Do Right

1. Data Is a Strategic Asset

Data is not just a byproduct of doing business.

2. Early Investment in Observability

Before you have to take action due to a failure.

3. Create Cross Functional Ownership

Data engineering, compliance and product teams.

What Others Do Wrong

1. Reactive Approach

Fixing problems after an incident occurs.

2. Underestimating Complexity

Thinking that your Analytics Infrastructure will easily scale to AI.

3. Not Thinking About Governance Until There Is a Compliance Issue

Before / After

Before

- Frequent pipeline failures

- Slow debugging

- Low Data Trust

After

- Proactive monitoring

- Faster resolution

- High system Reliability

Key Insight:

Creating a successful environment is done through proactive design, not through reactive fixes.

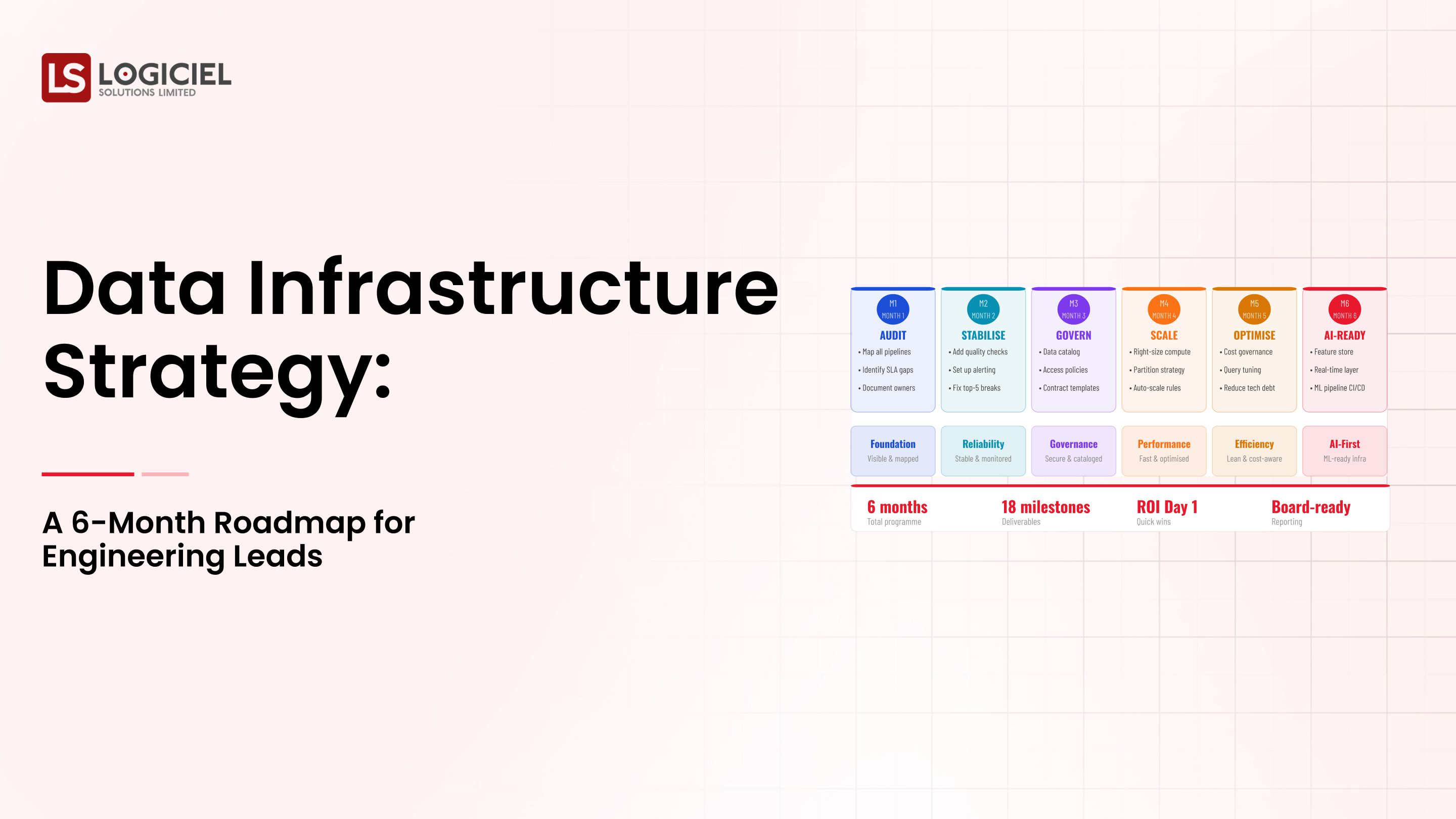

Implementation Considerations and How to Get Started

Building Data infrastructure for AI is an ongoing process.

1. Begin with the Highest Risk Data Flows.

Focus on the:

- Critical pipelines

- Highest impact datasets

Not the coolest problems.

2. Build The Business Case To Leadership

Show the potential risks

- Quantify the amount of wasted resource

- Demonstrate ROI

3. Create a Migration Strategy

Use:

- Parallel pipelines

- Validation Layers

- Gradual cutover

Reduce the level of risk.

Scaling incrementally involves a small first step, validating the approach, and then systematically expanding once you have an established methodology.

5. How Logiciel's AI-First Engineering Approach Helps

Logiciel's AI-first engineering approach:

- Integrates observability and lineage

- Automatically manages pipeline reliability

- Reduces operational overhead

By doing this, teams are able to:

- Scale quickly

- Increase reliability

- Decrease costs

Conclusion

Building data infrastructure for AI is fundamentally different than building a data infrastructure for its predecessors.

The key reasons are:

- AI systems magnify existing infrastructure deficiencies

- Existing methods will not be scalable

It is critical that observability and lineage be included when designing any infrastructure for AI; without them, it will not be possible for the system to be manageable.

Incremental and strategic implementations produce results

The all-at-once approach is unsuccessful.

This is a difficult endeavor, yet solving it produces:

- Reliable AI systems

- Faster innovation

- Better business results

Scaling Data Team Without Scaling Headcount

Inside a 12-week overhaul that doubled output and cancelled two senior data engineering hires.

Call to Action

For those who are beginning to plan their infrastructure for AI, the following articles should aid:

- Data Infrastructure Design: How to Architect for Scale, Reliability, and AI Readiness

- How to Build a Data Infrastructure Roadmap: A Framework for Engineering Leaders

As the next step, schedule a demo with Logiciel and see how Logiciel can assist your team in building AI-ready infrastructure.

Logiciel Solutions assists engineering teams in moving their AI systems from experimental to production-grade.

Logiciel’s AI-first engineering teams build infrastructure that improves:

- Reliability

- Scalability

- Delivery speed

Let’s create systems that your AI can rely on.

Frequently Asked Questions

What is data infrastructure for AI?

Data infrastructure for AI is the set of pipelines, storage, processing and monitoring systems that will be used to support your AI and ML workloads. This data must be reliable, consistent and available for both training and inference.

How is AI data infrastructure different than traditional data infrastructure?

AI infrastructure requires real-time processing and higher reliability than traditional infrastructure, and traditional architectures tend to be batch-focused and not suitable for supporting ML workloads.

Why is it important to have data lineage in AI?

Data lineage allows users to trace data used in ML models back through the entire process and is useful for debugging issues, maintaining compliance, and improving model reliability.

What are the biggest challenges with implementing AI data infrastructure?

A: - Data quality - Reliability of pipeline - Real-time processing demands - Compliance requirements

How should teams begin with AI data infrastructure?

Focusing on the most important pipelines first, implementing observability and lineage first, and then scaling incrementally. Teams should avoid over-engineering their systems initially.