You receive an alert at 2:07 a.m. while on call for a critical pipeline failure. The alarm did not sound very loudly or sound clear at all. The data displayed on downstream dashboards does not match the correct values; thus, stakeholders have begun asking questions about the data. Meanwhile, your team is rapidly scrambling to determine what caused the failure.

This is the current state of the modern era of data management infrastructure.

If you are a Data Engineering Lead, then there is a high likelihood that you have experienced this pattern:

- Silent Failures

- Slow Root Cause Analysis

- Repetitive Fire-fighting

Within this article, you will discover:

- The actual reasons that data systems fail in 2026.

- Three root causes that most engineering teams typically underestimate.

- A practical framework for diagnosing and resolving your data management infrastructures.

This is not a standard tooling guide. Instead, this is a system-wide diagnostics and solutions guide.

60% Overhead Reduction Guide

Inside a one-quarter overhead audit that pulled a five-person data team back from 67% firefighting.

WHY ARE DATA MANAGEMENT INFRASTRUCTURE ISSUES GETTING WORSE AND NOT BETTER?

On the surface of things, our data tooling has never been better; however, the fact is that our overall data management infrastructures continue becoming less and less stable.

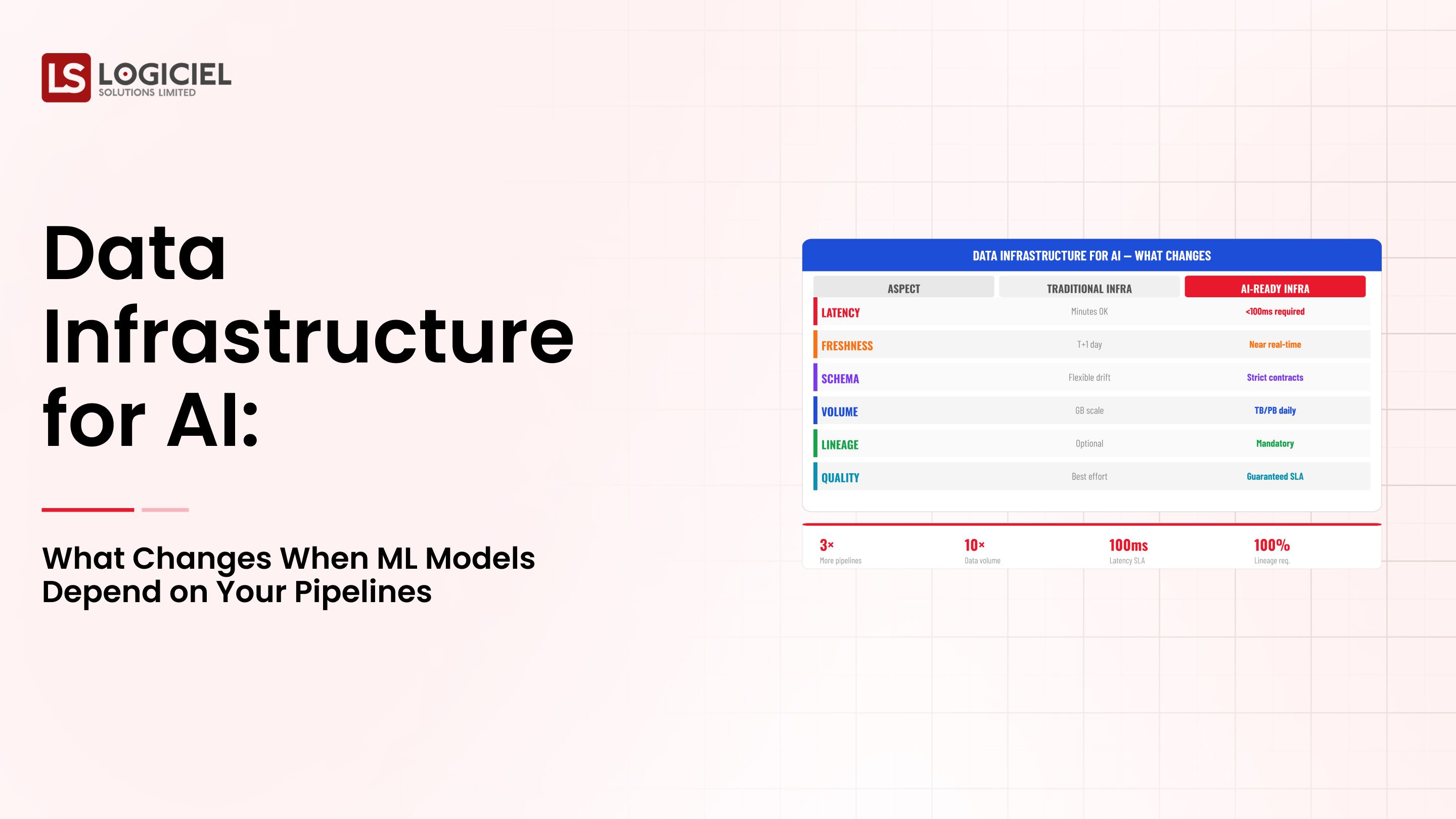

DATA VOLUME CONTINUES TO OUTPACE TEAM CAPACITY

Modern data management infrastructure now consists of:

- Streaming events

- Third-party integrations

- Real-time user interaction

However, engineering teams have yet to scale accordingly to accommodate all of this incoming data.

As a result of this imbalance between incoming data and the current capacity of your engineering teams, you will ultimately face large volumes of pipeline failures relative to the number of engineers assisting in developing and maintaining the data pipelines.

Therefore, to maintain your current data management system as well as improve its reliability, you must do your best to maintain an adequate pipeline-to-engineer ratio so that you will receive sufficient support from your engineering staff to manage your data management system as well as work towards fixing unidentified problems with your existing data management

More Tools = More Opportunity for Errors

Today's average data platform consists of:

- Orchestration tools

- Transformation frameworks

- Storage systems

- Monitoring tools

Every one of these point solutions adds:

- Complexity to data integration

- Failure points that surface when data is integrated

- Debugging, to a greater or lesser extent, and so on

This concept known as tool sprawl is where we have added many point solutions creating double the amount of cognitive load for our teams.

The fact that we’re placing increasing cognitive load on data and at the same time we’re increasing company expectations means our data pipelines aren’t built to satisfy the exponentially increasing level of expectation that data will be delivered to business users.

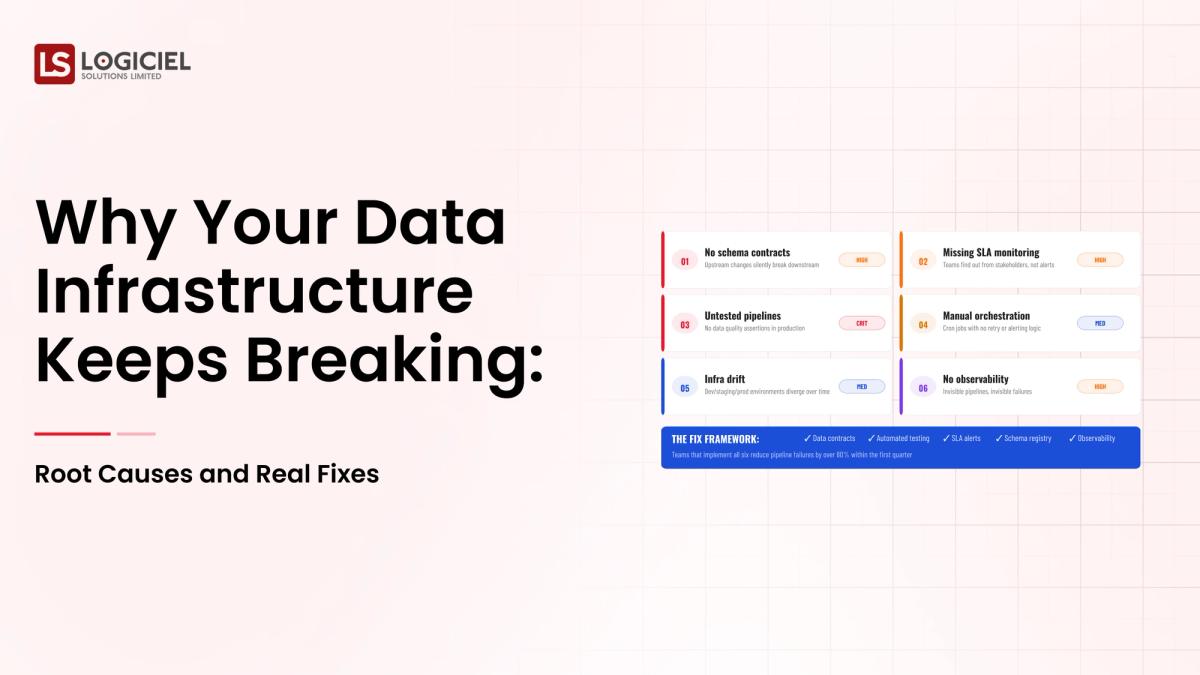

Reason #1: No Visibility, No Fix

Even when teams have many monitoring applications, they typically do not have visibility into their data flows.

The Issue

Most of the time, we discover these issues when:

- A business user comes to us and tells us there’s a problem;

- Our dashboards are not functional and we do not know that they are down until a business user tells us; or

- A business decision was negatively impacted by an issue.

This reactive approach is why our teams are not performing well.

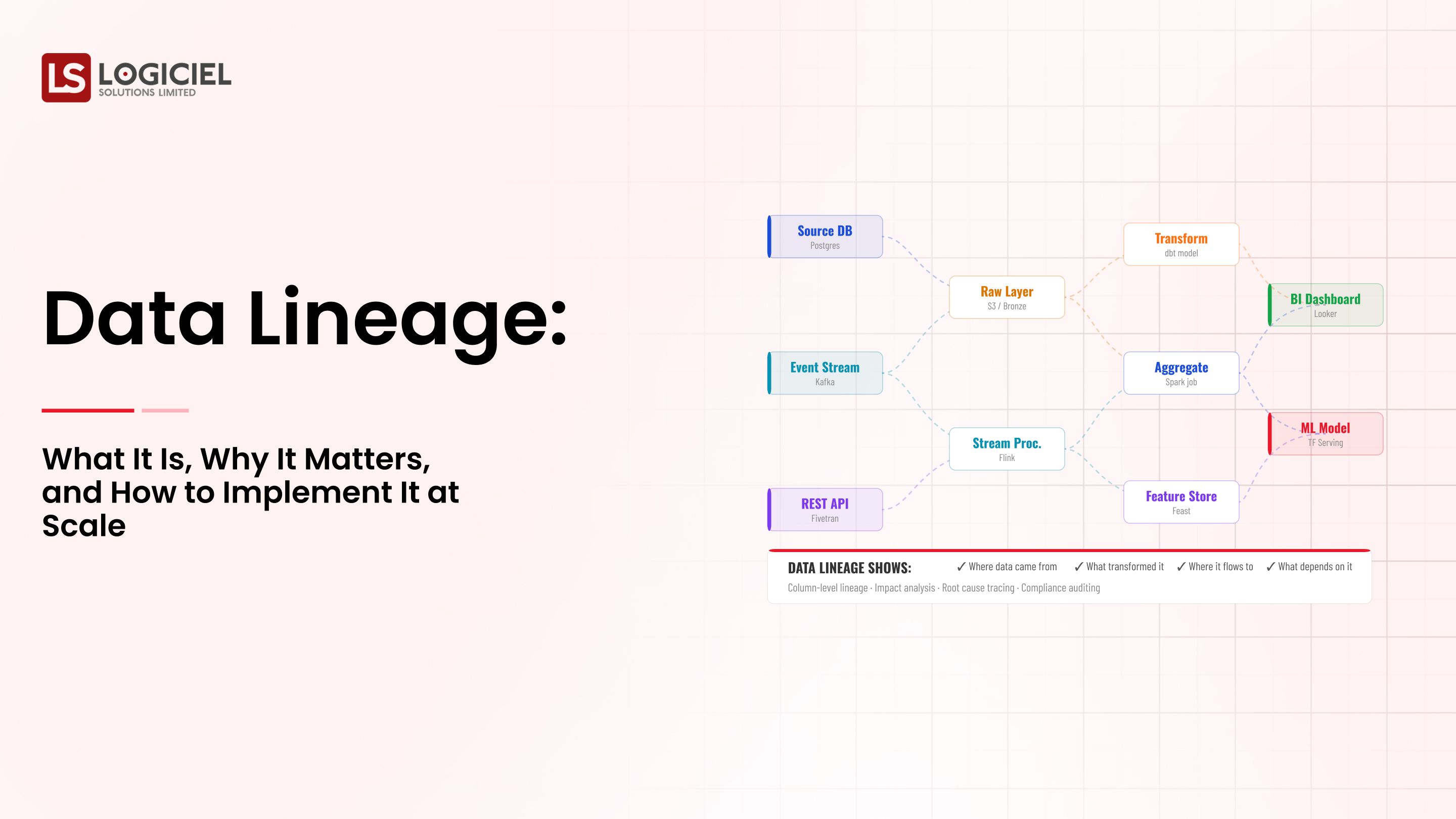

What Causes This?

- Monitoring is fragmented across multiple tools

- End-to-end data observability has not been established

- Data lineage is not tracked.

When you cannot track data lineage, answering basic questions becomes a challenge:

- What data source is this coming from?

- What transformations were applied to the data?

- What downstream systems are negatively impacted?

The Effect

- It takes teams longer to do root cause analysis.

- It takes longer for teams to respond to systems being down.

- Business users are frustrated.

The Way It Should Be

High performing data teams are implementing:

- End-to-end data observability

- Real-time anomaly detection

- Automated alerting before business impact occurs.

For example, if an anomaly is detected in a pipeline while ingesting data, the alert is triggered prior to any transformations taking place so that the issue can be addressed before business users are negatively impacted.

Key Insight

Visibility into a system is not about having a bunch of logs; visibility into a system is about understanding the system from the end-users’ perspective.

Root Cause 2: Lack of Clarity About Ownership

When something goes wrong, your first thought is, "Who is responsible?"

If you cannot figure that out right away, then you have a problem related to ownership.

The problem through my experience is, pipelines cut across multiple teams, do not document ownership, and you do not know who is responsible for what.

As a result, you have:

- Slower response to an incident.

- People blaming each other for the failure.

- Increased length of time that an incident is present.

Reasons for the cause are:

- Rapid growth in the system.

- No governance.

- Rarely, if ever, do you enforce data contracts.

The hidden costs of ambiguity regarding ownership increases both the mean time to detect and mean time to resolve incidents. The lack of accountability decreases incident reliability.

The Solution

1. Data Ownership Maps

Each dataset must have an owner and that person must be documented.

2. SLA Accountability

Define the expectations for reliability and assign someone that is responsible for meeting those expectations.

3. Data Contracts

Define the expectations for the schema and prevent breaking changes.

For example

Before:

A schema change silently breaks a downstream pipeline.

After:

Contract violation triggers an alert and notifies the owner immediately.

Key point

Ownership is fundamental to scaleable data engineering systems; it is not an option.

Root Cause 3: Complexity of Tooling; Too many Tools, No Direct Views

Modern stacks are powerful but very fractured.

The Problem

Various teams use different tools for:

- Ingestion

- Transformation

- Monitoring

- Alerting

Each operates in isolation.

The Result

- Engineers are constantly switching contexts.

- Debugging is diffused over several systems or services.

- No one has a complete view of the pipeline's health.

Cognitive Overheads

Engineers will spend more time managing tools than producing output.

In many cases, 30% - 40% of the Sprint Capacity is spent on maintenance work.

The Solution

Create a cohesive control plane:

- Centralized visibility

- Unified alerts

- Combined lineage tracking

Less:

- Switching context

- Time to debug

- Operational complexity

What High Performing Teams Do

- Collocate tools wherever useful

- Integrate systems where colocation isn’t viable

- Regularize workflows by teams

Critical Insight

Complexity is unavoidable. Fragmentation is not.

The Hidden Costs Teams Do Not Measure Until Too Late

Most companies track:

- Pipeline success rates

- Query performance

They fail to measure the actual costs.

1. Engineer Time Lost

Engineers spend:

30 to 40% of time responding to emergencies

Leading to less:

- Innovation

- Features

- Strategy

2. Data Trust Erosion

When data was considered unreliable.

Stakeholders withdraw their association from the data

Decisions return to an "intuition" based approach

The loss of confidence undermines your entire data business.

3. Compliance Risks

Undetected issues lead to:

- Incorrect Reporting

- Possibly Regulatory Violations

Such as finance, healthcare, etc.

4. Opportunity Cost

When it takes a longer time/ unreliable data.

You will end up:

- Sending decisions late

- Missing out on opportunities

Industry estimates indicate that high reliable data companies make decisions cite five times quicker than their competition.

Critical Insight

The most significant costs are not technical. They are business-related.

A Framework For Diagnosing Your Own Stack's Vulnerabilities

Before you attempt any resolution, conduct a well-structured diagnosis.

Step 1: Ask Yourself Following Critical Questions

- Are you able to trace a data point from end to end?

- Do you know who owns each critical pipeline?

- Are you able to detect problems before your stakeholders?

If you answered “no” to any of the 4 step process, you have systemic gaps.

Step Two - Complete a Self Assessment Framework

You should evaluate your systems across 3 dimensions -

1. Reliability

- Thoroughness of the pipeline success rates

- Frequency of incidents

2. Observability

- Speed of detection

- Visibility of root cause

3. Ownership

- Defined and clear accountability

- Meeting Service Level Agreements (SLAs)

Step Three: Prioritize Fixes

Your focus should be on:

- High impact pipelines

- Critical workflows for the business

Do not try to fix everything at once.

Step Four: Incremental Implementation

- Start with one domain

- Validate the improvements

- Work to grow in scale

How Logiciel Can Assist

Logiciel applies an AI-first approach to engineering that integrates:

- Observability

- Lineage

- Reliability metrics

This results in one cohesive system where all of the following occur:

- System issues are identified earlier

- The root cause of the issues is made visible

- Accountability is enforced

Conclusion

Keep in mind the following:

Data systems do not fail one issue. They fail due to systemic gaps.

The three most important takeaways:

- Visibility gaps cause delays

- Absence of observability and lineage = guesswork when debugging

- Lack of ownership prolongs incidents

Without clear lines of accountability, you will not achieve a reliable system.

It is also worth noting that tooling complexity creates invisible inefficiencies; thus, simplifying and unifying your systems will help reduce operational burden.

Fixing these problems does not require purchasing new tools, rather we must build cohesive, observable systems to achieve this end.

With a reliable data management infrastructure:

- Engineers can spend more time building

- Stakeholders can trust that the data is accurate

- Decisions can be made more quickly.

Why Audit-Ready Beats Audit-Survived Every Time

Inside a 120-day remediation that turned three material findings into zero at follow-up.

Call to Action

If your team is always fighting fires, it may be time for a reset through a structured approach.

Explore:

- How to Build a Data Infrastructure Roadmap: A Guide for Engineering Leaders

- How to Evaluate Data Infrastructure Vendors: 40 Questions You Should Be Asking

Or take it to the next level and:

👉 Request a no-cost infrastructure audit to identify your current gaps.

Logiciel Solutions helps engineering teams move from reactive fire-fighting to predictable, reliable data management.

Our AI-first engineering teams build infrastructure that increases:

- Reliability rating

- Visibility rating

- Speed-to-innovate rating

Let us help you fix root causes as opposed to simply fixing symptoms.

Frequently Asked Questions

Why does data management infrastructure frequently collapse?

Most failures are based on visibility gaps, lack of a defined owner and tooling complexity; compounded by massive growth this leads to frequent incidents that take longer to resolve.

What is the greatest challenge of data pipeline management?

The single-most greatest challenge with directing the pipeline is maintaining data reliability as the size of data pipelines creates and increases dependencies which creates more extensive issues that diminish the ability to resolve them without proper observability and lineage.

How can data observability create reliable data?

Data observability allows your team to quickly identify data-related issues, create improved visibility into their root causes and limit the adverse impact downstream.

What is the importance of data ownership in creating stable infrastructure?

Data ownership provides accountability therefore accurate ownership for each data set/pipeline will allow for each incident to be resolved in a timely manner thereby promoting the reliability of each system.

How do you reduce complexity within your modern data stack?

There are several ways to reduce complexity including: - Consolidating your tool sets as much as possible - Integrating your systems in the best possible method (using API's when possible) - Standardizing your workflow for maximum efficiency A consolidated control plane will also enhance the efficiency of your overall operation.