Three years ago, the decision made for an architecture was a good one; it allowed you to progress quickly. Pipelines were straightforward and each creator clearly owned their pipeline.

Today, that same architecture is costing your team 30% to 40% of their capacity to send sprints debugging broken pipelines, tracing data inconsistencies, and answering questions from your stakeholders such as, “How did you get that?”

This is the state of today’s modern data management infrastructure. As the system has grown, the complexity of managing our environment has accumulated, creating additional operational issues for us to solve.

For Staff Engineers and/or Principal Engineer Managers that build/manage data systems, you will

- Understand that Data Lineage is foundational today and no longer optional.

- Learn an actionable framework to implement data lineage at scale.

- Avoid common pitfalls that cause data engineering teams to slow down.

To understand how and why most teams are unsuccessful with data management at this stage of evolution, I will explain why they struggle before thinking about data lineage.

100 CTOs. Real Expectations

This report shows what actually predicts delivery success and what CTOs discover too late.

Why do so many teams have difficulty managing data today?

Most teams fail not because they do not have all of the tools, but rather because they do not have clearly defined process, responsibility, and visibility into these processes.

In the early stages of evolution, data systems evolve naturally:

- Pipelines get built quickly.

- Transformation occurs in a notebook/script.

- Ownership is implied.

This is effective until it is no longer effective.

Once your data platform starts to grow and scale, there are many challenges.

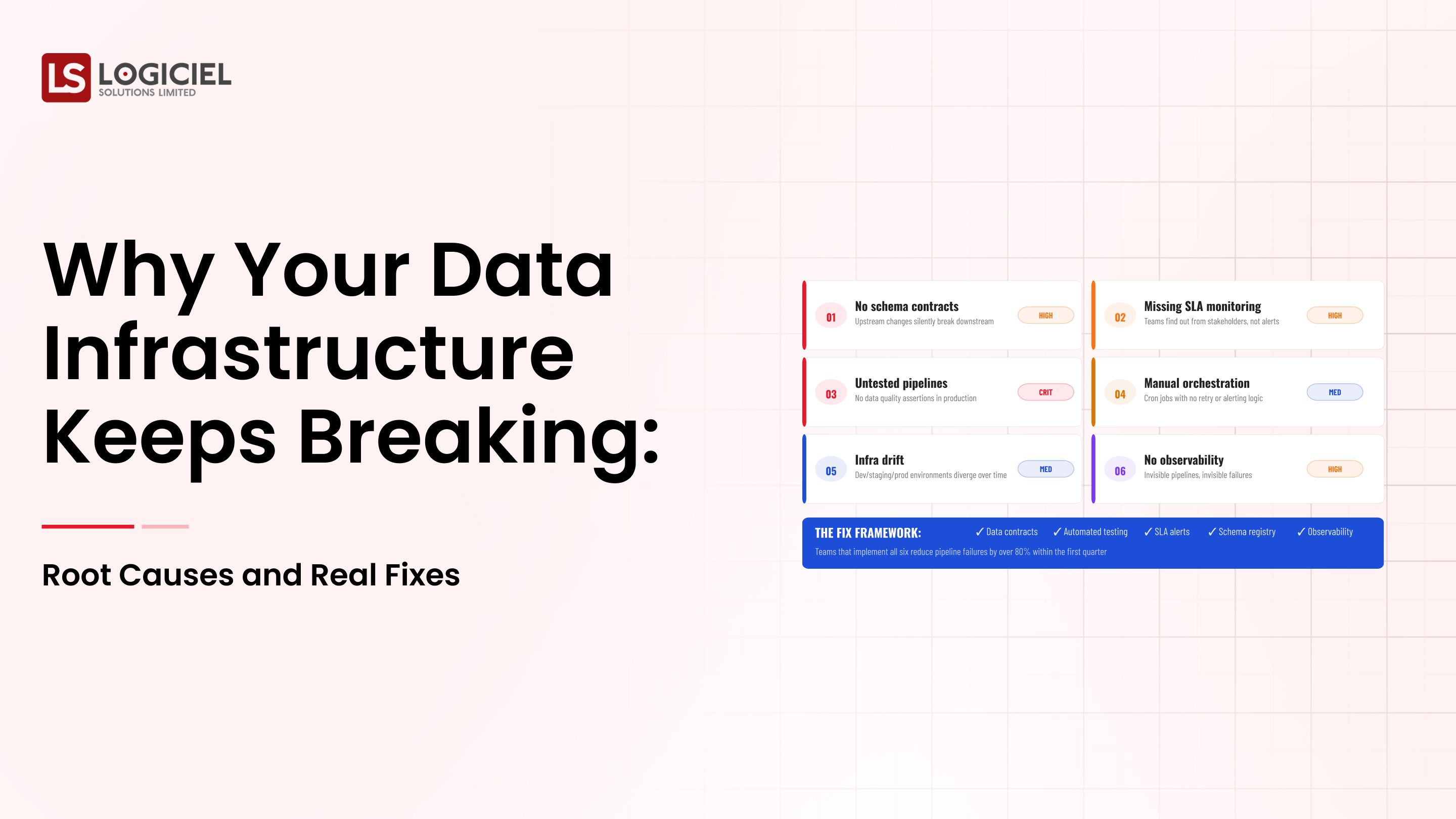

1. Each solution created for each pipeline contributes to fragmentation across multiple pipelines as each created solution solves a localized problem.

Your data pipeline ecosystem is becoming increasingly hard to comprehend over the span of time.

2. There Is No Clear Ownership Model.

When you experience a break in your pipeline, your team has questions:

- Who owns this dataset?

- Who has responsibility for fixing it?

Not having the answer to either question, means your teams will have significantly increased mean time to resolution.

3. There Is No Data Observability.

Most of the time your team learns about issues after stakeholders reach out and let them know. By this time, you've already damaged some trust.

It Will Be More Difficult In 2026

Modern data systems are:

- Real-time or close to real-time

- Powering AI and ML systems

- Integrated across multiple tools and platforms.

This means:

- More places where dependencies exist

- More points of failure

- Increased expectations on data reliability

What Success Looks Like

A mature engineering team can do the following:

- Know where every dataset comes from

- Trace the transformations of that dataset instantaneously

- Detect issues before they have an impact on the business

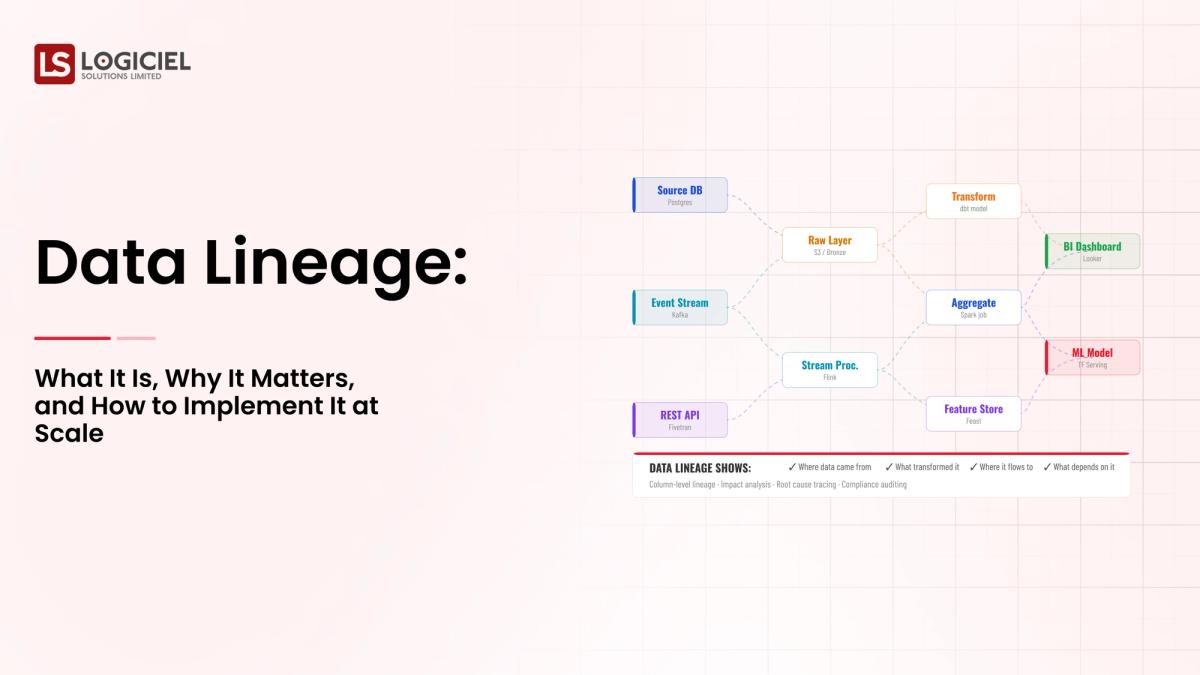

This is why having data lineage is critically important. It provides an additional visibility layer that many teams currently don’t have.

Prerequisite: What You Must Have Before Getting Started

There are several foundational components to your data management infrastructure that you must have in place before you implement data lineage.

If you skip these foundational components, you will end up with a lineage system that is incomplete or unusable.

1. A Clear Ownership Model

Every dataset and pipeline needs:

- An owner’s name

- Clearly defined responsibilities

- Established escalation paths

Without an established ownership model in place, lineage turns into another documentation without accountability.

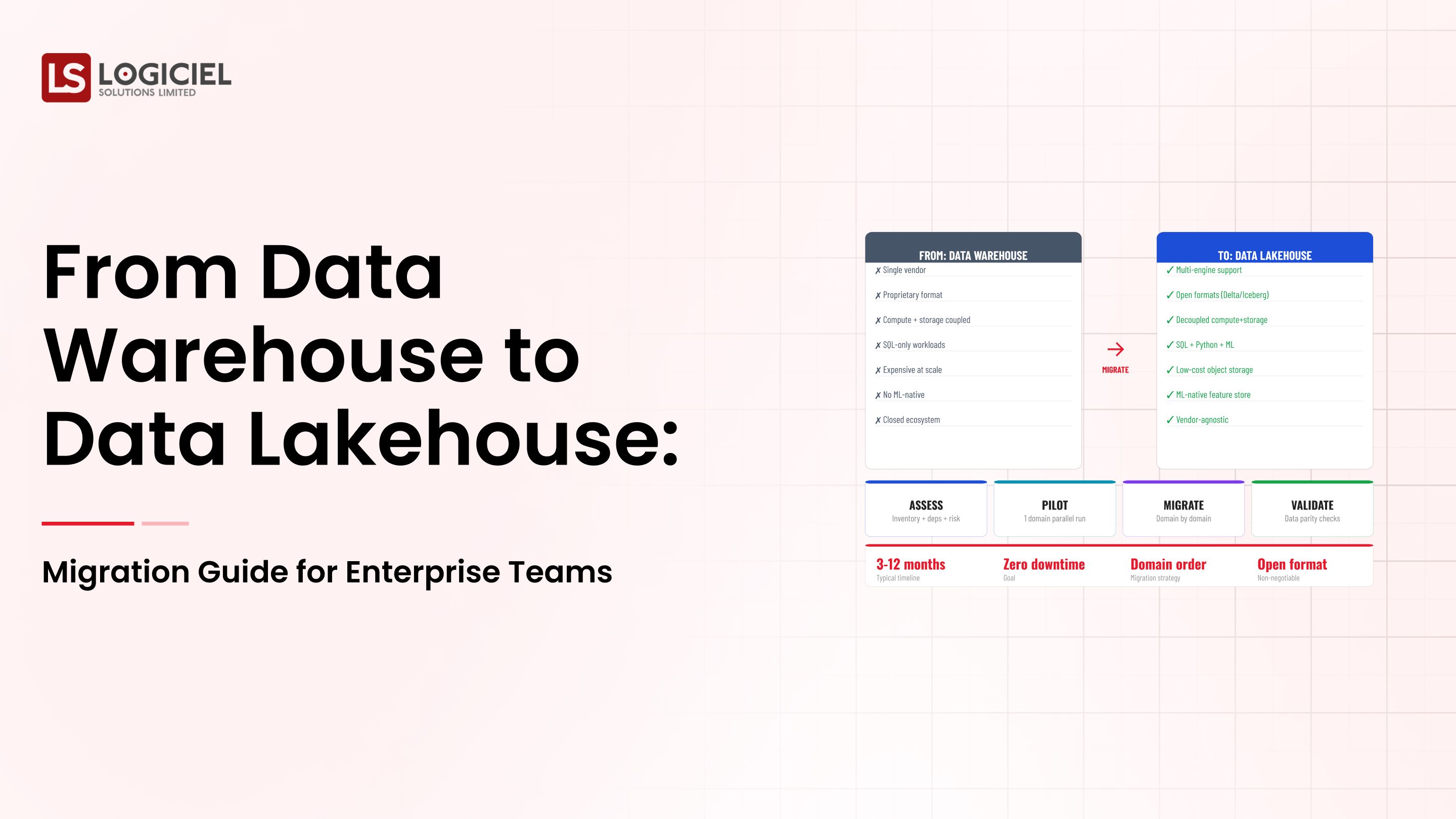

2. A Baseline Stack

Your technical stack does not have to be perfect, but it should have:

- A functioning data pipeline orchestration layer

- Centralized storage (data warehouse or data lake)

- Basic logging or monitoring

Lineage will be built on top of existing systems and not replace existing systems.

3. A Data Contract/Schemas

One of the biggest ways to break your environment is through schema changes.

Data Contracts:

- Upstream providers define their expectations.

- Downstream consumers are protected.

Reduces silent failures in your data engineering workflows.

4. Alignment among Stakeholders

Lineage is not just an engineering concern…

- Analytics teams

- Product teams

- Compliance teams

You must define:

- Why lineage is important

- What success will look like

- How it will be utilized

5. Defining Success Metrics

Prior to implementing anything, you should determine:

- What problems will you be solving?

- What metrics will you improve?

Some examples of success metrics include:

- Time to resolve incidents has decreased

- Increased trust in the data

- Faster onboarding of new engineers

If you do not define success metrics, then lineage will just be a "nice to have" instead of a strategic asset.

Phase 1: Evaluate your Current State

Before you can build anything, you need to have a clear understanding of what exists now.

Oftentimes, this step is rushed and that is a problem.

Step 1: Perform An Audit of Your Data Ecos

Grouping High Impact, Low Consequence Changes into 2 Categories

- Quick Wins: High Impact—Low Effort

- Strategic Initiative: High Impact—Longer Timeline

Quick Win: Introduce Logging In Critical Pipelines

Strategic Initiative: Create End to End Lineage Tracking

This type of priority creates momentum while paving the way for planning improvements in the long term.

Phase 2: Create A Target Architecture

Once you determine your existing state, you can move to designing the future state solution.

Most teams tend to go to the same tool. Do Not Do This!

1. Define Architecture Principles

Your architecture must follow defined architectural design principles.

1. Observability First - Visibility is built-in; it is not added after the fact

2. Modular - Pipelines are separate and can be tested independently.

3. scalable - The system will grow without having to redesign

4. Redundancy - Failures are expected and will be accounted for.

These principles will drive each design decision.

2. Select Components Purposefully

In your Data Platform, you will have:

- Ingestion Layer

- Storage Layer

- Transformation Layer

- Orchestration Layer

- Observability/Lineage Layer

Select tools based on:

- The fit for your use case

- When the tool was introduced

- The level of complexity in operation

3. Design For Lineage From Day One

The purpose of having lineage is to capture:

- Sources of data.

- Data transformations.

- Dependencies.

- Usage.

Having full lineage will enable:

- Faster resolution of debugging

- Impact analysis

- Compliance tracking

4. Build Observability into The System

The following aspects are involved:

- Monitoring Pipeline Health.

- Tracking Data Freshness.

- Tracking Errors.

Industry Metrics Demonstrated A 50% Reduction In Resolution Time Through Strong Observability.

5. Document Assumptions

Every architectural decision carries trade-offs.

At a minimum, you must document:

- Why The Decision Was Made

- What Limitations Existed

- What Can Change

These should be reviewed at least once quarterly. The system will evolve, and your assumptions should also evolve.

Phase 3: Develop, Test, And Release Incrementally

The single biggest mistake that teams make is attempting to complete Try an incremental approach.

1. Start with one of the following high-impact domains:

a. revenue data

b. product usage data/analytics

c. customer data

This will help you:

- validate your approach

- identify gaps early on

- establish patterns that can be reused across domains

2. When migrating, run parallel pipelines (i.e. run your legacy pipelines while you build new ones).

This will help you:

- ensure no data will be lost during the migration (or “cutover”)

- have an easy way to validate the results of the new pipeline implementation against the old pipeline

- have the ability to safely “roll back” to the old pipeline should issues arise after the migration is complete

3. Build automated tests for your pipelines.

Some tests that each pipeline should include are:

- schema validation

- validation of data quality

- validation of transformation logic applied to incoming data

Automated tests eliminate the need for much manual debugging.

4. Instrument everything.

You should track the following performance metrics for each of your pipelines:

- latency

- error rates

- freshness (i.e., how recently data was received)

- success rates for the pipeline

You will use these metrics to populate your data observability tools.

5. Scale gradually.

Once you have migrated successfully to one domain and have established a baseline of performance for your pipeline(s):

- replicate patterns used in migrating to the first domain

- standardize processes across all domains

- expand your coverage of the remaining domains in your data management portfolio

This will ensure that the growth of the data management infrastructure remains sustainable.

How to Measure Success and Iterate

Implementing your new data management infrastructure is only the first step; True success is measured through ongoing measurement and iterations of the project.

1. Define service-level objectives (SLOs) early in the migration process.

Some examples include:

- the up-time of the pipeline should be 99.9%

- the maximum allowable lag time for data freshness should not exceed 5 minutes

- the maximum allowable error rate for any data received from a pipeline shall not exceed 0.1%.

2. Create a dashboard that will be used by your stakeholders

Your dashboard should be easy to read and understand, even for non-technical stakeholders.

3. Schedule a Retrospective for every month

Your focus should be on:

- what failures occurred during the month

- why the failures occurred

- what corrective action to take

This will foster a culture of continuous improvement within your organization.

4. Measure the impact that your data management infrastructure has on your organization

You will want to measure:

- Reduced downtime

- Improved decision-making speed

- Increased data usage/adoption among users

Use Intelligent Systems as a Tool

Modern day software solutions are integrating the following into their solutions:

- Observability

- Lineage

- Reliability metrics

This allows you to reduce the amount of work performed manually and gain a single view of your entire System.

Final Thoughts

Building a new data management infrastructure that can handle future growth is not only a technical challenge but an organisational challenge as well.

3 Key Takeaways

- Data Lineage is the foundation for all things Data.

- Without Data Lineage it becomes nearly impossible to debug and trust the data is good before you make a decision.

- Everything starts with having an Understanding of what Data you own.

- Tools alone will not resolve a systemic issue.

- Use clear metrics and incrementally scale your Data Systems.

- Sustainable Systems develop Over Time by Building in Small Steps.

- Building a New Data Management Infrastructure is an Evolving Process.

Once you do this successfully, you will have the ability to do the following:

- Make quicker Decisions

- Deliver Products and Features Faster

- Build Trust Between Teams

AI – Powered Product Development Playbook

How AI-first startups build MVPs faster, ship quicker, & impress investors without big teams.

Call to Action

If you are considering the current state of your Data Management Infrastructure we recommend that you use a structured process to do so.

Please download our Internal Checklist or read some of the relevant articles we have posted such as:

- Why Your Data Management Infrastructure is Failing: Root Causes and Real Solutions

- How to Create a Data Roadmap for Your Organisation: A Framework for Engineering Leadership

At Logiciel Solutions we help Technology Leaders make the transition from Reactive Data Systems to AI first, Reliable Infrastructure.

Our Engineers Design and Build Solutions for your Data Systems that improve Data Reliability, Observability, and Scalability while helping accelerate your Product Delivery.

If you are interested in having us help you Build a Resilient Data Foundation please let us know.

Frequently Asked Questions

What is Data Lineage in Simple Terms?

Lineage provides visibility into the movement of data from one point to another (Source to Destination). Lineage will tell you how data was changed and who used it. This provides insight into how to debug when things do go wrong, ensures compliance, and builds trust in Data Management Solutions.

Why is Data Lineage Important for New Data Management Infrastructures?

As systems grow and the flow of data become more complicated, a lack of lineage makes it difficult for teams to identify where problems are and where they are dependant upon other systems. Lineage provides additional reliability and speed which will improve resolution of incidents, and ultimately support better decision making.

How Does Data Lineage Assist with the Observability of Data?

Lineage gives context to Observability. The observability of data allows a company to identify that a data element has failed, while lineage allows a company to identify how and why it failed and how it will impact Down Stream products.

What are Typical Barriers to Implementing Data Lineage?

Typical barriers include: - Lack of Ownership - Inconsistent Documentation - Disparate Tools - Legacy Systems that Lack Visibility

Can Small Teams Benefit From Data Lineage Solutions?

Absolutely. Small teams can benefit from the early implementation of Data Lineage Solutions. This will help to minimise complexity in the future, make On-boarding new employees easier, and provide a Data Management Infrastructure that can support continued Growth without becoming Difficult to Manage.