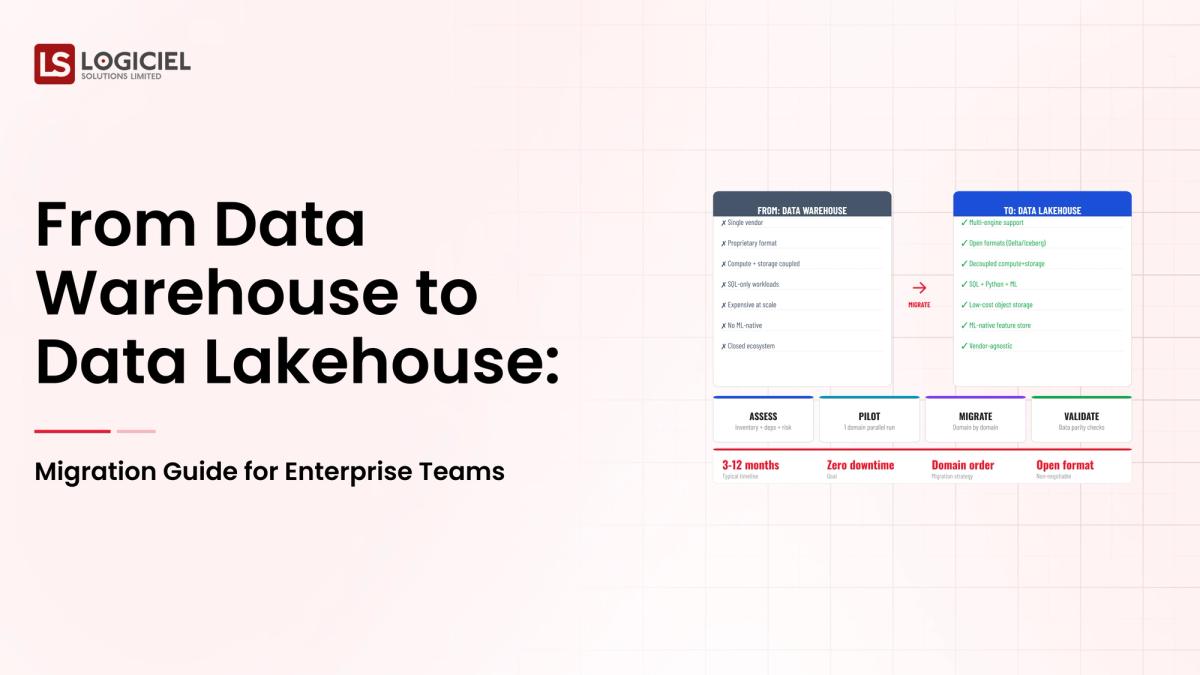

Three years ago, you decided that a Data Warehouse was the right choice for your business.

The Data Warehouse centralized reporting, supported analytics, and provided a single source of truth for your organization.

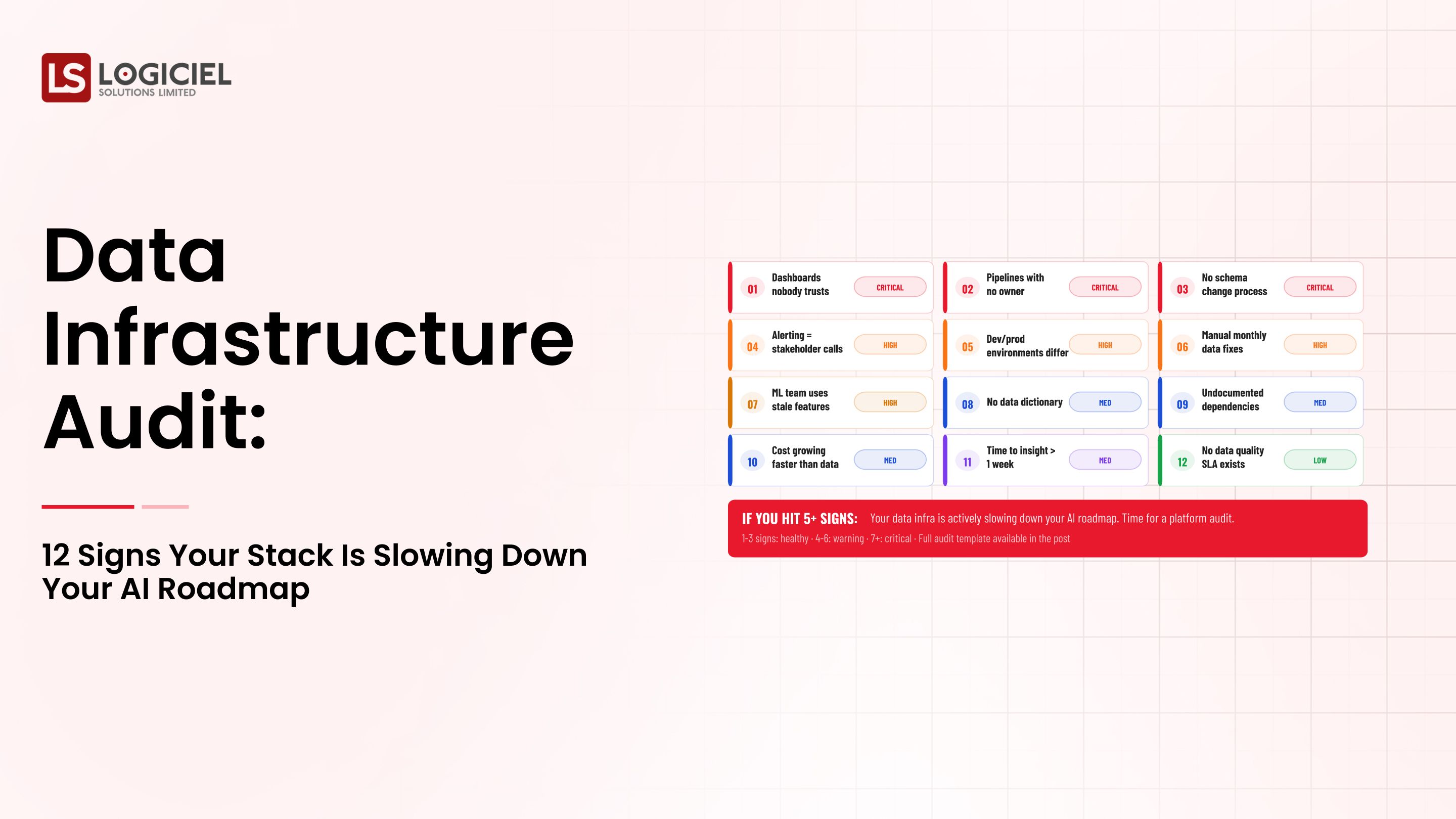

However, today your Data Warehouse is becoming a bottleneck for your organization.

High storage costs, difficulty supporting real-time use cases, and AI workloads that exceed its architectural limits are forcing the Enterprise Data Architecture to evolve.

As a Staff or Principal Engineer responsible for modernizing the Enterprise Data Architecture, you will find that moving from a Data Warehouse to a Data Lakehouse is more than a solid upgrade; it is becoming the standard for teams that need scale, flexibility, and AI readiness.

This guide will:

- Cover why traditional Data Warehouses are reaching their limitations

- Outline how to assess your current architecture to prepare for a Data Lakehouse migration

- Provide a step-by-step plan for migrating to a Data Lakehouse without interrupting existing systems

Let’s begin with why many teams struggle to evolve their Enterprise Data Architecture.

Section 1: Reasons Why Many Teams Are Unable to Evolve Their Enterprise Data Architecture

The challenge isn’t recognizing that transformation needs to occur; it’s executing that transformation correctly.

Most teams approach the migration by:

- Adding a data lake next to a warehouse

- Moving a few pipelines

- Trying to keep their legacy system alive

This results in more complexity, duplicate data, and confused teams.

In 2026, enterprise data architecture will only grow more complex, because modern systems must support:

- Structured and unstructured data

- Real-time processing, as well as batch processing

- AI and ML workloads

- New compliance and regulatory requirements

Thus, architectural decisions will become even more complicated.

The limitations of legacy systems (hard-coded transformations, fixed schemas, high storage costs) will become more evident as your organization scales.

For a Staff or Principal Engineer, success means:

- Having one platform to support all data types

- Lower overall storage and compute costs

- The ability to run real-time and AI workloads

- A streamlined and simplified architecture

A good example of this type of migration issue is when your ML team requires access to raw data.

You will likely run into the following issues:

- The warehouse only stores processed data

- Raw data is in other locations

- Pipelines will have to be rebuilt

This is not a tooling issue but an architectural limitation.

Section 2: Prerequisites: What You Need to Do Before Migrating

Before you start migrating your current data architecture to a new system, put several fundamentals in place:

1. Clearly Define Ownership

Every dataset and pipeline must have:

- A named owner

- Clearly defined responsibilities

- A written SLA (service level agreement) between the data owner and the pipeline owner

2. Stabilize Your Existing Environment

This means:

- Repairing broken pipelines

- Resolving data consistency problems

- Writing missing documentation

Migrating an unstable, broken, or inconsistent environment adds risk to your project.

3. Establish Data Contracts

Data contracts help by:

- Keeping your schema consistent

- Identifying upcoming changes early

- Reducing pipeline breakage
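As a rough illustration of what a data contract can look like in code, the sketch below checks a single record against a declared list of fields. The `ContractField` class, the `ORDERS_CONTRACT` definition, and the field names are hypothetical and only serve the example; a real team would more likely rely on a schema registry or a validation library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContractField:
    name: str
    type: type
    required: bool = True


# Hypothetical contract for an "orders" dataset; field names are illustrative.
ORDERS_CONTRACT = [
    ContractField("order_id", str),
    ContractField("amount", float),
    ContractField("coupon_code", str, required=False),
]


def violations(record: dict, contract: list[ContractField]) -> list[str]:
    """Return human-readable contract violations for one record."""
    problems = []
    for field in contract:
        value = record.get(field.name)
        if value is None:
            # Absent or null: only a problem when the field is required.
            if field.required:
                problems.append(f"missing required field: {field.name}")
            continue
        if not isinstance(value, field.type):
            problems.append(
                f"{field.name}: expected {field.type.__name__}, "
                f"got {type(value).__name__}"
            )
    # Fields the contract does not know about signal an unannounced change.
    known = {field.name for field in contract}
    for extra in sorted(set(record) - known):
        problems.append(f"unexpected field: {extra}")
    return problems
```

Running a check like this at the pipeline boundary turns a silent schema drift into an explicit, reviewable failure.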

4. Gain Stakeholder Alignment

Ensure you have buy-in from all involved parties (e.g., Engineering, Analytics, and Business teams) on:

- Why you are migrating

- What the expected outcome will be

5. Define Success Metrics

- Cost Reduction

- Improvement in Performance

- Improvement in Data Access

6. Secure Resources for Migration

The migration needs dedicated engineering capacity and infrastructure investment, and the new platform must run in parallel with your existing systems.

Section 3: Phase 1: Determine Your Starting Point

The first step in designing your future lakehouse is to determine where you are today.

1. Inventory Your Data Systems

Make sure you document your existing data environment. The critical information to capture includes:

- Data Sources

- Pipelines

- Storage Systems

- Business Intelligence Tools

2. Identify Your Most Important Workloads

Focus first on the workloads your business depends on most. Examples include:

- Revenue Generating Data

- Customer-Facing Analytics

- AI / Machine Learning Pipelines

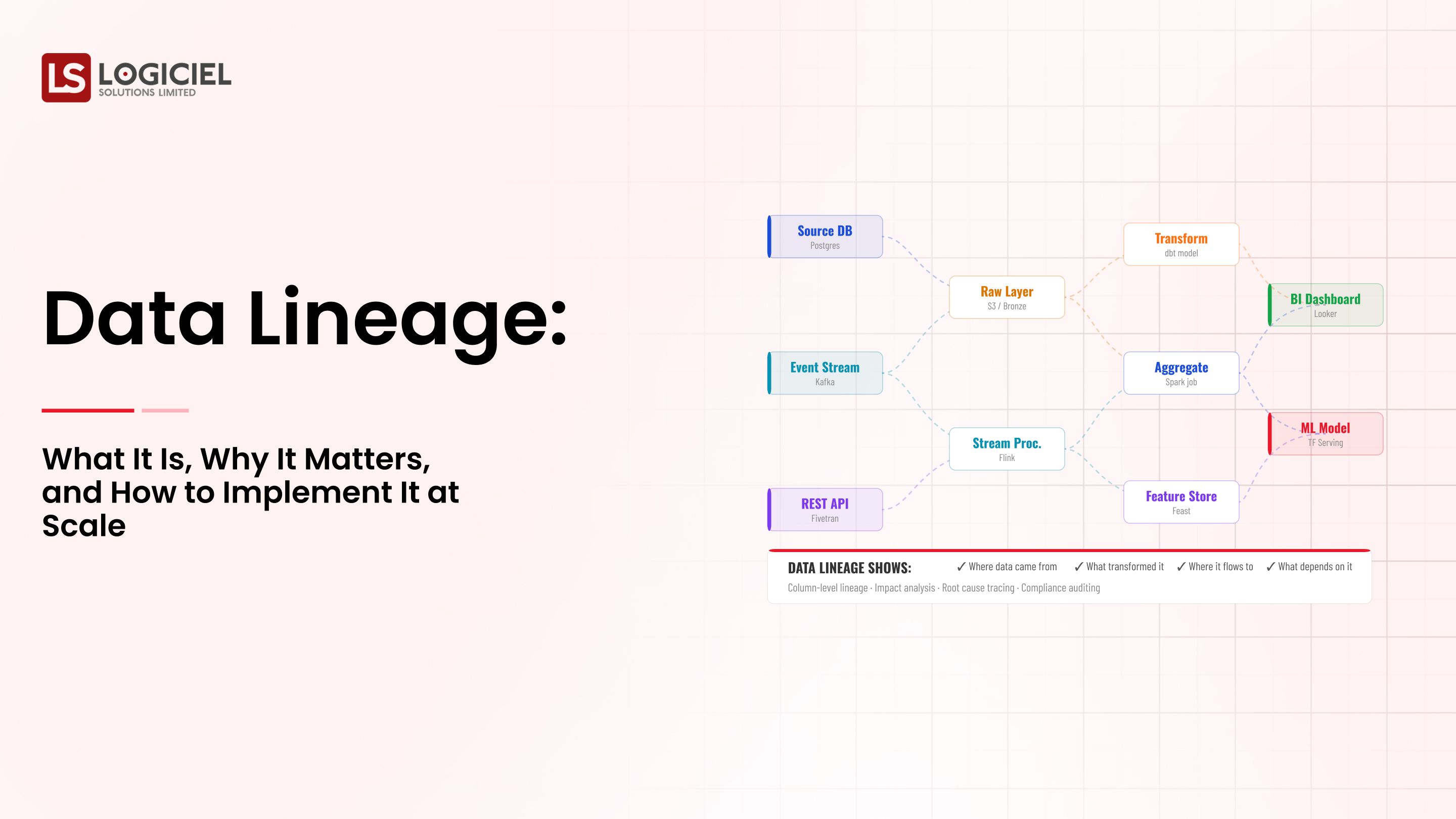

3. Map Your Data Flows

For the data you collect, make sure you understand:

- How data moves

- Where data transformations take place

- Who the ultimate end users of that data will be
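One lightweight way to capture a data flow map is as a directed graph, which then answers the question "who is affected if this source changes?" The sketch below assumes a hand-maintained dictionary; the `FLOWS` contents and node names are hypothetical, and in practice the edges would come from your orchestrator or lineage tool.

```python
from collections import deque

# Hypothetical flow map: each key feeds the nodes listed as its value.
FLOWS = {
    "crm_db": ["raw_customers"],
    "raw_customers": ["dim_customer"],
    "dim_customer": ["revenue_dashboard", "churn_model"],
    "billing_api": ["raw_invoices"],
    "raw_invoices": ["revenue_dashboard"],
}


def downstream(node: str, flows: dict[str, list[str]]) -> list[str]:
    """Return every node reachable from `node`, in breadth-first order."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque(flows.get(node, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue  # already visited via another path
        seen.add(current)
        order.append(current)
        queue.extend(flows.get(current, []))
    return order
```

For example, `downstream("crm_db", FLOWS)` lists the tables, dashboard, and model that depend on the CRM database, which is the set of consumers to notify before migrating it.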

4. Identify Your Bottlenecks

Common issues that can cause bottlenecks are:

- High data storage costs

- Slow execution of data queries

- Limited data flexibility

5. Assess Your Current Service Level Agreements

When determining the risks associated with your current data systems, ask yourself:

- What level of reliability does my current data environment offer?

- How often am I experiencing system failures?

- How long does it take me to resolve failures in my current data systems?
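These questions can be answered with a few numbers pulled from run history and incident records. The sketch below is a minimal example under assumed inputs: `INCIDENTS` is a hypothetical list of (started, resolved) timestamps, and the run counts would come from your scheduler.

```python
from datetime import datetime, timedelta

# Hypothetical incident log: (started, resolved) pairs for one pipeline.
INCIDENTS = [
    (datetime(2025, 1, 3, 2, 0), datetime(2025, 1, 3, 4, 0)),
    (datetime(2025, 1, 17, 9, 30), datetime(2025, 1, 17, 10, 30)),
]


def reliability_summary(incidents, total_runs: int, failed_runs: int) -> dict:
    """Summarize reliability, failure frequency, and time to resolve."""
    if total_runs <= 0:
        raise ValueError("total_runs must be positive")
    durations = [resolved - started for started, resolved in incidents]
    # Mean time to resolve; zero when there were no incidents.
    mttr = sum(durations, timedelta()) / len(durations) if durations else timedelta()
    return {
        "success_rate": (total_runs - failed_runs) / total_runs,
        "incident_count": len(incidents),
        "mean_time_to_resolve": mttr,
    }
```

Recording these figures before the migration gives you a baseline to compare the lakehouse against later.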

6. Prioritize Your Migration Plan

Once you've completed your assessment, divide your migration plan into two groups:

- Quick Wins

  - Low-Risk Data Sets

  - Data Pipelines That Are Isolated By Functionality

- Complex Migrations

  - Key Data Models

  - Data Sets Shared By More Than One Group
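The prioritization above can be sketched as a simple classification rule. This is an illustrative assumption about how the criteria combine, not a prescribed method: the `Dataset` fields and the `needs_review` fallback are hypothetical, and key models or shared data sets are treated as complex regardless of other attributes.

```python
from dataclasses import dataclass


@dataclass
class Dataset:
    name: str
    consumer_teams: int  # how many groups read this data set
    is_key_model: bool   # feeds core reporting models
    is_isolated: bool    # pipeline has no cross-domain dependencies


def migration_bucket(ds: Dataset) -> str:
    """Place a data set into a migration group."""
    # Key models and data shared by more than one group carry the most risk.
    if ds.is_key_model or ds.consumer_teams > 1:
        return "complex"
    # Isolated, single-consumer pipelines are the natural quick wins.
    if ds.is_isolated:
        return "quick_win"
    # Anything else needs a human decision.
    return "needs_review"
```

Applying a rule like this to the inventory from step 1 produces a first-draft migration order that the team can then adjust by hand.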

You Are Now Prepared!

By going through this process, you now have:

- A high-level view of your data system

- A prioritized migration list

Section 4: Phase 2: Designing Your Target Architecture

Based on your previous work, you are now ready to create your lakehouse architecture.

1. Establish Architecture Principles

Your organization's data architecture should follow these principles:

- Unified

- Scalable

- Flexible

- Observable

2. Understanding the Lakehouse Architecture

A lakehouse combines:

- The flexibility of a data lake

- The performance of a data warehouse

This allows you to:

- Store both raw and processed data

- Query the data efficiently

- Support AI workloads

3. Choosing Your Components Wisely

Common components of a lakehouse will include:

- An Ingestion Layer

- A Storage Layer (Object Storage and/or Tabular Storage)

- A Processing Layer

4. Design for Multiple Workloads

- Business Intelligence Reporting

- Real-Time Data Analytics

- Machine Learning

5. Build Observability Early

- Pipeline Monitoring

- Data Freshness

- Data Lineage

6. Create Data Contracts

- Schema Stability

- Version Control

- Controlled Modification

Section 5: Phase 3: Build, Test, and Deploy Incrementally

Execution is the key to success.

1. Start With One Domain

- Choose a Non-Critical Use Case

- Select a Reasonably Sized Data Set

2. Run Both Systems In Parallel

- Warehouse

- Lakehouse

Validate the lakehouse output against the warehouse while both are running.
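One way to validate a parallel run is to compare an order-independent fingerprint of the same table from both systems. The sketch below assumes rows have already been fetched as tuples; the function names are hypothetical, and hashing `repr(row)` is a simplification that treats values such as `1` and `1.0` as different, so types must be normalized before comparing.

```python
import hashlib


def table_fingerprint(rows: list[tuple]) -> tuple[int, str]:
    """Return (row_count, digest); the digest ignores row order."""
    # Hash each row, then sort the hashes so ordering does not matter.
    digests = sorted(
        hashlib.sha256(repr(row).encode("utf-8")).hexdigest() for row in rows
    )
    combined = hashlib.sha256("".join(digests).encode("utf-8")).hexdigest()
    return len(rows), combined


def outputs_match(warehouse_rows: list[tuple], lakehouse_rows: list[tuple]) -> bool:
    """True when both systems returned the same multiset of rows."""
    return table_fingerprint(warehouse_rows) == table_fingerprint(lakehouse_rows)
```

For large tables the same idea is usually pushed down into SQL (row counts plus per-column aggregates) rather than pulling every row into Python.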

3. Automate All Test Cases

- Schema Validation

- Data Quality Expectations

- Transformation Verification

4. Get Stakeholder Validation

Before completing the transition:

- Compare Outputs

- Obtain Sign-Off

5. Scale Up After Validation

- Migrate Further Workloads

- Optimize Performance

6. Decommission Legacy Systems

When all migrations have been completed:

- Retire Outdated Pipelines

- Reduce Costs

Key Insight

Migration is about building trust and confidence, not about speed.

Section 6: Measuring Success and Iterating

Once you have gone live, you must decide what to measure.

1. Service Level Objectives

- Up-Time

- Performance

- Data Freshness
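A data freshness objective is straightforward to express in code. The sketch below is a minimal example, assuming you can read each table's last load timestamp; the function names, the one-hour threshold in the usage note, and the idea of sampling ages at check time are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_fresh(
    last_loaded_at: datetime,
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> bool:
    """True when the data was loaded within the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at <= max_age


def slo_compliance(observed_ages: list[timedelta], max_age: timedelta) -> float:
    """Fraction of freshness checks that met the objective."""
    if not observed_ages:
        raise ValueError("observed_ages must not be empty")
    return sum(age <= max_age for age in observed_ages) / len(observed_ages)
```

With a one-hour objective, for example, `slo_compliance` over a month of checks gives a single number to track against the target you agreed with stakeholders.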

2. Measure Cost-Effectiveness

- Storage Cost

- Computation Cost

3. Measure Adoption Rate

- Utilization Of Lakehouse

- Warehouse Dependency Reduction

4. Hold Regular Reviews

For the first 3 months:

- Weekly Check-In With Engineering

- Monthly Check-In With Stakeholders

5. Monitor Key Indicators

- Incident Rate

- SLA Compliance

- Performance Improvement

Call to Action

Moving from warehouse to lakehouse is not just a technology upgrade; it is a true evolution of the Enterprise Data Architecture.

Successful teams:

- Plan ahead

- Develop incrementally

- Build reliability

Logiciel supports engineering teams in creating and migrating to a new data architecture for analytics, AI, and real-time processing.

Is your warehouse a bottleneck?

If so, it is time to evolve your data architecture.

Learn how Logiciel’s AI-based engineering teams can help you transition from a warehouse to an efficient, scalable, future-ready data architecture.

Frequently Asked Questions

What Is A Lakehouse?

A data lakehouse combines the flexibility of a data lake with the speed and structure of a data warehouse, allowing unified analytics and AI workloads.

Why Are Companies Moving From Warehouses To Lakehouses?

Companies move to lakehouses for:

- Lower storage costs

- Data format flexibility

- Support for AI and real-time use cases

How Long Will Migration Take?

Expect 8 to 12 weeks for the initial migration of each domain, and several months for a full migration across the organization.

What Is The Risk of Migrating?

The largest risk is disrupting existing systems and losing stakeholder trust through inconsistent data.