Three years ago, you and your team made a good choice.

You built pipelines quickly, focused on speed, and delivered dashboards that performed “well enough”.

This same good choice has now resulted in a loss of 30-40% of your sprint capacity.

AI Velocity Blueprint

Measure and multiply engineering velocity using AI-powered diagnostics and sprint-aligned teams.

Pipelines break whenever schemas change, leading to downstream systems that fail silently, and engineers who are spending hours of effort finding problems in systems that were never designed for scaling.

These are the hidden costs associated with inadequate data infrastructure design.

If you are a Staff or Principal Engineer with the responsibility of building or evolving data systems, this document will help you:

- Understand what modern data infrastructure design really entails

- Understand the necessity for data contracts as a missing element in the engineering of reliable systems

- Implement a design methodology that scales without continual fire-fighting.

At scale, reliability is not optional; it is engineered.

What is Data Infrastructure Design? A Plain-English Definition

At its most basic level, data infrastructure design is your reference guide for how data moves and transforms and is used throughout your organization.

An Easy Way to Think About This is to Compare Data Infrastructure Design to the Design of a Transportation System

Analogously:

- Roads = data pipelines

- Vehicles = data packets

- Traffic Laws = data contracts

- Destinations = dashboards, APIs, ML models

Without traffic laws, vehicles continue to flow, albeit in an unpredictable manner; however, with traffic laws, your organization’s systems can scale in a redundant fashion because of the predictability of the vehicles.

Data Infrastructure Design Core Components

Components Function

- Ingestion Gets Model

- Storage Keeps Model

- Processing Converts Model

- Orchestration Controls Work and Works

- Reliability (Data Contracts) Ensure Consistent Success and Avoid Changes That Could Hurt Performance

How it Fixes It:

We have not had reliable Engineering Practices therefore;

- Pipelines Fail without Warning

- Schema Changes Are Silently Propagating Through The Entire System Without Documentation or Communication

- Time Spent Trying To Fix/Debug Issues

With Our Data Infrastructure Design Engineering Practices;

- Data Flows Consistently and Predictably Throughout Systems

- Systems Have Flexibility and Resilience To Change

- Engineers Can Work Fast And Confidently

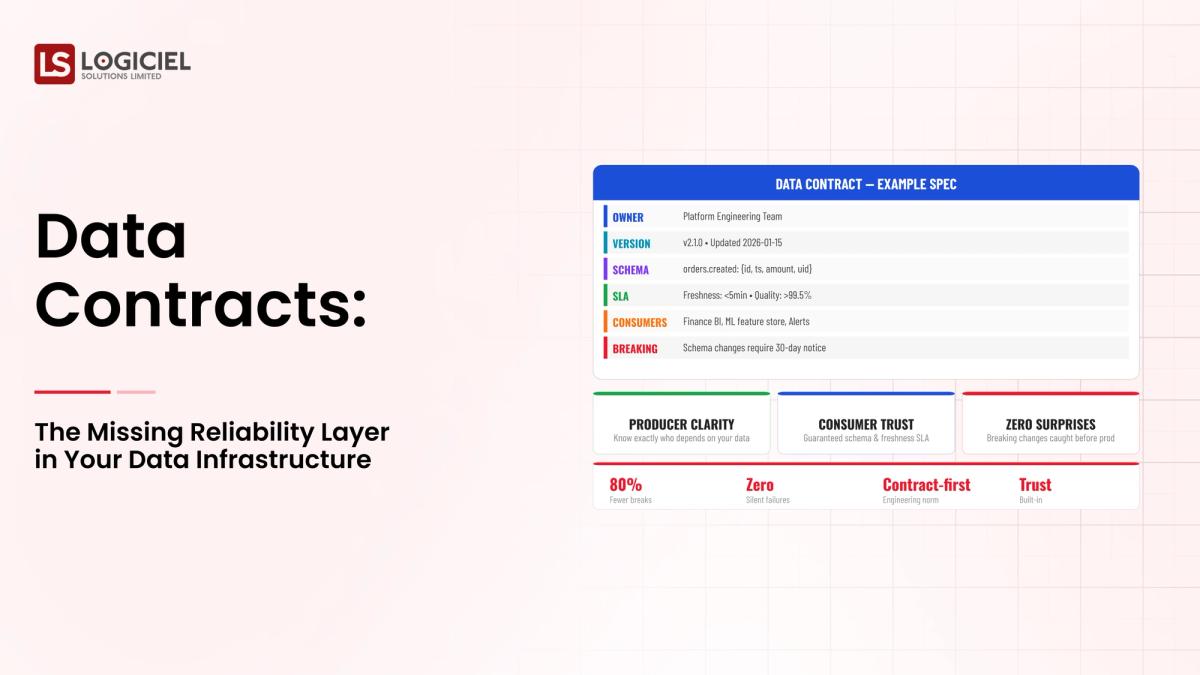

The Role of Data Contracts Can Be Defined As Follows:

- The Schema You Should Expect

- The Format of The Data

- The Quality You Are Expecting

They Are the Framework That Communications Provide The Producers and Consumers of Data.

Insight:

Data Infrastructure Design Is Not Just About The Architecture And Infrastructure Design Is About Creating A Reliable Infrastructure At Every Point In The Data Stack.

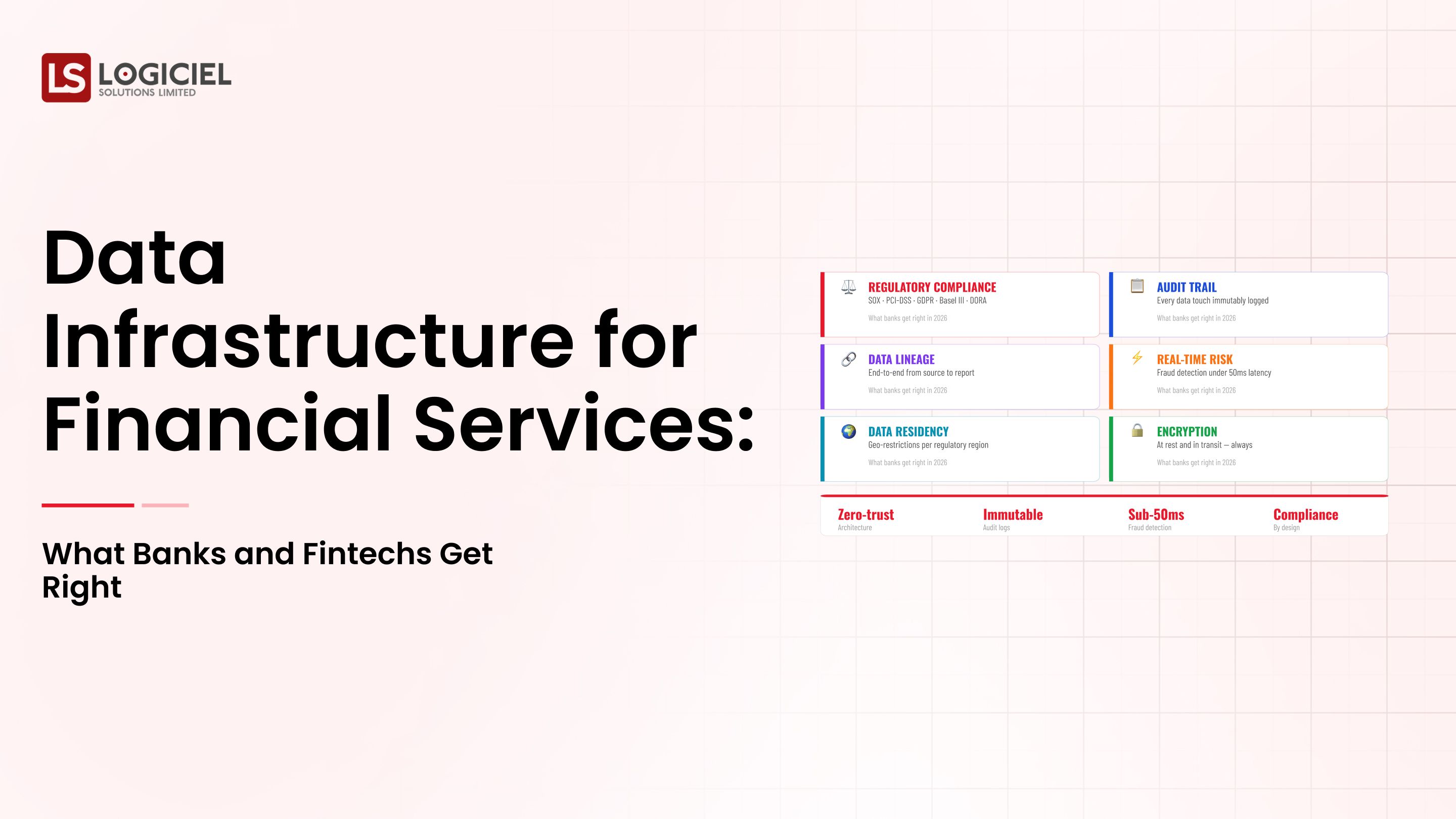

Why Data Infrastructure Design Is Becoming More Important As Of 2026

The Pressure For Data Infrastructure Design Is Greater Than Ever Before.

1) AI Systems Are Quality Dependent

Modern AI Systems Need;

- Real-Time Data

- Consistent Features

- Reliable Data Pipelines

A Small Deviation from Any Of These Conditions Can Have A Devastating Impact On The Production or Operations Of The Application;

- Model Failure

- Incorrect Predictions

2) Data Is Increasing By Volume And Complexity

By 2025 The Amount of Data In The World Will Exceed 180 Zettabytes (IDC)

As Data Grows;

- More Pipelines

- Many More Dependencies

- Many More Points of Failure

3) The Cost of A Failure Is Greater

The Impact of A Failure Is;

- Revenue Loss

- Customer Experience

- Legal and Regulatory Compliance Ability To Follow RulesLost Time Due to Inefficient Data Infrastructure

If properly designed data infrastructures aren't in place for an organization, engineers will be spending 30-40% of their time debugging, development velocity will slow and innovation will stall.

Illustrating Before and After Cases

Before (Poor Design):

- Frequent pipeline failures

- No ability to debug proactively

- Data is considered untrustworthy

After (Good Design plus Contracts):

- Predictable pipeline function

- Fast debugging time

- Data is trusted

Takeaway

Predictable behavior of data is more important to a modern system than just being able to scale infrastructure.

Key Data Infrastructure Design Elements: What Are You Building?

It’s important to understand your system before designing it.

1. Ingestion Layer

Gathers incoming data from:

- APIs

- Databases

- Event Stream Data

Requirements for this layer:

- Reliability

- Schema Validation

2. Storage Layer

Contains:

- Data Lake

- Data Warehouse

Supports:

- Both structured and unstructured data store.

3. Processing Layer

Accomplishes:

- Transform the data

- Aggregate the data

- Feature engineering

This is where most complexity occurs.

4. Orchestration Layer

Manages:

- Workflow scheduling

- Dependencies between working pieces

- Retries of failed tasks

5. Reliability Layer (Data Contracts)

Provides:

- Schema Validation

- Controlled Change

- Early Detection of Failures

How the Various Components Work Together

- Data is ingested into a data lake or warehouse

- Centrally stored and processed into something usable

- Contracts ensure that data is validated

What's Included with Data Infrastructure vs. What's Not Included

Included:

- Data Pipelines

- Data Platforms

- Observability Systems

Not Included:

- Business logic

- Application Level Features

Takeaway

Reliability enforcement is the missing layer in the majority of data infrastructures, not the actual infrastructure itself.

How the Data Infrastructure Design Will Work In Reality: Step By Step Guide

Ingesting Data

- From web applications

- From mobile applications

Data Storage

- Stored in a data lake

Processing

- Cleansing

- Aggregating

- Creating features

Orchestrating Workflows

- Managing execution order

- Handling dependencies

How Data Is Delivered

- Dashboards

- ML models

Where Data Pipelines Can Break

- Schema changes breaking the pipelines

- Errors propagating through downstream systems

- Debugging is complicated and time-consuming

Example of a Data Pipeline

Data Source (Events) → Ingestion → Data Lake (Storage) → ETL (Processing) → Warehouse → Dashboard/ML Delivery

With Data Contracts

- Schema changes validated before changes occur

- Breaking changes blocked

- Teams notified immediately

Key Insight

Data contracts provide a means for preventing silent failures and turning them into visible and actionable failure events.

Data Infrastructure Design Mistakes

1. Over-Engineering Too Early

- Complexity

- Longer development time

2. Underinvesting in Observability

- Problems go unnoticed

- Debugging becomes reactive

3. Skipping Data Contracts

- Schema changes break pipelines

- No early warning for teams

4. Treating Infrastructure as Static

- Systems become obsolete

Key Insight

Most of the infrastructure failures are the result of poor design and processes, rather than being technical failures.

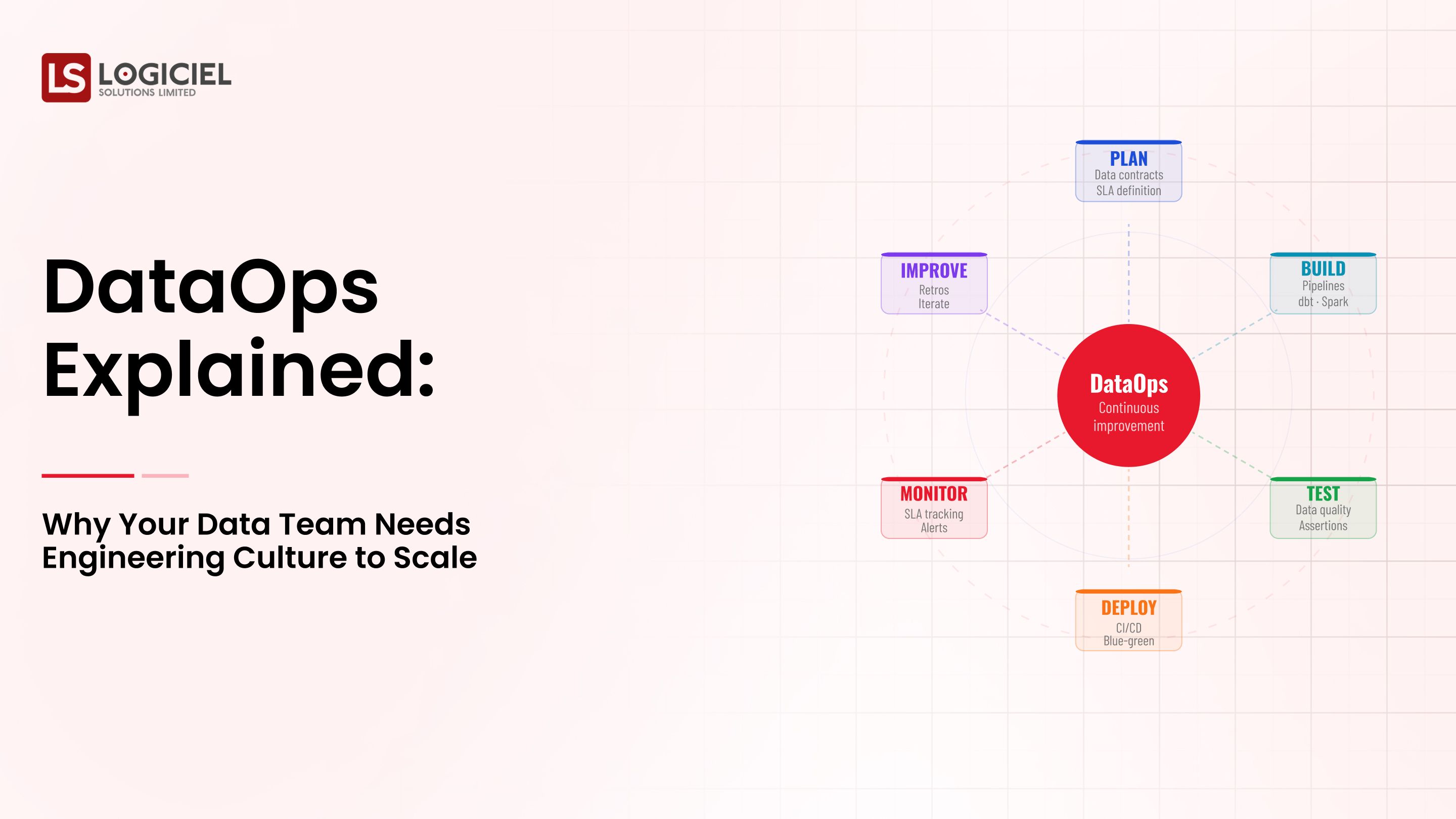

Data Infrastructure Design Best Practices

1. Automate Validation

- Schema integrity checks

- Data quality validation

- Alerting on failures

2. Treat Infrastructure as Code

- Version control pipelines

- Reproducible systems

Failure Construct

- Retries

- Circuit Breakers

- Dead Letter Queues

4. Create Early SLAs

- Data Freshness

- Reliable Systems

- Data Accuracy

5. Require Data Contracts

- Definitions Given By Producers

- Consumer Protection Available

How Logiciel Delivers

Logiciel Helps You By Providing:

- Automation Of Contract Enforcements

- Real-Time Observability

- Dependable Data Pipelines

Will Reduce:

- Debugging Time

- Pipeline Failures

- Operational Overhead

Key Point

The Best Teams Don't Just Emphasise On Performance, They Also Care About Being Predictable In Their Performance

Final Comments

Today's Systems Need More Than Just Pipelines And Storage Systems

3 Key Points:

- Data Infrastructure Design Must Have Reliability Layers

- The Use Of Data Contracts Are Critical

- The Majority Of Failures Are Predictable / Avoidable

Good Design And Engineering Practices Lead To Scalability; Not Tools

This Is A Very Large Problem, Solving This Problem Will Create:

- Trustworthy Data Systems

- Faster Development Cycles

- Greater Performance From Artificial Intelligence

Evaluation Differnitator Framework

Why great CTOs don’t just build they evaluate. Use this framework to spot bottlenecks and benchmark performance.

Call To Action

If Your Pipelines Are Failing More Frequently Than You Would Like:

Read:

- Why Your Data Infrastructure Is Continually Breaking; Root Causes And Fixes

- How To Establish A Proof Of Concept For Data Infrastructure

- How To Get Your CFO To Approve The Investment In Data Infrastructure

- How To Evaluate Data Infrastructure Vendors

Otherwise, Your Next Step Will Be:

👉 Request An Infrastructure Audit or Data Contract Checklist (Completely Free)

Logiciel Solutions Partners Will Help You Design Data Systems That Are Reliable And Scalable And Ready For Artificial Intelligence.

Frequently Asked Questions

What Is Data Infrastructure Design?

It Is The Design Of Systems To Manage The Pipelines, Storage, Processing Capabilities, For Both Scalable And Reliable Systems.

What Are Data Contracts?

They Are Definitions Given To Producers Of Data, And Consumers Of Data Which Instruct The Producers Of Data How The Data Will Be Formatted To Avoid Breaking Changes.

Why Are Data Contracts Important?

Because They Help Ensure Data Integrity; They Help Avoid System Breakdowns; They Help Increase System Reliability.

What Is The Most Common Error In A Data Infrastructure Design?

Failure To Have A Reliability Layer In The Design (Use Of Data Contracts And Observability).

What Can Teams Do To Make Their Designs Better?

Utilise Data Contracts; Automate Validations; Improve Incrementally.