Your dashboards are executing. Your pipelines are "operating".

However, your stakeholders have slowly lost faith in your data.

They do not escalate this to you right away, rather they are double checking numbers in spreadsheets. They are requesting manual validation. They are delaying making decisions not because lack of data, but because of their lack of trust in the data.

Real Estate Investment AI

Your models aren’t wrong. Your data is. Here’s how real estate teams fix AI failures before they cost millions.

This is the failure in managing inadequate data infrastructure.

For those of you who are VPs or Heads of Data who are responsible for scaling the data platform, this guide will provide:

- Understanding what data infrastructure management is (beyond tools)

- Identifying those reasons why old ways fail at scale

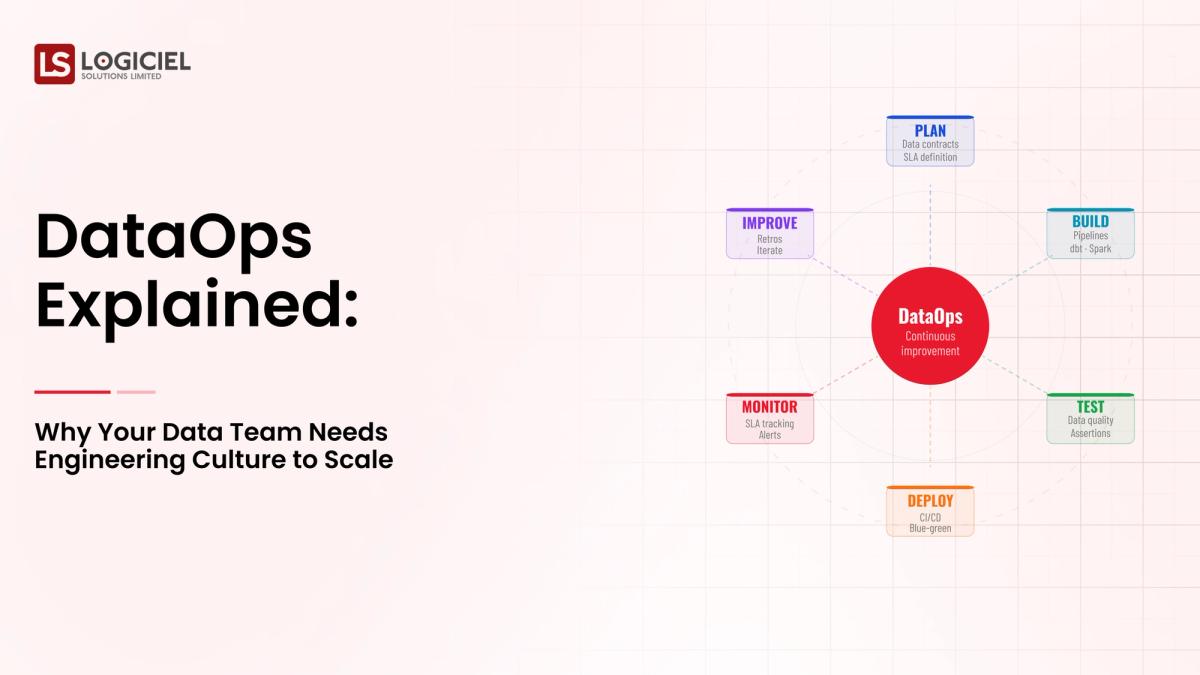

- How DataOps and an engineering culture improve data confidence and speed.

At scale, data issues will no longer be technical, they will be systemic and cultural.

What is Data Infrastructure Management? Plain English Definition.

Data infrastructure management is all about establishing, maintaining and continually improving the operations and processes that transport, store and deliver data throughout the organization.

A Simple Analogy

A good analogy would be a logistics system.

- Raw materials --> raw data

- Warehouses --> storage systems

- Shipping routes --> data pipelines

- Delivery location --> dashboards, APIs, ML models

If one piece of the logistics system breaks, all operations slow down, or even worse, incorrect information is produced.

Core Components Of Data Infrastructure Management

Component | Function

- Ingestion Layer | Collecting of data from various sources

- Storage Layer | housing structured and unstructured data.

- Processing Layer | transformation and preparing of the data

- Orchestration Layer | workflows and dependencies are managed

- Observability Layer | supports monitoring of the system and data quality.

What Problem Does It Solve

Without having structured data infrastructure management:

- Data pipelines become fragile

- Lack of visibility in your system

- Debugging becomes slow and reactive

With having structured data infrastructure management:

- Data flows reliably without disrupting your team or organization.

- Your system scales predictably and aligns with business growth.

- Teams have confidence in using data at all times.

What It’s Often Confused With

It is not just:

- A data stack

- A series of tools

- A one-off decision regarding an architecture

It should be treated as an ongoing operational capability.

Key Insight

The purpose of data managementis not just moving data but rather ensuring trust, reliability, and scalability of the entire system with near real-time availability.

Why Is Data Infrastructure Management More Important In 2026 Than Ever

Data infrastructure management has changed significantly, including..

1. AI Has Raised The Bar

Today's technology has allowed us to create:

- Recommendation engines

- Predictive Analytics

- LLM-based apps

With these types of systems, you now need:

- Fresh daily data

- Highly reliable streams

- Consistent pipelines from end to end

2. Explosion Of Data Volumes

Industry analysts predict worldwide data volumes will exceed 180 Zettabytes globally by 2025 (IDC)

As data volume increases:

- There will be more data pipelines

- There will also be more dependencies

- There will by default create more potential failure points

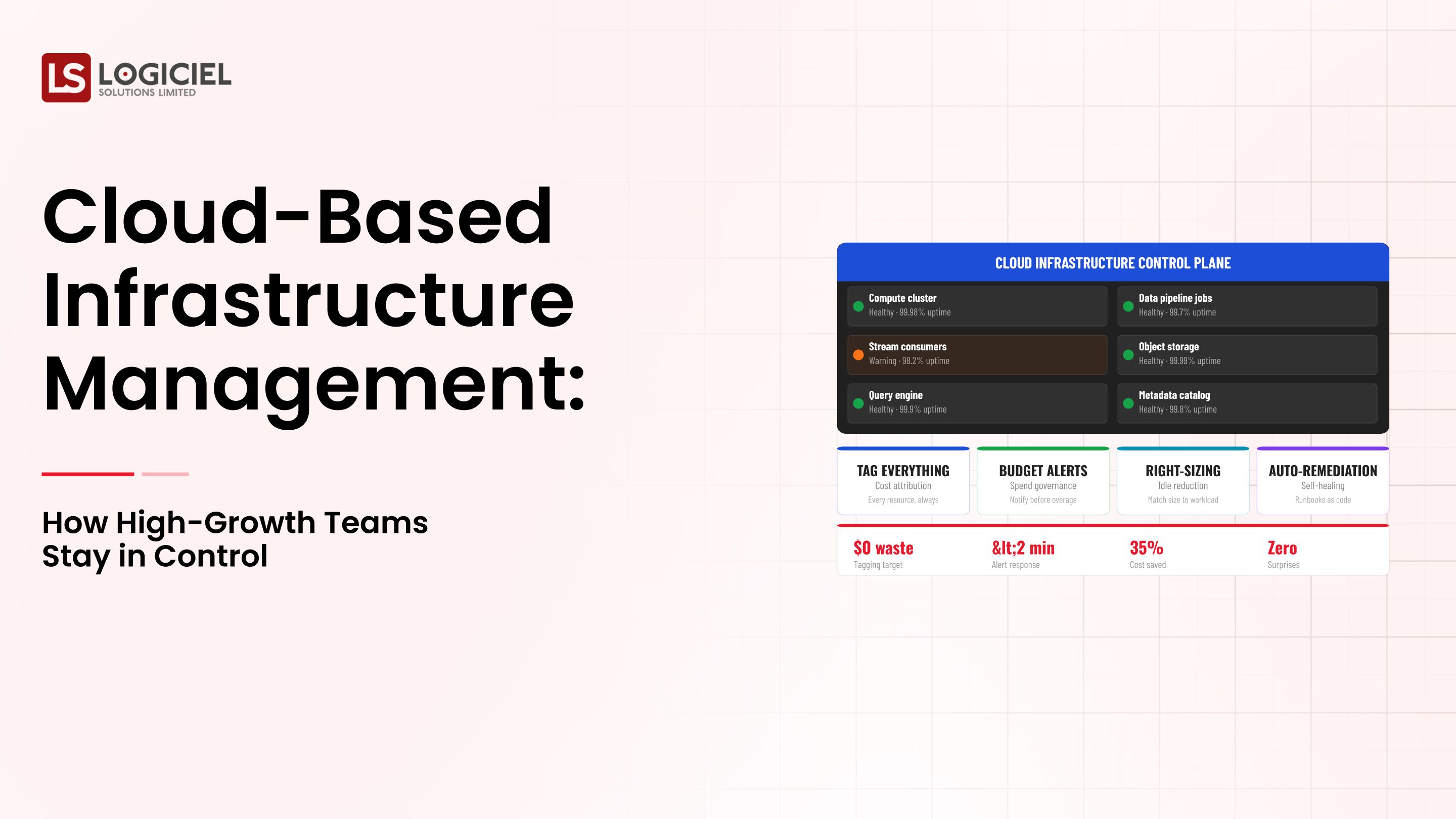

3. Increased Pressure On Costs

Data infrastructure is driving costs through:

- Cloud spend

- Engineering resources

- Operational Overhead

When not properly managed will meeting compliance requirements organizations should focus on:

- Data Traceability

- Access Control

- Auditability.

To successfully accomplish this organizations should achieve:

- Strong Governance

- End To End Visibility

Before & After Vision

Traditional Approaches (Before)

- Batch Pipelines

- Limited Scale

- Tolerable Delay

New Age Approaches (After)

- Real-Time Data

- AI Driven Decision Making

- Zero-Tolerance for Failure

Consequence of Not Managing Data Infrastructure Strongly

Without Strong Data Infrastructure Management Engineers Are Spending 30-40% Debugging Data Trust Grows Slow Business Decision-Making Process.

Key Point

By 2026 the Data Infrastructure Will Be a Core Component - No Longer A Support Function.

Core Elements of Data Infrastructure Management: What Are You Really Building

To Build Effective Systems Understand The Architecture.

1. Ingestion Layer

Where The Data Comes From:

- Applications

- REST API's

- Databases.

The Ingestion Layer Requirements Include:

- Reliability

- Scalability

- Schema Validation

2. Storage Layer

Where The Data Ends Up:

- Data Warehouses

- Data Lakes.

The Storage Layer Must Support:

- Very High Data Volumes

- Structured And Unstructured Data Formats.

3. Processing Layer

What Happens To The Data:

- Data Transformation

- Aggregation

- Feature Engineering (Most Data Engineering Complexity Lived Here).

4. Orchestration Layer

How Work Is Done:

- Workflow Scheduling

- Manage Dependencies Between Different Components

- Manage Retry Logic On Failed Jobs (Pipelines Execute In Sequence).

5. Observability Layer

How You Know If Everything Is Working Properly:

- Data Quality

- Pipeline Health

- Newness Of Data.

Requires Good Data Quality To Ensure Data Reliability.

How All These Pieces Fit Together

- Ingest Data.

- Store Data In A Centralized Location.

- Process Data To Create Usable Formats.

- Orchestrate Data Through Workflows.

- Monitor All Components For Problems.

What Is Included Vs What Is Not Included.

Includes:

- Data Pipelines.

- Data Platforms.

- Systems Used To Monitor The Observability Layer (Example Metrics).

Does Not Include:

- Business Logic.

- Application Level Decisions.

Key Point

Your Data Stack Will Be As Strong As The Weakest; Your Layers.

How Data Infrastructure Management Works in Reality: A Typical Example

Let’s take a look at how this might work out.

1. Data Ingestion

The collection of user activity data comes from several places:

- Web.

- Mobile.

- Backend systems.

2. Storage

Data is stored in two ways:

- Centralized in a warehouse.

- Partitioned for easy retrieval.

3. Processing

The various transformations that occur to the data are:

- Cleansing the data.

- Aggregating metrics.

- Making features.

4. Orchestration

Workflows are used to manage:

- Pipelines running on schedule.

- Dependencies between elements of the pipeline.

5. Delivery

Data is delivered to:

- Dashboards.

- Analytics tools for creating reports.

- Machine learning (ML) models for building algorithms.

Where Things Can Break

Things that cause problems include:

- Changes to the schema of the data.

- Delays in receiving data.

- Errors in transforming data.

Example of a Pipeline

Source (App Events) → Ingestion (Streaming API) → Storage (Data Lake) → Processing (ETL Jobs) → Analytics Layer/Emba- wet) → Dashboards / ML Models

How Data Teams Get Things Done Daily

- Monitoring pipelines

- Troubleshooting and fixing failures

- Optimizing queries

- Maintaining high-quality data

Things that teams of all experience levels do wrong with Infrastructure Management

The common mistakes that people make in managing their infrastructure include:

1. Building for a future scale when needed.

Over-engineering results in:

- Complexity.

- Longer development time (because you built too much).

2. Not spending enough time on observability.

Not having observability means that:

- Problems will be found later.

- It is difficult to resolutely define/debug the root cause of the issue.

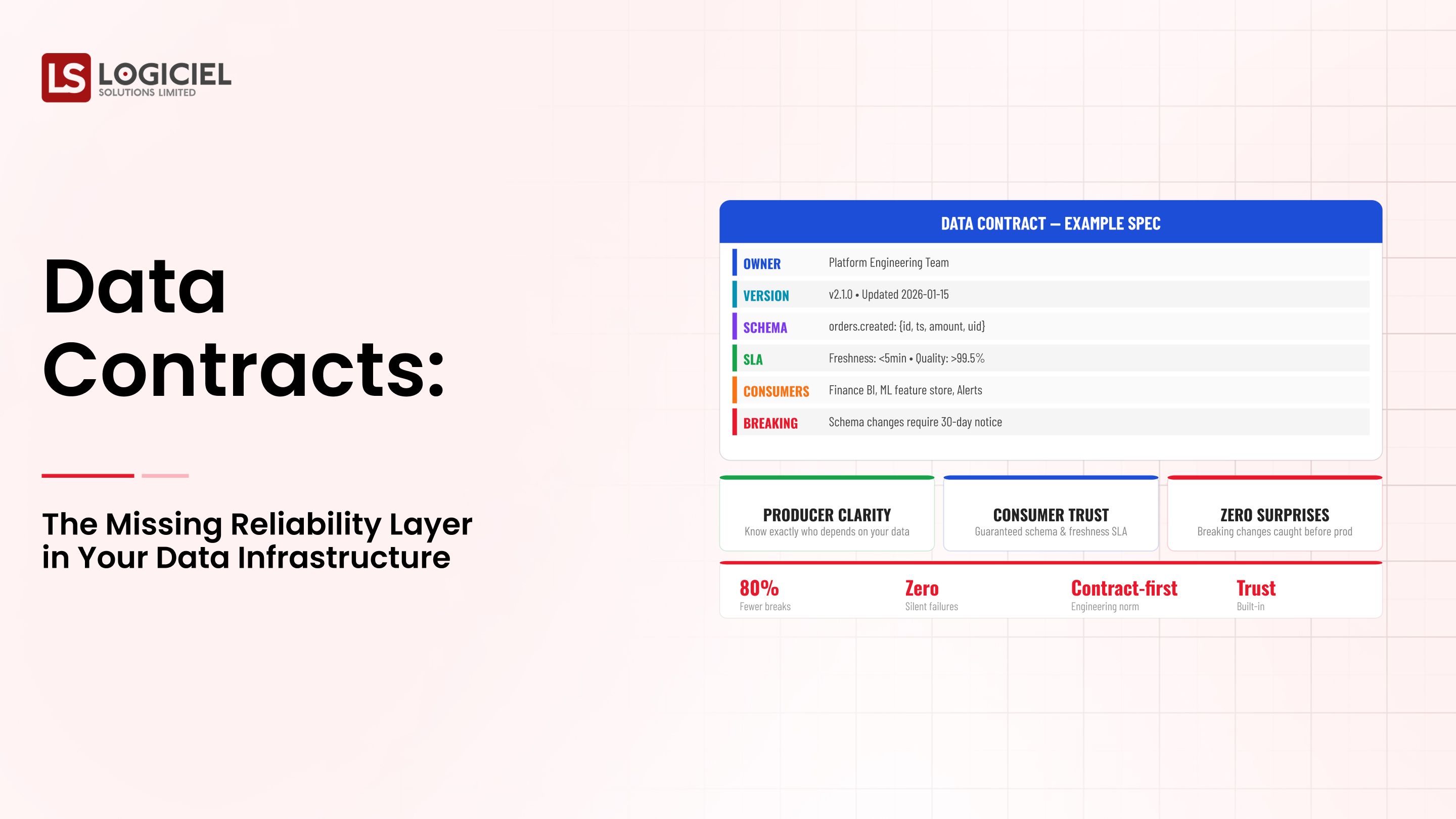

3. Skipping the data contract process.

Without contracts you are creating:

- Schemas that are subject to change without warning, therefore systems that will break and inconsistencies in the data become evident.

4. Treating data infrastructure management as a one-time project.

Data infrastructure management is an ongoing effort. Static systems become obsolete; they cannot satisfy new needs.

Key Insight

Most failures occur due to a lack of processes and discipline, not because of a shortage of tools.

Best Practices for Managing Data Infrastructure: What High Performing Teams Do Differently

High performing teams don't just manage systems—they build an engineering culture.

Automate as Much as is Possible

Automation will include validation of data, alerts, monitoring, and will reduce human mistakes.

Treat Your Infrastructure as Code

Your infrastructure is a version controlled environment with a reproducible environment—this increases the consistency and reliability of your system.

Plan for the Eventuality of Failure

Have built-in mechanisms for retrying any failed process; use circuit breakers; have dead-letter queues. Always design your processes to fail because failures are a normal part of a system.

Establish SLAs Early On

Decide early on what the expected levels of data freshness, pipeline uptime, and the level of accuracy will be.

Use DataOps Principles

DataOps supports a continuous integration/delivery/deployment model and feedback loops.

How Logiciel Supports Teams

Logiciel provides the following:

- Automation of observability

- Reliability in pipeline management

- Quality assurance for data

These elements support delivering faster, fewer incidents, and developing scalable infrastructure.

Key Insight

The main difference between average teams and high performing teams is operational discipline—not the tools they use.

Conclusion

Scaling your data systems is not just about the technology. It is also about how your team operates.

Three Key Takeaways

- Managing data infrastructure is a continually evolving capability.

- Managing data infrastructure is a continual process—it is not a one-time architecture decision.

- The foundation for your system being able to scale is observed and reliable systems. Without consistent reliability and observable systems your data systems will break when scaling.

The execution of an engineering culture (DataOps) drives the success of data systems. The process of execution and following consistent/proven discipline will create more successful data systems than will having the most advanced tools.

This is a challenging undertaking. However, successfully completing this task will yield the following benefits:

- Faster decision-making

- Increased trust in pre-processed data

- Better performance of artificial intelligence projects

PropTech AI Infrastructure Roadmap

They’re stuck because the data layer they need doesn’t exist yet

Call to Action

If you are designing or building data systems:

Resources available:

- Why the Infrastructure of Your Data System Fails: The Roots of the System and the Actual Solution to Fix Your Data System

- How to Conduct a Proof of Concept on Your Data Infrastructure

- How to Justify Investment in a Data Infrastructure to Your CFO

- How to Improve Your Use of the Data Infrastructure Vendor

Next Steps:

👉 Explore the Logiciel platform or subscribe to our Engineering newsletter.

Logiciel Solutions supports teams in building AI-first scalable data infrastructure systems.

Frequently Asked Questions

What is data infrastructure management?

Data Infrastructure Management is the management of all processes associated with the management of data infrastructure.

Why is it useful?

They provide you with the reliability, scalability, and trustworthiness of the data that is critical for today's business and analytics and AI systems.

What are the components of data infrastructure?

D/data Ingestion, D/data Storage, D/data Process, D/data Orchestration, and D/data Observability.

What is DataOps?

DataOps is an operating model based on DevOps methodology applied to data systems to allow systems and processes' performance to be improved while keeping costs low and timeliness of products delivered to customers or stakeholders.

How does your team get started using DataOps?

Begin using DataOps by implementing observability, defining service-level agreements, automating processes and implementing incremental improvements.