You receive your latest cloud bill, and it is 40% higher than last quarter.

Nothing obvious has changed. There were no major product launches, and your user count is flat.

Yet even though you have made no significant changes, your data infrastructure costs keep climbing.

Your engineers say the system is functioning as expected, finance wants an explanation for the increase, and management is trying to answer one big question:

“Are we overspending, or are we just scaling?”

This is when most teams discover they do not understand what drives up their data infrastructure costs.

If you are a CTO or VP of Engineering, this guide will help you:

- Identify where many companies are overspending on data infrastructure

- Understand why costs can increase even if usage remains constant

- Develop a structured method for optimizing your spending without negatively impacting performance

Cost optimization is not about eliminating resources; it is about eliminating inefficiencies in your data infrastructure.

Reasons for Data Infrastructure Cost Increases

Cost increases are rarely sudden; they build up gradually.

The Common Trend

- New data pipelines are added

- Data volume increases

- More tools are added to existing pipelines

Each decision makes sense on its own, yet together they create significant inefficiencies in the data infrastructure.

Why This Keeps Getting Harder in 2026

Modern data infrastructures include:

- Real-time data pipelines

- AI/ML environments

- Multi-cloud deployments

All of these mean more data being processed, more duplicated storage, and more data being moved between locations.

A Familiar Example

A data engineering team duplicates a dataset for reporting and ends up with three copies:

- One in the data warehouse

- One in the feature store

- One in backup storage

Each copy serves a separate purpose.

Together, however, the extra storage and the compute spent keeping the copies synchronized add significant operational expense.

A mature data infrastructure model provides:

- Cost-per-pipeline tracking

- Alignment of spending with business value

- Elimination of redundant processing

Key Insight

Most cost problems stem from architectural issues.

The Five Major Cost Factors Associated with Data Infrastructure

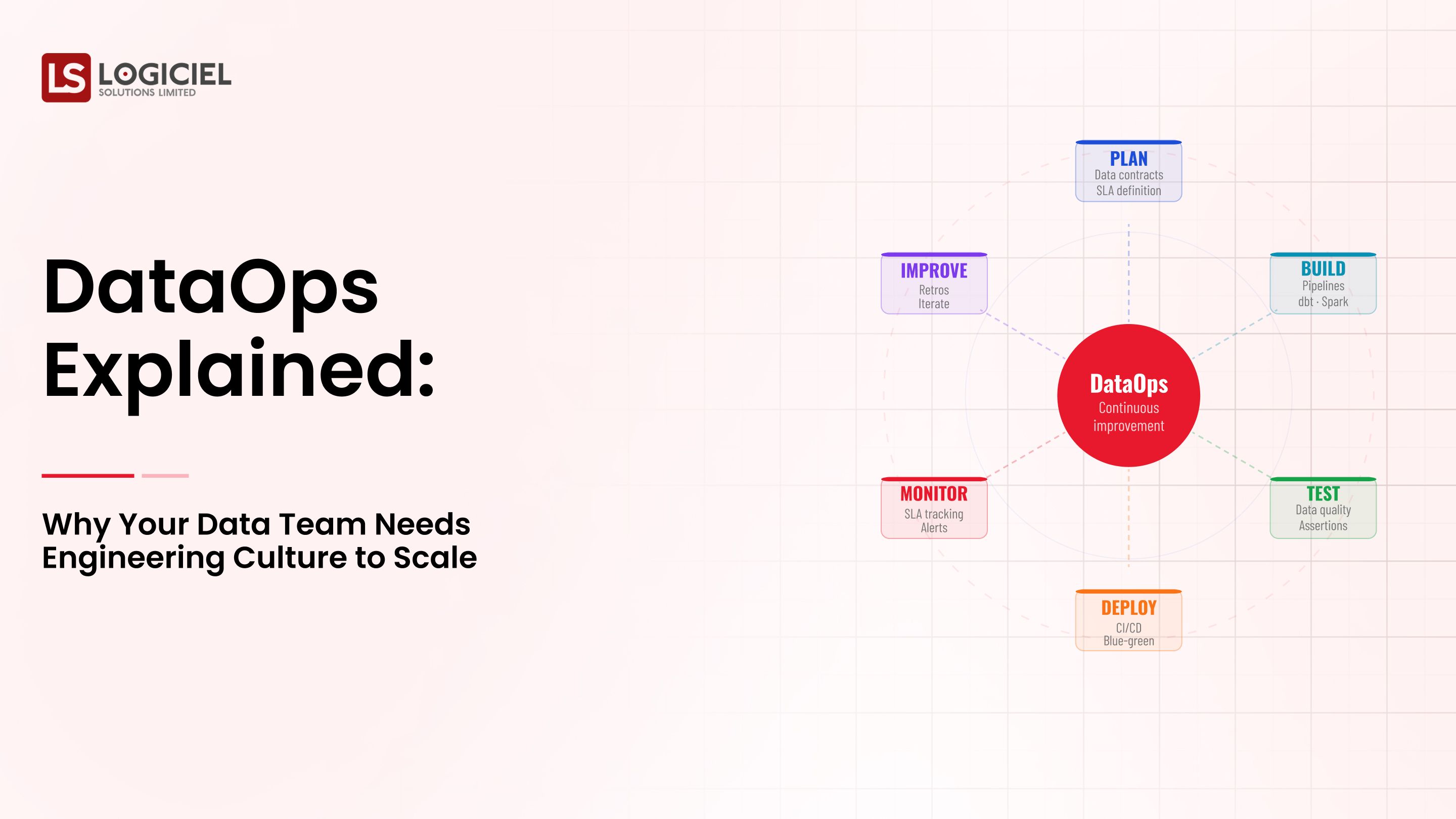

To reduce costs, we must first understand the factors that drive them.

1. Compute Waste

Compute waste is typically caused by:

- Over-provisioned compute clusters

- Inefficient queries

- Idle or underutilized resources

The result is a high recurring expense.
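One way to make idle spend visible is to flag resources whose average utilization falls below a threshold. The sketch below is a minimal illustration, not a definitive implementation: the function name `find_idle_resources`, the 10% threshold, and the sample inventory are all assumptions made for the example.

```python
def find_idle_resources(resources, idle_threshold=0.10):
    """Return (flagged, idle_monthly_cost_usd) for low-utilization resources.

    `resources` is an iterable of (name, monthly_cost_usd, avg_utilization)
    tuples, where avg_utilization is a fraction between 0 and 1.
    The 10% default threshold is an assumption; tune it per workload.
    """
    flagged = [
        (name, cost)
        for name, cost, utilization in resources
        if utilization < idle_threshold
    ]
    idle_cost = sum(cost for _, cost in flagged)
    return flagged, idle_cost


# Made-up inventory for illustration only.
inventory = [
    ("etl-cluster", 4000.0, 0.65),
    ("dev-warehouse", 1500.0, 0.04),
    ("old-notebook-vm", 300.0, 0.00),
]

flagged, idle_cost = find_idle_resources(inventory)
print(flagged, idle_cost)
```

In practice the utilization figures would come from your monitoring system, and a flagged resource is a candidate for review rather than automatic shutdown.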

2. Data Storage Explosion

Runaway storage growth is caused by:

- Duplicated datasets

- Retention of unused historical data

- Missing retention policies

The result is storage costs that keep growing, whether or not the data is ever used.

3. Data Movement Costs

Data movement costs include:

- Cross-region data transfer

- On-premises to cloud data sync

The result is charges that accumulate steadily but stay "hidden" in the bill.

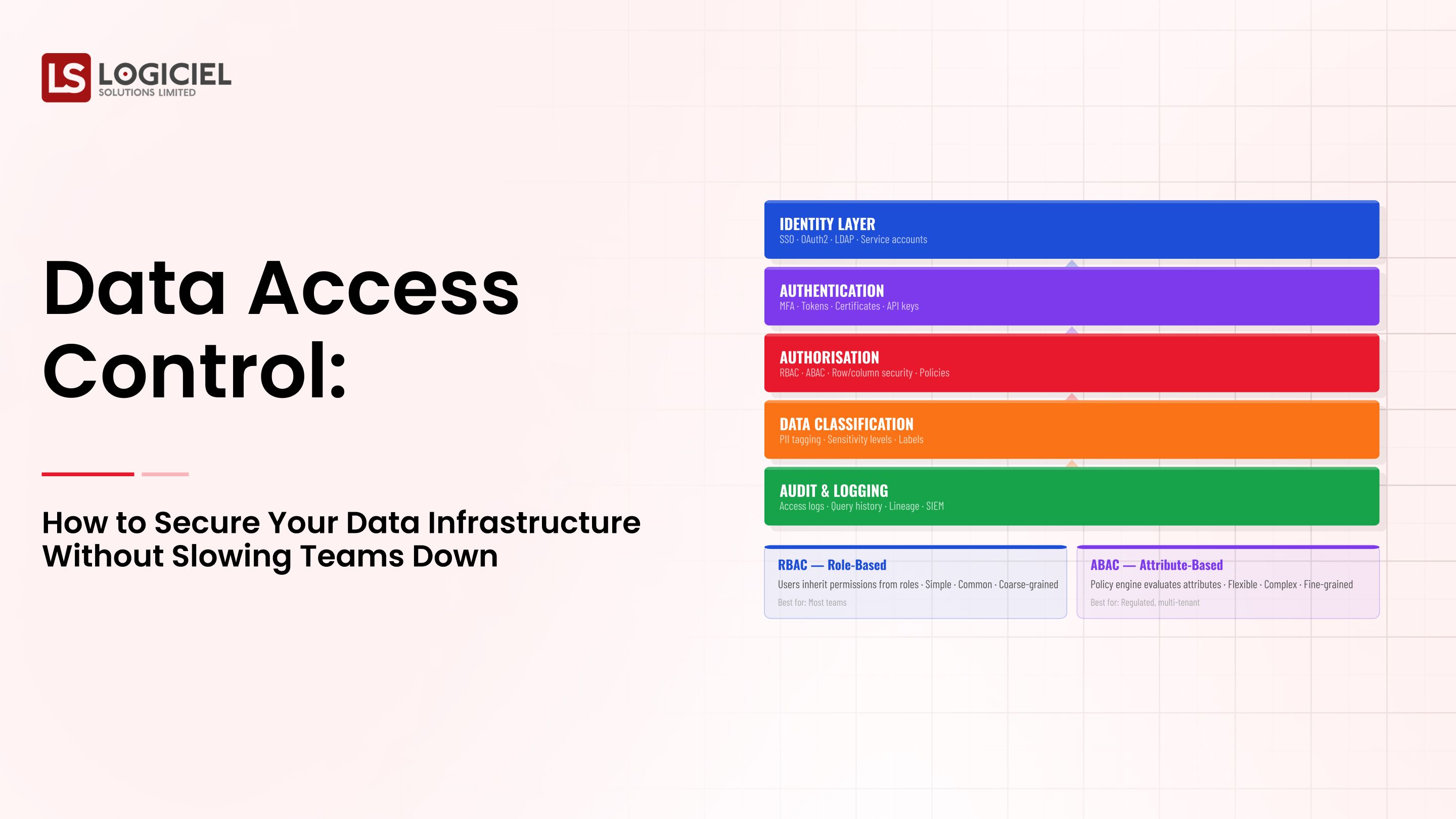

4. Tool Sprawl

For example, many teams run too many overlapping analytics tools, along with separate monitoring environments.

The result is additional licensing and integration costs.

5. Inefficient Pipelines

For example, pipelines that reprocess full datasets or apply overlapping transformations do the same work repeatedly.

The net result is higher compute consumption.

Key Insight

You cannot start reducing costs until you understand where they come from.

Prerequisites for Cost Reduction

The visibility you need before reducing costs:

Cost Attribution

- Cost-per-pipeline

- Cost-per-team

- Cost-per-use case (e.g. reporting)
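Cost attribution usually starts from tagged billing records. The following is a minimal sketch that sums spend along one dimension at a time; the `BillingLine` fields and the sample rows are hypothetical and do not correspond to any particular cloud provider's export format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class BillingLine:
    """One row of a billing export (field names are hypothetical)."""
    cost_usd: float
    pipeline: str
    team: str
    use_case: str


def attribute_costs(lines, dimension):
    """Sum cost by one tag dimension: 'pipeline', 'team', or 'use_case'."""
    totals = defaultdict(float)
    for line in lines:
        # Untagged spend is grouped separately so it stays visible.
        key = getattr(line, dimension) or "untagged"
        totals[key] += line.cost_usd
    return dict(totals)


# Made-up sample data for illustration only.
sample = [
    BillingLine(120.0, "orders_etl", "data-eng", "reporting"),
    BillingLine(80.0, "orders_etl", "data-eng", "reporting"),
    BillingLine(300.0, "feature_build", "ml", "model-training"),
    BillingLine(50.0, "", "data-eng", "reporting"),
]

print(attribute_costs(sample, "pipeline"))
print(attribute_costs(sample, "team"))
```

Keeping an explicit "untagged" bucket is a deliberate choice here: a large untagged total is itself a sign that attribution is incomplete.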

Observability

- Resource utilization

- Pipeline performance

- Data movement

Ownership Model

Assign clear owners for:

- Data pipelines

- The costs those pipelines generate

Stakeholder Alignment

Stakeholder alignment must include:

- Engineering

- Finance

- Executive leadership

Defined Metrics

Cost metrics need to include:

- Cost-per-query

- Cost-per-data product or use case (e.g. reporting)

- Rate of cost growth
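Two of these metrics reduce to simple arithmetic. The sketch below shows one possible definition of each; the function names and the sample figures are assumptions chosen to mirror the 40% increase described in the introduction.

```python
def cost_per_query(total_cost_usd, query_count):
    """Average cost of one query over a billing period."""
    if query_count <= 0:
        raise ValueError("query_count must be positive")
    return total_cost_usd / query_count


def cost_growth_rate(previous_cost_usd, current_cost_usd):
    """Period-over-period growth as a fraction (0.40 means +40%)."""
    if previous_cost_usd <= 0:
        raise ValueError("previous_cost_usd must be positive")
    return (current_cost_usd - previous_cost_usd) / previous_cost_usd


# Illustrative numbers only: a bill that grew from 10,000 to 14,000.
growth = cost_growth_rate(10_000, 14_000)
per_query = cost_per_query(14_000, 700_000)
print(f"growth: {growth:.0%}, cost per query: ${per_query:.4f}")
```

Tracking both together helps separate scaling from overspending: if total cost grows while cost per query stays flat, the increase is more likely driven by volume.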

Key Insight

Without visibility, there is a high probability that cost reduction efforts will lead to the wrong decisions.

Part One (Identify and Prioritise Your Cost Leaks)

Identify and document inefficiencies in:

- Infrastructure

- Pipelines

- Storage Systems

- Tools

Identify high-cost areas:

- Top 20% of cost contributors

- Underutilized resources
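Finding the top cost contributors can be sketched as a simple ranking. The example below assumes you already have a cost total per pipeline (the names and amounts are made up) and returns the most expensive 20% of items together with their share of total spend.

```python
def top_cost_contributors(cost_by_item, fraction=0.2):
    """Return the most expensive `fraction` of items and their share of spend."""
    ranked = sorted(cost_by_item.items(), key=lambda kv: kv[1], reverse=True)
    # Always return at least one item, even for very small inputs.
    keep = max(1, round(len(ranked) * fraction))
    top = ranked[:keep]
    total = sum(cost_by_item.values())
    share = sum(cost for _, cost in top) / total if total else 0.0
    return top, share


# Made-up monthly costs per pipeline, for illustration only.
monthly_cost = {
    "orders_etl": 500.0,
    "feature_build": 200.0,
    "clickstream": 150.0,
    "ad_hoc_reports": 100.0,
    "backups": 50.0,
}

top, share = top_cost_contributors(monthly_cost)
print(top, f"{share:.0%} of total spend")
```

If the top group accounts for a large share of the total, that is where a review is likely to pay off first.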

Map cost versus value (answer these questions):

- What is the contribution of each pipeline to the business?

- What is not contributing?

Determine fix priority

Quick wins:

- Redundant resources

Strategic fixes:

- Architectural changes

The output will be a prioritised roadmap for cost optimization.

Key Insight

Fix the inefficiencies with the largest impact first.

Part Two (Optimize Architecture and Usage)

This is where you begin systematically eliminating waste.

Optimise Compute

- Right-size clusters

- Use auto-scaling

- Optimise your queries
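Right-sizing can be approximated by sizing a cluster so that observed peak load sits at a target utilization. This is a rough sketch under stated assumptions: the function name, the 75% target, and the idea of measuring peak load in "nodes' worth of work" are illustrative, not a vendor formula.

```python
import math


def recommend_node_count(peak_nodes_used, target_utilization=0.75, min_nodes=1):
    """Nodes needed so that observed peak load runs at the target utilization.

    `peak_nodes_used` is the peak load expressed in nodes' worth of work,
    for example 6.0 if the busiest hour would have saturated six nodes.
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    return max(min_nodes, math.ceil(peak_nodes_used / target_utilization))


# Illustrative case: 20 nodes provisioned, but peak load equals 6 nodes.
provisioned = 20
recommended = recommend_node_count(6.0)
print(f"provisioned={provisioned}, recommended={recommended}")
```

The headroom left by the target utilization is what protects performance; auto-scaling can then handle load above the observed peak.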

Reduce Waste in Storage

- Eliminate duplicates

- Implement retention policies

- Archive rarely accessed data that must be kept
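A retention policy can be expressed as a small rule over how long a dataset has gone unaccessed. The sketch below is one possible shape for such a rule; the 90-day and 365-day thresholds and the function name are assumptions, and real policies must also respect legal and compliance requirements.

```python
from datetime import date


def retention_action(last_accessed, today, archive_after_days=90, delete_after_days=365):
    """Classify a dataset as 'keep', 'archive', or 'delete' by days since last access."""
    idle_days = (today - last_accessed).days
    if idle_days >= delete_after_days:
        return "delete"
    if idle_days >= archive_after_days:
        return "archive"
    return "keep"


# Fixed reference date and made-up datasets, for illustration only.
today = date(2026, 1, 1)
datasets = {
    "daily_sales": date(2025, 12, 1),
    "campaign_2025_h1": date(2025, 6, 1),
    "legacy_exports": date(2024, 6, 1),
}

for name, last_accessed in datasets.items():
    print(name, retention_action(last_accessed, today))
```

Treating "delete" as a recommendation that an owner confirms, rather than an automatic action, keeps the policy safe to roll out.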

Minimise Data Movement

- Process data near where you collect it

- Minimise cross-region transfer of data
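Before minimising data movement, it helps to see which routes carry the most traffic. The sketch below totals transferred volume per source and destination pair and ignores same-region traffic; the record layout and the region names in the sample are assumptions for illustration.

```python
from collections import defaultdict


def cross_region_transfer_gb(transfers):
    """Sum GB moved per (source, destination) pair, ignoring same-region traffic.

    `transfers` is an iterable of (source_region, destination_region, gigabytes).
    Returns pairs sorted from largest to smallest volume.
    """
    totals = defaultdict(float)
    for source, destination, gigabytes in transfers:
        if source != destination:
            totals[(source, destination)] += gigabytes
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


# Made-up transfer log for illustration only.
transfer_log = [
    ("us-east-1", "us-east-1", 500.0),
    ("us-east-1", "eu-west-1", 120.0),
    ("us-east-1", "eu-west-1", 80.0),
    ("eu-west-1", "us-east-1", 40.0),
]

routes = cross_region_transfer_gb(transfer_log)
print(routes)
```

The largest routes are the first candidates for processing data closer to where it is collected.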

Consolidate Tools

- Remove redundant tools

- Integrate systems

Improve the Efficiency of Pipelines

- Use incremental data processing

- Avoid reprocessing full datasets on every pipeline run
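A common way to process data incrementally is to track a watermark, the latest update timestamp already processed, and select only newer rows on each run. The sketch below is a minimal illustration; the `updated_at` field and the use of UTC ISO 8601 strings (which sort correctly as text) are assumptions.

```python
def select_new_rows(rows, watermark):
    """Return rows updated after the watermark, plus the advanced watermark."""
    new_rows = [row for row in rows if row["updated_at"] > watermark]
    # If nothing is new, the watermark stays where it was.
    new_watermark = max((row["updated_at"] for row in new_rows), default=watermark)
    return new_rows, new_watermark


# Made-up source table for illustration only.
source_rows = [
    {"id": 1, "updated_at": "2026-01-01T00:00:00Z"},
    {"id": 2, "updated_at": "2026-01-02T00:00:00Z"},
    {"id": 3, "updated_at": "2026-01-03T00:00:00Z"},
]

# First run: only rows newer than the stored watermark are processed.
batch, watermark = select_new_rows(source_rows, "2026-01-01T00:00:00Z")
print([row["id"] for row in batch], watermark)

# Second run with no new data: nothing is reprocessed.
batch_again, watermark_again = select_new_rows(source_rows, watermark)
print(batch_again, watermark_again)
```

In a real pipeline the watermark would be persisted between runs, and late-arriving updates may require a small overlap window.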

Key Insight

Optimization is not about doing the same work more cheaply; it is about doing less work overall.

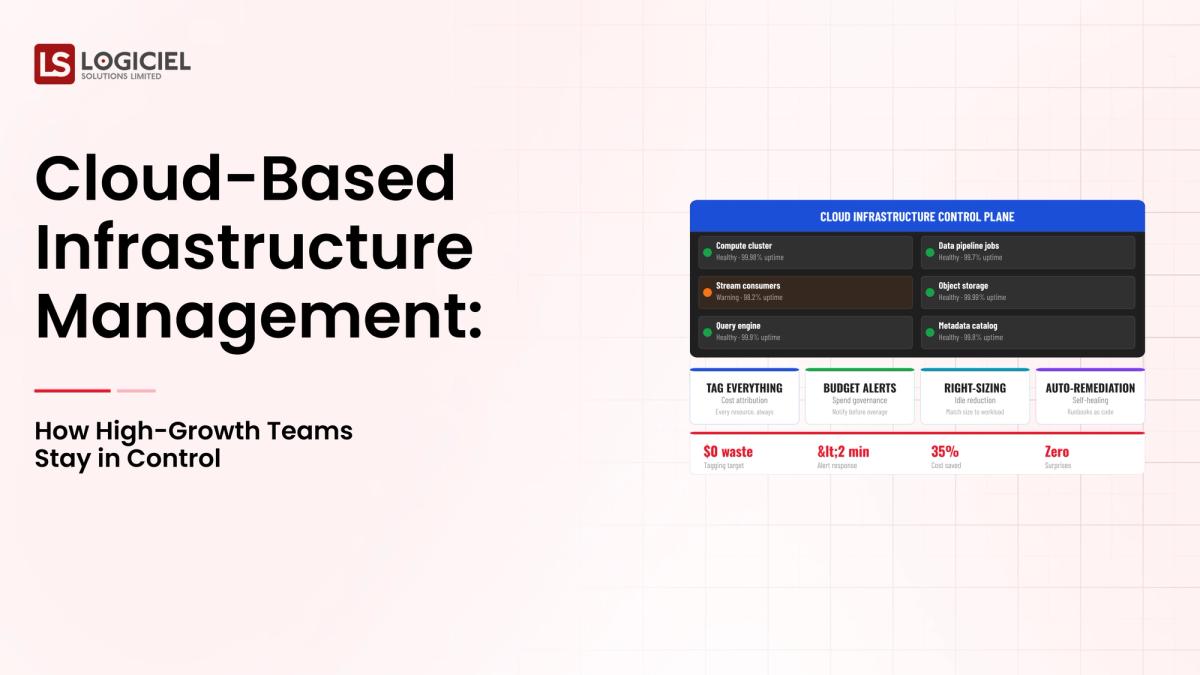

Part Three (Build Continuous Cost Governance)

Cost Optimisation is NOT a one-time effort.

Define Cost-Related SLOs

Examples:

- Cost-per-query threshold

- Monthly budget limits
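Cost SLOs like these can be checked automatically. The sketch below is one possible shape for such a check; the `CostSlo` class, its field names, and the sample limits are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostSlo:
    """Cost objectives for one pipeline or team (hypothetical structure)."""
    max_cost_per_query_usd: float
    monthly_budget_usd: float


def check_cost_slo(slo, month_to_date_cost_usd, query_count):
    """Return a list of breach messages; an empty list means compliant."""
    breaches = []
    if query_count > 0:
        per_query = month_to_date_cost_usd / query_count
        if per_query > slo.max_cost_per_query_usd:
            breaches.append(
                f"cost per query ${per_query:.4f} exceeds "
                f"${slo.max_cost_per_query_usd:.4f}"
            )
    if month_to_date_cost_usd > slo.monthly_budget_usd:
        breaches.append(
            f"spend ${month_to_date_cost_usd:.2f} exceeds budget "
            f"${slo.monthly_budget_usd:.2f}"
        )
    return breaches


# Made-up limits and usage for illustration only.
slo = CostSlo(max_cost_per_query_usd=0.01, monthly_budget_usd=5000.0)
print(check_cost_slo(slo, month_to_date_cost_usd=6000.0, query_count=1_000_000))
print(check_cost_slo(slo, month_to_date_cost_usd=1000.0, query_count=1_000_000))
```

A check like this could run on a schedule and feed alerts or the dashboards described next.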

Create Cost Dashboards

Include:

- Total spend by pipeline

- Historical spend and trends for each pipeline

- Where cost spikes occur

Conduct Periodic Reviews

Monthly:

- Review cost spikes and investigate the cause of each one
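To prepare for a review, spikes can be flagged by comparing each day's cost with the average of the preceding days. The sketch below is a simple heuristic, not a statistical anomaly detector; the 7-day window and the 1.5x threshold are assumptions.

```python
from statistics import mean


def find_cost_spikes(daily_costs, window=7, threshold=1.5):
    """Return indexes of days whose cost exceeds threshold x the trailing mean."""
    spikes = []
    for i in range(window, len(daily_costs)):
        baseline = mean(daily_costs[i - window:i])
        if baseline > 0 and daily_costs[i] > threshold * baseline:
            spikes.append(i)
    return spikes


# Made-up daily costs: steady spend with one abnormal day at index 8.
daily_costs = [100, 100, 100, 100, 100, 100, 100, 105, 260, 110]
spike_days = find_cost_spikes(daily_costs)
print(spike_days)
```

Each flagged day then becomes a concrete item to investigate, for example by checking which pipeline's spend changed on that date.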

Assign Cost Centre Ownership

- Assign responsibility for each area of spend

- Give each cost centre a named owner

Key Insight

Continuous governance practices eliminate cost creep.

Cost Reduction Mistakes

Cost cutting can create risk if done without due diligence.

Common Mistakes

- Over-optimising critical pipelines

- Reducing resources without proper testing

- Ignoring business impact

Best Practice Workflows

- Optimise your non-critical workloads first

- Measure the impact of each optimisation before making any scaling changes

- Balance cost against reliability

Example

Before

High compute and low efficiency.

After

Optimised queries with the same performance and much lower cost.

Key Insight

Infrastructure investments should result in efficient performance, NOT minimum cost.

Conclusion

Data infrastructure costs should not keep increasing without accountability.

Key Takeaways

- Cost problems are largely architectural

- You cannot optimise costs without visibility

- Continuous governance keeps cost creep to a minimum

If implemented properly, cost optimisation allows you to:

- Spend less

- Be more efficient

- Scale your business

Next Steps for an Increasingly Costly Data Infrastructure

Start by reviewing:

- Why Does Your Data Infrastructure Keep Breaking: Root Causes and Guaranteed Fixes

- Data Infrastructure Strategy (6-Month Road Map for Engineering Leaders)

The next step is to request a Cost Optimisation Audit.

Logiciel assists teams in:

- Creating efficient AI-first data systems while reducing cloud cost by 30-40%

- Increasing overall performance

- Scaling the business without waste

Frequently Asked Questions

Why Do Data Infrastructure Costs Continue to Increase?

Because of growing data volume, inefficient data pipelines, and duplication across data resources.

What Are the Major Cost Drivers?

Compute usage and inefficient data processing.

How Can Teams Quickly Decrease Costs?

Remove unused resources and optimise queries.

Is Cost Optimisation a One-Time Process?

No, it requires ongoing monitoring and governance processes.

How Can You Balance Cost versus Performance?

Start by optimising your non-critical workloads, and measure the impact before allocating resources for scale.