Your company launches a product two weeks later than planned, not because the engineering team failed to put in the effort, but because the data behind the product was unreliable.

Or even worse, you are about to undergo a compliance audit and discover major inconsistencies in your reporting layer. All of a sudden your leadership has questions, your customers are still waiting, and now your engineering team has to retrace their steps back to the initial cause of this failure - a failure that could’ve easily been avoided if data infrastructure security was a priority in your organization.

At this point, data infrastructure security stops being a technology issue and becomes a business risk.

If you’re the CTO or VP of Engineering, this guide will help you with:

- Understanding why most disaster recovery plans fail in today’s data-driven world.

- Building practical solutions to secure your data pipeline and infrastructure.

- Establishing a phased approach to prevent, detect and recover from failures.

By 2026, failure is going to be the status quo; it is time to build for the inevitable!

What Most Organizations Get Wrong When Managing Data Infrastructure Security

Most organizations do not ignore data infrastructure security; they undervalue it.

The Common Patterns Of Failure

- Data pipelines are built to be fast, not resilient.

- Ownership of the various pipeline components is unclear.

- Pipelines are monitored, but there is no real observability into the entire infrastructure.

Only when things begin to scale does it become clear that speed without clear ownership creates fragile data pipelines.

2026 Will Be Even More Difficult

Modern data stacks:

- Are spread out between cloud and on-premises systems.

- Require real-time analytics and AI workloads.

- Are based on dozens of upstream sources.

Each layer will introduce:

- New points of failure.

- Complex dependencies.

- Larger blast radius when things go wrong.

An Example That Everyone Can Relate To:

A schema change in an upstream system goes unnoticed.

A downstream pipeline fails without anyone knowing about it.

Now data on your dashboard is incorrect, and your ability to make decisions is affected.

By the time you find out that the pipeline is failing:

- Trust has already been lost.

- It takes hours or days to debug the pipeline and get it working properly again.

What a Mature System Looks Like:

- You are able to identify anomalies in your data before they have an effect on the business.

- There is always someone responsible for every dataset.

- Your systems can automatically recover from failures.

The Key Point:

Data infrastructure security isn’t about preventing failures. It is all about reducing the impact of those failures and improving the time it takes to recover from them.

Prerequisites: What to Have in Place Before You Start

Before you can have a proper disaster recovery strategy, you should already have the following foundational systems:

1. Clear Ownership Model

Every pipeline must be assigned to:

- An individual owner.

- A specified escalation path.

- An SLA for which that owner will be held accountable.

If there is no owner for an incident, it can linger for an indefinite period.
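The ownership check above can be automated with very little code. The following is a minimal sketch, assuming ownership metadata is kept in a simple in-memory registry; `PipelineRecord`, `ownership_gaps`, and the sample pipeline names are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PipelineRecord:
    """Ownership metadata for one pipeline (all field names are illustrative)."""
    name: str
    owner: Optional[str] = None            # a named individual, not a team alias
    escalation_path: Optional[str] = None  # e.g. an on-call rotation
    sla_hours: Optional[float] = None      # time allowed to resolve an incident


def ownership_gaps(records):
    """Return {pipeline name: [missing fields]} for incomplete records."""
    gaps = {}
    for record in records:
        missing = [
            field_name
            for field_name in ("owner", "escalation_path", "sla_hours")
            if getattr(record, field_name) is None
        ]
        if missing:
            gaps[record.name] = missing
    return gaps


# Hypothetical registry: the second pipeline has an owner but nothing else.
registry = [
    PipelineRecord("orders_daily", "a.rivera", "data-oncall", 4),
    PipelineRecord("clickstream_raw", owner="j.chen"),
]
print(ownership_gaps(registry))
```

Running a check like this on a schedule turns "every pipeline has an owner" from a policy statement into something that can fail a build or raise an alert.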

2. Baseline Infrastructure

In order to implement a disaster recovery plan, you must already have:

- Functional data pipeline systems.

- Centralized storage.

- Basic automation/orchestration.

The disaster recovery plan is built on top of existing infrastructure.

3. Data Contracts

Data contracts (schema agreements) are your assurance that:

- There will be no downstream failures as a result of upstream changes.

- Upstream failures will be detected immediately.
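To make the idea concrete, here is a minimal sketch of a contract check, assuming the contract is a plain mapping of column names to Python types. `EXPECTED_SCHEMA`, `contract_violations`, and the "orders" fields are hypothetical; real contracts usually live in a schema registry or a validation library rather than in code like this.

```python
# Hypothetical contract for an "orders" feed: column name -> expected type.
EXPECTED_SCHEMA = {
    "order_id": int,
    "amount": float,
    "currency": str,
}


def contract_violations(record, schema=EXPECTED_SCHEMA):
    """Return a list of human-readable violations for one record."""
    problems = []
    for column, expected_type in schema.items():
        if column not in record:
            problems.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            actual = type(record[column]).__name__
            problems.append(
                f"{column}: expected {expected_type.__name__}, got {actual}"
            )
    # Columns the contract does not know about signal an upstream change.
    for column in record:
        if column not in schema:
            problems.append(f"unexpected column: {column}")
    return problems


# An upstream change turned "amount" into a string and added "region".
changed = {"order_id": 1, "amount": "19.99", "currency": "USD", "region": "EU"}
print(contract_violations(changed))
```

Rejecting or quarantining records at this point is what keeps an upstream change from silently corrupting downstream dashboards.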

4. Stakeholder Alignment

Security has an impact on:

- Engineering.

- Compliance.

- Product.

Everyone involved with the disaster recovery plan must agree on:

- Risk tolerance levels.

- How long recovery from an incident is allowed to take.

5. Defined Success Metrics

Some examples of your success metrics may include:

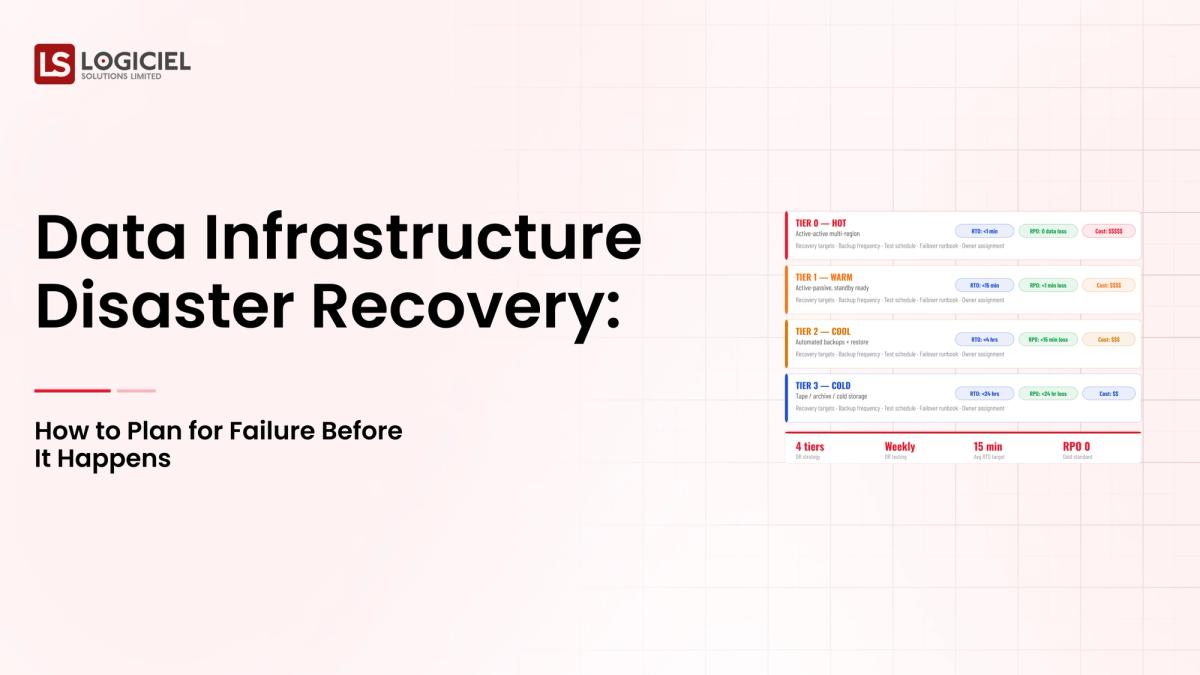

- Recovery Time Objective (RTO).

- Recovery Point Objective (RPO).

- Pipeline Availability.
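These three metrics are simple to compute once incident timestamps are recorded. The sketch below assumes you log when a failure started, when service was restored, and when the last good backup was taken; the function names and the sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta


def recovery_report(failed_at, restored_at, last_good_backup_at, rto, rpo):
    """Compare one incident against its recovery objectives."""
    downtime = restored_at - failed_at                  # actual recovery time
    data_loss_window = failed_at - last_good_backup_at  # data written since the backup
    return {
        "downtime": downtime,
        "data_loss_window": data_loss_window,
        "rto_met": downtime <= rto,
        "rpo_met": data_loss_window <= rpo,
    }


def availability(total_period, total_downtime):
    """Fraction of the period during which the pipeline was available."""
    return 1 - total_downtime / total_period


# Hypothetical incident: 90 minutes of downtime, backup taken 15 minutes before failure.
report = recovery_report(
    failed_at=datetime(2026, 1, 10, 2, 0),
    restored_at=datetime(2026, 1, 10, 3, 30),
    last_good_backup_at=datetime(2026, 1, 10, 1, 45),
    rto=timedelta(hours=2),
    rpo=timedelta(minutes=10),
)
print(report["rto_met"], report["rpo_met"])
print(round(availability(timedelta(days=30), report["downtime"]), 4))
```

In this example the recovery time objective is met but the recovery point objective is not, which points to backup frequency rather than restore speed as the thing to fix.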

The Key Point:

If these prerequisites are not in place, your disaster recovery strategy will devolve into reactive firefighting rather than a structured approach to building resilience.

Phase 1: Evaluate Your Present Condition

Understand your present environment before developing solutions.

Step 1: Audit Your Data Ecosystem

Identify and document the components of your data ecosystem:

- Pipelines

- Tools

- Data Sources

- Storage Systems

Other things to capture are:

- Ownership

- Service Level Agreements (SLAs)

- Dependencies

Step 2: Identify Where The Biggest Gaps Are

Most groups that perform these audits discover:

1. Visibility Gap

No complete view of data flows from beginning to end (i.e., source to destination).

2. Reliability Gap

Failures occur regularly, go unnoticed, or happen silently.

3. Ownership Gap

Lack of ownership or responsibility for the data.

Step 3: Document Data Flows

Even a simple data flow diagram helps with this process:

Source → Transform → Destination

This diagram reveals:

- Critical dependencies

- Single points of failure
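Once the flows are written down, the same information can be queried. The following is a minimal sketch, assuming the diagram is captured as a mapping from each node to the assets it feeds; `FLOWS`, `downstream`, `blast_radius`, and every dataset name are hypothetical.

```python
from collections import deque

# Hypothetical flow: each key feeds the assets listed as its value.
FLOWS = {
    "crm_export": ["customers_clean"],
    "billing_db": ["invoices_clean"],
    "customers_clean": ["revenue_mart"],
    "invoices_clean": ["revenue_mart"],
    "revenue_mart": ["exec_dashboard", "finance_report"],
}


def downstream(node, flows=FLOWS):
    """Everything that is affected if `node` fails (breadth-first walk)."""
    seen, queue = set(), deque([node])
    while queue:
        for child in flows.get(queue.popleft(), []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def blast_radius(flows=FLOWS):
    """Rank nodes by how many downstream assets depend on them."""
    nodes = set(flows) | {c for children in flows.values() for c in children}
    return sorted(((len(downstream(n, flows)), n) for n in nodes), reverse=True)


print(sorted(downstream("revenue_mart")))
for count, node in blast_radius():
    print(count, node)
```

Nodes at the top of the ranking are the critical dependencies; a node that every path passes through, such as `revenue_mart` here, is a single point of failure.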

Step 4: Prioritize Initiatives

Break them down into:

- Quick Wins (monitoring, alerts)

- Strategic Investments (lineage, observability)

Output

A prioritized roadmap of:

- Immediate Fixes

- Long-term Improvements

Key Insight

You cannot protect what you don't truly understand.

Phase 2: Create a Blueprint for Your Ideal Data Architecture

Now you need to determine what your data architecture should look like so that it keeps working when individual parts of it fail.

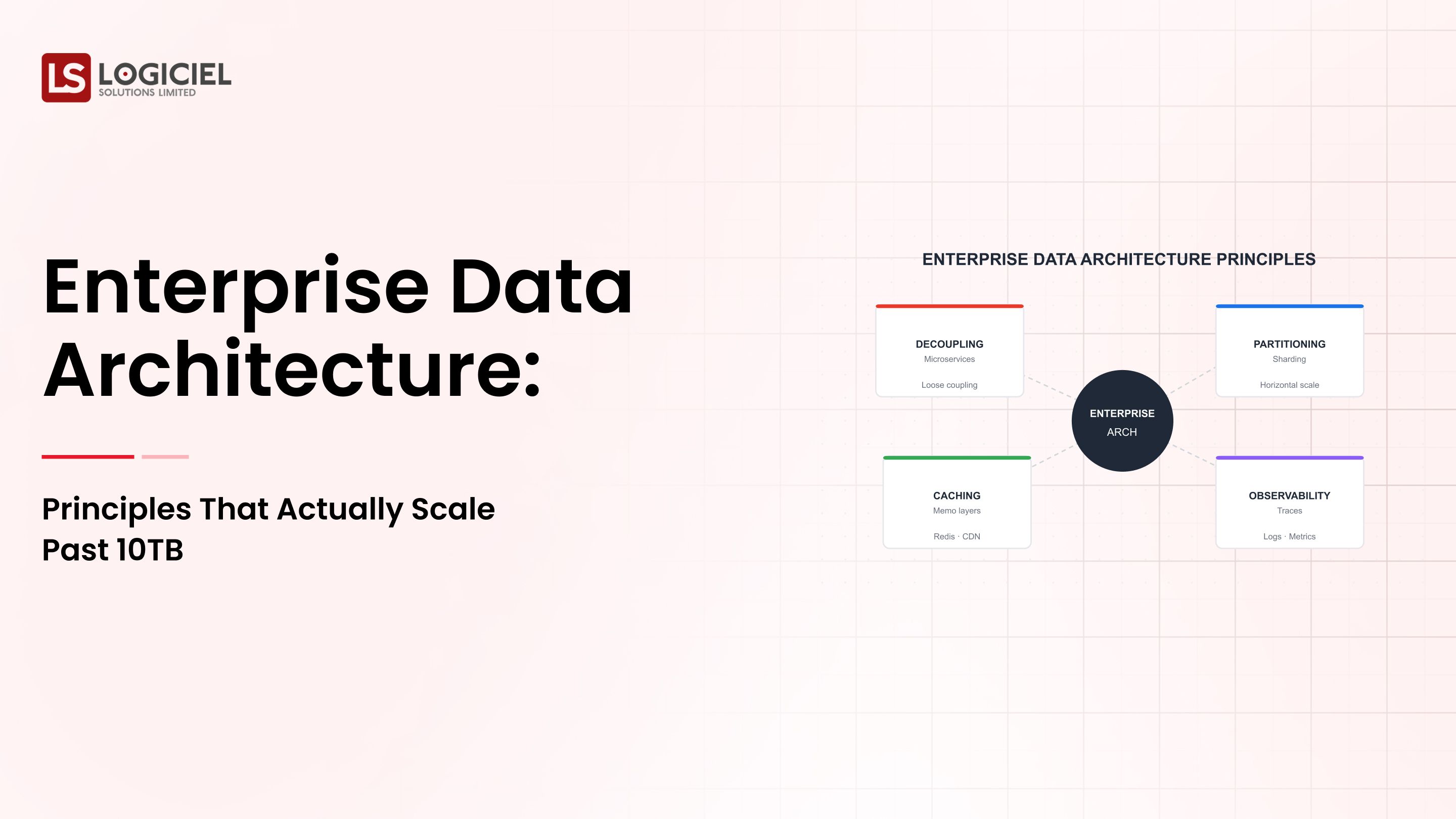

1. Create Your Design Principles

Your data architecture should be:

- Designed to be resilient

- Observable across every layer of the architecture

- Modular and scalable

2. Be Deliberate in Component Selection

Avoid defaulting to the tools you have always used.

Instead, evaluate potential tools based upon:

- Reliability

- Integration

- Operational Overhead

3. Build Observability into the Blueprint First

Your observability layer should include:

- Data Freshness Tracking

- Pipeline Health Monitoring

- Error Detection

Industry benchmarks suggest that teams with good observability reduce their incident resolution time by more than 50%.
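Data freshness tracking is often the simplest of these signals to start with. The sketch below assumes you can read a last-updated timestamp for each dataset and have agreed a maximum age for it; `stale_datasets` and the dataset names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def stale_datasets(last_updated, max_age, now=None):
    """Return {dataset: age} for datasets older than their allowed age."""
    now = now or datetime.now(timezone.utc)
    stale = {}
    for name, updated_at in last_updated.items():
        age = now - updated_at
        if age > max_age[name]:
            stale[name] = age
    return stale


# Hypothetical snapshot taken at noon UTC.
now = datetime(2026, 1, 10, 12, 0, tzinfo=timezone.utc)
last_updated = {
    "orders": now - timedelta(minutes=20),
    "inventory": now - timedelta(hours=7),
}
max_age = {"orders": timedelta(hours=1), "inventory": timedelta(hours=6)}

print(stale_datasets(last_updated, max_age, now=now))
```

Passing `now` explicitly keeps the check testable; in production it would default to the current time and feed an alert rather than a print statement.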

4. Build in Recovery

Build into your architecture:

- Failover

- Backup Pipelines

- Retry Mechanisms
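Of the three, retry mechanisms are the easiest to illustrate. This is a minimal sketch of retrying with exponential backoff, assuming the task is a plain callable; `run_with_retries` and `flaky_load` are hypothetical names, and most orchestrators provide an equivalent feature that should be preferred where available.

```python
import time


def run_with_retries(task, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Run `task`, retrying with exponential backoff; re-raise after the last attempt."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:  # in practice, catch only errors known to be transient
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))  # waits 1s, 2s, 4s, ...


# Hypothetical task that fails twice before succeeding.
calls = {"count": 0}


def flaky_load():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("transient upstream error")
    return "loaded"


delays = []  # record the waits instead of actually sleeping
result = run_with_retries(flaky_load, sleep=delays.append)
print(result, delays)
```

Retries only help with transient faults. A task that fails on every attempt still raises, which is the point at which failover or a backup pipeline has to take over.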

5. Document Your Assumptions

Document as you design or develop:

- Design decisions made

- Constraints

- Trade-offs

Revisit these quarterly.

Phase 3: Build, Test, and Release Incrementally

Avoid big-bang releases.

Select one domain at a time, for example revenue or customer analytics.

Validate your strategy in each chosen domain before moving on to the next.

- Run the new pipeline in parallel with each legacy system during development, testing, and beta, then cut over once the outputs match. This guards against data loss and keeps rollback easy.

- Automate testing of the new system with schema, data quality, and transformation tests.

- Set up monitoring for latency, errors, and data freshness.

- Once the system is stable, scale gradually into additional domains, then standardize the designs.

Incremental releases reduce risk and improve adoption.
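The parallel-run step comes down to one question: did the legacy and new pipelines produce the same data? The sketch below assumes both outputs fit in memory as lists of row tuples; `fingerprint` and `safe_to_cut_over` are hypothetical helpers, and at scale the same comparison would usually be pushed down into the warehouse.

```python
import hashlib


def fingerprint(rows):
    """Order-independent SHA-256 digest of a list of row tuples."""
    digest = hashlib.sha256()
    for line in sorted(repr(row) for row in rows):
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")  # separator so adjacent rows cannot blur together
    return digest.hexdigest()


def safe_to_cut_over(legacy_rows, new_rows):
    """True only if both pipelines produced the same set of rows."""
    return (
        len(legacy_rows) == len(new_rows)
        and fingerprint(legacy_rows) == fingerprint(new_rows)
    )


# Hypothetical outputs: same rows in a different order, then one row missing.
legacy = [(1, "alice", 120.0), (2, "bob", 75.5)]
new_same = [(2, "bob", 75.5), (1, "alice", 120.0)]
new_missing = [(1, "alice", 120.0)]

print(safe_to_cut_over(legacy, new_same))
print(safe_to_cut_over(legacy, new_missing))
```

Keeping the legacy pipeline running until this check passes consistently is what makes rollback cheap.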

Phase 4: Measure and Improve

Implementing the system is only the beginning; you must also measure how well it performs.

Set your service level objectives (SLOs), for example:

- 99.9% uptime

- Under 5 minutes of latency

- Under 0.1% error rate
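Objectives like these are only useful if they are checked automatically. Here is a minimal sketch, assuming an uptime target of 99.9% alongside the 5 minute latency and 0.1% error rate targets; `SLO_TARGETS`, `slo_breaches`, and the measured values are hypothetical.

```python
# Example targets; the 99.9% uptime figure is an assumption for illustration.
SLO_TARGETS = {
    "uptime_pct": 99.9,       # must be at or above
    "latency_minutes": 5.0,   # must stay below
    "error_rate_pct": 0.1,    # must stay below
}


def slo_breaches(measured, targets=SLO_TARGETS):
    """Return the names of the objectives that the measurements violate."""
    breaches = []
    if measured["uptime_pct"] < targets["uptime_pct"]:
        breaches.append("uptime")
    if measured["latency_minutes"] >= targets["latency_minutes"]:
        breaches.append("latency")
    if measured["error_rate_pct"] >= targets["error_rate_pct"]:
        breaches.append("error_rate")
    return breaches


# Hypothetical measurements for one month.
last_month = {"uptime_pct": 99.95, "latency_minutes": 7.2, "error_rate_pct": 0.04}
print(slo_breaches(last_month))
```

A list of breached objectives is a natural input for both the stakeholder dashboard and the monthly retrospective.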

Establish a dashboard that tracks the metrics your stakeholders care about:

- Pipeline health

- Data freshness

- Incident trends

When in doubt, make this data easy for your stakeholders to see.

Hold monthly retrospectives that cover what failed, the root cause, and preventive action items.

Measure business impact, such as:

- Reduced downtime

- Faster decision-making

Intelligent systems (such as Logiciel's software) provide observability, run automated reliability checks, and highlight action items when failures occur, so that recovery happens quickly.

Building metrics and measures creates a continual improvement process that will keep your data infrastructure safe and secure.

Planning for failure is no longer optional; it has become a requirement.

Takeaway:

- Plan for failure as an inevitability.

- Build your system to recover quickly from any failure.

- Provide visibility into the recovery process and define who is responsible for it.

Skipping these steps means every failure you experience will take longer to recover from.

Success will come from using incremental implementations; do not try to migrate an entire database to your new system in one shot.

Building secure and reliable data infrastructure is a challenge, but doing it right provides:

- Reliable data.

- Faster innovation.

- Confidence across your organization that you can recover quickly from system failures.

Call to Action:

Have you had a lot of incidents? If so, it's time to revamp your strategy.

Read these two resources:

- Stop Data Infrastructure From Breaking - Why It Keeps Happening and How To Fix It

- Evaluating Data Infrastructure Providers - 40 Questions to Ask

If you would like assistance, we can provide a free Infrastructure Resiliency Audit.

At logicielsolutions.com we build resilient, AI-first data infrastructure that lets engineering teams:

- Identify failures quickly.

- Recover from failures automatically.

- Scale with confidence.

Frequently Asked Questions

What is Data Infrastructure Security?

Data infrastructure security involves protecting your data infrastructure from failure, being able to recover lost data in an acceptable time, and providing reliable access to your data.

Why is Disaster Recovery Important?

Because failure is inevitable. A disaster recovery plan limits how much downtime and data loss you are exposed to when it happens.

What are RTO & RPO?

RTO (Recovery Time Objective) is the maximum acceptable time to restore your systems and data to a working state after a disaster event.

RPO (Recovery Point Objective) is the maximum amount of data, measured in time, that can be lost in a full system failure.

How Can My Team Improve the Reliability of their Data?

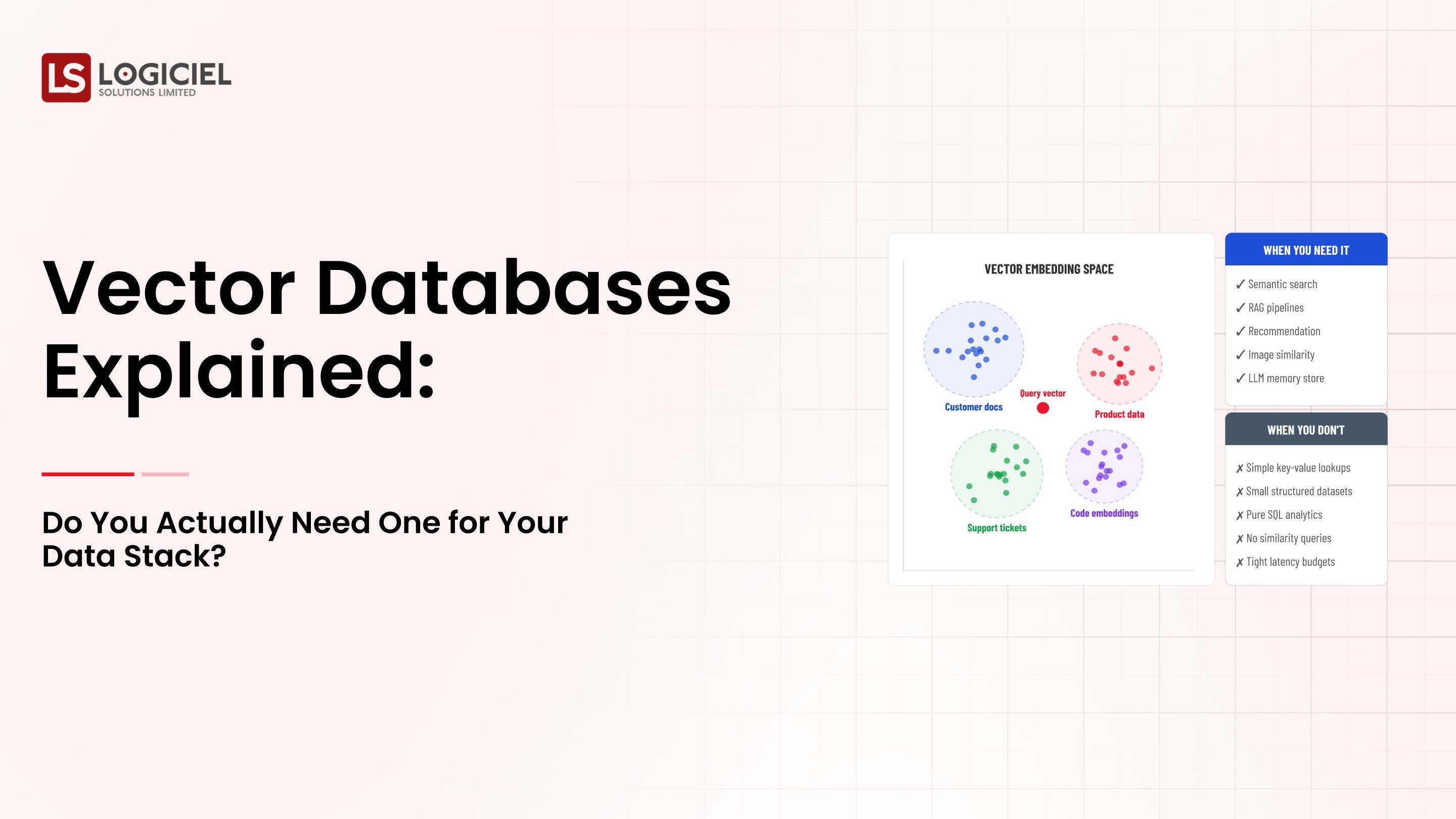

Implement observability, create data contracts, automate testing, assign an owner to each dataset, give each owner a consolidated view of everything related to that dataset, and enforce defined processes so that data quality metrics are met.

Where Should My Team Get Started?

Assess your existing infrastructure and identify gaps; improve your data reliability incrementally.