A critical dashboard is showing incorrect revenue numbers. The pipeline is marked as successful, but the data is wrong. Your team rushes to inspect the transformations, then checks the sources, and then begins the slow work of rebuilding trust with the people who depend on those numbers.

This is more than a pipeline failure. It is a failure in how the data warehouse itself was conceived.

A modern data warehouse is more than a storage mechanism; it is the foundation on which you build analytics, AI, and decision-making.

However, many teams still rely on outdated ideas about how data operates.

If you are a Data Engineering Lead or CTO responsible for building or scaling your analytics infrastructure, this article will help you:

- Define what data warehouse concepts mean

- Understand the differences between a legacy and a modern data warehouse

- Design systems that can scale with your company

To do that, let’s start with the basics.

What Are Data Warehouse Concepts? The Basic Definition

At a high level, data warehouse concepts describe how we collect, store, transform, and query data to support analytics.

To compare:

If your operational database is the real-time transaction processing system, the data warehouse is the reporting system that turns raw transactional data into useful insight.

Why Are Data Warehouses Necessary?

Because operational systems are not built to handle:

- Large analytical queries

- Historical context

- Complex aggregations

How Data Warehouses Resolve These Issues

- They structure data to enable analysis

- They separate analytical workloads from transactional systems

- They optimize data for fast reads

Core Components of a Data Warehouse

- Ingestion: collects data from different sources

- Storage: holds structured analytical data

- Transformation: cleans and models the data

- Querying: serves analytics and reporting

Modern Data Warehouse Tools

- Snowflake

- Google BigQuery

- Amazon Redshift

These platforms all show how the analytical database has evolved.

Other core problems they solve

- Enabling high-speed analytics

- Providing historical analysis

- Enabling scalable reporting

In summary: Data warehouse concepts convert raw data into information that can be used for analysis.

Importance of the Data Warehouse Concept in 2026

The role of the data warehouse has evolved significantly.

1. AI requires clean, structured data, a large body of historical data, and a consistent schema to hold it.

Without a strong warehouse design, a model may produce false results and poor insights.

2. A growing number of organizations process billions of events, including real-time streams from multiple sources, which cannot be processed using traditional database designs.

3. Businesses make data-driven decisions, and measure their success, using real-time dashboards, predictive analytics, and automated reporting.

4. Cost optimization is critical.

A poorly designed warehouse leads to expensive queries, redundant storage of the same data, and inefficient use of compute resources.

Traditional vs. Modern Data Warehouse Concepts

- Batch-only processing vs. real-time and batch processing

- Rigid schemas vs. flexible modeling that fits the data

- Manual optimization vs. automatic scaling

- Limited analytics vs. advanced analytics

In summary: Data warehouse concepts are at the core of a modern data-driven organization.

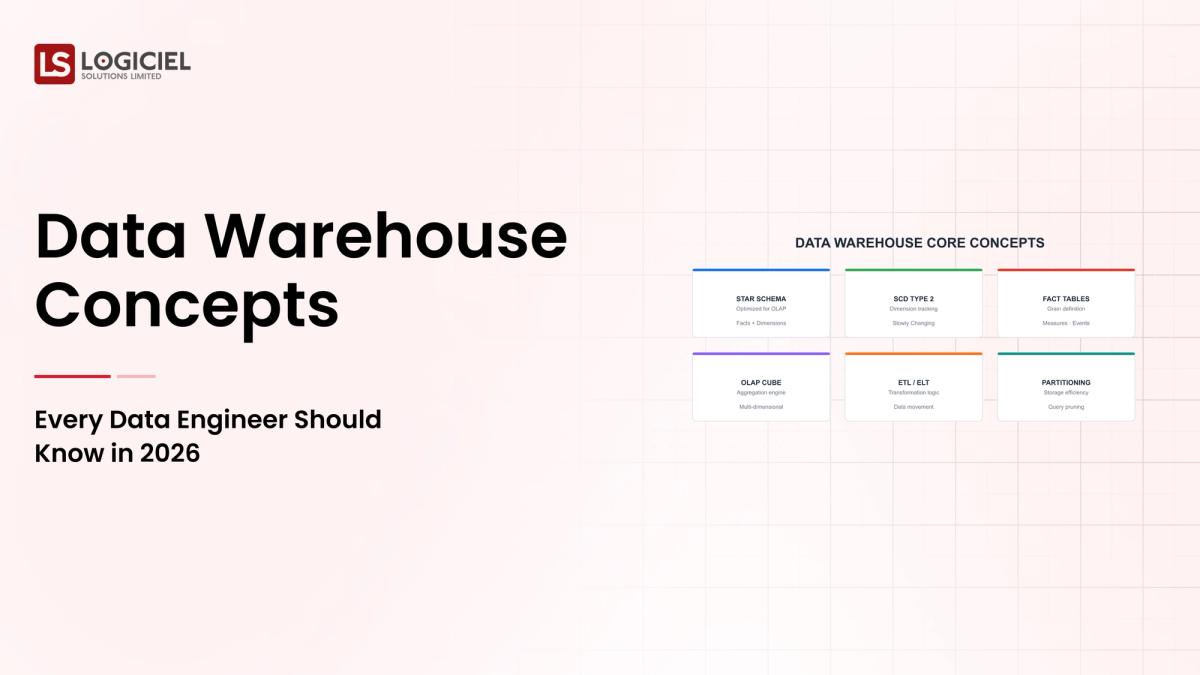

Core Components of Data Warehouse Concepts in Detail: What Are You Designing?

Let’s look at each layer.

1. Ingestion Layer

Data is collected from application databases, APIs, and event streams.

Responsibilities of the ingestion layer:

- Data extraction

- Data validation

- Formatting the data into its initial structure
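The validation and formatting responsibilities above can be sketched in a few lines of Python. This is only an illustration under assumptions: the field names (`event_id`, `user_id`, `occurred_at`) and the `validate_and_format` helper are hypothetical and do not come from any specific ingestion tool.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class ValidationResult:
    valid: list
    rejected: list


# Hypothetical required fields for an incoming event record.
REQUIRED_FIELDS = ("event_id", "user_id", "occurred_at")


def validate_and_format(raw_records):
    """Split raw records into formatted valid rows and rejected rows."""
    valid, rejected = [], []
    for record in raw_records:
        # Validation: every required field must be present and non-empty.
        missing = [f for f in REQUIRED_FIELDS if not record.get(f)]
        if missing:
            rejected.append({"record": record, "reason": f"missing: {missing}"})
            continue
        # Validation: the timestamp must parse as ISO 8601.
        try:
            occurred_at = datetime.fromisoformat(record["occurred_at"])
        except (TypeError, ValueError):
            rejected.append({"record": record, "reason": "bad timestamp"})
            continue
        # Formatting: coerce the row into its initial, consistent structure.
        valid.append({
            "event_id": str(record["event_id"]),
            "user_id": str(record["user_id"]),
            "occurred_at": occurred_at,
        })
    return ValidationResult(valid=valid, rejected=rejected)
```

Keeping the rejected rows, along with a reason, rather than silently dropping them makes it easier to trace bad data back to its source later.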

2. Storage Layer

Most modern data warehouses use:

- Columnar storage

- Distributed systems

Benefits of columnar storage and distributed systems:

- Faster queries

- Better data compression
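To see why a columnar layout helps with both query speed and compression, consider this toy sketch. It is a simplified illustration with made-up data, not how any particular warehouse stores data internally; the helper names `to_columns` and `run_length_encode` are hypothetical.

```python
# Row-oriented layout: each record keeps all of its fields together.
rows = [
    {"order_id": 1, "country": "US", "amount": 120.0},
    {"order_id": 2, "country": "US", "amount": 80.0},
    {"order_id": 3, "country": "US", "amount": 50.0},
    {"order_id": 4, "country": "DE", "amount": 200.0},
    {"order_id": 5, "country": "DE", "amount": 30.0},
]


def to_columns(rows):
    """Pivot a list of row dicts into one list of values per column."""
    columns = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return columns


def run_length_encode(values):
    """Compress consecutive repeats into [value, count] pairs."""
    encoded = []
    for value in values:
        if encoded and encoded[-1][0] == value:
            encoded[-1][1] += 1
        else:
            encoded.append([value, 1])
    return encoded


columns = to_columns(rows)

# An aggregate only needs to scan the one column it uses.
total_amount = sum(columns["amount"])

# Similar values sit next to each other, so they compress well.
compressed_country = run_length_encode(columns["country"])
```

Here `total_amount` is computed without touching `order_id` or `country`, and the five country values collapse into two run-length pairs.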

3. Processing Layer

Data is transformed using:

- SQL

- An ELT process

- dbt

4. Data Modeling Layer

Data Models include:

- Star Schema

- Snowflake Schema

- Denormalized Tables
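As a rough illustration of a star schema, the sketch below builds one fact table and two dimension tables in an in-memory SQLite database, standing in for a real warehouse. The table and column names (`fact_revenue`, `dim_customer`, `dim_date`) and the sample rows are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# One central fact table references the surrounding dimension tables.
conn.executescript("""
CREATE TABLE dim_customer (customer_id INTEGER PRIMARY KEY, plan TEXT);
CREATE TABLE dim_date (date_id INTEGER PRIMARY KEY, month TEXT);
CREATE TABLE fact_revenue (
    customer_id INTEGER REFERENCES dim_customer(customer_id),
    date_id INTEGER REFERENCES dim_date(date_id),
    amount REAL
);
INSERT INTO dim_customer VALUES (1, 'pro'), (2, 'free');
INSERT INTO dim_date VALUES (20260101, '2026-01'), (20260201, '2026-02');
INSERT INTO fact_revenue VALUES
    (1, 20260101, 100.0), (1, 20260201, 100.0), (2, 20260101, 0.0);
""")

# Analytical queries join the fact table to dimensions and aggregate.
revenue_by_plan_month = conn.execute("""
    SELECT c.plan, d.month, SUM(f.amount)
    FROM fact_revenue f
    JOIN dim_customer c ON c.customer_id = f.customer_id
    JOIN dim_date d ON d.date_id = f.date_id
    GROUP BY c.plan, d.month
    ORDER BY c.plan, d.month
""").fetchall()
```

A snowflake schema would further normalize the dimensions (for example, splitting plan details into their own table), while a denormalized table would fold `plan` and `month` directly into the fact rows.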

5. Query Layer (OLAP)

The query layer is optimized for:

- Aggregates

- Complex Queries

- Analytical Workloads

How It All Works Together

Data is ingested into the warehouse, stored, transformed into models, and then queried by analysts.

Common Misconception

Misconception: A data warehouse is only for data storage.

Reality: A data warehouse provides an end-to-end system for analytics, from ingestion through querying.

Key Takeaway: Each layer contributes to the overall performance and reliability of your data infrastructure.

Real-World Data Warehouse Concepts in Action

Let’s look at how a data warehouse operates in a real-world example.

Consider a client with a SaaS analytics platform that needs to track:

- User behavior

- Transactions

- Feature usage

Step 1: Ingesting Data

Data is ingested from application databases (as records are created) and from event tracking (as events are emitted).

Step 2: Data Storage

Once ingested, the data is loaded into Snowflake or BigQuery.

Step 3: Transform Data

Transformation with dbt includes:

- Cleaning the data

- Creating data models

- Creating aggregates
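The cleaning and aggregation steps above might look like the following. In practice dbt models are written in SQL; this Python version is only a stand-in to show the logic, and the event fields (`event_id`, `user_id`, `feature`) and function names are hypothetical.

```python
from collections import defaultdict


def clean(events):
    """Drop events without a user, remove duplicates, normalize feature names."""
    seen = set()
    cleaned = []
    for event in events:
        if not event.get("user_id") or event["event_id"] in seen:
            continue
        seen.add(event["event_id"])
        cleaned.append({**event, "feature": event["feature"].strip().lower()})
    return cleaned


def feature_usage(cleaned_events):
    """Aggregate: number of distinct users per feature."""
    users_by_feature = defaultdict(set)
    for event in cleaned_events:
        users_by_feature[event["feature"]].add(event["user_id"])
    return {feature: len(users) for feature, users in users_by_feature.items()}
```

For example, two users triggering `" Export "` and `"export"` would both be counted under the single feature `export` after cleaning.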

Step 4: Querying Data

Teams use analytics tools and SQL to query this data.

Step 5: Produce Insights

The analytics team will produce the following types of outputs:

- Dashboards

- Reports

- Machine Learning Features

Where It Works Well

- Fast queries

- Reliable data

- Scalable systems

Where It Breaks Down

- Poor data models

- No data validation

- Inefficient queries

Key Takeaway: Real-world performance is dictated by the initial design decisions, regardless of how good the product is.

Lessons Learned

i) Overengineering too early

Creating complex schemas too soon can cause:

- Slow development

- Increased upkeep and maintenance costs

ii) Ignoring observability

Without observability built into the workflows:

- Issues go undetected

- Debugging is done reactively

iii) Skipping documentation

Without documentation, teams misinterpret the data, which leads to more errors.

iv) Treating the warehouse as static

Data warehouses are dynamic. They must change to accommodate:

- New data sources

- Changing business requirements

Key Takeaway: Most failures are caused by gaps in process, not by the tools.

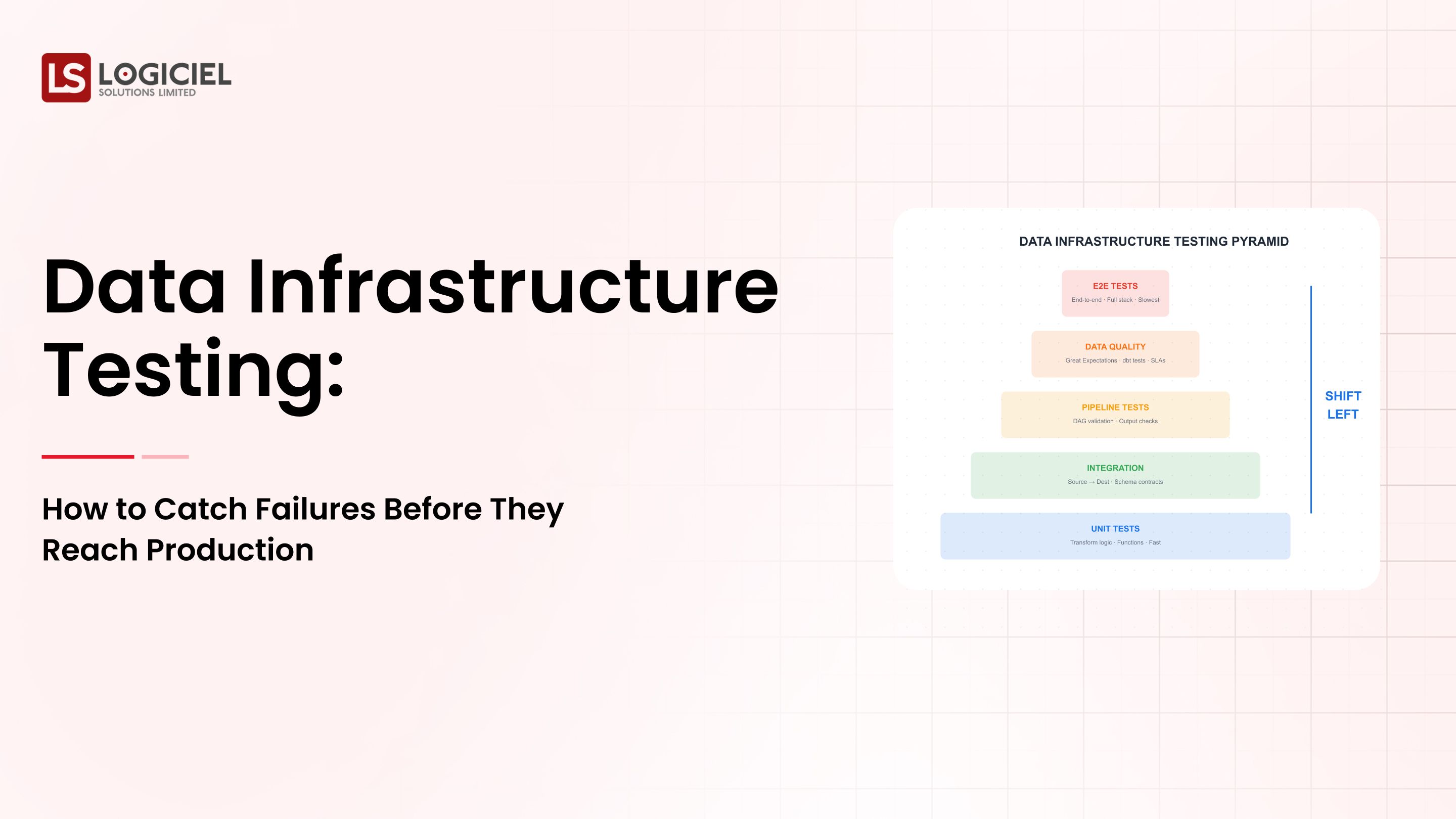

Data Warehouse Best Practices: What High-Performing Teams Do Differently

1. Automate pipelines, including:

- Validation

- Testing

- Deployment

2. Use ELT instead of ETL.

Load the data first and transform it when needed, which gives you more flexibility.
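A minimal sketch of the load-then-transform order, using an in-memory SQLite database as a stand-in for the warehouse. The `raw_orders` and `orders_clean` tables and their columns are assumptions made for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Load: land the source rows as-is, untyped and uncleaned.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [("1", "19.99", " PAID "), ("2", "5.00", "refunded"), ("3", "42.50", "paid")],
)

# Transform: build a typed, cleaned model inside the warehouse with SQL.
conn.execute("""
    CREATE TABLE orders_clean AS
    SELECT CAST(order_id AS INTEGER) AS order_id,
           CAST(amount AS REAL) AS amount,
           LOWER(TRIM(status)) AS status
    FROM raw_orders
""")

paid_total = conn.execute(
    "SELECT ROUND(SUM(amount), 2) FROM orders_clean WHERE status = 'paid'"
).fetchone()[0]
raw_row_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
```

Because the raw table is kept untouched, the transformation can be changed and rerun later without re-extracting anything from the source systems.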

3. Optimize queries.

Use partitioning, clustering, and indexing where appropriate.

4. Define Service Level Agreements.

Monitor and track the performance of the service, including query latency, data freshness (when the data was last loaded), and reliability (how often the service experiences an outage).
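A data freshness check can be as simple as comparing the last load time against an agreed maximum age. The sketch below assumes the last load timestamp is already available from pipeline metadata; the `check_freshness` function and its parameters are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def check_freshness(last_loaded_at, max_age, now=None):
    """Report how old the data is and whether it is within the freshness SLA."""
    now = now or datetime.now(timezone.utc)
    age = now - last_loaded_at
    return {"age": age, "within_sla": age <= max_age}


# Example: data loaded 3 hours ago, checked against a 2-hour freshness SLA.
checked_at = datetime(2026, 1, 5, 12, 0, tzinfo=timezone.utc)
status = check_freshness(
    last_loaded_at=datetime(2026, 1, 5, 9, 0, tzinfo=timezone.utc),
    max_age=timedelta(hours=2),
    now=checked_at,
)
```

A check like this catches the case where a pipeline reports success but has not delivered new data, so an alert can fire before a stale dashboard reaches stakeholders.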

5. Build AI-first engineering systems.

Leading teams have moved beyond manual optimization and are implementing systems that automatically optimize query execution, ingest data in real time, and improve the overall performance of their engineering solution.

This is where Logiciel adds value: teams move away from managing individual point solutions (different tools) and instead use intelligent data systems that scale efficiently.

Key Takeaway: High-performing teams focus on automation and system design.

Conclusion

The principles of data warehousing are fundamental to the success of any modern data system.

Key Takeaways:

- Data warehouse systems support an organization’s ability to scale its analytical and decision-making processes.

- Modern data systems demand a flexible, scalable design.

- The success of a data warehouse is determined by its architectural design, not simply by the tools used.

Building an effective data warehouse is a complex process that requires thoughtful design, continuous optimization, and alignment with business goals. When data warehouses are built correctly, they deliver:

- Faster insights

- Reliable analytics

- A scalable data framework

- Improved decisions

Call to Action

If your analytics system is becoming increasingly complex to manage, now is the time to evaluate your data warehouse architecture.

Learn More Here:

- How to Choose A Data Warehouse in 2026: Snowflake versus BigQuery versus Redshift

- Trade-offs Between Data Lakes and Data Warehouses

- How to Migrate From a Data Warehouse to a Data Lakehouse

At Logiciel Solutions, we help teams build AI-first data platforms that improve performance, reliability, and scalability. Our engineering strategy focuses on system-level optimization and long-term efficiency.

Explore how to modernize your data warehouse.

Frequently Asked Questions

What Are Data Warehouse Concepts?

Data warehouse concepts describe how data is collected, stored, and analyzed for reporting and analytics. They cover ingestion, storage, transformation, and querying.

What Is the Difference Between OLAP and OLTP?

OLTP handles transactional workloads, while OLAP is optimized for analytical queries. Data warehouses use OLAP systems to produce reports and insights.

Why Are Data Warehouses in the Cloud?

Cloud-based warehouses are scalable, flexible, and cost-efficient. They let teams scale compute and storage independently.

What is ELT in Data Warehousing?

ELT stands for Extract, Load, and Transform. Data is first loaded into the warehouse and then transformed into the schema or format needed for analysis. This gives teams a high degree of flexibility and scalability.

What is the Biggest Mistake in Designing a Data Warehouse?

The biggest mistake is over-engineering the initial design before understanding the actual data requirements. Design with simplicity and scalability in mind.