Three years ago, our data pipelines were running just fine - with failures being few & far between, smaller data sets and with downstream customers trusting the numbers they see.

Now? An erosion of trust behind the same underlying data pipeline.

40% of your team's sprint capacity is being spent on debugging data-related problems, rerunning your data pipelines and explaining how you arrived at the numbers/reports to your stakeholders. Dashboards are broken, you'll see ML models start to drift and there will be discrepancies between the numbers/reports produced. The worst thing is that most of these failures occur/will be discovered in production.

The answer? This isn't just a data pipeline issue; it is a Data Quality Problem.

If you are a staff/principal engineer of a growing data system and your job is to provide the systems necessary to scale your data systems, this guide will help you with.

- Why your current Data Systems are Facing Increased Levels of Failure;

- How to Construct Effective Data Quality Testing Frameworks; and

- How to Produce Reliable, Production Ready Data Infrastructure

Let's start with the understanding that the data quality of the current date and time is more complicated than you think.

Agent-to-Agent Future Report

Understand how autonomous AI agents are reshaping engineering and DevOps workflows.

Why Most Organizations Struggle to Implement Effective Data Quality

Most organizations assume that data quality is simply a tooling problem - however, it isn't.

They are actually struggling with Systemic Design Problems and Ownership Problems....

1. No Clear Ownership of Data

At many organizations,

- Data Producers Do Not Own Any Downstream Effect Of Their Data

- Data Consumers Assume Data That Is Correct

- No Team Owns The Overall End-to-End Quality Of The DataMaking Decisions Ad-Hoc

2. A great deal of the time teams:

- Add validation rules after an issue arises

- Fix issues after they occur

- Do not take the time to document processes, systems or procedures

Over time this results in systems that are more susceptible to failure.

3. The Complexity of Data Has Grown

Modern systems contain:

- Batch-based and Streaming pipelines

- Multiple Layers of storage

- Real Time analytics

Each of the above systems introduces additional failure point(s).

4. The Compounding Effect of Technical Debt

Small Samples of Technical Debt; such as:

- No validation

- Hard coding

- Ignoring Schema Evolution

Will eventually result in failure across your entire system.

What Success Will Look Like

A principal or staff engineer can equate strong data quality with:

- Detection of Failure prior to it occurring in production

- Clearly defined data contracts

- Systems that are Observable and Testable

In conclusion, data quality is not a feature; it is a Capability of a System.

Prerequisite(s): What Needs to Be in Place Prior To Deployment

The foundation of any data quality framework requires the following:

1. Clear Ownership Model

Defining i.e.

- Who owns each dataset

- Who is responsible for validating each dataset

- Who is responsible for responding to any issues that occur

Unless there is clear ownership of datasets quality cannot be ensured.

2. Baseline Infrastructure

Requires the following;

- Stable Pipelines

- Orchestration Systems e.g. Airflow / Dagster

- Centralized storage

It is not possible to test the quality of data with an unstable pipeline.

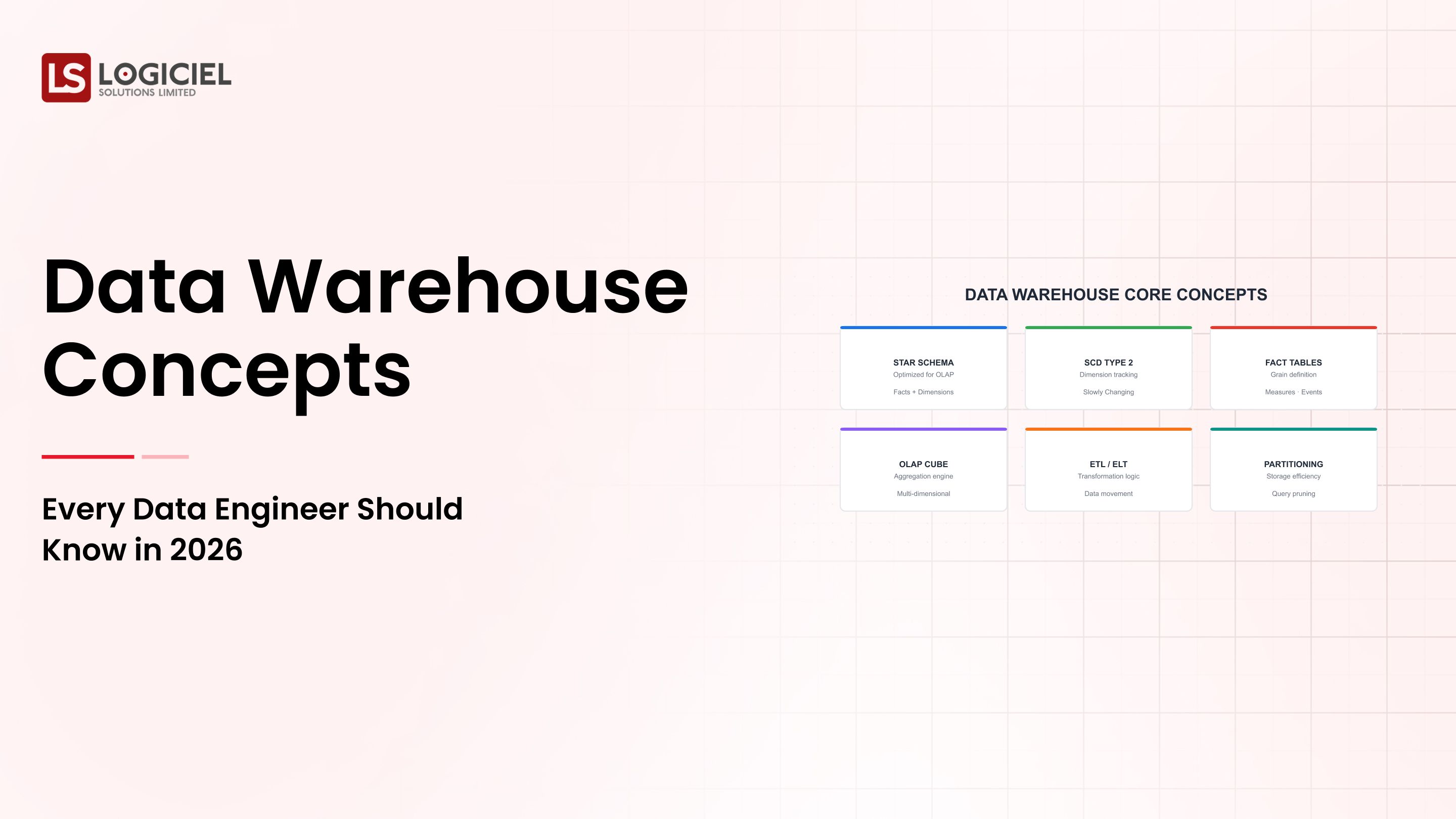

3. Data Contracts

Setting expectations for;

- Schema

- Type of Data

- Freshness of Data

These set the standards for both Producers and Consumers of the Data.

4. Defined Success Metrics

To include;

- Data Accuracy

- Pipeline Reliability

- Time to Detect

5. Stakeholder Alignment

To ensure;

- Business agrees to limitations of Data

- Engineering agrees to priority of Items Required

In summary, the first step to achieving Data Quality is through Aligning your Stakeholders first before you have any tools.

Phase 1: Assess Your Current State

You Must Establish Clearly Defined Visibility Prior to Improving Upon Your Data Quality. Conduct an Audit of Your Pipelines

Identify:

The number of pipeline systems;

- What dependencies exist;

- The frequency with which each pipeline fails.

Evaluate Current Testing

Determine:

- What exists for validation;

- What is missing from validation;

- How effective the tests are.

Map the Data Flows

Document:

- What the source systems are;

- What the transform processes look like;

- What the outputs are for the various systems.

Even a simple diagram can help with this step.

Identify Key Gaps

Some examples of gaps are:

- Missing validation for data;

- No monitoring;

- No defined ownership of data.

Output - a prioritised roadmap.

Organize into:

Quick wins (e.g., adding basic validation);

Long-term improvements (e.g., redesigning the pipelines).

Key Point: You cannot fix what you cannot see.

Phase II - Design the Infrastructure

At this point, you are moving from the process of diagnosing the existing issues to the process of designing the potential solutions.

Define the Core Principles of Your System

Systems need to be built with the following principles in mind:

- Observability;

- Testability;

- Scalability.

Choose the Components of the System Deliberately

Don’t default to the tools you normally use.

When evaluating tools to use:

- Assess how scalable the tool is;

- Assess how well the tool will integrate with other tools you want to use;

- Assess team members’ expertise (if there are people on the team who know how to work with a specific tool, that may be beneficial enough to use).

Design with Observability as the Priority

Do not consider observability as an afterthought - design the solution with observability at the forefront of the decisions made.

You should factor in:

- Logging;

- Metrics;

- Alerts.

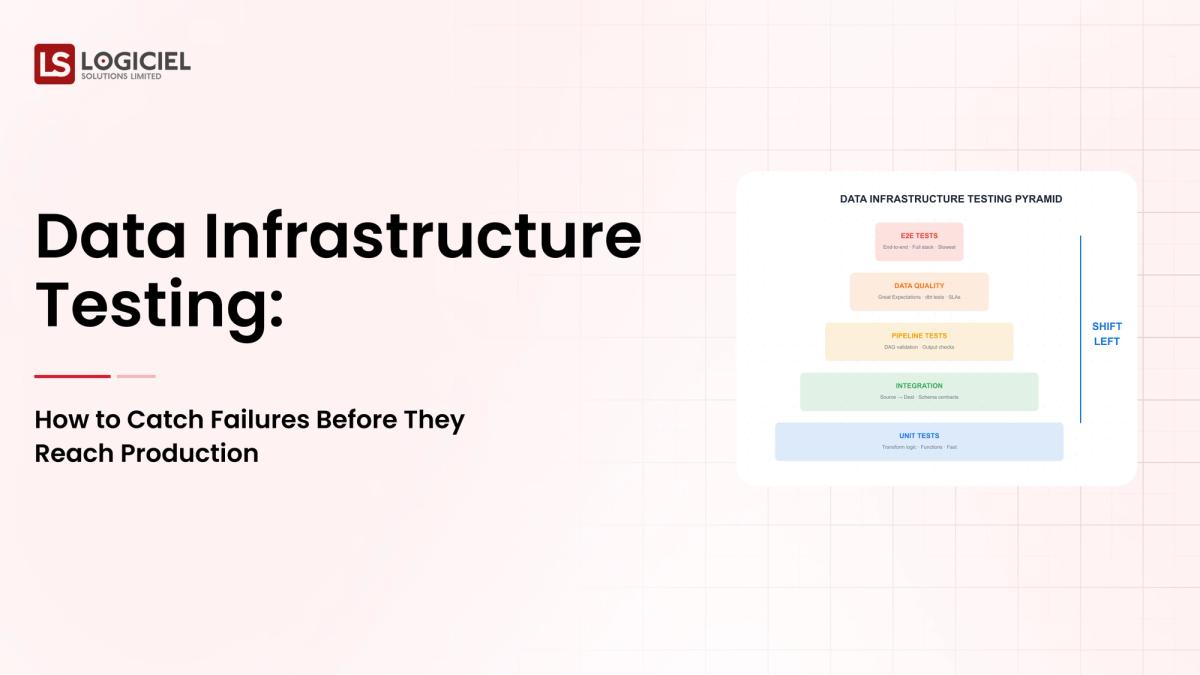

Implement Layered Testing

Multiple types of testing should exist at multiple levels:

- Layered Examples

- Ingestion: Schema Validation

- Transformations: Business Logic Tests

- Outputs: Data Consistency Checks

Documenting Everything

Essentially, you should document:

- Data Definitions;

- Pipeline Logic;

- Testing Rules.

Key Point: A good design will prevent more problems than will be found in the data.

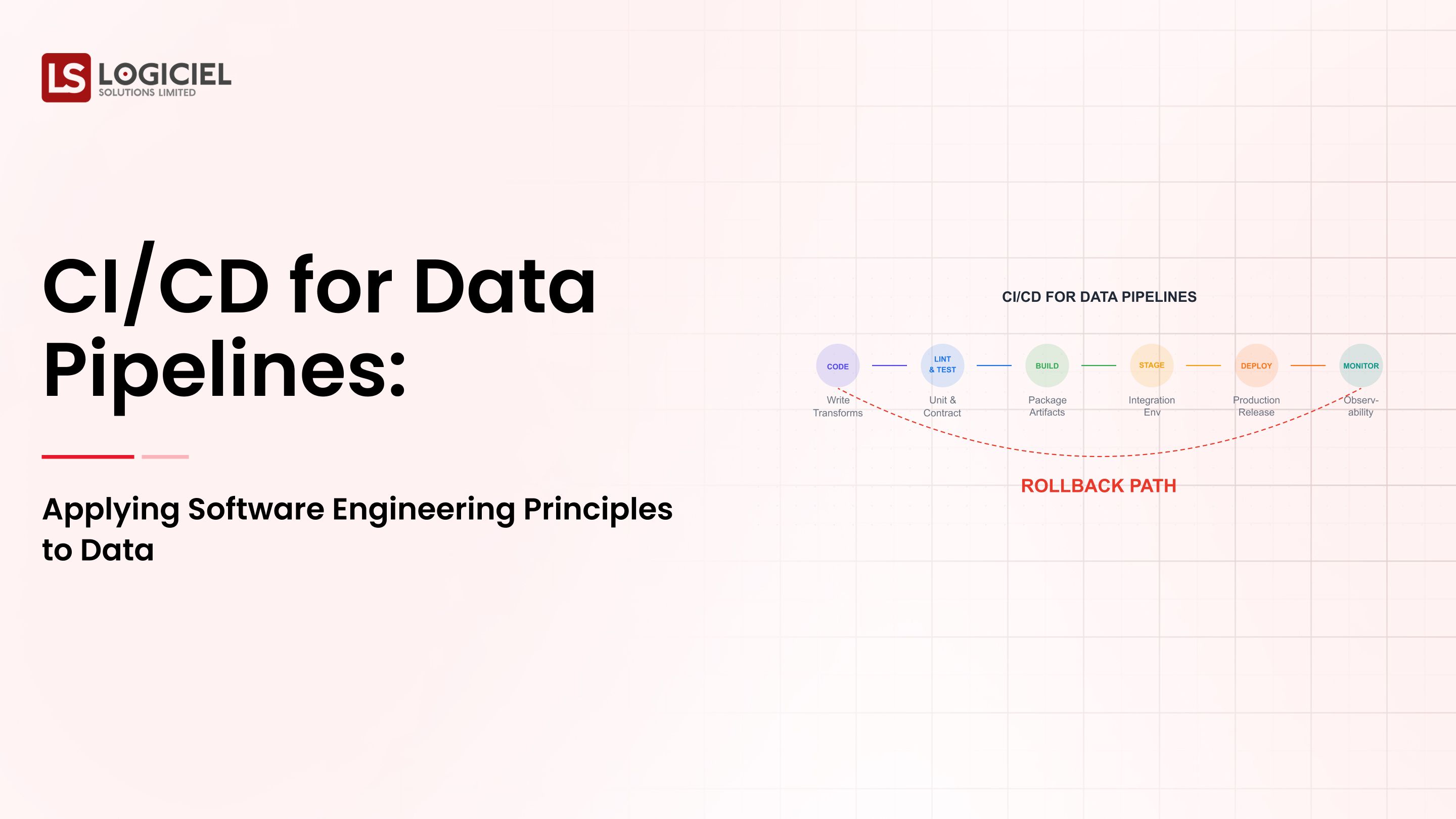

Phase III - Develop, Test and Implement Incrementally

Develop the new processes for implementation progressively.

Start Small

Choose one domain and one pipeline to build and test.

Use this as your proving ground.

Run Two Parallel Process

During the course of the transition, you will need to maintain your existing pipeline; route data through the new pipeline; and validate the new pipeline.

This process will minimize risk.Automated Testing

To be successful in automated testing, you’ll want to include three things: schema validation, data quality checks, and regression tests.

4. Instrument All Things in Your Environment

To understand how to instrument things in your environment, you can track latency, error rates, and data freshness to gain visibility into all aspects of your data.

5. Iterate Often

Consider that continuous iteration (refining testing, monitoring, and your processes) is key to achieving successful data quality.

Take Away: Building a quality data set will not be successful with a one-time approach, but rather through iteration of the quality data process.

Measurement, Improvement & Data Quality

Measurement is crucial if you want to achieve an improvement in your data quality across the board.

1. Develop Service Level Objectives (SLOs)

For instance:

- 99.9% of the time the pipeline will be up;

- The data will be updated within 5 minutes;

- The error rate will be less than 1% of the total number of transactions, on average.

2. Create Dashboard that is Accessible

To have success with your dashboards, the non-technical stakeholders should be able to read the metric at the time that they view the dashboard.

3. Schedule Regular Reviews

Schedule a review of the data quality processes regularly (i.e., monthly retrospective) and review any incident that caused an issue.

4. Develop Key Metrics

i.e., The average time it takes to detect a problem (MTTD), the average time it takes to correct a problem (MTTR), the rate of accuracy of the data being tracked.

5. Using System-Level Intelligence

Leading teams are moving beyond the manual monitoring of their data.

They use AI-driven systems for the following:

Identify the anomalies that exist in the system automatically, Predict future failures, and Optimize the pipeline performance.

This is where the combination of Logiciels’ methodology, validation, and observability will create an advantage for the teams.

Teams will begin tracking the quality of their data through proactive, rather than reactive, optimization of the quality within their systems.

Take Away: Measurement creates a data quality improvement cycle that converts it into a continuous process of improvement.

Final Thoughts

Data quality is a core component of any modern data system; it is not an additional component.

The following are the three key messages:

Data quality issues should be looked at as system-level issues – not just bugs

Testing will be built into every phase within the pipeline

Achieving success depends on ownership, observability, and iteration.

Building a successful data infrastructure will require coordination with the different development teams in the organization (this is very complex), and thus will require investment in the proper tools and processes to accomplish this.

But, the benefits are as follows:

Having reliable data systems will create faster decision making by the organization as a whole

Reducing the total cost of operation to the organization by having reliable data systems will produce more value for the organization. and build a trusted atmosphere across the organization.

Call to Action

If you are experiencing a lack of trust in your data systems, it’s time for you to determine how your data infrastructure handles your validation and monitoring.

Find more information here:

Data Quality at Scale: How Good is the Quality of Your Data for AI-First Engineering Teams

The Best Ways to prevent Data Infastructure from Breaking

What is the New Data Stack? A Guide for Engineering Teams

At Logiciel Solutions, we are helping organizations to build reliable AI-first data systems that will detect problems prior to the production environment.

Our methodology and validation will assist with observability and intelligent automation to increase data quality across all data.

To find out how to build a data system that can be trusted, click here.

RAG & Vector Database Guide

Build the quiet infrastructure behind smarter, self-learning systems. A CTO’s guide to modern data engineering.

Frequently Asked Questions

What is data quality?

The quality of data refers to the characteristics of accuracy, completeness, consistency, and reliability of data. The greater the quality of data, the greater the reliability of information that is produced from it.

Why is data quality difficult to maintain?

Due to the vast complexity of data systems, primarily because they always contain multiple pipelines and dependencies. Proper testing, monitoring and ownership are required to prevent problems from being propagated throughout the data system.

What is used to test for the quality of data?

Various tools can be used to test for the quality of data, such as Great Expectations, dbt tests or custom-developed validation frameworks. However, the success of data quality testing typically depends more on the process(es) used than on the tool(s) that are used.

What are data contracts?

Data contracts define what the expectations for data are, including the data structure and schema, and the quality of the data, between the data producer and the data consumer. Data Contracts are typically used to prevent breaking changes from occurring.

How do you measure success in data quality?

Success in data quality can be measured by metrics that provide a quantifiable measure of success (like accuracy, freshness, uptime, and incident response times). Becoming successful in data quality is dependent on how the outcome of your metrics are tracked over time.