Your phone starts vibrating at 2:07 AM: a serious error in your dashboard is affecting how revenue is calculated. The cause is a failure in your data pipeline, which the pipeline logs marked as "success" even though it delivered only partial results. By the time the data quality issues are fixed, your team has lost several hours re-running pipelines to validate that all of the data is correct, and your credibility has suffered as well.

The problem is not the tool you use to manage your data; it points to a more significant gap in DataOps.

As systems become more complex and interdependent, traditional methods of overseeing and managing data pipelines are breaking down. Most companies still rely on manual validation, disconnected tools, or reactive troubleshooting to verify the quality of their data before it is delivered to the organisation. The result is brittle systems that cannot scale with business requirements.

If you are one of those CTOs or Data Engineering Leads focused on velocity and reliability, this article will provide you with:

Clarification of the meaning of DataOps in practice

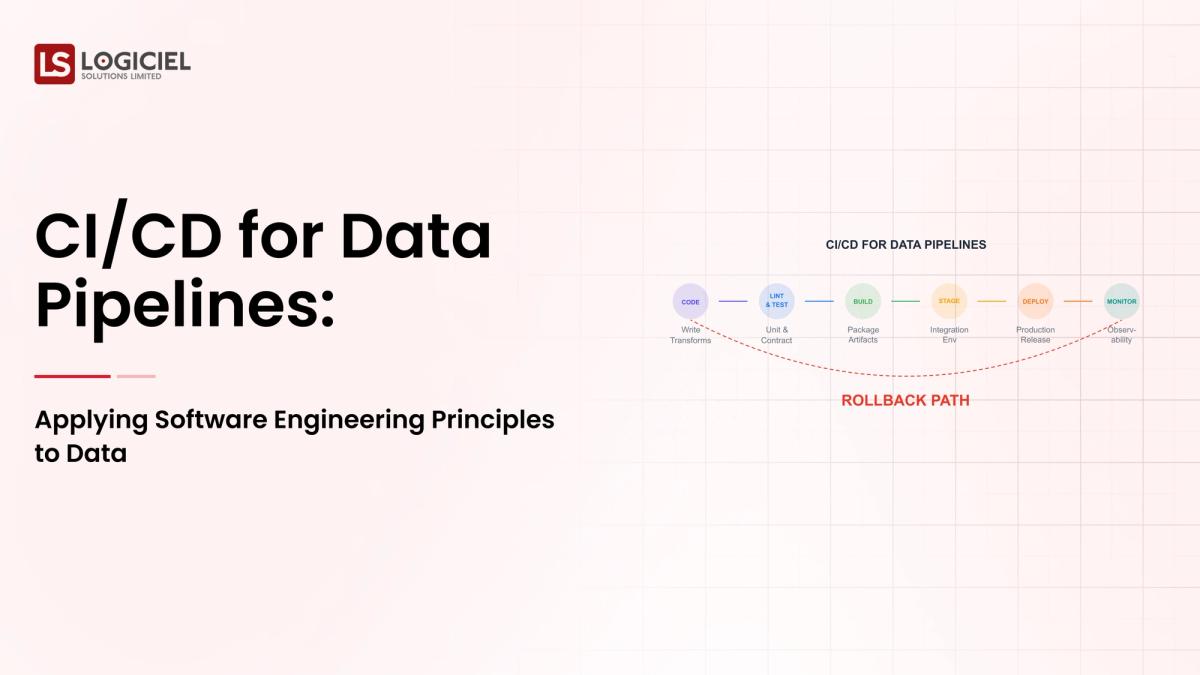

An understanding of how the principles of CI/CD may apply to your data pipelines and

The ability to create an environment where the reliability, observability, and scalability of your systems can be built.

Okay, let’s define DataOps in simple terms first.


What is DataOps?

DataOps is the practice of applying software engineering principles, such as CI/CD, automated testing, and monitoring, to the pipelines and procedures that deliver quality data to your customers.

To simplify this further:

Just as DevOps builds trust in software delivery to your end-users, DataOps builds trust in data delivery to your end-users.

DataOps exists to address the inefficiencies of the traditional data engineering process, which include but are not limited to:

- Manual pipeline deployments

- Lack of testing

- Inadequate observability

- Reactive resolution of issues

The traditional data engineering process can be described as having no structured process: there is too much chaos and no method to the madness.

The primary components of a DataOps process include:

| Component | Function |

|---|---|

| CI/CD Pipeline | Automate testing and deployment |

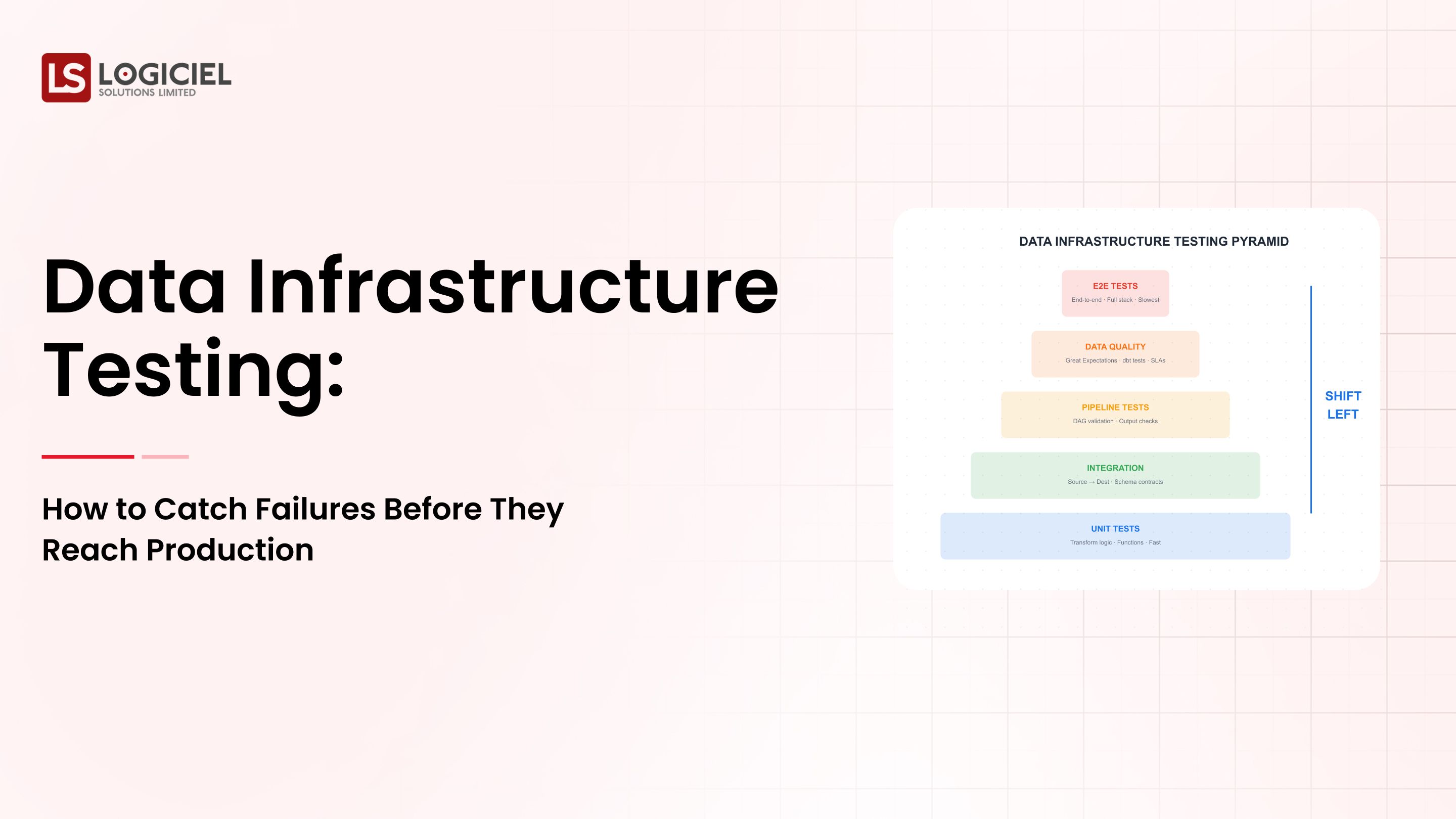

| Data Testing | Validate data quality at all stages |

| Orchestration | Manage dependencies and workflows |

| Observability | Monitor the health and performance of the pipeline |

For Engineers - A Simple Analogy:

A DataOps pipeline can be thought of as a production line with quality control.

- CI/CD = Automation of the assembly line

- Testing = Quality control

- Monitoring = Real-time view of the line

- Alerting = The alarm raised when something goes wrong

DataOps addresses:

- Silent failures

- Manual debug cycles

- Data quality uncertainty

The result: DataOps converts fragile pipelines into reliable, production-grade systems.

The Value of DataOps Will Only Grow With System Complexity in 2026:
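To make the idea of a silent failure concrete, here is a minimal sketch of a completeness check that flags a run which reports "success" but loads too few rows. The `RunResult` structure, its field names, and the 99% threshold are assumptions made for illustration; they do not come from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """Outcome of one pipeline run (hypothetical structure for illustration)."""
    status: str          # status the pipeline reported, e.g. "success"
    rows_expected: int   # rows the source says it sent
    rows_loaded: int     # rows that actually arrived in the target


def is_silent_failure(run: RunResult, min_ratio: float = 0.99) -> bool:
    """Return True when a run reports success but delivered too few rows."""
    if run.status != "success":
        return False  # a loud failure, not a silent one
    if run.rows_expected == 0:
        return False  # nothing was expected, so nothing is missing
    return run.rows_loaded / run.rows_expected < min_ratio


partial = RunResult(status="success", rows_expected=10_000, rows_loaded=6_200)
complete = RunResult(status="success", rows_expected=10_000, rows_loaded=10_000)

print(is_silent_failure(partial))   # True
print(is_silent_failure(complete))  # False
```

A check like this only helps if the expected row count comes from an independent source, such as the upstream system's own export log.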

1. AI Models depend upon good data.

If AI models use bad data, then they produce bad results.

Without DataOps:

- The training data will be of varying quality

- The models will produce bad outputs

- Debugging will be impossible

Current industry estimates indicate that 70% or more of data issues go undetected if validation systems are not in place.

2. Data is Increasing in Quantity and Speed

- Most systems are processing data in real time (via streaming, event-driven architectures, and real-time analytics).

- Manual processes cannot handle this amount of data in most cases.

3. Mistakes Are Becoming More Expensive

Some consequences of a broken pipeline include:

- Inaccurate information for business decisions.

- Impact to revenue.

- Risk of compliance issues.

DataOps Versus No DataOps

Without DataOps, the traditional data pipeline approach consists of:

- Manual deployments with no CI/CD.

- No alerts when pipelines fail or degrade.

- Reactive debugging after an issue has occurred, with no proactive monitoring.

- Data that may or may not have been validated by standard means before processing.

What Happens If You Ignore DataOps?

Engineering teams will feel burnt out and overworked, leading to higher operational costs and slower release cycles.

Conclusion: You'll Need DataOps If You Want To Scale Modern Data Systems.

Key Elements Of DataOps: What You're Really Constructing

DataOps means building a system, not just adopting DataOps tools. That system is made up of the following layers.

1. Ingestion Layer

This layer is responsible for ingesting/receiving incoming data via:

- APIs

- Databases

- Streaming

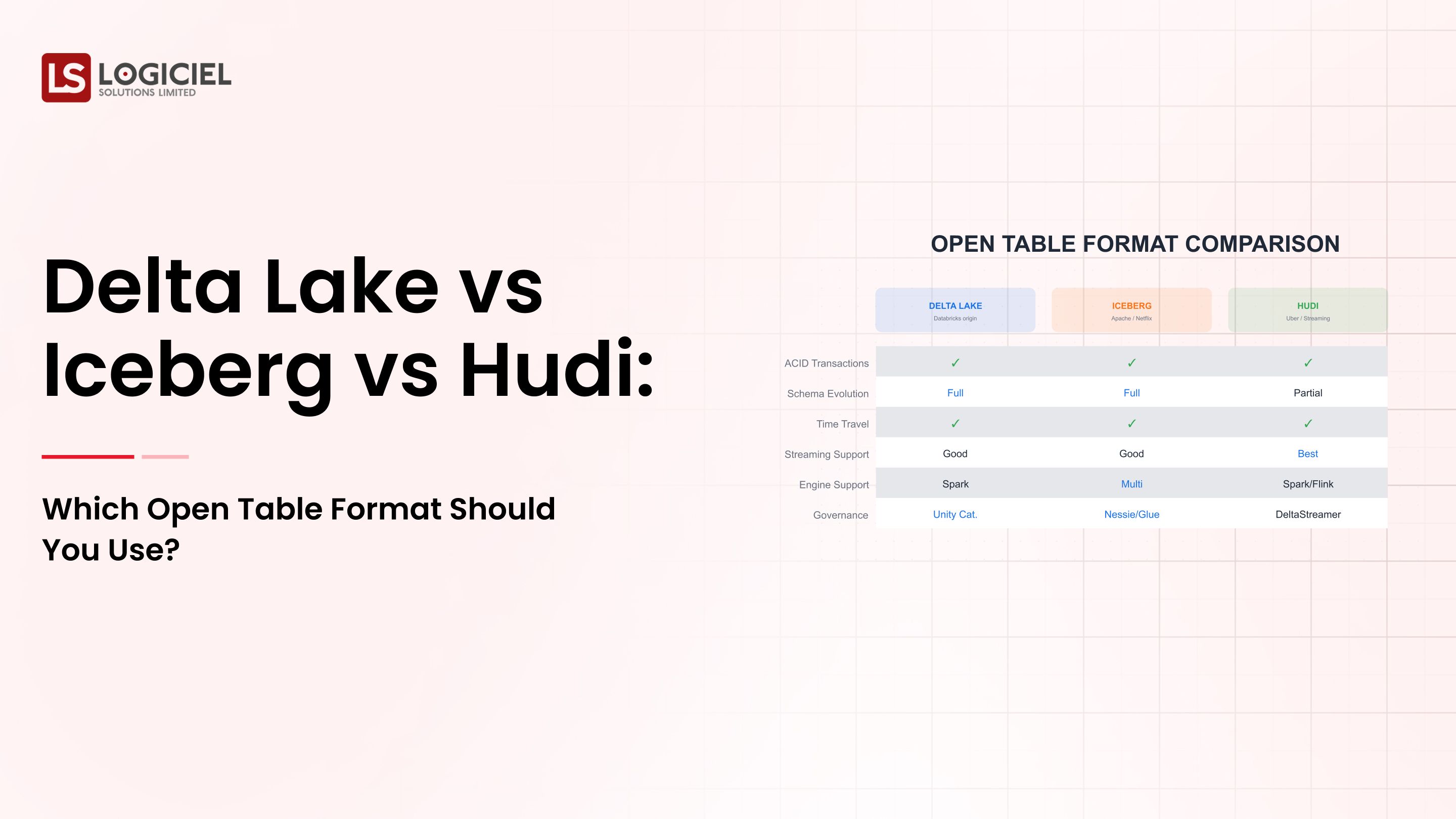

2. Storage Layer

This is where your ingested data is persisted:

- Data Lakes

- Data warehouses

- Lakehouse Model

3. Processing Layer

This layer is responsible for transforming data using:

- Spark

- dbt (SQL)

- Pipelines

4. CI/CD Layer

This layer is where DataOps differentiates itself from previous approaches to data engineering. It supports:

- Automated testing

- Version Control

- Deployment Pipelines

5. Orchestration Layer

This layer is responsible for managing workflows and executing pipeline tasks.

Examples of Orchestration tools are:

- Airflow

- Dagster
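The core job of an orchestrator, running each task only after the tasks it depends on, can be sketched with Python's standard-library `graphlib` module (Python 3.9+). The task names below are hypothetical, and this is only an illustration of dependency ordering; tools such as Airflow and Dagster also handle scheduling, retries, and much more.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it depends on (task names are hypothetical).
dependencies = {
    "ingest": set(),
    "validate": {"ingest"},
    "transform": {"validate"},
    "publish": {"transform"},
    "monitor": {"publish"},
}


def execution_order(graph: dict[str, set[str]]) -> list[str]:
    """Return an order in which every task runs after its dependencies."""
    # static_order() raises graphlib.CycleError if the graph contains a cycle.
    return list(TopologicalSorter(graph).static_order())


print(execution_order(dependencies))
# ['ingest', 'validate', 'transform', 'publish', 'monitor']
```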

6. Observability Layer

This layer is responsible for monitoring pipeline performance, validating data quality, ensuring system reliability, and tracking how well the data delivers expected outcomes.

The Order In Which Data Operations Occur:

- Ingestion of Data into the Pipeline

- Validation of Ingested Data

- Transformation of Ingested Data

- Delivery of Transformed Data into Production via CI/CD Delivery Pipeline

- Monitoring of Data Delivered Into Production

Common Misunderstandings About DataOps:

- DataOps does not mean implementing a tool.

- DataOps is not implemented on a single platform.

- DataOps cannot be reduced to a single methodology.

Conclusion: DataOps is a way of operating data systems, not a tool set to install.

DataOps In Action: Real World Demonstration

Let's work through a realistic scenario.

Marketing Analytics Pipeline

Data You Collect:

- Marketing campaign information

- User interaction information

- Conversion Information

Development

The data engineering team develops the transformation logic that converts the raw data into analytics-ready output.

Version Control

The source code is stored in a version control system, and changes are reviewed through pull requests.

CI Pipeline

Automated tests such as schema validation, data quality tests, and unit tests are executed as part of the Continuous Integration (CI) pipeline process.
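As a rough sketch of what such CI checks might look like, the example below pairs a unit test for a transformation with a basic data quality test. The `conversion_rate` function, the column names, and the quality rules are assumptions for illustration only; a real project would typically run tests like these through a test runner on every pull request.

```python
def conversion_rate(conversions: int, clicks: int) -> float:
    """Transformation under test: share of clicks that converted."""
    if clicks == 0:
        return 0.0  # avoid division by zero for campaigns with no traffic
    return conversions / clicks


def find_quality_issues(rows: list[dict]) -> list[str]:
    """Return a description of every row that breaks a basic quality rule."""
    issues = []
    for i, row in enumerate(rows):
        if row.get("campaign_id") is None:
            issues.append(f"row {i}: campaign_id is missing")
        if row.get("clicks", 0) < 0:
            issues.append(f"row {i}: clicks is negative")
    return issues


def test_conversion_rate():
    # Unit test: the transformation returns the expected values.
    assert conversion_rate(5, 100) == 0.05
    assert conversion_rate(0, 0) == 0.0


def test_quality_rules():
    # Data quality test: bad rows are reported, good rows are not.
    rows = [
        {"campaign_id": "spring", "clicks": 120},
        {"campaign_id": None, "clicks": -3},
    ]
    assert find_quality_issues(rows) == [
        "row 1: campaign_id is missing",
        "row 1: clicks is negative",
    ]


if __name__ == "__main__":
    test_conversion_rate()
    test_quality_rules()
    print("all checks passed")
```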

Deployment

Once the tests pass, the pipeline automatically deploys the source code into production without manual intervention. In addition, all environments are built consistently.

Monitoring

The systems have monitoring for:

- Data freshness

- Pipeline success rates

- Errors and exceptions
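Two of these signals, pipeline success rate and data freshness, are simple enough to sketch directly. The function names, the sample run statuses, and the six-hour limit below are illustrative assumptions, not values from any particular monitoring product.

```python
from datetime import datetime, timedelta, timezone


def success_rate(statuses: list[str]) -> float:
    """Fraction of runs that finished with status "success"."""
    if not statuses:
        return 0.0
    return sum(s == "success" for s in statuses) / len(statuses)


def is_stale(last_loaded_at: datetime, now: datetime, max_age: timedelta) -> bool:
    """True when the newest data is older than the allowed age."""
    return now - last_loaded_at > max_age


# Fixed timestamps keep the example reproducible.
now = datetime(2026, 1, 15, 9, 0, tzinfo=timezone.utc)
last_load = datetime(2026, 1, 15, 2, 0, tzinfo=timezone.utc)

print(success_rate(["success", "success", "failed", "success"]))  # 0.75
print(is_stale(last_load, now, max_age=timedelta(hours=6)))        # True
```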

Incident Management

If an incident occurs:

- Alerts are triggered immediately.

- Engineers can investigate with context.

What Makes High-Performing Teams Successful

High-performing teams release more often, with fewer failures and better data quality.

Where There Are Still Challenges

- Lack of test coverage

- Poor alerting configuration

- Lack of ownership or accountability

Key Takeaway: DataOps reduces risk through improved operational processes; however, having proper discipline is equally important.

Common Mistakes Teams Make That Impact DataOps

1. Over-engineering too early in the CI process.

Building complex systems and processes too early slows delivery and adds complexity, whereas simple solutions let teams deliver their work on time.

2. Not having any observability.

Without observability (monitoring), teams do not learn about failures until the damage is already done, and they must debug them reactively.

3. Not having explicit data contracts.

When teams do not have explicit data contracts with each other, pipelines break and teams fall out of alignment.
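One lightweight way to make a data contract explicit is to write down the expected fields and types and check records against them. The sketch below assumes a hypothetical conversions feed; the field names and the `contract_violations` helper are illustrative and not part of any standard.

```python
# A contract agreed between producer and consumer: field name -> expected type.
# Field names are hypothetical.
CONVERSIONS_CONTRACT = {
    "user_id": str,
    "campaign_id": str,
    "converted": bool,
    "revenue": float,
}


def contract_violations(record: dict, contract: dict[str, type]) -> list[str]:
    """List every way a record departs from the contract."""
    problems = []
    for field, expected in contract.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    # Fields the producer added without agreement are violations too.
    for field in sorted(record.keys() - contract.keys()):
        problems.append(f"unexpected field: {field}")
    return problems


record = {"user_id": "u1", "campaign_id": 42, "converted": True}
print(contract_violations(record, CONVERSIONS_CONTRACT))
# ['campaign_id: expected str, got int', 'missing field: revenue']
```

Running a check like this at the boundary between teams turns a silent schema change into an immediate, attributable error.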

4. Treating DataOps as a one-time set up.

Data is not static; it continues to change over time due to business requirements or other factors, and therefore teams need to continuously improve their operational processes.

Key Takeaway: Most failures in DataOps can be attributed to gaps in operational processes rather than to tool issues.

DataOps Best Practices: Things High-Performing Teams Do

1. Automate Everything

Every process related to testing, deployment and monitoring should be automated to achieve uniformity and repeatability.

2. Treat Data As Code

This means version controlling everything, utilizing pull requests for code review, and maintaining documentation/knowledge for future teams.

3. Build for Failure

The systems and processes need to have built-in retry mechanisms, alert systems, and backup pipelines.
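A retry mechanism is the simplest of these to illustrate. The sketch below retries a failing task with exponentially growing delays and re-raises the error once attempts run out, so that alerting can take over. The `run_with_retries` helper, its defaults, and the `flaky_load` task are hypothetical; orchestration tools usually provide their own retry settings.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(
    task: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run task, retrying failures with exponentially growing delays."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error so alerting can fire
            sleep(base_delay * 2 ** (attempt - 1))  # 1s, 2s, 4s, ...
    raise ValueError("max_attempts must be at least 1")


calls = {"count": 0}


def flaky_load() -> str:
    """Hypothetical task that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("warehouse unavailable")
    return "loaded"


# The sleep function is swapped out so the example finishes instantly.
print(run_with_retries(flaky_load, sleep=lambda seconds: None))  # loaded
```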

4. Define Service Level Agreements (SLAs) Early

Establish and measure what successful outcomes will be for:

- Data freshness

- Data reliability

- Data latency
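Writing the targets down as data makes them measurable. The following sketch compares measured values with an SLA and reports which dimensions were breached. The `PipelineSLA` class and the thresholds are illustrative assumptions; real targets depend on what the business needs from each dataset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineSLA:
    """Targets for one pipeline; the thresholds used below are illustrative."""
    max_staleness_minutes: float  # data freshness
    min_success_rate: float       # data reliability
    max_latency_minutes: float    # data latency, from source event to availability


def sla_breaches(
    sla: PipelineSLA,
    staleness_minutes: float,
    success_rate: float,
    latency_minutes: float,
) -> list[str]:
    """Return the names of the SLA dimensions that the measurements violate."""
    breaches = []
    if staleness_minutes > sla.max_staleness_minutes:
        breaches.append("freshness")
    if success_rate < sla.min_success_rate:
        breaches.append("reliability")
    if latency_minutes > sla.max_latency_minutes:
        breaches.append("latency")
    return breaches


revenue_sla = PipelineSLA(
    max_staleness_minutes=60, min_success_rate=0.99, max_latency_minutes=15
)
print(sla_breaches(revenue_sla, staleness_minutes=45, success_rate=0.97, latency_minutes=20))
# ['reliability', 'latency']
```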

5. Use AI-First Engineering Systems

High-performing teams are moving away from manually optimizing their data systems and toward automation. These systems incorporate:

- Predictive failure detection

- Pipeline optimization

- Faster delivery

Logiciel stands out here: its approach gives teams system-level intelligence rather than requiring them to connect multiple tools, improving both speed and reliability.

Takeaway: High-performing teams build systems with the sole purpose of creating value and success.

DataOps is quickly changing the way we design and manage modern data systems.

There are three major takeaways regarding DataOps:

- DataOps applies CI/CD principles to data pipelines.

- Reliability, scalability, and readiness for artificial intelligence are paramount to success with DataOps.

- DataOps requires automated tools combined with disciplined processes and effective systems engineering to produce valuable results.

Implementing any data-driven initiative is not always easy, but making the necessary changes produces positive results.

The key areas of value added by the new data infrastructure are:

- Significantly shorter delivery cycles.

- More trustworthy data systems.

- Lower operational expense as a result of more efficient processes.

- Better business decisions.

Next Steps: If your data systems are currently difficult to maintain, analyze how you can manage them in a reliable and scalable manner going forward.


Frequently Asked Questions

What exactly is DataOps?

DataOps combines automation, CI/CD, and continuous improvement practices across the entire data pipeline development and delivery process so that end-users receive reliable and reusable data.

What is the difference between DataOps and DevOps?

DataOps applies to data engineering processes, whereas DevOps applies to software engineering processes. The two share common practices, but they differ in focus: DevOps is concerned with delivering software, DataOps with delivering data.

Why do data pipelines fail so regularly?

Data pipelines fail regularly for several reasons: (1) no test cases, (2) no monitoring, (3) schema changes, and (4) manual processing workflows. DataOps addresses each of these problems by automating the testing and monitoring of data.

What are the tools used by DataOps?

Examples include Azure Data Factory, Airflow, dbt, Spark, and a range of monitoring applications and platforms. However, DataOps is a working philosophy and a set of holistic processes rather than a single solution, so discipline and effective systems are what let you get full use out of these tools.

Is DataOps good for smaller teams?

Yes. Smaller teams often benefit even more from DataOps than larger organizations because it reduces manual working hours, creates more reliable data, and prevents scaling problems, both now and in the future.