Three years ago choosing how to model your data architecture was easier. You would select a data warehouse, attach a data lake as a means of accumulating large amounts of information, and build pipelines connecting the two.

Today, those same decisions frequently become a form of technical debt.

Engineering teams are now spending 30–40% of their sprint cycles maintaining brittle data pipelines, troubleshooting schema mismatches, and fine-tuning queries which were not designed for the current volume of data being processed through them. The emergence of the Data Lakehouse has created a new set of expectations for organizations who need support for analytical processes, AI capabilities and Real-Time workloads all operating on one infrastructure.

It is therefore imperative to carefully consider the differences between Delta Lake, Apache Icebox, and Apache Hudi, before implementing any of them as your organization's data lakehouse.

The following guide is designed to assist Staff and Principal Engineers with evaluating the various options with respect to implementation of a Data Lakehouse architecture:

How do each of the Data Lakehouse formats perform behind the scenes when compared to one another.

Review the real-world trade-offs of each option, since actual performance will differ from the marketing collateral of each vendor

Identify the option that will best fit your organization's data lakehouse requirements in terms of size, tooling and objectives.

Let’s explore why it is even more important to evaluate differences between Delta Lake, Icebox and Hudi now than it has ever been before.

AI – Powered Product Development Playbook

How AI-first startups build MVPs faster, ship quicker, & impress investors without big teams.

1. Why is this Comparison Important

The decision to select Delta Lake, Icebox, or Hudi as your organization’s data lakehouse format is no longer only a tools choice. ItChange is inevitable. As new AI workloads continue to grow, so does the way in which we manage our data with various expectations. The key requirements for all AI pipelines are as follows:

- Data is to be versioned.

- Data can be reproduced accurately.

- Data has to be reliable.

Without a proper table structure, your data lakehouse becomes a blocker.

Over 70% of AI projects fail due to inadequate data prep, rather than the fault of the model.

2. Data Growth is No Longer Linear

The data growth is on a curve where there is a rapid increase from the left side and is offsetting much of the traditional way of partitioning and managing files.

3. Measurement of Engineering Performance Has Become Important

Modern teams are measuring their success by:

- The velocity of delivery.

- The reliability of their deliveries.

- The optimization of costs associated with getting to that delivery.

The wrong choice of data lakehouse format will create:

- Higher operational costs,

- Longer time to get through the development phase of a new feature,

- Higher expenses in consuming the cloud.

What is at Stake?

Issue Impact Table Format Speed & reliability of querying. Ecosystem Fit Polyfunctionality of engineering. Metadata Model Scalability of systems. Transaction Model Accuracy of data.

That being said, there is no one-size-fits-all solution; the correct choice will depend on your specific situation and will also be dependent on the current level of maturity of your team.

Understanding Delta Lake: Where You Can Find Delta Lake's Strengths and Weaknesses

Delta Lake is for everyone currently using the Spark Ecosystem.

Characteristics of Delta lake as a Data Lakehouse

- It is highly scalable with the support of ACID transactions.

- It is tightly coupled with Spark.

- The databricks ecosystem has been well-developed and has been around for quite some time.

Functionality of Delta Lake

- Data versioning.

- Schema enforcement.

- Unification of batch and streaming.

Architectural Principles of Delta Lake

The Delta lake architecture expects that:

- You are using Spark as your primary data processor.

- You are willing to have a tighter coupling between the data lakehouse and processing engine.

- You would like to gain the benefits of having access to batch and streaming data.

Where Delta Lake Doesn't Favor Anyone

Engine Lock-In

Delta works primarily with Apache Spark, therefore any time you use it in a multi-engine environment the system can become complex and potentially cumbersome.Complications due to Metadata Scaling

Lack of metadata support/access can greatly diminish performability of large tables.

Cost Implications

Cost of performing at a larger scale becomes higher when much of your workload relies on compute-heavy processes.

Who Can Use Delta Lake

If the teams are really committed to using Spark for data engineering, Delta Lake may work. Simplicity may trump flexibility, and if the organization uses Databricks organization-wide, Delta Lake would benefit.

As a summary, Delta Lake is a viable option for Spark-first users looking for a lightweight, integrated solution.

Analyzing the Pros, Cons, and Once-Used of Apache Hudi

Apache Hudi is not like traditional data warehousing/ETL tools that rely on a traditional batch processing method. Hudi has been designed to emphasize real-time ingestion and incremental processing.

Optimizations that Apache Hudi was designed for:

- Fast upserts & deletes

- Incremental data processing

- Real-time data ingestion

Key trade-offs of Hudi's architecture:

- Use of copy-on-write and merge-on-read architectures.

- Complex indexing to enable efficient data querying.

- High operational overhead compared to traditional batch processing methods.

Where Hudi excels:

Data processing workloads that rely heavily on streaming data

- Real-time analytics

- CDC pipelines

Where Hudi becomes challenging to use:

- Rapidly escalating operational complexity of Hudi.

- Significant experience level required to be able to use/manage Hudi well.

- Not as intuitive to batch-oriented environments as it is to streaming data oriented environments.

Best Fit for Hudi:

Hudi is good for:

- Streaming first environments

- Frequent (@ least once a day) updates and low-latency ingestion

- Working in an environment that can support a higher level of operational complexity.

To summarize, Hudi is most well-suited for realtime data processing systems requiring ever-continuous incremental updates.

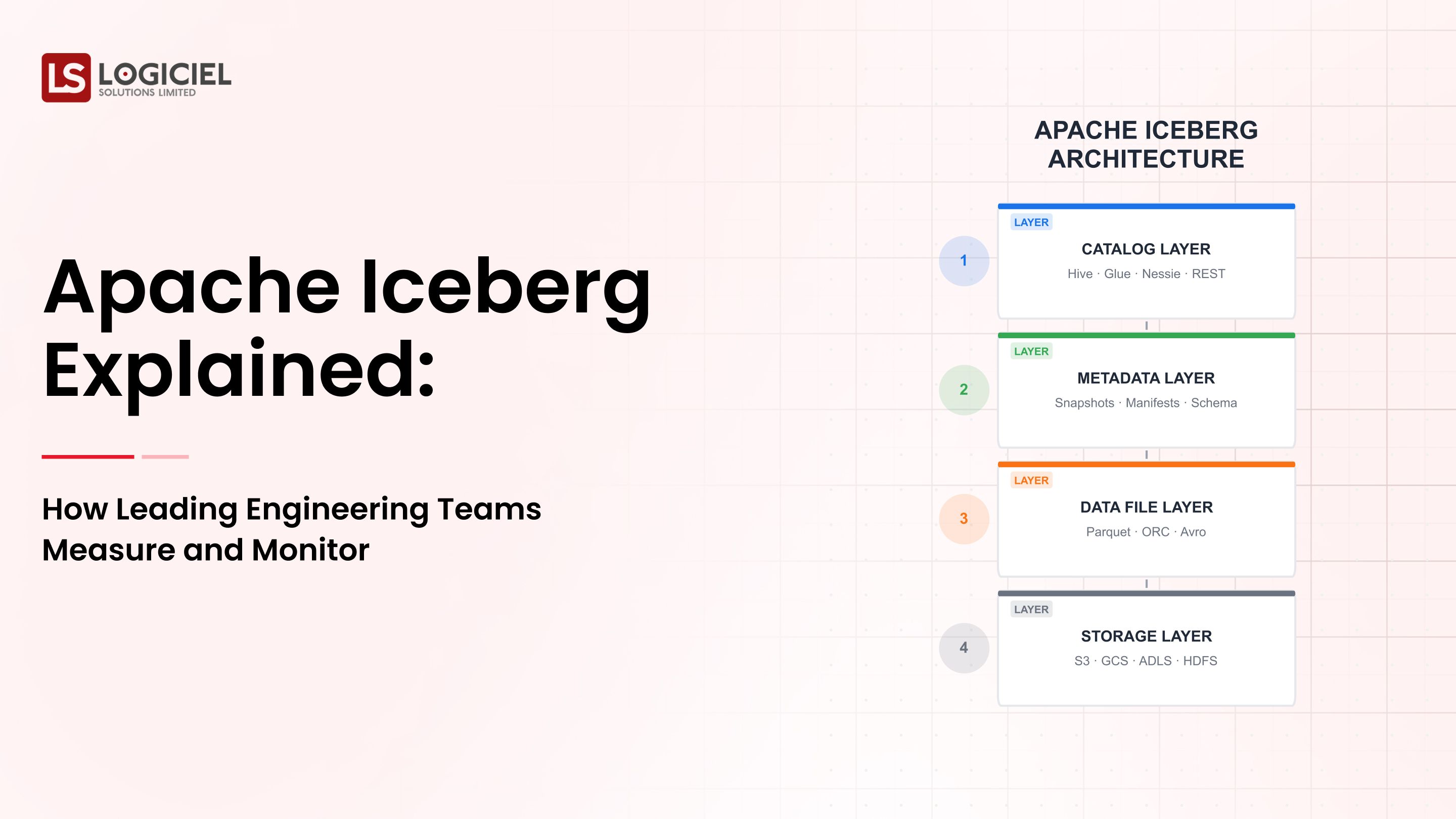

Analyzing the Pros & Cons of Apache Iceberg

Apache Iceberg was created for scalability, flexibility, and cross-engine compatibility.What Makes Iceberg Different from Other Solutions

- Decouples compute and storage

- Supports a variety of engines (Spark, Trino, Flink)

- Utilizes complex metadata structures

Dominant Strengths

- Scalable Metadata Layer

- Iceberg can manage massive tables efficiently via:

- Manifest Files

- Metadata Pruning

- Schema Evolution Without Impacting Current Data

- Teams Can:

- Add or Change Columns

- Prevent Pipeline Failure

- Hidden Partitioning

- No manual partitioning required.

Drawbacks of Iceberg

- Somewhat steeper learning curve

- Requires thoughtful architecture and implementation

- The ecosystem is maturing compared to Delta.

Best Use Cases for Iceberg

If you are:

- In a multi-engine environment

- Planning for long-term scalability

- Wishing to avoid vendor lock-in

Iceberg is your best option for flexible, future-proofed data lakehouse architectures.

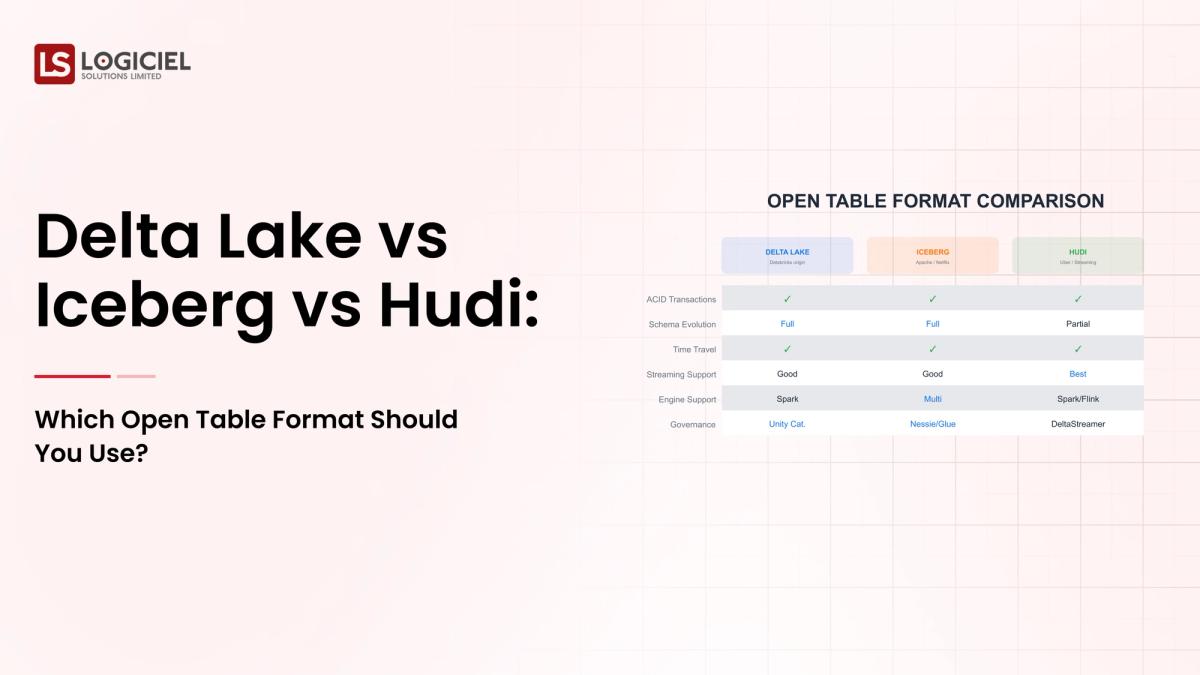

Comparative Data Points of Iceberg, Hudi, and Delta Lake

The next table summarizes the comparison of Delta Lake, Iceberg, and Hudi based on operational engineering metrics.

| Data Point | Delta Lake | Iceberg | Hudi |

|---|---|---|---|

| Scalability at High Volume | Strong Performance (Spark-based) | Excellent Performance (Metadata-driven) | Strong Performance (Streaming-centered) |

| Multi-Engine Compatibility | Limited | Excellent | Average |

| Ease of Use | Very Easy | Average | Very Difficult |

| Streaming Compatibility | Good | - | Very Good |

| Operation Complexity | Medium | Average | Very High |

| Vendor Lock-In | Higher Risk | Low Risk | Low Risk |

Insight

Delta = Simplicity

Iceberg = Flexibility

Hudi = Real-Time Optimisation

Your Decision is Based on Your PrioritiesHere’s a brief overview of selecting Delta Lake vs. Iceberg and using Logiciel's tools to enhance productivity.

When You Should Choose Delta Lake (And When You Should Not)

Select Delta Lake When...

- Your stack features Spark at its core.

- You want to reduce the time it takes to onboard new developers.

- You want to provide a single unified eco-system.

Do Not Choose Delta Lake When...

- You need to support multiple engines such as Hive, Impala, and Presto.

- You do not wish to be locked-in to a vendor.

- Your data volume is threatening to exceed metadata limits.

When You Should Choose Iceberg (And When You Should Not)

Select Iceberg When...

- You plan to operate multiple query engines using the same data set.

- You need to scale reliably and grow for the next 5 to 10 years.

- You expect future workload architectural flexibility with speed-to-delivery.

Do Not Choose Iceberg When...

- You have team members without experience using distributed systems.

- You need a fast and easy to implement solution.

- Your workloads are relatively simple and small in size.

Where Logiciel Fits In

Many organizations experience difficulty in the selection process but rather in how to put it all into practice. Logiciel solves the challenges of operationalizing data formats through Logiciel's AI-first engineering approach, which provides:

- Automation of pipeline optimization

- Reduction in infrastructure complexity

- Improvement of system reliability.

The Summary

In summary, selecting between Delta Lake, Iceberg, and Hudi is not an easy comparison; it is about aligning your architectural model from reality.

Consider these three important points:

There is no definitive, single best solution; the context in which you apply a solution is critical.

Iceberg is more about flexibility; Delta is about simplicity; and Hudi is about real-time workload capabilities.

Your execution performance will be more important than the selection process.

The real benefit comes not from whether you select Delta Lake, Iceberg, or Hudi, but from how you create, automate and maintain a highly effective system.

When implemented effectively, a Data Lakehouse provides benefits such as:

Accelerated Analytics,

Stable AI Pipelines, Lower, Operational Overhead and Improved Engineering Velocity,

Your next action step should be to ensure that you clearly understand what it will be like to run Data Lakehouse infrastructures in production.

Further reading about Data Lakehouse architecture:

Apache Iceberg Explained - The Open Table Format Changing the World of Data,

Reasons Your Data Infrastructure will Continuously Break & Real Solutions,

What does the Modern Data Stack look like? - A Comprehensive Guide for Engineering Leaders.

Logiciel Solutions helps teams develop AI-enabled data architectures that are able to grow without becoming overly complex.

The focus of our engineering frameworks is around: Reliability, Speed and Long-term Flexibility.

Explore how to create a Data Architecture & Platform that is Future-Proof.

RAG & Vector Database Guide

Build the quiet infrastructure behind smarter, self-learning systems. A CTO’s guide to modern data engineering.

Frequently Asked Questions

What is the definition of a Data Lakehouse architecture?

The Data Lakehouse architecture is the combination of the scalability of a Data Lake and the performance/structure of a Data Warehouse. The capabilities delivered from a Data Lakehouse include the ability to store raw data, but also allow for Fast Analytics, ACID Transactions and schema rules to be performed on the data using the same architecture.

Which is the best table format for Data Lakehouses: Delta Lake, Iceberg or Hudi?

None of the table formats is the best; each is designed for a unique workload type and best suited for either Spark-based workloads (Delta Lake), Multi-Engine Scalable work loads (Iceberg) or Real Time Ingestion (Hudi). Therefore, the decision about which is "best" will depend greatly upon each team's capability to implement that architecture successfully.

Is Apache Iceberg going to replace Delta Lake?

The answer is No, not yet (Iceberg gaining popularity due to its flexibility, however Delta Lake remains strong within the Spark ecosystem). Many companies are evaluating both to see which architecture best meets their needs.

Can both Delta Lake and Iceberg table formats be used at the same time?

Yes, there are companies that successfully run Hybrid Architectures and leverage both formats. However, doing so introduces complexity to the architecture and therefore, it is recommended that teams who decide to run both Delta Lake and Iceberg, standardize on ONE format unless they have a strong need for both formats.

What is the most common error made when selecting a data lakehouse format?

The most common error is selecting a format based upon short-term convenience rather than selecting an option that will be scalable over time. Many organizations will follow the common practice of selecting a format based solely upon familiarity and not take the time to consider future requirements such as multi-engine, AI suitability, or overall operating overhead.