Three years ago, your team made a good decision. You built what you thought was going to work out as your data platform and that data platform was based on a traditional data lake. It did Work. Pipelines were healthy, queries yielded predictable results, and cost was affordable.

Fast forward to present day: that same data architecture has now consumed nearly 40% of your sprint capacity in the areas of: maintenance, re-processing and debugging.... Schema changes break pipelines, partitioning strategies don't scale… query performance is inconsistent and your ability to execute on AI projects is being blocked by unreliable data.

This is where Apache Iceberg comes into the picture.

If you are a Staff Engineer or Principal Engineer, responsible for modernising your data Infrastructure, then this guide is for you.

By the time you finish reading this article you will:

I was able to define, in layman’s terms, what Apache Iceberg is

Identified the role of Apache Iceberg within modern data Lakehouse architectures

Describe high-performance teams using Apache Iceberg in production environments

Let’s start from the top.

Agent-to-Agent Future Report

Understand how autonomous AI agents are reshaping engineering and DevOps workflows.

What is Apache Iceberg? Plain English Definition

Put simply, Apache Iceberg is an open table format designed to make data lakes more reliable, performant and scalable.

Here’s a simple way to think about this:

If a traditional data lake is one folder filled with files, Apache Iceberg is the intelligent index and transaction layer giving those files database-like characteristics.Why It Is Here

Classic data lakes have difficulties that include:

Schema evolution affecting pipelines Slow queries that are caused by poor partitioning No transactional guarantees Limited options for time travel and versioning data

Apache Iceberg fixes the above issues by providing a structured metadata layer.

Basic Building Blocks

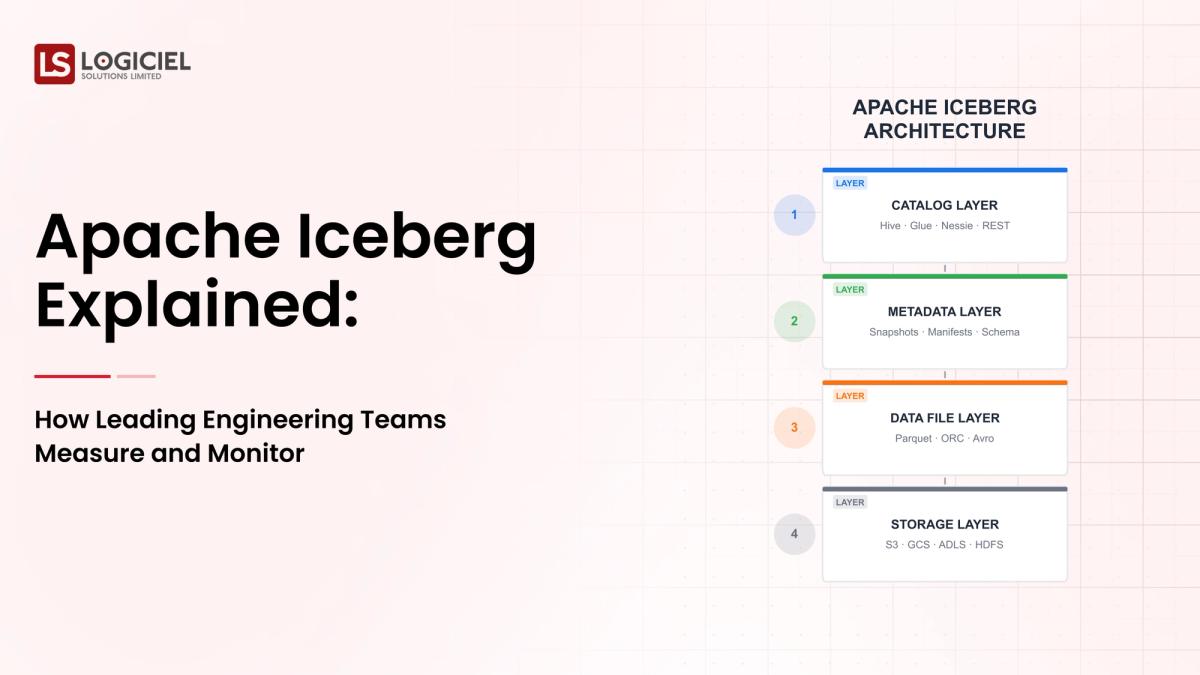

Apache Iceberg has four basic components.

- Table Metadata - Tracks your schema and snapshots, and the table's structure

- Manifest Files - Contains metadata about your files to allow for faster lookups

- Data Files - The actual data being stored (in formats such as Parquet or ORC)

- Snapshots - A way to do time travel and version control

Analogy for Engineers

Apache Iceberg is like using Git with your data;

- Snapshots = commits

- Metadata = commit history

- Data files = your code

- Time travel = checking out older versions

What it Does

By decoupling storage from management, you can make your data lake behave like a data warehouse without being locked into one vendor.

Summary: Apache Iceberg allows you to convert your raw storage into structured, queryable, and reliable data.

Why You Should Care About Apache Iceberg More in 2026

While the urgency surrounding Apache Iceberg may seem theoretical, it is being driven by real engineering pain.

1. AI and ML Require Quality Data

Modern AI requires:

- Consistent Schemas

- Versioned Datasets

- Reproducible Data Pipelines

Reports show that over 70% of failed AI projects are a result of poor quality data and poor data/infrastructure; performance is not an issue.

Without solutions like Apache Iceberg, your AI projects will not be able to scale.

2. Planetary Data Growth

Data in enterprises is increasing at a rate of 30-40% per year; with that kind of rate, traditional partitioning approaches are not going to work.Iceberg provides the following:

- Hidden partitioning

- Metadata Pruning

- Efficient file skipping

This all leads to very significant performance improvement for queries when using distributed architectures/scaled environments.

3. Compliance and Governance Pressure

There are increased pressures to meet the following regulatory requirements:

- Data lineage

- Auditability

- Versioning

Engaging the Iceberg software that uses its snapshot-based architecture makes compliance much more manageable.

Apache Iceberg Before and After

- No Iceberg With Iceberg

- Manual Partitioning Automatic Evolution of Partitions

- Schema Failures – Not The End SAFE Schema Evolution

- Slow Processing of Queries Uses Metadata to Optimize Processing RT

- No Versioning Easy Access to Previous Versions

Cost of Ignoring the Use of Apache Iceberg

Organizational teams that delay adopting modern table formats will encounter the following:

- Higher infrastructure costs

- Decrease in speed of analysis

- Obstruct AI efforts

- Engineer Burnout

Takeaway - Apache Iceberg Is No Longer Optional. It Is Establishing a Minimum Standard for Scalable Data Infrastructure.

Core ApI Components for Apache Iceberg - What Your Developing Building Will Look Like

When innovating a new system that is dependent upon using Apache Iceberg; you are designing a system not a tool.

1. Ingestion Layer

Ingestion Layer is where the data gets ingested into your system.

Common Tools -

- Kafka

- Spark streaming

- Flink

Responsibilities of the Ingestion Layer:

- Data Validation

- Schema Validation

- Initial Partitioning

2. Storage Layer

Iceberg operates on top of object stores:

- AWS S3

- Azure Data Lake

- Google Cloud Storage

Iceberg Data Storage Formats Include:

- Parquet

- ORC

3. Iceberg - Core Table Layer

The core of your Apache Iceberg system is where you manage, store and access metadata, snapshots (i.e. changes made to data), and schema evolutions.

4. Processing Layer

Processing Layer, query engines can access the Iceberg table structure:

- Spark

- Trino

- Flink

- Presto

Query Engines leverage Iceberg Metadata for Processing Optimization.

5. Orchestration Layer

Orchestration tools assist in automation of coordinating tasks and establishing dependencies (e.g.

- Airflow

- Dagster) in a workflow.The mechanism by which these components integrate consists of:

System Workflow

- The ingestion of data through pipelines

- Storing data within an object-storage

- The indexing of data using icebergs metadata

- The querying of data from computational engines

- The orchestration of all the above-mentioned components into an operational workflow

Key Clarification

Some common misconception about Apache Iceberg is that it is a database, processing engine or a storage system when in fact, it is a table abstraction layer.

Therefore, as per the takeaway, Iceberg is a mediator between the storage and the computing parts of a comprehensive cohesive system.

Example of how Apache Iceberg's functionality may appear in practice will be presented using an Ecommerce analytics pipeline.

Process:

The ingestion of event records into kafka will occur through SPARK where the data will be validated for accuracy and reliability before being written into iceberg tables.

After the data is written into the iceberg tables, the data will land as compressed parquet files within S3, which are managed by iceberg tracking file locations along with their existing partition classifications.

Once the data is written into iceberg tables, analysts will run queries from Trino leveraging icebergs fast file pruning and scan capabilities.

Using iceberg allows for seamless changes in schema by providing:

- No interruption to the existing pipeline

- No need for rewriting previously declared data

In the event of a bug causing corruption on data from the previous day, users can simply go back to a previous snapshot of the iceberg table in order to restore data back to a functional state.

Excellent outcomes associated with using iceberg include:

- Fast performance associated with querying data regardless of the amount or type of data

- Changements to schemas are always safe and maintainable

- Data will always be versioned and available for auditing purposes

Even with the numerous successful outcomes associated with the use of iceberg, there are still instances such as:

- Poor ingestion validation

- Lack of visibility into the data pipeline ingestions

- Improperly configured partition strategies

The overall takeaway from this situation is that while iceberg resolves many structural issues associated with data storage; consistent discipline in the operations of using iceberg is essential.

Teams will continuously fail at leveraging the capabilities of Apache iceberg when they:

Over-engineer the implementation of iceberg.

By doing this teams will create unnecessary complexity and cause significant delays in delivering the right solution onNot Paying Attention

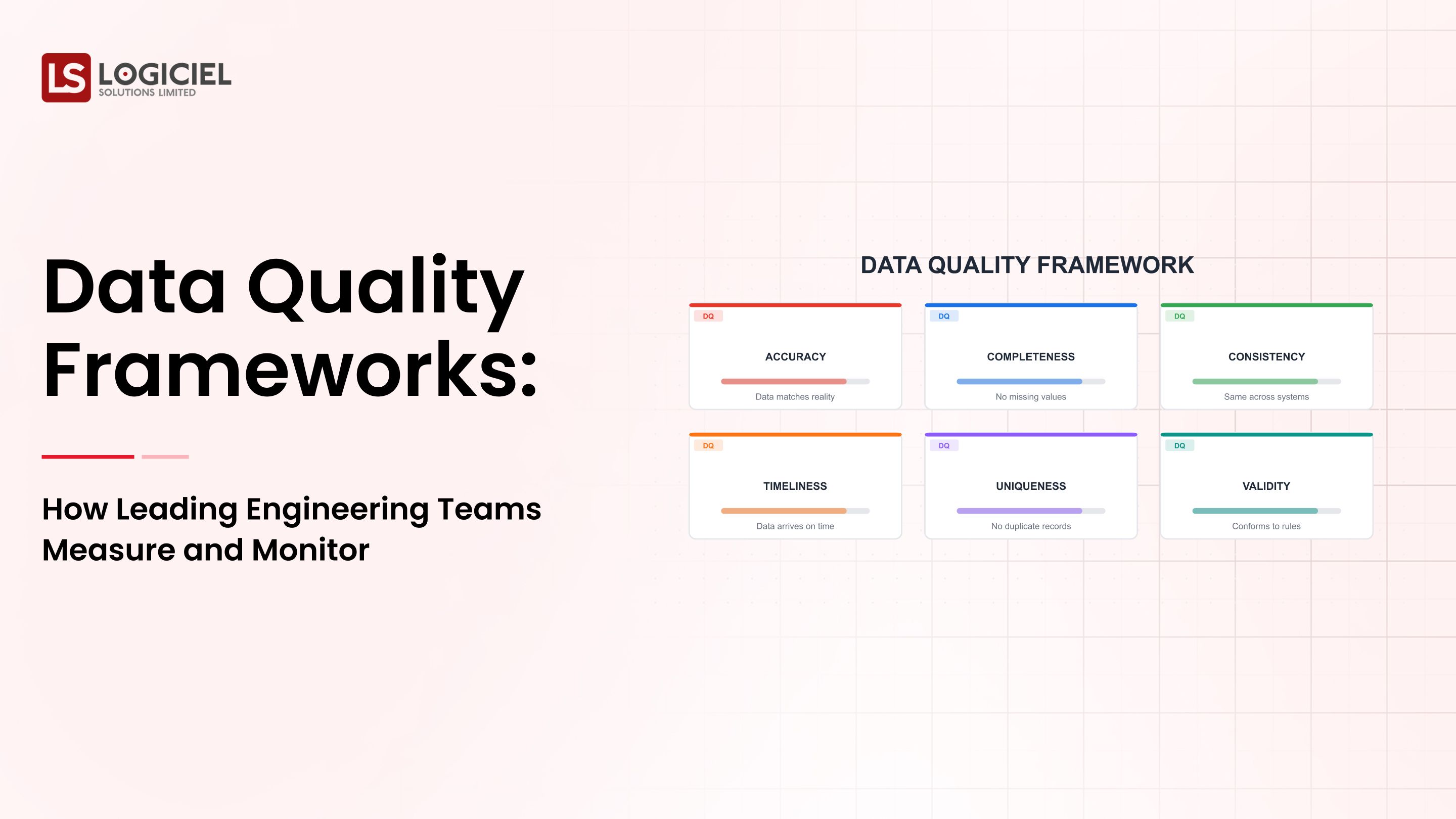

Monitoring is not something you do after you set up your Apache Iceberg implementation. Your engineering teams need to be mindful of these areas related to their jobs:

- Aging of Data

- Errors in Your Data Pipeline

- Performance of Your Query

When There are No Data Contracts

Lack of a schematized owner for your data will lead to …

- Multiple breakages/disconnections in your data pipeline

- You will have poor quality data

Apache Iceberg is not a plug-and-play, set-it-and-forget-it system.

Maintaining the Infrastructure (metadata):

- Ongoing optimization of the system

- Ongoing maintenance of your metadata

- Ongoing performance tuning

Conclusion - The majority of the failures with your use of Apache Iceberg are based on your teams' issues and how they have deployed it.

Best practices - efficient use of Apache Iceberg: The difference between highly effective engineers and everyone else.

Highly efficient teams see/deploy Apache Iceberg as an ecosystem that is a system, not as a tool.

Automate Anything and Everything:

- Schema validation

- Data quality checks

- Alert systems

Automation will reduce human errors.

Create Your Infrastructure Like Code!

Version Control (VC) all of your:

- Table Definition

- Pipelines

- Configuration Information

Will ensure everything you build will be repeatable.

Prepare for Failures

Build your systems with:

- Retry Logic

- Dead Letter Queues

- Circuit brakers

Failures will occur; what will differentiate you is your ability to weather the failure.

Contract with Your SLA Before a Failure Occurs

Monitor:

- Aging of Data

- Latency from your Query Execution

- Reliability of the Data Pipeline

Before a problem occurs.

Maintain a Systems Approach

Logiciel can provide you the ability to maintain a Systems Approach.

Instead of worrying about:

- All the tools you have to use

- Different Data Pipelines

- Manual processes

Highly efficient engineers develop with an AI first systems approach, whereby:

- Their failure rate is predictable

- Their production systems are automatically optimized

- Their ability to deliver to market is greatly accelerated.

Conclusion - the tools you're using are not the difference; your disciplined execution of a definedThe three principal points of consideration related to these findings include:

• When used in conjunction with other technologies and processes that allow for complete automation of your data processing and analytics functions, Apache Iceberg significantly enhances the reliability of your data lake as an operational data system.

• They can be considered critical components of AI and data-driven business processes because they allow for a higher volume and velocity of AI-driven analytics while also supporting compliance to various regulatory and legal requirements.

• Your success with Apache Iceberg ultimately depends on your ability to execute the required changes (implementation) and your ability to successfully adopt the necessary system requirements (adoption).

Achieving the benefits of Apache Iceberg is no easy task. It introduces several new layers of abstraction, operational complexity, as well as, significant design considerations.

Nonetheless, the ROI is significant!

When successful in implementing Apache Iceberg, engineering teams obtain the following significant advantages:

• Improved speed of analytics

• Improved stability of AI-driven pipelines

• Reduced cost of infrastructure

• Increased velocity of engineering activity

Next Steps

If you are exploring Apache Iceberg or in a position to modernize your existing data lakehouse, then you need to begin by understanding how these technologies will perform in the real world.

Additional Reading/Research

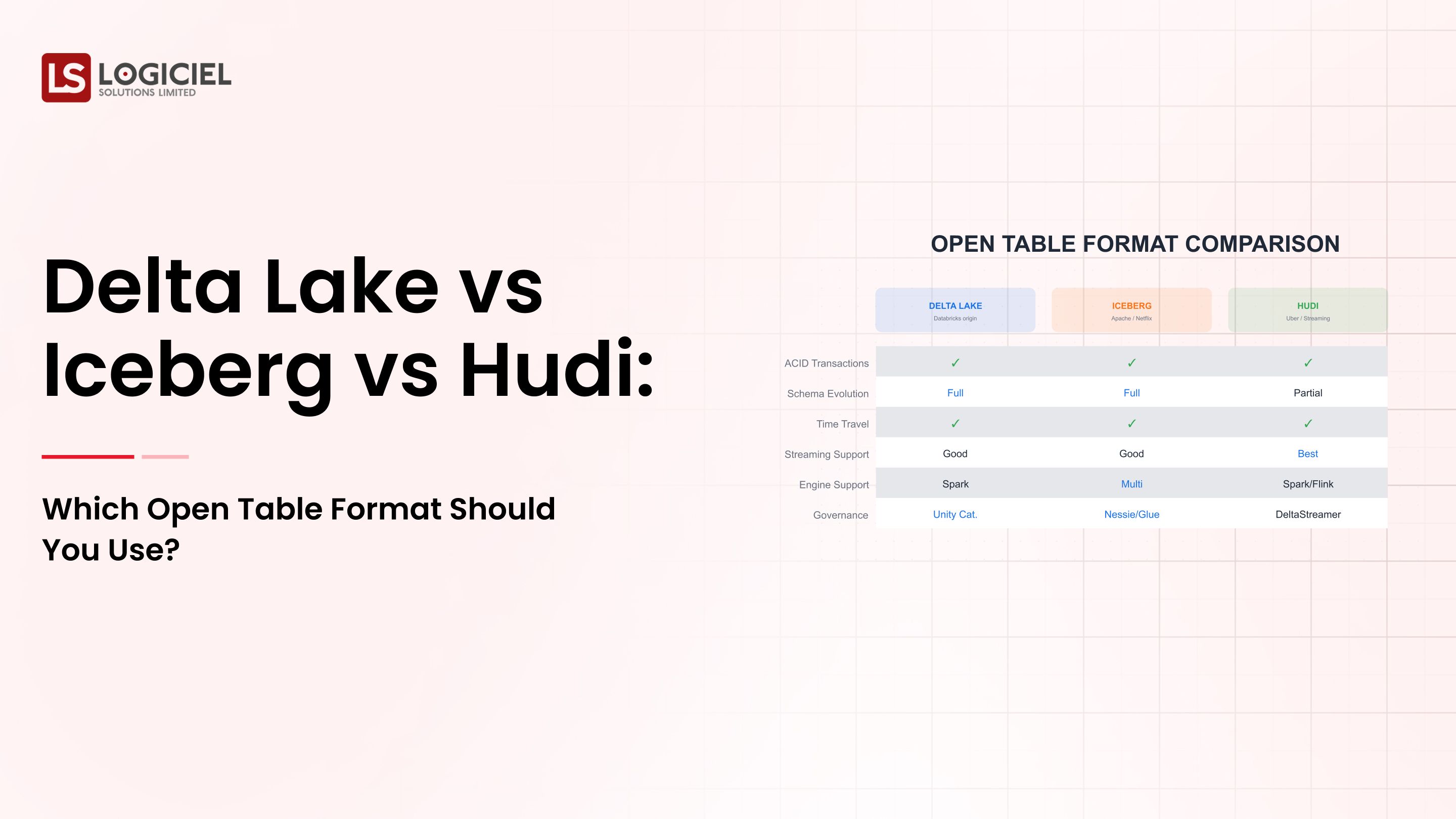

• How to Choose an Open Table Format: Delta Lake vs Iceberg vs Hudi?

• Understanding the Root Causes and Solutions to Data Infrastructure Failures

• Understanding the Modern Data Stack: A Guide for Engineering Leaders

At Logiciel Solutions we specialize in the transformation of fragmented data systems into an AI-first, scalable, reliable data platform.

To accomplish this we combine system-level design with intelligent automation to achieve improvements in both speed and stability.

You can modernize your existing data infrastructure without increasing the complexity associated with those changes.

AI Velocity Blueprint

Measure and multiply engineering velocity using AI-powered diagnostics and sprint-aligned teams.

Frequently Asked Questions

What is the purpose of Apache Iceberg?

Apache Iceberg is designed for managing large volumes of unstructured data in data lakes. It provides schema evolution, time travel, and highly efficient querying, making it suitable for analytics and machine learning workloads, as well as for data lakehouse use cases that require reliability and scalability.

What are the main differences between Apache Iceberg and Delta Lake?

While both are open-source table formats, the primary difference between them is that Apache Iceberg is engineered to be compatible with more than one processing engine (i.e. Apache Spark, Apache Flink, etc.) than Delta Lake (which is tightly integrated with Apache Spark). Thus, Apache Iceberg can be used in multi-processing environments with increased ease than Delta Lake.

Does Apache Iceberg replace the need for a data warehouse?

Not entirely. While Apache Iceberg provides some of the same functional capabilities as a traditional data warehouse, you will still require a query engine, such as Trino, and orchestration tools such as Apache Airflow for Apache Iceberg to function as a complete replacement data warehouse.

Can Apache Iceberg be used for near real-time data processing?

Yes. When used with stream processing tools (e.g. Apache Kafka, Apache Flink, etc.), Apache Iceberg provides businesses with a mechanism to function in both near real-time analytics and streaming data pipeline environments through its incremental update and snap-shot isolation capabilities.

What are the key challenges faced when utilizing Apache Iceberg?

The following are the most common challenges faced when implementing Apache Iceberg:

• The challenge of managing metadata in a scalable manner

• The challenge of delivering proper observability

• The challenge of developing efficient partitioning strategies

• The challenge of ensuring cross team alignment around data contracts

However, if you have established proper process and system architecture, you will have the ability to effectively manage those challenges.