In most cases, data will not break in a straightforward manner.

Data corrupts gradually over time (drift) or degrades at a specific point in time, and the problem usually stays hidden from users until it affects the outcome of a decision.

Dashboards and KPIs may show wrong or erratic values, and a model may miss its goal without anyone knowing why.

This is a common occurrence in today's environments, which is why engineering leaders are now making data quality a priority.

As a Staff or Principal Engineer, you face two challenges: first, resolving bad data, and second, building systems capable of measuring, monitoring and enforcing data quality over time across the entire enterprise.

Data quality frameworks allow you to transition from a reactive to a proactive approach in your engineering practice regarding data quality.

What Is Data Quality?

Data quality (DQ) indicates how reliable data is for its intended use.

To be considered high quality, the data should:

- Be accurate

- Be complete

- Be consistent

- Be timely

- Be validated

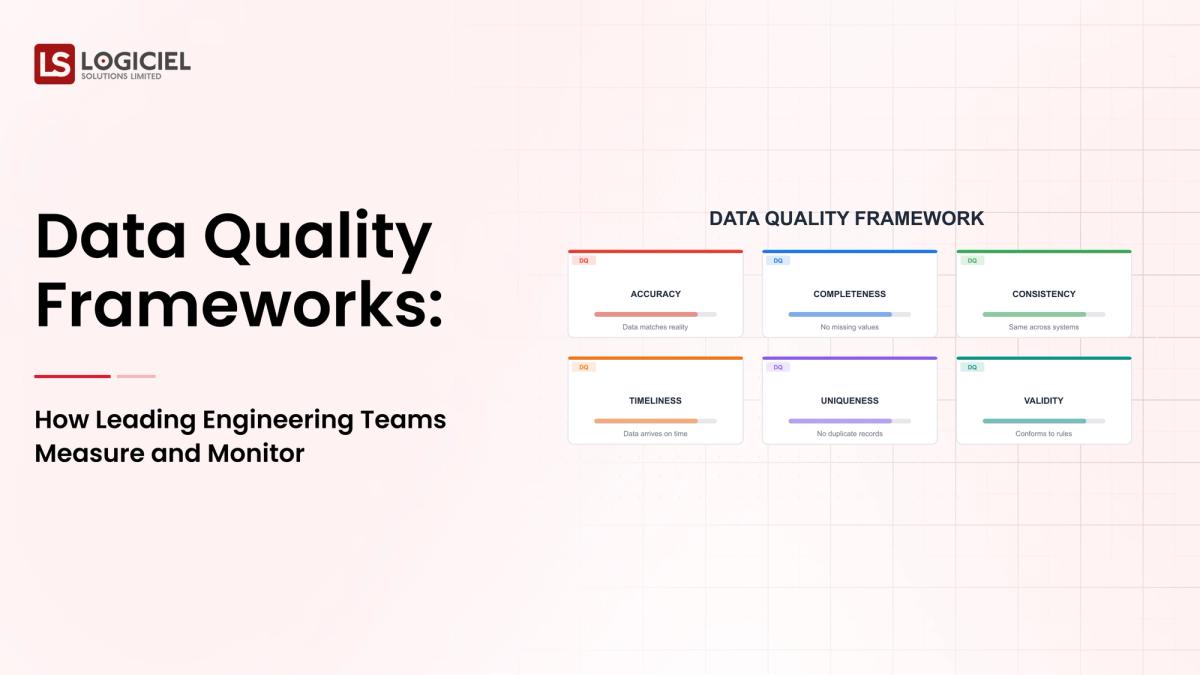

What Are The Dimensions of Data Quality?

Leading engineering teams will evaluate DQ using dimensions like:

- Is the data accurate?

- Are the necessary fields included as part of the data?

- Is the data consistent across all relevant systems?

- Is the data current, and is it still useful?

- Is the data being measured using an acceptable measurement standard?

Some companies extend this into a broader framework such as the '7 Cs' of DQ, which include correctness, credibility and coverage.

The Need for Data Quality Frameworks

A structured system for applying standards to your organization's data quality program keeps your data at a high quality and spares your team the problems associated with:

- Manual Auditing

- Debugging Anomalies

- Post-Event Corrections

Effects of Poor Quality Data on Organizations

Low-quality data can result in:

- Ineffective Business Decisions

- Deficient Dashboards and Reports

- Ineffective Machine Learning Algorithms

- Reduced User and Stakeholder Confidence in the Data

The Financial Impact

Engineering teams will spend their time:

- Debugging Data Pipelines

- Explaining Data Inconsistencies

Defining the Research-Based Data Quality Framework

A Data Quality Framework is a system to provide:

- Measures For Data Quality

- Methods for Measuring Data Quality

- Processes for Monitoring Data Quality

- Systems for Resolving Data Quality Issues

The Components of a Data Quality Framework Include:

- Data Quality Metrics

- Validation Rules and Procedures

- Systems to Monitor Data Quality

- Processes for Alerting users of Data Quality Issues

- Data Ownership and Governance

Key takeaway: High-quality data is established through a framework that provides measurable and enforceable processes.

High-Performing Engineering Teams Treat Data Quality the Same Way They Treat Reliability Engineering
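The responsibilities and components listed above (metrics, rules, monitoring, alerting, ownership) can be sketched as a small rule registry. This is a minimal illustration, not a reference design: the `Rule` class, the `evaluate` function, the `missing_email_rate` metric, the threshold and the `crm-team` owner are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable

Rows = list[dict]


@dataclass(frozen=True)
class Rule:
    """One enforceable data quality rule (hypothetical structure)."""
    name: str                        # what is measured
    metric: Callable[[Rows], float]  # how it is measured
    max_allowed: float               # threshold that triggers an alert
    owner: str                       # who is responsible for fixing it


def evaluate(rows: Rows, rules: list[Rule], alert: Callable[[str], None]) -> list[str]:
    """Run every rule, send an alert for each breach, return the failed rule names."""
    failed = []
    for rule in rules:
        value = rule.metric(rows)
        if value > rule.max_allowed:
            alert(f"[{rule.owner}] {rule.name} = {value:.2f} exceeds {rule.max_allowed:.2f}")
            failed.append(rule.name)
    return failed


def missing_email_rate(rows: Rows) -> float:
    """Fraction of rows with no email value."""
    return sum(r.get("email") is None for r in rows) / len(rows)


# Assumed example data and threshold
rules = [Rule("missing_email_rate", missing_email_rate, 0.10, "crm-team")]
sample = [{"email": "a@example.com"}, {"email": None}]

messages: list[str] = []
failed = evaluate(sample, rules, messages.append)  # alerts are collected in a list here
```

In a real pipeline the `alert` callback would post to whatever channel the team already uses; passing it in as a function keeps the rule evaluation independent of that choice.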

1. Establishing a Data Quality SLA to Set Expectations of:

- Data Freshness

- Data Accuracy

- Data Completeness

2. Continuously Monitoring Data Quality by Reviewing the Following Metrics:

- Null Data Values

- Duplicate Data

- Data Schema Changes

3. Profiling Data to Establish a Baseline of What "Normal" Data Looks Like

4. Comparing New Data Against That Baseline So That "Abnormal" Data Can Be Detected

Key takeaway: Measuring data quality is an ongoing process.
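As a rough sketch of steps 2 to 4, the metrics named above (null values, duplicates, schema changes) can be computed per batch and compared against a baseline of earlier values. The function names, the sample rows, the baseline numbers and the three-standard-deviation threshold are assumptions made for illustration; real thresholds depend on the dataset.

```python
from statistics import mean, stdev


def null_rate(rows, column):
    """Fraction of rows where `column` is missing or None."""
    if not rows:
        return 0.0
    return sum(1 for r in rows if r.get(column) is None) / len(rows)


def duplicate_rate(rows, key):
    """Fraction of rows whose `key` value repeats an earlier row."""
    if not rows:
        return 0.0
    seen, dupes = set(), 0
    for r in rows:
        k = r.get(key)
        if k in seen:
            dupes += 1
        seen.add(k)
    return dupes / len(rows)


def schema_changes(expected_columns, rows):
    """Columns added or removed relative to the expected schema."""
    observed = set().union(*(r.keys() for r in rows)) if rows else set()
    expected = set(expected_columns)
    return {"added": sorted(observed - expected),
            "removed": sorted(expected - observed)}


def is_abnormal(value, baseline, threshold=3.0):
    """Flag `value` if it is more than `threshold` standard deviations
    from the baseline mean. `baseline` needs at least two values."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold


# Assumed sample batch: one null email, one repeated id, no created_at column
rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": "c@example.com"},
]
# Assumed null rates observed on previous days (the profiled "normal")
baseline_null_rates = [0.01, 0.02, 0.015, 0.02, 0.01]

today = null_rate(rows, "email")
alarm = is_abnormal(today, baseline_null_rates)
drift = schema_changes(["id", "email", "created_at"], rows)
```

A standard-deviation test is only one way to define "abnormal"; it is used here because it needs nothing beyond a list of past values.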

Method of Evaluating Data Quality Management Tools

Selecting a data quality management tool is an important decision; however, how you implement that tool matters more.

Evaluate the following criteria when selecting data quality management tools:

- Scalability

- Integration with Existing Data Pipelines

- Real-time Data Quality Monitoring

- Ability to Define Custom Data Quality Rules

Key questions to ask when selecting a data quality management tool include:

- How will anomaly detection be conducted automatically?

- How well will the tool integrate with your existing data quality management tools?

- How easy is it for teams to define custom rules?

Key takeaway: Data quality tools should complement your existing framework.

Best Software Tools for Enhancing Data Quality:

There are 3 main types of data quality software in today’s market:

- Data Profiling Tools to help identify patterns and outliers in your data.

- Validation Tools to help ensure compliance with business rules and restrictions

- Observability Platforms to track the real-time status of data pipelines.

Comparing Different Data Profiling Software

Look for the following factors:

- Automation

- Scalability

- Ease of Integration

Key takeaway: Use a layered solution for data quality monitoring; no single solution will meet all of your data quality needs.

Best Practices to Ensure Data Quality and Integrity in Your Organization

In order to maintain strong data quality, you need a consistent process.

1. Validate Your Data Through Each Stage of the Process

Apply checks when the data is ingested, transformed and consumed.

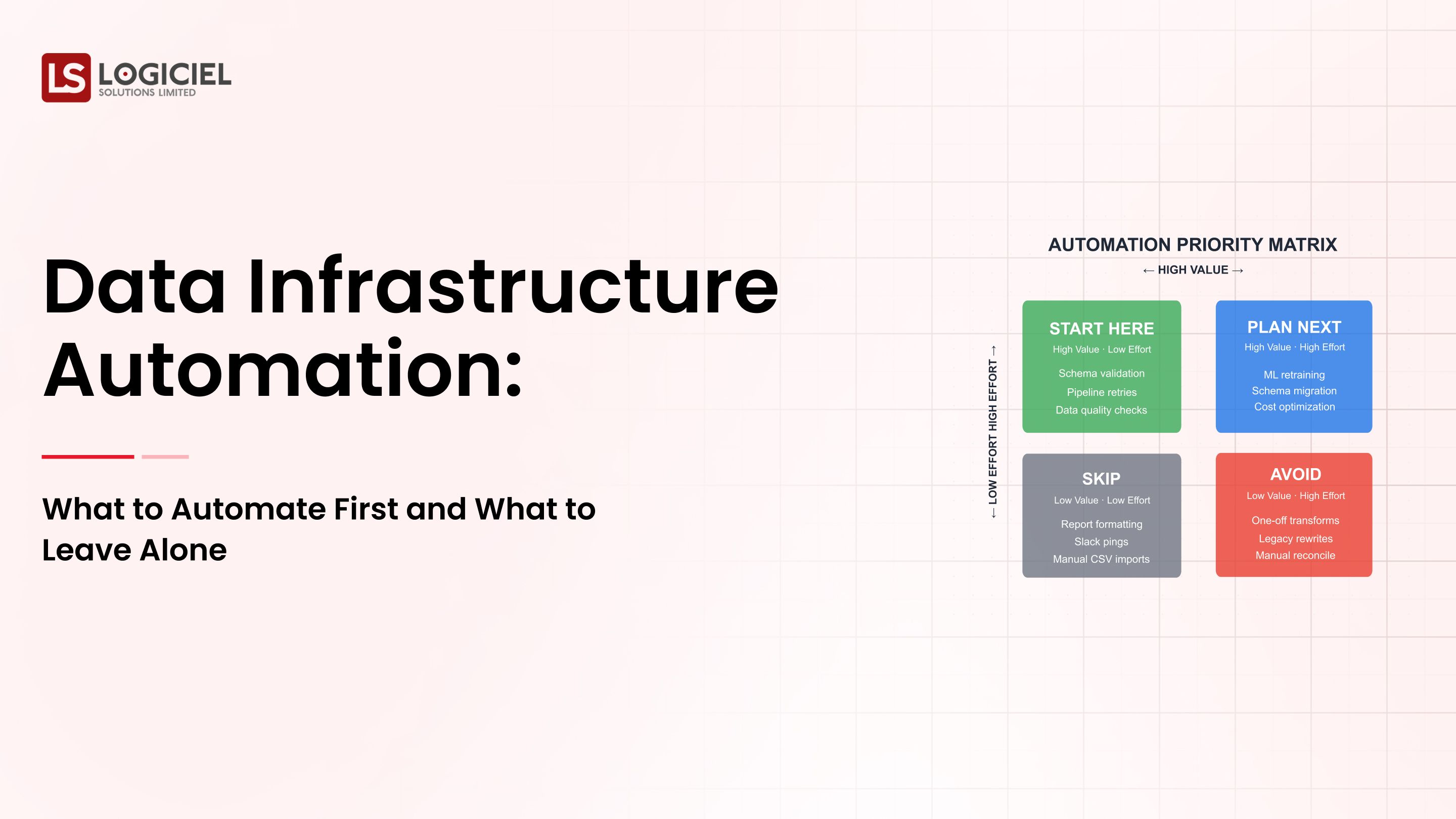

2. Automate Your Data Quality Checks

Automated data quality checks reduce the time spent on manual checks and produce more consistent results.

3. Utilize Data Contracts

Create written agreements between data producers and consumers that define what the data must look like and how it will be used.

4. Continuously Monitor Your Data

This allows you to catch issues with your data before they impact your consumers.

Key takeaway: Enforce data quality from end-to-end.
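One way to combine practices 1 to 3 is to express a data contract as code and enforce it automatically where data enters a stage. The sketch below assumes a hypothetical `orders` dataset; `FieldSpec`, `ORDERS_CONTRACT`, `violations` and `enforce` are illustrative names, and the contract only covers field presence and type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """One field agreed between producer and consumer (hypothetical shape)."""
    name: str
    type_: type
    required: bool = True


# Assumed contract for an "orders" dataset
ORDERS_CONTRACT = [
    FieldSpec("order_id", str),
    FieldSpec("amount", float),
    FieldSpec("coupon", str, required=False),
]


def violations(record, contract):
    """Return a list of human-readable contract violations for one record."""
    problems = []
    for spec in contract:
        value = record.get(spec.name)
        if value is None:
            if spec.required:
                problems.append(f"{spec.name}: missing required field")
        elif not isinstance(value, spec.type_):
            problems.append(
                f"{spec.name}: expected {spec.type_.__name__}, "
                f"got {type(value).__name__}"
            )
    return problems


def enforce(records, contract):
    """Split records into accepted ones and (record, problems) rejects."""
    accepted, rejected = [], []
    for record in records:
        problems = violations(record, contract)
        if problems:
            rejected.append((record, problems))
        else:
            accepted.append(record)
    return accepted, rejected


incoming = [
    {"order_id": "A-1", "amount": 19.99},
    {"order_id": "A-2", "amount": 10},   # int instead of float
    {"amount": 5.0, "coupon": "SPRING"}, # order_id missing
]
accepted, rejected = enforce(incoming, ORDERS_CONTRACT)
```

The same `enforce` call can run at ingestion, after a transformation, and before consumption, so that rejected records are caught at the stage where they first appear.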

Steps for Validating Data Quality in Practice:

A practical approach to validate data quality includes:

- Identify which datasets are most critical to your organization

- Define the metrics that are important for the data that you identified.

- Use validation rules to validate the data based on the metrics defined above.

- Monitor your data continuously for quality purposes.

- Improve your data quality over time by making small changes and scaling to other areas.

Common Types of Data Quality Checks:

- Null Checks

- Range Checks

- Schema Checks

Key takeaway: Start small, then scale up.
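The three check types listed above can each be written in a few lines. The following is a minimal sketch over a list of dictionaries; the column names, the 0 to 120 age range and the sample rows are assumptions for illustration. Each function returns the indexes of the rows that fail.

```python
def null_check(rows, column):
    """Indexes of rows where `column` is missing or None."""
    return [i for i, row in enumerate(rows) if row.get(column) is None]


def range_check(rows, column, low, high):
    """Indexes of rows whose numeric `column` value falls outside [low, high].
    Missing and non-numeric values are left to the null and schema checks."""
    failures = []
    for i, row in enumerate(rows):
        value = row.get(column)
        if isinstance(value, (int, float)) and not (low <= value <= high):
            failures.append(i)
    return failures


def schema_check(rows, expected_types):
    """Indexes of rows with missing or extra columns, or a wrongly typed value."""
    failures = []
    for i, row in enumerate(rows):
        if set(row) != set(expected_types):
            failures.append(i)
            continue
        for column, expected in expected_types.items():
            value = row[column]
            if value is not None and not isinstance(value, expected):
                failures.append(i)
                break
    return failures


# Assumed sample data
rows = [
    {"user_id": 1, "age": 34},
    {"user_id": 2, "age": None},   # null
    {"user_id": 3, "age": 212},    # out of range
    {"user_id": 4, "age": "41"},   # wrong type
    {"user_id": 5},                # missing column
]

null_failures = null_check(rows, "age")                            # [1, 4]
range_failures = range_check(rows, "age", 0, 120)                  # [2]
schema_failures = schema_check(rows, {"user_id": int, "age": int}) # [3, 4]
```

Returning row indexes rather than a single pass/fail flag makes it easier to inspect or quarantine the offending records.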

Data Quality in Modern Data Architectures:

Modern data systems have made improvements to their architecture, which has also increased their complexity. These complexities include:

- Distributed Data Pipelines

- Real-time Data Processing

- Multiple Data Sources

How Leading Organizations Address These Challenges:

- Centralize Observability

- Automate Data Quality Validations

- Follow Strong Governance Models

Key takeaway: The larger your systems grow, the harder it becomes to maintain high data quality, and the more essential it is.

Data quality is a very important issue to many businesses.

Here's an example of a data quality issue in the real world:

Business Case

An organization relies on dashboards to make performance-based decisions about its revenue stream.

Issue

1. Data Pipelines Containing Missing Data

2. Inconsistent Metrics on Dashboards

3. No Validation Rules for the Data In The Pipeline

4. No Monitoring for Validation Rules or Data Quality

Solution

1. Implement a Data Quality Framework

2. Implement Automated Checks on Data Before It Goes Into the Data Pipeline

3. Continuously Monitor the Data and Data Pipelines to ensure that data quality rules are applied and met.

Outcome

1. Accurate Reporting of Revenues/Performance

2. Decisions Made Faster!

3. Restored Trust in the Data

Key takeaway: The majority of data quality issues are avoidable if your organization utilizes a data quality framework.

The Next Generation of Data Quality Frameworks Will Contain:

1. AI Driven Data Quality

2. Automated Anomaly Detection

3. Real-Time Monitoring and Anomaly Detection

4. Data Contracts Integration

5. Unified Data Platforms

Key takeaway: Data quality will become part of platform design rather than an add-on feature.

Conclusion: Data Quality is a System

Data Quality is no longer optional.

It is no longer just about cleaning your data; it is about engineering systems that prevent bad data from entering the system.

The best engineering teams will treat data quality in the same manner as they treat reliability; their data quality will be:

- Defined

- Measured

- Monitored

- Continuously improved

In today’s data-first organizations, your competitive advantage is no longer defined by the data you have...

It is defined by the amount of data you can trust.

Frequently Asked Questions

What is Data Quality?

Data quality refers to the usability and reliability of data when it is used to make decisions. Data quality consists of characteristics like accuracy, completeness, consistency, and timeliness. When you have high data quality, analytical resources and systems can be trusted.

What are the key dimensions of data quality?

The key dimensions of data quality include accuracy, completeness, timeliness, consistency, and validity. These five dimensions of data quality ensure that data is complete, correct, usable across multiple systems, and up-to-date.

How do you measure data quality?

Data quality is measured using metrics such as null rates, duplicate records, freshness SLAs, and schema validation. Continuous monitoring is needed to detect issues as they arise.

What Tools Can Be Utilized to Improve Data Quality?

Data Profiling Tools, Validation Frameworks, and Data Observability Tools can help to maintain high data quality. These tools are used to detect anomalies, enforce data quality rules, and conduct continuous monitoring of all data being placed in the pipeline.

Why is data quality so important?

Poor data quality results in inaccurate data, broken dashboards, and in turn a loss of trust in the data. High data quality provides reliable insights, better overall performance, and scalable data systems.