Automation is everywhere in modern data systems.

CI/CD pipelines are deployed faster than ever. Data pipelines scale rapidly. Infrastructure can be provisioned in minutes.

Yet one critical question remains unanswered: What should we actually automate?

For data engineering leaders, the challenge is not whether to automate. It is how to balance speed, reliability, and control.

This guide explains:

- What to automate

- What not to automate

- How to build scalable systems without unnecessary complexity

Agent-to-Agent Future Report

Understand how autonomous AI agents are reshaping engineering and DevOps workflows.

What is Data Infrastructure Design?

Data infrastructure design defines how systems:

- Capture data

- Store data

- Process data

- Deliver data

It includes:

- Pipelines

- Storage layers

- Compute systems

- Governance

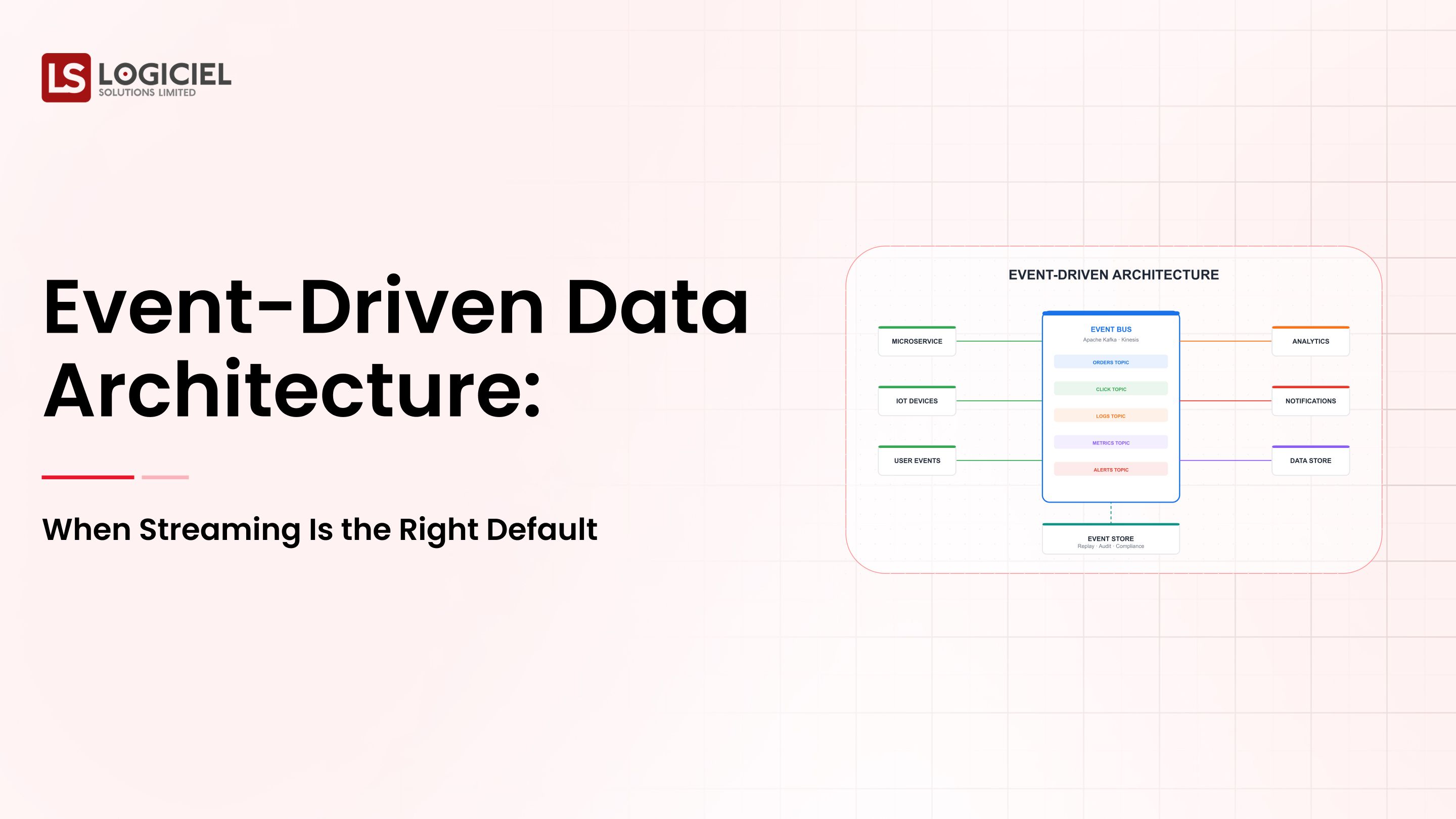

What Does Modern Data Infrastructure Look Like?

A typical system includes:

- Streaming data ingestion

- Data lakes or warehouses

- Transformation pipelines

- Analytics and BI layers

Key takeaway: Automation is part of architecture, not a separate layer.

The Reality of Automation

Automation brings:

- Faster deployment

- Less manual work

- More consistency

But also introduces:

- Hidden complexity

- Loss of control

- Debugging challenges

Common mistakes:

- Automating too early

- Automating the wrong layers

- Automating without visibility

Result:

- Fragile systems

- Hard-to-debug pipelines

- Reduced trust

Key takeaway: Automation amplifies both good and bad design decisions.

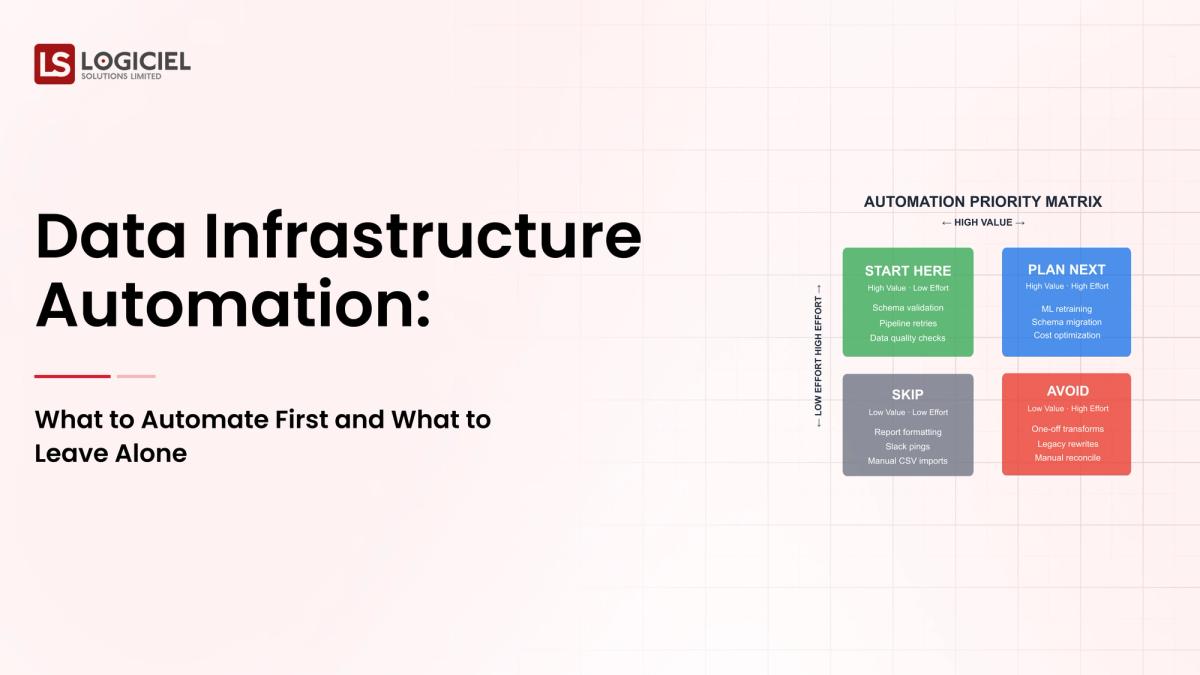

What to Automate First (High-ROI Areas)

1. Pipeline Deployment

Why it matters: Manual deployment slows teams and introduces errors.

What to automate:

- CI/CD pipelines

- Version control

- Environment promotion

Impact:

- Faster releases

- Consistent deployments

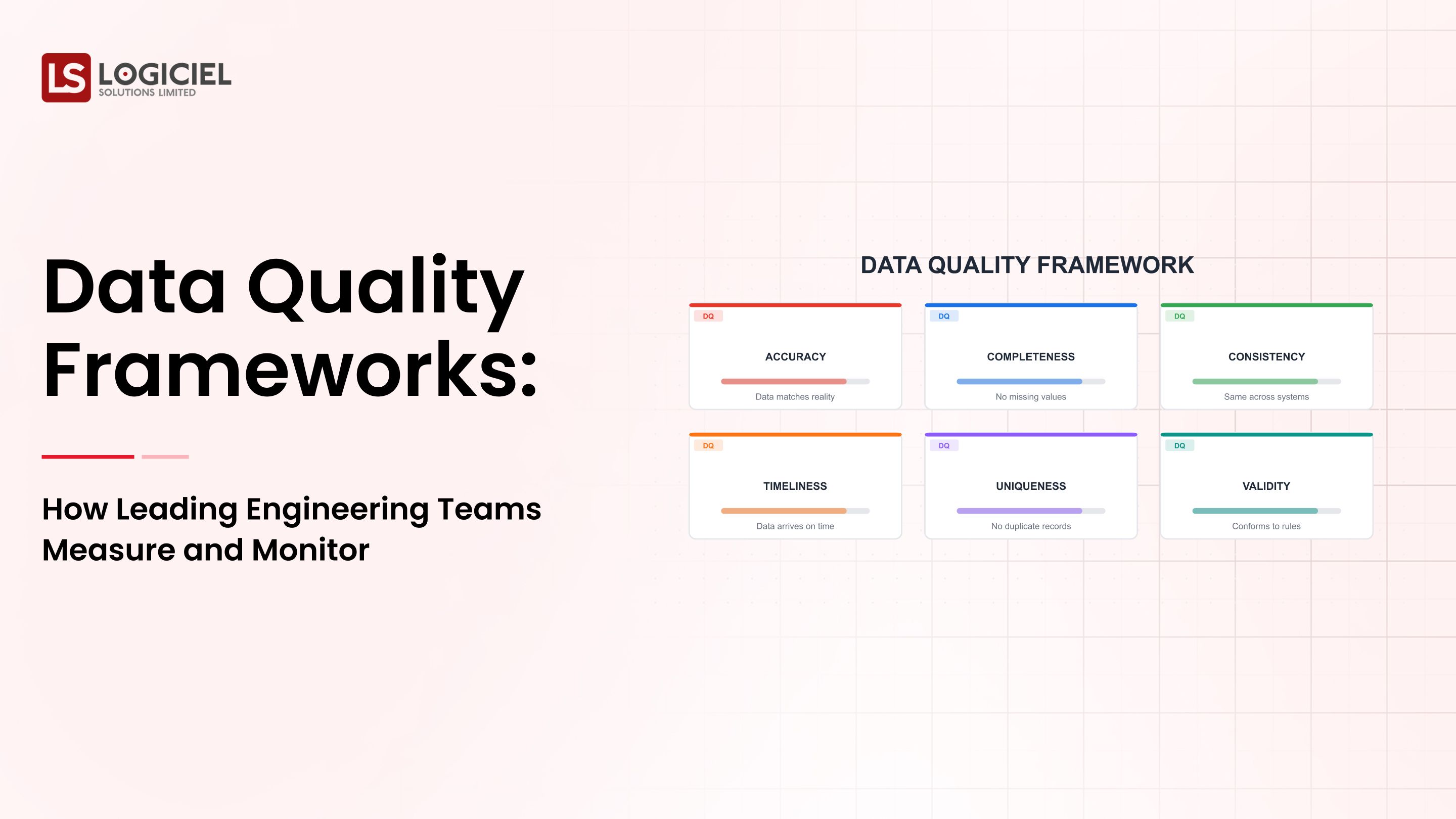

2. Data Validation and Quality Checks

Why it matters: Bad data affects everything downstream.

What to automate:

- Schema validation

- Data quality rules

- Anomaly detection

Impact:

- Early issue detection

- Increased trust

3. Infrastructure Provisioning

Why it matters: Manual setup is slow and inconsistent.

What to automate:

- Resource provisioning

- Environment setup

- Scaling policies

Impact:

- Faster setup

- Reduced errors

4. Monitoring and Alerting

Why it matters: Automation without visibility creates risk.

What to automate:

- Pipeline monitoring

- Alerts and incident tracking

Impact:

- Faster issue detection

- Reduced downtime

5. Metadata and Lineage Tracking

Why it matters: Understanding data flow is critical.

What to automate:

- Lineage tracking

- Metadata updates

- Documentation

Impact:

- Better visibility

- Easier debugging

What NOT to Automate (Initially)

1. Business Logic

Changes frequently and requires flexibility.

2. Early-Stage Pipelines

Unstable systems lead to technical debt if automated too early.

3. Complex Transformations

Require human oversight and are harder to debug when automated.

4. Cross-Team Dependencies

Need clear contracts before automation.

5. Decision-Making Processes

Require context and judgment.

Key takeaway: Automate systems, not thinking.

Core Principles for Automation

- Simplicity: Complex systems fail more often

- Modularity: Break systems into smaller components

- Observability: Maintain full visibility

- Scalability: Design for growth

- Reliability: Prioritize accuracy over speed

Key takeaway: Good architecture reduces the need for excessive automation.

Cloud Platforms for Automation

- AWS: Scalable infrastructure

- Google Cloud: Strong analytics

- Azure: Enterprise-ready solutions

Selection factors:

- Performance

- Pricing

- Integration

- Trade-offs

Key takeaway: No single platform fits all use cases.

Monitoring and Performance

Monitoring tools should provide:

- Real-time performance tracking

- Anomaly detection

- Production comparisons

Why it matters: Early monitoring ensures long-term reliability.

Designing for Scalability

Best practices:

- Design for failure

- Use idempotent processes

- Separate compute and storage

- Optimize data flow

Key takeaway: Plan for scale from the beginning.

Data Lakes in Automated Systems

Consider:

- Storage scalability

- Cost

- Integration

Data lakes are effective for:

- Raw data storage

- Machine learning pipelines

But require strong governance.

The Risk of Over-Automation

Example: A fast-growing company automated everything but lost visibility and control.

Result:

- Complex systems

- Frequent failures

- Difficult debugging

Key takeaway: Automation does not guarantee improvement.

Future of Automation

- AI-driven automation

- Self-healing pipelines

- Unified cloud platforms

- Intelligent orchestration

Conclusion

Automation is not the goal. It is a tool.

The best teams do not automate everything. They automate the right things at the right time.

Logiciel POV

At Logiciel Solutions, we help teams design AI-first data infrastructures that use automation intelligently-balancing speed, reliability, and control.

Our systems are built to scale without complexity and perform reliably even under failure conditions.

Explore how we can help you build smarter, automated data systems.

Evaluation Differnitator Framework

Why great CTOs don’t just build they evaluate. Use this framework to spot bottlenecks and benchmark performance.

Frequently Asked Questions

What is data infrastructure design?

The structure of systems that manage data ingestion, storage, processing, and delivery.

What should be automated first?

Deployment, validation, provisioning, and monitoring.

What should not be automated?

Business logic, early pipelines, and decision-making processes.

Where does automation help most?

In improving efficiency, reliability, and scalability.

What are the risks of automation?

Increased complexity, reduced visibility, and debugging challenges.