Three years ago your team made a logical architectural choice.

Batch pipelines, hourly updates, nightly aggregation: they worked well enough. The systems were predictable, and the overhead was manageable.

Today that same logical choice is costing your team approximately 30-40% of your sprint capacity.

Dashboard delays are blocking real-time use cases. Engineers are stringing together workarounds to simulate streaming behavior on top of batch systems, and the net result is complexity without any real-time capability.

This is why we are discussing data streaming.

If you are a Staff Engineer or Principal Engineer tasked with evaluating event-driven systems, this guide will help you:

- Grasp the principles of data streaming from a practical perspective.

- Know when an event-driven architecture is the right choice.

- Avoid the common pitfalls that come with deploying streaming systems.

Let’s first break down the technical basics.


What Is Data Streaming? What Data Streaming Means in Simple Terms

Data streaming means processing a continuous flow of data as events occur, rather than processing the data in batches.

Batch processing is like checking your email only once a day; data streaming is like receiving emails as they are sent to you.
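To make the contrast concrete, here is a minimal sketch in plain Python. The function names and order amounts are illustrative assumptions, not part of any streaming framework: the batch function waits for the full dataset, while the streaming function emits an updated result after every event.

```python
from typing import Iterable, Iterator, List


def batch_total(events: List[int]) -> int:
    """Batch style: wait until all events are collected, then compute once."""
    return sum(events)


def streaming_totals(events: Iterable[int]) -> Iterator[int]:
    """Streaming style: update and emit the result as each event arrives."""
    running = 0
    for value in events:
        running += value
        yield running


# Hypothetical order amounts arriving over time.
order_amounts = [20, 5, 12]

batch_result = batch_total(order_amounts)               # one answer, at the end
stream_results = list(streaming_totals(order_amounts))  # one answer per event
```

Both approaches reach the same final total; the difference is that the streaming version has a usable answer after every event instead of only at the end.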

Why Data Streaming Exists

Traditional batch systems struggle with:

- Latency

- Real-time decisions

- Incremental updates

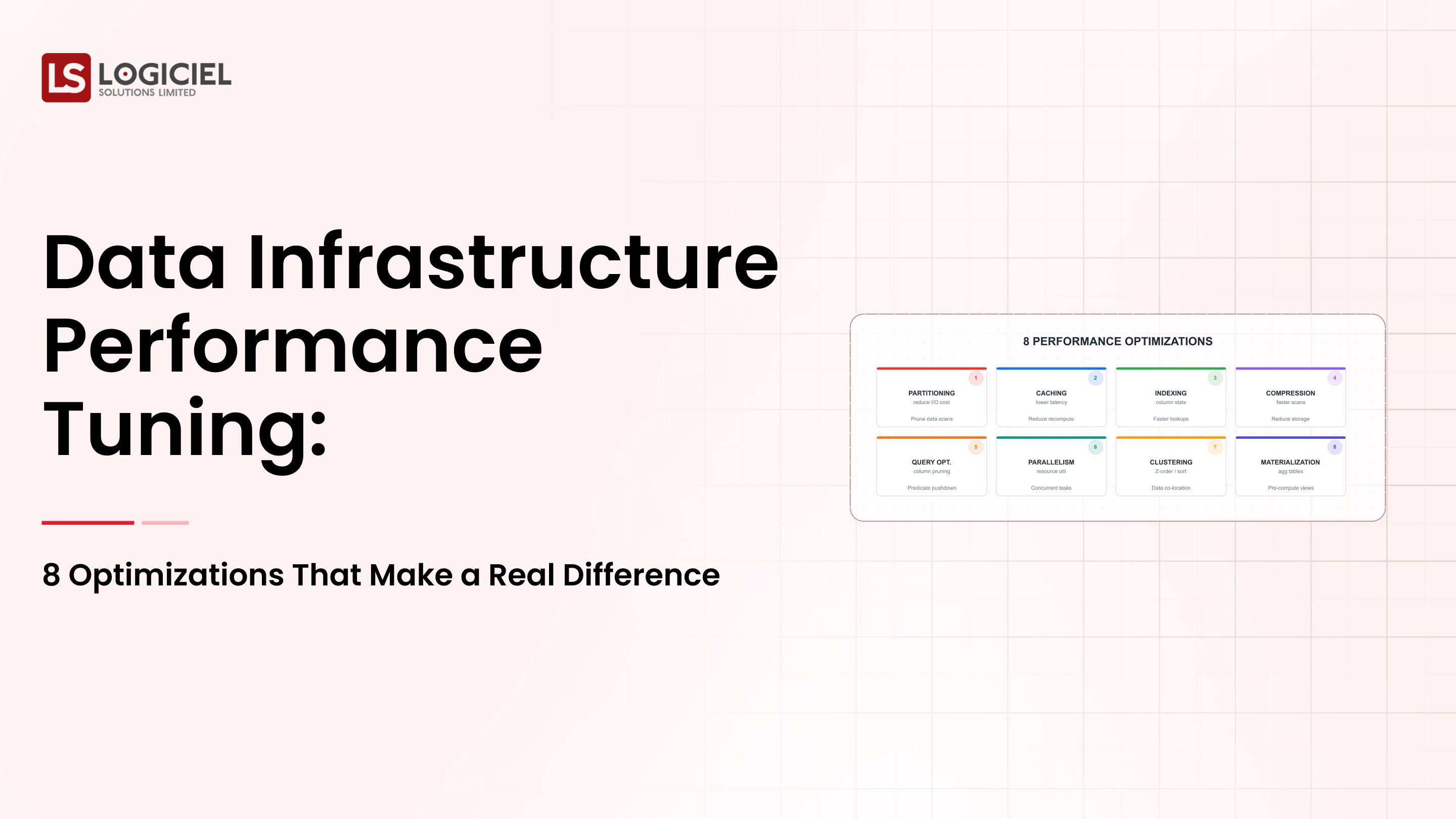

Streaming systems solve these challenges by allowing data to be processed as it is produced.

Core Components of Data Streaming

The main parts of a data streaming system are broken down as follows:

| Component | Function |
| --- | --- |
| Producers | Produce events (apps, sensors, services, etc.) |
| Transport | Transports events to the processing engine (Kafka, etc.) |
| Processing Engine | Processes the events (Flink, Spark, etc.) |
| Consumers | Use the processed data (dashboards, APIs, etc.) |
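The four components can be sketched end to end in a few lines of Python. This is an in-memory illustration only: the `Transport` class is a hypothetical stand-in for a broker such as Kafka, and the sensor readings and the 30-degree alert threshold are assumptions made for the example.

```python
from collections import deque
from typing import Deque, Dict, Iterable, Iterator, List

Event = Dict[str, object]


def producer() -> Iterator[Event]:
    """Producer: emits events (stands in for an app, sensor, or service)."""
    for seq, temp in enumerate([21.5, 22.0, 35.2]):
        yield {"sensor_id": "s1", "seq": seq, "temp_c": temp}


class Transport:
    """Transport: an in-memory queue standing in for a real broker."""

    def __init__(self) -> None:
        self._queue: Deque[Event] = deque()

    def publish(self, event: Event) -> None:
        self._queue.append(event)

    def poll(self) -> Iterator[Event]:
        while self._queue:
            yield self._queue.popleft()


def process(event: Event) -> Event:
    """Processing engine: derives an alert flag from each event."""
    return {**event, "alert": float(event["temp_c"]) > 30.0}


def consume(events: Iterable[Event]) -> List[Event]:
    """Consumer: collects processed events (stands in for a dashboard or API)."""
    return list(events)


transport = Transport()
for event in producer():
    transport.publish(event)

results = consume(process(e) for e in transport.poll())
alerts = [r["seq"] for r in results if r["alert"]]
```

In a real deployment each component would run as its own process and the transport would be durable and distributed; the sketch only shows how responsibilities are divided.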

What Technologies Are Used

- Event streaming => Kafka

- Stream processing => Flink

- Real-time Analytics Systems

What Problems Does Streaming Solve?

- Real-time insights from data.

- Reacting in real-time to events.

- Continuous processing of data.

Key Takeaway: Streaming shifts systems from reactive to real-time and event-driven.

The Importance of Streaming in 2026

Streaming is no longer just an option; many modern systems are now built on it.

1. Users Expect Real-Time.

Users expect:

- Immediate updates to their data.

- Real-time dashboards for their data.

- Instant feedback on their actions.

Batch systems will not meet these user expectations.

2. AI Systems Require Fresh Data.

AI models depend on:

- Real-time signals from sensors.

- Continuous updates of those signals.

- An event-driven process to provide value to the user.

3. Event Volume and Velocity Are Increasing.

Modern systems create:

- Millions of events every minute (e.g., weather systems).

- Continuous data streams.

- Events with complex relationships to each other.

4. Business Impact of Latency.

If events are delayed:

- The business misses revenue opportunities.

- Users who have to wait get a poor experience.

- The longer the delay, the more money is lost.

Before & After Streaming

| Batch Systems | Streaming Systems |
| --- | --- |
| Delayed insights | Real-time insights |
| Periodic updates | Continuous updates |
| Reactive decisions | Proactive actions |

Key Takeaway: Streaming is critical to modern real-time systems.

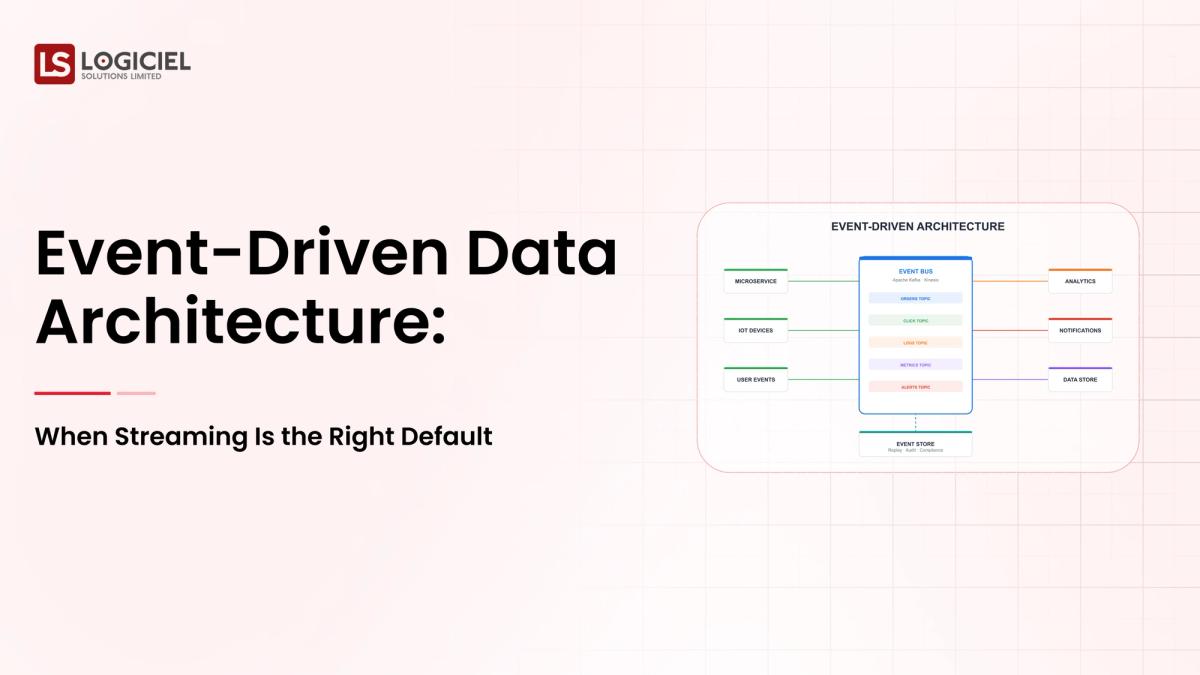

Core Components of Data Streaming: What Are You Building?

Data streaming systems are made of multiple layers.

1. Layer 1 - Ingestion Layer.

The ingestion layer captures events, which can come from:

- Apps

- Devices

- APIs

2. Layer 2 - Streaming Platform.

The streaming platform is the backbone of the streaming system. Its functions include:

- Transporting events from the ingestion layer to the processing engine, for example with Kafka.

- Retaining events from the ingestion layer continuously and scaling to meet demand.

3. Layer 3 - Processing Layer.

The processing layer processes events using either Flink or Spark Streaming (filtering, aggregation, enrichment) and produces the final processed data.
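The three operations named for the processing layer can be sketched in plain Python. This is not the Flink or Spark API; it is a hypothetical illustration in which the event fields (`ts`, `store`, `amount`), the `REGION_BY_STORE` lookup table, and the 60-second tumbling window are all assumptions made for the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple

# Hypothetical lookup table used for enrichment.
REGION_BY_STORE = {"store-1": "EU", "store-2": "US"}


def filter_valid(events: Iterable[dict]) -> Iterator[dict]:
    """Filtering: drop events without a positive amount."""
    return (e for e in events if e.get("amount", 0) > 0)


def enrich(events: Iterable[dict]) -> Iterator[dict]:
    """Enrichment: attach the region for each store."""
    return ({**e, "region": REGION_BY_STORE.get(e["store"], "unknown")} for e in events)


def aggregate_by_window(
    events: Iterable[dict], window_seconds: int
) -> Dict[Tuple[int, str], float]:
    """Aggregation: sum amounts per (tumbling window start, region)."""
    totals: Dict[Tuple[int, str], float] = defaultdict(float)
    for e in events:
        window_start = (e["ts"] // window_seconds) * window_seconds
        totals[(window_start, e["region"])] += e["amount"]
    return dict(totals)


raw_events: List[dict] = [
    {"ts": 0, "store": "store-1", "amount": 10.0},
    {"ts": 30, "store": "store-1", "amount": 5.0},
    {"ts": 45, "store": "store-2", "amount": -1.0},  # removed by the filter
    {"ts": 70, "store": "store-2", "amount": 7.5},
]

window_totals = aggregate_by_window(enrich(filter_valid(raw_events)), window_seconds=60)
```

A real stream processor would emit each window's result as the window closes and would handle late or out-of-order events, which this sketch leaves out.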

4. Layer 4 - Storage Layer.

The Storage Layer stores the processed data in either data lakes or warehouses.

5. Layer 5 - Consumer Layer.

The consumers read or use the processed data.

How Events Get Generated and Received

Events can be generated by Event Stream Processors (ESPs) on behalf of many applications running as separate processes, or directly by an event source (e.g., a web application, a mobile app, or an IoT device).

The events generated by end-user requests can include (among other things):

• Order confirmations;

• Stock availability changes;

• Cart confirmations; and

• Availability notifications.

Design these event sources so that events from all of them can be aggregated together, providing a seamless experience for your end user.

In doing so, an ESP can collect incoming events through APIs from a wide variety of sources and broadcast them as a single flow of data to all appropriate applications for consumption.

To accomplish this, you will need an asynchronous messaging platform or framework (e.g., Kafka, RabbitMQ, or ActiveMQ) that is designed to transport large volumes of data in real time and to scale.
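The collect-and-broadcast pattern described above can be sketched as follows. `EventHub` is a hypothetical, synchronous, in-memory stand-in for a messaging platform, and the source names and event types are illustrative; a real broker would deliver asynchronously and persist events.

```python
from typing import Callable, Dict, List

Event = Dict[str, str]
Handler = Callable[[Event], None]


class EventHub:
    """In-memory stand-in for a messaging platform: many sources in, many subscribers out."""

    def __init__(self) -> None:
        self._subscribers: List[Handler] = []
        self.stream: List[Event] = []  # the single, ordered flow of events

    def subscribe(self, handler: Handler) -> None:
        self._subscribers.append(handler)

    def publish(self, source: str, event_type: str) -> None:
        event = {"source": source, "type": event_type}
        self.stream.append(event)
        for handler in self._subscribers:  # broadcast to every subscriber
            handler(event)


hub = EventHub()
email_outbox: List[str] = []
dashboard_feed: List[str] = []

# Two consuming applications subscribe to the same stream.
hub.subscribe(lambda e: email_outbox.append(f"{e['type']} from {e['source']}"))
hub.subscribe(lambda e: dashboard_feed.append(e["type"]))

# Three different sources publish into the single flow.
hub.publish("web", "order_confirmation")
hub.publish("mobile", "stock_availability_change")
hub.publish("iot", "availability_notification")
```

Because producers only know about the hub, new sources and new consumers can be added without changing each other, which is the main reason to put a messaging platform in the middle.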

How Events Work as a Unit of Work (UoW)

In a typical scenario, an event is generated by an external process, transported to an ESP, and finally consumed by an end-user application in near real time (less than 1 second end to end).

Consider how your business interacts with all of its customers, treating each interaction as a unit of work.

Your customers interact with you through multiple platforms:

• Email;

• Phone;

• Website; and

• Social media

Consider how many times you want to generate an event based on these multiple customer touchpoints.

For example, if stock availability changes on one platform (e.g., the website) but not on the others (e.g., phone, email), shouldn’t the customer still be notified?

To provide a seamless experience for your end user, an event that occurs on one platform must reach them as a notification on the others.

By aggregating all incoming events from multiple sources into an ESP, you can create a single stream of all event data generated by different processes in near real time (less than 1 second). This lets you notify customers almost instantly of events triggered by their interactions.

Using Streaming as a One-Time Setup

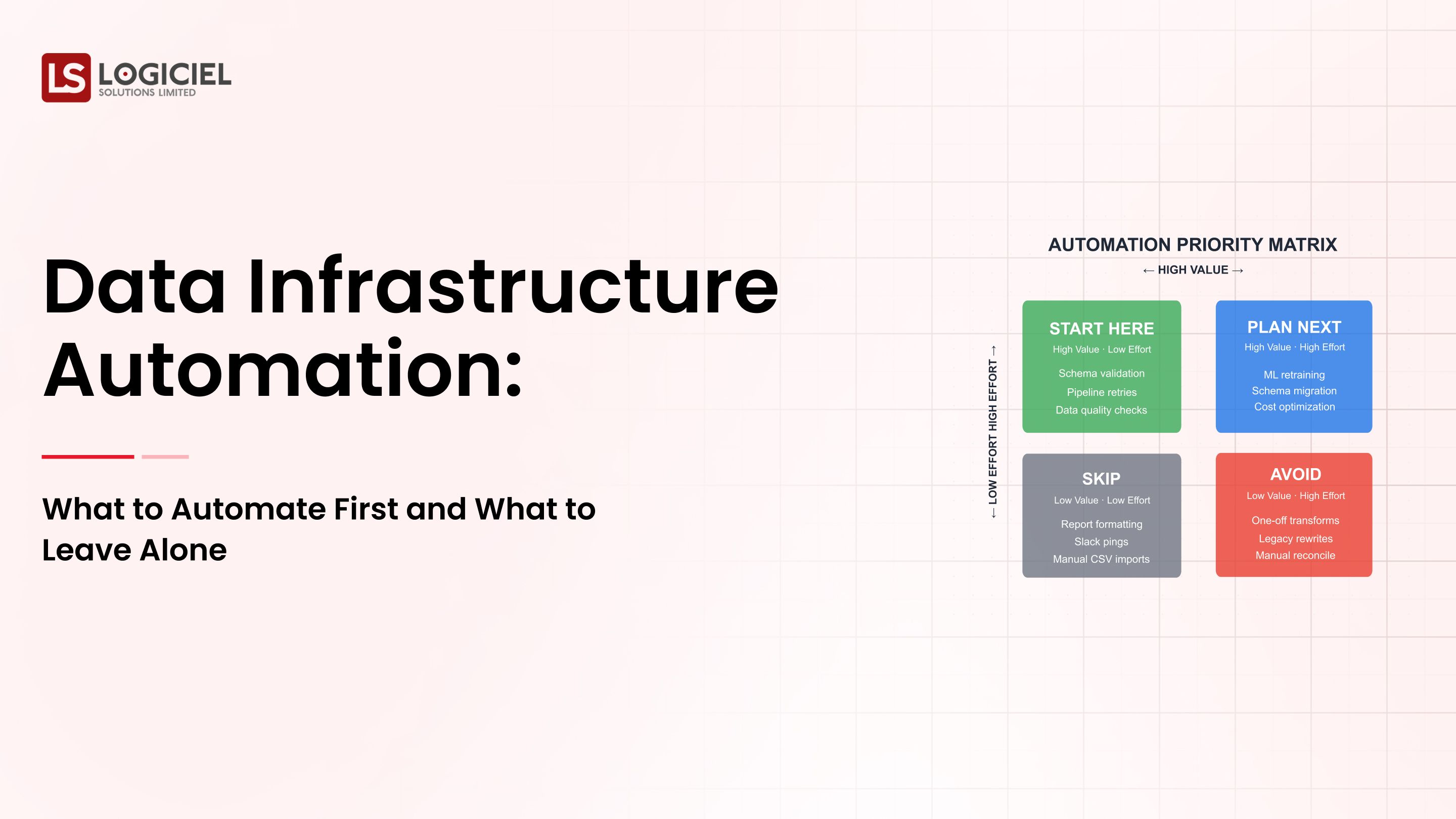

Streaming is not a one-time setup. It needs continuous updating, which has two aspects:

- Tuning

- Optimization

Either can take many forms. Most failures related to data streaming have more to do with design than with technology.

The following best practices are used by high-performing organizations when working with data streaming:

1) Have clarity around what you want to achieve.

Use streaming only if you must get data in real time, that is, if you need low-latency data delivery.

2) Carefully design your events as they will form the data structure of your business.

Make sure that each event has a clearly defined schema and that the schema format is consistent for every event you have. You should also include versioning support in your event structure.
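As one possible way to apply this, the sketch below defines a versioned event with a fixed schema and validates it on arrival. The `StockChanged` event, its fields, and the rule that version 2 adds an optional `warehouse` field are all hypothetical; production systems often use a schema registry with a format such as Avro, Protobuf, or JSON Schema instead of hand-written checks.

```python
import json
from dataclasses import dataclass

SUPPORTED_VERSIONS = {1, 2}
REQUIRED_FIELDS = {"event_id", "sku", "available"}


@dataclass(frozen=True)
class StockChanged:
    """Hypothetical event type with an explicit schema version."""

    schema_version: int
    event_id: str
    sku: str
    available: int
    warehouse: str = "default"  # added in version 2; the default keeps v1 events readable


def parse_stock_changed(raw: str) -> StockChanged:
    """Validate the version and required fields, then build the typed event."""
    data = json.loads(raw)
    version = data.get("schema_version")
    if version not in SUPPORTED_VERSIONS:
        raise ValueError(f"unsupported schema_version: {version!r}")
    missing = REQUIRED_FIELDS - data.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return StockChanged(
        schema_version=version,
        event_id=data["event_id"],
        sku=data["sku"],
        available=int(data["available"]),
        warehouse=data.get("warehouse", "default"),
    )


v1_event = parse_stock_changed(
    '{"schema_version": 1, "event_id": "e1", "sku": "A-100", "available": 3}'
)
v2_event = parse_stock_changed(
    '{"schema_version": 2, "event_id": "e2", "sku": "A-100", "available": 0, "warehouse": "berlin"}'
)
```

Adding new fields with defaults, as version 2 does here, lets older producers and newer consumers coexist while a schema change rolls out.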

3) Build your systems to accommodate failure.

When implementing streaming systems, build in features that allow them to handle failure, such as retry mechanisms, dead letter queues, and monitoring.
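A minimal sketch of how these three features fit together is shown below. The `process_with_retry` function, the `handle` handler, and the event IDs are hypothetical; a real consumer would usually add a backoff delay between attempts and publish dead letters to a separate topic or queue rather than a list.

```python
from typing import Callable, Dict, List


def process_with_retry(
    events: List[dict],
    handler: Callable[[dict], None],
    max_attempts: int = 3,
) -> Dict[str, object]:
    """Try each event up to max_attempts times; park persistent failures in a dead letter queue."""
    dead_letters: List[dict] = []
    metrics = {"processed": 0, "retries": 0, "dead_lettered": 0}  # basic monitoring counters

    for event in events:
        for attempt in range(1, max_attempts + 1):
            try:
                handler(event)
                metrics["processed"] += 1
                break
            except Exception as exc:  # sketch only; catch narrower errors in real code
                if attempt == max_attempts:
                    dead_letters.append({"event": event, "error": str(exc)})
                    metrics["dead_lettered"] += 1
                else:
                    metrics["retries"] += 1

    return {"dead_letters": dead_letters, "metrics": metrics}


# Hypothetical handler: fails once for a flaky event, always for a malformed one.
flaky_calls = {"count": 0}


def handle(event: dict) -> None:
    if event["id"] == "flaky":
        flaky_calls["count"] += 1
        if flaky_calls["count"] == 1:
            raise RuntimeError("temporary failure")
    if event["id"] == "bad":
        raise ValueError("malformed payload")


outcome = process_with_retry([{"id": "ok"}, {"id": "flaky"}, {"id": "bad"}], handle)
```

The transient failure recovers on retry, while the malformed event ends up in the dead letter queue so it can be inspected later without blocking the rest of the stream.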

4) Automate all processes.

When using streaming systems, it is important to automate processes such as validation, deployment, and scaling.

5) Leverage AI-First Engineering Systems.

Incorporate systems that allow you to transition away from manual operations.

Organizations that use these types of AI systems for data streaming aim to predict failures, optimize pipelines, and improve performance. This is an area where Logiciel demonstrates value in working with teams.

Instead of managing disparate data streaming systems, organizations begin adopting predictive and intelligent architecture that can scale efficiently.

In closing, success with streaming comes from discipline and good system design.

Conclusion

The introduction of data streaming has significantly impacted how data architecture is viewed today.

There are three key conclusions regarding data streaming:

- Streaming enables real-time, event-driven systems.

- Streaming is important for powering AI, analytics, and user experience.

- Success with streaming depends more on good design principles than on good streaming tools.

Streaming systems are complex, and it takes time for new designs and processes to become part of the organization’s culture.

Ultimately, utilizing data streaming provides many advantages:

- Improved speed of insight;

- Improvements in analytics;

- Systems that can scale;

- Improved user experience.

Next Steps (Call To Action)

If you own a system that is struggling with latency or has real-time requirements, evaluate the architecture of your current system(s).

More Detailed Information:

- Compare and contrast Apache Kafka and Apache Flink versus cloud-native data streaming

- Explore the root causes of data infrastructure failures within your organization

- Understand how to build a modern data stack

At Logiciel Solutions, we help the teams we work with build AI-powered streaming systems that deliver real-time data insight without adding unnecessary complexity to their environment.

When designing a streaming system, Logiciel utilizes automation (removing manual processes), observability (visibility into performance issues) and intelligent optimization.

Want to see how to incorporate streaming data into your organization’s processes? Let us show you how.


Frequently Asked Questions

What is data streaming?

Data streaming is the real-time processing of data as it is being created, enabling organizations to gain real-time insight and take action.

When should your organization utilize data streaming vs batch processing?

Organizations should use streaming only when latency is vital to their operations, for example for real-time analytics, fraud detection, or live dashboards.

Is streaming better than batch processing?

Not always. Streaming is ideal for scenarios that require real-time processing, whereas batch processing works well for routine analysis.

Which tools are used with streaming?

Apache Kafka is commonly used for event streaming, while Apache Flink is commonly used for stream processing.

What is the greatest obstacle or challenge in the use of streaming systems?

For most organizations, managing complexity is the greatest challenge in using a streaming system. That complexity shows up in event design, monitoring, and the reliability of the streaming system.