Why Is This Important?

The time is 2:21AM. Another Alert. A critical data pipeline has succeeded, but downstream dashboards are delayed again, queries are running slower than expected and overall cost increases are mounting...and once again the team has spent the whole night debugging performance.

This situation illustrates poor data infrastructure management.

Modern data infrastructures do not fail due to a lack of tools; they fail because they aren't optimized. As they grow larger, their inefficiencies get more pronounced. What works on a 1TB database becomes a performance bottleneck when that database grows to 10TB.

To help you understand what data infrastructure management means, the eight practical optimizations that you will learn here will provide quantifiable benefits for performance and reliability.

Before we go on, let's explore the definition of data infrastructure management.

Agent-to-Agent Future Report

Understand how autonomous AI agents are reshaping engineering and DevOps workflows.

Data Infrastructure Management In Plain English

The true definition of data infrastructure management is designing, monitoring and optimizing your data infrastructures to meet both performance and reliability needs while allowing growth.

An analogy would be if your data infrastructure is like a highway; then data infrastructure management is what keeps traffic flowing without creating congestion.Why is It Important

There are many negative effects of bad management:

- Slow pipelines

- High cost for queries

- More failures

Four Main Components of Management

Component The Purpose of the Component Data Pipeline Move and transform data Storage System Store structured or unstructured data Processing Engine Execute transformations and queries Observability Layer Monitor how performance and reliability

What the Management Solves

- Lack of Efficient Use of Resources

- Need Reliable Pipelines

- Need Scalable Systems

Conclusion: The Management of Data Infrastructure will keep your systems fast, reliable, and cost-effective.

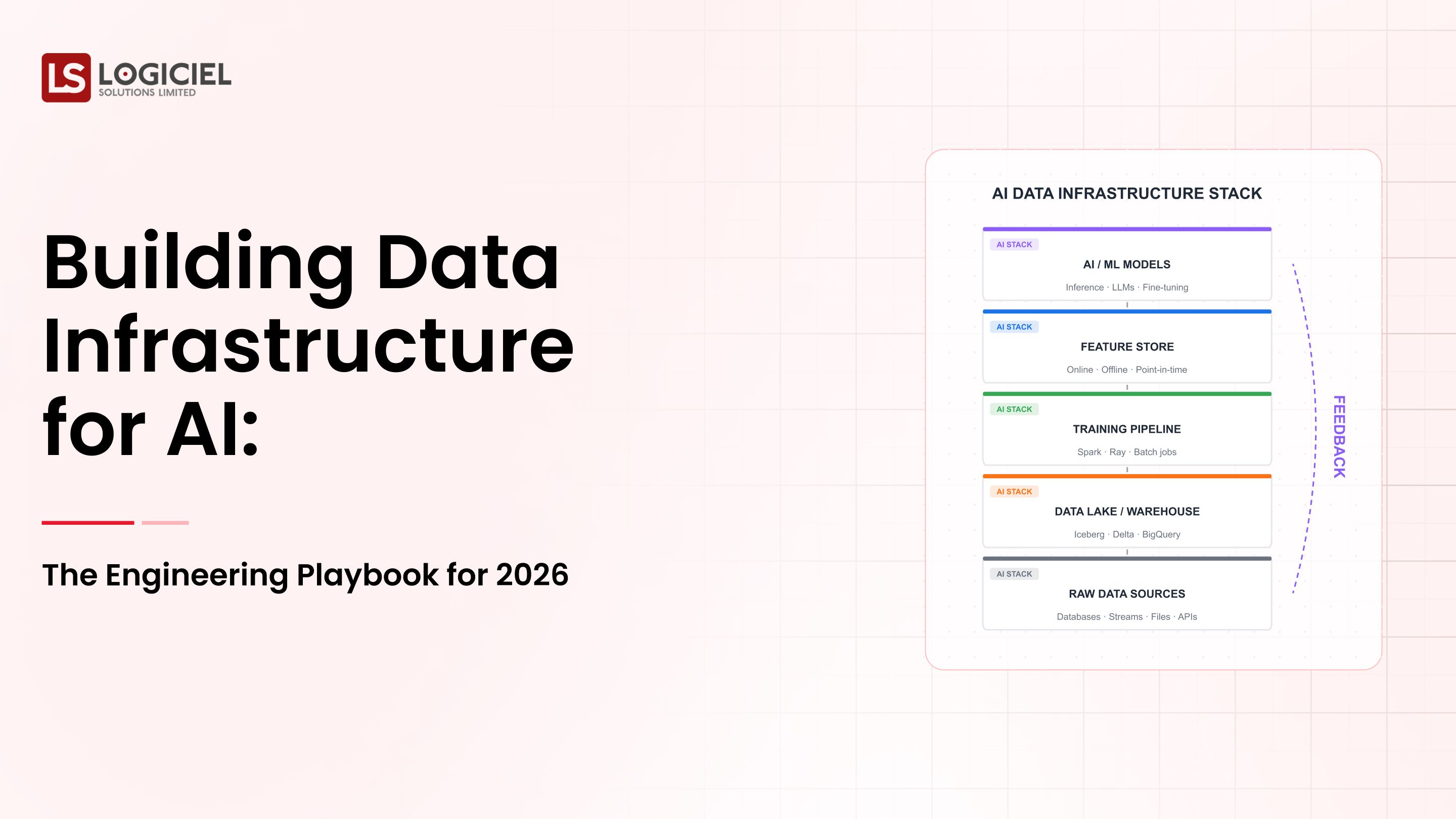

Why Data Infrastructure Management Will Matter More in 2026

1. Performance Demand for AI Workloads

For AI pipelines, there is a demand for:

- Fast access to data

- Low latency

- Reliable pipelines

2. Rapid Increase in Volume of Data

All types of systems now process:

- Very large amounts of data

- Ongoing data streams

- Complex data transformations

3. Increased Cost Pressure

The cloud has cost increases due to:

- Inefficient queries

- Poor resource allocation

- Redundant processing

4. Performance Impacts the Bottom Line

Slow systems result in:

- Delayed business decisions

- Poor user experience

- Loss of revenue

Before Optimization as to After Optimization

Examples of Performance Measures between before optimization and after optimization

- Unoptimized System Optimise System

- Slow query times Fast query times

- High costs Efficient use of resources

- requent pipeline failures Reliable pipelines

Conclusion: Performance optimization is a responsibility of engineering.

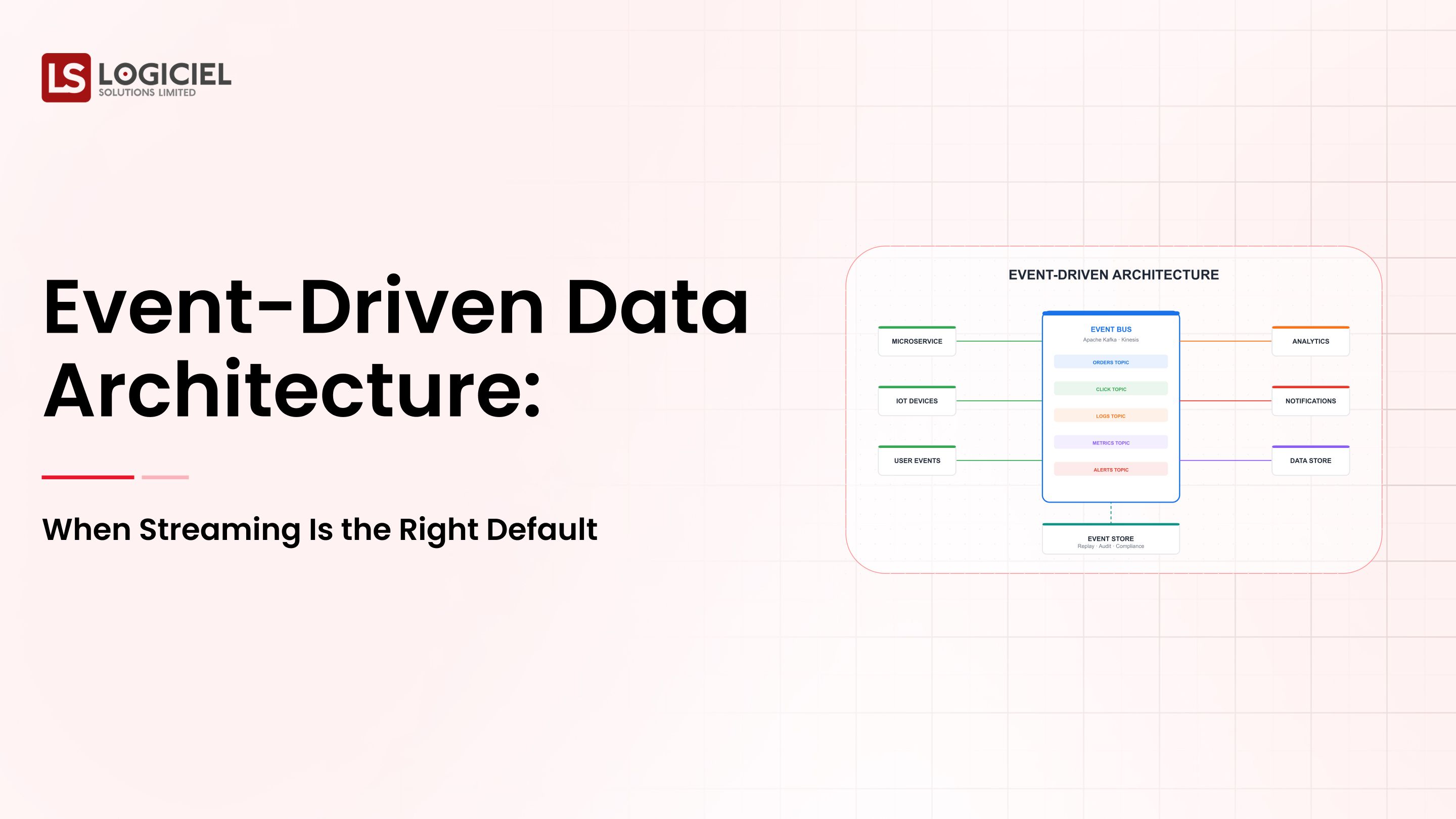

The Core Components of Data Infrastructure Management - Where Building and What You are Building

1. Ingestion Layer

Responsible for handling incoming data from:

- APIs Streaming

- systems

- Databases

2. Storage Layer

Includes:

- Data lakes

- Warehouses

3. Processing Layer

Responsible for performing transformations using:

- Batch processing

- Streaming pipelines

4. Orchestration Layer

Responsible for managing workflows that manage:

- Scheduling

- Dependencies

5. Observability Layer

Tracks:

- Latency

- Errors

- Resource usage

How It Works as a Unit

Data is ingested and stored efficiently, then it is processed, transformed, and monitored.

Conclusion: How well they (layers) are optimized determines whether you will achieve the performance you desireData Infrastructure Management in The Real World

An organization has a Data Platform as a SaaS offering and therefore has to ingest User events, Transactional, and Analytical data and process them through the following stages:

Ingesting your Data into your Pipeline Store your Data into either a Data Lake or Data Warehouse Transform your Data through the following types of transformation processes; Aggregation Join

The Data is consumed through Dashboards and Reports from the Data Warehouse or Data Lake.

Successful Metrics:

- Quick access by users to each of their Queries

- Reliable Processing Systems

Failed Metrics:

- Inefficient Queries

- Bad Partitioning

- No Testing

Key Takeaway: There are bottlenecks identified in each step of the process

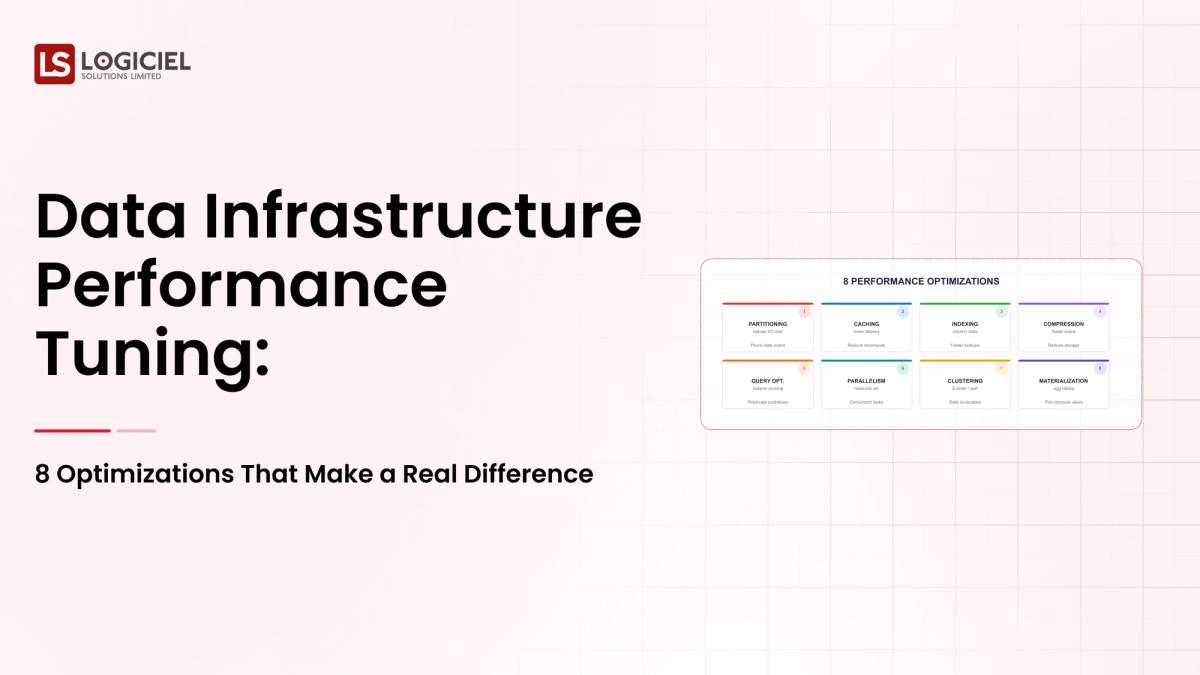

8 Performance Optimizations to make a Positive Impact

Now let’s discuss how to optimize your performance.

1. Optimize Your Data Partitioning

Inefficiently partitioned data will cause:

- Inefficient and long-running Queries

- Full Table Scans

Examples of techniques for best practices of good partitioning would be:

- Time-based partitioning

- Dynamically Partitions

2. Reduce Your Data Movement

Minimize:

- Cross-region data transfers

- Redundant data processing

3. Optimize Your Data Queries

Do NOT do the following:

- Use redundant Joins to get results

- Access Half Million Row data sets for Retrieval

DO:

- Filter Data Upfront

- Aggregate Data Efficiently

4. Utilize a Columnar Format for your Data Storage

Columnar formats like Parquet provide:

- Faster Performance than Regular/Extensional Formats

- Lower Cost of Storage

5. Caching Frequently Run Queries and Intermediate Results

Cache:

Frequently Run Queries and Intermediate Result Sets

6. Monitor and Alert on Your Data Processing Latency and Error Rate

Setup Alerts for Key Metrics

7. Utilize Automation to allow for Dynamically Scaling your Data Processing Infrastructure

Use:

- Auto-scaling Systems

- Dynamically Allocate Resources as Needed

8. Improve Data Observability

You should implement:

- Data Quality Checks

- A Pipeline Monitoring Tool

Treating optimization as a one-off is a common mistake. The role of performance tuning is to be an ongoing commitment to helping organizations analyze, update, and modify their technologies continually to optimize their infrastructure for maximum performance and efficiency.

Best practices for high-performing teams that do this:

- Automate the optimization of various parts of the system, including: a. Query tuning b. Resource allocation

- Use infrastructure by treating it as code. This includes: a. Version control b. Reproducibility

- Create mechanisms to recover from failures, including: a. Retry mechanisms b. Alerts to notify teams when there are failures

- Establish Service Level Agreements (SLAs) to monitor: a. Performance b. Reliability

- Implement AI-first engineering systems, which allow for: a. Detection of inefficiencies b. Optimization of performance c. Prediction of failures

What sets Logiciel apart from others is that instead of relying on manual performance tuning, high-performing teams use intelligent systems that will continually optimize their infrastructure.

In summary, high-performing teams recognize that success comes from an emphasis on automation and intelligence in the systems they use.

Summary

Performance of data infrastructure is critical in today’s world. There are three main points to remember:

- Performance issues will grow exponentially as the system grows.

- The optimization of one system can achieve benefits for many other systems.

- Performance improvement is essential and a continuous process.

When performance tuning is done correctly, it will provide:

- Faster data pipelines

- Lower operating costs

- More reliable data

- Higher engineering velocity

Call to Action

If your data systems are slow, the next step is to identify areas that are causing performance bottlenecks. For more information, check out:

- Reasons Why Your Data Infrastructure Isn't Working: Causes & Solutions

- Guide to Running a Proof-of-Concept with a Data Infrastructure

- Evaluating Vendors of Data Infrastructure Solutions

At Logiciel Solutions, we are a partner with organizations in building AI-first, automated data systems that optimize performance through engineering expertise and reduced complexities in delivering data efficiently and reliably. Find out how Logiciel can help you optimize your data infrastructure.

Evaluation Differnitator Framework

Why great CTOs don’t just build they evaluate. Use this framework to spot bottlenecks and benchmark performance.

Frequently Asked Questions

What is Data Infrastructure Management?

Data Infrastructure Management is the strategic design, optimization, and ongoing management of data systems to ensure optimal performance, reliability, and scalability.

Why is Performance Tuning Important?

Performance tuning is essential because it drives higher performance, lower costs, and ensures that data is processed reliably.

What are Common Causes of Slow Data Systems?

There are numerous reasons why data systems take a long time to run, including poorly designed partitions, poorly written queries, and a lack of system monitoring.

How Often Should You Optimize Data Infrastructure?

Optimizing your data infrastructure should be a continuous process, not just a one-time activity.

What are Some Common Tools Used to Optimize Performance?

Tools that are commonly used to optimize performance include observability platforms, query analyzers/optimizers, and systems that are capable of auto-scaling.