It’s 2:34 a.m.

The model that was producing stellar results a week ago is now producing unreliable predictions. The problem is not the model but the data pipeline. A schema change went unnoticed. Feature data is inconsistent. No alert told you there was an issue.

For teams building data infrastructure for AI systems, this is an everyday reality.

AI systems amplify the impact of data issues in ways that traditional analytics systems do not. Minor inconsistencies create significant breakdowns. Pipelines that worked well for serving dashboards don't provide the same guarantee of reliability for machine learning.

If you are a CTO or lead data engineer working on AI systems, this playbook will show you:

What differentiates AI data infrastructure from traditional data infrastructure.

Ways to design infrastructure that enables consistent and reliable AI workflows.

Ways to avoid the most likely causes of inconsistent or unreliable AI systems at scale.

Let's begin with the central challenge.

Enterprise Data Challenge: What Is Unique

Building an enterprise data infrastructure for AI is about more than expanding existing systems. You will need to address entirely new constraints before your data infrastructure is adequate to support AI.

1. Sensitivity and accuracy requirements associated with data

AI systems require data that is:

- High quality and adequately labeled

- Consistently defined

- Reproducible with respect to the historical record

Even small errors in these areas can affect model performance.
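
As a minimal sketch of what checking these requirements could look like, the snippet below validates a batch of records against an expected schema before it reaches a model. The field names (`customer_id`, `amount`, `label`) and the `validate_records` helper are hypothetical and chosen only for illustration.

```python
from typing import Any

# Hypothetical expected schema: field name -> required Python type.
EXPECTED_SCHEMA: dict[str, type] = {
    "customer_id": str,
    "amount": float,
    "label": int,
}


def validate_records(records: list[dict[str, Any]]) -> list[str]:
    """Return a list of human-readable problems found in the records."""
    problems: list[str] = []
    for index, record in enumerate(records):
        for field, expected_type in EXPECTED_SCHEMA.items():
            if field not in record or record[field] is None:
                problems.append(f"record {index}: missing {field}")
            elif not isinstance(record[field], expected_type):
                problems.append(
                    f"record {index}: {field} should be {expected_type.__name__}"
                )
    return problems


batch = [
    {"customer_id": "c1", "amount": 10.5, "label": 1},
    {"customer_id": "c2", "amount": "10.5", "label": None},  # two problems
]
problems = validate_records(batch)
print(problems)
```

Running a check like this at the start of a pipeline turns a silent inconsistency into an explicit failure that can be alerted on.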

2. The complexity introduced by managing batch and real time data

AI systems often require different kinds of data depending on the use case (e.g. batch data for training predictive models and real-time data for serving them). In AI systems, both must be handled in parallel.

3. The difficulty of developing new inputs for new models

AI models are built by statistical means from large volumes of data, and their data inputs change continuously. Changing those inputs for a new model can cause problems for existing models that use the same data, so new models need new inputs of their own. Each new model therefore adds a level of complexity, and its inputs may need to be rebuilt periodically from different data sources.

4. Systems that span more than one area of the business

Enterprise businesses hold many different types of information in systems such as:

- Customer Relationship Management (CRM) systems

- Billing systems

- Product catalogs

Integrating these data sources into a single view is difficult and requires a significant investment of time.

5. Increased costs related to failure

A failure in an AI system affects:

- The quality of the product

- Customer satisfaction

- Revenue

Limitations of Traditional Data Systems

Traditional data systems have a number of disadvantages, including:

- There is little or no visibility into data

- There is no ability to trace the lineage of data

- Data ever attainable

The most important point here is that AI data infrastructure must be more reliable, more visible, and more scalable than traditional data infrastructure.

Regulatory And Compliance Impacts

Enterprise AI data must comply with the requirements of many different regulatory bodies and organizations.

1. Privacy and Data Residency

Requirements include:

- Compliance with GDPR

- Data localization

- Data access control

2. Auditability and Lineage

Includes the following tasks:

- Track the source of your data

- Track how that data is transformed

- Track your model inputs
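
The three tasks above can be sketched as a small lineage record that travels with the data. This is an illustrative sketch only: `LineageRecord`, `apply_step`, `fingerprint`, and the `billing_export` source name are hypothetical and not part of any specific lineage tool.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class LineageRecord:
    """Hypothetical record describing where a dataset came from."""
    source: str
    transformations: list[str] = field(default_factory=list)
    model_input_hash: str = ""


def fingerprint(rows: list[dict]) -> str:
    """Stable hash of the rows so the exact model inputs can be audited later."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def apply_step(rows, record, name, func):
    """Apply a transformation to every row and log its name in the lineage."""
    record.transformations.append(name)
    return [func(row) for row in rows]


rows = [{"amount": "10.50"}, {"amount": "3.25"}]
lineage = LineageRecord(source="billing_export")  # assumed source name

rows = apply_step(rows, lineage, "cast_amount_to_float",
                  lambda r: {**r, "amount": float(r["amount"])})
rows = apply_step(rows, lineage, "add_amount_cents",
                  lambda r: {**r, "amount_cents": round(r["amount"] * 100)})
lineage.model_input_hash = fingerprint(rows)
print(lineage)
```

Storing the hash alongside a trained model makes it possible to show an auditor exactly which inputs the model saw.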

3. Retention Policy

Make sure your data is:

- Kept in an appropriate place

- Deleted when necessary
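
A retention policy can be expressed as data and checked automatically. The sketch below is one possible shape, assuming made-up categories and retention periods; the real values depend on the regulations and contracts that apply to your business.

```python
from datetime import datetime, timedelta, timezone

# Assumed retention periods per data category (illustrative values only).
RETENTION = {
    "transactions": timedelta(days=365 * 7),
    "web_logs": timedelta(days=90),
}


def is_expired(category: str, created_at: datetime, now: datetime) -> bool:
    """True when a record has outlived its retention period."""
    return now - created_at > RETENTION[category]


def split_by_retention(records, now):
    """Separate records to keep from records that are due for deletion."""
    keep, delete = [], []
    for record in records:
        expired = is_expired(record["category"], record["created_at"], now)
        (delete if expired else keep).append(record)
    return keep, delete


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "category": "web_logs",
     "created_at": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"id": 2, "category": "web_logs",
     "created_at": datetime(2024, 5, 1, tzinfo=timezone.utc)},
    {"id": 3, "category": "transactions",
     "created_at": datetime(2020, 1, 1, tzinfo=timezone.utc)},
]
keep, delete = split_by_retention(records, now)
print([r["id"] for r in keep], [r["id"] for r in delete])
```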

4. Compliance Built into the Design of the System

Compliance impacts:

- How data is stored

- How the data is extracted

- How the data is accessed

Build versus Retrofit

Building for compliance from day one:

- Decreases the long-term costs

- Avoids the need to rework the system later to make it compliant

Retrofitting after the fact creates many issues:

- Increased complexity

- Slower teams while they work to reach compliance

Takeaway: Compliance is a requirement for any system or product that has to meet regulatory standards.

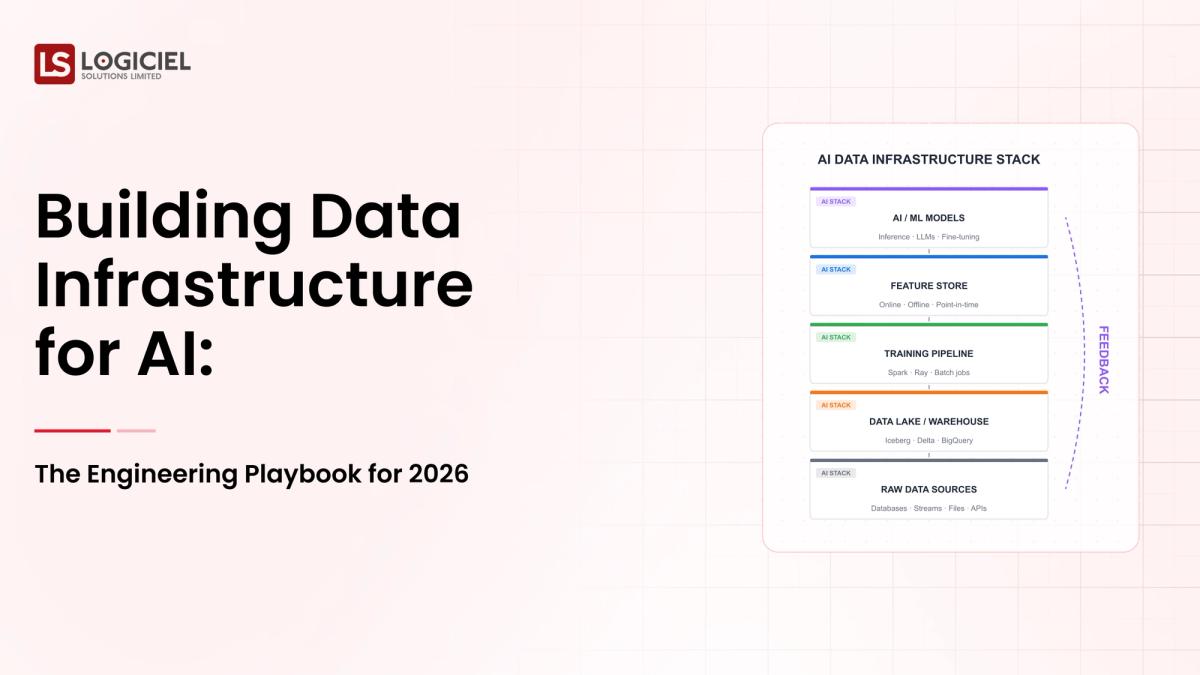

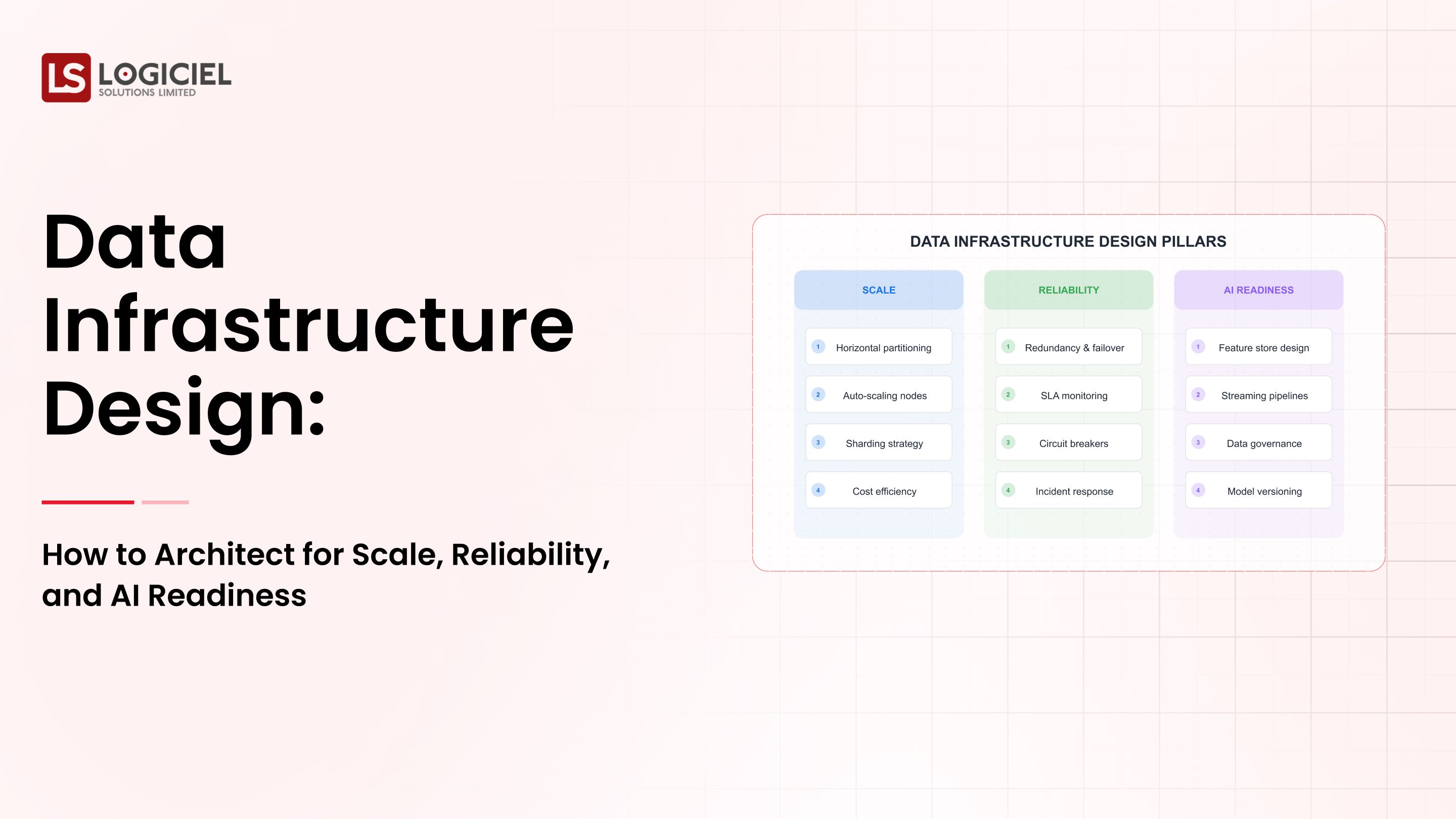

Enterprise Data Architecture

There are many ways to build a scalable and reliable system. The patterns below are common building blocks.

1. Hybrid System (Batch + Streaming)

Batch: For building training data

Streaming: To provide real-time inference
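
One way to keep the two paths consistent is to share a single feature definition between them. The sketch below assumes a hypothetical `compute_features` function and made-up event fields; the batch path processes a full historical dataset, while the streaming path handles one event at a time.

```python
from typing import Iterable, Iterator


def compute_features(event: dict) -> dict:
    """Single feature definition shared by the batch and streaming paths."""
    return {
        "user_id": event["user_id"],
        "amount_usd": event["amount_cents"] / 100,
        "is_large": event["amount_cents"] >= 100_000,
    }


def batch_path(events: list[dict]) -> list[dict]:
    """Batch: process a full historical dataset to build training data."""
    return [compute_features(e) for e in events]


def streaming_path(events: Iterable[dict]) -> Iterator[dict]:
    """Streaming: yield features one event at a time for real-time inference."""
    for event in events:
        yield compute_features(event)


history = [
    {"user_id": "u1", "amount_cents": 2_500},
    {"user_id": "u2", "amount_cents": 150_000},
]
training_rows = batch_path(history)
live_rows = list(streaming_path(iter(history)))
print(training_rows == live_rows)
```

Because both paths call the same function, the features a model sees at inference time match the ones it was trained on.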

2. Separate Storage and Processing

This allows:

- Easy scaling up and down

- Flexibility

- Cost savings

3. Feature Store Layer

A single point of reference for:

- Series of features

- Overall data consistency
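
To make the idea concrete, here is a toy in-memory feature store. It is a sketch under simplifying assumptions (no persistence, no versioning), and the `FeatureStore` class and feature names are hypothetical rather than the API of any real product.

```python
from typing import Any, Callable


class FeatureStore:
    """Toy in-memory feature store: one registry of feature definitions."""

    def __init__(self) -> None:
        self._definitions: dict[str, Callable[[dict], Any]] = {}

    def register(self, name: str, func: Callable[[dict], Any]) -> None:
        """Register a feature once; redefinition is rejected for consistency."""
        if name in self._definitions:
            raise ValueError(f"feature {name!r} is already defined")
        self._definitions[name] = func

    def get_features(self, entity: dict, names: list[str]) -> dict[str, Any]:
        """Compute the requested features for one entity."""
        return {name: self._definitions[name](entity) for name in names}


store = FeatureStore()
store.register("order_count", lambda c: len(c["orders"]))
store.register("total_spend", lambda c: sum(c["orders"]))

customer = {"id": "c1", "orders": [20.0, 35.0]}
# Training and serving code both read from the same definitions.
training_features = store.get_features(customer, ["order_count", "total_spend"])
serving_features = store.get_features(customer, ["order_count", "total_spend"])
print(training_features)
```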

4. Using The Data Lakehouse Model

A way to combine both:

- The scalability of a data lake

- The reliability of a data warehouse.

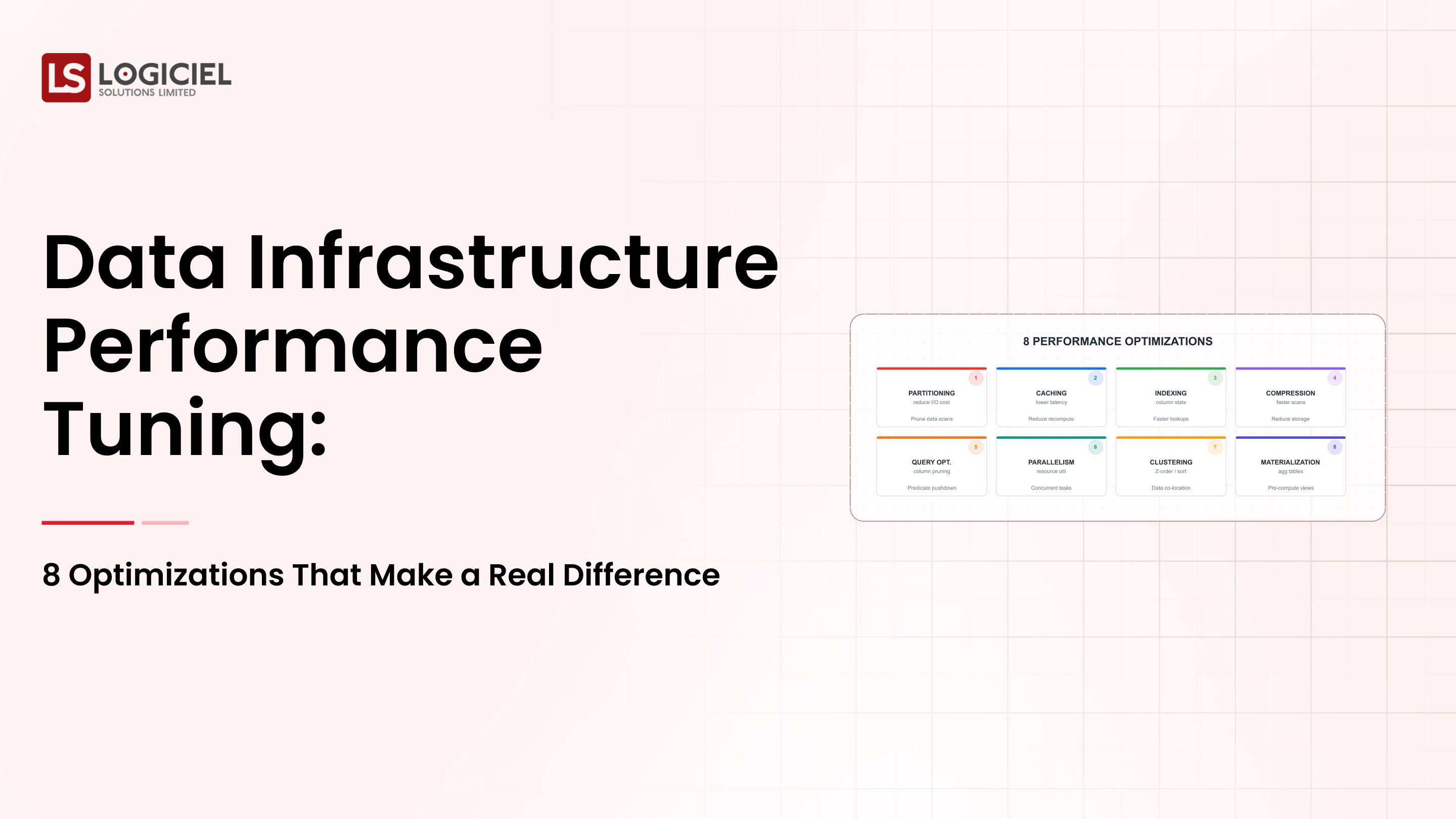

5. Observability Layer

Use it to measure:

- Quality of your data

- Quality of your pipeline

- The inputs into your model
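
A minimal sketch of such checks follows, assuming each batch arrives as a list of dictionaries. The column names, the 10% null-rate threshold, and the helper functions are illustrative assumptions, not recommended values.

```python
def null_rate(rows: list[dict], column: str) -> float:
    """Fraction of rows where the column is missing or None."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(column) is None)
    return missing / len(rows)


def schema_drift(expected: set[str], rows: list[dict]) -> dict[str, set[str]]:
    """Compare the columns actually observed with the expected set."""
    observed = set().union(*(row.keys() for row in rows)) if rows else set()
    return {"missing": expected - observed, "unexpected": observed - expected}


def check_batch(rows, expected_columns, max_null_rate=0.1):
    """Return alert messages; an empty list means the batch looks healthy."""
    alerts = []
    drift = schema_drift(expected_columns, rows)
    for kind in ("missing", "unexpected"):
        for column in sorted(drift[kind]):
            alerts.append(f"{kind} column: {column}")
    for column in sorted(expected_columns - drift["missing"]):
        rate = null_rate(rows, column)
        if rate > max_null_rate:
            alerts.append(f"null rate for {column} is {rate:.0%}")
    return alerts


rows = [
    {"user_id": "u1", "amount": 10.0, "amt_currency": "USD"},
    {"user_id": "u2", "amount": None, "amt_currency": "USD"},
]
alerts = check_batch(rows, expected_columns={"user_id", "amount", "currency"})
print(alerts)
```

In this example the renamed column is reported as both a missing and an unexpected column, which is the kind of schema change that otherwise goes unnoticed.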

Leveraging Multiple Sources of Data

To do that, you must:

- Normalize data sources

- Use a consistent schema

- Track lineage.
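
The three steps above can be sketched for two sources as follows. The source names, field mappings, and sample rows are hypothetical; a real integration would also need to handle type differences and conflicting values.

```python
# Hypothetical per-source field mappings onto one shared schema.
FIELD_MAPS = {
    "crm": {"CustomerID": "customer_id", "Email": "email"},
    "billing": {"cust_id": "customer_id", "email_address": "email"},
}


def normalize(source: str, record: dict) -> dict:
    """Rename source-specific fields and tag the record with its origin."""
    mapping = FIELD_MAPS[source]
    normalized = {target: record[original] for original, target in mapping.items()}
    normalized["email"] = normalized["email"].strip().lower()
    normalized["_source"] = source  # minimal lineage: where the row came from
    return normalized


crm_row = {"CustomerID": "42", "Email": " Ada@Example.com "}
billing_row = {"cust_id": "42", "email_address": "ada@example.com"}

unified = [normalize("crm", crm_row), normalize("billing", billing_row)]
print(unified)
```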

Remember: Scalable AI systems must be built with modular, observable, and flexible architectures.

Top Use Cases in Data Infrastructure for Enterprises

1. Real-Time Operations Assessment

- Fraud Detection Solutions

- Recommendation Systems

2. Regulatory Reporting

- Keep Systems Accurate

- Produce Records for Audit Trails

3. A Unified View of the Customer

- Bring Together All Information from:

- CRM

- Product Usage

- Transactions

4. Machine Learning Operations

- Used for:

- Training

- Validating

- Blending Applications

Takeaway: AI data infrastructure has to serve both operational and analytical functions.

What Leading Enterprise Teams Do Right and What Others Get Wrong

Leading Enterprise Teams

- Think of Data as a Regulated Asset

- Invest Early in Observability

- Align Engineering Philosophy with Compliance

What Other Teams Get Wrong

- Treat Data as a Byproduct of Operations

- Consider Quality Only After a Failure Occurs

- Build Systems That Only React to Errors

Reactive Teams

- Fix Late

- Use Manual Processes

- Limited Visibility

- Full as standard

Proactive Teams

- Prevent Future Failures Before They Occur

- Use Automation

- Full Visibility

- Use Data as the Standard for Evaluation

Takeaway: Mindset and system design make a large difference.

Implementation Approach and Steps

1. Identify Critical Data Flows in High-Risk Areas

- Target Critical Pipeline Functionality

- High-Impact Systems

2. Establish an ROI Based on Data Flow Failures

3. Implement an Incremental Migration Strategy

- Run the Old and New Paths in Parallel for a Period

- Verify Functionality

- Transition Gradually to New Path
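
The dual-path approach above can be sketched as running both pipelines on the same input and comparing their outputs before switching. The two pipeline functions below are stand-ins invented for illustration; in practice they would be your existing and replacement pipelines.

```python
def old_pipeline(rows):
    """Existing path: totals per customer using a running sum."""
    totals = {}
    for row in rows:
        totals[row["customer"]] = totals.get(row["customer"], 0) + row["amount"]
    return totals


def new_pipeline(rows):
    """Candidate path: same result computed with a different implementation."""
    totals = {}
    for customer in {row["customer"] for row in rows}:
        totals[customer] = sum(r["amount"] for r in rows if r["customer"] == customer)
    return totals


def compare_paths(rows):
    """Run both paths on the same input and report keys whose values differ."""
    old, new = old_pipeline(rows), new_pipeline(rows)
    return sorted(k for k in old.keys() | new.keys() if old.get(k) != new.get(k))


rows = [
    {"customer": "a", "amount": 10},
    {"customer": "b", "amount": 5},
    {"customer": "a", "amount": 7},
]
mismatches = compare_paths(rows)
# Only route traffic to the new path once the outputs agree.
active_path = new_pipeline if not mismatches else old_pipeline
print(mismatches, active_path.__name__)
```

Keeping the old path as the fallback until the comparison is clean lets the transition happen gradually rather than in a single cutover.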

4. Coordinate with All Teams throughout the Process

- Engineering

- ISO

- Business Partners

5. Use AI-Based Systems

Leading companies have already moved beyond operating their infrastructure through manual processes.

They create an operation that:

- Proactively Identifies Future Failures

- Optimizes Pipelines

- Increases Reliability

Where Logiciel Fits in the Standard AI-Based Infrastructure Model

To manage their fragmented systems, teams can use an AI-based engineering framework, which differs from other industry models by providing an accelerated way to deliver quality while maintaining a high

Summary: Begin with small steps, focus on impact, and build up in a systematic manner.

Final Thoughts

Creating a data infrastructure specifically for AI is one of the most complex engineering challenges in today’s world.

In summary, here are three key points to highlight:

AI systems demand greater reliability than classic data systems.

The architecture that supports your data must support both batch and real-time workflows.

Observability, compliance, and system structure provide the pathways to success.

Establishing a true AI data infrastructure is not simply an upgrade. It is a fundamental change in the way data systems are designed and constructed.

When done correctly, an AI Data Infrastructure will deliver:

- Reliable AI models and systems

- An increased rate of innovation

- Improved decision-making processes

- Scalable AI data systems

Call to Action:

If your AI systems are experiencing issues with reliability and consistency, your next step should be to evaluate your current data infrastructure.

Here are a few additional resources to help you understand how to build an AI Data Infrastructure:

Why Does This Keep Happening? Identifying Root Causes of Your Data Infrastructure Issues and Correcting Them.

A Look into the Future of Data Infrastructure - What Leaders are Building Towards

Data Infrastructure Proof of Technology: How to Prove Your Data and Systems Are Ready for AI.

Logiciel Solutions partners with your business to create AI-first data infrastructure that enables reliable, scalable, and compliant systems. Through a systems-oriented design approach and intelligent automation, we improve your data, systems, and processes and significantly reduce risk.

To learn more about how to build an AI-ready infrastructure, visit:

AI Velocity Blueprint

Measure and multiply engineering velocity using AI-powered diagnostics and sprint-aligned teams.

Frequently Asked Questions

What is Data Infrastructure for AI?

Data infrastructure for AI is the set of systems that gather, manage, process, and deliver data from all sources that machine learning systems may use.

This includes the pipelines, storage, processing, and monitoring layers.

What makes Data Infrastructure for AI More Complex?

Compared with classic data systems, AI systems require higher data quality, the ability to process data in real time, and reproducibility of all processing, results, and predictions.

What is a Feature Store?

A feature store is a central repository of machine learning features. It manages features so that each one keeps the same definition between the training phase and the inference phase.

How do you ensure data quality in AI systems?

You can ensure data quality by implementing validation, monitoring, and observability in each of your data pipelines.

What is the greatest challenge in AI Data Infrastructure?

Maintaining consistency and reliability across multi-source, highly complex data systems.