A few years ago your team made a logical choice regarding your data architecture.

You had success with the pipelines, dashboards, and systems were working together at that time.

Now you are using approximately 40% of your sprint capacity (time) on the same architecture.

Pipelines are fragile, new failures occur with scaling, AI projects are stalled due to inconsistent data, and any proposed change/addition seems far more dangerous than it should be.

This is not an issue relating to the tools. This is an issue related to the design of the data architecture.

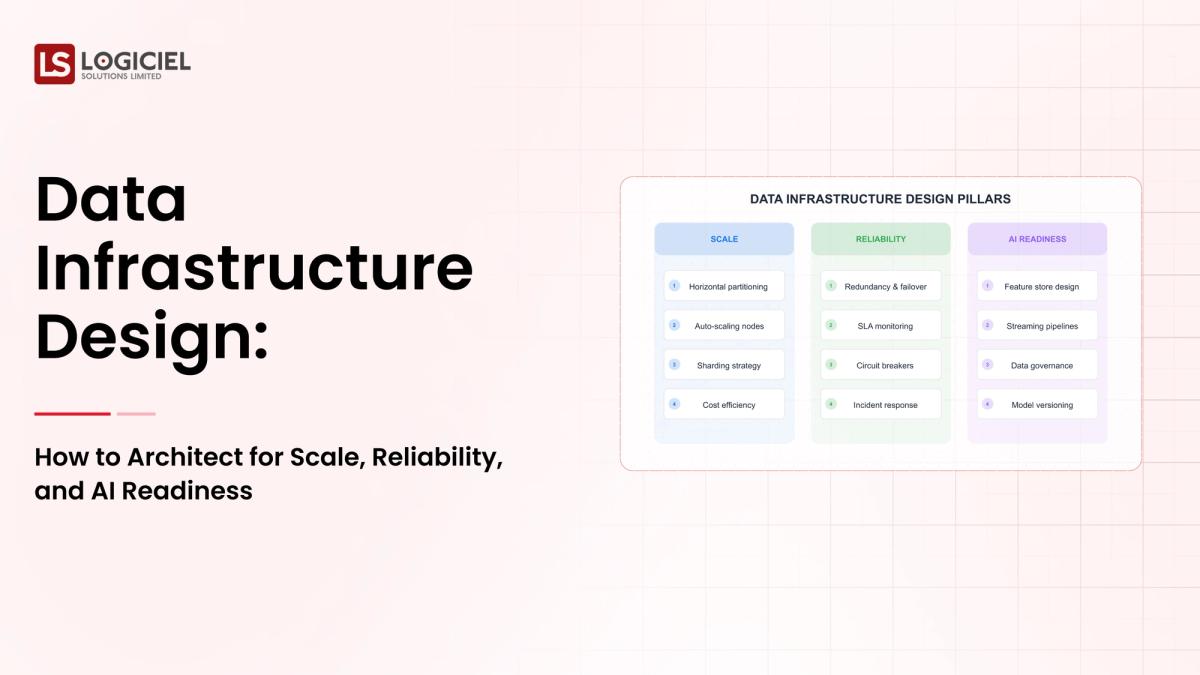

If you are a staff or principal engineer responsible for the design and development of systems, this guide will help you to:

Understand why the design of data architecture fails. Design systems that are capable of scaling in a reliable manner. Create manageable architectures to support workloads that require AI readiness.

Agent-to-Agent Future Report

Understand how autonomous AI agents are reshaping engineering and DevOps workflows.

Let’s look at how well most teams are currently positioned to succeed at designing and developing a data architecture.

The Primary Reason Most Organizations Fail to Develop a Data Infrastructure

Most of the issues encountered during the design of the data infrastructure are not technical in nature; they are structural.

Ad Hoc Decision Making

Many teams:

Choose tools based on familiarity. Make decisions in isolation (i.e. no enterprise view). Do not have an enterprise view of the overall system.

Over time these practices result in fragmentation of the technical architecture.

No Clear Definition of Ownership

When you do not have a clear definition of who owns an asset (pipeline, data quality, etc.) then:

The pipeline will break silently (you won’t know until it is too late). The quality of the data will suffer. The accountability of ownership and their ability to fix the issue is unclear.

Technical Debt is Compounding

When small shortcuts are taken - the result will be:

Fragile Pipelines. Inconsistency in Schema Design. Difficulty with Scaling the Pipeline.

Do Not Underestimate the Complexity Involved!

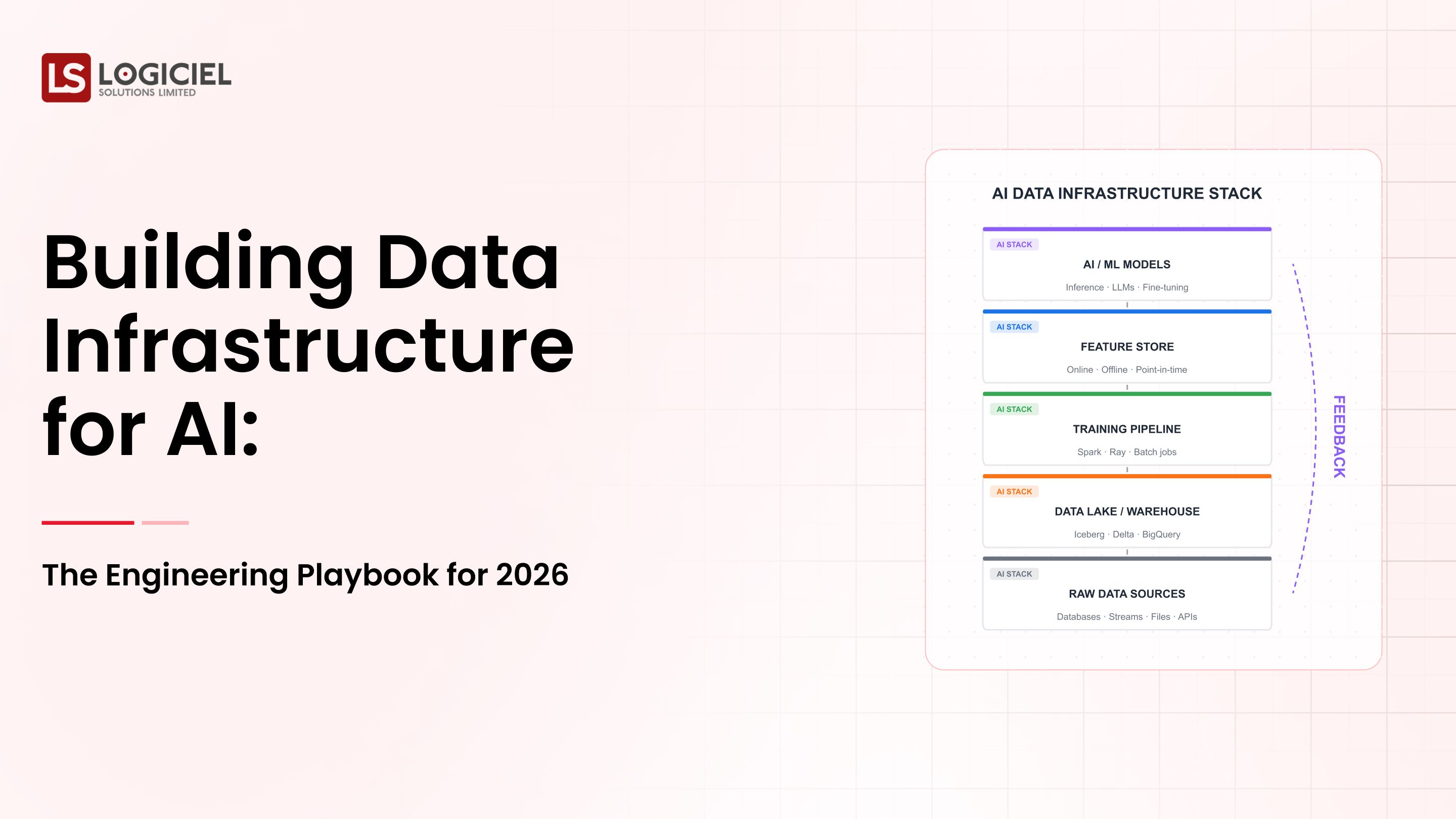

Modern systems typically include:

– Batch and Streaming Pipelines Together

– Multiple Storage Solutions

– AI Workloads

Success for a Staff Engineer or Principal Engineer Includes:

– Predictable Aspects of Your System

– Observable Failures of Your System

– Controlled Scaling of Your System

Key Takeaway: If your Data Infrastructure Design is Not Intentional, You Will Fail at Designing it Correctly

Prerequisites for Redesigning Your Infrastructure Before Beginning:

Ensure That You Have These Foundational Elements In Place Before Redesigning Your Infrastructure:

1. Clear Ownership Model

Identify the following:

– Who Owns Data?

– Who Owns Pipelines?

– Who Responds To Incidents?

2. Baseline for Your Infrastructure

Make Sure That All of The Following Are in Place:

– Your Pipelines Are Stable

– You Have An Existing Orchestrator

– You Have Reliable Storage Systems

3. Data Contracts

Create Data Contract For:

– Schema Agreements

– Data Expectations (What Does Your Data Look Like?)

– Validation Rules (How Will You Validate Each Piece of Data?)

4. Stakeholder Alignment

Make Sure That All of The Following Groups Are Aligned:

– Engineering Teams

– Business Stakeholders

– Managerial Priorities

5. Definition of Success Metrics

Define Success Metrics (How Will You Know When You Have Reached The Goals):

– Reliability

– Performance

– Freshness of Data

Key Takeaway: Strong Foundations Allow For Scalable Designs

Phase 1: Assess Your Current State

To Redesign Something, You Must First Understand It.

Step 1: Audit Current Systems

Identify:

– Pipelines

– Tools Used

– Dependencies

Step 2: Evaluate Your Performance

Measure:

– Latency

– Failure Rates

– Freshness of Data

Step 3: Map Data Flows

Document:

– Source Systems

– Transformations

– Outputs

Step 4: Identify Bottlenecks (Speed Bump)

Common Problems:

– Inefficient Queries

– Poorly Designed Schemas

– Lack of Monitoring in Place

Result of This Phase Will Be A Prioritized Roadmap Based On Building Out:

– Quick Wins

– Long-Term Improvements

Key Takeaway: Lack Of Visibility Is The First Step In Not Improving Your Data Infrastructure.

Phase 2: Design Target Architecture

Now That You Have Diagnosed Your System, You Will Start Moving Into The Design Phase.

1. Define Design Principles

Your System Should Have:

– Scalability

– Reliability

– Observability.Intentional Choice of Components

2. Use care in selecting the most appropriate choice and not the default choice.

The following should be evaluated:

- Scalability

- Flexibility

- Cost

3. Make the System Observable First

Include:

- Logging

- Metric Reporting

- Alerts

4. Modularize Your Systems

Separate (or separate the processes):

- Ingesting

- Storing

- Processing

5. Allow for Future Adaptation

Systems need to have the ability to change according to :

- New Data Sources

- Changing Requirements

Takeaway: Good design anticipates future needs.

Phase 3. Build, Test and Roll Out In Incremental Phases

• Control the Implementation.

1. Begin small.

Focus on:

- A single domain.

- A single pipeline.

2. Run two or more similar systems in parallel.

Demonstrate:

- Consistent data (between systems)

- Decrease the level of risk that would be incurred if used without a second system.

3. Automated Testing.

• Validate the Data (to ensure accuracy of data).

• Conduct tests on the Data Pipeline.

4. Instrument Everything within the system.

Monitor:

- performance, in order to remediate problems,

- Errors,

- Quality of the data that has been processed.

5. Continuously iterate to make improvements to:

• To improve the: Architecture

• To improve the: Process

• To improve the: Tools

Takeaway: An Incremental rollout will reduce risk and produce improved results .

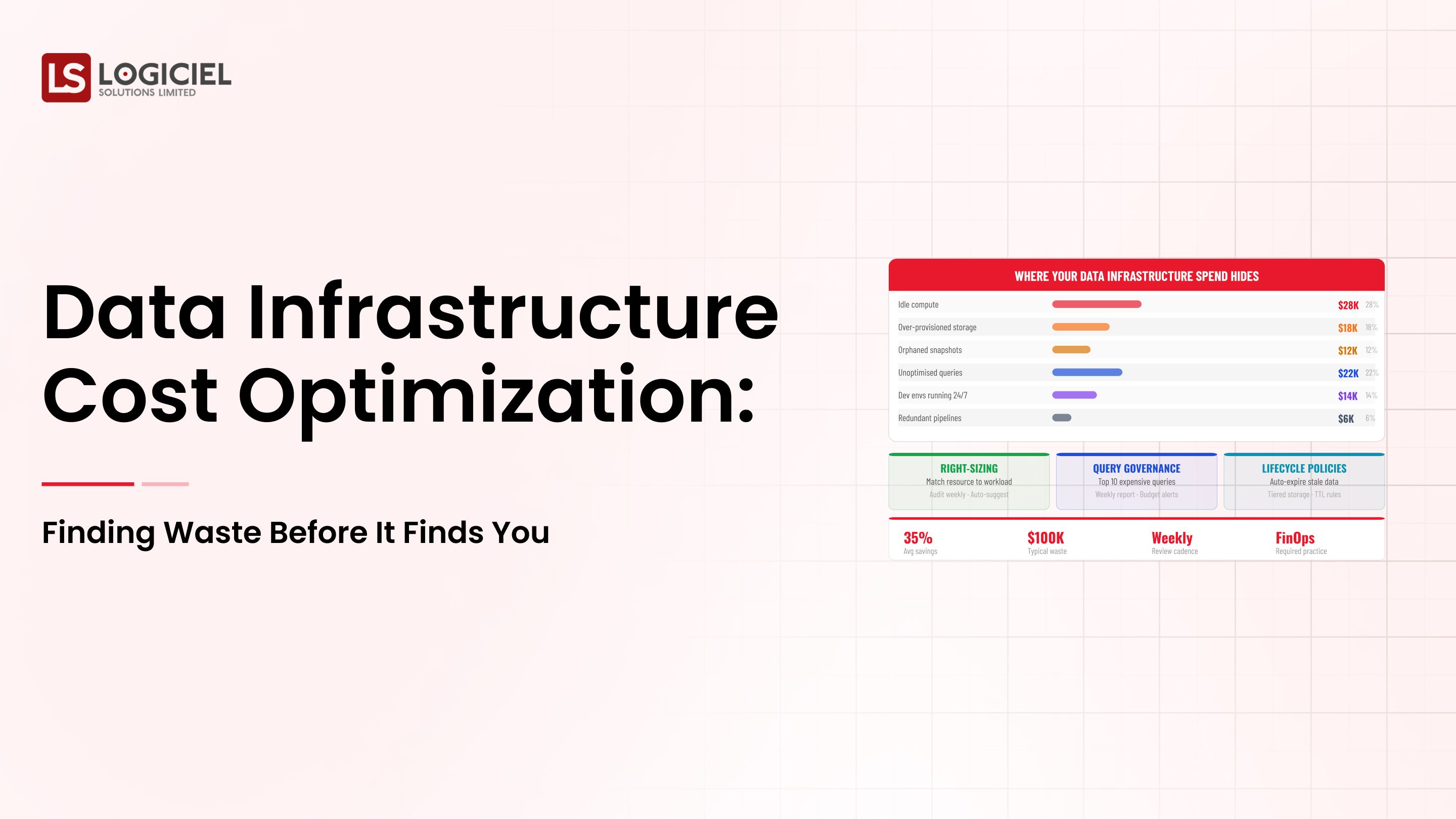

How To Measure Success And Make Improvements?

1. Define SLOs

Examples of SLO's would include:

- Uptime of 99.9%

- Latency of Data <5 mins.

- Error Rate <1%.

2. Create Panels with visible stakeholders and real-time monitoring .

3. Conduct Regular Reviews of:

- Conduct Post-Mortem Reviews

- Conduct incident analysis reviews.

4. Track Metrics of:

- MTTR - Mean Time To Recover

- MTTD - Mean Time To Detect

- Data Accuracy

5. Use Intelligence At The System-Level.

High performing teams will build automated monitoring systems that detect anomalies, predict systems failures and improve performance.

This is where Logiciel's AI-first Engineering Model is important, as it provides an artificial intelligence system for teams to build automated, self-optimizing systems that scale with confidence, not just react to problems.

Takeaway: Measurement will lead to Continuous Improvement.

To Sum Up...

The foundation of scalable, dependable and AI-ready systems is the design of the data infrastructure system.

To summarize the 3 most significant takeaways from this article, they are:

Most failures with a system are a result of a poorly designed system and not the tools to build the system.

["Success is Modular, Observable and Scalable."]

To achieve long-term success from a process perspective, there is an on-going requirement for continuous iteration of the process.

Designing data infrastructure is not a simple process, it requires alignment and discipline with a continuous investment in the process.

When designed properly, the data infrastructure system provides:

• Faster time-to-delivery

• Reliable data systems.

• Scalable architecture.

• AI-Ready Systems

Call To Action

If your data systems continue to increase in complexity, it is time to reconsider your architecture.

Learn about the following:

Why does the data infrastructure system continually fail and how do you fix the underlying problems?

How do I run a proof of concept for a data infrastructure system?

How do I justify investing in a data infrastructure system to my CFO?

Logiciel Solutions provides teams with assistance to design their AI-first data infrastructure system and allow for scalable and reliable systems with a reduction in the overall complexity of the data systems.

We utilize automated and intelligent systems to improve performance and reliability.

By designing the architecture and processes for the architecture for future growth, your ability to deliver and develop a scalable system will increase.

RAG & Vector Database Guide

Build the quiet infrastructure behind smarter, self-learning systems. A CTO’s guide to modern data engineering.

Frequently Asked Questions

Data Infrastructure Design:

It is the process of designing systems that process, collect, store and transmit data efficiently and reliably.

Why do data systems fail at scale?

Poor design, lack of observability, and the increased amount of data processed creates a massive amount of complexity.

What are the key components of a data infrastructure system?

Ingesting Data, Storing Data, Processing Data , Orchestration Layer, Observability Layer.

How do you design for scalability?

Utilizing Modular Architecture, using Distributed systems and Automated Processes.

What is one of the Biggest Mistakes with Data Infrastructure Design?

Expecting that once the system has been built, it will be constantly maintain without change.