A regulator is on a call, asking how your AI system makes decisions, what controls are in place, and where the evidence lives. Your team scrambles to assemble answers from logs, policies, and runbooks. Some pieces are missing, some are out of date, and the conversation does not go well.

This is more than a bad audit moment. It is a failure of the AI governance framework.

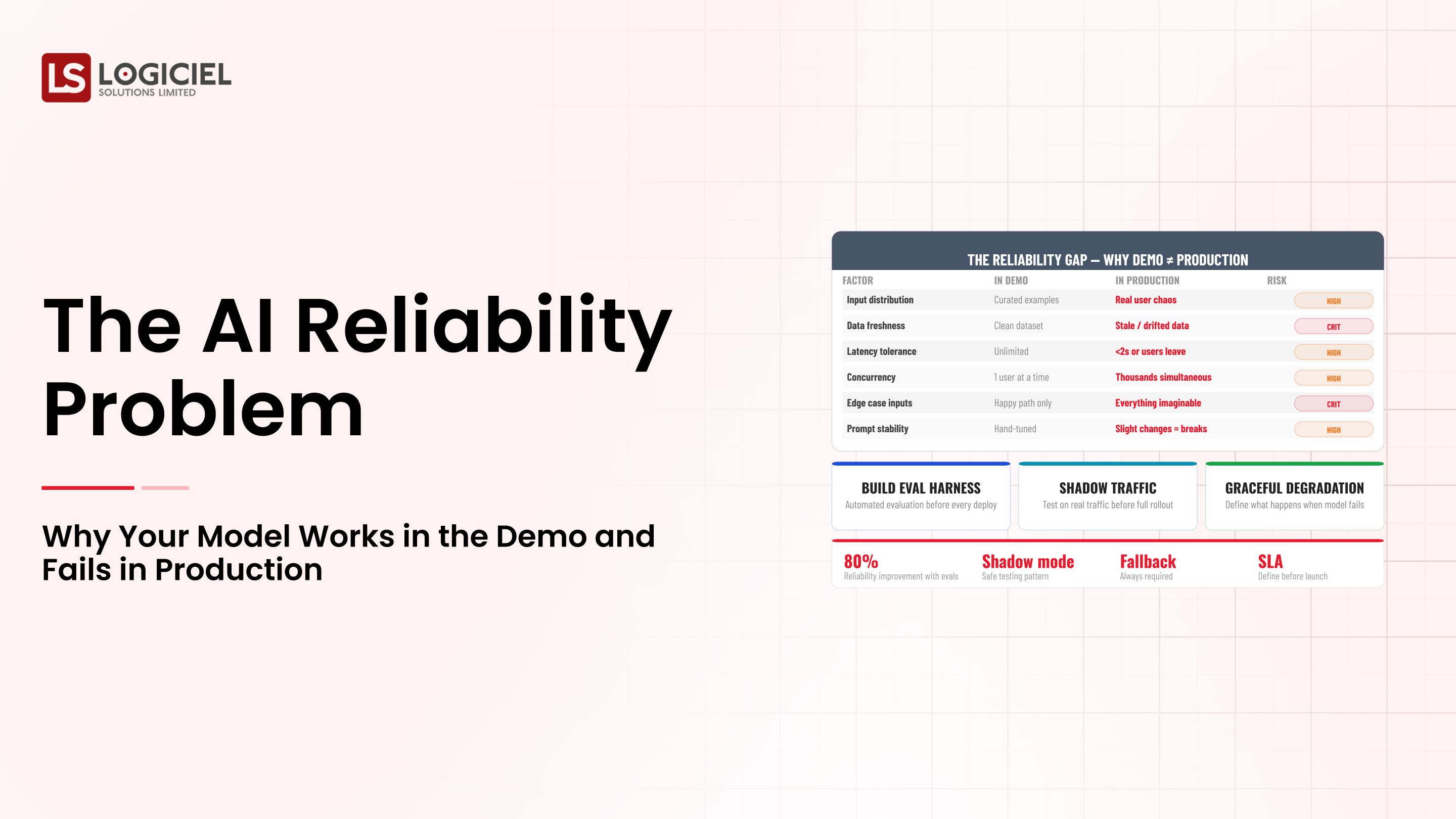

A modern AI governance framework is not a policy document and an annual review. It is a layered system with runtime enforcement, evidence design, and operating cadence.

However, many enterprises still treat governance as documentation work and discover at the first audit that documentation is not enforcement.

If you are a Chief Risk Officer responsible for building or scaling an AI governance program, this article will:

- Define what an AI governance framework actually is

- Walk through the four layers that hold up under audit

- Lay out the operating cadence that keeps the framework current

To do that, let's start with the basics.

What Is an AI Governance Framework? The Basic Definition

At a high level, an AI governance framework is a layered system of policies, runtime controls, evidence collection, and operating cadence that ensures AI systems behave within an approved envelope and that compliance can be demonstrated on demand.

To compare:

If a policy document is a building's blueprint, an AI governance framework is the building plus the inspection schedule plus the evidence the inspections actually happened.

Why Are AI Governance Frameworks Necessary?

Issues that AI governance frameworks address:

- Closing the gap between policy intent and runtime behavior

- Producing audit evidence on demand rather than reactively

- Aligning risk, legal, security, and engineering on shared controls

How AI Governance Frameworks Resolve These Issues

- Translates policy into enforced runtime controls

- Designs evidence capture so the record survives audit

- Establishes the cadence that prevents framework drift

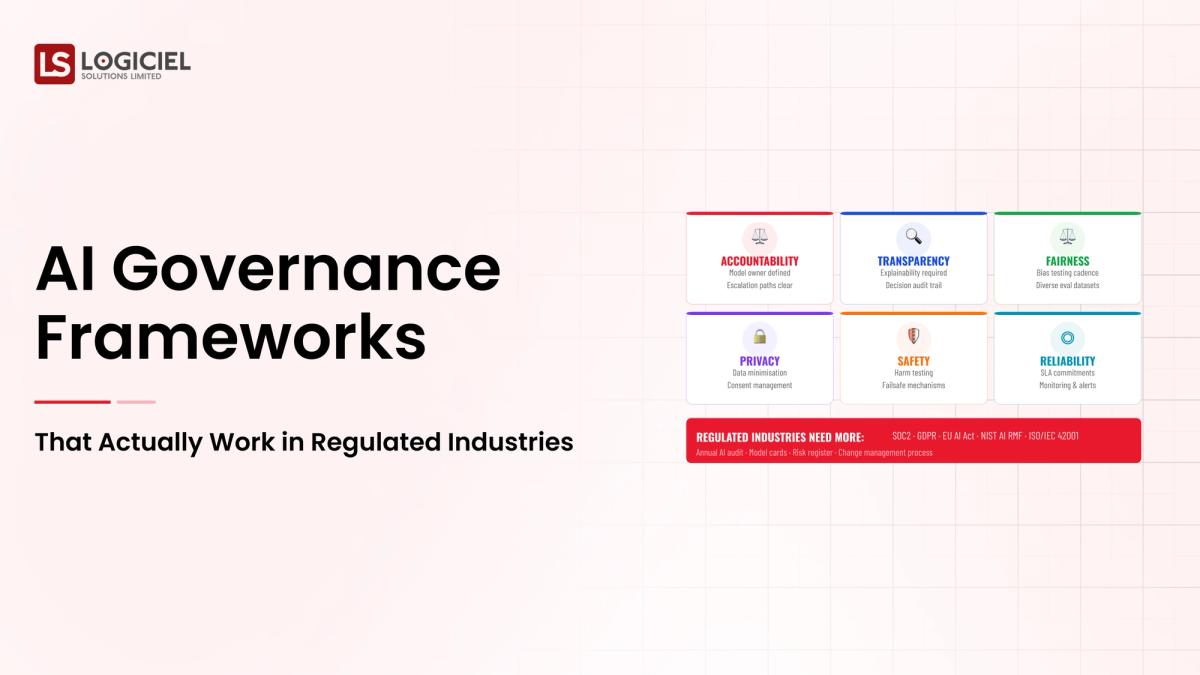

Core Components of AI Governance Frameworks

- Policy layer (what the organization commits to)

- Runtime controls layer (technical enforcement of the policy)

- Evidence layer (queryable record of what the controls did)

- Operating cadence layer (review, update, and tabletop schedule)

Modern AI Governance Framework Tools

- Policy engines like Open Policy Agent for runtime enforcement

- GRC platforms (Drata, Vanta, Hyperproof) extended for AI workloads

- AI observability platforms (LangSmith, Arize, Galileo) for evidence

- Audit-trail stores: append-only S3, BigQuery, Snowflake with retention rules

- Tabletop exercise frameworks adapted for AI incidents

Tooling is the easy part; the discipline that keeps the framework current is the hard part.

Other Core Issues a Framework Solves

- Surfaces gaps between policy and implementation through evidence

- Reduces audit preparation time from weeks to hours

- Builds organizational muscle for the next regulatory change

In Summary: AI governance frameworks are layered systems that combine policy, runtime enforcement, evidence, and cadence to make AI behavior defensible.

Importance of AI Governance Frameworks in 2026

AI governance has shifted from a risk function activity to a board-level program. Four reasons explain why.

1. Regulatory regimes are now binding.

EU AI Act enforcement is in effect. State-level rules are multiplying. Sector-specific obligations have caught up. Organizations whose frameworks exist only on paper now face binding consequences.

2. Boards are asking specific governance questions.

What controls are in place? Where does the evidence live? How does the program respond to incidents? Vague answers are no longer acceptable.

3. Customers are demanding governance evidence in contracts.

Enterprise procurement now includes AI-specific governance attestations. Programs without evidence lose deals.

4. AI capability is outpacing policy update cycles.

Frameworks that update annually lag behind systems that change quarterly. The cadence has to match the rate of change.

Traditional vs. Modern AI Governance Framework Concepts

- Policy document and annual review vs. layered framework with quarterly cadence

- Reactive evidence assembly vs. evidence-by-design captured automatically

- Steering committee oversight vs. engineering and risk shared ownership

- External regulation as the only driver vs. risk-led framework verified against regulation

In Summary: AI governance frameworks are the foundation of every enterprise AI program operating in a regulated environment.

Details About the Core Components of AI Governance Frameworks: What Are You Designing?

Let's go through each layer.

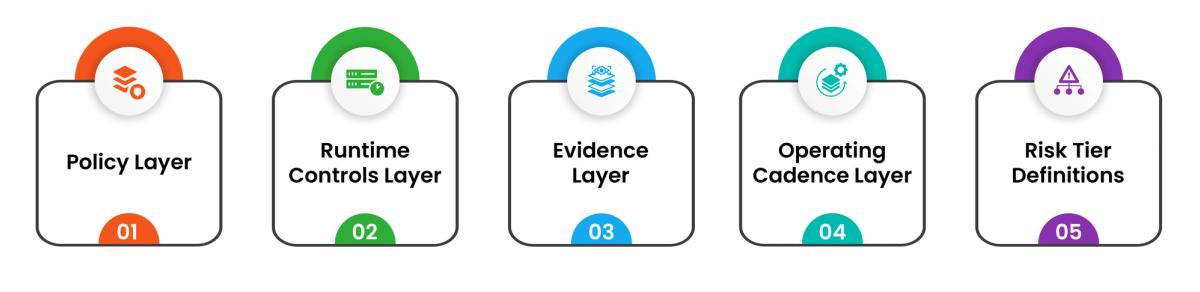

1. Policy Layer

What the organization commits to. Owned by risk and legal.

Policy contents:

- Acceptable and prohibited use

- Risk tiering for AI use cases

- Data handling, vendor management, incident response

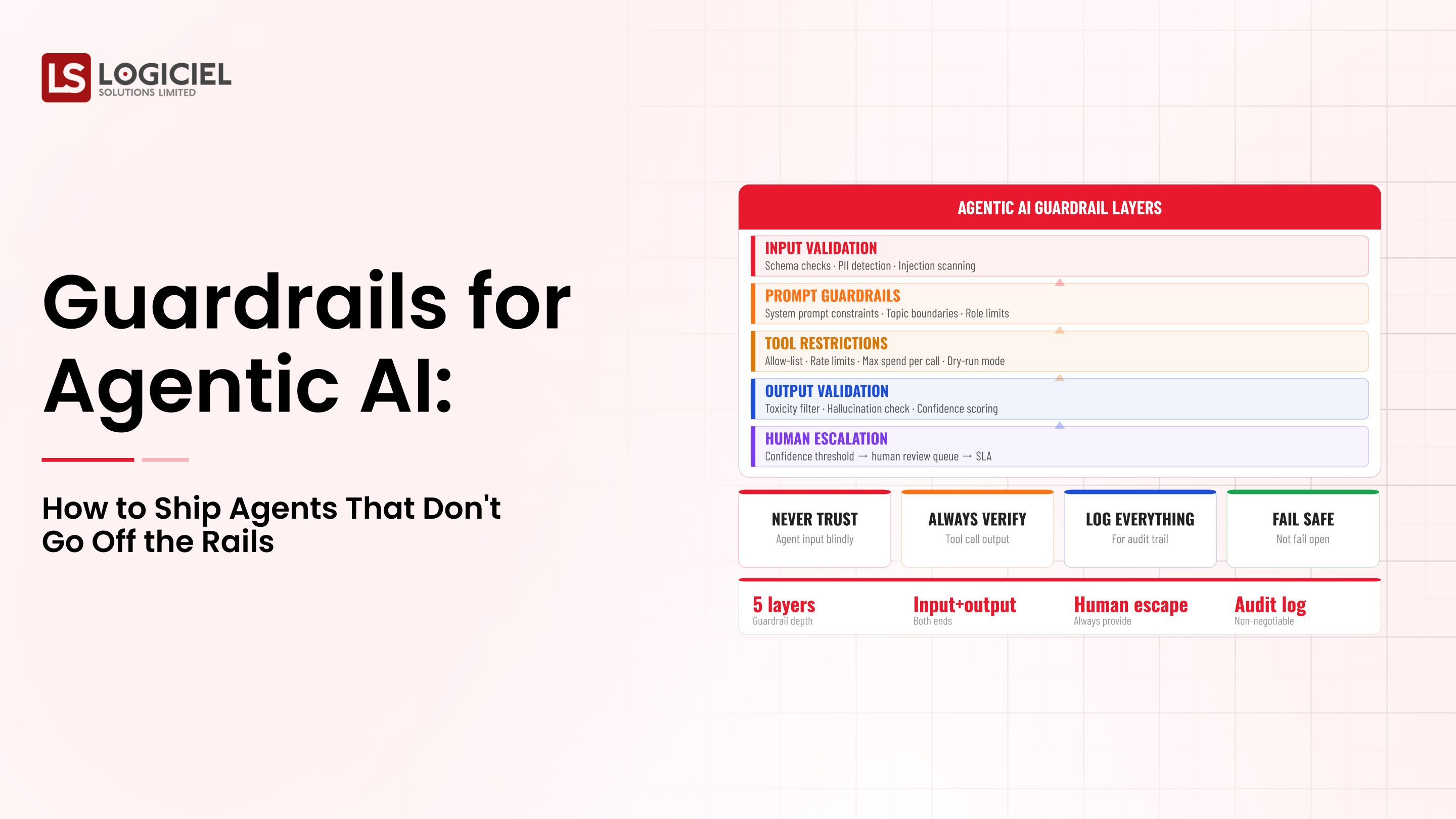

2. Runtime Controls Layer

The technical mechanisms that enforce the policy. Owned by engineering with risk and security partners.

Controls per risk tier:

- High risk: tool-level controls, output validation, kill switches, HITL, full audit

- Medium risk: tool-level controls, output validation, audit

- Low risk: lighter controls, basic audit
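
The per-tier bundles above can be written down as data that a deployment gate checks. The following is a minimal sketch, not a definitive implementation: the control identifiers, the `Tier` enum, and the function names are hypothetical, and the unspecified "lighter controls" for the low tier are left out rather than guessed.

```python
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical control identifiers that mirror the bullets above.
# The low tier's "lighter controls" are not specified in the text, so only
# the basic audit requirement is encoded here.
CONTROL_BUNDLES: dict[Tier, frozenset[str]] = {
    Tier.LOW: frozenset({"basic_audit"}),
    Tier.MEDIUM: frozenset({"tool_level_controls", "output_validation", "audit"}),
    Tier.HIGH: frozenset({
        "tool_level_controls",
        "output_validation",
        "kill_switch",
        "human_in_the_loop",
        "full_audit",
    }),
}


def missing_controls(tier: Tier, deployed: set[str]) -> set[str]:
    """Return the controls the tier requires that are not yet deployed."""
    return set(CONTROL_BUNDLES[tier] - deployed)


def may_go_live(tier: Tier, deployed: set[str]) -> bool:
    """A system may go live only when no required control is missing."""
    return not missing_controls(tier, deployed)
```

Keeping the bundles as data rather than prose means the same table can drive both the release gate and the quarterly review of whether each tier's bundle is still adequate.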

3. Evidence Layer

The queryable record of what the controls did. Owned jointly by engineering and risk.

Evidence design:

- Capture plan, tool calls, intermediate state, final outcome

- Append-only storage with retention matching regulatory requirements

- Queryable interface designed for auditors and risk reviewers
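
To make the evidence design concrete, here is a minimal in-memory sketch of an append-only record with a simple query method. Hash chaining is one possible way to make tampering detectable and is an assumption added for illustration, not something the framework prescribes. The `EvidenceLog` class and its field names are hypothetical, and a production store would sit on durable append-only storage rather than a Python list.

```python
import hashlib
import json


def _digest(record: dict) -> str:
    """Stable SHA-256 digest of a JSON-serializable record."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


class EvidenceLog:
    """In-memory stand-in for an append-only evidence store (hypothetical)."""

    GENESIS = "0" * 64  # placeholder hash that precedes the first entry

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, plan: str, tool_calls: list[str],
               intermediate_state: dict, outcome: str) -> str:
        """Record one run and chain it to the previous entry's hash."""
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        record = {
            "plan": plan,
            "tool_calls": tool_calls,
            "intermediate_state": intermediate_state,
            "outcome": outcome,
            "prev_hash": prev_hash,
        }
        record["hash"] = _digest(record)  # digest is taken before "hash" is set
        self._entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

    def query(self, outcome: str) -> list[dict]:
        """Return copies of the entries with the given final outcome."""
        return [dict(e) for e in self._entries if e["outcome"] == outcome]
```

An auditor-facing interface would expose something like `query` and `verify` but never a mutating method other than `append`.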

4. Operating Cadence Layer

The rhythm that keeps the framework current. Owned by the AI governance committee.

Cadence components:

- Quarterly review of policies, runtime controls, and evidence design

- Annual external review and tabletop exercise

- Incident-driven updates between scheduled reviews, triggered by each incident
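
A cadence only holds if someone notices when a review slips. The sketch below flags overdue reviews; the interval values (91 days for "quarterly", 365 for "annual"), the review names, and the `overdue_reviews` function are assumptions for illustration.

```python
from datetime import date, timedelta

# Assumed intervals: quarterly is approximated as 91 days, annual as 365.
REVIEW_INTERVALS = {
    "quarterly_review": timedelta(days=91),
    "external_review": timedelta(days=365),
    "tabletop_exercise": timedelta(days=365),
}


def overdue_reviews(last_completed: dict[str, date], today: date) -> list[str]:
    """Return review types whose interval has elapsed since last completion.

    A review type with no recorded completion is treated as overdue.
    """
    overdue = []
    for name, interval in REVIEW_INTERVALS.items():
        last = last_completed.get(name)
        if last is None or today - last > interval:
            overdue.append(name)
    return overdue
```

Incident-driven updates do not fit a fixed interval, so they are deliberately left out of this check and would be tracked against the incident record instead.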

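5. Risk Tier Definitions

Tiering is not a fifth layer; it is the input that determines how much of each of the four layers applies. Categorize AI use cases into tiers; controls scale with tier.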

Tier examples:

- High: affects rights, finances, or safety

- Medium: customer-facing automation with reversible decisions

- Low: internal productivity with limited blast radius
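
The tier examples can be turned into a first-pass classification rule. This is a sketch under stated assumptions: the `UseCase` fields and the `classify` function are hypothetical, and the rule that a customer-facing use case with irreversible decisions escalates to high is an assumption, since the examples above only cover the reversible case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    affects_rights_finances_or_safety: bool
    customer_facing: bool
    decisions_reversible: bool


def classify(use_case: UseCase) -> str:
    """Assign a risk tier from the attributes named in the tier examples."""
    if use_case.affects_rights_finances_or_safety:
        return "high"
    if use_case.customer_facing:
        # Assumption: irreversible customer-facing decisions escalate to high.
        return "medium" if use_case.decisions_reversible else "high"
    return "low"
```

A rule like this gives a default tier; the tier owner would still review and confirm the result for each use case.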

Benefits Gained from Layered Architecture and Operating Cadence

- Defensible posture under audit and regulator review

- Predictable response to new regulatory changes

- Trust with customers, the board, and the regulators

How It All Works Together

Policies define commitments. Runtime controls enforce them. Evidence captures the enforcement. Operating cadence keeps the layers current. Without cadence, the framework lags. Without evidence, the framework cannot defend itself. Without runtime controls, the policy is decoration.

Common Misconception

Misconception: AI governance is a risk function activity that engineering should support.

Reality: AI governance is a shared responsibility across risk, legal, engineering, and the lines of business. Engineering owns the runtime layer; risk owns the policy layer; both share the evidence layer.

Key Takeaway: Each layer requires its own owners and its own deliverables; programs that under-invest in any layer have predictable gaps.

Real-World AI Governance Frameworks in Action

Let's take a look at how an AI governance framework operates with a real-world example.

We worked with a financial services company building an AI governance framework spanning multiple AI use cases, with these constraints:

- Compliance with EU AI Act and US state-level rules

- Defensible audit posture for SOC 2 and ISO 27001

- Reusable framework across multiple AI programs in the portfolio

Step 1: Define Risk Tiers

Categorize AI use cases by risk tier. Tiering is the foundation that lets governance scale.

- High, medium, low tiers with clear definitions

- Tier owner per use case

- Migration path between tiers

Step 2: Write the Policy Layer

Acceptable use, prohibited use, data handling, vendor management, incident response. Specific enough to enforce.

- Per-tier policy variants

- Sign-off from legal and risk

- Policy refresh cadence documented

Step 3: Design Runtime Controls per Tier

Technical specifications, not aspirational descriptions.

- Per-tier control bundle

- Engineering and risk co-ownership

- Implementation tracked through to production

Step 4: Design the Evidence Layer

What evidence each control produces; where it is stored; how long retained; who can query.

- Append-only storage with retention rules

- Queryable interface for auditors

- Documented access controls and audit of access
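
The four questions in this step (what evidence, where stored, how long retained, who can query) can be captured as one specification per control. The sketch below is illustrative only: `EvidenceSpec`, the store name, the role names, and the 365-day retention period are hypothetical values, and real retention periods come from the applicable regulatory requirements.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class EvidenceSpec:
    control: str                 # control that produces the evidence
    store: str                   # where the evidence is written
    retention_days: int          # how long the evidence must be kept
    query_roles: frozenset[str]  # roles allowed to query the evidence


def can_query(spec: EvidenceSpec, role: str) -> bool:
    """Check whether a role is allowed to query this control's evidence."""
    return role in spec.query_roles


def retained_until(spec: EvidenceSpec, recorded_on: date) -> date:
    """Earliest date on which a record may be removed from the store."""
    return recorded_on + timedelta(days=spec.retention_days)


# Hypothetical example values for a single control.
OUTPUT_VALIDATION_SPEC = EvidenceSpec(
    control="output_validation",
    store="append_only_audit_store",
    retention_days=365,
    query_roles=frozenset({"auditor", "risk_reviewer"}),
)
```

Logging every `can_query` decision alongside the query itself would cover the "audit of access" bullet above.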

Step 5: Establish the Operating Cadence

Quarterly review of policies, controls, and evidence design. Annual tabletop and external review.

- Documented review schedule

- Named owners per layer

- Incident-driven updates with documented rationale

Where It Works Well

- Engineering and risk co-ownership of runtime and evidence layers

- Quarterly cadence that prevents drift

- Tabletop exercises that surface gaps before audits do

Where It Does Not Work Well

- Policy without runtime enforcement

- Steering committee without engineering presence

- Annual review only, when systems change quarterly

Key Takeaway: Frameworks that survive regulator review are frameworks that operate the cadence. Layers without cadence drift; cadence without layers wastes time.

Common Pitfalls

i) Policy without runtime controls

A policy without enforcement is a hope. Translate every policy commitment into runtime controls.

- Map each policy to one or more controls

- Verify enforcement via eval

- Capture evidence of enforcement
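
The mapping exercise above lends itself to an automated gap check. Here is a minimal sketch, assuming the policy-to-control mapping is kept as a dictionary and that a separate process records which controls have been verified. The function name, the policy names, and the control names are hypothetical.

```python
def coverage_gaps(policy_controls: dict[str, list[str]],
                  verified_controls: set[str]) -> dict[str, list[str]]:
    """Map each policy commitment to the reasons it is not yet enforced.

    Policies whose mapped controls are all verified do not appear in the result.
    """
    gaps: dict[str, list[str]] = {}
    for policy, controls in policy_controls.items():
        if not controls:
            gaps[policy] = ["no control mapped"]
            continue
        unverified = [c for c in controls if c not in verified_controls]
        if unverified:
            gaps[policy] = [f"unverified control: {c}" for c in unverified]
    return gaps


# Hypothetical mapping for illustration.
example_gaps = coverage_gaps(
    {
        "no_pii_in_prompts": ["input_redaction"],
        "human_review_for_denials": ["human_in_the_loop", "denial_audit"],
        "vendor_data_residency": [],
    },
    verified_controls={"input_redaction", "human_in_the_loop"},
)
```

An empty result means every policy commitment maps to at least one verified control; anything else is the list of gaps to take into the next review.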

ii) Runtime controls without evidence

Controls that work but produce no auditable evidence are controls you cannot defend. Build the evidence layer alongside.

iii) Annual review only

AI systems change faster than an annual cadence can track. Review at least quarterly, and update whenever an incident occurs.

iv) Steering committee without engineering

Committees that produce policy disconnected from implementation produce frameworks that fail audit. Engineering belongs in the room.

Takeaway from these lessons: Most governance failures are gap failures. The pieces exist; they are not connected. The cadence is what connects them.

AI Governance Frameworks Best Practices: What High-Performing Teams Do Differently

1. Tier risk before scoping controls

Risk tiering lets controls scale. Without tiering, you over-control everything (slow) or under-control high-risk systems (dangerous).

2. Build evidence into the runtime layer

Evidence design alongside controls. Append-only storage; queryable interface; retention matching regulatory requirements.

3. Operate on a quarterly cadence

Quarterly review of policies, controls, and evidence design. Annual external review. Incident-driven updates.

4. Run tabletop exercises with risk

Tabletops surface gaps that document review does not. Schedule annually; document findings; remediate before the next audit.

5. Co-own the framework across risk and engineering

Risk owns the policy; engineering owns the runtime; both share the evidence. The AI governance committee owns the cadence.

Logiciel's value add is helping risk and engineering leaders build the layered framework, design the evidence layer, and operate the cadence that keeps the framework current.

Takeaway for High-Performing Teams: High-performing organizations co-own AI governance across risk and engineering and operate the framework on a cadence.

Signals You Are Designing AI Governance Frameworks Correctly

How do you know your AI governance program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs on the right path from programs that only look like progress.

- The team can describe failure modes without flinching. People who actually run AI governance systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much it spent yesterday on its AI systems and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. An AI governance framework depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, the AI governance framework shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever the next AI initiative is on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

AI governance frameworks for regulated industries are layered systems. The layers are policy, runtime controls, evidence, and cadence. Without all four, the framework will not survive its first audit.

Key Takeaways:

- Governance is layered: policy, runtime, evidence, cadence

- Runtime enforcement is the substance; documentation is the wrapper

- Quarterly cadence prevents drift; tabletop exercises surface gaps

When the framework is designed and operated correctly, the benefits compound:

- Defensible posture under audit and regulator review

- Predictable response to new regulatory changes

- Reduced audit preparation time across the organization

- Trust with customers, the board, and regulators

Call to Action

If you are responsible for AI governance, the work this quarter is to inventory which of the four layers your framework has, which it lacks, and which is decorative.

Learn More Here:

- AI Governance Frameworks Logiciel Playbook

- AI Powered Development Regulated Industries Compliance 2025

- AI Software Development Pricing Roi Guide

At Logiciel Solutions, we work with risk and engineering leaders on AI governance design and the runtime control work that turns policies into enforced controls.

Explore how to design your AI governance framework.

Frequently Asked Questions

What is an AI governance framework?

A layered system of policies, runtime controls, evidence collection, and operating cadence that ensures AI systems behave within an approved envelope and that compliance can be demonstrated on demand.

How is AI governance different from risk management?

Risk management identifies and prioritizes risks. Governance addresses them in production through policies, controls, and evidence. The two are complementary, and both are required.

How do regulations and standards (EU AI Act, NIST AI RMF) fit in?

They inform the framework but do not constitute it. Build against actual risk; verify against regulation. Building only to satisfy regulation produces a framework that lags real risk.

Who owns the framework?

Joint ownership: risk owns policy, engineering owns runtime, both share evidence. The AI governance committee owns the cadence.

What is the biggest mistake in AI governance?

Treating it as a documentation activity. Without runtime enforcement and evidence design, the framework cannot defend itself when an audit happens.