Three years ago your team made what appeared to be a sound decision to build out internal data pipelines, use dashboards to access the data stored in each of those pipelines, and to provide access to that data through ad-hoc queries.

Fast forward three years and these decisions are costing your team upwards of 40% capacity on every sprint. Engineers are having to write custom scripts just to access the data. APIs are all over the place and very inconsistent, resulting in teams duplicating the logic in each of their services, and every time a team comes up with a new use case for the data, they either have to rebuild or significantly rework the systems that exist to accommodate for that use case.

AI – Powered Product Development Playbook

How AI-first startups build MVPs faster, ship quicker, & impress investors without big teams.

This is where modern data infrastructure begins to break down.

If you are currently in a position of Staff or Principal Engineer responsible for the design or evolution of modern data infrastructure, then the issue with modern data infrastructure is no longer solely around how data is stored; it is now also about how data is exposed, accessed and consumed across systems.

This guide will provide you with the following learnings:

- What is modern data infrastructure in terms that can be understood and applied in the real world

- Why API first design is no longer a recommendation, but rather a necessity

- How to design and implement an API first data architecture in a manner that does not add additional complexity to your existing infrastructure

To help get us started, let’s review what exactly constitutes modern data infrastructure in the first section of this guide.

What Is Modern Data Infrastructure (Definition in Plain English)

At its most basic core a modern data infrastructure is the system that enables data to be moved, stored, transformed, and served throughout an organization in a manner of reliability and scalability.

However, buried in that definition is the heart of the shift.

Data infrastructure comparison can be represented in logistics systems. In logistic terms, a pipeline is the connection made from source data to consuming data; this equates to the transportation of goods. The storage of data equates to a warehouse for storing products. Data transforming/composing (data model) to package/sort the product to move it from the warehouse to its delivery destination has an analogy of an API triggering/enabling the delivery of a product.

Most of the focus is on optimising warehousing and transportation systems. Few teams focus on optimising their delivery systems; therefore, this is the most impactful thing about an "API-first" data infrastructure.

Key Components of Modern Data Infrastructures

Ingestion Layer

- Collects data (i.e., from applications, databases or external APIs)

Storage Layer

- Stores raw and/or processed data

Processing Layer

- Creates model using that data

Orchestration Layer

- Manages the execution of the pipeline

Serving Layer (API)

- Delivers/exposes the data to be used by applications & end users

The primary difference in the Serving Layer component is that the Serving Layer is a critical piece of modern infrastructures.

Most traditional data infrastructures had been used mainly to produce:

- Batch Reporting

- External Dashboards

- Limited Consumers

However, modern data infrastructures are now required to support:

- Real Time Applications

- Artificial Intelligence (AI) and Machine Learning (ML)

- External Systems

- Product Features Dependent on Data

So there needs to be a consistent scalable way of exposing the data.

Modern data infrastructures are not only warehouses/data lakes or pipelines or simply a BI tool. They need to be tightly integrated systems for the predictable flow of data from source to consumption.

If you do not leverage the data for reuse/integrate your data for access, then the infrastructure is incomplete.

Section 2: Why Modern Data Infrastructures Are More Important In 2026 Than Ever

In recent years, the level of importance of modern data infrastructures has changed dramatically.

AI-First Systems Demand Dependable Data Access

AI models rely on:

- Consistent data schemas

- Real-time / Near real-time updates

- High-quality data lineage tracking

Without structured API access, teams often resort to:

- Directly querying databases

- Manually extracting data

- Creating duplicate pipelines between two systems

All of which adds up to increased fragility in your system.

According to Gartner, Greater than 80% percent of all AI projects fail to reach production level due to underlying poor quality of data infrastructure.

Data Volume and Complexity Continue to Grow Dramatically

Organizations now have:

- Event streams produced from products

- Third-party integrations

- Multi-region data systems

All resulting in:

- More pipelines

- More customers consuming the data

- More, potentially, problem points

As there is no standard means of accessing the data, complexity increases exponentially.

Compliance and Governance Pressure

Governments are requiring organizations to provide:

- Control over who can access data

- The ability to audit data

- Provide documentation of the source from which the data originated

APIs provide a controlled method of accessing data so that organizations can enforce:

- Access policies

- Versioning

- Monitoring

The Cost of Ignoring This Movement

When designing your system for an API-first approach; engineers will spend time recreating layers of access to the database.

As a result; there will be more data inconsistencies than before; and therefore delaying feature development.

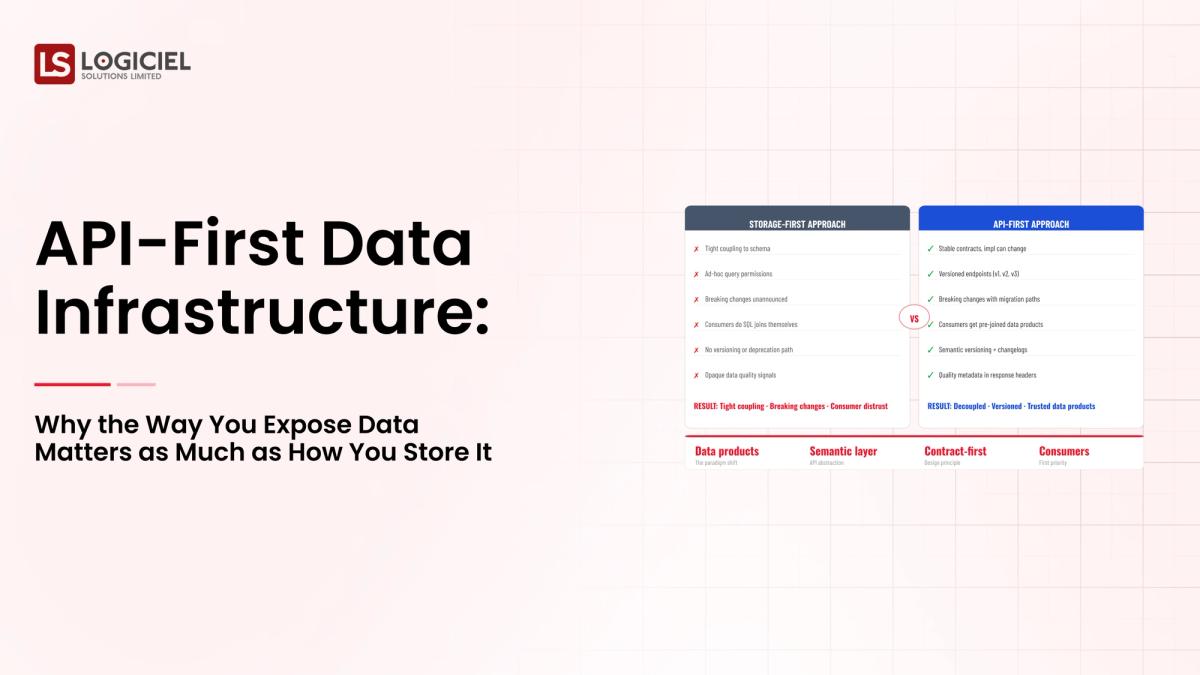

Compare Before to After API-First

Before API-FirstAfter API-FirstAd-hoc queriesConsistent access to data across teamsTight coupling of systemsDecoupling of the architectureHigh overhead maintenanceAbility to develop features quickly

Although before you might have asked:

"Can I access the data?"

Moving forward you should ask:

"Can I access it reliably / consistently / at scale."

Section 3: Core Components of Modern Data Infrastructure - What You Build

We want to examine what exactly you will be building, and how in practical term.

1. Ingestion Layer

This layer captures the data from:

- Application databases

- SaaS tools

- Event Streams

Other considerations when building out your ingestion layer include:

- Batch vs Streaming Ingestion (i.e., bulk load or streaming ingesting of data)

- Data Validation

2. Data Storage Layer

Your Core Data Environment.

- Data Lake or Data Warehouse

- Stored Data will be Structured or Semi-Structured

Focus of This Layer:

- Ability to Scale

- Cost-Effective

- Performance Of Queries

3. Data Processing Layer

This is where raw data is changed to a usable form.

Components of This Layer Include:

- Data Transformations

- Aggregations

- Feature Engineering

Typically tools that may have been used for data transformations will have been SQL based or utilized distributed processing.

4. Data Orchestration Layer

This Layer manages:

- Scheduling of Pipelines

- Dependencies associated with Pipelines

- Failure Management of Pipelines

Without orchestration a data pipeline can become unmanageable.

5. API Layer (The Serving Layer)

This is the main point for API first design.

This Layer provides:

- Standardized Endpoints

- Controlled Access

- Consistent Contracts for Data

How The Layers Interact

- Data is Ingested from the Sources

- Data is Stored in a Centralized Data Repository

- Data is Processed to Create Usable Format

- Data is Orchestrated into Reliable Pipelines

- Data is Exposed Through API's to Consumers

Common Confusion

Most Teams stop at Stage 4 and think that they have a usable dashboard.

However, a modern data system requires:

- Applications Powered by Data

- External Integrations with Data

- Real-time Decision making in Applications

Thus a strong serving layer is needed for modern applications.

Key Takeaway

You are building a pipeline, you are building a data product platform.

Section 4: How Modern Data Infrastructure Works: Real World Walkthrough

Example: Product Analytics Platform

A SaaS company needs:

- To Track what Users Do in their Product

- To Create and Power Dashboards

- To Enable Real-time Recommendations

Step by Step Process

1. How Events are Generated

- The User Interacts with the Product

- Events are Created (Click, Sessions, Action)

2. How Events are Ingested

- The Event is Streamed Into Ingestion Layer

- Basic Validation Occurs

3. How Events are Stored

- Raw Events are Stored to a Data Lake

- Processed Events are Stored to a Data Warehouse

4. How Events are Processed

- Transform Events to Session level Data

- Aggregate Events to Metrics

5. Orchestration

Monitoring and Scheduling Pipelines:

- Detect failures and recover automatically

API Layer

Metrics provided by APIs to:

- Support customer facing features

- Provide programmatic interfaces to 3rd parties

- Create network/real-time dashboards

Where Things Go Right

- Data contracts are clear

- Pipelines are reliable

- API's are standard

Where Things Go Wrong

- Schema changes break pipelines

- Inconsistent data returned from API's

- Latencies increase under load conditions

Typical Engineering Interaction With Data

Engineers:

- Build pipelines

- Monitor the health of their systems

- Use API's to access rather than direct queries

Example Data Architecture Pattern

- Event Streaming Platform

- Central storage layer

- Transformation pipelines

- API gateway to expose data services

Important Insight

The API layer should be thought of as the interface between the data and your business.

Section 5 - Common Mistakes Teams make with Modern Data Infrastructure

Even the best teams can make the following mistakes:

1) Over-engineering too early

Build for:

- 100x scale

- Complex use cases

Without first validating basic functionality.

Outcomes:

- Slow progress

- High levels of complexity

2) Under-investment in observability

Lacking observability results in:

- Not catching failures

- Longer times to debug

Things teams should monitor:

- Freshness of data

- Health of pipelines

- Performance of API's

3) Skipping the data contract

Without a data contract, teams will experience:

- Schema change that breaks the pipeline

- Loss of trust in data

The data contract provides predictability.

4) Treating Modern Data Infrastructure as a one-time project

Modern Data Infrastructure is:

- Evolving and continually changing, therefore it is not a one-time project

5) Ignoring the API layer

Teams are putting too much focus on pipelines but ignoring how to consume the data.

This will result in:

- Inconsistent access patterns

- Duplicate logic/code

Key Point

Most of the time failures occur are not due to technical limitations.

They are due to mistakes with the design and prioritization process.

Section 6: Best Practices For Modern Data Infrastructure: What Differentiates High-Performing Teams

High-performing teams have a different way of doing things from other teams.

1. Data As A Product

They define:

- Owner(s)

- SLA(s)

- Consumer(s)

Each dataset is treated as a product.

2. API First Design

They:

- Define the endpoint early

- Standardize the access patterns

- Enforce the rules of the standard

By doing so, they can reduce their rework later.

3. Automate Everything You Can

To include:

- Data validation

- Pipeline monitoring

- Alerting

Doing this adds to the reliability of the system.

4. Build Observability Into The System

They track:

- Latency

- Errors

- Data freshness

By doing so, they can reduce the mean time to resolution by over 50% from the time of the first error to the final error.

5. Document and Enforce Contracts

They ensure:

- Schema Stability

- Version Control

- Clear Communication

6. Iterate Indefinitely

They:

- Release in increments

- Gather feedback

- Continue to improve

Key Insight

In the end, the best teams do not create a perfect system.

They create a system that will grow and change in a predictable manner.

Evaluation Differnitator Framework

Why great CTOs don’t just build they evaluate. Use this framework to spot bottlenecks and benchmark performance.

Call to Action

Logiciel POV

The next generation of data systems is not defined by how good you are at storing data.

It is defined by how well you can provide data to users (serve) and utilize data (operationalize).

API-first design is no longer optional; it is the minimum foundation for modern, scalable, AI-ready data infrastructure.

Logiciel Solutions provides engineering teams with the tools they need to design and build API-first data platforms that reduce complexity, increase reliability and speed up product development.

If your team spends more than 42% of their time trying to access your data rather than actually using it, it is time to rethink your architecture.

Learn how the AI-first engineering teams at Logiciel can assist in creating a modern, scalable API-first data infrastructure that will grow with your company.

Frequently Asked Questions

What is API-First Data Infrastructure?

API-first data infrastructure considers the means by which data will be exposed and consumed. Unlike relying on a direct database connection or ad-hoc queries, the data is delivered to users through standard naming conventions via an API. API-first infrastructure reduces the chances that your future data will be inconsistent or contain flaws, and it will be much easier to scale and govern your data.

Why is Modern Data Infrastructure Important to AI Systems?

AI systems need reliable, consistent, real-time access to high-quality, structured data. Without a well-defined and structured data infrastructure, your data's integrity will be compromised, making your model's performance unreliable and your deployment efforts unsuccessful.

What are the Primary Layers of Modern Data Infrastructure?

The core layers of a modern data infrastructure are ingestion, storage, processing, orchestration, and serving (API Layer). Each layer serves an essential function to ensure that your data is reliably passed from source to use.

How Long Does it Take to Implement an API-First Infrastructure?

Implementing an API first model starts with a focus area that could take around 6 to 12 weeks for initial completion of the work focused on the use case. Full implementation will require an iterative approach that will evolve over time.