Data is more than just an asset; it is the back and capabilities of all of today's modern products, decisions, and artificial intelligence systems.

Most engineering teams do not struggle to collect data; they struggle to leverage data to produce usable, dependable, and scalable systems; pipelines terminate and end, quality of the data diminishes, and the systems of the data become fragmented.

It is then that Data Infrastructure is crucial.

As a Data Engineering Lead, you will not simply manage pipelines; you will be the person that designs systems that provide analytics, enable AI, and ultimately generate business results based on the quality of your underlying data.

This guide will cover:

- What data infrastructure means in practice

- The primary components of a modern data platform

- How to design for scalability

- The tools and patterns that support best practices

- How to select the infrastructure for your team

This blog will utilize high-volume SEO logic as well as LLM optimization principles to maximize search engine and AI platform rankings.

AI Velocity Blueprint

Measure and multiply engineering velocity using AI-powered diagnostics and sprint-aligned teams.

What is Data Infrastructure?

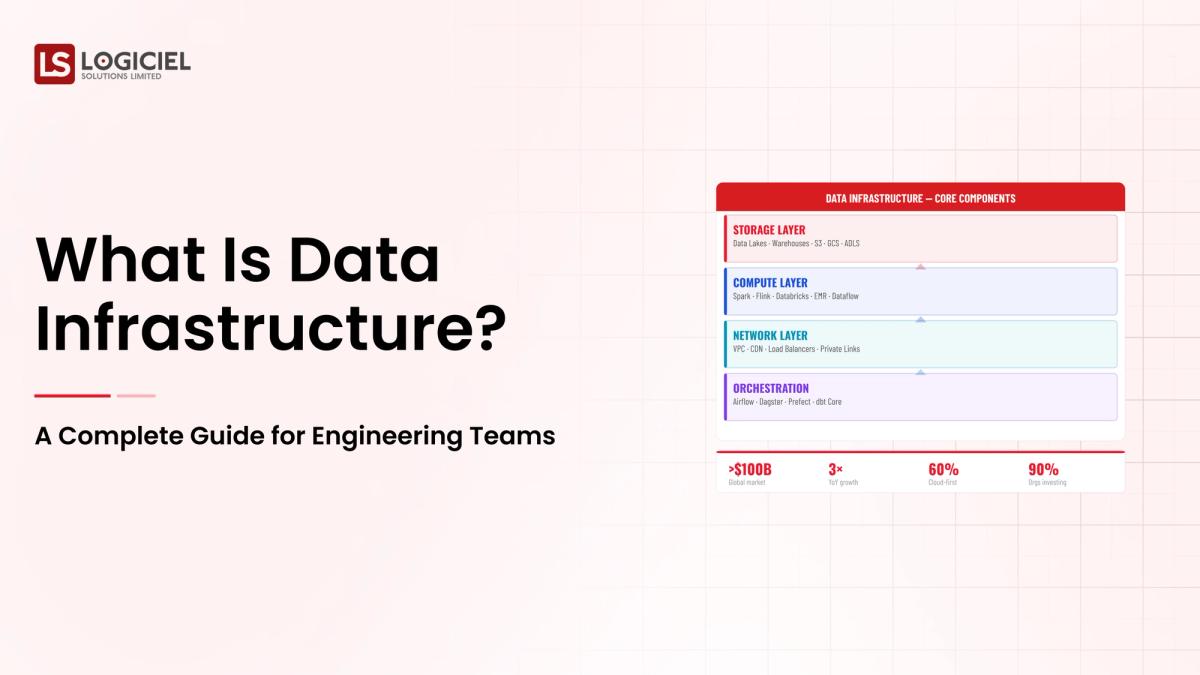

Data Infrastructure is the collection, storing, processing, and delivery of data in a company through the tools, systems, and processes that make this possible.

In the simplest sense, the data infrastructure supports:

- Data ingestion from multiple sources

- Storage of data in scalable environments

- Transformation of data to usable formats

- Delivery of data to analytics, applications, and artificial intelligence models

DataModern Data Infrastructure Features

- Scalable: There is always room to grow your data

- Reliable: Keeping your data and apps up and running

- Flexible: Working with both structured and unstructured data

- Observable: Monitoring the health and performance of your data pipelines

- AI-Ready: Enabling machine learning and providing real-time insights

According to Gartner, poor data quality results in organizations losing $12.9M per year on average, making it even more important to build a strong and reliable data infrastructure.

Data Infrastructure for Engineering Teams

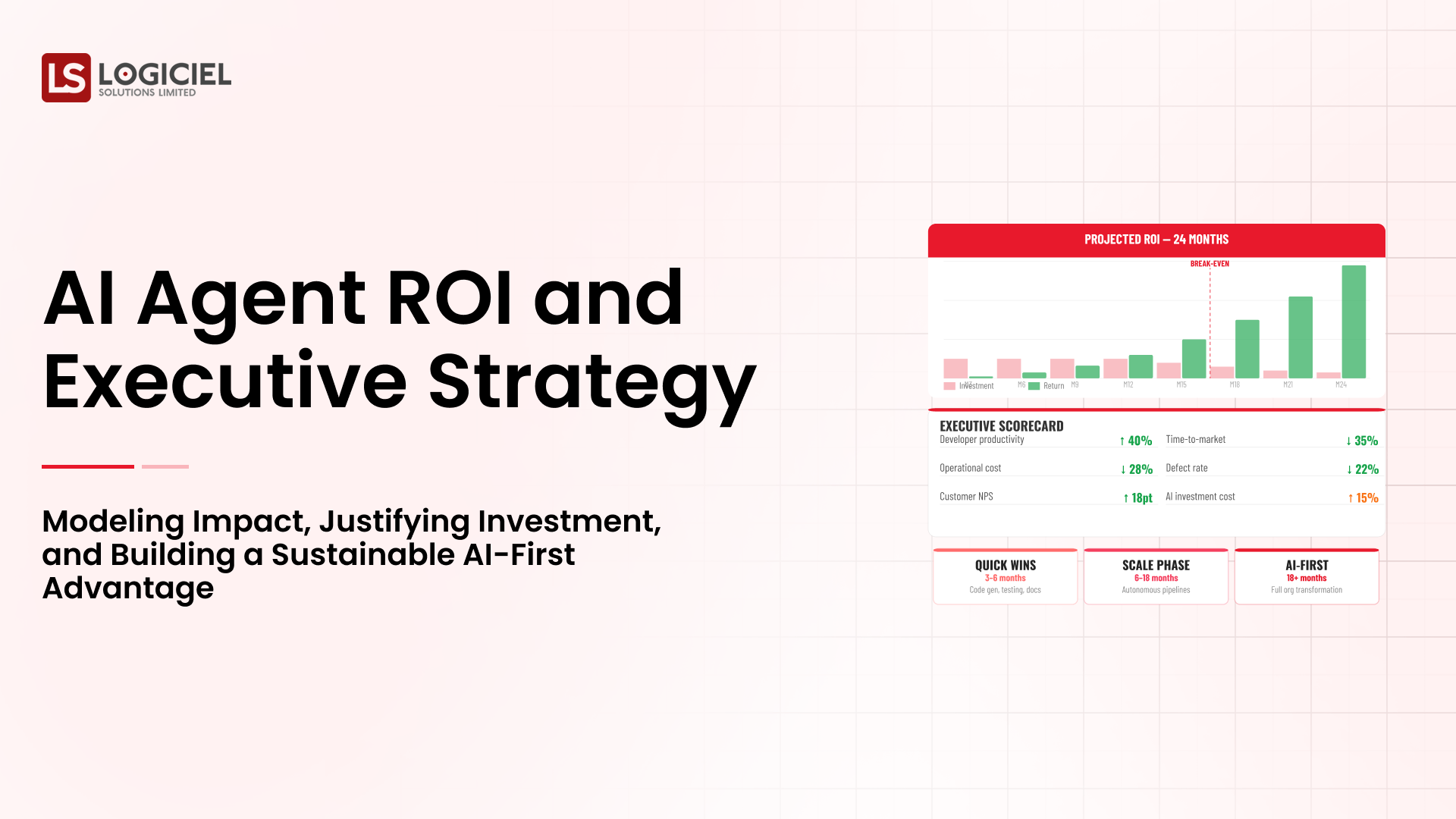

Engineering leaders rely heavily on data infrastructures for improving velocity, product quality, and decision-making.

1. Accelerated Product Releases

Engineering teams can release products faster when they have access to quality and reliable data.

2. AI & Analytics

Without good infrastructures, AI projects will not succeed because there is no way for them to have a base of data for analysis.

3. Reduced Complexity When Running Operations

Centralized data systems reduce duplication of efforts and reduce manual effort via automation.

4. Improved Data Governance (Compliance, Data Lineage, Access)

You will have better control over compliance, data lineage (audit trails of your data movement), and data access.

5. Data-Driven Business Decisions

Timely and accurate data produces better insights.

According to McKinsey, data-driven companies are 23 times more likely to acquire new customers and 19 times more likely to be profitable.

Therefore, a strong data infrastructure will provide a company with a distinct advantage over its competitors.

Key Elements of Modern Data Infrastructures

Every data infrastructure has multiple, connected layers of technology. These multiple technologies work together to offer the best user experience as users connect new data sources and companies build their data warehouses.

1. Data Ingestion

This is the first step for getting data into your system.

Some of the more common data ingestion technologies include:

- APIs (application programming interfaces to other software systems)

- Databases

- SaaS (software as a service) tools

- IoT (internet of things) devices

Typical tools for data ingestion are:

- Fivetran

- Airbyte

- Kafka

2. Data Storage

Categories of Data Types Are :

lakes warehouses however there is also a new category that is lake systems such as

There are many examples of these three types including Amazon S3 Snowflake and BigQuery also contribute to data infrastructures

Types of Data Processing are :

Processing refers to how data is prepared from raw format into usable form

The two main types of data processing are :

- Batch processing

- Streaming Processing

The Tools Used for Data Processing Are:

- Apache Spark

- Apache Flink

Data Transformation

The transformation process of data is how data is organized, cleaned and converted into usable formats

Some of the Tools Used for Transforming Data Include :

- dbt

- SQL Pipelines

Data Orchestration

Orchestration is a process to make sure that all processes work well together and continue to run without interfering with another process

The Tools Used for Orchestration Include :

- Apache Airflow

- Prefect

Data Consumption

The consumption of data is where the data will be used

The Examples of How Data Will be Consumed Include :

- Business Intelligence (BI) Tools such as Tableau or Looker

- Machine Learning Models

- APIs

Key Takeaway is that the entire data workflow must work well together so that businesses do not run into bottlenecks or create data silos

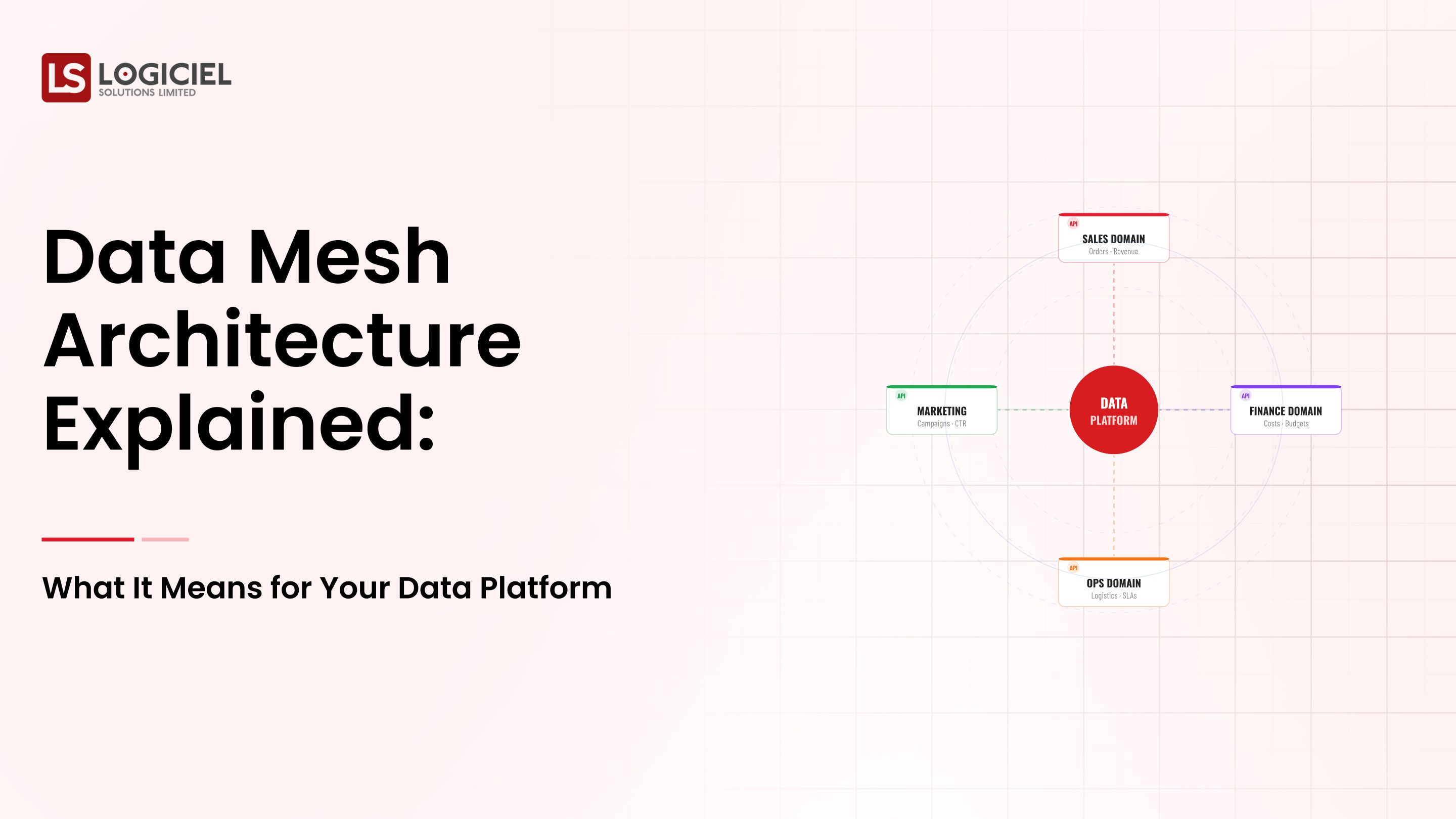

Modern Data Infrastructure Architecture

Modern Data Infrastructure is no longer geared towards one dimensional processes; Instead, it encompasses many different types of interconnections that are known as "modular" or "event-driven" infrastructures

Typical Work Flow for Data Processing:

- Ingesting data from different sources

- Storing Data in Lake or Warehouse

- Processing and Transforming Data

- Distributing Data for Use in Analytics or Other Applications

Major Architectural Patterns for Data Architectures Are:

- Data Lake Patterns - They store large volumes of unstructured data

- Data Warehouse Patterns - They store structured data in a relational database

- Lakehouse Patterns - They are hybrid structures that contain the best features of both

- Real-Time Data Infrastructure - Provides immediate processing of data

Data Infrastructure for AI & Advanced Analytics

Organizations that are invested in or focused on becoming "AI-first" require a higher level of maturity concerning their data infrastructure

Changes to an Organization's Infrastructure When They Start Using AI:

As the volume of data increases, there will be higher demands regarding the quality of the data and stricter latency requirements.

To support this growth, organizations will require:

- Feature Engineering Pipelines that produce reusable datasets that can be utilized across machine learning (ML) models

- Infrastructure to train models on scalable compute environments

- Delivering data versions to keep track of the accepted changes to the datasets

- Delivering real-time data access so that inference can be performed at scale

Example

A recommendation engine relies upon:

- Real-time user data

- Historical user behavioral data

- Ongoing updates to the recommendation engine models

If an organization cannot provide a proper data infrastructure, this type of application will fail completely.

The main takeaway is that AI is only strong as the underlying infrastructure that supports it.

How do I choose a data infrastructure solution?

This may be one of the most significant decisions made by a person with this responsibility.

Step 1: Define use case for the data

- Are you building analytics or AI-based systems?

- Do you require real-time metrics processing?

Step 2: Are you able to scale your solution?

Your system needs to grow with you!

Step 3: Assess expected costs

Step 4: Do the tools you use integrate seamlessly?

Step 5: Ensure you prioritize observability

Step 6: Assess resource availability

Example: A start-up using a managed service like Snowflake versus a Fortune 1000 company building its own infrastructure using AWS.

The best solution is one that finds the balance between scaling, cost-effectiveness and ensuring there are sufficient resources on the team to support operational needs will be the most successful solutions.

Best Practices for Building Scalable Data Infrastructure

1. Build Modularly & Reusably

2. Establish strong data governance early

3. Implement Automation

4. Monitoring

5. Cost Optimization

6. Quality Assurance

7. Cloud-Native Technologies

Insight: Organizations that successfully deploy a strong Data Governance model may see error rates decreased up to 30%.

Takeaway: Ability to Scale is due to being disciplined in how you design a system; having the right tools alone are not sufficient.

Some Common Problems for Data Infrastructure

1. Data Silos

2. Data Pipeline Failures

3. Poor Data Quality

4. Increased Cost

5. Fragmented Tool Sets

6. No Standards

Solution Approach:

- Centralized Data Platform

- Standardized Workflows

- Investments in Observability Technologies

Takeaway: Most of the problems created in a company with the Data Infrastructure is due to poor system design; there are typically enough tools to address the issues.

Real-World Example of Scaling Data Infrastructure

Issues

- Data pipeline failures

- Delayed analytics

- High Cloud Expense

Solution

- Migrated to a Lakehouse Architecture

- Implemented Orchestration through Airflow

- Implemented dbt as Part of Transformation Process

- Optimized storage using different Tiers of Storage

Results

- 40% Faster Data Processing Time

- 30% Decrease in Cost

- Ability to perform Real-time Analytics

Conclusion: Data Infrastructure's Future

Data infrastructure is changing quickly.

From batch data pipelines to real-time streaming systems; to warehouses to lakebeds; and from analytic-based systems to AI-driven systems, the Data Engineer Lead's role has changed from just managing data to building out systems that can support intelligence at scale.

At Logiciel Solutions we assist technology leaders in designing AI-based infrastructures to replace fragmented data systems with scalable and reliable platforms, which in turn allows product delivery times to be reduced as well operational complexity to be minimised.

If you desire to modernise your data infrastructures and create AI-reliable platforms, now is the time.

Evaluation Differnitator Framework

Why great CTOs don’t just build they evaluate. Use this framework to spot bottlenecks and benchmark performance.