Deploying AI agents is an engineering initiative. Scaling them is an operational transformation. Justifying them at the executive and board level is a strategic exercise.

Many organizations adopt AI agents because competitors are experimenting with them or because productivity gains appear obvious at a surface level. However, sustainable adoption requires disciplined ROI modeling. Without quantifiable value, AI initiatives remain experimental and vulnerable to budget scrutiny.

For CTOs and executive teams, the central question is not whether AI agents are powerful. It is whether they generate measurable leverage relative to cost, risk, and complexity.

This guide explores how to model ROI for AI agents, communicate value at the board level, distinguish productivity gains from strategic advantage, and design a long-term AI-first strategy that compounds rather than plateaus.

Evaluation Differentiator Framework

Why great CTOs don’t just build: they evaluate. Use this framework to spot bottlenecks and benchmark performance.

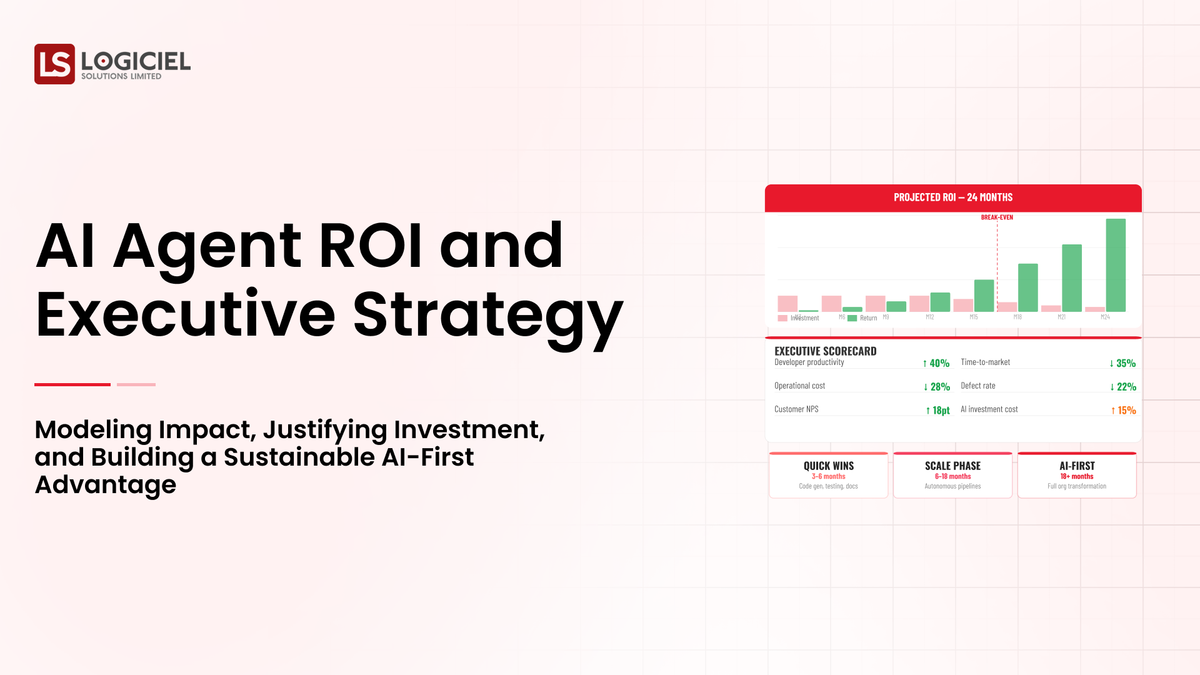

The Three Layers of AI Agent ROI

AI agent ROI operates across three distinct layers.

The first layer is productivity compression. This includes measurable reductions in repetitive cognitive tasks such as pull request review, documentation drafting, log analysis, and support ticket triage.

The second layer is operational efficiency. This includes reduced mean time to resolution for incidents, decreased backlog accumulation, improved ticket routing accuracy, and faster iteration cycles.

The third layer is strategic compounding advantage. This includes accelerated feature velocity, increased innovation bandwidth, and improved system resilience.

Organizations often focus only on the first layer because it is easiest to measure. However, long-term advantage emerges primarily from the second and third layers.

Understanding this layered model is essential for accurate ROI assessment.

Modeling Productivity Compression

Productivity compression is the most immediate and tangible benefit of AI agents.

Consider an engineering team processing four hundred pull requests per month. If average review time per pull request is thirty minutes, the team spends two hundred hours per month on review. If an AI review layer reduces human review time by twenty-five percent, the team saves fifty hours per month.

If the average fully loaded cost of an engineer is one hundred dollars per hour, this represents five thousand dollars per month in time value.
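The arithmetic is easy to sanity-check in a few lines. The sketch below is in Python; `productivity_compression` is a hypothetical helper name, and the inputs are the figures from the example above. It computes only the salary-equivalent value, not the value of how the saved time is redeployed.

```python
def productivity_compression(prs_per_month: int,
                             review_minutes_per_pr: float,
                             reduction_fraction: float,
                             loaded_cost_per_hour: float) -> tuple[float, float]:
    """Return (hours saved per month, time value of those hours per month)."""
    # Baseline human review effort, converted from minutes to hours.
    baseline_hours = prs_per_month * review_minutes_per_pr / 60
    # Hours removed by the AI review layer.
    hours_saved = baseline_hours * reduction_fraction
    # Salary-equivalent value only; ignores how the hours are reinvested.
    return hours_saved, hours_saved * loaded_cost_per_hour


hours, value = productivity_compression(400, 30, 0.25, 100)
print(f"{hours:.0f} hours saved, ${value:,.0f} per month")
# prints: 50 hours saved, $5,000 per month
```

Keeping the inputs as parameters makes it easy to rerun the model with your own pull request volume and a measured, rather than assumed, reduction rate.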

However, ROI modeling should not stop at salary equivalence. The real question is how those saved hours are redeployed.

If the time is reinvested into feature development, product velocity increases. If reinvested into refactoring or reliability improvements, system quality improves.

Productivity compression becomes meaningful only when the reinvestment strategy is clear.

Modeling Operational Efficiency Gains

Operational ROI extends beyond individual productivity.

Consider incident response workflows. If agent-assisted log analysis and remediation suggestions reduce mean time to resolution from two hours to ninety minutes, each incident is resolved thirty minutes sooner, a twenty-five percent reduction, and that saving accumulates across every incident over the course of a year.

Reduced downtime improves customer experience and retention. It may also reduce SLA penalty exposure.
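A minimal sketch of the downtime calculation follows. It assumes a hypothetical volume of ten incidents per month and assumes each incident's customer-facing downtime equals its resolution time; `downtime_hours_avoided` is an illustrative name.

```python
def downtime_hours_avoided(incidents_per_month: float,
                           mttr_before_minutes: float,
                           mttr_after_minutes: float,
                           months: int = 12) -> float:
    """Cumulative downtime avoided over `months`, in hours.

    Assumes each incident's customer-facing downtime equals its resolution time.
    """
    minutes_saved_per_incident = mttr_before_minutes - mttr_after_minutes
    return incidents_per_month * minutes_saved_per_incident * months / 60


# Hypothetical volume: 10 incidents per month, MTTR from 120 to 90 minutes.
print(downtime_hours_avoided(10, 120, 90))  # prints 60.0
```

Multiplying the result by an internal estimate of what an hour of downtime costs turns it into a figure that can sit alongside the productivity numbers.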

Similarly, support ticket triage accuracy may increase through AI categorization, reducing misrouting and accelerating resolution.
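Triage gains can be modeled the same way. In this sketch the ticket volume, misrouting rates, and rework time are hypothetical placeholders, and `rework_hours_saved` is an illustrative name; measured before-and-after misrouting rates should replace them.

```python
def rework_hours_saved(tickets_per_month: int,
                       misroute_rate_before: float,
                       misroute_rate_after: float,
                       rework_hours_per_misroute: float) -> float:
    """Monthly handling hours no longer spent correcting misrouted tickets."""
    misroutes_avoided = tickets_per_month * (misroute_rate_before - misroute_rate_after)
    return misroutes_avoided * rework_hours_per_misroute


# Hypothetical inputs: 2,000 tickets per month, misrouting falls from 12% to 5%,
# and each misroute costs half an hour of extra handling.
print(f"{rework_hours_saved(2000, 0.12, 0.05, 0.5):.0f} hours per month")
# prints: 70 hours per month
```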

Operational efficiency gains compound across customer interactions.

These gains are harder to measure than productivity compression but often have larger strategic impact.

Measuring Strategic Compounding Advantage

Strategic ROI is less immediate but more transformative.

When engineering teams spend less time on repetitive cognitive tasks, they can allocate more time to innovation. Faster iteration cycles enable earlier experimentation and quicker validation of product hypotheses.

Over time, compressed development cycles enable competitive differentiation.

Strategic compounding advantage emerges from increased velocity and resilience rather than direct cost savings.

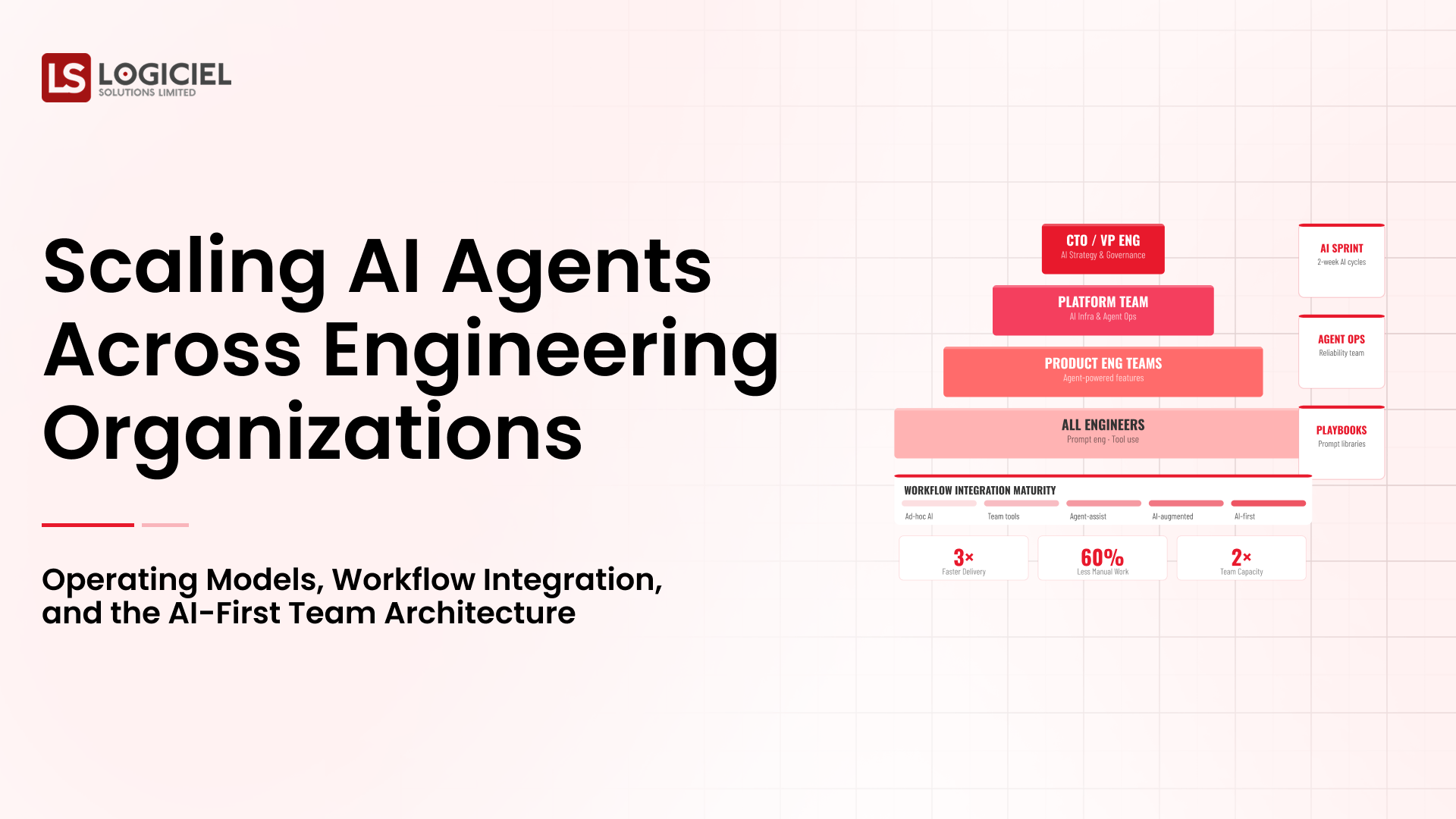

Boards often focus on cost justification. CTOs must reframe AI agents as velocity multipliers rather than headcount reducers.

Avoiding the Headcount Replacement Narrative

One of the most common misconceptions is that AI agents replace engineers.

This framing is strategically flawed.

Agents compress repetitive work. They do not replace architectural thinking, system design, or domain expertise.

Replacing engineers with AI increases risk. Augmenting engineers with AI increases leverage.

The objective is not to reduce team size but to increase output per engineer sustainably.

This distinction is critical for executive alignment and cultural stability.

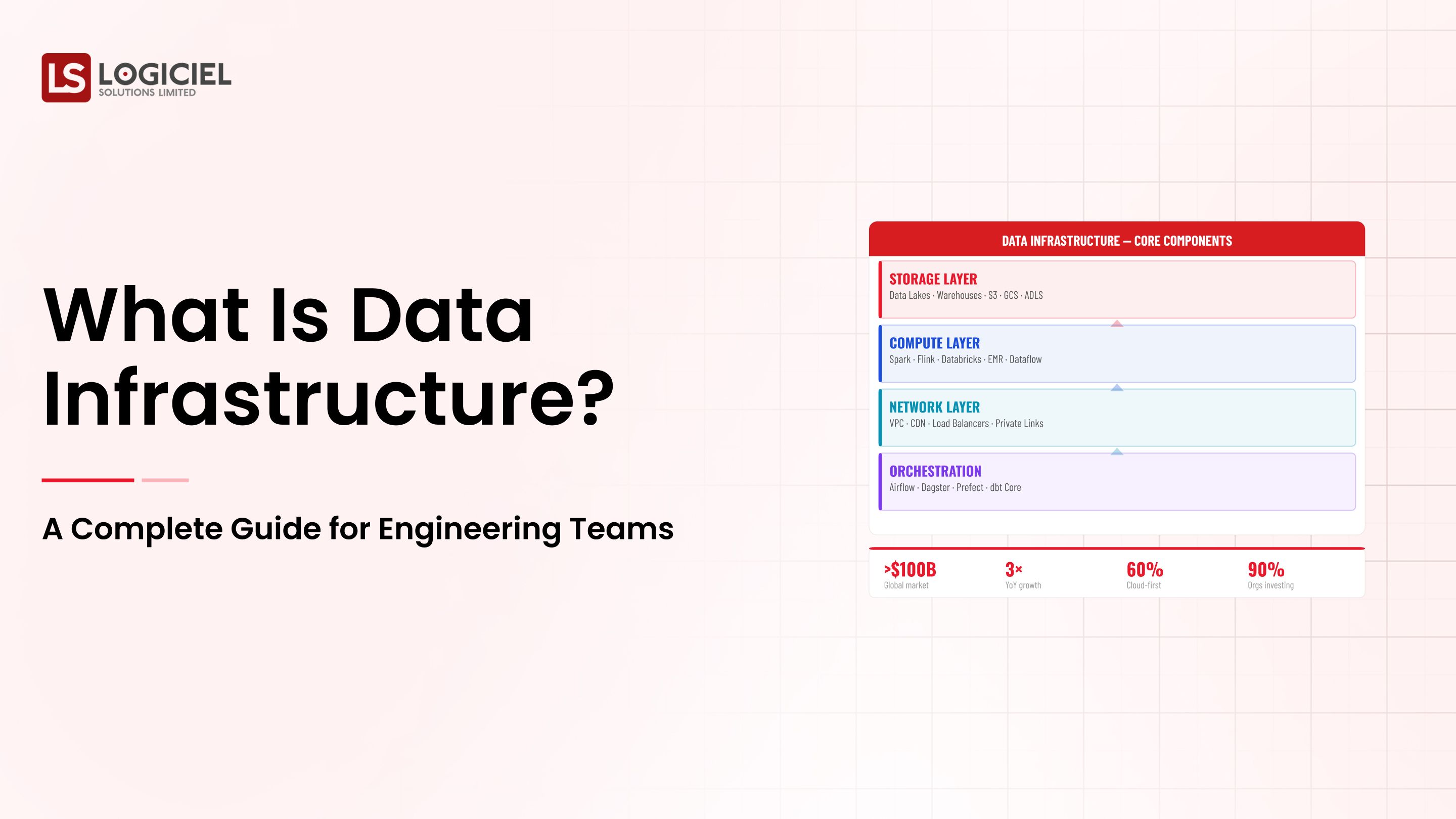

Cost Modeling and Economic Sustainability

ROI models must account for cost amplification.

Multi-step reasoning consumes tokens. Reflection loops increase compute usage. Retrieval memory adds storage and processing overhead.

Cost should be modeled per completed workflow rather than per prompt.
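Per-prompt pricing hides two things: a single workflow may span many model calls (planning, tool use, reflection), and failed or abandoned runs still consume tokens. The sketch below divides total spend across all runs by the number of runs that actually completed. It assumes your own instrumentation already sums token usage per run; `WorkflowRun`, its fields, and the per-1,000-token prices are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WorkflowRun:
    """Token usage summed across every step of one agent run."""
    input_tokens: int
    output_tokens: int
    completed: bool  # False for runs that failed, timed out, or were abandoned


def cost_per_completed_workflow(runs: list[WorkflowRun],
                                usd_per_1k_input: float,
                                usd_per_1k_output: float) -> float:
    """Total spend across all runs, including failures, per completed workflow."""
    total_usd = sum(r.input_tokens / 1000 * usd_per_1k_input
                    + r.output_tokens / 1000 * usd_per_1k_output
                    for r in runs)
    completed = sum(1 for r in runs if r.completed)
    if completed == 0:
        raise ValueError("no completed runs; cost per completion is undefined")
    return total_usd / completed


# Hypothetical prices and usage; substitute your provider's actual rates.
runs = [
    WorkflowRun(12_000, 3_000, True),
    WorkflowRun(20_000, 5_000, False),  # a failed run still costs money
    WorkflowRun(8_000, 2_000, True),
]
print(f"${cost_per_completed_workflow(runs, 0.003, 0.015):.3f} per completed workflow")
# prints: $0.135 per completed workflow
```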

For example, if an agent reduces support handling time by thirty percent but doubles token consumption during high-volume periods, the economic tradeoff must be understood.
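The tradeoff in that example reduces to a monthly break-even comparison between labor value saved and token spend. The sketch below is one way to frame it. Every input figure is hypothetical, "doubles" is interpreted as relative to the agent's normal per-ticket token cost, and `peak_share` (the fraction of monthly volume handled during high-volume periods) is an assumed parameter, not something the scenario specifies.

```python
def net_monthly_benefit(tickets: int,
                        handling_minutes: float,
                        time_reduction: float,
                        loaded_cost_per_hour: float,
                        token_cost_per_ticket: float,
                        peak_share: float,
                        peak_token_multiplier: float) -> float:
    """Labor value saved minus agent token spend, per month."""
    labor_saved = (tickets * handling_minutes / 60
                   * time_reduction * loaded_cost_per_hour)
    # Tickets outside peak periods consume the baseline token cost.
    off_peak_cost = tickets * (1 - peak_share) * token_cost_per_ticket
    # During high-volume periods, token consumption is multiplied.
    peak_cost = tickets * peak_share * token_cost_per_ticket * peak_token_multiplier
    return labor_saved - (off_peak_cost + peak_cost)


# Hypothetical: 5,000 tickets, 12 minutes each, 30% faster handling, $50/hour,
# $0.40 of tokens per ticket, a quarter of volume in peaks where usage doubles.
print(f"${net_monthly_benefit(5000, 12, 0.30, 50, 0.40, 0.25, 2.0):,.0f} per month")
# prints: $12,500 per month
```

Because both terms scale linearly with ticket volume in this model, the sign of the result depends on per-ticket economics; a fixed platform cost or a nonlinear peak penalty would need extra terms.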

Cost ceilings, loop limits, and caching strategies reduce runaway expense.
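The three controls can live in one small wrapper around the model call. This is a sketch under stated assumptions: `GuardedAgentLoop` and `BudgetExceeded` are hypothetical names, `call_model` stands in for whatever client you use and is assumed to return the response together with the call's cost in US dollars, and the cache matches prompts exactly.

```python
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when an agent run hits its loop limit or cost ceiling."""


class GuardedAgentLoop:
    """Wraps a model call with a step limit, a cost ceiling, and a response cache."""

    def __init__(self, call_model: Callable[[str], tuple[str, float]],
                 max_steps: int, max_cost_usd: float) -> None:
        self.call_model = call_model  # assumed to return (response, cost in USD)
        self.max_steps = max_steps
        self.max_cost_usd = max_cost_usd
        self.cache: dict[str, str] = {}
        self.steps = 0
        self.spent_usd = 0.0

    def step(self, prompt: str) -> str:
        # Loop limit: every step counts, including cache hits, so a run that
        # keeps repeating the same prompt still terminates.
        if self.steps >= self.max_steps:
            raise BudgetExceeded(f"loop limit of {self.max_steps} steps reached")
        self.steps += 1
        # Cache: identical prompts are answered without a new billable call.
        if prompt in self.cache:
            return self.cache[prompt]
        # Cost ceiling: checked before each call, so spend can overshoot the
        # ceiling by at most the cost of the final call.
        if self.spent_usd >= self.max_cost_usd:
            raise BudgetExceeded(f"cost ceiling of ${self.max_cost_usd:.2f} reached")
        response, cost = self.call_model(prompt)
        self.spent_usd += cost
        self.cache[prompt] = response
        return response


# Stand-in for a real model client: echoes the prompt at a flat $0.05 per call.
loop = GuardedAgentLoop(lambda p: (p.upper(), 0.05), max_steps=3, max_cost_usd=1.00)
loop.step("summarize logs")
loop.step("summarize logs")  # served from cache, no extra spend
print(loop.steps, f"${loop.spent_usd:.2f}")  # prints: 2 $0.05
```

Exact-match caching is only safe when an identical prompt should yield an identical answer; workflows that depend on fresh data need a cache expiry or should bypass the cache.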

Economic sustainability determines whether AI initiatives scale or stagnate.

Risk-Adjusted ROI Assessment

AI agent deployment introduces risk alongside opportunity.

Prompt injection, tool misuse, data leakage, and behavioral drift must be factored into ROI models.

Risk-adjusted ROI includes mitigation investment.

For example, building AI Security Posture Management frameworks incurs upfront cost but reduces long-term exposure.
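One way to make risk-adjusted ROI concrete is to treat each risk as an expected annual loss (probability times impact) and compare ROI with and without mitigation. In the sketch below, the `Risk` fields, probabilities, impacts, and costs are all hypothetical, and mitigation is assumed to lower incident probability rather than impact.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    annual_probability: float     # likelihood of a material incident per year, unmitigated
    mitigated_probability: float  # likelihood once controls are in place
    impact_usd: float             # estimated cost if the incident occurs


def expected_annual_loss(risks: list[Risk], mitigated: bool) -> float:
    """Sum of probability times impact across all risks."""
    return sum((r.mitigated_probability if mitigated else r.annual_probability)
               * r.impact_usd for r in risks)


def risk_adjusted_roi(gross_benefit: float, operating_cost: float,
                      mitigation_cost: float, risks: list[Risk],
                      mitigated: bool) -> float:
    """(benefit - cost - expected loss) / cost; mitigation spend counts only if applied."""
    cost = operating_cost + (mitigation_cost if mitigated else 0.0)
    net = gross_benefit - cost - expected_annual_loss(risks, mitigated)
    return net / cost


# Hypothetical annual figures, for illustration only.
risks = [
    Risk("prompt injection", 0.20, 0.06, 500_000),
    Risk("data leakage", 0.10, 0.02, 1_000_000),
]
for mitigated in (False, True):
    roi = risk_adjusted_roi(600_000, 150_000, 50_000, risks, mitigated)
    print(f"mitigated={mitigated}: ROI {roi:.2f}")
# prints:
# mitigated=False: ROI 1.67
# mitigated=True: ROI 1.75
```

With these illustrative numbers, the mitigation spend raises the risk-adjusted return even though it increases total cost, which is the case a security investment needs to make to a board.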

Boards evaluate risk-adjusted return, not gross efficiency gains.

CTOs must communicate both benefit and containment strategy.

Executive-Level Framing for Boards

When presenting AI agent initiatives at the board level, language matters.

Avoid technical descriptions of token efficiency or prompt engineering.

Frame AI agents as infrastructure modernization initiatives.

Explain how they compress cycle time, increase resilience, and enhance competitive responsiveness.

Present phased adoption plans with measurable milestones.

Demonstrate guardrail maturity and governance alignment.

Boards seek clarity on risk, cost predictability, and strategic positioning.

Position AI agents as structured leverage, not speculative experimentation.

Building a Multi-Year AI Strategy

Short-term experimentation rarely produces sustained advantage.

A multi-year AI strategy should define progressive milestones.

Year one may focus on bounded assistive workflows.

Year two may expand to operational automation with strict guardrails.

Year three may embed agents across the product lifecycle and infrastructure management.

Each phase should include measurable metrics for productivity, reliability, and cost stability.
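A phase gate can make those metrics explicit before the organization advances to the next year's scope. The sketch below assumes one illustrative metric per dimension (hours saved per engineer per month, change failure rate, and variance against budget); the class names and thresholds are hypothetical and should come from your own baselines.

```python
from dataclasses import dataclass


@dataclass
class PhaseMetrics:
    hours_saved_per_engineer_month: float  # productivity
    change_failure_rate: float             # reliability: share of changes causing failures
    cost_variance: float                   # cost stability: 0.10 means 10% off budget


@dataclass
class PhaseGate:
    name: str
    min_hours_saved: float
    max_change_failure_rate: float
    max_cost_variance: float

    def unmet_criteria(self, m: PhaseMetrics) -> list[str]:
        """Return the criteria that block advancing to the next phase."""
        unmet = []
        if m.hours_saved_per_engineer_month < self.min_hours_saved:
            unmet.append("productivity")
        if m.change_failure_rate > self.max_change_failure_rate:
            unmet.append("reliability")
        if abs(m.cost_variance) > self.max_cost_variance:
            unmet.append("cost stability")
        return unmet


# Hypothetical year-one gate and measured results.
year_one = PhaseGate("bounded assistive workflows", 4.0, 0.15, 0.10)
print(year_one.unmet_criteria(PhaseMetrics(5.5, 0.12, 0.18)))
# prints: ['cost stability']
```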

AI strategy must integrate with broader digital transformation goals.

Competitive Positioning and Market Differentiation

AI agents can create differentiation when embedded into customer-facing workflows.

For example, SaaS platforms that provide AI-assisted configuration, diagnostics, or support may deliver faster time-to-value for customers.

However, differentiation depends on reliability.

Customers will not tolerate instability introduced by unbounded reasoning.

Competitive advantage requires disciplined architecture.

Cultural Alignment at Executive Level

Executive alignment determines long-term success.

CTOs, CIOs, and CPOs must align on AI governance policies.

Finance leaders must understand cost variability models.

Security leaders must oversee guardrail maturity.

Without cross-executive alignment, AI initiatives fragment.

Scaling requires unified vision.

Avoiding Plateau After Initial Gains

Many organizations experience initial productivity gains and then plateau.

This occurs when agents remain isolated tools rather than integrated systems.

Continuous optimization is required.

Tool schemas must evolve. Orchestration logic must be refined. Memory systems must improve. Governance frameworks must adapt.

AI advantage compounds only when continuously engineered.

Measuring Maturity Over Time

AI maturity can be evaluated across several dimensions.

Architectural maturity includes structured memory, tool discipline, and orchestration rigor.

Observability maturity includes reasoning trace logging and anomaly detection.

Governance maturity includes autonomy thresholds and risk classification.

Economic maturity includes cost modeling and budget attribution.

Tracking maturity helps executives evaluate progress beyond superficial metrics.
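A simple scorecard can track these four dimensions quarter over quarter. This sketch assumes a 1-to-5 self-assessment scale, which the framework above does not prescribe; `maturity_summary` is a hypothetical helper.

```python
DIMENSIONS = ("architectural", "observability", "governance", "economic")


def maturity_summary(scores: dict[str, int]) -> tuple[float, str]:
    """Return (average score, weakest dimension) for scores on a 1-5 scale."""
    for d in DIMENSIONS:
        if d not in scores:
            raise ValueError(f"missing score for {d}")
        if not 1 <= scores[d] <= 5:
            raise ValueError(f"score for {d} must be between 1 and 5")
    average = sum(scores[d] for d in DIMENSIONS) / len(DIMENSIONS)
    # Reporting the weakest dimension keeps a strong area from masking a weak one.
    weakest = min(DIMENSIONS, key=lambda d: scores[d])
    return average, weakest


# Hypothetical quarterly self-assessment.
print(maturity_summary({"architectural": 3, "observability": 2,
                        "governance": 3, "economic": 1}))
# prints: (2.25, 'economic')
```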

SaaS-Specific ROI Considerations

For SaaS organizations, ROI extends to customer lifetime value.

Faster support resolution improves retention. Reduced downtime increases trust. Faster feature delivery enhances competitive positioning.

AI agents embedded in internal workflows indirectly impact revenue growth.

Tenant-level cost attribution ensures profitability analysis remains accurate.
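Tenant-level attribution requires each usage record to carry a tenant identifier. The sketch below assumes such records exist as `(tenant_id, tokens)` pairs and uses a single flat, hypothetical price per 1,000 tokens; the function names are illustrative.

```python
from collections import defaultdict


def attribute_costs(usage_events: list[tuple[str, int]],
                    usd_per_1k_tokens: float) -> dict[str, float]:
    """Sum agent token spend per tenant from (tenant_id, tokens) usage records."""
    costs: defaultdict[str, float] = defaultdict(float)
    for tenant_id, tokens in usage_events:
        costs[tenant_id] += tokens / 1000 * usd_per_1k_tokens
    return dict(costs)


def tenant_margins(costs: dict[str, float],
                   revenue: dict[str, float]) -> dict[str, float]:
    """Revenue minus attributed agent cost, for every tenant seen in either map."""
    tenants = set(costs) | set(revenue)
    return {t: revenue.get(t, 0.0) - costs.get(t, 0.0) for t in sorted(tenants)}


# Hypothetical usage records and monthly revenue.
events = [("acme", 120_000), ("globex", 40_000), ("acme", 80_000), ("initech", 30_000)]
costs = attribute_costs(events, 0.01)
print(tenant_margins(costs, {"acme": 50.0, "globex": 10.0}))
```

In the example, `initech` appears in usage but not in revenue, so it shows a negative margin. Surfacing tenants like that, such as trial accounts, is one of the reasons to attribute cost per tenant.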

SaaS ROI modeling must include both internal efficiency and customer-facing value.

Long-Term Strategic Implications

The long-term impact of AI agents is structural leverage.

Organizations that embed reasoning systems into disciplined architectures will experience compressed development cycles, enhanced resilience, and improved decision velocity.

Those that experiment without governance will experience sporadic gains followed by instability.

Strategic advantage emerges from disciplined integration.

Closing Perspective on Executive Strategy

AI agents are not short-term productivity hacks. They are infrastructure investments.

ROI must be modeled across productivity compression, operational efficiency, and strategic compounding advantage.

Cost and risk must be managed through guardrails and observability.

Boards must view AI initiatives as modernization strategies rather than experimental spending.

For CTOs, the objective is sustainable leverage.

Agents amplify the strategic direction of the organization.

If guided by disciplined architecture and governance, they become competitive accelerators.

If deployed without structure, they become cost centers.

Executive clarity determines which path unfolds.
