A product launch is delayed. Not because the feature isn’t built, but because the data behind it isn’t reliable. Dashboards don’t match. Pipelines break silently. Stakeholders stop trusting the numbers.

This is where most organizations start looking for new tools. But the real problem is rarely tooling. It is a lack of clarity in their data infrastructure strategy and, more importantly, their current level of maturity.

If you are a CTO or VP of Engineering, the challenge is not deciding what to build next. It is understanding where you are today.

This guide is designed to help you do exactly that.

By the end of this article, you will:

- Understand why auditing your current data architecture is critical

- Learn the key dimensions that define a mature data infrastructure strategy

- Build a practical roadmap to move from fragmented systems to scalable, AI-ready infrastructure

Why Auditing Your Data Infrastructure Strategy Matters

Most teams respond to data failures the same way.

They:

- Add new pipelines

- Introduce more tools

- Increase system complexity

But they rarely step back to ask a more important question:

What is actually broken?

This is the core issue.

Without a structured audit, organizations build on weak foundations. The result is predictable:

- Rework within months

- Rising operational costs

- Fragmented systems that are harder to maintain

A strong data infrastructure strategy starts with clarity.

An audit provides three critical insights:

1. Where you are today

Not assumptions. Not opinions. A clear, evidence-based view of your current state.

2. What is not working

Whether it is reliability gaps, poor observability, or scalability limits.

3. What to fix first

Prioritization based on impact, not guesswork.

This is not about assigning blame. It is about removing ambiguity.

The value of a structured audit

Teams that invest about two weeks in a structured audit can often avoid:

- 6 to 12 months of rework

- Significant infrastructure cost

- Multiple failed implementation cycles

Example:

Without audit: Teams rebuild pipelines repeatedly

With audit: Teams fix root causes and stabilize systems

Key insight:

You cannot scale what you do not understand. A mature data infrastructure strategy begins with a clear baseline.

Dimension 1: Reliability - The Foundation of Trust

Reliability is the most visible indicator of whether your data platform works.

A reliable system:

- Produces accurate data

- Delivers consistent outputs

- Recovers quickly from failures

Without reliability, everything else becomes irrelevant.

Metrics that define reliability

To assess reliability, focus on measurable indicators:

- SLA adherence rate

- Frequency of incidents

- Mean Time to Repair (MTTR)

- Volume of data quality failures

These metrics provide a clear signal of system health.
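As a rough illustration, two of these metrics can be computed from basic run and incident records. The sketch below is a minimal example, not a reference implementation: the `Incident` record shape, the function names, and the sample numbers are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    """One pipeline incident (hypothetical record shape)."""
    detected_at: datetime
    resolved_at: datetime


def sla_adherence_rate(runs_on_time: int, total_runs: int) -> float:
    """Fraction of pipeline runs that met their SLA."""
    if total_runs == 0:
        return 0.0
    return runs_on_time / total_runs


def mean_time_to_repair(incidents: list[Incident]) -> timedelta:
    """Average time between detection and resolution."""
    if not incidents:
        return timedelta(0)
    total = sum((i.resolved_at - i.detected_at for i in incidents), timedelta(0))
    return total / len(incidents)


# Illustrative sample data, not real measurements
incidents = [
    Incident(datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 11, 0)),    # 2 hours
    Incident(datetime(2026, 1, 12, 14, 0), datetime(2026, 1, 12, 18, 0)),  # 4 hours
]

print(sla_adherence_rate(runs_on_time=285, total_runs=300))  # 0.95
print(mean_time_to_repair(incidents))                        # 3:00:00
print(len(incidents))                                        # incident count for the period
```

Tracking these numbers per pipeline over time is usually more informative than a single platform-wide figure.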

Indicators of low reliability

You likely have a reliability problem if:

- Business teams manually verify data

- Reports show inconsistent numbers

- Pipelines fail frequently

- Engineers spend more time fixing than building

These are not edge cases. They are systemic issues.

What high-performing teams do differently

Leading teams treat reliability as a product requirement.

They:

- Define SLAs for every pipeline

- Automate monitoring and alerting

- Continuously validate data quality

They do not rely on manual checks.
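Continuous validation can start small. The following is a minimal sketch of an automated check that runs after a pipeline step and reports failures instead of relying on someone spotting them in a dashboard. The function name `validate_rows`, the `orders` sample, and the required columns are hypothetical; a production setup would typically use a dedicated data quality tool and a real alerting channel.

```python
def validate_rows(rows: list[dict], required: list[str]) -> list[str]:
    """Return a list of human-readable data quality failures."""
    failures = []
    if not rows:
        failures.append("dataset is empty")
    for idx, row in enumerate(rows):
        for column in required:
            if row.get(column) is None:
                failures.append(f"row {idx}: missing {column}")
    return failures


# Illustrative sample: the second order has no amount
orders = [
    {"order_id": 1, "amount": 40.0},
    {"order_id": 2, "amount": None},
]

failures = validate_rows(orders, required=["order_id", "amount"])
if failures:
    # Assumption: a real pipeline would page or notify here instead of printing
    print("ALERT:", failures)
```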

Example of a reliable system

In a mature system:

- Issues are detected immediately

- Alerts trigger before stakeholders notice

- Outputs remain consistent across systems

Cost of low reliability

The cost is not just technical.

It impacts:

- Decision-making speed

- Stakeholder trust

- Engineering productivity

Key insight:

If your data is not trusted, your data infrastructure strategy is failing.

Dimension 2: Observability - Can You Actually See Your System?

Observability determines how quickly your team can detect and resolve issues.

In modern distributed systems, this is no longer optional.

What observability means in practice

A fully observable system allows you to:

- Detect pipeline failures instantly

- Trace data lineage end-to-end

- Monitor freshness and latency

It answers a critical question:

Do you know what is happening before your stakeholders do?
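Freshness monitoring is one of the simpler checks to automate. The sketch below assumes each dataset records a last-updated timestamp and has an agreed maximum age; the dataset names, thresholds, and the `stale_datasets` helper are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone


def stale_datasets(
    last_updated: dict[str, datetime],
    max_age: dict[str, timedelta],
    now: datetime,
) -> list[str]:
    """Return the names of datasets older than their allowed age."""
    return sorted(
        name for name, ts in last_updated.items() if now - ts > max_age[name]
    )


# Fixed "now" so the example is reproducible
now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)

last_updated = {
    "orders": now - timedelta(minutes=20),
    "revenue_daily": now - timedelta(hours=30),
}
max_age = {
    "orders": timedelta(hours=1),
    "revenue_daily": timedelta(hours=24),
}

print(stale_datasets(last_updated, max_age, now))  # ['revenue_daily']
```

Run on a schedule, a check like this lets the data team learn about a stale table before a stakeholder does.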

Key questions to assess observability

- Can you trace any data point back to its source within minutes?

- Do you monitor freshness across all datasets?

- Are failures detected proactively or reported by users?
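To make the first question concrete: if lineage is recorded as a graph of upstream dependencies, tracing a dataset back to its sources is a short graph walk. This is a sketch under that assumption; the `upstream` mapping and dataset names are hypothetical, and real lineage metadata would normally come from an orchestration or catalog tool.

```python
def trace_to_sources(dataset: str, upstream: dict[str, list[str]]) -> set[str]:
    """Walk the lineage graph upstream and return the root sources."""
    sources: set[str] = set()
    seen: set[str] = set()
    stack = [dataset]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        parents = upstream.get(node, [])
        if not parents:
            # No recorded parents: treat this node as a root source
            sources.add(node)
        stack.extend(parents)
    return sources


# Hypothetical lineage: each dataset maps to the datasets it is built from
upstream = {
    "revenue_dashboard": ["revenue_daily"],
    "revenue_daily": ["orders_clean", "refunds_clean"],
    "orders_clean": ["raw.orders"],
    "refunds_clean": ["raw.refunds"],
}

print(sorted(trace_to_sources("revenue_dashboard", upstream)))
# ['raw.orders', 'raw.refunds']
```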

Signals of poor observability

- Problems are discovered by business teams

- Debugging takes hours or days

- Limited visibility into pipeline behavior

These signals indicate reactive operations.

What high-performing teams do

They implement:

- End-to-end monitoring systems

- Automated lineage tracking

- Centralized observability platforms

Their systems surface issues before they escalate.

Why observability matters more in 2026

Modern data systems are:

- Distributed

- Real-time

- AI-driven

Without observability:

- Failures cascade quickly

- Root cause analysis becomes complex

Key insight:

If you cannot see your system, you cannot control it.

Dimension 3: Scalability - Will Your System Grow With You?

Scalability determines whether your system can handle growth without breaking.

This includes:

- Data volume growth

- Increased user demand

- More complex workloads

What scalability actually means

A scalable system:

- Maintains performance as data grows

- Supports more users without degradation

- Minimizes manual intervention

Questions to assess scalability

- Does performance degrade as data volume increases?

- Are there manual steps that slow down pipelines?

- Do you know your system’s capacity limits?
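One way to answer the first question with evidence is to compare throughput across runs at different data volumes. The sketch below flags a pipeline whose throughput at its largest observed volume has dropped noticeably against its smallest. The 20% tolerance, the function names, and the sample run timings are assumptions for illustration only.

```python
def throughput(rows: int, seconds: float) -> float:
    """Rows processed per second for one run."""
    return rows / seconds


def degrades_with_volume(runs: list[tuple[int, float]], tolerance: float = 0.2) -> bool:
    """True if throughput at the largest volume falls more than
    `tolerance` below throughput at the smallest volume."""
    ordered = sorted(runs)  # sort by row count
    baseline = throughput(*ordered[0])
    largest = throughput(*ordered[-1])
    return largest < baseline * (1 - tolerance)


# Illustrative (rows, seconds) measurements, not real benchmarks
runs = [(1_000_000, 100.0), (2_000_000, 210.0), (4_000_000, 640.0)]

print(degrades_with_volume(runs))  # True: 10,000 rows/s drops to 6,250 rows/s
```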

Signals of poor scalability

- Pipelines slow down over time

- Engineers spend a growing share of their time fixing issues

- Manual processes increase

These are signs of structural limitations.

What high-performing teams do

They:

- Design modular architectures

- Automate repetitive processes

- Optimize resource utilization

They build systems that evolve with demand.

Example of a scalable system

A well-designed system:

- Handles 2–3x current load without issues

- Maintains consistent performance

- Requires minimal manual intervention

Why scalability fails

Most systems fail because:

- They were not designed for growth

- Technical debt accumulates over time

Key insight:

Your growth should not break your system. If it does, your data infrastructure strategy needs to be rethought.

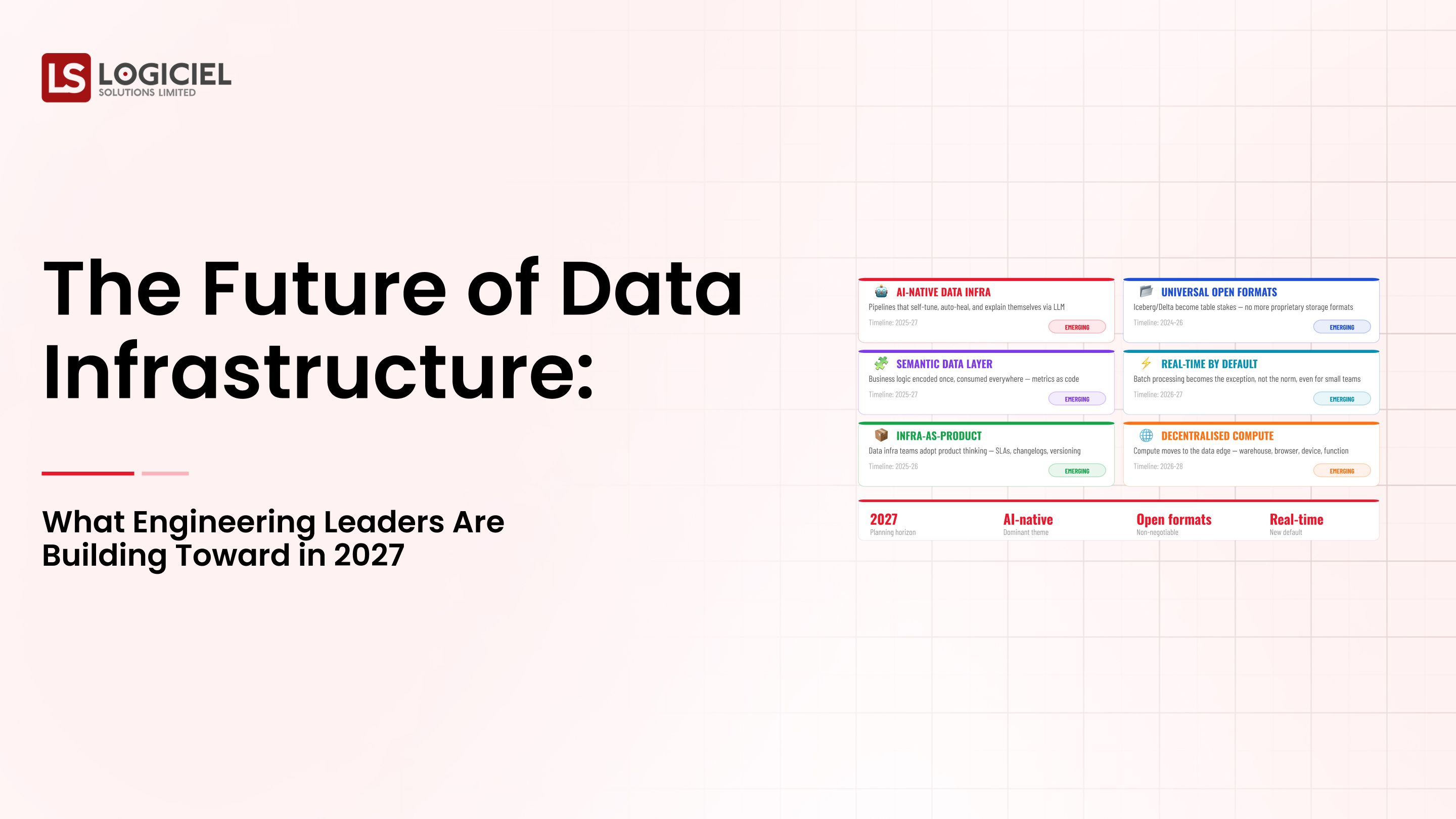

Dimension 4: AI Readiness - Is Your Data Platform Ready for AI?

AI readiness is no longer optional. It is a requirement.

However, most teams overestimate their readiness.

What AI readiness actually requires

A truly AI-ready system supports:

- Feature engineering pipelines

- Model training workflows

- Real-time inference systems

It ensures consistency across all stages.

Key questions to assess AI readiness

- Do you have a centralized feature store?

- Is data consistent across pipelines?

- Can you trace model inputs to their source?

Signals of low AI readiness

- Separate pipelines for ML teams

- Inconsistent data across environments

- Lack of governance and lineage

These issues directly impact model performance.

What high-performing teams do

They:

- Standardize features across models

- Ensure data consistency

- Align data and ML workflows

They treat data infrastructure as the foundation of AI.

Example of an AI-ready system

A mature system:

- Uses shared feature definitions

- Supports multiple models consistently

- Enables reproducibility
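Shared feature definitions can be sketched as a single registry that both training and serving code call, so a feature is computed the same way everywhere. This is a simplified illustration rather than a description of any particular feature store; the `FEATURES` registry, feature names, and record fields are hypothetical.

```python
from typing import Callable

# Single source of truth for feature logic (hypothetical features)
FEATURES: dict[str, Callable[[dict], float]] = {
    "order_value_per_item": lambda r: r["order_total"] / max(r["item_count"], 1),
    "is_repeat_customer": lambda r: 1.0 if r["previous_orders"] > 0 else 0.0,
}


def build_features(record: dict, names: list[str]) -> dict[str, float]:
    """Compute the requested features from the shared definitions."""
    return {name: FEATURES[name](record) for name in names}


record = {"order_total": 90.0, "item_count": 3, "previous_orders": 2}
wanted = ["order_value_per_item", "is_repeat_customer"]

# Training and serving paths use the same definitions, so they agree
training_row = build_features(record, wanted)
serving_row = build_features(record, wanted)
assert training_row == serving_row

print(training_row)  # {'order_value_per_item': 30.0, 'is_repeat_customer': 1.0}
```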

Why AI readiness matters

AI systems depend on reliable data.

Without it:

- Models fail

- Predictions become inconsistent

- Trust declines

Key insight:

The success of AI depends more on infrastructure maturity than model complexity.

Scoring Your Audit and Building a Roadmap

Once you assess each dimension, the next step is turning insights into action.

Step 1: Score each dimension

Use a scale from 1 to 5:

- 1 = Low maturity

- 5 = High maturity

Evaluate:

- Reliability

- Observability

- Scalability

- AI readiness

Step 2: Identify gaps

Focus on the lowest-scoring areas. These represent the highest opportunity for improvement.
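Steps 1 and 2 can be captured in a few lines. The sketch below records a 1 to 5 score per dimension and returns the lowest-scoring ones; the scores shown are made-up sample values and `lowest_scoring` is a hypothetical helper.

```python
def lowest_scoring(scores: dict[str, int]) -> list[str]:
    """Return the dimension(s) with the lowest maturity score."""
    for name, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{name}: score must be between 1 and 5")
    lowest = min(scores.values())
    return sorted(name for name, score in scores.items() if score == lowest)


# Illustrative audit result, not a benchmark
scores = {
    "reliability": 2,
    "observability": 3,
    "scalability": 4,
    "ai_readiness": 2,
}

print(lowest_scoring(scores))  # ['ai_readiness', 'reliability']
```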

Step 3: Prioritize using Impact × Effort

Classify initiatives into:

- High impact, low effort → Quick wins

- High impact, high effort → Strategic initiatives

This ensures efficient resource allocation.
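The classification above can be expressed as a small function. The two high-impact categories come from the list above; the handling of low-impact work as "deprioritize" is an assumption added here, as are the sample initiatives.

```python
def classify(impact: str, effort: str) -> str:
    """Classify an initiative by impact and effort ('high' or 'low')."""
    if impact == "high" and effort == "low":
        return "quick win"
    if impact == "high" and effort == "high":
        return "strategic initiative"
    # Assumption: low-impact work is not covered above, so it is parked
    return "deprioritize"


# Hypothetical initiatives with (impact, effort) ratings
initiatives = {
    "add freshness alerts": ("high", "low"),
    "rebuild ingestion layer": ("high", "high"),
    "rename legacy tables": ("low", "low"),
}

for name, (impact, effort) in initiatives.items():
    print(f"{name}: {classify(impact, effort)}")
```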

Step 4: Build a roadmap

Example roadmap:

- Months 1–2: Fix reliability issues

- Months 3–4: Implement observability

- Months 5–6: Improve scalability and AI readiness

Step 5: Execute in parallel

Balance:

- Quick wins

- Long-term initiatives

This maintains momentum while building long-term capability.

Example outcome

Without structured planning:

- Teams react to problems

With structured planning:

- Teams build systematically

Key insight:

Maturity is achieved through structured execution, not isolated fixes.

Call to Action

At Logiciel, we help engineering leaders assess and improve their data infrastructure strategy with AI-first engineering practices.

Our teams identify maturity gaps, prioritize high-impact improvements, and build systems that scale with your business.

If you are unsure where your data platform stands, start with clarity.

Explore a structured audit or request a proof of concept to identify your next steps.

Frequently Asked Questions

What best practices help optimize an intelligent data infrastructure?

Best practices for an intelligent data infrastructure center on designing and building systems that are reliable, observable, scalable, and AI-ready. Together, they make data dependable, accessible, and usable across analytics and machine learning workstreams.

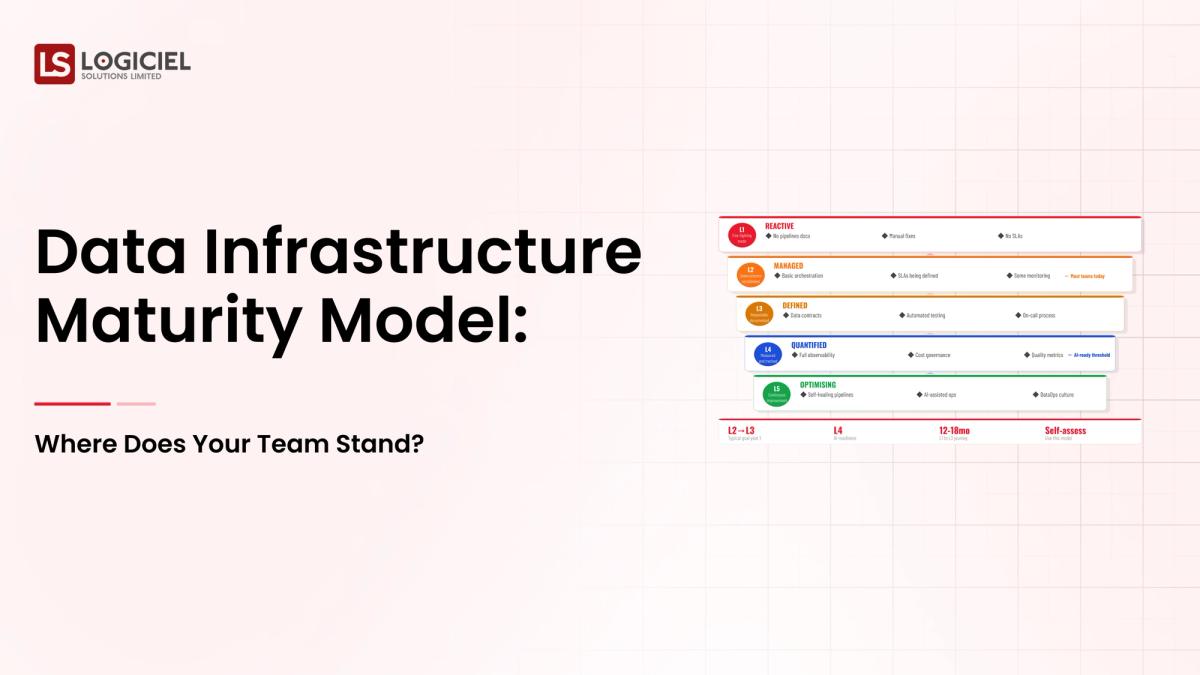

Why is a data infrastructure maturity model necessary?

A data infrastructure maturity model enables engineering leaders to evaluate current capabilities, identify gaps, and prioritize improvements. Without a maturity assessment, teams tend to invest in new technology without addressing the root causes of their foundational problems.

What dimensions are used to evaluate data infrastructure maturity?

Data infrastructure maturity is typically measured across four dimensions:

- Reliability: how trustworthy the data is

- Observability: how visible the system's behavior is

- Scalability: whether the system can support growth in users or data

- AI readiness: whether the system can support the complete machine learning workflow

Each dimension is rated on a scale from ad hoc to optimized.

What is the biggest mistake teams make when scaling their data infrastructure?

The biggest mistake is building new data systems without first auditing the existing ones. This increases complexity, duplicates pipelines, and leads to recurring reliability issues.

How can teams quickly improve the reliability of their data infrastructure?

Teams can quickly improve reliability by:

- Defining SLAs for critical data pipelines

- Implementing automated monitoring and alerts

- Verifying and measuring data quality

These steps can improve reliability quickly, without any significant re-architecting of the data infrastructure.

How does data infrastructure affect the success of AI?

AI is built on a foundation of reliable, consistent, high-quality data. When pipelines are inconsistent, lineage cannot be tracked, and features are not standardized, AI models will fail in production regardless of how sophisticated they are.