Stakeholders no longer have faith in their data.

This is not because teams do not put forth effort.

However, the architecture and the capabilities behind the data are not meeting the needs of these stakeholders.

Dashboards are slow. Pipelines often fail and produce no signals. AI models do not deliver accurate results, and every team creates its own version of a successful solution.

This is the inflection point for data infrastructure and analytics.

As a VP or Head of Data, you are defining the future of your company’s data architecture over the next three to five years. You are no longer simply maintaining data systems.

AI Velocity Blueprint

Measure and multiply engineering velocity using AI-powered diagnostics and sprint-aligned teams.

Present State

Most businesses are functioning with:

- A combination of batch processing and real-time processing

- Multiple data storage solutions (database, cloud storage, live stream solutions)

- Multiple groups sharing responsibility but no single group owns the responsibility

This is all fine and well, but has brought with it:

- Complexity

- Inconsistencies

- Operational Overhead

How Things Have Changed in the Last 12 Months

Many things have changed recently and accelerated the pace of change:

- Reliance on AI has increased greatly

- Expectations for real-time information are now standard

- Volumes of data have grown considerably

Having made these changes to our data systems, we have now identified weaknesses in current systems.

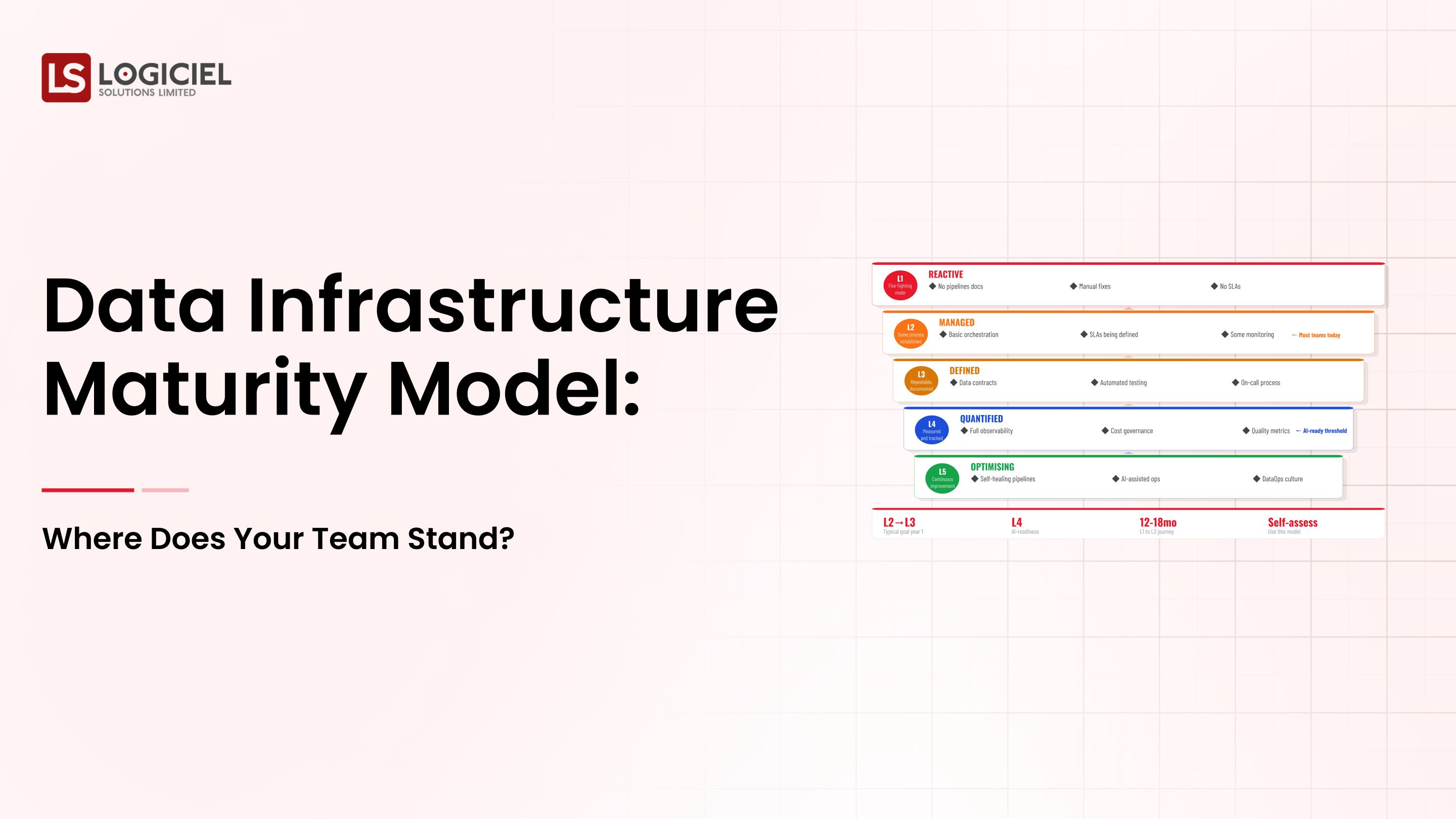

The Disparity Between High Achievers and Everyone

Teams that are high performing:

- Invest in observability

- Establish clear ownership

- Build modular designs

Conversely, many teams:

- Have challenges with reliability

- Do not have visibility into system operations

- Are reactive instead of proactive

Why the Disparity is Important

The disparity between high achieving teams and everyone else is widening.

Teams with strong data infrastructure and analytics:

- Move quickly

- Provide accurate data

- Scale their operations

The teams that are not in this position will fall behind.

Key Insight

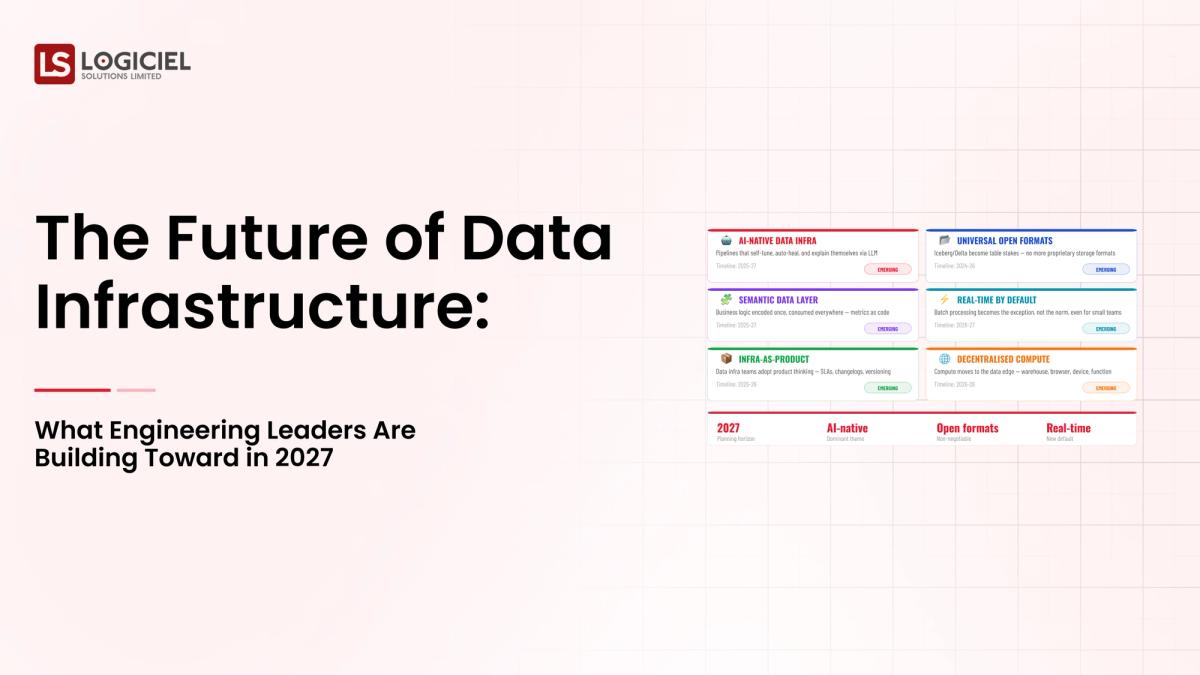

The future is not being equally executed today.

Some teams are now operating in 2027 or beyond.

Section 2: Trend 1: AI-Ready Infrastructure is a Top Priority

AI is no longer an experimental function, but has evolved into an essential part of a business' operations.

What AI Requires from Infrastructure

AI systems require:

- Reliable data processing

- Consistent data structures

- Complete end-to-end data tracking

If an organization does not have the above-mentioned requirements, models created for AI will fail after deployment.

What Teams are Doing

Teams that are forward leaning are:

- Leveraging feature stores

- Tracking end-to-end data flow across data processing systems

- Guaranteeing data is fresh at deployment

Retrofitting VS Designing AI-Friendly Systems

Many organizations are in the process of retroThe Characteristics of an AI-Ready Infrastructure are that Data is versioned, lineage is traceable, pipelines are reliable, and features are reusable.

In conclusion, the success of AI is going to depend more on the quality of infrastructure rather than the quality of models used.

Section 3: Trend Two: The Move from Batch Processing to Real-Time Processing and the associated costs.

Real-Time Processing of Data is becoming a standard practice.

Reasons for teams to move towards Real-Time Processing of Data include the following:

- Personalized Experiences

- Fraud Detection

- Operational Monitoring

The Use of Real-Time Processing of Data introduces:

- New Levels of Complexity

- Increased Amount of Operational Overhead

- New Types of Failures

All Pros/Cons considered, Batch Processing is a better solution for:

- Historical Data Analysis

- Cost-Effective Workloads

- Non-Time Sensitive Data

Some Leading Teams are creating:

- Real-Time Pipelines for capturing Real-Time Information

- Batch Pipelines for capturing Longer-Term Analysis

In conclusion, Real-Time Processing is not going to be a replacement for Batch Processing, but an additional feature of your Infrastructure.

Section 4: Trend 3: Data Contracts are No Longer Considered a Nice-to-Have, They're Required!

Schema Drift has become one of the largest hidden problems in data integrity.

The problems associated with the lack of using Data Contracts are:

- Schema Changes breaking Pipelines

- Losing Trust in Data because of the use of Schema Contracts

The function of Data Contracts is to:

- Define Expectations

- Enforce Schema Consistency

- Improve Reliability of Data

The tools that are used for Data Contracting are:

- Schema Validator tools

- Automated Testing frameworks

- Contract Enforcement tools

Some Commonly Cited concerns regarding Data Contracting

Section 5: 4th Trend: Consolidation (Reduced Tool Use) - Less Tool Scatter

Tool Overload

Having the wrong number of tools creates challenges for most teams.

Effects of Tool Scatter

- Increased cost

- Difficulty linking systems together

- Increased cost to service tools

Teams are Managing Tool Overload by

- Cutting back on tools

- Using integrated tools wherever possible

- Standardizing their processes

How Platforms Are Developing

Platform teams will be:

- Creating enterprise internal platforms

- Building reusable components

- Providing do-it-yourself access for users (self-service)

- Moving to a "built vs. bought" methodology

The Emerging Trend

Teams are tending to use increasingly more integrated solutions and employing much more standardized solutions.

Key Takeaway

A simple approach to doing business is starting to become a competitive advantage.

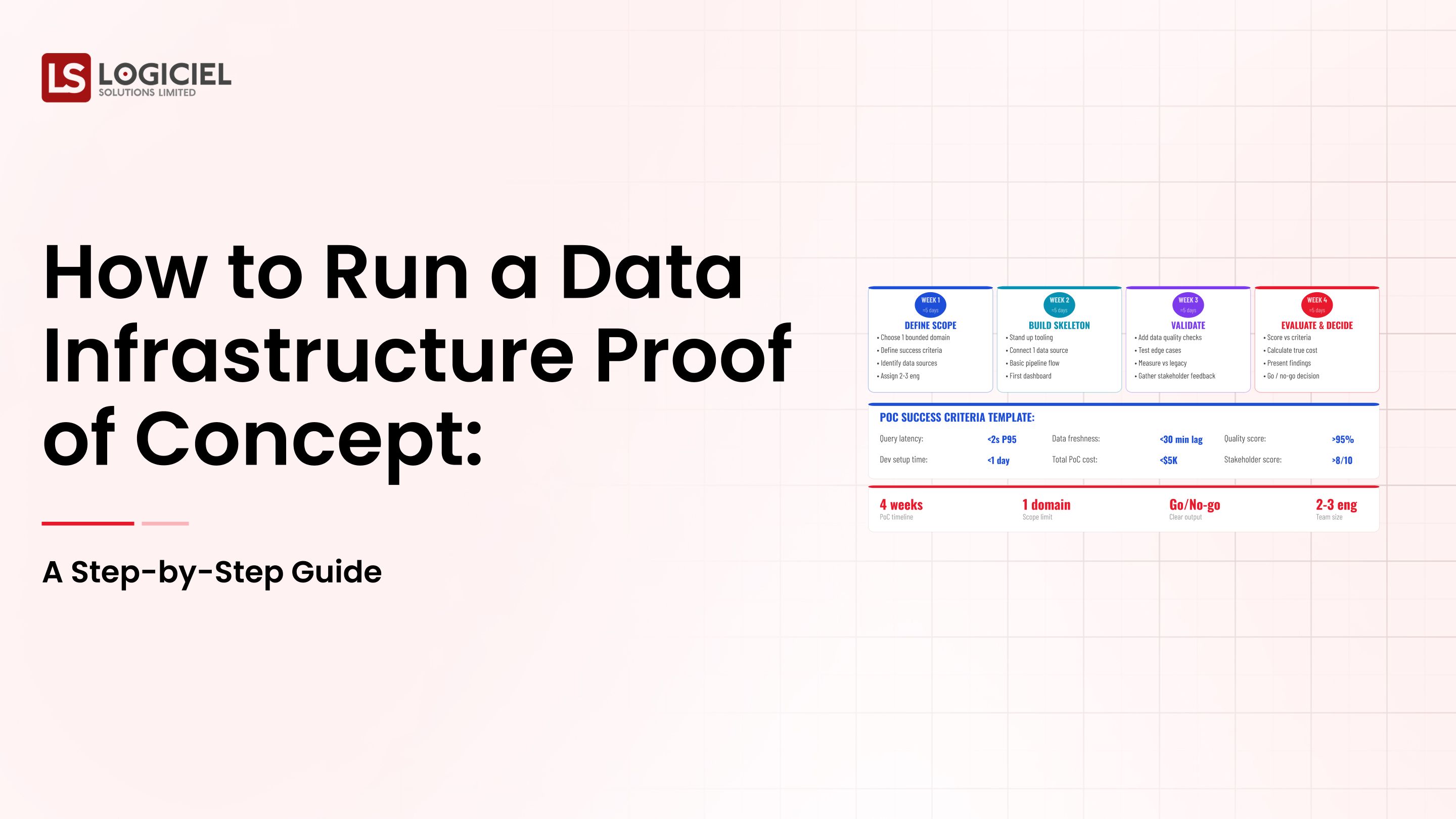

Section 6: Thoughtful Teams Are Now Taking Action

Most successful teams are already taking action.

1. Reviewing Current Systems

They have:

- Found potential areas for reductions in system usage

- Identified areas needing improvements to help reduce expenses

2. Commencing to Invest in Observability

They will:

- Track and report on pipeline health

- Measure freshness of data

- Lay groundwork for improving incident response

3. Beginning with AI in Mind from Day One

They have:

- Designed (or are now designing) their pipelines with AI/ML functions in mind

- Focus on producing/maintaining data consistency

4. Reducing Complexity

They will:

- Consolidate systems/tools used

- Simplify the overall system architecture being built

5. Aligning Teams

They have:

- Created a defined ownership model for the routing and /or systems they use

- Improve team collaboration by developing shared goals

Key Takeaway

Actions taken today will have a significant impact on how we develop the future.

AI – Powered Product Development Playbook

How AI-first startups build MVPs faster, ship quicker, & impress investors without big teams.

Call to Action

Tomorrow's Data Infrastructure and Data Analytics will no longer be defined as "More Tools."

They will be defined as "Better Solutions."

To give your teams the greatest probability of success by 2027, you must:

- Be built for AI from Day One

- Have a balance of Real-Time vs. Batch environments

- Be all about Simplicity

- Invest in Observability

Logiciel's team of AI-first engineering experts are your Resource to build Future-Ready Data Platforms that will keep pace with the growing needs of your business.

If you are beginning to think about growing your company, it may be time to start by determining how to develop your Data Infrastructure.

Find out how Logiciel's AI-first engineering experts can support your organization to truly develop a Data Platform that you will need toData Infrastructure and Data Analytics support your business in 2027 and beyond!

Frequently Asked Questions

What is Tomorrow's Data Infrastructure?

Tomorrow's Data Infrastructure will consist of ML ready architecture, real-time capabilities, strong data-level agreements and simplified workload configuration.

Why Do We Need a Machine Learning Ready Data Infrastructure?

The basis for any AI system is a foundation of consistent, reliable data. If you do not provide proper infrastructure, your data-analytics models will not provide value.

Should Every Team Have a Real-Time Data Infrastructure?

No; while real-time capabilities will have particular use cases; that does not mean they should be excluded in batch-based environments.

The Largest Single Trend Impacting Tomorrow's Data Infrastructure?

The single largest trend shaping the development and the future of data infrastructures is the increase in growth of AI-ready, observability-oriented and simplified architectures.