Every growing business reaches the same roadblock.

Initially, the data infrastructure is working properly. Dashboards stream data, and pipelines operate at full capacity; therefore, the teams have an accurate number of resources.

Then the business begins to expand.

- Min. Date sourced

- No. of Triggers

- Variation in Queries

- Expectation of Performance Increase

Suddenly things will begin to break.

Pipelining may disconnect without notice; the business will incur a higher cost of delivery; dashboards will lag in performance; affecting teams’ level of trust.

To a Data Engineering Lead, the issue is not just about Technology, this becomes a Business Bottleneck.

This guide contains seven of the most common data infrastructure challenges that growing teams face and how to resolve them through a system-wide approach.

Why your Data Infrastructure becomes a bottleneck when you scale

Systems built on speed, not scalability

Most teams start out with:

- Basic ETL Pipeline

- One Data Warehouse

- Limited or Non-existent Governance

That model generally works until:

- Ten times more data is collected

- Three teams start using the same data

- Real-time use cases develop

According to Gartner Research, poor Quality Data Costs the average company approximately $12.9 million per year.

Key learning point: Data infrastructure does not typically fail quickly. Data infrastructure usually degrades slowly over time, causing a business to hit a growth wall.

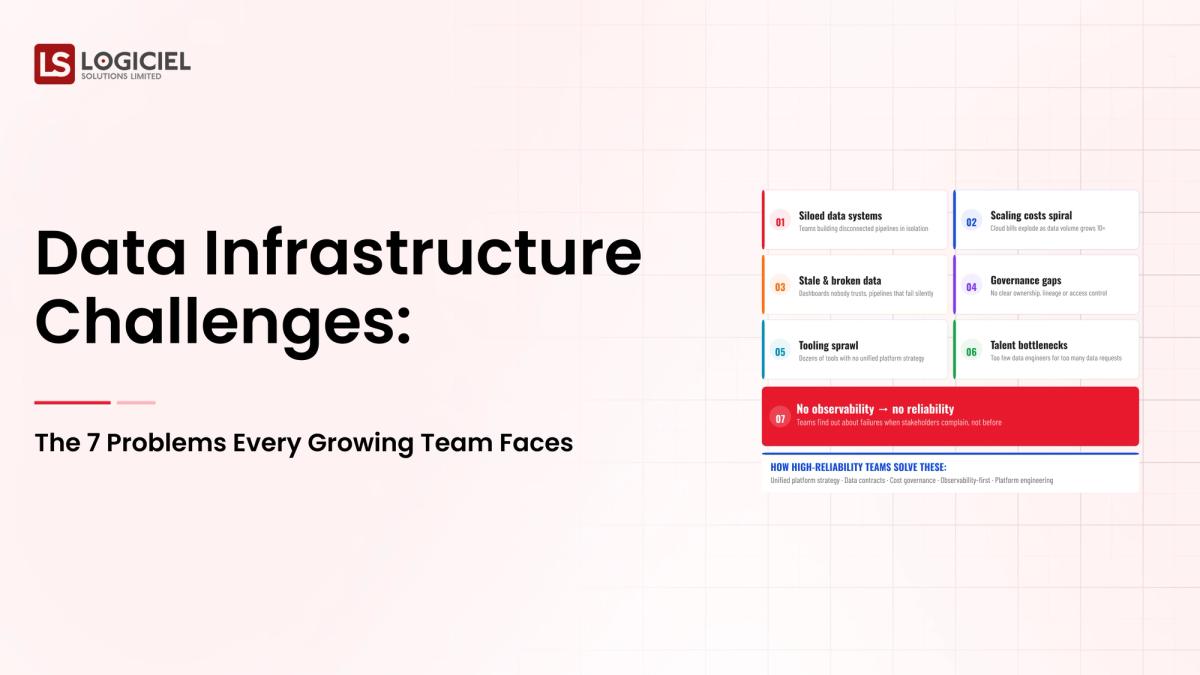

The Seven Data Infrastructure Challenges that Every Growing Team Faces

1. Disconnected Data Sources and Pipelining

Problems

When a business grows, its data will come from various places:

- SaaS Applications

- Internal Systems

- APIs

- External Providers

This results in no connection between Pipeline and Duplication of Logic. Fragmented data causes issues for organizations. The three main issues are:

- Different metrics for each team

- Duplicating the data process

- Extra maintenance to manage duplications

When you have more time to repair pipelines, you have less time to create new insights.

Remedy

- Build one central ingestion location

- Standardize ETL or ELT

- Use as many managed connectors as possible

Main point: Creating one layer of ingestion decreases the overall complexity of the data structure.

2. Poor Data Quality Contributes to Lack of Trust in the Data

The issue

The issue is when teams do not have faith in the data, they do not use the data.

Some common issues causing distrust are:

- Data missing

- Schemas inconsistent

- Data not updated on a timely basis

Importance

The quality of the data affects how teams cannot make sound decisions.

According to Deloitte, companies with an exceptional analytics governance program will achieve company goals twice as often when compared to other businesses.

Solution

- Implement data verification standards

- Define data ownership

- Utilize tools such as DBT to verify data

Takeaway: Dependability creates the foundation of a scalable data structure.

3. Rising Cost of Cloud Data Infrastructure (No Monitoring)

The issue

The issue is the cloud data infrastructure has significant growth in expenses as well.

Some typical expense drivers include:

- Unoptimized queries

- Over-provisioned compute

- Unused storage

Importance

Without the ability to see what is driving costs, it is impossible to monitor and manage the cloud data infrastructure.

If teams are not made aware of the issues driving expenses, their costs will often be unexpectedly high.

Solution

- Monitor expenses with dashboards

- Enhance query performance

- Use auto-scaling and resource isolation to optimize expenses

Takeaway: Managing expenses are built into the design when constructing the data infrastructure.

4. Real-Time Data Performance (No Capabilities)

The issue

The issue is batch processing can no longer provide the required amount of data to be processed.

Examples of modern requirements:

- Real-time dashboards

- Event-driven systems

- Artificial Intelligence applications

Importance

Delayed data results in delayed decision-making.

If an organization delays making decisions due to a lack of real-time data, that organization will be at a disadvantage to competitors due to the fast pace of business.

Introduction of streaming data pipelines, event-oriented architecture, number of batch processing plus real-time processing.

Key takeaway: Real-time capabilities are fast becoming the new norm for data infrastructure today.

5. Upscaling data infrastructure without breaking systems

The Issue

As additional people join in and employ the systems, the systems are placed under additional loads and become incapable of keeping pace with that demand.

Signs include:

- A length of time it has taken to execute queries

- Unpredictable data pipelines that may or may not work

- Frequent outages from the error of the service and the inability to stay on-line

The Importance

The problems of scalability will affect all the teams who work with and depend on the unavailability of a Data Infrastructure.

The Solution

- Use a Cloud-Native Architecture that leverages Scalable Resources

- Separate processing (computation) of data from storage of data

- Implement Workload Isolation principles

Key takeaway: Scalability must be incorporated into the Data Infrastructure solution and cannot be retrofitted after it has been built.

6. Datatangling governance and compliance challenges

The Problem

As more data becomes available, it is increasingly difficult to implement a governance framework.

Key areas include:

- Data Access Control

- Data Lineage

- Regulatory Compliance (example: PCI)

The Importance

Failure of the Data Infrastructure can produce massive security risks, compliance issues, and possible misutilization of the company’s Data Assets.

The Solution

- Create a clear set of governance policies for data

- Implement Role Based Access Control

- Use Data Management Cataloging Tools

Key takeaway: Clear Data Governance Policies and Procedures must be in place to create an Enterprise Grade Data Environment.

7. People & Complexity of Operations

The Problem

To operate in a modern, effective method, requires employees with varying levels of skillsets.

We need:

- Data Engineers

- Cloud Platforms

- DevOps

- Analytics

The Importance

The succeeding factor to implementing the Data Infrastructure may be finding and keeping employees with necessary skill sets.

The Solution

- Simplification of the underlying Architecture

- Utilize Managed Services for operating the Data Infrastructure

- Train and develop required employee skills on an ongoing basis

Key takeaway: Data Infrastructure simplification will make the operations easier.

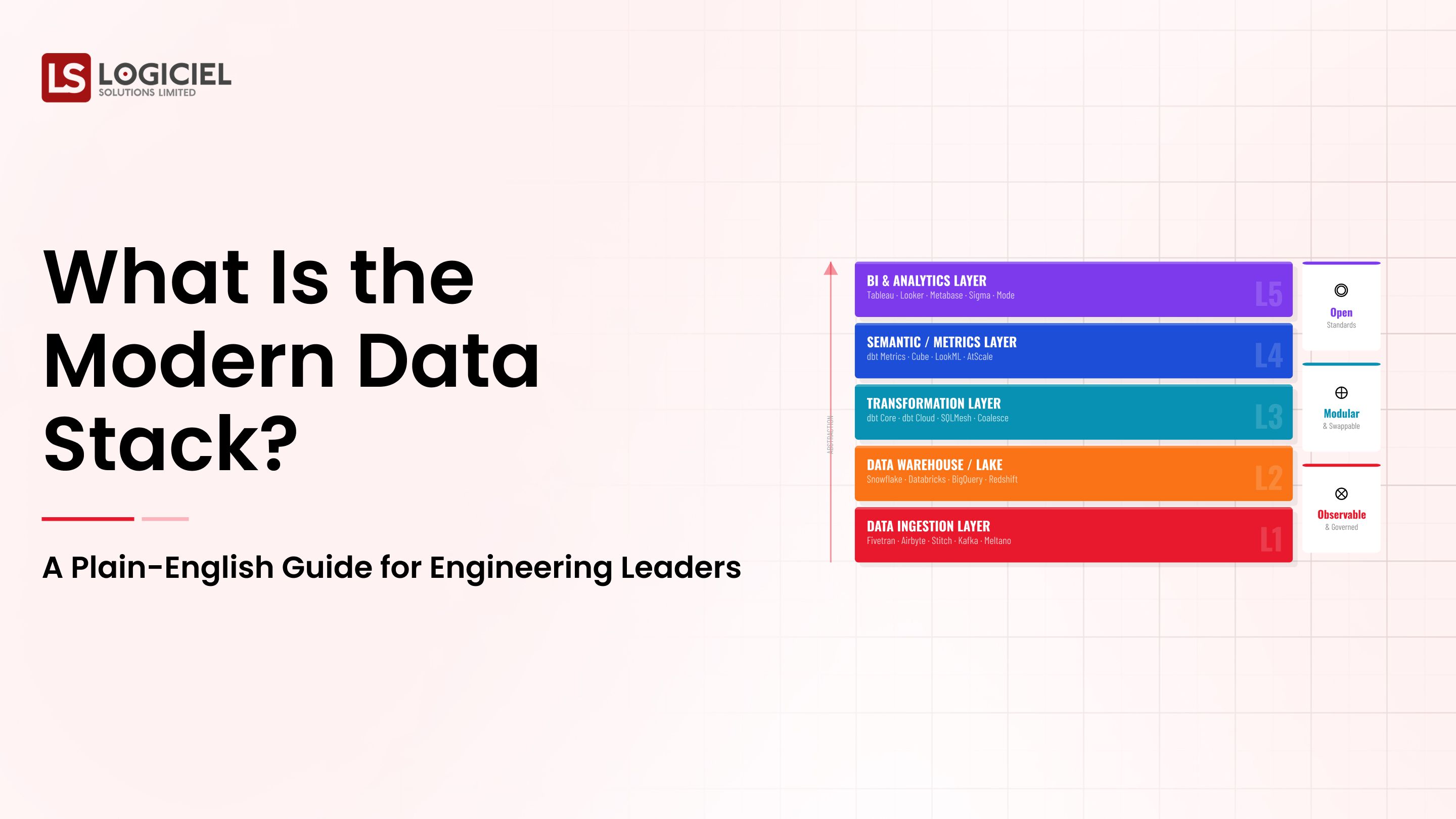

How Should a Modern Data Infrastructure Function?

A solid data infrastructure is defined as being:

- Expandable

- Dependable

- Cost efficient

- Governed

- Ready for AI

It consists of:

- Data ingestion workflows

- Cloud data warehouse

- Transformation layer

- Business Intelligence (BI) and Artificial Intelligence (AI) tools

It closely follows the methodology of the modern data stack.

How do I ensure my data infrastructure is ready for the future?

Data engineering managers can work to avoid these issues by focusing on:

1. Select Systems Before Selecting Tools

Pick architecture first, tools second.

2. Observability and Monitoring

Keep track of the health, cost, and performance of your workflow system.

3. Modular Architecture

Design systems that will be able to develop over a long period.

4. Ready for AI

Make sure your infrastructure gives your organization the ability to do advanced analysis.

Real World Examples: Repairing a Company's Data Infrastructure at Scale

One increasing SaaS company encountered:

- System failures

- Cost increases

- Slow dashboards

Logiciel was able to help with:

- Rethinking the data infrastructure

- Create controls for costs

- Advancing system performance

Results

- 40 percent faster queries

- 30 percent drop in costs

- Reliable data

Frequently Asked Questions

What is data infrastructure?

What are some examples of data infrastructure challenges?

How do you effectively scale data infrastructure?

What is the relationship of data infrastructure to AI?

How can companies reduce their data infrastructure costs?

Final Thoughts: Data Infrastructure as a Competitive Advantage

All growing teams will experience challenges with their data infrastructures.

The key difference is how they respond.

Reactive teams wait until an issue occurs to fix, whereas high performing teams develop systems to prevent problems.

It is not enough to fix data pipeline issues.

Moreover, the goal of creating a data infrastructure that will:

- Scale with business growth

- Support AI-driven decisions

- Produce reliable insights

Logiciel Point of View

At Logiciel Solutions, we partner with data engineering leaders to transition their teams from reacting to solving data infrastructure issues proactively. Our AI-first engineering teams build scalable, dependable, and optimized data infrastructures.

We do not just fix data issues; we develop systems capable of preventing them.

Engage Logiciel to help you create the data infrastructure to support the next phase of your growth. Schedule a call.