All data teams build pipelines.

However, not every team builds a reliable pipeline.

As a Data Engineering Lead, you’ve likely seen this gap:

- Pipelines that fail silently

- Delays to downstream analytics and AI workflows

- Increasing operational complexity as systems grow

The key differentiator between mid-level teams and high reliability teams is not tool selection; it is in how they build, run, and adapt their data pipelines using a systematic approach.

In this document, we will detail the elements that differentiate the highest performing teams:

- What is a data pipeline and how do they work?

- The architecture of a modern data pipeline

- Best Practices for reliability, scalability and automation in data processing

- The tools and frameworks used by top organizations

What Is A Data Pipeline?

A data pipeline refers to a mechanism that transports the data from the data source(s) to the final storage location(s) while making transformations to the data along that journey.

The four main stages of a data pipeline:

- Source Data

- Serialize Data

- Store Data

- Deliver Data

How do we use data pipelines?

The core foundation of the following processes uses a data pipeline:

- Analytics

- Machine Learning

- Real-Time Applications

Where does a data pipeline fit into ETL?

Traditionally, the ETL (Extract, Transform and Load) model of data processing has been used; on the other hand, the ELT (Extract, Load, and Transform) model is now preferred, whereby data will be transformed after it has been loaded into the destination.

How Data Pipelines Work

Typical to an efficient data pipeline, the data movement will take place in a stepwise fashion across several stages.

Data Pipeline Stages: The Typical Lifecycle

1. Data Ingestion

Collecting data from a variety of data sources.

2. Data Processing

Cleaning, Transforming, Enriching.

3. Data Storage (or Serving)

Delivering to systems for consumption.

These three stages form the basic lifecycle of any data pipeline.

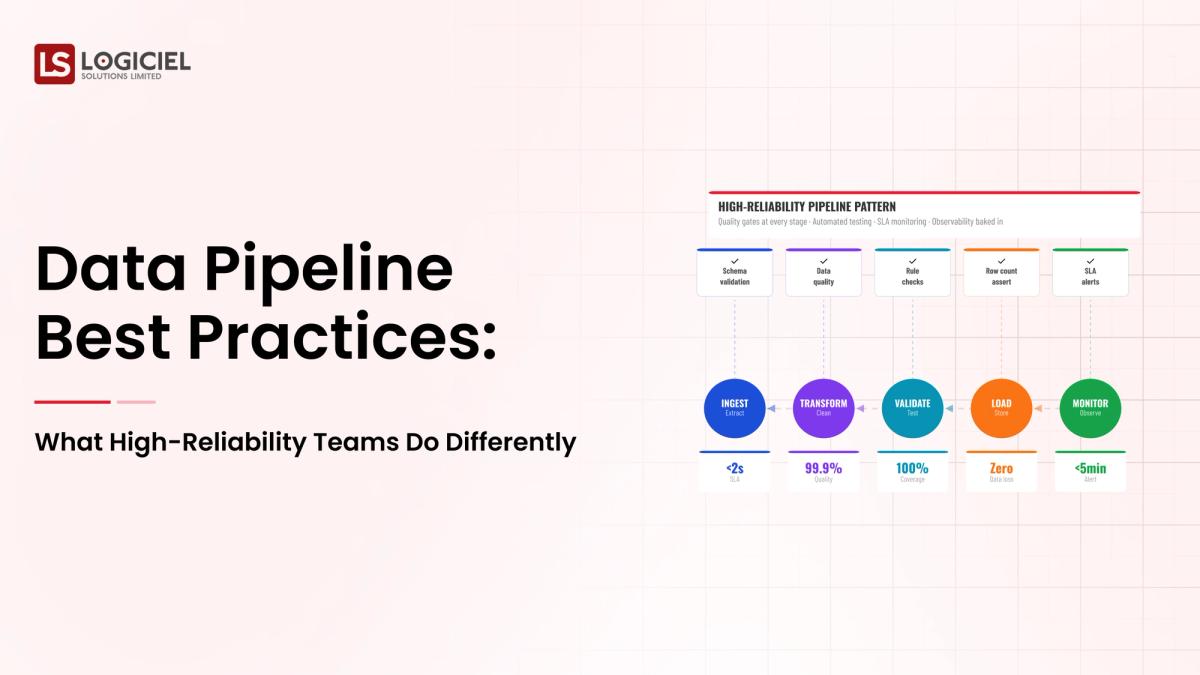

Considerations for Designing High-Reliability Data Pipelines

They put a strong focus on:

- Observability

- Fault Tolerance

- Automation

- Scalability

The Key Principles of a Data Pipeline

A Data Pipeline is More Than Just Code.

A data pipeline system is designed for the production environment and must meet Service Level Agreements (SLAs).

Best Practices for Data Pipeline Architectural Design

1. Design For Failures

Designing for failure is a necessary evil.

Pipelines should be designed to automatically:

- Retry

- Handle Partial Failures

- Maintain Data Consistency

2. Modular Architecture

Creating pipelines as reusable components improves:

- Maintainability

- Scalability

3. Separation of Compute and Storage

Modern architectural designs decouple the layers that provide compute and storage creating a flexible architecture.

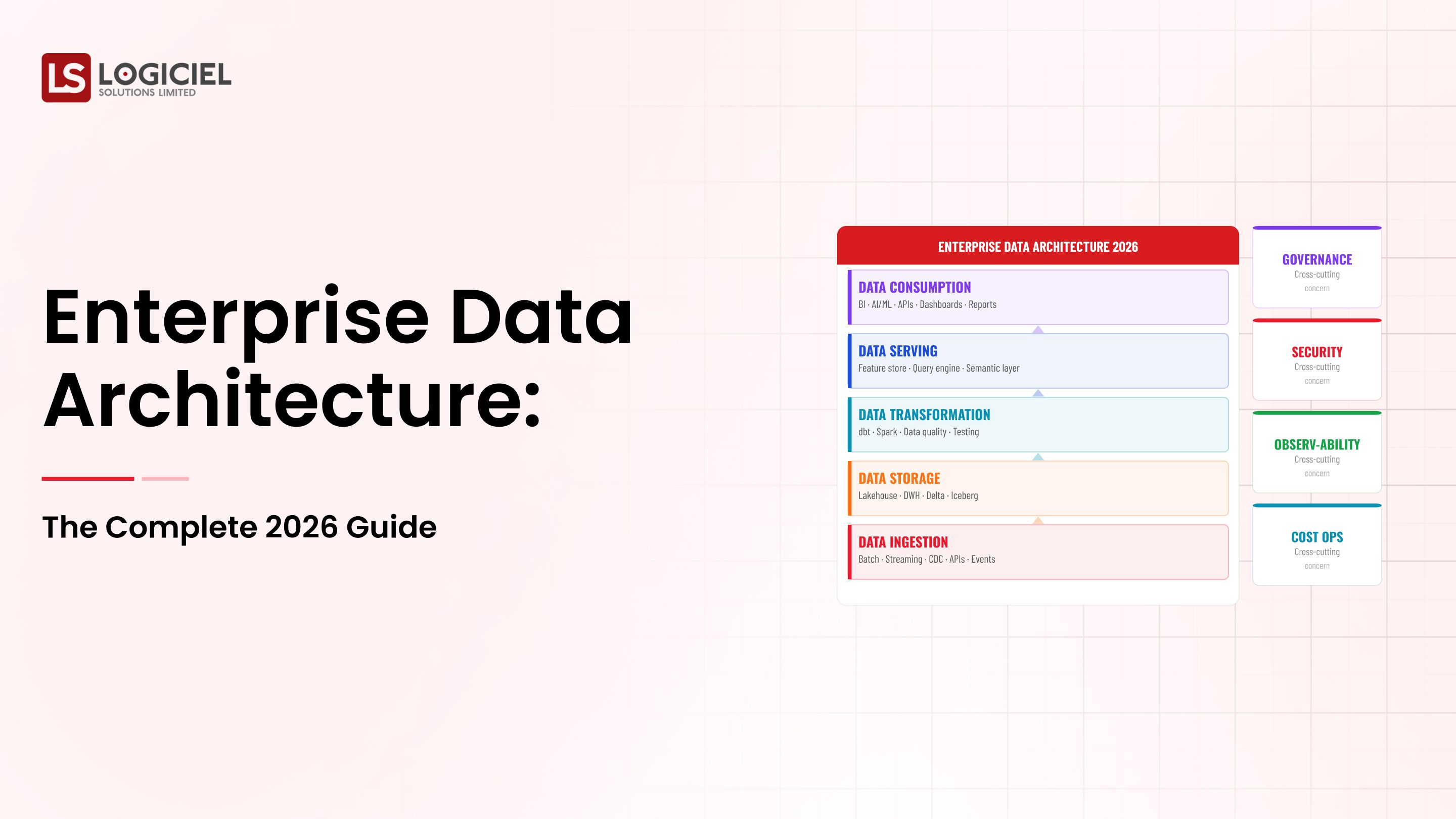

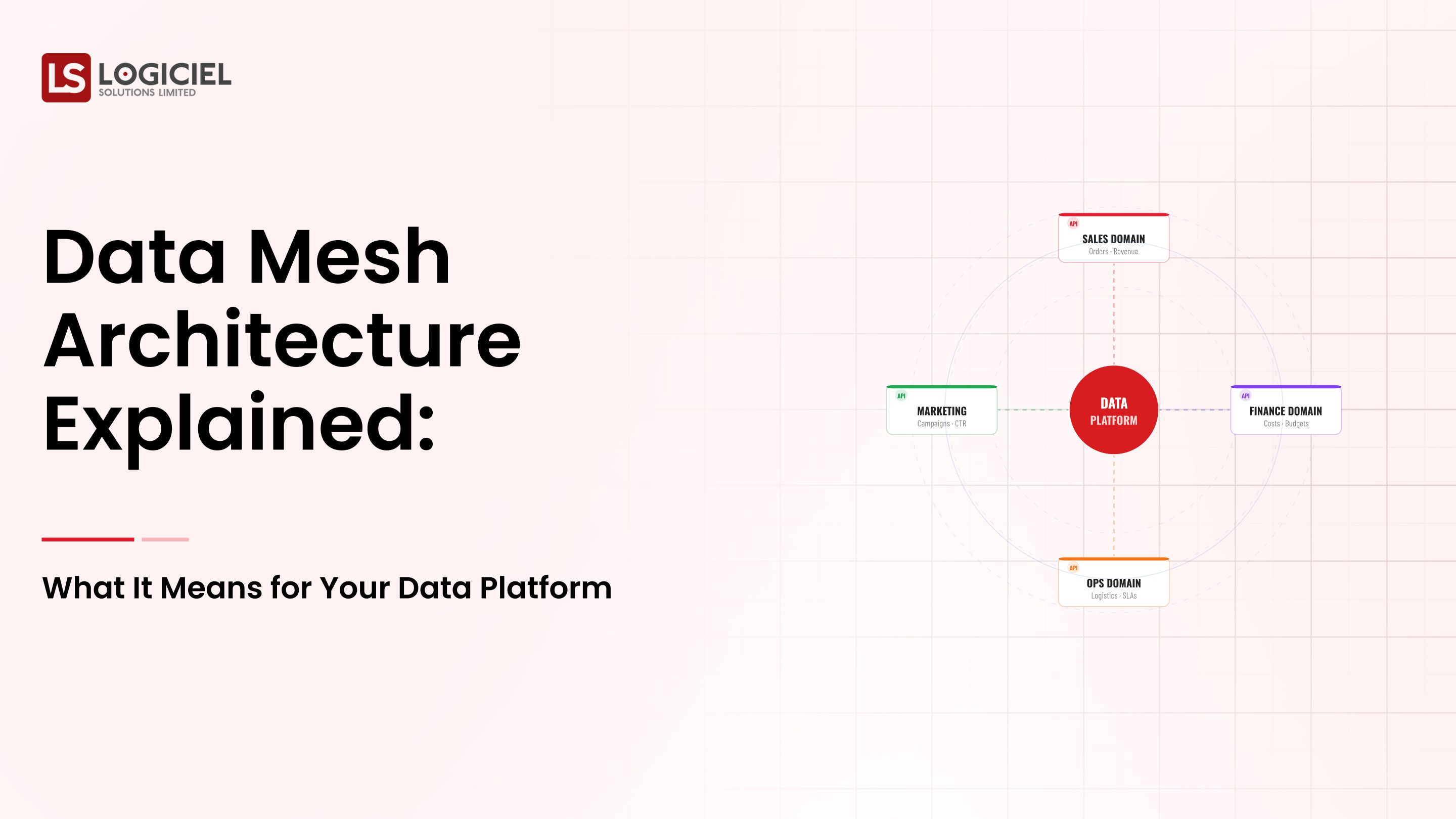

What is Data Pipeline Architecture

Data pipeline architecture provides an operational overview of how data flows through your organization or across all your analytical systems, toolsets, and layers.

Data Pipeline Orchestration / Automation

Orchestration is responsible for ensuring that a pipeline runs in a manner that its various components run in the correct order (flow of execution)

How to automate workflows in a data pipeline with popular tools.

Use orchestration tools such as:

- Apache Airflow

- Prefect

- Dagster

Advantages of automation.

- Reduced manual intervention

- Faster execution

- More reliable

Choosing the right tools for building your data pipeline

Typical categories for these tools include:

- Data ingestion tools

- Processing engines

- Orchestration platforms

The best cloud platforms for building a scalable data pipeline are:

- AWS - (Glue, Lambda, and Step Functions)

- Azure - (Data Factory and Synapse)

- Google Cloud - (Dataflow and Composer)

Open-source Comparison of Data Pipeline Orchestration Tool

Tool - Strength

- Airflow- Has a mature ecosystem.

- Prefect- Has a user-friendly design.

- Dagster- Has strong lineage tracking capabilities.

Differences between real-time vs. batch data pipelines

Difference of Features:

Feature Name- Batch Data vs. Real-Time Data

- Latency - High vs low.

- Complexity- Low ner vs high

- Use Cases- Reporting vs streaming analytics.

Examples of when to use Real-Time Data Pipelines

- Fraud detection

- System monitoring

- Personalization

Monitoring & Observability

Top managed services that are available to monitor Real-time Data Pipelines

- Datadog

- Prometheus

- Cloud native monitoring systems

How High-Reliability Teams Differ From Others

High-reliability teams make observability a top priority.

Key Metrics:

- Data Pipeline Latency

- Failure Rate

- Data Freshness.

Data Quality and Governance

Why Is This Important?

If companies use bad data, customers will lose trust.

Best Practices In Data Quality Go As Follows:

- Validate Data At All Steps

- Track Data Lineage

- Require Schemas

Key Insight

Reliability Is NOT JUST SYSTEM UPTIME. Reliability IS THE CORRECTNESS OF THE DATA.

Scaling Up Data Pipelines

Challenges

- Increasing amount of data

- Complex transformations that require massive processing

- Lack of resources to support processing of large amounts of data

Solutions

- Utilizing distributed processing tools (Spark, Flink)

- Implementing auto-scaling infrastructure

- Implementing partitioning strategies to make data easier to process

Cost-Optimization

Types of common cost drivers

- Compute usage

- Data transfer costs

- Storage costs

Optimization strategies for reducing costs

- Choose more efficient data formats (e.g., Parquet)

- Optimize scheduling so that it runs as efficiently as possible

- Reduce unnecessary processing of data

Data Pipeline Security

Key security considerations for data pipelines

- Access Control

- Encryption

- Monitoring

Best practices for securing your Data Pipeline:

- Implement Role-Based Access

- Encrypt data both when in use and when at rest

- Audit your Data Pipeline activity regularly

Common mistakes made by teams who build Data Pipelines

1. Over-engineering

Complex systems can increase risk of failure.

2. Lack of monitoring

If there is no visibility into the pipeline there will be no way to detect issues until they occur.

3. Ignorance of data quality

Data quality is critical to creating valuable insights. Poor data quality will create unreliable insights.

4. Poor documentation

Poor documentation can create severe challenges in maintaining and modifying your pipeline over time.

Future of the Data Pipeline

- Pipeline optimization using AI technology

- Real-time-first Data Pipeline architecture methodologies

- Unified Data Platforms as a new approach to managing data across the enterprise.

Is ETL dead?

No – ETL is evolving to become more flexible and scalable.

Frequently Asked Questions

What is a data pipeline and what makes it an important component of your overall Data Platform?

How do I build a data pipeline?

What are the primary stages of a Data Pipeline?

What is Data Pipeline orchestration?

What tools can I use to build my Data Pipelines?

Conclusion: Reliability Is a Design Decision

High-performing engineering teams do not build pipelines that "usually work."

They build them to be:

- Observable

- Fault-tolerant

- Scalable

- Automated

Your Data Pipeline is the foundation upon which you will build your Data Platform.

If your Data Pipeline fails, then everything downstream from that point will also fail.

At Logiciel Solutions, we help engineering teams to create their AI-first Data Platform and build the reliable and scalable Data Pipelines that will provide the foundation for delivering consistent results over time. That includes everything from the design of the architecture to the implementation of the pipeline.

If your Data Pipeline is becoming a bottleneck, it is time to rethink how you build it.