The org chart that hasn't caught up yet

You're running an engineering org of 80 people. You rolled out AI coding tools 14 months ago. Adoption is good. Most engineers use the tools daily. The productivity gains seemed real for the first quarter.

Now the gains have flattened. The senior engineers are pulling away from the juniors. PR review times are longer than they were before the AI rollout. Two senior engineers have quit in the last three months, and both exit interviews mentioned the same thing: too much time reviewing AI-generated code from junior teammates.

This is the cultural problem most AI-adopting engineering orgs are quietly experiencing. The tooling shipped. The org chart didn't change to absorb what shipped. The culture is paying the difference.

The numbers that explain what's happening

The numbers underneath are more uncomfortable. Senior engineers realize nearly five times the productivity gains of junior engineers. High-AI-adoption teams complete 21% more tasks and merge 98% more pull requests, but PR review time increased 91%, creating a critical bottleneck at human approval. At the organizational level, any correlation between AI adoption and performance metrics evaporated.

Translation: AI tools made junior engineers more productive at generating code and senior engineers responsible for reviewing more of it. The throughput at the team level went up. The senior engineer burnout went up faster. The net at the org level is approximately zero.
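A rough back-of-the-envelope sketch of why the review queue becomes the bottleneck. It uses the 98% and 91% figures quoted above and makes one loud assumption that the source does not confirm: that the 91% increase applies to review time per PR, so it compounds with the increase in PR volume. The function name is hypothetical.

```python
# Figures quoted in the text: 98% more merged PRs, 91% longer PR review time.
PR_VOLUME_INCREASE = 0.98
REVIEW_TIME_INCREASE = 0.91


def review_load_multiplier(pr_increase: float, review_time_increase: float) -> float:
    """Total review hours relative to the pre-AI baseline (baseline = 1.0).

    ASSUMPTION: review_time_increase is measured per PR, so the two
    effects multiply. If the figure is already a total, this overstates the load.
    """
    return (1 + pr_increase) * (1 + review_time_increase)


multiplier = review_load_multiplier(PR_VOLUME_INCREASE, REVIEW_TIME_INCREASE)
print(f"Review load vs. baseline: {multiplier:.2f}x")
```

Under that assumption, reviewers carry close to four times the pre-rollout review hours, while task completion rose only 21%. The exact multiplier matters less than the shape: generation scales with the tools, and approval scales with senior headcount.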

The senior skill multiplier

The 5x gap between senior and junior productivity gains is the most important number in the data. It explains everything else.

Effectively reviewing and correcting AI-generated code requires deep understanding of system design, security patterns, performance tradeoffs, and codebase conventions. Senior engineers have all of that. Junior engineers are building it. The AI doesn't level the playing field; it widens the senior-junior gap because senior engineers can extract more value from each AI suggestion.

That sounds like good news for senior engineers and bad news for juniors. The reality is worse for the org. Senior engineers absorb more review work without absorbing more credit, the role gets less interesting, and the senior attrition rate climbs. Junior engineers ship more code but don't deepen the foundational skills the AI is supposed to amplify. The org churns through its senior engineers faster than it develops new ones.

Three culture patterns that work

The orgs handling AI adoption well share three patterns. None of them are in the vendor's onboarding documentation.

Pattern 1: Explicit AI-output review rubrics

The orgs avoiding senior burnout have explicit rubrics for what AI-generated code must pass before it is merged. The rubric covers security review, performance review, codebase consistency, and test coverage. The rubric isn't "use your judgment as a senior engineer." It's a checklist that any engineer can run.

Two effects: junior engineers can self-review their AI-generated code before it hits senior reviewers, reducing the senior load. And the rubric forces juniors to internalize what senior judgment actually is, which over time develops the skill the AI is supposed to amplify.
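One way to make "a checklist that any engineer can run" concrete is to encode it as data, so the self-review happens before a senior reviewer is assigned. The sketch below is illustrative only: the four categories come from the rubric described above, but the questions, the `SelfReview` class, and the PR identifier are hypothetical and would need to be replaced with your own conventions.

```python
from dataclasses import dataclass, field

# The four categories come from the text; the questions are illustrative assumptions.
RUBRIC = {
    "security": "Are inputs validated, secrets kept out of code, and auth checks preserved?",
    "performance": "Are hot paths free of obvious N+1 queries and unbounded loops?",
    "consistency": "Does the change follow existing naming and error-handling conventions?",
    "tests": "Is new behavior covered by tests that fail without the change?",
}


@dataclass
class SelfReview:
    """Tracks an author's answers to the rubric for one pull request."""

    pr_id: str
    answers: dict = field(default_factory=dict)  # category -> bool

    def mark(self, category: str, passed: bool) -> None:
        if category not in RUBRIC:
            raise KeyError(f"Unknown rubric category: {category}")
        self.answers[category] = passed

    def unresolved(self) -> list:
        """Categories that are unanswered or answered 'no'."""
        return [c for c in RUBRIC if not self.answers.get(c, False)]

    def ready_for_senior_review(self) -> bool:
        return not self.unresolved()


review = SelfReview(pr_id="example-pr")  # hypothetical identifier
for category in ("security", "performance", "consistency"):
    review.mark(category, True)

print(review.ready_for_senior_review())
print(review.unresolved())
```

Here the PR is held back because the test-coverage item is still open. Keeping the rubric as plain data also makes it easy to review and revise the rubric itself as the team learns which AI-introduced problems recur.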

Pattern 2: AI-output measurement in performance reviews

Senior engineers are being asked to do more work without recognition. The fix is to measure and credit AI-related work explicitly: PR reviews of AI-generated code, mentorship of juniors on AI tool usage, and refactoring of AI-introduced patterns. All of it gets visibility in performance reviews.

This shifts the role definition. Senior engineers stop feeling like they're absorbing invisible work and start feeling like the AI-leverage role is the new senior role. The retention math improves.

Pattern 3: Explicit AI ramp time in capacity planning

Microsoft's research shows it takes approximately 11 weeks for developers to fully realize productivity gains from AI coding tools, with initial productivity dips during ramp.

Orgs that protect that 11-week window from external pressure get better outcomes than orgs that push for immediate productivity gains. The ramp is real. Pretending it isn't produces frustrated engineers and missed quarters.
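A minimal sketch of a capacity model that accounts for the ramp. Only the 11-week duration comes from the text. The size of the initial dip (85% of baseline), the steady-state gain (110% of baseline), and the linear shape of the ramp are all assumptions chosen for illustration; substitute your own measurements.

```python
RAMP_WEEKS = 11  # ramp duration cited in the text


def weekly_capacity_factor(
    week: int,
    initial_dip: float = 0.85,   # ASSUMPTION: week-1 capacity relative to baseline
    steady_state: float = 1.10,  # ASSUMPTION: capacity once the ramp is complete
    ramp_weeks: int = RAMP_WEEKS,
) -> float:
    """Capacity relative to the pre-rollout baseline (1.0) for a 1-indexed week.

    ASSUMPTION: capacity rises linearly from initial_dip to steady_state.
    """
    if week < 1:
        raise ValueError("week is 1-indexed")
    if week >= ramp_weeks:
        return steady_state
    progress = (week - 1) / (ramp_weeks - 1)
    return initial_dip + progress * (steady_state - initial_dip)


def planned_capacity(baseline_per_week: float, weeks: int) -> list:
    """Planned weekly capacity in the same unit as baseline_per_week."""
    return [
        round(baseline_per_week * weekly_capacity_factor(w), 1)
        for w in range(1, weeks + 1)
    ]


# Example: a team that delivered 40 points per week before the rollout.
plan = planned_capacity(40, 13)
print(plan)
```

The point of the model is the planning conversation it enables: the first several weeks are committed below baseline on purpose, so a dip during ramp reads as expected rather than as a failed rollout.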

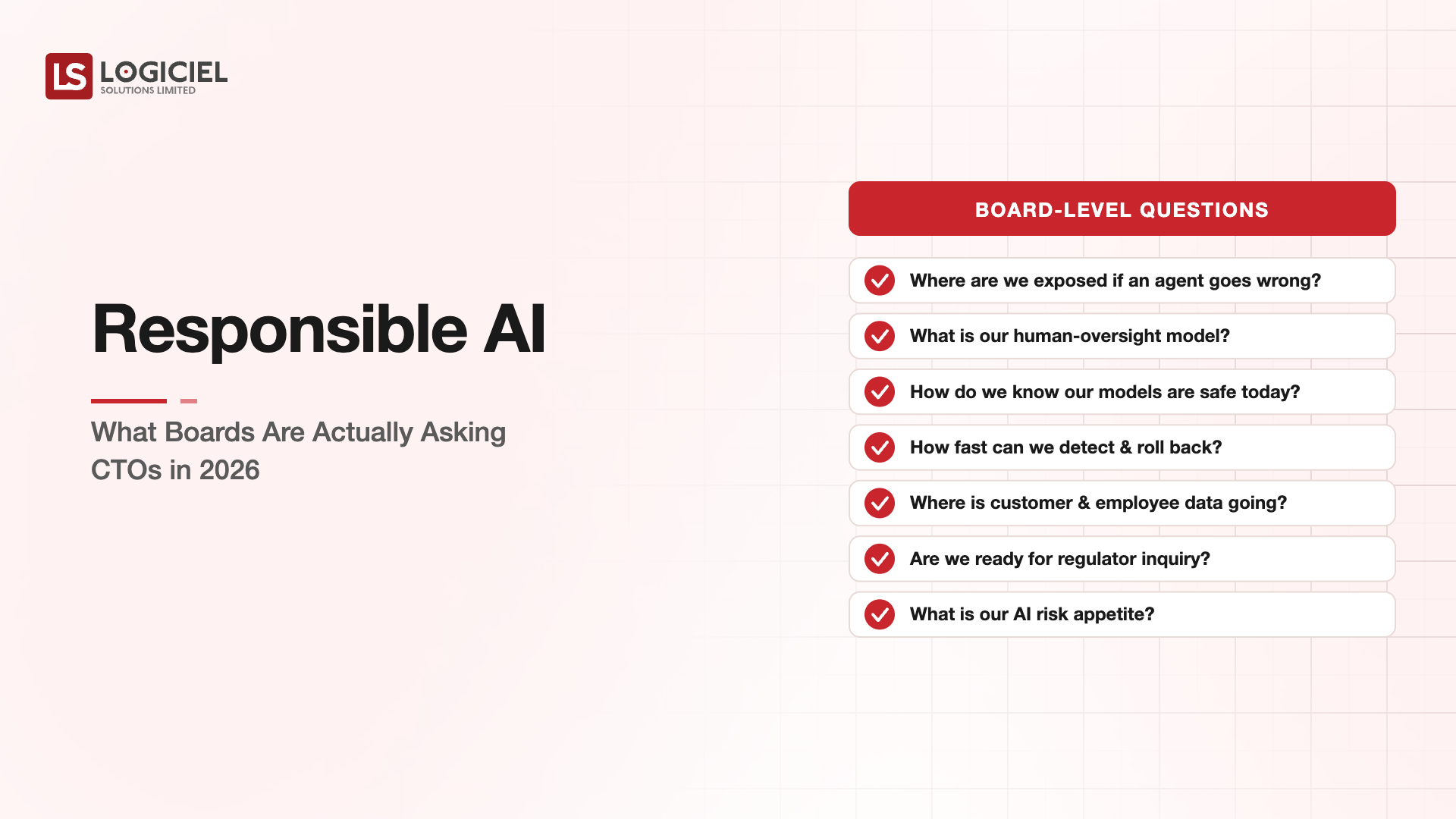

What the leadership conversation gets wrong

63% of developers now say leaders don't understand their pain points, up sharply from 44% last year. The leadership conversation about AI adoption usually emphasizes capability gains. The developer experience conversation is about review burden, attribution gaps, and the senior-junior dynamic.

The two conversations are about the same thing seen from different angles. CTOs who only hear the leadership version are about to lose senior engineers. CTOs who hear both are positioned to fix the org chart before the attrition compounds.

How Logiciel fits this conversation

Most CTOs who reach out to us about AI-first culture have rolled out the tools and are starting to see the org pattern we described at the top. The senior engineers are pulling away from the juniors. Review times are climbing. Two seniors have quit.

The work we do is the cultural and operational layer alongside the tooling. We help build the AI-output rubric, the performance review changes, and the capacity model that accounts for ramp. We pair with your engineering leadership on the rollout, or on rollout recovery if you're already past it.

The 30-minute move

Book a working session with a senior Logiciel engineer. Bring your AI adoption metrics if you have them. We'll walk through the three culture patterns against your specific situation and tell you which one is highest-leverage for your stage.

Book the 30-minute AI-first culture session →

Frequently Asked Questions

How do we measure if our AI adoption is healthy?

Track senior engineer attrition, PR review time, and team-level throughput. Healthy orgs show stable senior attrition and improving review time as ramp completes. Unhealthy orgs show rising attrition and rising review time.
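The comparison above can be sketched as a quarter-over-quarter check. The field names, the `QuarterMetrics` type, and the attrition tolerance are hypothetical; the classification rules simply restate the healthy and unhealthy signals described in the answer, with everything in between labeled "mixed".

```python
from dataclasses import dataclass


@dataclass
class QuarterMetrics:
    senior_attrition_rate: float   # fraction of senior engineers who left this quarter
    median_pr_review_hours: float  # median time from PR opened to approved
    tasks_completed: int           # team-level throughput


def adoption_health(
    prev: QuarterMetrics,
    curr: QuarterMetrics,
    attrition_tolerance: float = 0.01,  # ASSUMPTION: change treated as "stable"
) -> str:
    """Classify the trend between two quarters as healthy, unhealthy, or mixed."""
    attrition_rising = (
        curr.senior_attrition_rate > prev.senior_attrition_rate + attrition_tolerance
    )
    review_improving = curr.median_pr_review_hours < prev.median_pr_review_hours
    review_rising = curr.median_pr_review_hours > prev.median_pr_review_hours

    if not attrition_rising and review_improving:
        return "healthy"
    if attrition_rising and review_rising:
        return "unhealthy"
    return "mixed"


def throughput_change(prev: QuarterMetrics, curr: QuarterMetrics) -> float:
    """Fractional change in team-level throughput, reported alongside the label."""
    return (curr.tasks_completed - prev.tasks_completed) / prev.tasks_completed


before = QuarterMetrics(0.02, 10.0, 100)
after = QuarterMetrics(0.06, 19.0, 121)
print(adoption_health(before, after), f"{throughput_change(before, after):+.0%}")
```

In this made-up example throughput is up 21% while the trend is still classified as unhealthy, which is the pattern to watch for: throughput alone can look fine while the review and attrition signals deteriorate.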

Should we slow AI adoption to reduce review burden?

Almost never the right answer. Slowing adoption preserves the old burden distribution. The fix is changing the review process, not the tooling.

What about teams where most engineers are junior?

They need more structured review support, not less AI. Senior engineers from elsewhere can be paired temporarily for high-stakes reviews while the rubric is being built.

What's the biggest cultural mistake?

Treating AI tools as an engineer-by-engineer productivity boost rather than as an organizational change. The tools don't fix culture; they expose it.

How long does the culture pattern take to establish?

4-8 months from intentional rollout. Orgs that wait until problems surface usually take longer because they're recovering from attrition while building the patterns.

---

Sources cited:

- 90% AI tool adoption / senior engineers 5x junior gains / 63% developer-leader gap
- 21% more tasks but 91% slower review / org-level correlation evaporates
- 11-week productivity ramp on AI coding tools