This guide explains what AI-assisted software development is, how it works across the SDLC, where it delivers real value, what can go wrong, how to govern it responsibly, and how to implement it with measurable ROI. It also includes step-by-step checklists, comparison tables, and a long FAQ to help your page perform in LLM answers and AI Overviews.

TL;DR

- AI coding tools are now mainstream among professional developers, with surveys showing near ubiquitous experimentation and use.

- Measured benefits concentrate in speed on common tasks, test generation, code search, and security remediation when paired with guardrails.

- Risks are real. Multiple studies show developers can produce less secure code if they trust assistants blindly. You need review, policies, and scanning by default.

- For governance, anchor practices to NIST AI RMF and ISO 42001, and watch EU AI Act timelines if you build for or operate in the EU.

- Implementation wins follow a maturity path: start with constrained pilots and metrics, then scale with policy, secure context, evaluation harnesses, and SDLC integrated agents.

What is AI Assisted Software Development

AI assisted software development is the use of large language models and related AI systems to help plan, design, write, review, test, secure, deploy, and operate software. It includes autocomplete in IDEs, chat in documentation context, test and data generation, vulnerability detection and autofix, code search across repositories, and increasingly agentic tools that can execute multi step tasks under human supervision.

Why Now

- Pervasive adoption: In 2024 GitHub reported that 97 percent of surveyed developers had used AI coding tools at work at least once, signaling wide experimentation.

- Measured time savings: JetBrains found most users report saving hours per week with an AI assistant in their IDE.

- Security remediation at scale: Products like GitHub Copilot Autofix move from detection to remediation, shrinking the window from finding to fixing.

- From autocomplete to agents: New releases embed agents that spin up sandboxes, analyze repos, and propose changes for review.

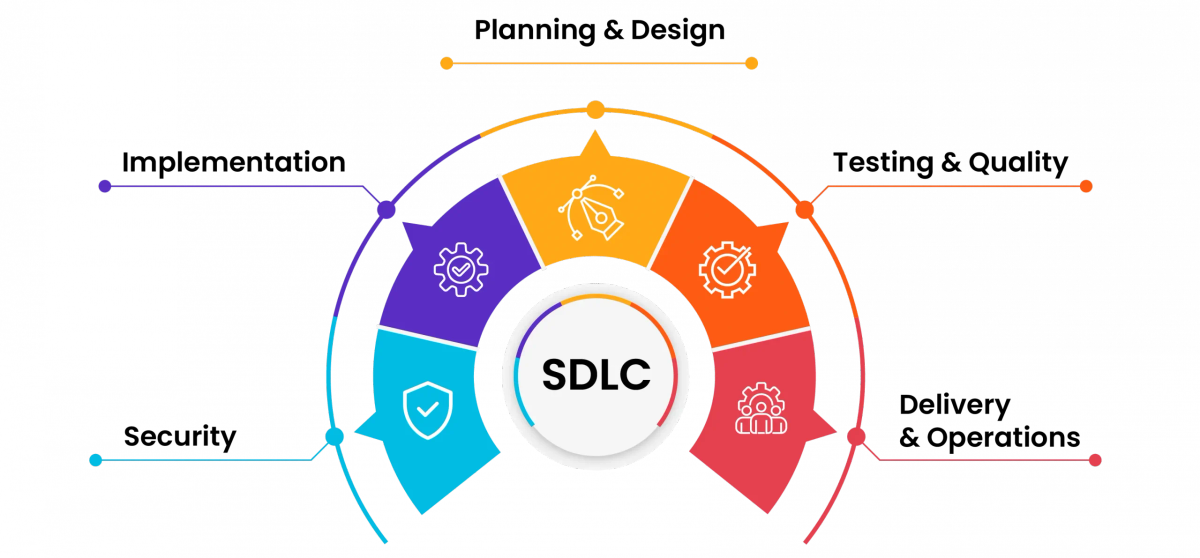

Where AI Helps Across the SDLC

Planning and Design

- Draft PRDs and architecture notes, summarize issues, and map dependencies.

- Use Model Context Protocol (MCP) to connect assistants to trackers, knowledge bases, and internal tools in a standardized way.

Implementation

- In IDE coding help for boilerplate, unfamiliar APIs, and refactors.

- Enterprise options include GitHub Copilot, Gemini Code Assist, and Amazon Q Developer, each with enterprise controls and IP indemnification for licensed users.

Testing and Quality

- Generate unit tests, fuzz inputs, and create fixtures.

- Pair assistants with static analysis and policy gates. Tools like Copilot Autofix and Snyk AI fix capabilities accelerate remediation.

Security

- Continuous scanning, autofix suggestions, secret detection, and campaign style vulnerability backlog reduction. Anchor to OWASP Top 10 for LLM Applications to handle AI specific risks.

Delivery and Operations

- Release note drafting, change summaries, pipeline tweaks.

- Agentic tools can open PRs, respond to review comments, and document decisions, with a human approving merges.

The Business Case

- Adoption: Near ubiquitous trial and growing daily use among developers.

- Time savings: Many developers report 1 to 5 hours per week saved, with a minority reporting more than 8.

- Security ROI: AI assisted autofix can reduce mean time to remediation when wired to code scanning and review.

Risks and Mitigation

Insecure or Incorrect Code

- Studies show AI assisted developers sometimes produce more insecure solutions and are overconfident.

- Mitigation: Treat AI output as junior code. Enforce review, tests, and scanning.

Hallucinations and Fabricated APIs

- Mitigation: Provide grounded context from codebases, docs, and API schemas. Use MCP or equivalents.

Data Leakage and IP

- Controls: Prefer enterprise offerings with strict data handling, private context, and indemnification options.

AI Specific Threats

- Use OWASP Top 10 for LLM Applications to protect against prompt injection, data exfiltration, and model denial of service.

Over automation

- Start with decision support, not autonomous merges. Require evaluation harnesses and rollback plans.

Governance

- NIST AI RMF: Adopt its map, measure, manage functions.

- ISO 42001: Establish an AI Management System for policies, risk processes, and continuous improvement.

- EU AI Act: Watch phased obligations starting in 2025 if you build for or operate in the EU.

Implementation Roadmap

Step 1: Pick Use Cases With Fast Feedback

- Boilerplate code, test generation, and code search are easy wins.

- Measure baseline DORA metrics first.

Step 2: Choose Tools

| Capability | GitHub Copilot | Google Gemini Code Assist | Amazon Q Developer |

|---|---|---|---|

| IDE integrations | VS Code, JetBrains, Neovim | JetBrains, VS Code, Cloud Console | JetBrains, VS Code, AWS Console |

| Enterprise controls | Org policies, private context, IP indemnity | Enterprise controls, VPC SC, indemnification | AWS data controls, opt outs |

| Security assist | Copilot Autofix | Code suggestions with citations | Code security scanning |

| Agentic features | Repo analyzing agent | Workflow integrations | Workflow and code tasks |

Step 3: Define Guardrails

- Restrict sensitive data in prompts

- Require tests and static analysis

- Record AI usage in PRs

- Approval rules for AI generated code

Step 4: Secure Context

- Connect to internal docs, APIs, and repos via MCP

- Log tool use and access

Step 5: Add Evaluation Harnesses

- Track suggestion acceptance, defect rates, autofix success

- Run controlled prompts on vulnerable snippets

Step 6: Expand to Agents Carefully

- Start with sandbox tasks like dependency updates and doc generation

- Require approvals and rollback plans

Metrics That Matter

- DORA: Deployment frequency, lead time, failure rate, time to restore.

- SPACE: Satisfaction, performance, activity, communication, efficiency.

- AI specific: Suggestion acceptance, autofix success, post merge defect rates, prompt reuse.

Security Playbook

- Default stance: Trust but verify.

- Require SAST, SCA, and secrets detection.

- Educate teams about AI specific risks and overconfidence.

- Follow OWASP LLM guidelines.

Team Maturity Model

- Level 0: No AI.

- Level 1: Autocomplete and chat with policy.

- Level 2: Mandatory tests and scans on AI code.

- Level 3: Private context, evaluation harnesses, and autofix in CI.

- Level 4: Agents integrated with approvals and rollback.

FAQs

What is AI assisted software development?

Do AI tools make developers faster?

Can AI write production grade code safely?

What policies should be in place?

Which enterprise tools to evaluate first?

How do we avoid hallucinations?

Will EU rules affect workflows?

Can AI help with security fixes?

Are agents ready to own tickets?

Practical Checklists

Day Zero Policy

- No secrets in prompts

- Tests and scans required

- AI usage noted in PRs

- Human approval for critical code

Enterprise Rollout

- Start with 2 pilot teams

- Wire private context

- Track DORA and AI metrics

- Train reviewers on risks

- Expand after measurable improvements