Understanding Pipelines in Software Development

In software engineering, a pipeline is an automated sequence of steps that code passes through on its way from development to production. Pipelines ensure that new code is consistently built, tested, and deployed in a reliable and repeatable manner.

Think of it as an assembly line for software. In manufacturing, raw materials enter one end of the assembly line and finished products emerge at the other. In software, the raw material is source code. The pipeline moves it through steps like compiling, testing, packaging, and deployment until a finished, working application is delivered to users.

Pipelines have become essential because teams are now expected to deliver quickly without sacrificing quality or security. According to the 2024 GitLab DevSecOps Report, 84 percent of software teams now use some form of continuous integration or delivery pipeline.

The key purpose of a pipeline is not just speed but consistency. Every time code changes, the pipeline enforces the same checks: automated builds, automated tests, packaging, and controlled deployment. This reduces the risk of human error and allows teams to deliver software daily, or even multiple times per day.

The Evolution of Software Development Pipelines

Manual Software Delivery

Before automation, developers manually compiled code, transferred files to servers, and executed scripts. This was slow and fragile. Deployments could take hours or even days. Mistakes were common and recovery was painful.

Waterfall Pipelines

During the era of Waterfall methodology, delivery pipelines were linear and rigid. Work moved from requirements → design → implementation → testing → deployment. Pipelines existed, but they were slow and inflexible. Feedback loops were long.

Agile Pipelines

With Agile, teams began breaking work into smaller increments. Pipelines became more iterative, allowing faster builds and test cycles. Still, many processes were manual.

DevOps Pipelines

DevOps introduced the culture of collaboration between development and operations, with automation at the core. CI/CD pipelines emerged as the backbone of modern delivery. They enabled continuous integration (code merged frequently) and continuous delivery (code always in a deployable state).

Cloud-Native and AI Pipelines

As of 2025, pipelines are typically cloud-native, containerized, and increasingly AI-augmented. They scale globally, integrate security automatically, and provide predictive insights. According to Puppet’s 2023 State of DevOps Report, elite DevOps performers deploy code nearly 1,000 times more frequently than low performers, thanks in large part to robust pipelines.

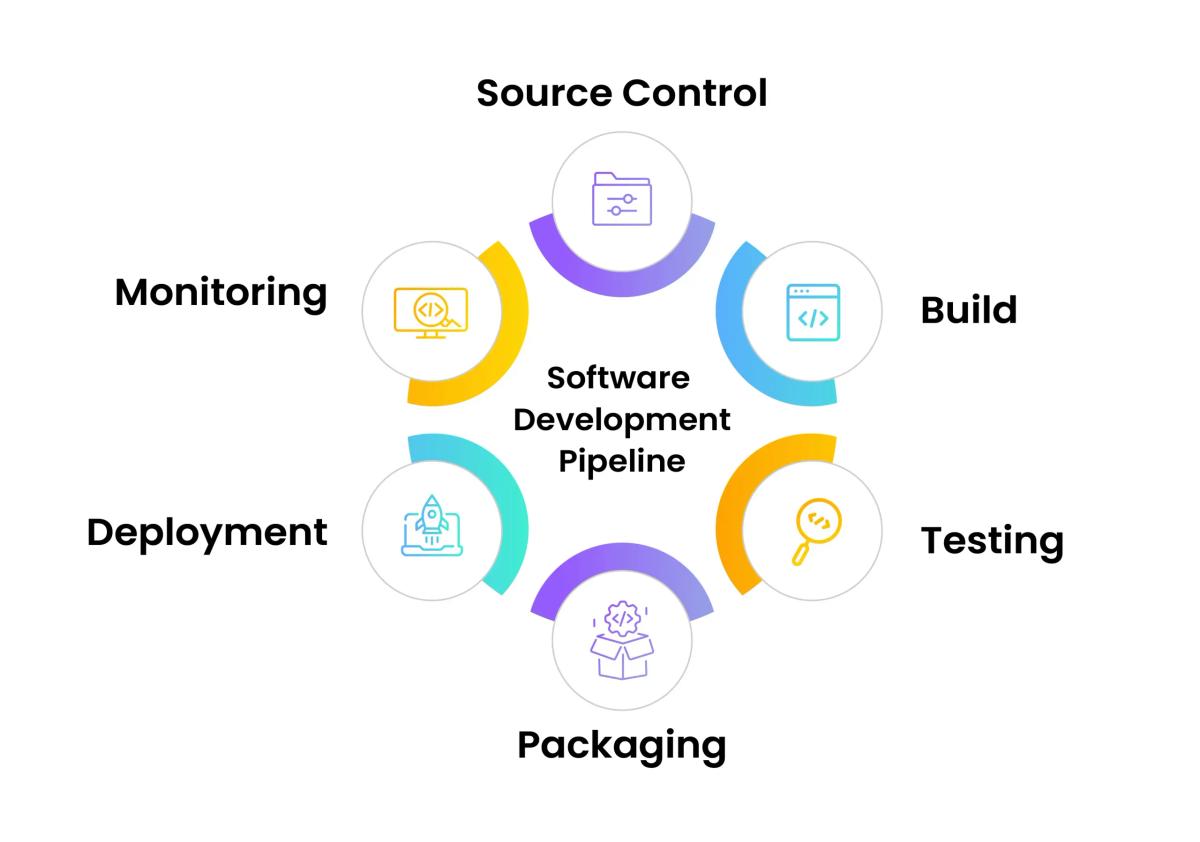

Key Stages of a Software Development Pipeline

A pipeline typically follows these stages:

1. Source Control

- Developers commit code to repositories like GitHub, GitLab, or Bitbucket.

- Pull requests and code reviews ensure quality at the source.

2. Build

- Code is compiled and dependencies resolved.

- Build automation tools (Maven, Gradle, npm) ensure consistency.

3. Testing

- Automated unit, integration, regression, and performance tests run.

- Testing frameworks validate every change to catch bugs early.

4. Packaging

- Applications are containerized (Docker) or packaged as artifacts.

- Artifacts are stored in repositories such as Nexus or Artifactory.

5. Deployment

- Code moves to staging or production environments.

- Deployment may use Kubernetes, Helm charts, or serverless functions.

6. Monitoring

- Observability tools (Prometheus, Grafana, Datadog) track performance.

- Alerts and feedback loops detect errors early.

This automated flow ensures that from commit to production, code follows a predictable, repeatable process.
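The flow above can be sketched as an ordered list of stages in which a failure stops everything downstream. This is a minimal illustration, not how any particular CI/CD tool is implemented; the `Stage` class, the `run_pipeline` function, and the simulated stage results are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Stage:
    """One pipeline step (hypothetical type for illustration)."""
    name: str
    run: Callable[[], bool]  # returns True when the stage succeeds


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that completed successfully.
    """
    completed = []
    for stage in stages:
        if not stage.run():
            break  # later stages never see a change that failed earlier
        completed.append(stage.name)
    return completed


# Simulated run: the test stage fails, so packaging and deployment are skipped.
stages = [
    Stage("source", lambda: True),
    Stage("build", lambda: True),
    Stage("test", lambda: False),
    Stage("package", lambda: True),
    Stage("deploy", lambda: True),
]
completed = run_pipeline(stages)
```

Stopping at the first failing stage is what keeps a broken build from ever reaching packaging or deployment.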

Types of Pipelines in Software Engineering

1. Continuous Integration (CI) Pipelines

- Developers integrate changes frequently.

- Automated builds and tests verify each change.

2. Continuous Delivery (CD) Pipelines

- Every build is kept in a deployable state.

- Deployment is still triggered manually but can be done at any time.

3. Continuous Deployment Pipelines

- Every change that passes automated tests is deployed automatically.

- Requires mature automation and monitoring.

4. Security Pipelines (DevSecOps)

- Security scanning, compliance checks, and vulnerability management are integrated early.

5. Machine Learning Pipelines (MLOps)

- Manage data ingestion, training, model validation, deployment, and monitoring.

6. Data Pipelines

- Extract, transform, and load (ETL) pipelines move and process data for analytics.

7. Hybrid Pipelines

- Combine software, data, and ML pipelines into unified workflows.
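The first three pipeline types above differ mainly in what happens after the automated checks pass. The sketch below captures that rule under simplified assumptions; the `Mode` enum and the `should_deploy` function are hypothetical names, not part of any CI/CD product.

```python
from enum import Enum


class Mode(Enum):
    CONTINUOUS_INTEGRATION = "ci"
    CONTINUOUS_DELIVERY = "delivery"
    CONTINUOUS_DEPLOYMENT = "deployment"


def should_deploy(mode: Mode, checks_passed: bool, manual_approval: bool = False) -> bool:
    """Decide whether a change goes to production under each pipeline type."""
    if not checks_passed:
        return False  # no mode releases a change that failed its checks
    if mode is Mode.CONTINUOUS_DEPLOYMENT:
        return True  # passing checks is sufficient
    if mode is Mode.CONTINUOUS_DELIVERY:
        return manual_approval  # deployable at any time, released on request
    return False  # CI alone only verifies the change
```

For example, `should_deploy(Mode.CONTINUOUS_DELIVERY, True)` returns `False` until someone approves the release, while the same call with `Mode.CONTINUOUS_DEPLOYMENT` returns `True`.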

Benefits of Software Development Pipelines

- Speed and Agility: Faster time-to-market.

- Quality Assurance: Automated tests reduce bugs.

- Consistency: Every change follows the same workflow.

- Collaboration: Shared visibility across Dev, QA, and Ops.

- Cost Efficiency: Less manual work and faster recovery from issues.

- Scalability: Pipelines support microservices and global delivery.

- Business ROI: McKinsey research shows optimized pipelines can cut time-to-market by up to 40 percent.

Challenges in Pipeline Implementation

- Legacy Integration: Connecting modern pipelines to old systems.

- Pipeline Fragility: DORA reports that 40 percent of teams struggle with frequent pipeline failures.

- Security Risks: Supply chain attacks and insecure dependencies.

- Cloud Costs: Inefficient pipelines can waste resources.

- Cultural Barriers: Teams resistant to automation.

Best Practices for Building Pipelines

- Start small, automate gradually.

- Prioritize test automation.

- Integrate security early (DevSecOps).

- Use Infrastructure as Code for repeatability.

- Track pipeline KPIs (build time, failure rates, deployment frequency).

- Regularly audit and optimize.

Tools That Power Pipelines

Pipelines are powered by a combination of CI/CD platforms, container technologies, monitoring tools, and infrastructure automation. Choosing the right stack depends on team size, budget, and enterprise needs.

Popular CI/CD Tools

| Tool | Type | Strengths | Limitations |
|---|---|---|---|
| Jenkins | CI/CD | Open-source, highly flexible, thousands of plugins | Requires heavy setup and maintenance |
| GitHub Actions | CI/CD | Seamlessly integrates with GitHub repos, easy setup | Limited enterprise governance |
| GitLab CI/CD | CI/CD | Full DevSecOps suite, code + CI/CD + security in one | Steeper learning curve |
| CircleCI | CI/CD | Cloud-native, fast parallel builds, simple YAML config | Costs rise at enterprise scale |
| Azure DevOps | CI/CD + PM | Enterprise-ready, integrates project management | Can be complex to configure |
| Bitbucket Pipelines | CI/CD | Great for Atlassian ecosystem users | Limited scalability for large orgs |

Containerization and Orchestration

- Docker: The standard for packaging applications into portable containers.

- Kubernetes (K8s): Orchestrates containerized applications at scale, handling auto-scaling, load balancing, and self-healing.

- Helm: Package manager for Kubernetes that simplifies deployment.

Monitoring and Observability

- Prometheus: Collects metrics.

- Grafana: Visualizes metrics and dashboards.

- Datadog: Cloud monitoring with AI-driven insights.

- New Relic: End-to-end observability.

Infrastructure as Code (IaC)

- Terraform: Declarative infrastructure provisioning.

- Ansible: Automation for configuration management.

- AWS CloudFormation: AWS-native IaC.

These tools together create the modern DevOps toolchain, where pipelines automate every stage of software delivery.

Extended Best Practices for Designing Effective Pipelines

1. Start Small, Scale Gradually

Begin by automating basic builds and tests. Then add deployment and monitoring. Overengineering from day one creates complexity.

2. Automate Everything Possible

Automate builds, testing, security scans, packaging, and deployments. The fewer manual steps, the more reliable the pipeline.

3. Shift Left on Security

Integrate security scanning in early pipeline stages. Static analysis, dependency scanning, and secrets detection should run automatically.
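As a rough illustration of secrets detection, the sketch below scans text line by line for two well-known credential shapes. The pattern list is deliberately tiny and the `find_secrets` function is hypothetical; real scanners cover far more formats and use additional heuristics.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; a real scanner ships many more.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
}


def find_secrets(text: str) -> List[Tuple[int, str]]:
    """Return (line_number, pattern_name) for every suspicious line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


# AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
sample = 'region = "us-east-1"\naccess_key = "AKIAIOSFODNN7EXAMPLE"\n'
findings = find_secrets(sample)
```

A pipeline step built on a check like this would fail the build whenever the list of findings is non-empty, so the credential never reaches the main branch.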

4. Use Infrastructure as Code (IaC)

Provision environments as code to ensure consistency. This prevents “works on my machine” problems.

5. Implement Caching and Parallelization

Cache dependencies and run tests in parallel to keep pipelines fast. Long build times slow down teams.
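Both ideas can be sketched in a few lines. The example assumes a dependency cache keyed on a hash of the lockfile contents and independent tests that are safe to run concurrently; `cache_key` and `run_tests_in_parallel` are hypothetical helpers, not the API of any CI platform.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def cache_key(lockfile_contents: str, prefix: str = "deps") -> str:
    """Derive a cache key that changes only when the dependencies change."""
    digest = hashlib.sha256(lockfile_contents.encode("utf-8")).hexdigest()
    return f"{prefix}-{digest[:16]}"


def run_tests_in_parallel(
    tests: Dict[str, Callable[[], bool]], workers: int = 4
) -> Dict[str, bool]:
    """Run independent test callables concurrently and collect pass/fail results."""
    names = list(tests)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with names.
        results = list(pool.map(lambda name: tests[name](), names))
    return dict(zip(names, results))
```

Because the key depends only on the lockfile, an unchanged dependency set reuses the cached download, while any change to the lockfile produces a new key and a fresh install.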

6. Adopt Canary or Blue-Green Deployments

Reduce risk by gradually rolling out code or maintaining two environments for safe switching.
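A canary rollout needs a stable way to decide which users see the new version. One common approach, sketched here as an assumption and not as a specific tool's behavior, is to hash each user ID into a bucket from 0 to 99 and send the lowest buckets to the canary. The `bucket` and `route` functions are hypothetical.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def route(user_id: str, canary_percent: int) -> str:
    """Send roughly canary_percent of users to the new version."""
    return "canary" if bucket(user_id) < canary_percent else "stable"
```

Because the bucket is derived from the user ID, a given user stays on the same version between requests, and raising `canary_percent` only ever adds users to the canary group. Blue-green switching is simpler still: all traffic points at one of two environments and is flipped at once.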

7. Monitor Pipeline KPIs

Track metrics like build success rate, average build time, and deployment frequency. Use them to identify bottlenecks.
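The three metrics named above are simple ratios over a window of build records. The sketch below shows one way to compute them; the `BuildRecord` fields and the sample data are made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class BuildRecord:
    """One pipeline run (hypothetical record shape)."""
    succeeded: bool
    duration_seconds: float
    deployed: bool


def pipeline_kpis(builds: List[BuildRecord], period_days: int) -> Dict[str, float]:
    """Compute build success rate, average build time, and deployment frequency."""
    if not builds or period_days <= 0:
        raise ValueError("need at least one build and a positive period")
    total = len(builds)
    return {
        "build_success_rate": sum(b.succeeded for b in builds) / total,
        "average_build_seconds": sum(b.duration_seconds for b in builds) / total,
        "deployments_per_day": sum(b.deployed for b in builds) / period_days,
    }


# Four illustrative builds over a two-day window.
builds = [
    BuildRecord(True, 300, True),
    BuildRecord(False, 120, False),
    BuildRecord(True, 240, True),
    BuildRecord(True, 340, False),
]
kpis = pipeline_kpis(builds, period_days=2)
```

With this sample data, three of four builds succeed (0.75), the average build takes 250 seconds, and the team deploys once per day.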

8. Regularly Audit and Optimize Pipelines

Pipelines evolve. Regular reviews ensure they stay efficient, secure, and aligned with business goals.

Industry Use Cases for Pipelines

1. SaaS Companies

SaaS platforms thrive on rapid iteration. Pipelines allow them to release new features weekly or daily, while ensuring uptime. For example, a CRM company might use pipelines to deploy new integration features to thousands of customers with zero downtime.

2. FinTech

Financial companies face strict compliance requirements. Pipelines enforce audit trails, run compliance checks, and require approvals before deployment. This ensures security and regulatory alignment.

3. Healthcare

In healthcare, patient data security is paramount. Pipelines implement HIPAA compliance checks, encrypt sensitive data, and enforce strict testing before any deployment.

4. PropTech

Real estate platforms rely on accurate MLS data and frequent updates. Pipelines automate the ingestion of property data, integration testing, and deployment, ensuring property listings remain up-to-date.

5. AI and Machine Learning Startups

AI companies use MLOps pipelines to handle data ingestion, model training, and model deployment. This ensures that models are retrained on fresh data and redeployed automatically when performance drops.
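The retraining trigger described for MLOps pipelines can be as simple as comparing recent model quality against a baseline. This is a minimal sketch under that assumption; `should_retrain`, the score values, and the default tolerance are hypothetical.

```python
from typing import Sequence


def should_retrain(
    recent_scores: Sequence[float], baseline: float, tolerance: float = 0.02
) -> bool:
    """Return True when average recent quality falls below baseline minus tolerance."""
    if not recent_scores:
        return False  # no evidence of a drop yet
    average = sum(recent_scores) / len(recent_scores)
    return average < baseline - tolerance
```

For example, with a baseline accuracy of 0.92, recent scores of 0.85 and 0.86 would trigger retraining, while scores around 0.91 would not. The tolerance keeps normal fluctuation from causing constant retraining.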

Global Adoption and Statistics

- According to DORA’s 2023 Accelerate Report, elite teams deploy code 973x more often than low-performing teams.

- GitLab’s 2024 DevSecOps Survey shows that 60 percent of organizations reduced time-to-market significantly after adopting CI/CD pipelines.

- McKinsey reports that companies with optimized pipelines see 20–40 percent faster release velocity and significant cloud cost savings.

The Future of Software Development Pipelines

The future of pipelines will be shaped by AI, automation, and global distribution.

1. AI-Augmented Pipelines

AI will predict build failures, suggest test optimizations, and detect anomalies in deployment.

2. Low-Code / No-Code Pipelines

Non-technical users will be able to configure workflows visually, democratizing DevOps.

3. Serverless Pipelines

Event-driven functions will reduce costs by running pipelines only when triggered.

4. Observability-First Pipelines

Monitoring and feedback will be built in from the start, with real-time insights driving decisions.

5. Geo-Distributed Pipelines

Multinational teams will run pipelines across data centers worldwide, ensuring fast deployment and regional compliance.

Gartner predicts that by 2027, 75 percent of enterprises will rely on AI-driven pipeline orchestration, making pipelines not just a developer tool but a strategic enabler of innovation.

Frequently Asked Questions About Pipelines in Software Development

What is a pipeline in software development?

What is the difference between CI and CD in pipelines?

Why are pipelines critical for DevOps?

What stages make up a software pipeline?

What KPIs are used to measure pipeline effectiveness?

What tools are most popular for building pipelines?

How do pipelines reduce technical debt?

What are the security risks of CI/CD pipelines?

How do startups use pipelines differently from enterprises?

What costs are associated with pipelines?

What are ML pipelines and how are they different?

How are data pipelines different from software pipelines?

What is pipeline as code?

Are pipelines suitable for regulated industries?

How do pipelines improve business ROI?

What best practices ensure pipeline reliability?

How do global teams use pipelines?

What is the future of pipelines?

How do pipelines support microservices architectures?

What challenges do organizations face with pipelines?

How do pipelines integrate with cloud providers?

How do pipelines handle rollbacks?

What role does monitoring play in pipelines?

What is a multi-branch pipeline?

How do pipelines handle database changes?

What is the role of containers in pipelines?

What is a blue-green deployment pipeline?

What is a canary deployment pipeline?

How do pipelines improve developer productivity?

How do pipelines integrate with QA teams?

How do pipelines handle compliance audits?

What is the difference between a build pipeline and a release pipeline?

How do pipelines integrate AI and machine learning?

How do pipelines scale in large organizations?

How do pipelines help reduce downtime?

What is the role of Infrastructure as Code in pipelines?

How do pipelines support remote teams?

How are pipelines tested themselves?

What is the cost of pipeline failures?

How do pipelines integrate with agile methodologies?

Final Summary

Pipelines have transformed software development from a manual, error-prone process into a highly automated, consistent, and business-critical practice.

- In the past, software was deployed manually, leading to slow delivery and high risk.

- With Agile and DevOps, pipelines evolved to support iterative development and continuous collaboration.

- In the present, pipelines are automated CI/CD systems that handle builds, testing, deployments, monitoring, and even security checks.

- In the future, pipelines will be AI-driven, observability-first, and globally distributed, supporting not just code but also data and machine learning workflows.

From startups deploying MVPs to enterprises modernizing complex systems, pipelines are now the backbone of digital transformation.

Strategic Takeaways for Businesses

- Invest in Automation: The more processes you automate, the more reliable and scalable your software delivery becomes.

- Measure What Matters: Track the DORA metrics (deployment frequency, lead time, change failure rate, and MTTR) to evaluate pipeline success.

- Adopt DevSecOps: Security must be integrated into pipelines early, not added as an afterthought.

- Leverage Cloud and Containers: Kubernetes, Docker, and IaC tools ensure global scalability and consistency.

- Plan for the Future: AI and low-code/no-code pipelines are rapidly becoming mainstream. Businesses that adopt them early will innovate faster.

Why Pipelines Matter Beyond Technology

Pipelines are not just an engineering practice; they’re a business enabler. They directly impact revenue, time-to-market, and customer satisfaction.

- For CTOs: Pipelines provide the ability to scale engineering teams without sacrificing velocity.

- For Product Leaders: Pipelines allow faster delivery of customer-facing features.

- For Compliance Teams: Pipelines enforce governance and reduce audit effort.

- For Business Executives: Pipelines reduce costs, accelerate innovation, and improve ROI.

In short, pipelines align technical execution with business goals.

The Future Outlook

By 2027, more than three-quarters of enterprises will rely on AI-driven pipelines to manage not only code, but also data, infrastructure, and machine learning workflows. Pipelines will become:

- Predictive: Anticipating failures before they happen.

- Adaptive: Scaling across regions and workloads dynamically.

- Inclusive: Low-code interfaces will enable non-technical users to configure workflows.

- Strategic: Seen not as a developer tool, but as a competitive advantage in digital business.

Organizations that adopt and optimize pipelines today will be the market leaders of tomorrow.

Closing Thoughts

A pipeline in software development is far more than a technical workflow; it is the foundation of modern software delivery. From ensuring reliability and security to driving innovation and growth, pipelines are at the heart of digital transformation.

As businesses continue to adopt cloud, AI, and global delivery models, pipelines will only grow in importance. They are the bridge between code and customer value.

The message is clear:

- If you are a startup, adopt pipelines early to scale smoothly.

- If you are an enterprise, optimize pipelines to modernize legacy systems.

- If you are a software leader, embrace AI-augmented pipelines to innovate faster.

Pipelines are not just the future of software development; they are the present reality. The companies that master them will deliver better software, faster, and more securely than their competitors.