At some time no matter which engineering team you are part of you will likely receive a recommendation that goes something like this… “Let’s use Apache Kafka.” This type of recommendation generally comes during times of severe strain on the system usually due to increased volume being processed by the system and data pipelines failing to be reliable and more recently with the emergence of real-time data requirements so the idea of introducing Apache Kafka seems to be an obvious answer.

However in reality the large majority of engineering teams introduce Apache Kafka much too early or for all the wrong reasons. For a staff or principal-level engineer the true challenge isn’t understanding what Apache Kafka is; it is determining when should Apache Kafka be considered along with when does it just add more complexity than needed.

This guide provides a higher level overview of what Apache Kafka is providing more than just simple definitions but answering the important questions:

- What Apache Kafka is and how it works with a little understanding as to why so many organizations are embracing it

- When Apache Kafka is the best solution (use case) and when it is a poor decision (overkill)

- How Apache Kafka compares with other real-time platforms that provide similar capabilities

- Important considerations for implementing Kafka in production environments

What Is Apache Kafka?

Apache Kafka is a distributed event streaming platform designed to support high-volume high-speed real-time data feeds.

Apache Kafka Allows Now Systems To:

- Publish Data Streams

- Subscribe To Data Streams

- Durably Store Data Streams

- Process Data Streams In Real Time

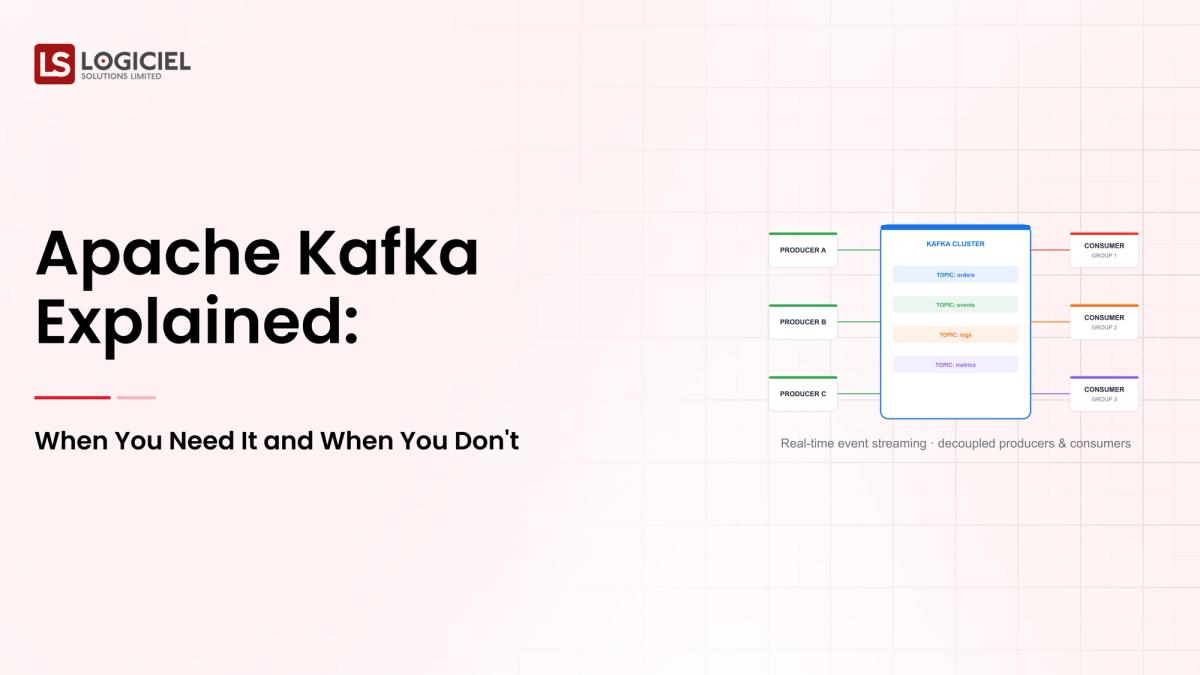

Overview Of How Apache Kafka Works

Kafka Consists Of A Few Basic Components:

- Producers Send Data

- Consumers Read Data

- Topics Organize Data

- Partitions Support Scalability

- Brokers Manage And Store Data

Why Was Apache Kafka Created?

LinkedIn Originally Built Kafka To Solve A Major Problem For The Company:

To Enable Reliability When Processing Large Amounts Of Real-Time Event Data.

Things You Should Know About Kafka

Kafka Is Much More Than A Message Queue.

Kafka Is Essentially A Distributed Log System Designed For Scalability And Durability.

Why Is Apache Kafka So Popular?

Kafka Has Found Itself Quickly Being Adopted In Organizations Across All Areas Of Industry For Several Reasons:

1. Very High Throughput

Kafka Handles Millions Of Events Per Second.

2. Scalability

Kafka Scales Horizontally By Adding More Brokers And Partitions.

3. Durability

Data Is Stored Persistently And Replicated To Other Nodes In An Apache Kafka Cluster.

4. Decoupled Systems

Producers And Consumers Can Work Independently From Each Other.

5. Real-Time Processing

Kafka Provides Almost Real-Time Data Pipelines.

Example

For Example, A Major E-Commerce Company Uses Kafka For Monitoring User Activity, Processing Orders, And Updating Their Recommendation Engines.

Overall, Kafka Effectively Handles The Challenges Of Scaling And Processing Data In Real Time.

How Does Apache Kafka Work In Practice?

To Fully Understand How Apache Kafka Works, You Have To Understand The Flow Process Used In Kafka.

Flow Process

- Step 1: Producers Write Event(s) To Topics

- Step 2: Events Are Stored In Partitions On Brokers

- Step 3: Consumers Read Events At Their Own Rate

- Step 4: Kafka Tracks Which Messages Have Been Consumed

- Step 5: Data will be replicated to ensure fault tolerance

Example Architecture

A standard design for the pipeline includes the following stages:

Applications ---> Kafka ---> Stream processing ---> Data Warehouse

Key takeaway from this example: Kafka acts as the "central nervous system" of the overall data flow.

When to Use Apache Kafka

Apache Kafka has great capabilities; however, it is not essential for every use case.

1. Real-Time Data Streaming

If you have a need for:

- Live analytics

- Event processed systems

Then Apache Kafka is a strong choice.

2. High-Throughput Systems

When doing the following:

- Processing millions of events

- Performing large scale data ingestion

Kafka is a leading solution for high-throughput solutions.

3. Microservices Communication

By using Kafka, your application can:

- Communicate asynchronously

- Maintain loose coupling

4. Data Integrating Across Systems

Apache Kafka can serve as the central data "backbone" in your organization.

5. Event Sourcing Architectures

With an event sourcing architecture, messages are retained in Apache Kafka for future retrieval, providing example of durable event logs.

Example Use Cases

A financial services firm utilizes Apache Kafka to achieve the following:

- Processing transaction events in real-time

- Identifying incidents of fraud

- Providing input into downstream analytic solutions

Key takeaway from these examples: If application scalability and real-time processing are not negotiable, consider using Apache Kafka.

When Not to Use Apache Kafka

One of the common mistakes that companies make is to incorrectly manage their applications and prevent the use of Apache Kafka.

1. Low Volume Data Systems

If the application is processing:

- Relatively small volume of data sets

- Low volume of transaction events over time

Then Apache Kafka will add complexity without enhancing the application.

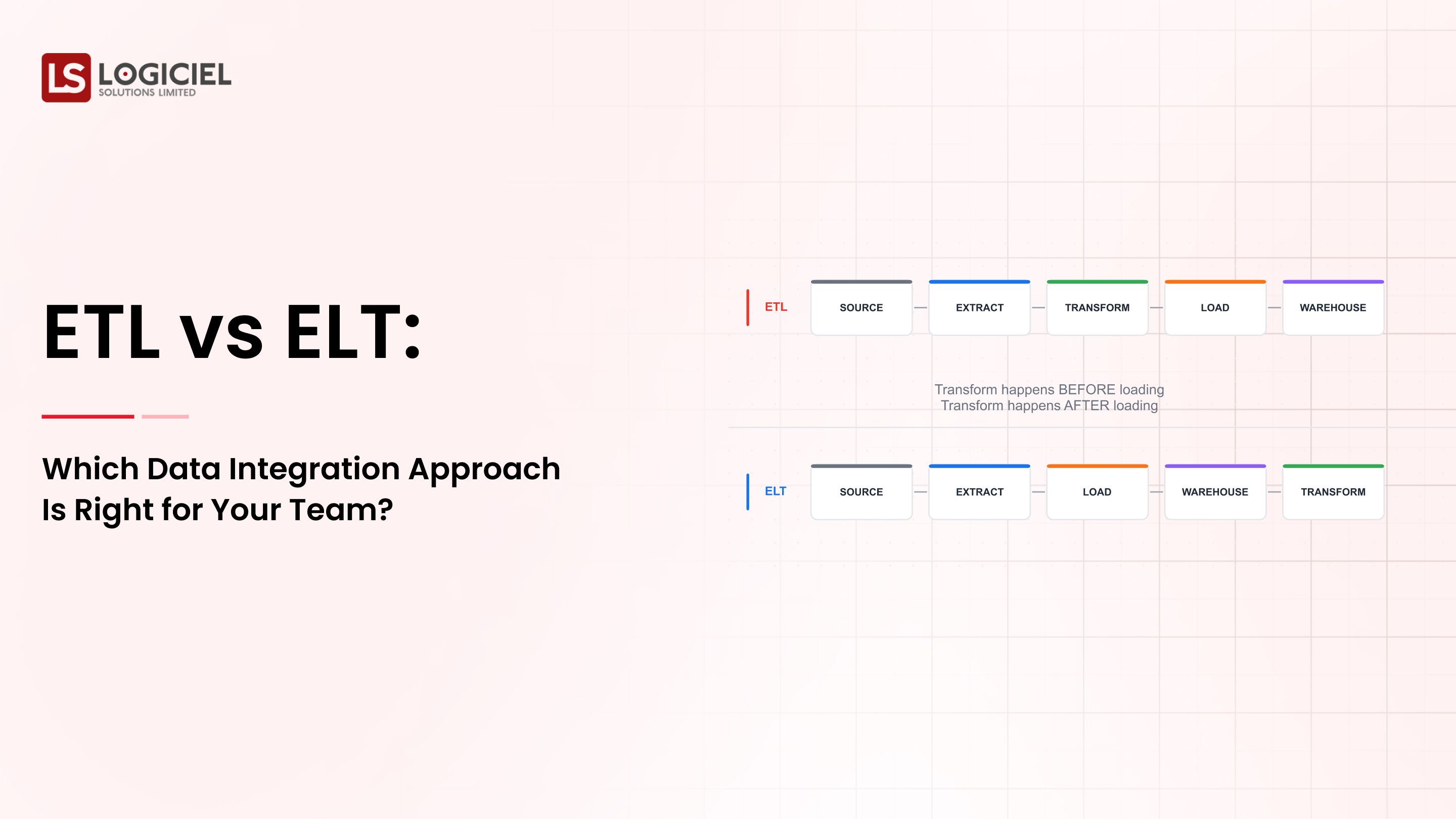

2. Basic ETL Pipelines

Most batch processing systems do not need to utilize Apache Kafka for their processes.

3. Early Stage Startups

If your organization is in early stages, scale and/or real-time requirements:

- Will delay your application development

4. Limited Engineering Resources

Kafka systems require:

- Operational expertise to support ongoing operations and maintenance

5. Over Engaging Problems

Many companies utilize Apache Kafka to address problems that they suspect may exist in the future.

Example

Startup uses Apache Kafka as its primary data streaming solution; as a result, the startup is experiencing:

- More complex applications

- Slower application development

- Higher total cost of ownership

Key takeaway: Apache Kafka is a powerful technology; however, it is also expensive in complexity.

What are some major differences between Apache Kafka and other streaming platforms?

A typical way to compare Kafka would be against:

RabbitMQ

Kafka has high throughput with its distributed log, whereas RabbitMQ serves as a message broker and supports more basic use cases.

Kinesis

Kafka is more flexible and supports a wider ecosystem. They are very similar to one another; however, Kinesis is also a managed service.

Pulsar

Kafka has a more mature ecosystem, while Pulsar offers more advanced capabilities such as multi-tenancy.

Key Considerations

Many organizations prefer Kafka due to its flexibility and the maturity of its ecosystem.

Takeaway to remember: Although Kafka may cost more in the short term, it will be much more extensible than other options.

Considering Managed Kafka vs Self-Hosted

Managed Kafka vs Self-Hosted is also an important comparison.

Self-Hosted offers:

- Full control over your system

- Customized configuration options

However, with Self-Hosted Kafka comes the downside of:

- Complexity of operations

- Maintenance of operations

Managed Kafka offers:

e.g., Confluent Cloud, AWS Managed/Managed Service and their respective pricing.

Managed Kafka Is a Better Option for Growing Teams for Three Reasons:

- Less Complexity

- Faster to Deploy

- Less Configured

Best Practices for Running Kafka in Production

- Design for Partitioning. Partitions dictate the scalability of your solution.

- Monitor Your Consumer Lag.

- Implement Schema Management.

- Secure Your Kafka Cluster.

- Plan For Failure.

- Optimize Retention Policies.

Some Common Mistakes Engineers Make

1. Using Kafka as a Queue

Kafka was originally designed as an event streaming solution, not a queuing solution.

2. A Bad Design Will Lower Efficiency

If you choose the appropriate topic design for your application, you will save time and build an efficient system.

3. Misjudging Your Operational Complexity

Kafka is an operational tool. You will need to manage your environment on an ongoing basis.

4. Not Considering How Scalable Your Design Is

The design decisions you make today will affect your scalability tomorrow.

5. Not Having Any Observability

Without observability, debugging your application will be difficult.

Who Uses Apache Kafka?

Many of the top organizations and companies use Kafka.

Common Use Cases Across Different Industries

- Companies in the tech industry using event tracking

- Financial services processing transactions

- Retailers using customer analytics

Why Do Companies Use Kafka?

- Reliability

- Scalability

- Flexibility

Insight Into Apache Kafka?

When organizations use Kafka, they cannot afford to have any downtime or high latency.

What Is the Future of Apache Kafka?

Kafka is continually being evolved.

Emerging Trends

- Serverless Kafka

- Stream processing integration (e.g., Flink)

- AI-enabled data pipelines

What Is The Impact Of This On Apache Kafka?

Apache Kafka is becoming:

- Easier to use

- More integrated

- More automated

Strategic Insight

Apache Kafka is likely to be one of the main components of the modern data architecture moving forward.

Frequently Asked Questions

What Is Apache Kafka?

How Does Apache Kafka Work?

Who Created Apache Kafka?

When Should I Use Apache Kafka?

When Should I Not Use Apache Kafka?

Conclusion: Apache Kafka Is A Strategic Asset and Must Not Be Viewed As A Default Solution.

Apache Kafka represents one of the more powerful tools that exist in data engineering today, however, it should not be deemed as the default solution. When used appropriately, Apache Kafka helps:

- Create scalable real-time systems

- Create reliable data pipelines

- Decouple architectures

When used inappropriately, Apache Kafka creates:

- Complexity

- An additional cost to your application

- Ongoing maintenance overhead

The Logiciel Point of View

At Logiciel Solutions, we help engineering teams design "AI-First" and event-driven architectures where tools like Apache Kafka are used strategically and not just by default. Our focus is on building scalable streaming systems to meet the various real business needs for your application, while providing performance, reliability, and maintainability over time.

If your team is evaluating the use of Apache Kafka, or is currently struggling with you current implementation of Kafka or a previous implementation, now is the time to review your architecture and to determine whether you have constructed your architecture for scaling or have restricted the ability to scale.

Let us help you design systems that will grow with your data and your company.