Every data engineering team in time, will find their pipelines are no longer simple.

As the data engineering team grows, they will experience complex data ingestion, transformation, orchestration and governance.. As the data engineers’ work becomes more complex, the stakes increase and so do performance issues, and increased risk.

Cost estimates become unknown due to the number of resources being consumed will create unknown risks of cost overruns, while the quality of the data will be more difficult to assure will become another major issue affecting the record and data quality for an organization is going to be the question, “Should I using ETL or ELT?” will become a very important.

This will not just be a technical question..

It will affect:

- The scale of how many pipelines your organization will be able to support.

- The speed at which your data teams will be able to get data (i.e., how soon they can get started with analysis) to how fast they can create a full-scale data pipeline for their organization.

- (Or with ETL or ELT how quickly will I get access to my data once it has been moved?)

- How efficiently your organization is using compute resources (i.e., how well will your computer resources and time be utilized)

- How prepared your organization’s architecture is for near or real-time analytics and/or AI.

This paper will provide the following information and resources related to ETL versus ELT:

- Definition of ETL and ELT

- The relative differences between ETL and ELT

- The relevant trade-offs between performance, price and scale

- When to use either model

- Common mistakes of inexperienced data movement teams

- How to create an ETL or ELT future/re-usable Data Integration Strategy for your data needs

What is ETL (Extract, Transform and LoadProcess

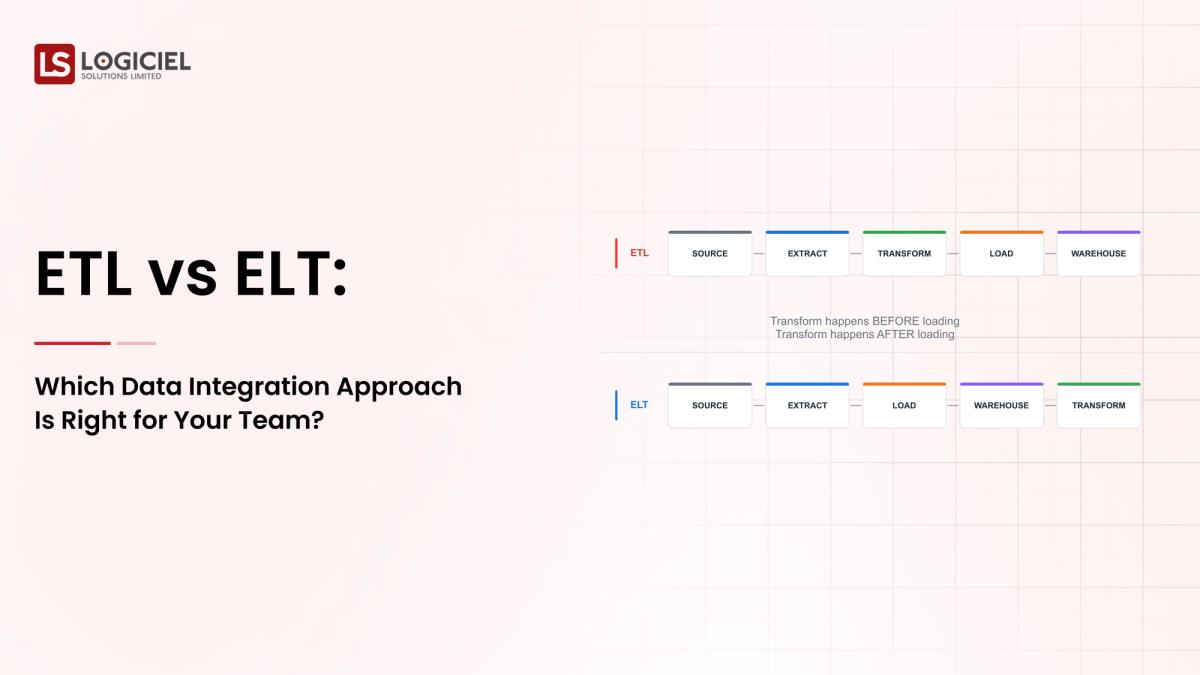

ETL follows a linear flow based upon extracting from source(s), then transforming data, then loading into target(s). Whereas with ELT, you are working reverse of how ETL flows through all four steps. ELT has an Extract followed by a Load step, while ETL has both of these together as part of the Extract as seen below:

ETL also involves the process of transforming before final Load and therefore cannot access any data until after all ETL processes have been run.

Time

ETL executes its load processes outside of the source systems. This often results in additional time to load data versus ELT because ELT executes its load processes inside the source systems. Additionally, since ELT uses cloud storage systems for its loads, it typically doesn't take as long to access the data once it's been loaded as it does for data to be accessed once it's been loaded for ETL processes.

Complexity

ETL has historically been more complex to implement than is ELT and therefore requires more technical expertise than does ELT.

Scalability

Scalability for ETL is typically limited due to the requirement that data must first be extracted before it can be transformed; thus most ETL solutions require integration with existing enterprise applications before the ETL processing can begin.

Processing Power

ETL typically requires high amounts of processing power to perform multiple transformations on the data, while ELT allows multiple transformations on the data at either the source or target level, allowing for greater distribution of processing power within its architecture.

Reporting

Reporting using ETL solutions typically takes longer to generate than with ELT solutions; however, when generating reports, ETL solutions typically require more technical skill from users than does ELT solutions when using Oracle database platforms for report generation.

Transparency

Users of ETL solutions often lose visibility into the ETL process due to their reliance on integration or batch-loading services, while users of ELT solutions can continue to have visibility into the ELT process due to its real-time capabilities.

Self-service Data

Depending on which form of ETL user deploys, users may have limited autonomy over their data compared with ELT users who may retain full autonomy over their data and are not limited to specific report styles.

Key Differences Between ETL and ELT

Timing of data transformation

ETL - prior to loading

ELT - after loading

Performance

ETL - ingestion process is slower

ELT - ingestion process is faster, lots of transformations require a lot of processing power

Scalability

ETL - not very scalable, can only scale if there is an infrastructure for transforming

ELT - can scale according to the compute resources available in the cloud

Costing Model

ETL - high upfront engineering cost

ELT - higher cost for compute/storage

Flexibility

ETL - a rigid schema

ELT - flexible schema-on-read

Summary

ETL and ELT are optimized at different priorities.

When to use ETL?

ETL continues to be a vital part of data processing.

Best Use Cases

- Environments with strict compliance

- Structured reporting environments

- Legacy data warehouses

Sample Use Cases

- Financial reporting pipelines

- Processing healthcare data

- U.S. Regulatory requirement compliance

ETL provides:

- Clean, verified and validated data

- Mitigation of inconsistent data

Key takeaway: Use ETL when you need accurate control of your data.

When to use ELT

ELT is the preferred processing method for modern day data platform.

Best Use Cases

- Systems designed for the cloud

- Very large-scale data ingestion

- Artificial intelligence and machine learning

Sample Use Cases

ELT provides:

- Rapid availability of data

- Ability to run multiple workloads simultaneously

Key takeaway: Use ELT when you need scalability and speed.

ETL vs. ELT for Cloud-based Data Warehouses

Cloud-based platforms have tilted the scale toward ELT.

Why ELT wins in Cloud-based Warehouses?

- Transforming data within a warehouse is efficient

- Storage is inexpensive

- Dynamically scales using compute resources

Typical Architectures for ELT in the Cloud

- ELT pipelines on Snowflake or BigQuery

- Data Lakes with transformation layers

- Hybrid Models

Many groups use both ETL and ELT.

- ETL for sensitive data

- ELT for analytics workloads

Modern architectures use a combination of both SQL and NoSQL since there's no "either/or" when it comes to both approaches; most systems require both ETL/ELT methods.

Common Data Engineering Errors Include

1. Thinking of ETL vs. ELT as a binary choice

There is no "either/or" binary option.

The majority of system designs will require both.

2. Not considering data governance during ELT process

ELT without appropriate data governance creates:

- Data sprawl

- Inconsistent metrics

3. Over-engineering ETL data pipeline

Too many transformations on upstream processes reduces agility.

4. Unestimating costs associated with ELT processes

Transforming data creates a "heavy" use of compute resources which increases overall costs.

5. Not aligning with use cases

Selecting the wrong data processing style for a workload will create inefficiencies.

Key Takeaway: The primary mistake made is not being strategic vs. technical.

How To Select The Right Methodology For Your Team

Data engineer's lead will require a systematic methodology to determine the right data processing methodology.

Step 1: Define Your Workload

- Analytics

- Reporting

- Machine Learning

Step 2: Evaluate The Volume Of Data

- Small → ETL

- Large scale → ELT

Step 3: Think About How You Want The Data Processed

- Real-time → ELT

- Batch process → ETL

Step 4: Evaluate Your Team’s Capabilities

- Teams that are SQL focused benefit from ELT

- Teams that are strong in pipeline engineering can maximize ETL

Step 5: Plan For Future Growth

Choose the process that can accommodate your planned growth.

Key Takeaway: Your selection should align with the long-term architecture of your systems, not short-term convenience.

ETL Tools

- Informatica

- Talend

- Apache NiFi

ELT Tools

- Dbt

- Fivetran

- Stitch

Hybrid Tools

Many modern platform will support both platforms.

Main Idea

The tools you use to support your data architecture should not define what your data architecture will be.

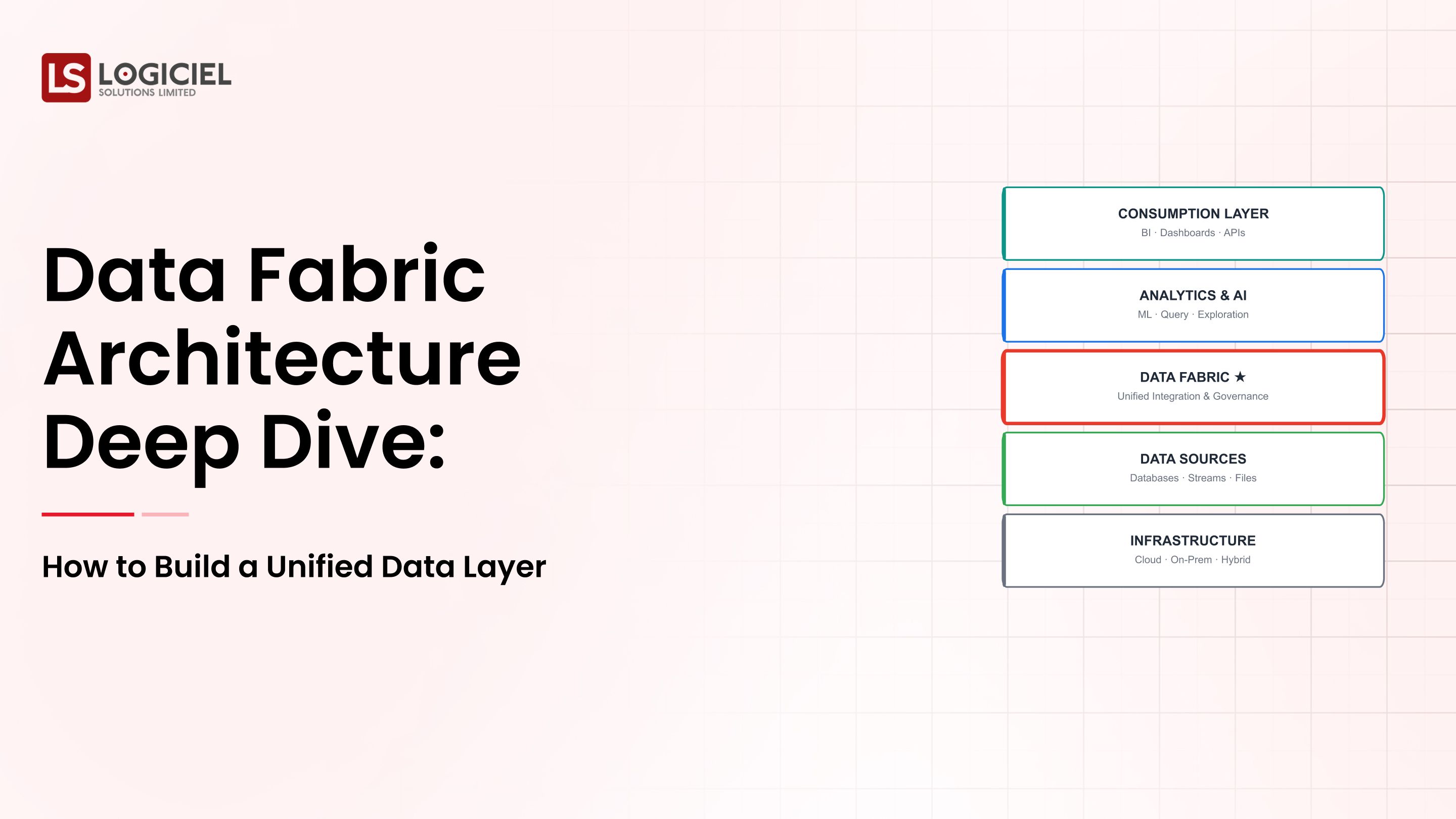

How Data Will Be Integrated: Moving Beyond ETL and ELT

There has been a shift in conversation about how data will be integrated.

Trends That Are Emerging

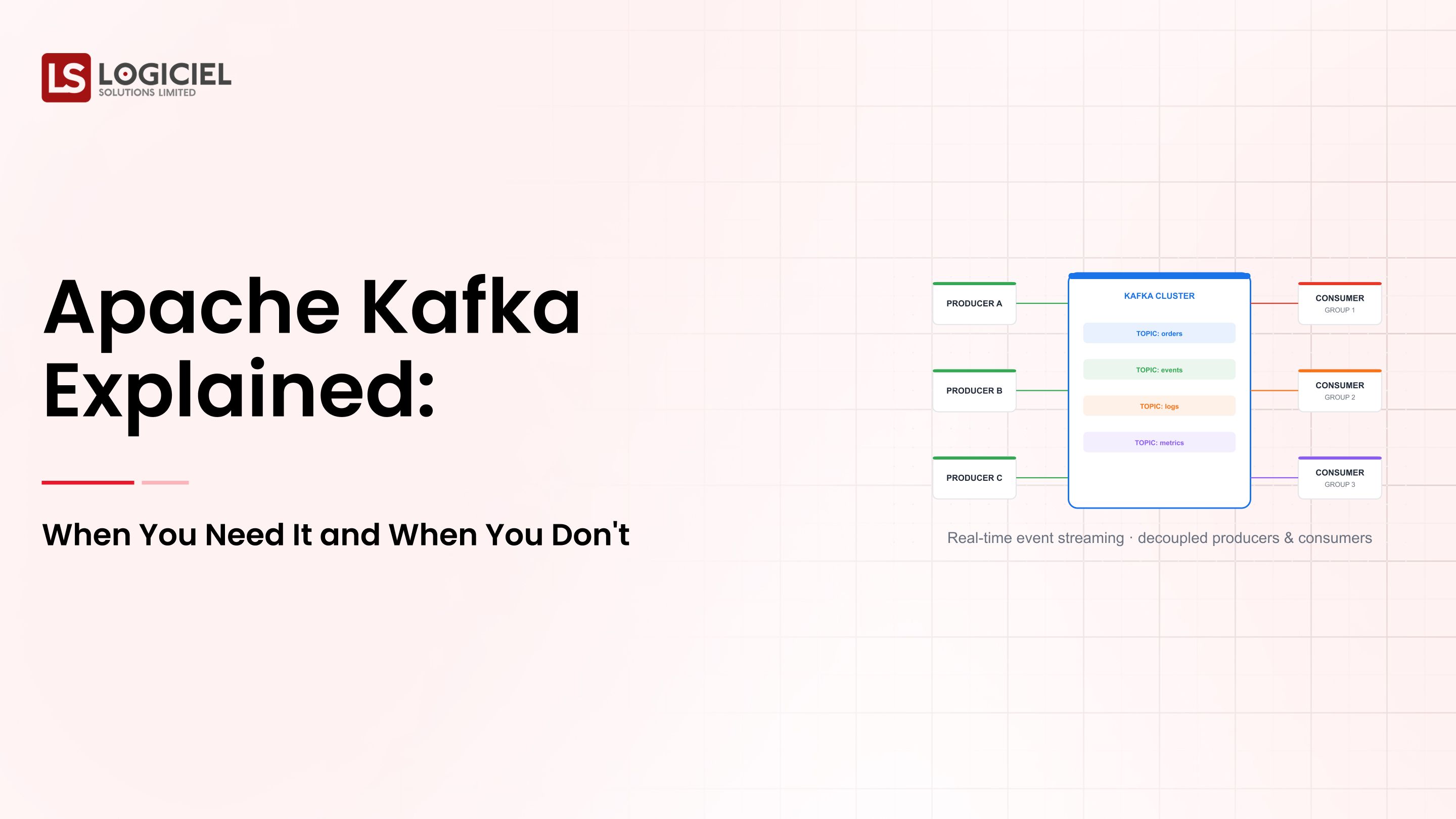

- Real-time data streaming pipelines

- Streaming data architectures

- Artificially intelligent transformation processes

- Integration of data

What That Means

Data integration is going to move to:

- Automated

- Intelligent

- Real time

Significant Conclusions

Companies that implement flexible architectures can:

- Operate quicker

- Utilizing resources more efficiently

- Allow for AI use

Key Takeaway: ETL vs ELT is just the beginning of the conversation.

Frequently Asked Questions

What is the difference between ETL and ELT?

Which one is better ETL or ELT?

Is it possible to use both ETL & ELT together?

Why is ELT more established than it used to be?

What are some of the risks associated with ELT?

Conclusion: ETL and ELT go hand in hand

The issue is not whether one of these approaches is "better" than the other.

The issue is which of these applications are best suited for each type of workload.

ETL provides Control and Reliability; whereas, ELT offers Speed and Scalability.

The most effective data architectures include a combination of the two approaches.

Logiciel's Point of View

Logiciel Solutions partners with teams to design AI-first data architectures that are grounded in the principles of ETL and ELT, while also achieving scalability.

We design comprehensive data integration systems that meet business objectives, provide cost optimization and support for advanced analytics, as well as AI workloads.

If your data pipelines are becoming too complex, time consuming or expensive, we encourage you to consider the possibility of redesigning your integration strategy.

Let us create a data architecture that allows you to scale your system in accordance with the data that it will accommodate.