It is 2:13 AM and you are lying awake when your phone vibrates. A critical pipeline has gone down without warning, and the crews now need to clear out all of the lingering queries. By morning, after a rough night and more critical downtime, numerous dashboards will be inoperable.

For many organizations, outdated systems carry hidden costs that surface as a steady stream of problems like this one.

Most organizations do not leave a legacy stack because new technology is alluring or exciting. They leave because they can no longer maintain the legacy technology at a reasonable cost.

If you are a Data Engineering Lead responsible for building scalable data infrastructure, this type of situation is familiar.

This guide will help you:

- Avoid the common mistakes that organizations and teams make during modernization

- Formalize a practical, phased migration plan that reduces risk

- Build infrastructure that supports large-scale, real-time, data-driven solutions and AI workloads

Let's start with a question: why do most organizations struggle to modernize successfully?

Section 1: Why Most Teams Struggle with Data Platform Modernization

Most modernization efforts do not fail because of technical challenges.

They fail because of poor planning and execution.

The Common Failure Modes

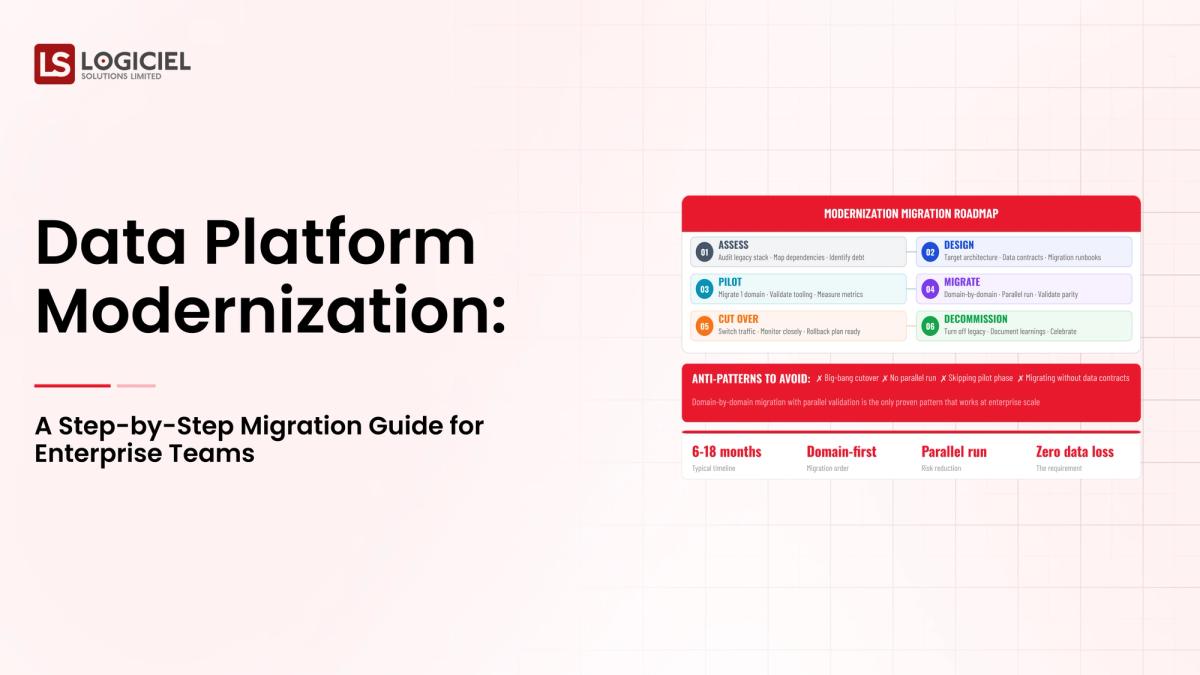

Most teams follow a modernization process like this:

- Select tools

- Migrate pipelines

- Hope it works

What they don’t consider:

- Mapping dependencies

- Aligning with stakeholders

- Incremental rollout plans

What happens:

- Pipelines are broken

- Data is inconsistent

- Stakeholders lose confidence

The Hidden Complexity

Modernizing data infrastructure in 2026 is more challenging because:

- Systems are interconnected to a greater degree

- Volumes of data are much larger

- Real-time demands create higher risk

- AI workloads now require greater data quality

What looks like a “migration” is essentially a redesign of the system.

Technical Debt Builds Up

Legacy systems often contain:

- Hard-coded transformations

- Undocumented pipelines

- Tightly coupled system dependencies

All of these add to migration risk.

What Does Success Look Like?

A Data Engineering Lead should define success for the organization as:

- No or minimal downtime during migration

- No data loss or inconsistencies

- Incremental adoption by relevant stakeholders

- Reliable, consistent and performant solutions

A Real Example

A team attempts to migrate its warehouse.

However:

- Dashboards break

- Metrics change

- Stakeholders lose confidence

Within weeks, teams in this position typically revert to the previous system.

This is not a failed migration; it is a failed strategy.

Section 2: Prerequisites: What You Need Before You Begin

Prior to beginning your migration, you need to build alignment and clarify expectations.

1. Establish Clear Ownership

Each pipeline and dataset must have:

- An assigned owner

- A defined SLA

- Accurate documentation

Without established ownership, you will likely encounter delays with your migration.

2. Stabilize Your Existing System

Resolve:

- Broken pipelines

- Critical data quality issues

- Pipelines without any documentation

Migrating systems that are not stable will increase risk.

3. Create Data Contracts

Data contracts are agreements that ensure:

- The schema stays consistent

- Schema changes are predictable

- Breakages are minimized during migration

4. Engage Your Stakeholders

You will need commitment from:

- Your director of engineering

- Research and development

- Your analytics teams

- Your business teams

Define:

- The goals of the migration

- The risks that could delay or otherwise impact the project

- What you expect to gain from the project

Determine Success Metrics

Sample success metrics include:

- Pipeline uptime

- Data accuracy

- Migration speed

- Stakeholder involvement

5. Obtain Financial Support and Staffing

To modernize your platform on cloud-based infrastructure, you will need a budget for:

- Engineering time

- Infrastructure

- Temporary duplication of the old and new systems

Section 3: Phase 1: Assessing the Current State

Before you begin anything, you must know what you are working with.

1. Create a List of Your Entire Stack

Make an inventory of:

- The data sources that supply data

- The data pipelines that move data throughout the business

- Where the data is stored

- All of the BI tools used to visualize the data

For each item, record:

- The owner

- How frequently it is used within the company

- Any known issues
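
One lightweight way to capture this inventory is a small structured record per asset. The sketch below is only an illustration: the field names (`kind`, `owner`, `runs_per_week`, `known_issues`) and the asset names are hypothetical, not part of any particular tool. It flags every entry that has no owner or has known issues.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Asset:
    """One entry in the stack inventory (all field names are illustrative)."""
    name: str
    kind: str                        # "source", "pipeline", "storage", or "bi_tool"
    owner: Optional[str] = None      # None means nobody has claimed it yet
    runs_per_week: int = 0           # rough usage frequency
    known_issues: List[str] = field(default_factory=list)

def needs_attention(assets):
    """Return names of assets that lack an owner or have known issues."""
    return [a.name for a in assets if a.owner is None or a.known_issues]

# Hypothetical inventory entries, made up for this example.
inventory = [
    Asset("orders_db", "source", owner="payments-team", runs_per_week=168),
    Asset("nightly_orders_etl", "pipeline", owner=None, runs_per_week=7),
    Asset("sales_dashboard", "bi_tool", owner="analytics", runs_per_week=40,
          known_issues=["stale on Mondays"]),
]

flagged = needs_attention(inventory)
print(flagged)
```

A spreadsheet works just as well at small scale; the point is that every item has the same three fields filled in before migration planning starts.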

2. Identify Your Critical Pipelines

Focus first on the pipelines that carry:

- Data that impacts the revenue of your business

- Customer-facing analytics that must be accurate

- Compliance data that is collected continually and must be accurate

3. Map the Dependencies of Your Pipelines

Determine:

- Which pipelines depend on other pipelines

- Which pipelines feed which dashboards

Without this map, you risk accidentally breaking critical pipelines during migration.
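
A dependency map can be as simple as a dictionary. The sketch below assumes a hypothetical set of pipelines and dashboards; it uses Python's standard `graphlib.TopologicalSorter` to produce an upstream-first ordering and a small helper (`downstream_of`, a name invented here) to list everything affected when one pipeline changes.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each key depends on the items in its set.
depends_on = {
    "raw_orders": set(),
    "clean_orders": {"raw_orders"},
    "revenue_daily": {"clean_orders"},
    "revenue_dashboard": {"revenue_daily"},
    "ops_dashboard": {"clean_orders"},
}

def downstream_of(node, depends_on):
    """Return everything that directly or indirectly consumes `node`."""
    affected, frontier = set(), [node]
    while frontier:
        current = frontier.pop()
        for name, upstreams in depends_on.items():
            if current in upstreams and name not in affected:
                affected.add(name)
                frontier.append(name)
    return affected

# Upstream-first order: every item appears after the things it depends on.
migration_order = list(TopologicalSorter(depends_on).static_order())

impacted = downstream_of("clean_orders", depends_on)
print(sorted(impacted))
print(migration_order)
```

Running `downstream_of` on a pipeline before touching it gives a concrete list of dashboards and owners to notify.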

4. Identify Bottlenecks in Your Pipelines

Typical bottlenecks include:

- Slow pipelines due to database performance

- Frequent pipeline failures due to poor data quality or accuracy

- Manual processes for producing reports

5. Review the SLAs of the Current System

Questions to Consider:

- Does the current system provide the reliability that was originally planned for?

- How often do failures occur?

- How much time does it take to recover from failures and have the data available again?
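
If you keep even a basic incident log, the last two questions can be answered with a few lines of arithmetic. This is a minimal sketch, assuming a hypothetical log of `(pipeline, failed_at, recovered_at)` tuples; the pipeline names and timestamps are made up.

```python
from datetime import datetime, timedelta

# Hypothetical incident log: (pipeline, failed_at, recovered_at).
incidents = [
    ("orders_etl", datetime(2026, 1, 3, 2, 13), datetime(2026, 1, 3, 4, 13)),
    ("orders_etl", datetime(2026, 1, 17, 9, 0), datetime(2026, 1, 17, 9, 30)),
    ("billing_etl", datetime(2026, 1, 20, 14, 0), datetime(2026, 1, 20, 15, 0)),
]

def reliability_summary(incidents, pipeline):
    """Return (failure_count, mean_time_to_recover) for one pipeline."""
    durations = [end - start for name, start, end in incidents if name == pipeline]
    if not durations:
        return 0, timedelta(0)
    mean = sum(durations, timedelta(0)) / len(durations)
    return len(durations), mean

count, mttr = reliability_summary(incidents, "orders_etl")
print(count, mttr)
```

Comparing these numbers against the SLA each pipeline was supposed to meet shows where the current system falls short.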

6. Develop a Prioritized Migration Plan

When developing the prioritized migration plan, split it into two groups:

Quick Win Migration

These are the pipelines that carry little to no migration risk.

For example:

- Move your isolated systems to the new cloud-based infrastructure

High-Risk Migration

These are the pipelines behind the company's core data model, shared datasets, and output reports.

Now you will have:

- A clear map of the systems

- A priority plan for your migration projects

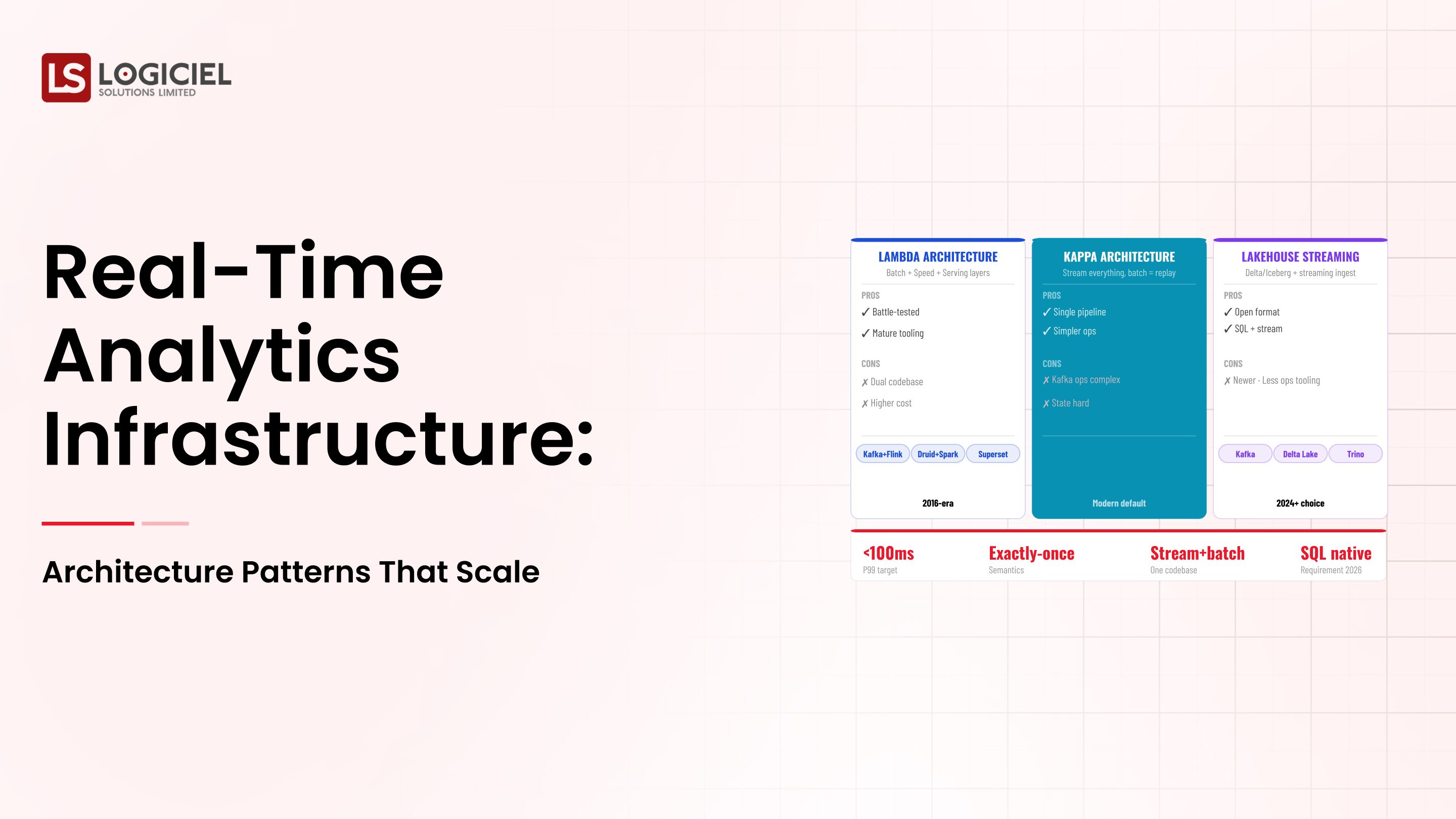

Section 4: Phase 2: Determine Your Target Architecture

Define the target architecture of your new data infrastructure.

1. Define Architectural Principles

A modern, cloud-based data infrastructure follows these architectural principles:

- Modular

- Scalable

- Observable

- Contract-driven

2. Evaluate Architectural Components Carefully

Avoid re-creating the existing components of your current infrastructure.

Evaluate these criteria:

- Scalability

- Cost

- Integration

- The team's expertise with each component

Consider these primary components that comprise a data platform:

- Ingestion (batch and streaming)

- Storage (traditional data warehouse and lakehouse)

- Processing

- Orchestration

- Observability

3. Design for Observability First

To design an observable data pipeline, you'll want to build in features such as:

- Pipeline monitoring

- Tracking data freshness

- Alerting when errors occur

- Tracking lineage

These components of observability are critical to reducing the risk of migration.
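
As one concrete example, a data freshness check compares the time of the last successful load against an allowed maximum age. The sketch below is illustrative only: the table name, the 10-minute threshold, and the `is_stale` helper are assumptions, and a real setup would send the alert to whatever paging or chat tool you already use.

```python
from datetime import datetime, timedelta, timezone

def is_stale(last_loaded_at, max_age, now=None):
    """Return True when the newest load is older than the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at > max_age

# Hypothetical timestamps and threshold, chosen only for illustration.
now = datetime(2026, 3, 1, 12, 0, tzinfo=timezone.utc)
last_load = datetime(2026, 3, 1, 11, 40, tzinfo=timezone.utc)

if is_stale(last_load, max_age=timedelta(minutes=10), now=now):
    alert = "orders_clean is stale: last load was 20 minutes ago"
else:
    alert = None
print(alert)
```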

4. Plan For Data Model Changes

Modernizing your data platform will likely involve the following changes:

- Redesigning your schema

- Increasing the level of normalization for your data

- Improving the way you aggregate your data

5. Define Data Contracts

Data contracts between your team and your data provider(s) define what your team will receive, including:

- Schema stability

- Versioning

- Communication around changes
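
A contract is easiest to enforce when it can be checked automatically. The sketch below assumes a deliberately simple compatibility rule that is not a standard: removing a column or changing its type is breaking, while adding a column is not. The schemas and the `breaking_changes` helper are hypothetical.

```python
def breaking_changes(old_schema, new_schema):
    """List changes that would break consumers of the old schema.

    Assumed rule: removing a column or changing its type is breaking;
    adding a new column is not.
    """
    problems = []
    for column, old_type in old_schema.items():
        if column not in new_schema:
            problems.append(f"removed column: {column}")
        elif new_schema[column] != old_type:
            problems.append(
                f"type change on {column}: {old_type} -> {new_schema[column]}"
            )
    return problems

# Hypothetical contract versions for an orders dataset.
contract_v1 = {"order_id": "string", "amount": "decimal", "created_at": "timestamp"}
proposed_v2 = {"order_id": "string", "amount": "float", "channel": "string"}

issues = breaking_changes(contract_v1, proposed_v2)
print(issues)
```

A check like this can run whenever a provider proposes a new schema version, so that a non-empty list prompts a conversation before anything ships.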

6. Document Your Assumptions

Document your assumptions about:

- How much you anticipate your data will grow

- How long it takes to process your data

- How much capacity your team has

You should review these assumptions regularly.

Section 5: Phase 3: Build, Test, and Roll Out Incrementally

How you execute the migration will ultimately determine whether you are successful.

1. Start Small

Choose the following items to prove out your approach:

- One domain

- One non-critical pipeline

2. Run Parallel Systems

Keep the old system running while you validate the new system's output against it.

This will ensure:

- There is no loss of data

- You can roll back easily if necessary
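
During a parallel run, the comparison itself can be automated. This is a minimal sketch, assuming both systems can export the same result set as rows with a shared key; the `compare_outputs` helper and the sample rows are hypothetical.

```python
def compare_outputs(old_rows, new_rows, key):
    """Compare two result sets keyed by `key`.

    Returns keys missing from the new system, keys that only the new
    system produced, and keys whose rows differ.
    """
    old_by_key = {row[key]: row for row in old_rows}
    new_by_key = {row[key]: row for row in new_rows}
    missing = sorted(old_by_key.keys() - new_by_key.keys())
    extra = sorted(new_by_key.keys() - old_by_key.keys())
    changed = sorted(
        k for k in old_by_key.keys() & new_by_key.keys()
        if old_by_key[k] != new_by_key[k]
    )
    return {"missing": missing, "extra": extra, "changed": changed}

# Hypothetical outputs from the legacy and new systems.
legacy = [
    {"order_id": 1, "total": 100},
    {"order_id": 2, "total": 250},
    {"order_id": 3, "total": 75},
]
modern = [
    {"order_id": 1, "total": 100},
    {"order_id": 2, "total": 255},
]

report = compare_outputs(legacy, modern, key="order_id")
print(report)
```

An empty report across several consecutive runs is a reasonable signal that a pipeline is ready for cutover; any non-empty entry points to the exact rows to investigate.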

3. Build Automated Tests

Automate the following tests prior to rollout:

- Validate schema

- Check the quality of the data

- Validate data transformations
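
The three kinds of tests can start very small. The sketch below uses plain Python and `assert` statements; the helper names, the `daily_revenue` transformation, and the sample rows are all hypothetical stand-ins for your own pipeline logic.

```python
def check_schema(rows, required_columns):
    """Return the set of required columns missing from any row."""
    missing = set()
    for row in rows:
        missing |= required_columns - set(row)
    return missing

def check_quality(rows, key):
    """Return a list of data quality problems: null or duplicate keys."""
    problems, seen = [], set()
    for row in rows:
        value = row.get(key)
        if value is None:
            problems.append("null key")
        elif value in seen:
            problems.append(f"duplicate key: {value}")
        else:
            seen.add(value)
    return problems

def daily_revenue(rows):
    """Example transformation: sum `amount` per `day`."""
    totals = {}
    for row in rows:
        totals[row["day"]] = totals.get(row["day"], 0) + row["amount"]
    return totals

# Hypothetical sample data for the tests.
sample = [
    {"order_id": 1, "day": "2026-01-01", "amount": 100},
    {"order_id": 2, "day": "2026-01-01", "amount": 50},
    {"order_id": 3, "day": "2026-01-02", "amount": 75},
]

assert check_schema(sample, {"order_id", "day", "amount"}) == set()
assert check_quality(sample, key="order_id") == []
assert daily_revenue(sample) == {"2026-01-01": 150, "2026-01-02": 75}
```

In practice these checks would live in your test runner and execute on every change, before any pipeline is promoted.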

4. Instrument Everything

Track:

- Latency

- Error rates

- Data freshness
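
For latency and errors, one low-effort approach is to wrap each pipeline step in a decorator that records both. This sketch keeps metrics in an in-memory dictionary purely for illustration; the `instrumented` decorator and the `load_orders` step are hypothetical, and a real setup would forward these numbers to a monitoring system.

```python
import time
from collections import defaultdict
from functools import wraps

# In-memory metrics store, used here only for illustration.
metrics = defaultdict(lambda: {"runs": 0, "errors": 0, "total_seconds": 0.0})

def instrumented(step_name):
    """Record run count, error count, and latency for a pipeline step."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return func(*args, **kwargs)
            except Exception:
                metrics[step_name]["errors"] += 1
                raise
            finally:
                metrics[step_name]["runs"] += 1
                metrics[step_name]["total_seconds"] += time.perf_counter() - start
        return wrapper
    return decorator

@instrumented("load_orders")
def load_orders(rows):
    if not rows:
        raise ValueError("no rows to load")
    return len(rows)

load_orders([{"order_id": 1}])
try:
    load_orders([])
except ValueError:
    pass  # the failure is still counted in metrics

print(dict(metrics["load_orders"]))
```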

5. Validate with Stakeholders

Before making the switch, use the following process to validate with stakeholders:

- Compare the output of the old system to that of the new system

- Have stakeholders approve the new system's output

6. Scale Gradually

After validation, begin expanding to other pipelines and refining the process.

Key Insight

Remember that migration is not a switch; it is a controlled transition.

Section 6: Measuring Success and Iteration

Measurement is critical once you are live.

1. Define SLOs

Define the SLOs you will hold your system to:

- Uptime

- Data freshness

- Data accuracy

Example:

- 99.9% uptime

- Less than 10 minutes of latency
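
It helps to translate an uptime percentage into an actual time budget. The sketch below assumes a 30-day measurement window, which is a choice made for this example rather than something the SLO itself dictates; under that assumption, a 99.9% target allows roughly 43 minutes of downtime.

```python
from datetime import timedelta

def downtime_budget(slo_percent, window):
    """Return the downtime a given uptime SLO allows over a window."""
    return window * (1 - slo_percent / 100)

def uptime_percent(downtime, window):
    """Return observed uptime as a percentage of the window."""
    return 100 * (1 - downtime / window)

window = timedelta(days=30)                      # assumed measurement window
budget = downtime_budget(99.9, window)           # allowed downtime
observed = uptime_percent(timedelta(minutes=30), window)  # hypothetical outage total
print(budget, round(observed, 3))
```

Tracking how much of the budget has been spent makes it easier to decide when to pause new migrations and focus on stability.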

2. Build Transparent Dashboards

Show:

- Health of the data pipelines

- Availability of the data

- Incidents

3. Track Adoption

Track:

- Number of users of the new system

- Amount of reliance on the legacy system

- Feedback from stakeholders

4. Run Retrospectives

Run retrospectives:

- Weekly during the first 3 months for engineering

- Monthly for stakeholders

5. Monitor Leading Indicators

Monitor:

- Incident frequency

- SLA adherence

- Performance improvement

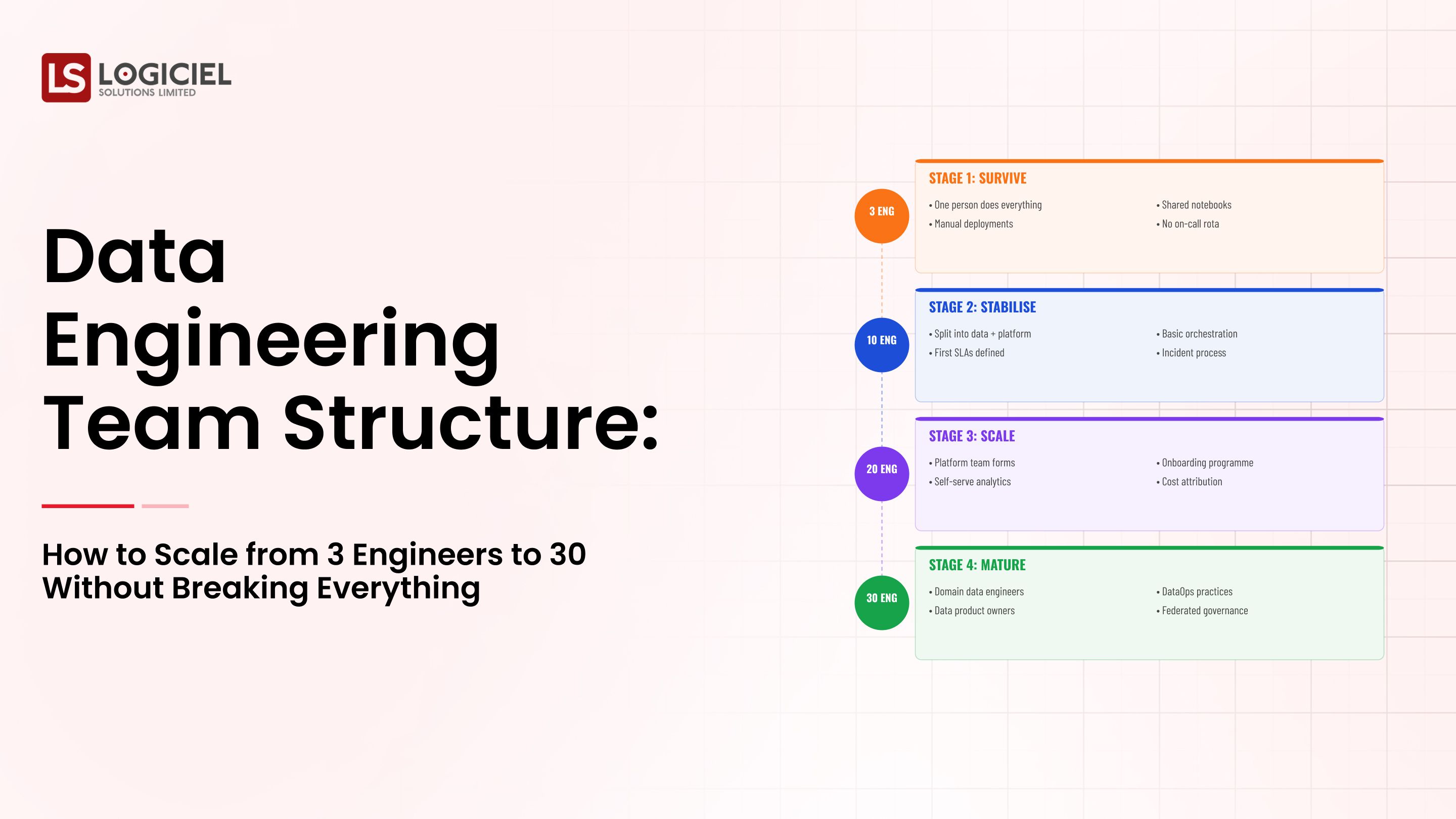

Logiciel's POV

Modernizing your data platform is not about adopting new tools.

It is about building a system that meets the needs of your growing business and supports AI and real-time use cases while maintaining trust among teams.

Execution is what will separate success from failure.

At Logiciel Solutions, we help enterprise teams design and migrate to a modern data infrastructure with minimal disruption and maximal impact.

If your current system is making you less productive, it is time to modernize your data platform, and we can help you create a plan to do it.

Explore how Logiciel's AI-first engineering teams can assist in migrating and scaling your data platform with confidence.

Frequently Asked Questions

What is data platform modernization?

Data platform modernization refers to migrating legacy systems to a scalable, reliable, and AI-ready infrastructure. The process typically consists of:

- Migrating pipelines

- Redesigning architectures

- Improving the quality and observability of the data

How long does a data migration take?

The initial migration phase typically takes about 8-12 weeks for a single domain. Full modernization typically takes many months and is completed iteratively.

What is the biggest risk in data modernization?

The biggest risk in a data modernization project is breaking existing systems or losing the trust of your stakeholders. This is usually due to insufficient planning, testing, and stakeholder alignment.

Should you migrate all of your data at once?

No. The safest method is to migrate incrementally, running parallel systems that validate outputs to minimize risk.