An additional pipeline has failed. Not due to some difficult scenario, but due to something very fundamental, like the omission of a resource dependency, a schema incompatibility, or forgetting to run an application.

Your team doesn't develop systems; they maintain them.

This is where data infrastructure automation becomes important.

If you are a data engineer or an engineering lead, this article will help you...

- Identify how Python fits within contemporary data infrastructure automation

- Discover efficient tools and patterns deployed by producing teams

- Implement processes that reduce manual labour and offer increased reliability

Let's begin by explaining what it is to use automation to manage data infrastructure.

AI Velocity Blueprint

Measure and multiply engineering velocity using AI-powered diagnostics and sprint-aligned teams.

What is data infrastructure automation? Definition in everyday language.

Data infrastructure automation refers primarily to the use of code to control, manage, monitor and optimise data systems without any manual intervention.

Simple analogy

Automation is the equivalent of driving everything using automatic transmission versus manually driving everything using a stick shift.

What data infrastructure automation encompasses:

- Pipeline execution

- Data validation

- Monitoring and alerting

- Infrastructure provisioning

Why use Python?

Python is widely used due to its:

- Ease of use

- Flexibility

- Large ecosystem

Major issues solved by data infrastructure automation:

When automation does not exist:

- Systems do not function properly

- The number of errors increases

- Engineering time is wasted

Conclusion:

Automating the data infrastructure reduces the total amount of manual labour needed to maintain a system as well as increases the reliability of a system.

How Data Infrastructure Automation Will Make All the Difference by 2026

A) Data systems are quickly becoming more complicated

Today's systems include the following:

- Batch pipelines

- Streaming workflows

- Multiple different tools

Because of this, manual management has simply gotten too big for most companies to handle anymore.

B) AI workloads have to be reliable

AI systems rely on the following:

- Consistent data

- Reliable pipelines

- Constant updates to take place in order for proper functioning

C) Engineering time costs too much

If there is no automation:

- Engineers will spend too much time doing maintenance

- Engineers will have less productive time working on things other than maintenance

D) Failures are more impactful now

When an engineer fails:

- The company receives bad information

- The company will make more bad decisions

- The company loses more money

Manual vs Automated Systems

| Manual Systems | Automated Systems |

|---|---|

| Many errors | Reliable pipeline working efficiently |

| Amount of resources utilized will be extremely limited | Resources greatly reduced or not needed |

| Time it takes to develop a product will take much longer | Development takes a fraction of the time |

Conclusion:

Automation is a must in order for your data systems to be growth-oriented.

A Basic Understanding of What the Parts of Data Infrastructure Automation Are (and therefore will be built with)

Each building block/part of data automation is broken down as follows:

1) Pipeline Automation

Will automate the following:

- Ingesting data into systems

- Transforming data in systems

- Scheduling of when this data flows into/in through the system

2) Infrastructure Automation

Code via computer programs:

- Provision resources for any process (or build an infrastructure for your system)

- Manage environments in which these resources will be provisioned

3) Data Quality Automation

The following will be automated through code:

- Data Validation checks

- Data Schema enforcement

4) Monitoring and Alerting

The following processes will be monitored/become automatic through computer programming:

- Process failures

- Process performance

- Process anomalies

5) Deployment Automation

The following will be set up to automatically use continuous integration and/or continuous deployment of data systems:

- Data/old versions of data flows into/in through to/from the system

- The old data flows into/in through to/from the systems

What is it like when all of these components are run in concert with one another?

- Pipelines run seamlessly

- Systems monitoring their performance

- System will alert other systems if there are issues

- Deployments

An Overview of How Data Infrastructure Automation Works in Practice: A Real World Example Using a SaaS Data Platform

Your system’s activities:

- User events

- Transaction records

- Analytical data

1st Step: Automated Data Ingestion

Automated pulling of data via Python scripts through:

- Application Programming Interfaces (APIs)

- Data stores, such as:

2nd Step: Automated Data Processing

Data processing happens in Pipelines:

- Data cleaning

- Dataset transformation

3rd Step: Automated Data Validation

Automated validation of:

- Data quality

- Schema conformity/consistency

4th Step: Automated Data Monitoring

Automated monitoring of:

- Pipeline health

- Performance metrics

5th Step: Automated Deployment

Changes to the data pipeline are:

- Tested and validated

- Deployed to production

Where It Works

Automated systems with reliable pipelines and less manual effort are effective.

How It Does Not Work

Automated systems suffer from poor automation design and a lack of observability.

General Takeaway:

Automation will help ensure reliability if it is implemented correctly.

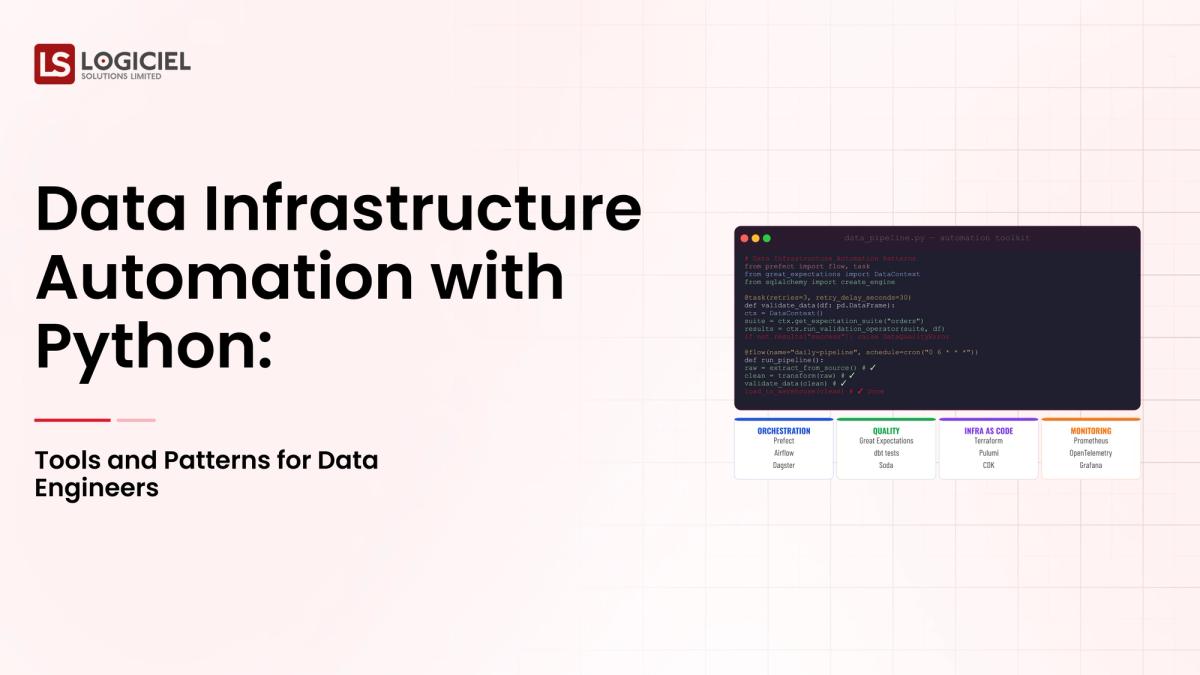

Python Tools for Data Infrastructure Automation

1. Airflow (Workflow Orchestration)

How to use:

- Schedule Pipelines

- Control Workflows

2. dbt (Data Transformation)

How to use:

- Model Your Data

- Write SQL Transformations

3. Great Expectations (Data Quality)

How to use:

- Validate Your Data

- Automated Testing of Data

4. Terraform (Infrastructure As Code)

How to use:

- Provision Resources

- Manage Infrastructure

5. Custom Python Scripts

How to use:

- Integrate with Existing Systems

- Create Customized Workflows

Why Choose Python for Automation?

- Large quantities of Libraries

- Robust Community

- Easily able to integrate into existing systems

General Takeaway:

Python acts as the glue that holds your data infrastructure together.

Common Automation Patterns Used By High Performing Teams

1. Infrastructure As Code (Iac)

- Use Code To Define Infrastructure

2. Continuous Integration/Continuous Delivery Of Data Pipelines

- Automated Testing & Deployment

3. Data Validation Pipelines

- Validate Quality And Consistency Of Data

4. Event Driven Automation

- Trigger Actions Based On Events Or Changes

5. Observability First Design

- Systems Are Built With Monitoring/Alerts

General Takeaway:

The Pattern is More Important Than The Tools Used.

Common Misstep with Automated Systems

Teams often do too much automation early, causing:

- Complex Processes

- Heavy Maintenance Load

- Increased Monitoring Responsibility

Teams often do not recognize the value of monitoring.

Without monitoring, there is no way for a team to recognize the following:

- Failures go unnoticed

Many teams do not have:

- Retry Mechanisms

- Alerts

Automation must be managed as evolving from the original system design.

The Use of monitorable systems is a key takeaway when designing automated systems that evolve over time.

Best Practices of High-Performing Teams

Start by Automating High Impact Processes

Separate system components so that the responsibilities for each component are clear

Establish Monitoring and Observability to have visibility during system operation

AI-First Engineering

High performers are not limited to using scripting to perform automation.

They utilize AI-driven systems to:

- Anticipate Failures

- Optimize Pipelines

- Minimize Manual Effort

Logiciel Solutions differentiation comes from its ability to provide teams with comprehensive AI-driven automation systems as opposed to fragmented automation solutions.

Therefore, teams must think about automation in terms of a system as opposed to all of the individual operations that are needed to create automated solutions.

The Conclusion - The Future of Data Infrastructure Automation

For Modern Engineering Teams, Automation of Data Infrastructure is Critical.

The three most important thoughts to remember regarding Automation are:

- Automating will reduce the time associated with manual operations because of improved reliability

- Python is a flexible and scalable language for building automated solutions

- The success of Automation depends greatly on the Patterns of behavior and Design of the systems that will support Automation

Automation is now a necessity, not an option.

If implemented properly, the use of Automation will result in:

- Faster delivery of products

- Higher reliability of products

- Lower operating costs

- Higher Productivity of Engineers

Evaluation Differnitator Framework

Why great CTOs don’t just build they evaluate. Use this framework to spot bottlenecks and benchmark performance.

What will you do next?

If your team is spending too many hours managing your Pipeline, it is time for you to begin using Automation.

Further information can be found at the following links:

- Data Infrastructure Design and how to Build Data Infrastructures that will scale and be Reliable

- Why your Data Infrastructure is continuously "Breaking"

- A list of Data Infrastructure Proof of Concept Projects

At Logiciel Solutions, our goal is to assist teams in building AI-First Data Systems that will both Automate their operations and Improve their Reliability.

Frequently Asked Questions

What is Automation?

Automation refers to the use of Application Programming Interfaces (API's) to automate the management and optimization of Data Systems without the need for Human Intervention.

Why Use Python?

Python is a flexible and scalable language that is widely used in Data Engineering and has many libraries supporting Data Engineering activities.

What Tools Can Be Used for Automation?

Tools such as Airflow, DBT, Great Expectations, and Terraform are commonly used.

What are the Primary Benefits of Automation?

Reduce Manual Effort and Improve Reliability.

What are the Primary Mistakes made with Automation?

Automation will over-invest in automation without providing sufficient Design or Monitoring for the systems automating.