Everything about the demo seemed flawless.

The dashboards were efficient, the user interface appeared crisp, and the sales team answered every question with unwavering confidence.

Fast-forward six months, and your team is drowning.

Pipelines are harder to debug than anticipated, costs are higher than planned, performance is inconsistent, and switching vendors now looks even more expensive than staying with the current one.

This tells me that when organizations evaluate data infrastructure vendors, they often fail to evaluate them properly and, as a result, fail to select the right vendor.

If you are the CTO, VP of Engineering, or Head of Data for your organization, this guide answers three essential questions about the vendor evaluation process:

- How can I avoid the common errors made in selecting a vendor?

- What are the correct questions to ask while evaluating a data infrastructure vendor?

- How do I build a structured framework that reduces long-term risk?

Now let's examine why evaluations fail.

Why Evaluations Fail

1) Relying too heavily on demos

Demos show you the best-case scenario:

- The perfect use of the product

- A pre-configured environment

They provide a limited view of:

- Failure handling

- Edge cases

- Real world complexity

2) Decision making based on features

Organizations typically compare:

- Feature lists

- User-interface capabilities

Organizations fail to compare:

- Long-term impact

- Long-term scalability

3) Ignoring the operational complexity of the tools

Most tools work well until you run them at a larger scale than you do today.

4) Lack of structure when evaluating vendors

Without a framework, your decision will be subjective and you will overlook other important considerations.

What does success look like?

A strong vendor evaluation will include:

- Testing of real workloads

- Evaluation of long term impact

- Involvement of all stakeholders

In Conclusion:

Evaluating a vendor on features without evaluating outcomes leads to the wrong vendor selection.

What Are the 5 Most Important Categories of Evaluation?

1. Performance/Scalability

Ask Yourself:

- How well will the system perform with many concurrent users and at its maximum user load?

- What are the latency benchmarks?

- What happens during a peak usage period?

2. Reliability/Observability

Ask Yourself:

- How can I determine whether a failure has occurred?

- What monitoring tools do I have access to?

- How difficult is debugging?

3. Cost/Pricing Model

Ask Yourself:

- What will it cost me to own this system?

- Are there additional costs?

- As I grow my usage, what will the costs be?

4. Integration/Ecosystem Fit

Ask Yourself:

- How well does the system fit into my current stack?

- How flexible are the available APIs?

- What are the dependencies I would gain when using this system?

5. Support/Vendor Stability

Ask Yourself:

- What types of support will be available?

- How quickly will the vendor respond?

- What is the vendor's long-term roadmap?

Final Thoughts:

Together, these categories determine how successful you will be with the system, in both the short term and the long term.

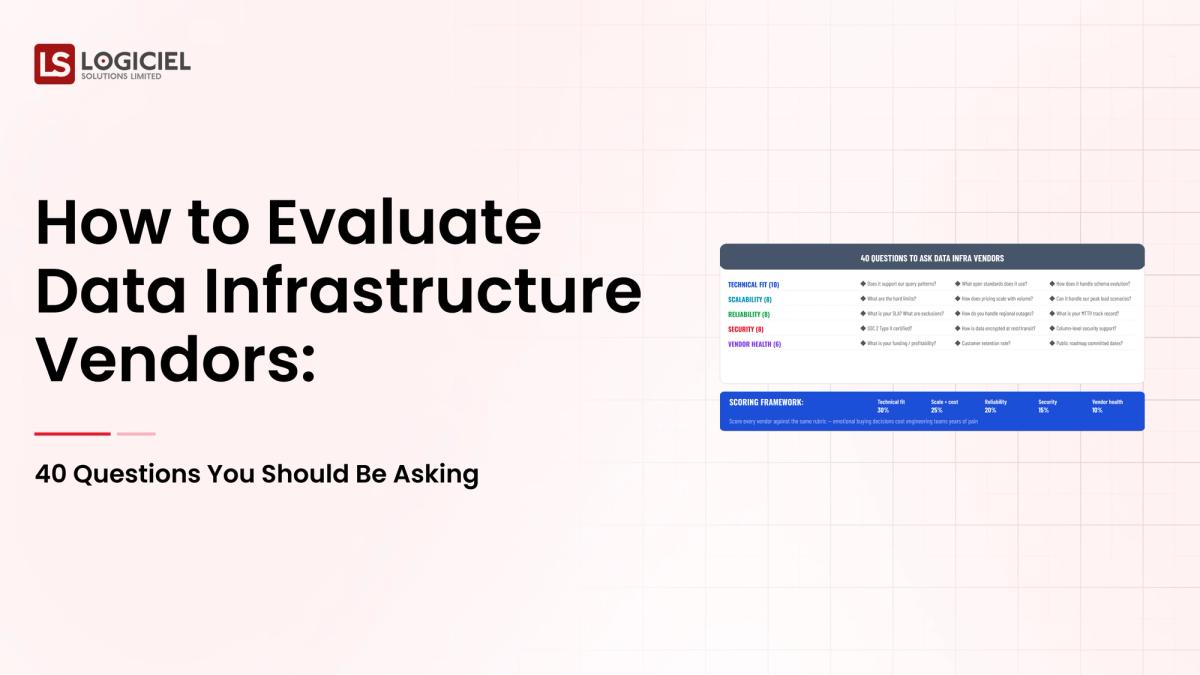

40 Questions to Ask When Evaluating a System (Organized by Category)

1. Performance/Scalability (8 Questions)

- What is the performance of the system when the data size increases?

- What are the latency benchmarks?

- How does the system handle multiple transactions at the same time?

- Is there a limit to how much throughput can occur on the system?

- Can the system scale horizontally?

- What types of performance-related issues exist?

- How will the system deal with large joins or aggregations?

- Do you have any tuning utilities available on the system?
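The latency questions above are easier to compare across vendors when you measure them yourself. The following is a minimal sketch, assuming you can wrap one representative query in a Python callable. `measure_latency` and `placeholder_query` are hypothetical names introduced for illustration, not part of any vendor's API.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latency(run_query: Callable[[], object], runs: int = 50) -> Dict[str, float]:
    """Time repeated calls to run_query and summarize latency in milliseconds."""
    samples: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        run_query()
        samples.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=100) returns 99 cut points: index 49 is p50, 94 is p95, 98 is p99.
    # method="inclusive" interpolates within the observed samples only.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {
        "p50_ms": cuts[49],
        "p95_ms": cuts[94],
        "p99_ms": cuts[98],
        "max_ms": max(samples),
    }


def placeholder_query() -> int:
    # Hypothetical stand-in: replace with a real query against the system under test.
    return sum(range(10_000))


if __name__ == "__main__":
    print(measure_latency(placeholder_query))
```

Running the same harness against each shortlisted vendor, with the same data and the same query, gives you tail latencies (p95, p99) that a demo rarely shows.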

2. Reliability/Observability (8 Questions)

- How will I know if something has failed?

- What are your alerting procedures?

- How easy will it be for me to find the cause of a failure?

- Will I have access to any built-in monitoring tools?

- How do you ensure the quality of my data?

- What logging capabilities are you providing?

- How will you handle retries?

- What is your uptime service level agreement (SLA)?

3. Cost/Pricing (8 Questions)

- What is your pricing model?

- What are the data transfer costs?

- What costs are related to scaling?

- What are the license fees?

- What is the total cost of ownership?
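The cost items above can be combined into a rough total cost of ownership estimate. This is a minimal sketch under simple assumptions: flat license and support fees, and usage-based costs that grow at a fixed annual rate. The `CostInputs` fields and every figure in the usage example are hypothetical, not real vendor pricing.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    license_per_year: float      # flat annual license fee
    support_per_year: float      # flat annual support fee
    monthly_data_tb: float       # data transferred per month today, in TB
    transfer_cost_per_tb: float  # price per TB transferred
    monthly_compute: float       # compute/storage spend per month today
    annual_usage_growth: float   # 0.5 means usage grows 50% per year


def total_cost_of_ownership(c: CostInputs, years: int = 3) -> float:
    """Sum fixed and usage-based costs over the given number of years."""
    total = 0.0
    for year in range(years):
        # Usage-based costs scale with growth; fixed fees are assumed flat.
        scale = (1 + c.annual_usage_growth) ** year
        monthly_usage = c.monthly_data_tb * c.transfer_cost_per_tb + c.monthly_compute
        total += c.license_per_year + c.support_per_year + 12 * scale * monthly_usage
    return total


# Hypothetical figures for illustration only.
example = CostInputs(
    license_per_year=10_000,
    support_per_year=2_000,
    monthly_data_tb=10,
    transfer_cost_per_tb=20,
    monthly_compute=1_000,
    annual_usage_growth=1.0,
)
print(total_cost_of_ownership(example, years=2))
```

Changing `annual_usage_growth` shows quickly how a pricing model that looks cheap at today's usage behaves as usage grows.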

4. Integration & Ecosystem (8 Questions)

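- Which integrations are supported?

- How extensible are the APIs?

- Are open standards supported?

- How easy is migration?

- What dependencies does the system introduce?

- Is the system compatible with our existing tools?

- How extensible is the system itself?

- Are hybrid architectures supported?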

5. Vendor & Support Fit (8 Questions)

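- When is support available?

- What is the response time for issues?

- Is dedicated support offered?

- What is on the product roadmap?

- How frequently is the product updated?

- How financially stable is the vendor?

- Can the vendor provide reference customers?

- What does the onboarding process look like?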

Conclusion:

These questions help you check actual performance against marketing claims.

How to Implement the Framework in Practice

Step 1: Create a Shortlist

Choose 3-4 vendors based on your starting criteria.

Step 2: Run a Structured Evaluation

Use:

- Real Data

- Real Workloads

- Measurable Criteria
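Measurable criteria work best when they are written down before testing starts. The sketch below shows one way to do that, assuming you record proof-of-concept results as a simple mapping of metric names to numbers. The metric names and thresholds in `CRITERIA` are hypothetical examples, not recommended values.

```python
from typing import Dict, List, Tuple

# Hypothetical acceptance thresholds: metric name -> (kind, limit).
# "max" means the measured value must not exceed the limit;
# "min" means the measured value must not fall below it.
CRITERIA: Dict[str, Tuple[str, float]] = {
    "p95_latency_ms": ("max", 500.0),
    "failed_runs_pct": ("max", 1.0),
    "rows_per_second": ("min", 50_000.0),
}


def failed_criteria(
    results: Dict[str, float],
    criteria: Dict[str, Tuple[str, float]] = CRITERIA,
) -> List[str]:
    """Return a description of every criterion the results fail to meet."""
    failures: List[str] = []
    for metric, (kind, limit) in criteria.items():
        value = results.get(metric)
        if value is None:
            failures.append(f"{metric}: not measured")
        elif kind == "max" and value > limit:
            failures.append(f"{metric}: {value} exceeds {limit}")
        elif kind == "min" and value < limit:
            failures.append(f"{metric}: {value} is below {limit}")
    return failures


# Hypothetical results from one vendor's trial run.
print(failed_criteria({"p95_latency_ms": 650.0, "failed_runs_pct": 0.2}))
```

An empty list means the vendor met every threshold. Unmeasured metrics are reported as failures so that gaps in testing stay visible.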

Step 3: Rate All Vendors

Use a rating matrix:

Criteria | Weight | Vendor A | Vendor B
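The rating matrix can be reduced to a single weighted score per vendor. This is a minimal sketch, assuming each category is scored from 1 to 5. The weights and the scores for Vendor A and Vendor B are hypothetical and should be replaced with your own priorities and test results.

```python
from typing import Dict

# Hypothetical weights over the five evaluation categories (they sum to 1.0).
WEIGHTS: Dict[str, float] = {
    "performance": 0.30,
    "reliability": 0.25,
    "cost": 0.20,
    "integration": 0.15,
    "support": 0.10,
}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Return the weighted average of the category scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    # Dividing by the total weight keeps the result on the same 1-5 scale
    # even if the weights do not sum exactly to 1.0.
    total_weight = sum(weights.values())
    return sum(weights[k] * scores[k] for k in weights) / total_weight


# Hypothetical 1-5 scores for two shortlisted vendors.
vendors = {
    "Vendor A": {"performance": 4, "reliability": 3, "cost": 5, "integration": 4, "support": 3},
    "Vendor B": {"performance": 5, "reliability": 4, "cost": 2, "integration": 3, "support": 4},
}

ranking = sorted(vendors, key=lambda name: weighted_score(vendors[name]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(vendors[name]):.2f}")
```

Agreeing on the weights before scoring any vendor is the part that reduces bias. The arithmetic only makes the trade-offs explicit.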

Step 4: Validate with Real Users

Talk to:

- Reference Customers

- Industry Peers

Step 5: Align Stakeholders

Ensure that these groups are aligned:

- Engineering

- Finance

- Leadership

Conclusion:

Use structure to reduce bias and improve the quality of decisions.

Where To Look For Red Flags During Evaluations

1) Vendors Avoiding Discussion Of Failure Scenarios

Treat it as a red flag if a vendor cannot discuss:

- What would happen if their system failed

- How they recover from failure

2) Opaque Pricing

Opaque pricing can create several types of hidden costs:

- Data Transfer Costs

- Scaling Costs

- Support Costs

In addition, a lack of transparency from the vendor can significantly increase the overall burden of operating and debugging the vendor's solution.

3) Ecosystem Lock-In

It is important to assess whether there is any potential for ecosystem lock-in when deciding on the vendor’s solution.

For example:

- Do you have to replace your entire technology stack to implement the vendor's solution?

Vendor warning signs are the things not highlighted in their marketing material.

How Do I Decide?

1. Long-Term Alignment

Scalability, operational complexity and cost should all be considered when making your final decision on a vendor.

2. Balance Trade-Offs

No vendor will have all the features and capabilities that you require, and therefore your selection should be based on your priorities and constraints.

3. Validate with Data

Use real-world data to support your final vendor decision.

Examples:

- Proof of Concept Results

- Performance Metrics

4. Build a Business Case

Include the following:

- Return on Investment (ROI)

- Cost Savings

- Improvements in Efficiency
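The ROI figure in a business case usually comes down to simple arithmetic. Here is a minimal sketch, assuming you can estimate total benefit (cost savings plus efficiency gains) and total cost over the same period. The function names and the figures in the example are hypothetical.

```python
def roi(total_benefit: float, total_cost: float) -> float:
    """Return ROI as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


def payback_months(total_cost: float, monthly_benefit: float) -> float:
    """Return how many months of benefit it takes to recover the cost."""
    if monthly_benefit <= 0:
        raise ValueError("monthly_benefit must be positive")
    return total_cost / monthly_benefit


# Hypothetical figures: 100,000 spent, 150,000 in savings and efficiency gains.
print(f"ROI: {roi(150_000, 100_000):.0%}")
print(f"Payback: {payback_months(100_000, 12_500):.1f} months")
```

Keeping the inputs explicit makes it easier for finance to challenge individual assumptions rather than the conclusion as a whole.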

Where does Logiciel Fit In?

Most vendors focus on providing tools and solutions, whereas Logiciel Solutions delivers outcomes:

- AI-first Engineering Approach

- Less Complexity of Operations

- More Reliable Systems

Logiciel has been a vendor of choice for many of our clients due to:

- Its ability to integrate seamlessly with other systems

- Its automation of optimization and scaling for the end user

When choosing a vendor for your team, consider whether they will be a good fit for your:

- Overall System

- Team (People)

- Goals

In summary

Choosing a data infrastructure vendor is one of the most critical decisions an engineering leader will make throughout the life of their engineering team.

Key Takeaways

- Focus on outcomes over features

- A structured approach helps to minimize risks

- Validate final vendor decisions with real-world testing

Although this may seem complicated, when done right it will result in:

- Better performance of your entire data infrastructure

- Reduced operating cost

- Scalable infrastructure

- Increased productivity of your entire engineering team

Next Steps

Once you have started evaluating vendors, use the structured framework to assess your options, along with these related guides:

- Data Infrastructure Vendor Landscape: Who are the major players in 2026?

- How To Perform a Data Infrastructure Proof of Concept

- How To Justify Data Infrastructure Investment to Your CFO

The Fundamental Role of Logiciel Solutions

At Logiciel Solutions, we help teams design, build, and deploy high performance, reliable, scalable AI-first data infrastructures.

Frequently Asked Questions

How do I evaluate a data infrastructure vendor?

Evaluate a vendor on performance, scalability, cost, integration, and support, using a structured framework.

What is the biggest mistake made when evaluating a vendor?

Focusing on features rather than the operational impact a vendor's solution might have on your entire organization.

How many vendors should I evaluate?

Most engineering teams evaluate three to four vendors, which allows enough depth of analysis for a meaningful comparison.

What is a POC?

A proof of concept (POC) is a test of the vendor's solution to validate that it meets your team's requirements and can deliver the expected results on real-world workloads.

How long does it take to evaluate a vendor?

Vendor evaluation can take anywhere from four to eight weeks depending on the complexity of a vendor's solution.