A board deck argues that the data team should focus on AI readiness. The operations team argues that data quality matters more. The analytics team wants real-time pipelines. The data team is being pulled in three directions, and nobody has framed the conversation for leadership.

This is more than a prioritization gap. It is a failure to define what data engineering is.

A modern data engineering function does pipelines, contracts, observability, and platform engineering as a single discipline aimed at producing trustworthy data at the speed the business needs.

However, many organizations still treat data engineering as a service desk and discover the gap when AI, analytics, or operations need data that does not yet exist.

If you are a VP of Data responsible for building or scaling a data engineering organization, this article aims to:

- Define what data engineering actually is in 2026

- Walk through the layers that make up the modern discipline

- Lay out the operating model that turns data engineering into a platform

To do that, let's start with the basics.

What Is Data Engineering? The Basic Definition

At a high level, data engineering in 2026 is the discipline of building, operating, and governing the systems that produce trustworthy data at the speed the business needs.

To compare:

If software engineering builds products, data engineering builds the rivers that feed those products and the analytics around them. Both require engineering discipline, but the disciplines differ.

Why Is Data Engineering Necessary?

Issues that data engineering addresses:

- Producing trustworthy data for AI, analytics, and operations

- Bounding latency and freshness for real-time use cases

- Building the platform that compounds across data products

How Data Engineering Resolves Them

- Translates business questions into pipeline contracts

- Surfaces data quality and freshness as production metrics

- Establishes the operating model for data systems

Core Components of Data Engineering

- Ingestion pipelines from operational sources

- Storage and modeling (warehouse, lakehouse, lake)

- Transformation and ELT patterns

- Data contracts between producers and consumers

- Observability and quality monitoring

Modern Data Engineering Tools

- Snowflake, BigQuery, Databricks for storage and compute

- dbt, Spark, Flink for transformation

- Airflow, Dagster, Prefect for orchestration

- Monte Carlo, Acceldata, Soda for observability

- Schema registries and contract testing platforms

Tooling has matured significantly; the operating discipline is the differentiator.

Other Core Issues It Solves

- Provides defensible data lineage for audit and regulator review

- Reduces incident severity through observability

- Builds a reusable platform for the next data product

In Summary: Data engineering in 2026 is the discipline that produces trustworthy data at the speed the business needs.

Importance of Data Engineering in 2026

Data engineering matters more in 2026 than ever before. Four reasons.

1. AI demands trustworthy data.

AI models trained or grounded on unreliable data produce unreliable outputs. Data engineering is the foundation.

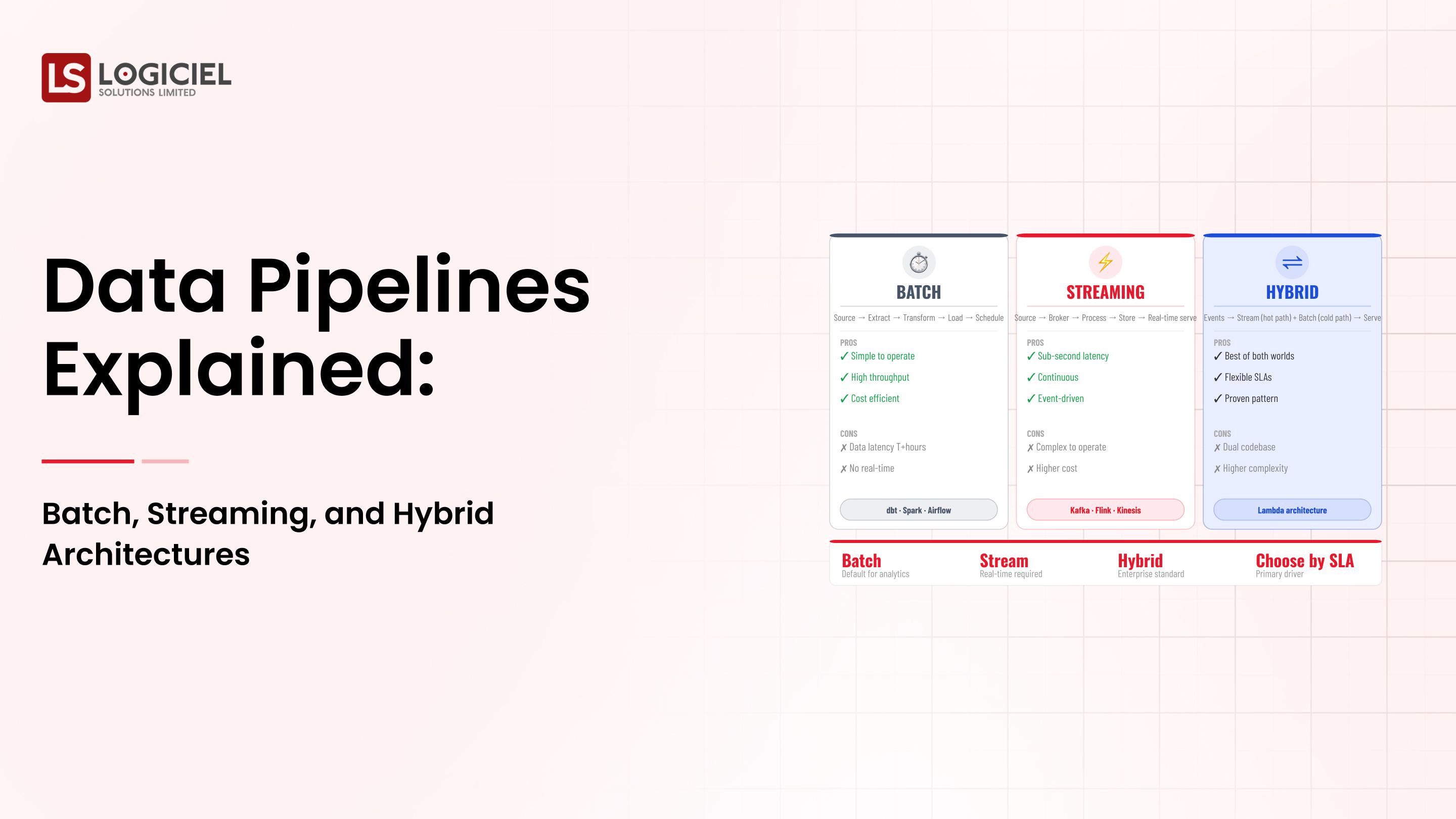

2. Real-time use cases are mainstream.

Streaming pipelines and event-driven architectures have moved from niche to standard. The skill set has expanded.

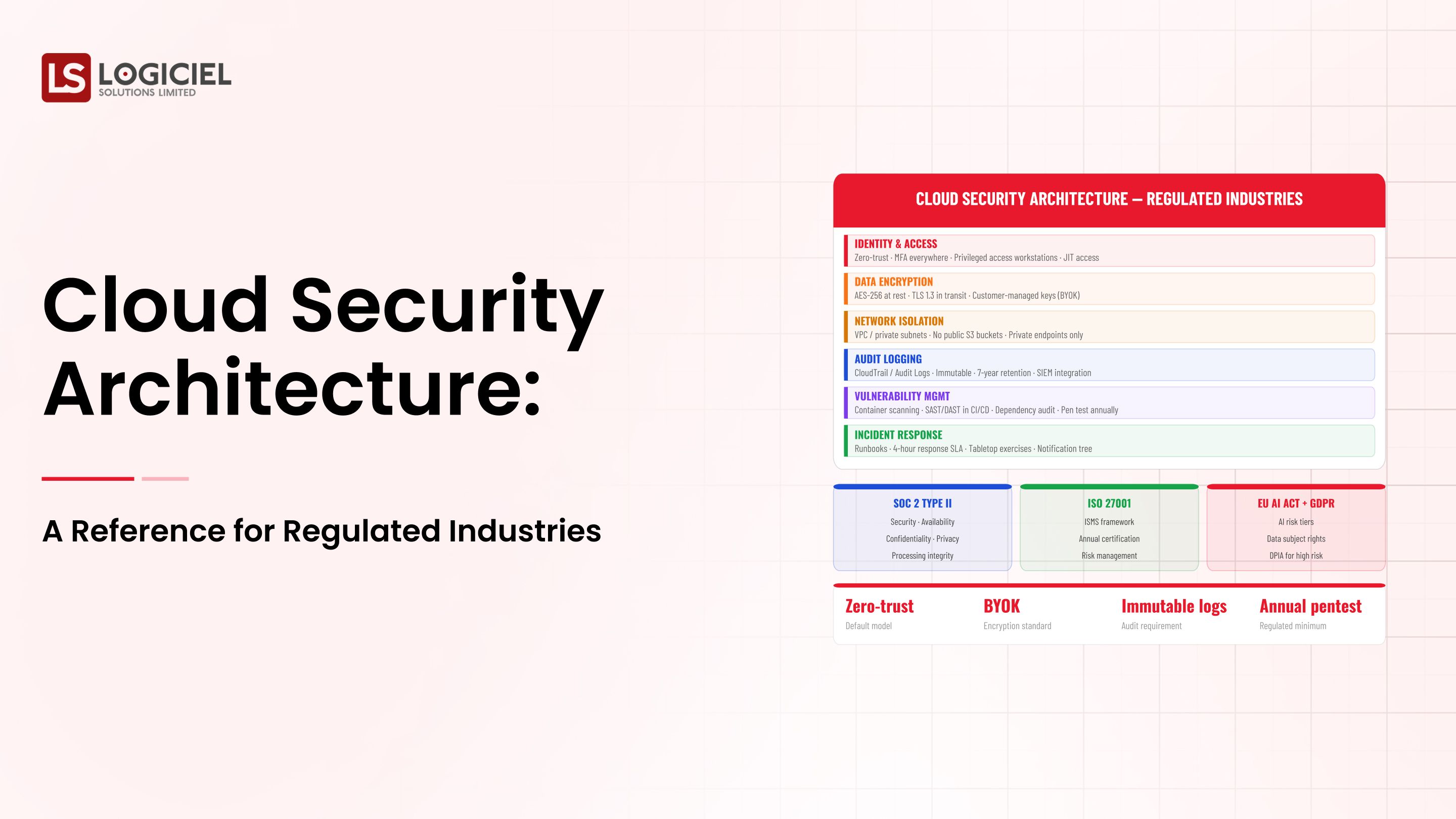

3. Regulatory expectations require lineage.

Audit and regulator reviews now require defensible data lineage. Without engineering discipline, the lineage does not exist.

4. Data products are now treated as products.

The shift from data tickets to data products changes how teams scope, build, and operate data work.

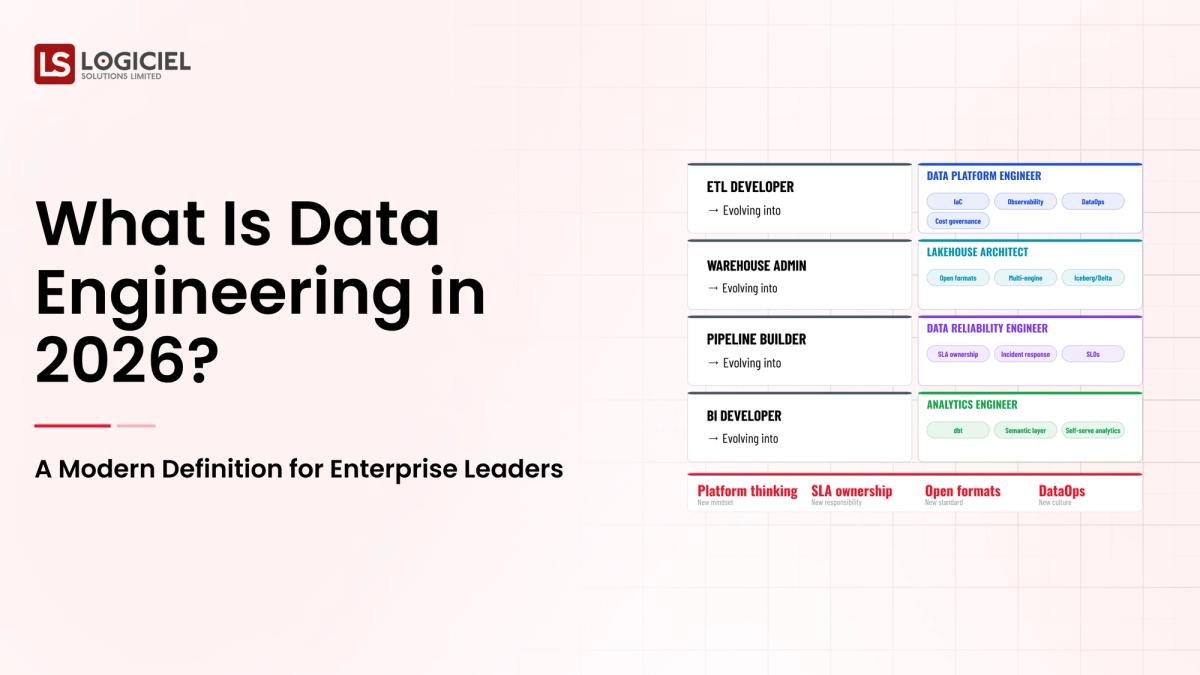

Traditional vs. Modern Data Engineering Concepts

- Service desk for data tickets vs. platform team building data products

- Manual quality checks vs. streaming observability

- Implicit contracts between teams vs. explicit data contracts

- Annual review cadence vs. continuous engineering practices

In summary: Data engineering in 2026 is the discipline that lets AI, analytics, and operations build on data that the business can trust.

Details About the Core Components of Data Engineering: What Are You Designing?

Let's go through each layer.

1. Ingestion Layer

Where data enters the platform.

Ingestion concerns:

- Source connectors and CDC

- Schema validation at ingest

- Latency and freshness budgets per source
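
The ingestion concerns above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `EXPECTED_SCHEMA` mapping, the `orders`-style field names, and the 15-minute freshness budget are all assumptions made up for the example.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical schema for an "orders" source: field name -> required Python type.
EXPECTED_SCHEMA = {"order_id": str, "amount_cents": int, "updated_at": datetime}

# Hypothetical freshness budget for this source.
FRESHNESS_BUDGET = timedelta(minutes=15)


def validate_record(record: dict) -> list[str]:
    """Return a list of schema violations; an empty list means the record passes."""
    errors = []
    for field_name, expected_type in EXPECTED_SCHEMA.items():
        if field_name not in record:
            errors.append(f"missing field: {field_name}")
        elif not isinstance(record[field_name], expected_type):
            actual = type(record[field_name]).__name__
            errors.append(f"wrong type for {field_name}: {actual}")
    return errors


def is_fresh(record: dict, now: datetime) -> bool:
    """True if the record's source timestamp is within the freshness budget."""
    return now - record["updated_at"] <= FRESHNESS_BUDGET


now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
good = {"order_id": "A-1", "amount_cents": 1999, "updated_at": now - timedelta(minutes=5)}
bad = {"order_id": "A-2", "amount_cents": "19.99"}  # wrong type, missing timestamp

print(validate_record(good))  # no violations
print(validate_record(bad))   # two violations
print(is_fresh(good, now))
```

Rejecting or quarantining a record at this point is cheaper than discovering the same problem in a downstream dashboard.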

2. Storage and Modeling Layer

Where data lives and how it is shaped.

Storage concerns:

- Warehouse, lakehouse, lake choices

- Modeling patterns: dimensional, normalized, denormalized

- Partitioning, clustering, and cost optimization

3. Transformation Layer

Where raw data becomes usable.

Transformation concerns:

- ELT patterns with dbt or Spark

- Quality checks on transform output

- Lineage capture across transformations
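
As a rough sketch of the last two concerns, the transform below returns its lineage alongside its rows, and a separate function checks the output before anything is published. The dataset names (`raw.orders`, `marts.daily_revenue`) and the three checks are hypothetical; in practice this logic would usually live in a tool such as dbt or Spark rather than plain Python.

```python
from dataclasses import dataclass, field


@dataclass
class TransformResult:
    """Output rows plus the lineage edges recorded while producing them."""
    rows: list[dict]
    lineage: list[tuple[str, str]] = field(default_factory=list)  # (input, output)


def build_daily_revenue(orders: list[dict]) -> TransformResult:
    """Aggregate raw orders into revenue per day (hypothetical datasets)."""
    totals: dict[str, int] = {}
    for order in orders:
        totals[order["day"]] = totals.get(order["day"], 0) + order["amount_cents"]
    rows = [{"day": day, "revenue_cents": total} for day, total in sorted(totals.items())]
    return TransformResult(rows=rows, lineage=[("raw.orders", "marts.daily_revenue")])


def check_output(result: TransformResult) -> list[str]:
    """Quality checks on the transform output: non-empty, unique key, no negatives."""
    failures = []
    if not result.rows:
        failures.append("output is empty")
    days = [row["day"] for row in result.rows]
    if len(days) != len(set(days)):
        failures.append("duplicate day keys")
    if any(row["revenue_cents"] < 0 for row in result.rows):
        failures.append("negative revenue")
    return failures


orders = [
    {"day": "2026-01-01", "amount_cents": 500},
    {"day": "2026-01-01", "amount_cents": 250},
    {"day": "2026-01-02", "amount_cents": -900},  # bad input that should be caught
]
result = build_daily_revenue(orders)
print(result.lineage)
print(check_output(result))
```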

4. Contracts Layer

Explicit agreements between producers and consumers.

Contract concerns:

- Schema, semantics, freshness commitments

- Versioning and deprecation

- Contract testing in CI/CD
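
One way to make versioning concrete is to treat a contract as a small data structure and compare versions mechanically. The sketch below is an assumption about what such a contract might hold; the `DataContract` class, its fields, and the rules for what counts as breaking are illustrative, not a standard.

```python
from dataclasses import dataclass


@dataclass
class DataContract:
    """A hypothetical contract: schema plus a freshness commitment, under a version."""
    name: str
    version: int
    fields: dict[str, str]       # column name -> logical type
    max_staleness_minutes: int   # freshness commitment to consumers


def breaking_changes(old: DataContract, new: DataContract) -> list[str]:
    """List changes that would break existing consumers.

    Assumed rules: removing a column, changing a column type, or loosening the
    freshness commitment is breaking. Adding a column is not.
    """
    problems = []
    for column, old_type in old.fields.items():
        if column not in new.fields:
            problems.append(f"removed column: {column}")
        elif new.fields[column] != old_type:
            problems.append(f"type change on {column}: {old_type} -> {new.fields[column]}")
    if new.max_staleness_minutes > old.max_staleness_minutes:
        problems.append("freshness commitment loosened")
    return problems


v1 = DataContract("orders", 1, {"order_id": "string", "amount_cents": "int"}, 15)
v2 = DataContract(
    "orders", 2,
    {"order_id": "string", "amount_cents": "decimal", "currency": "string"},
    15,
)
print(breaking_changes(v1, v2))
```

A non-empty result is the signal to open a deprecation path instead of shipping the change in place.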

5. Observability Layer

Knowing what the platform is doing.

Observability concerns:

- Quality and freshness signals

- Pipeline health and latency

- Cost attribution per data product
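
Cost attribution is mostly a tagging and aggregation problem. The sketch below assumes each warehouse query is already logged with a `data_product` tag and a cost in cents; both the log shape and the product names are hypothetical.

```python
from collections import defaultdict


def attribute_cost(query_log: list[dict]) -> dict[str, int]:
    """Sum query cost (in cents) per data product; untagged queries are kept visible."""
    totals: dict[str, int] = defaultdict(int)
    for entry in query_log:
        product = entry.get("data_product") or "unattributed"
        totals[product] += entry["cost_cents"]
    return dict(totals)


# Hypothetical one-day query log.
query_log = [
    {"data_product": "daily_revenue", "cost_cents": 420},
    {"data_product": "churn_features", "cost_cents": 1310},
    {"data_product": "daily_revenue", "cost_cents": 80},
    {"data_product": None, "cost_cents": 250},  # untagged query
]
print(attribute_cost(query_log))
```

Keeping an explicit `unattributed` bucket is a deliberate choice here: it shows how much spend still has no owner.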

Benefits Gained from Contracts and Observability

- Trustworthy data for downstream consumers

- Faster incident detection and recovery

- Reusable platform for the next data product

How It All Works Together

Ingestion captures source data with contracts. Storage and modeling shape it for use. Transformation produces usable datasets. Contracts protect downstream consumers. Observability surfaces what the platform is doing. Together, the layers turn data engineering into a platform that produces trustworthy data at speed.

Common Misconception

Misconception: Data engineering is the team that runs SQL and Airflow.

Reality: Data engineering is platform engineering for data systems. Pipelines are one part of a larger discipline.

Key Takeaway: Each layer requires its own engineering investment. Programs that under-invest in any layer have predictable gaps.

Real-World Data Engineering in Action

Let's take a look at how data engineering operates with a real-world example.

We worked with a data team transitioning from service desk to platform team, with these constraints:

- Multiple downstream teams (analytics, AI, operations)

- Existing pipelines built ad hoc over years

- Limited engineering headcount

Step 1: Inventory the Data Landscape

Sources, pipelines, consumers, contracts (explicit or implicit).

- Source inventory

- Pipeline inventory

- Consumer and use-case mapping
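
The inventory does not need special tooling to be useful. A minimal sketch, assuming the inventory is captured as one record per pipeline (the `Pipeline` class and the example entries are hypothetical), is enough to surface pipelines nobody consumes and pipelines that rely on implicit contracts:

```python
from dataclasses import dataclass, field


@dataclass
class Pipeline:
    """One row of a hypothetical pipeline inventory."""
    name: str
    sources: list[str]
    consumers: list[str] = field(default_factory=list)
    has_explicit_contract: bool = False


def triage(pipelines: list[Pipeline]) -> dict[str, list[str]]:
    """Split the inventory into orphaned pipelines and ones on implicit contracts."""
    orphaned = [p.name for p in pipelines if not p.consumers]
    implicit = [p.name for p in pipelines if p.consumers and not p.has_explicit_contract]
    return {"orphaned": orphaned, "implicit_contract": implicit}


inventory = [
    Pipeline("orders_nightly", ["erp"], ["analytics"], has_explicit_contract=True),
    Pipeline("clickstream_hourly", ["web"], ["ai", "analytics"]),
    Pipeline("legacy_export", ["crm"]),
]
print(triage(inventory))
```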

Step 2: Establish Data Contracts

Explicit producer-consumer agreements with versioning.

- Schema and semantics

- Freshness and quality SLOs

- Contract testing in CI/CD
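
Contract testing in CI/CD can start as an ordinary unit test that runs a sample of producer output against the contract. The sketch below assumes a contract expressed as a plain dictionary with a null-rate SLO; the `CONTRACT` shape and the 1% threshold are illustrative, and a freshness check would be added the same way. A runner such as pytest would pick up the `test_` function, though it also runs as a plain script.

```python
# Hypothetical contract for an "orders" dataset.
CONTRACT = {
    "columns": {"order_id": str, "amount_cents": int},
    "max_null_rate": 0.01,  # quality SLO: at most 1% nulls per column
}


def contract_violations(rows: list[dict], contract: dict) -> list[str]:
    """Check a sample of rows against the contract's types and null-rate SLO."""
    violations = []
    for column, expected_type in contract["columns"].items():
        values = [row.get(column) for row in rows]
        nulls = sum(value is None for value in values)
        if rows and nulls / len(rows) > contract["max_null_rate"]:
            violations.append(f"{column}: null rate {nulls}/{len(rows)} exceeds SLO")
        if any(v is not None and not isinstance(v, expected_type) for v in values):
            violations.append(f"{column}: unexpected type")
    return violations


def test_orders_sample_meets_contract():
    """The check a CI job would run on every producer change."""
    sample = [
        {"order_id": "A-1", "amount_cents": 100},
        {"order_id": "A-2", "amount_cents": 250},
    ]
    assert contract_violations(sample, CONTRACT) == []


test_orders_sample_meets_contract()
```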

Step 3: Build the Observability Layer

Quality, freshness, lineage, cost.

- Quality monitoring per dataset

- Freshness SLOs and alerts

- Lineage capture across transformations
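
Freshness alerts and lineage are most useful together: when a dataset breaches its SLO, lineage tells you who is affected. The following is a sketch under assumed inputs; the `FRESHNESS_SLO` values, the `LINEAGE` graph, and the dataset names are hypothetical.

```python
from collections import deque
from datetime import datetime, timedelta, timezone

# Hypothetical freshness SLOs and lineage edges (upstream -> downstream datasets).
FRESHNESS_SLO = {
    "raw.orders": timedelta(hours=1),
    "marts.daily_revenue": timedelta(hours=24),
}
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["marts.daily_revenue", "features.churn"],
}


def breached(last_loaded: dict[str, datetime], now: datetime) -> list[str]:
    """Datasets whose last successful load is older than their SLO allows."""
    return [name for name, slo in FRESHNESS_SLO.items() if now - last_loaded[name] > slo]


def downstream(dataset: str) -> list[str]:
    """Every dataset reachable from `dataset` in the lineage graph (breadth-first)."""
    seen: list[str] = []
    queue = deque(LINEAGE.get(dataset, []))
    while queue:
        current = queue.popleft()
        if current not in seen:
            seen.append(current)
            queue.extend(LINEAGE.get(current, []))
    return seen


now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
last_loaded = {
    "raw.orders": now - timedelta(hours=3),           # breaches the 1-hour SLO
    "marts.daily_revenue": now - timedelta(hours=2),  # within the 24-hour SLO
}
for name in breached(last_loaded, now):
    print(f"ALERT {name} is stale; affected downstream: {downstream(name)}")
```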

Step 4: Refactor to Platform Patterns

Reusable transformation libraries; templated pipelines.

- Reusable transformation patterns

- Templated pipeline scaffolding

- Self-service onboarding for new sources
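
Templated scaffolding means a new source is onboarded by filling in a small config, not by copying an old pipeline. A minimal sketch, assuming a hypothetical `SourceConfig` and a fixed set of standard steps:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceConfig:
    """The only things a team has to supply to onboard a source (hypothetical)."""
    name: str
    owner: str
    freshness_minutes: int = 60


def scaffold_pipeline(source: SourceConfig) -> dict:
    """Expand a small source config into the standard steps, targets, and SLO."""
    return {
        "pipeline": f"ingest_{source.name}",
        "owner": source.owner,
        "steps": ["extract", "validate_schema", "load", "run_quality_checks"],
        "targets": {"raw": f"raw.{source.name}", "staging": f"staging.{source.name}"},
        "freshness_slo_minutes": source.freshness_minutes,
    }


spec = scaffold_pipeline(SourceConfig(name="invoices", owner="finance-data"))
print(spec["pipeline"], spec["steps"])
```

Because validation and quality checks are part of the template, every new pipeline gets them by default.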

Step 5: Operate as a Platform

Quarterly review cadence; data product roadmap; named owners.

- Quarterly platform review

- Roadmap aligned with consumer needs

- Named owners per data product

Where It Works Well

- Explicit data contracts between producers and consumers

- Streaming observability for quality and freshness

- Platform team treating data as products

Where It Does Not Work Well

- Service desk model for data work

- Implicit contracts between teams

- Annual review cadence

Key Takeaway: The data team that operates as a platform produces trustworthy data faster than the team that operates as a service desk.

Common Pitfalls

i) Service desk model

Service desks deliver tickets; platforms deliver products. The shift is structural, not cosmetic.

- Move to product model

- Define data products

- Roadmap aligned with consumers

ii) Implicit contracts

Implicit contracts break silently. Explicit contracts produce signal.

iii) No observability layer

Without observability, quality issues compound. Build the layer; surface signals.

iv) Quality as an afterthought

Quality designed in is cheaper than quality bolted on. Build it into ingest and transform.

Takeaway from these lessons: Most data engineering struggles are operating-model failures, not tooling failures. Tools are widely available; discipline is the work.

Data Engineering Best Practices: What High-Performing Teams Do Differently

1. Treat data as products

Each data product has owners, consumers, contracts, and a roadmap.

2. Establish explicit contracts

Schema, semantics, freshness commitments. Tested in CI/CD.

3. Build the observability layer

Quality, freshness, lineage, cost. Streaming, not periodic.

4. Refactor to platform patterns

Reusable transformations, templated pipelines, self-service onboarding.

5. Operate as a platform

Quarterly review cadence, named owners, roadmap aligned with consumers.

Logiciel's value add is helping data leaders build data engineering as a platform, with the contracts, observability, and operating model that produce trustworthy data at speed.

Takeaway for High-Performing Teams: High-performing data teams operate as platform organizations with explicit contracts and streaming observability.

Signals You Are Designing Data Engineering Correctly

The board deck won't tell you whether the program is healthy. The team's daily evidence will.

Watch for whether the team can describe failure modes calmly. Programs that have been running long enough have failure modes; the team that talks about them without flinching is the team that's actually been running them.

Watch for cost visibility. Today, can the team tell you yesterday's spend and what changed? If yes, the discipline is real. If no, it's coming.

Watch for whether change feels boring. Routine deploys, routine rollbacks, routine model swaps. Drama in deploys is a sign of an immature system, not an exciting one.

Watch for whether evaluation runs every day. Live dashboard, real numbers, regression alerts. Not a quarterly slide with hand-waved confidence.

Watch for whether the team can quantify vendor lock-in. Rip-and-replace cost in dollars and weeks. Programs that can't answer this haven't done the math, which means the math is going to surprise them later.

Adjacent Capabilities and Connected Work

You can't run this in isolation. There are a handful of other surfaces it touches every week, and ignoring them is how programs lose their second quarter.

The data platform shows up first. Observability is right behind it. The security review process is rarely visible until you need it. Team capacity also splits across platform engineering, applied ML, and SRE; leadership attention splits across whatever the next AI initiative is. Pretending these neighbors don't exist is comfortable for about a month.

The dumbest version of this mistake is "that's their team's problem." It isn't. The data platform integration, the runtime security review, the on-call rotation that wakes up when something breaks: all yours, even if other teams technically own the surface. Treat the neighbors as collaborators with shared timelines, not as dependencies you can route around.

Stakeholder Considerations and Communication

You'll be asked the same questions in different shapes by different people. Worth thinking ahead about each.

Boards want risk, return, and competitive position. CFOs want the unit economics and a number that holds up across sensitivity scenarios. CISOs want the threat model and how you'll defend an audit. Engineering wants the scope, the build/buy split, and the operational load they'll carry. The line of business wants a date and a user experience.

Anticipate these and you save yourself from improvising in the hot seat. A one-page brief per audience, refreshed every quarter, is cheap. The only reason most programs don't have them is that nobody made it someone's job. Make it someone's job.

Cadence is the other half. Weekly updates while you're shipping. Monthly during steady-state. Every incident or material change, no exceptions. Programs that go quiet between releases lose the trust they earned earlier. Decide how often you'll talk to each stakeholder before you start, then keep that promise.

Metrics That Tell You Data Engineering Is Working

The success signals above tell you what good looks like at a moment in time. These are the leading indicators that tell you whether the program is improving across moments.

The first is time from concept to deployment. If a new use case takes nine weeks to ship today and took twelve weeks six months ago, the platform is paying back. If it took six weeks six months ago and takes nine weeks today, something is rotting.

The second is per-unit cost. Each quarter, are you spending less per unit of output, or more? If usage is flat, the answer is mostly about platform efficiency. If usage is growing, the answer is mostly about whether your cost shape held up under scale.

The third is incident severity. New programs have high-severity incidents because the operating model is new. Mature programs have lower-severity incidents because the operating model has absorbed the lessons. If your severity isn't dropping, your operating model isn't learning.

The fourth is reuse. Look at program two and program three. How much of what you built for program one is in them? High reuse means the platform investment is the gift that keeps giving. Low reuse means you're shipping the same thing over and over.

The fifth is sponsor confidence. Indirect, but readable in approved budget and strategic emphasis. If your sponsor is asking for more, you're winning. If they're asking you to slow down or scope down, the trust has shifted.
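
The first, second, and fourth indicators are simple arithmetic once the inputs are tracked. The sketch below uses the lead-time figures from the example above; the spend, unit counts, and component counts are hypothetical placeholders, and "unit" stands for whatever output measure the team has chosen.

```python
def lead_time_trend(weeks_then: float, weeks_now: float) -> str:
    """Compare concept-to-deployment time now against six months ago."""
    if weeks_now < weeks_then:
        return "improving"
    if weeks_now > weeks_then:
        return "regressing"
    return "flat"


def cost_per_unit(spend_usd: float, units: int) -> float:
    """Quarterly spend divided by units of output."""
    return spend_usd / units


def reuse_ratio(shared_components: int, total_components: int) -> float:
    """Share of a new program's components that came from the existing platform."""
    return shared_components / total_components


print(lead_time_trend(weeks_then=12, weeks_now=9))  # the "paying back" case
print(lead_time_trend(weeks_then=6, weeks_now=9))   # the "rotting" case

# Hypothetical quarters: spend grew, but output grew faster.
q1 = cost_per_unit(spend_usd=120_000, units=400)
q2 = cost_per_unit(spend_usd=150_000, units=600)
print(q1, q2, "cheaper per unit" if q2 < q1 else "more expensive per unit")

print(reuse_ratio(shared_components=6, total_components=8))
```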

Conclusion

Data engineering in 2026 is platform engineering for data systems. The layers are well known; the operating model is the work.

Key Takeaways:

- Data engineering is platform engineering, not service desk work

- Five layers: ingestion, storage, transformation, contracts, observability

- Operating model and cadence are the multipliers

When data engineering is run as a platform, the benefits compound:

- Trustworthy data for AI, analytics, and operations

- Faster incident detection and recovery

- Reusable platform for the next data product

- Defensible lineage for audit and regulator review

Call to Action

If your data team is operating as a service desk, the move this quarter is to define data products, establish contracts, and build the observability layer.

Learn More Here:

At Logiciel Solutions, we work with data leaders on the platform transformation: the contracts, observability, and operating model that turn data engineering into a multiplier.

Explore how to modernize your data engineering function.

Frequently Asked Questions

What is data engineering?

The discipline of building, operating, and governing the systems that produce trustworthy data at the speed the business needs.

How is data engineering different from analytics engineering?

Data engineering builds the platform; analytics engineering builds the analytical models on top. Both are engineering disciplines; the boundary is the consumer-facing model layer.

What does the team look like?

Platform engineer, data engineer, analytics engineer, observability engineer, governance partner. Smaller teams compress roles; larger teams add specialists.

How do data contracts work?

Explicit producer-consumer agreements covering schema, semantics, freshness, and quality. Tested in CI/CD; versioned with deprecation paths.

What is the biggest mistake in data engineering?

Operating as a service desk instead of a platform team. The shift is structural, not cosmetic.