There is a debate in the leadership team about whether to adopt data mesh. One side argues it is the future; the other side argues it is overhead. The honest answer is more nuanced and depends on the organization's actual structure.

This is more than an architectural debate. It is a strategy choice that determines how data engineering scales for the next three years.

A modern data mesh implementation is decentralized data product ownership with federated governance, supported by a platform layer that handles the cross-cutting concerns.

Real Estate Marketing Attribution

A single attribution mistake led to a 22% pipeline drop. Here’s how real estate teams fix it with full-funnel visibility.

However, many organizations adopt the label without the structure and discover that data mesh without the underlying organizational shift is data debt with new vocabulary.

If you are a Chief Data Officer and are responsible for building or scaling your data architecture transformation, the intent of this article is:

- Define what data mesh actually is in practice

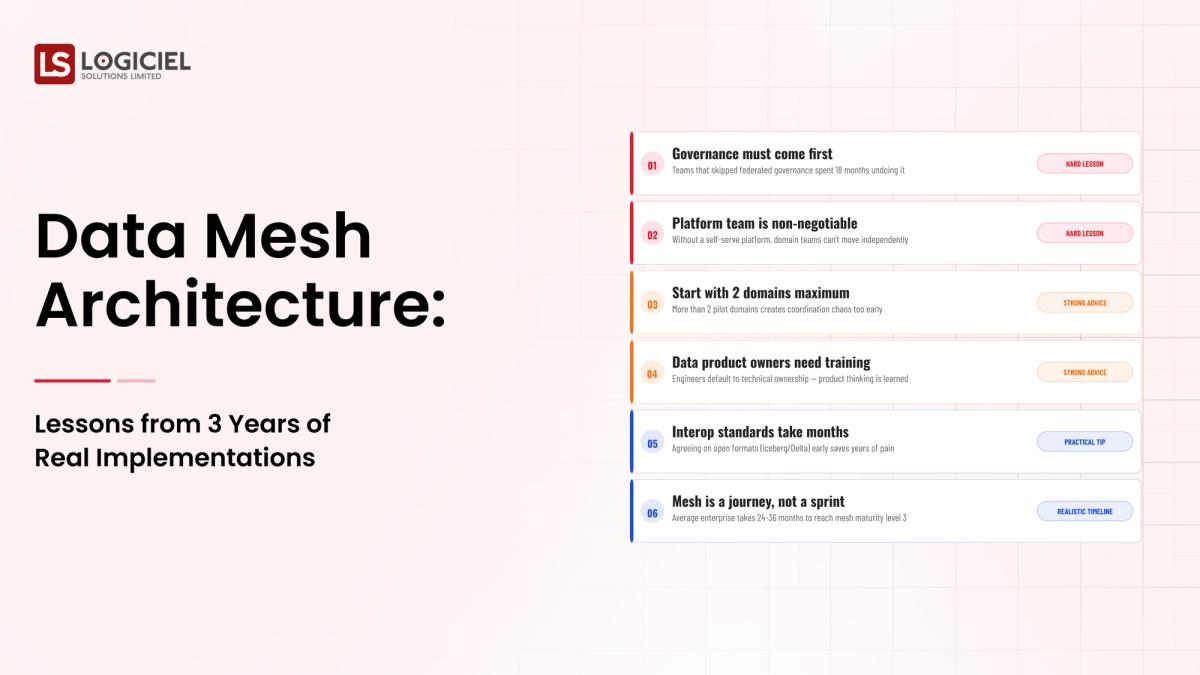

- Walk through lessons from three years of real implementations

- Lay out the decision criteria for whether data mesh fits your organization

To do that, let's start with the basics.

What Is Data Mesh Architecture? The Basic Definition

At a high level, data mesh is decentralized data product ownership with federated governance, supported by a platform layer that handles cross-cutting concerns like ingestion, storage, observability, and contracts.

To compare:

If centralized data platforms are a single shared kitchen, data mesh is multiple kitchens with shared standards. The standards are the prize; the kitchens are the structure.

Why Is Data Mesh Architecture Necessary?

Issues that Data Mesh Architecture addresses or resolves:

- Scaling data engineering past the centralized team bottleneck

- Aligning data ownership with the teams closest to the data

- Building federated governance that does not slow product teams

Resolved Issues by Data Mesh Architecture

- Distributes data ownership without losing standards

- Builds the platform layer that supports decentralized teams

- Establishes federated governance that scales

Core Components of Data Mesh Architecture

- Domain-aligned data products with named owners

- Self-serve platform layer for cross-cutting concerns

- Federated governance with computational policies

- Data product contracts and observability

- Operating model with quarterly platform reviews

Modern Data Mesh Architecture Tools

- Data catalog platforms (Atlan, Collibra) for data product discovery

- Self-serve platforms built on Snowflake, BigQuery, Databricks

- Open Policy Agent for federated governance enforcement

- Schema registries and contract testing frameworks

- Observability platforms (Monte Carlo, Acceldata) for cross-domain monitoring

Tools support the architecture; the organizational shift is the differentiator.

Other Core Issues They Will Solve

- Builds organizational muscle for distributed data work

- Reduces centralized team bottlenecks

- Scales data work to the size of the organization

In Summary: Data mesh is an organizational and technical pattern that distributes data ownership while preserving standards through a platform layer.

Importance of Data Mesh Architecture in 2026

Data mesh matters in 2026 for organizations that have outgrown the centralized data team model. Four reasons.

1. Centralized teams hit scaling limits.

Past a certain organizational size, centralized data teams become bottlenecks. Data mesh distributes the work.

2. Domain context lives in domain teams.

Domain teams know their data better than centralized engineers. Mesh aligns ownership with knowledge.

3. Platform engineering has matured.

Self-serve platforms now support data mesh in ways that were impractical three years ago.

4. Governance can be federated.

Computational policies and federated governance let standards apply across decentralized teams without manual review.

Traditional vs. Modern Data Mesh Architecture Concepts

- Centralized data team vs. distributed data product ownership

- Manual governance review vs. computational policy enforcement

- Generic shared infrastructure vs. self-serve platform layer

- Implicit data product boundaries vs. explicit contracts

In summary: Data mesh is a pattern for organizations large enough to need it; smaller organizations stay centralized.

Details About the Core Components of Data Mesh Architecture: What Are You Designing?

Let's go through each layer.

1. Domain Data Products

Data owned by the domain team that produces or uses it.

Product components:

- Named owner per data product

- Documented contract with consumers

- Roadmap and sunset criteria

2. Self-Serve Platform Layer

The shared infrastructure that domain teams use.

Platform components:

- Ingestion and storage scaffolding

- Observability and quality monitoring

- Self-serve onboarding for new data products

3. Federated Governance

Standards applied across decentralized teams.

Governance components:

- Computational policies enforced at platform layer

- Cross-domain governance committee

- Tabletop exercises for governance scenarios

4. Contracts Layer

Producer-consumer agreements between data products.

Contract components:

- Schema, semantics, freshness, quality

- Versioning and deprecation

- CI/CD testing across producers and consumers

5. Operating Model

Quarterly cadence and platform evolution.

Operating components:

- Quarterly platform review with domain teams

- Roadmap aligned with domain needs

- Sunset criteria for unused data products

Benefits Gained from Self-Serve Platform and Federated Governance

- Distributed data work without losing standards

- Domain context applied where it matters

- Scalable data engineering past centralized team limits

How It All Works Together

Domain teams own data products. The self-serve platform handles cross-cutting concerns. Federated governance applies standards computationally. Contracts protect consumers. The operating model evolves the platform. Together, the layers turn data mesh from concept into operating reality.

Common Misconception

Data mesh is the right answer for every data organization.

Data mesh is the right answer for organizations that have outgrown centralized teams. Smaller organizations should stay centralized.

Key Takeaway: Each layer enables the others. Programs that adopt the label without the structure ship organizational confusion.

Real-World Data Mesh Architecture in Action

Let's take a look at how data mesh architecture operates with a real-world example.

We worked with a Chief Data Officer evaluating data mesh adoption across multiple business units, with these constraints:

- Multiple business units with diverse data needs

- Centralized data team hitting scaling limits

- Federated governance requirements across regions

Step 1: Assess Organizational Readiness

Is the organization large enough? Do domain teams want ownership? Is platform engineering mature enough?

- Organizational size assessment

- Domain team interest and capability

- Platform maturity assessment

Step 2: Build the Platform Layer

Self-serve scaffolding for data products.

- Ingestion and storage scaffolding

- Observability built in

- Self-serve onboarding

Step 3: Define Data Products and Owners

Per-domain data product with named owner and contract.

- Per-product owner

- Per-product contract

- Per-product roadmap

Step 4: Establish Federated Governance

Computational policies; governance committee; tabletop exercises.

- Computational policies at platform

- Governance committee

- Quarterly tabletop

Step 5: Operate as a Platform Org

Quarterly review; roadmap aligned with domains; platform evolution.

- Quarterly platform review

- Domain-aligned roadmap

- Platform evolution roadmap

Where It Works Well

- Data mesh adopted with the underlying organizational shift

- Self-serve platform that domains actually want to use

- Federated governance enforced computationally

Where It Does Not Work Well

- Adopting the label without the structure

- Self-serve platform that domains avoid

- Manual governance review across distributed teams

Key Takeaway: Data mesh works when the organizational shift matches the technical pattern; it fails when one is adopted without the other.

Common Pitfalls

i) Adopting the label without the structure

Calling teams 'data product owners' without giving them ownership produces confusion.

- Real ownership requires real authority

- Real ownership requires real funding

- Real ownership requires real accountability

ii) Self-serve platform that nobody uses

Platforms domains avoid are platforms that produce shadow infrastructure.

iii) Manual governance review

Manual review across distributed teams does not scale. Federate computationally.

iv) Premature data mesh

Smaller organizations should stay centralized. Mesh is for organizations that have outgrown centralized teams.

Takeaway from these lessons: Most data mesh failures are organizational failures, not technical failures.

Data Mesh Architecture Best Practices: What High-Performing Teams Do Differently

1. Assess readiness before adoption

Organizational size, domain team interest, platform maturity.

2. Build the self-serve platform first

Domain teams need a platform they want to use; build it before mandating mesh.

3. Federate governance computationally

Policies enforced at platform layer, not through manual review.

4. Define data products with real ownership

Authority, funding, accountability per product owner.

5. Operate as a platform org

Quarterly review aligned with domains; platform evolution roadmap.

Logiciel's value add is helping CDOs assess data mesh readiness and build the platform and governance layers that make distributed data work.

Takeaway for High-Performing Teams: High-performing organizations adopt data mesh when they have outgrown centralized teams and have the platform maturity to support it.

Signals You Are Designing Data Mesh Architecture Correctly

The signals below distinguish programs that are working from programs that look like they're working. Worth checking yours against the list.

The team describes failure modes without theater. They know the last three things that broke. They know why. They know what changed.

Cost is current. The dashboard shows yesterday's spend, broken out by feature, with someone whose job it is to explain it.

Change is unremarkable. Deploys ship, rollbacks happen, models swap, and nobody panics. Drama in production deploys is a sign that the system isn't yet running like infrastructure.

Eval runs continuously. Daily at minimum. Regression blocks deploy. Quality is a number on a screen, not an opinion in a meeting.

The team has done the lock-in math. The cost of removing each major dependency is documented in dollars and weeks. They didn't wait for the painful renewal to figure that out.

Adjacent Capabilities and Connected Work

Programs like this never run alone. They share infrastructure with the data platform, share alert noise with whatever observability stack the SRE team runs, and share a security review queue with everything else trying to ship that quarter.

They also share team capacity, which is the part that gets lost in planning. Platform engineering, applied ML, and SRE all carry pieces of this work. So does whatever leadership has marked as the next big AI initiative. Naming the overlap on day one prevents a year of "I thought your team had that."

If you take one thing from this section, take this: the integration with the data platform is your problem, not theirs. Same for the security review. Same for the on-call rotation. Treating those as someone else's job pushes work onto teams that didn't plan for it, and it comes back as a delay or an incident. Own what you depend on; partner where it makes sense; share the timeline.

Stakeholder Considerations and Communication

The same program will be evaluated by four or five audiences who don't share vocabulary. Worth getting ahead of.

Board questions: risk, ROI, competitive position. CFO: unit economics, forecast under multiple usage scenarios. CISO: threat model, audit defensibility. Engineering: scope, buy/build, on-call load. Line of business: when value lands, what users experience. None of these questions are unreasonable. They're just easy to fail when you're answering them in real time without prep.

The fix is boring but it works. Build a one-page brief for each major stakeholder. Update quarterly. Have it ready before the meeting where you need it. The cost of writing them is low; the cost of not having them is the meeting where the program loses its sponsor.

The communication cadence question is the same idea, applied to time. Weekly during delivery. Monthly during operation. Every incident, every meaningful change. The teams that protect the cadence keep their stakeholders. The teams that go silent between milestones surprise people, and surprises in this context are rarely good news.

Metrics That Tell You Data Mesh Architecture Is Working

Below the surface signals above are some operational metrics that are worth tracking weekly. They're not the metrics that make it into board decks. They're the ones that tell you, internally, whether the program is on the path or running in place.

Time from idea to production is the most useful single number. New use cases moving faster every quarter is the cleanest sign the platform is paying back. New use cases taking longer than they did six months ago is a sign that something has accreted that nobody is fixing.

Cost per unit of value is next. Spending less per output each quarter is the leading indicator that the platform layer is amortizing. Spending more is the leading indicator that you're carrying complexity nobody has audited.

Incident severity over time should trend downward. Operating models mature; runbooks improve; on-call gets better at triage. Flat severity is fine for a quarter; flat severity for a year says the team has stopped learning from incidents.

Reuse rate across programs is the metric most CTOs forget to track. What fraction of program one is in program two? In program three? High reuse is what compounds. Low reuse is what makes the second program as expensive as the first.

Stakeholder confidence is harder to measure but easier to feel. The proxies: budget approved, scope expanding rather than contracting, sponsor asking for more rather than asking you to defend. None of these are vanity. All of them tell you whether the program has runway.

Conclusion

Data mesh is an organizational and technical pattern. It works when adopted as both; it fails when adopted as one without the other.

Key Takeaways:

- Mesh is for organizations that have outgrown centralized teams

- Self-serve platform plus federated governance is the structure

- Adopting the label without the structure produces confusion

When data mesh is adopted with the underlying organizational shift, the benefits compound:

- Distributed data work without losing standards

- Domain context applied where it matters

- Scalable data engineering past centralized limits

- Federated governance that does not slow domains

Real Estate Identity Resolution

Duplicate records are hiding your best leads. Identity resolution reveals true buyer intent and fixes your pipeline.

Call to Action

If you are evaluating data mesh, the move this quarter is to assess organizational readiness before adopting the pattern.

Learn More Here:

- Data Mesh vs Data Fabric All in 2026

- Enterprise Data Architecture 2026 Guide

- Data Mesh Architecture Concept Summary

At Logiciel Solutions, we work with data leaders on data mesh assessment and the platform and governance layers that make distributed data work.

Explore whether data mesh fits your organization.

Frequently Asked Questions

What is data mesh?

Decentralized data product ownership with federated governance, supported by a platform layer that handles cross-cutting concerns.

Is data mesh the right answer for every organization?

No. It is for organizations that have outgrown centralized data teams. Smaller organizations should stay centralized.

What does the platform layer do?

Ingestion and storage scaffolding, observability, self-serve onboarding, computational governance enforcement. The platform is what makes mesh practical.

How do we federate governance?

Computational policies enforced at the platform layer; cross-domain governance committee for cases that cannot be computationally enforced; quarterly tabletop exercises.

What is the biggest mistake in data mesh adoption?

Adopting the label without the underlying organizational shift. Calling teams 'data product owners' without giving them real ownership produces confusion.