Everything looks fine-until it doesn’t.

Your dashboards are running. Pipelines show “green.” Teams trust the data.

Then suddenly:

- Metrics drop

- Reports look wrong

- Models behave unpredictably

By the time anyone notices, the issue has already spread.

This is the reality for many data-driven organizations. The problem is not that pipelines fail-it’s that they fail silently.

Without observability, you don’t know when, where, or why things broke.

AI – Powered Product Development Playbook

How AI-first startups build MVPs faster, ship quicker, & impress investors without big teams.

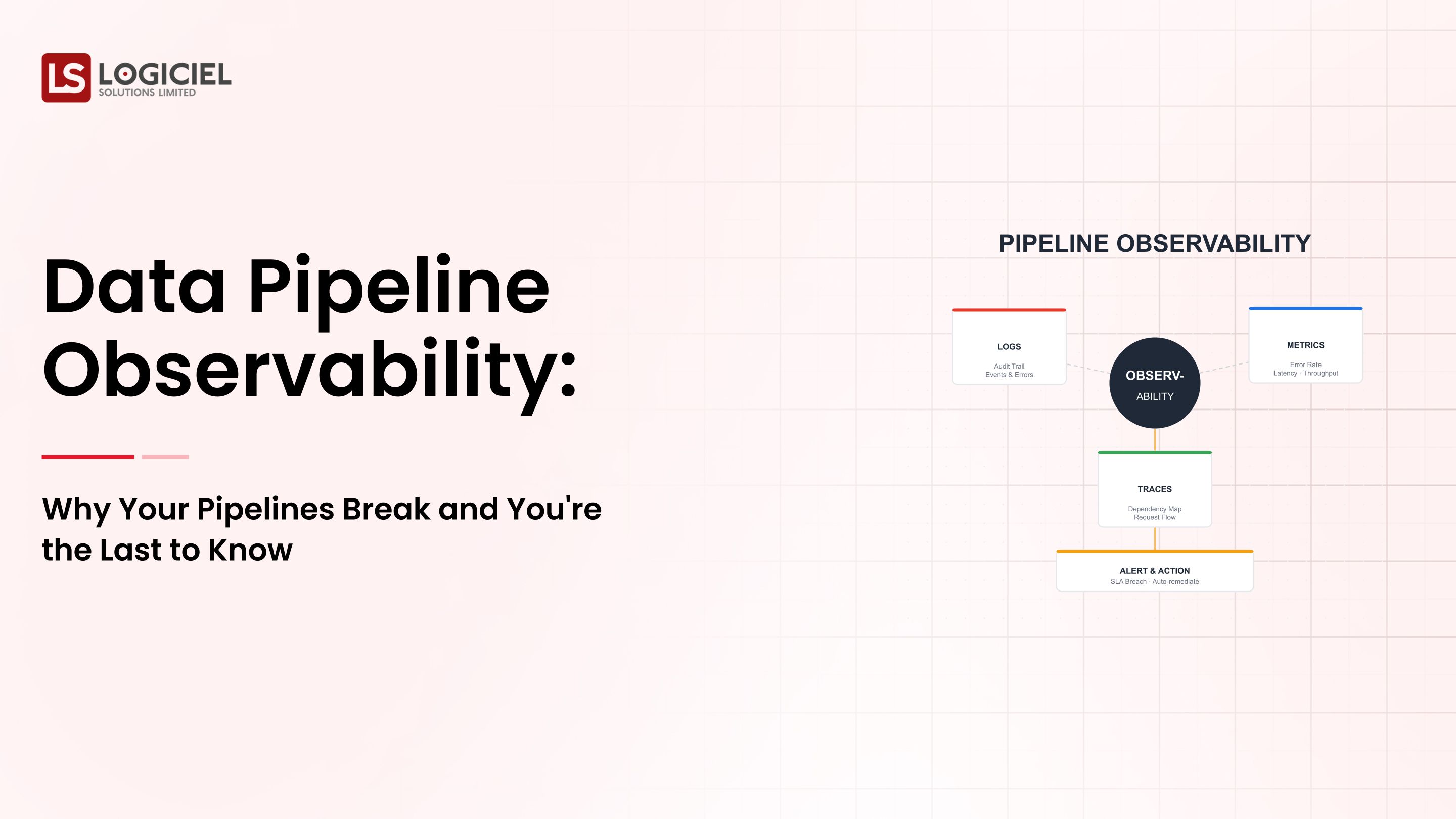

What is Data Pipeline Observability?

Data pipeline observability is the ability to:

- Track data flow across systems

- Detect anomalies in real time

- Identify root causes of failures

- Ensure data reliability end-to-end

Observability vs Traditional Monitoring

Monitoring tells you:

- Whether a job ran

- Whether it failed or succeeded

Observability tells you:

- Whether the data is correct

- Whether it is complete and fresh

- Why something went wrong

Key takeaway: Monitoring shows status. Observability explains behavior.

Why Pipelines Fail Without Detection

1. “Successful” Pipelines Can Still Produce Bad Data

Pipelines often track execution, not data quality.

Example:

- Records dropped

- Incorrect formats

The system reports success, but the business sees failure.

2. No End-to-End Visibility

Modern pipelines span:

- Multiple tools

- Multiple teams

- Multiple environments

There is no single source of truth.

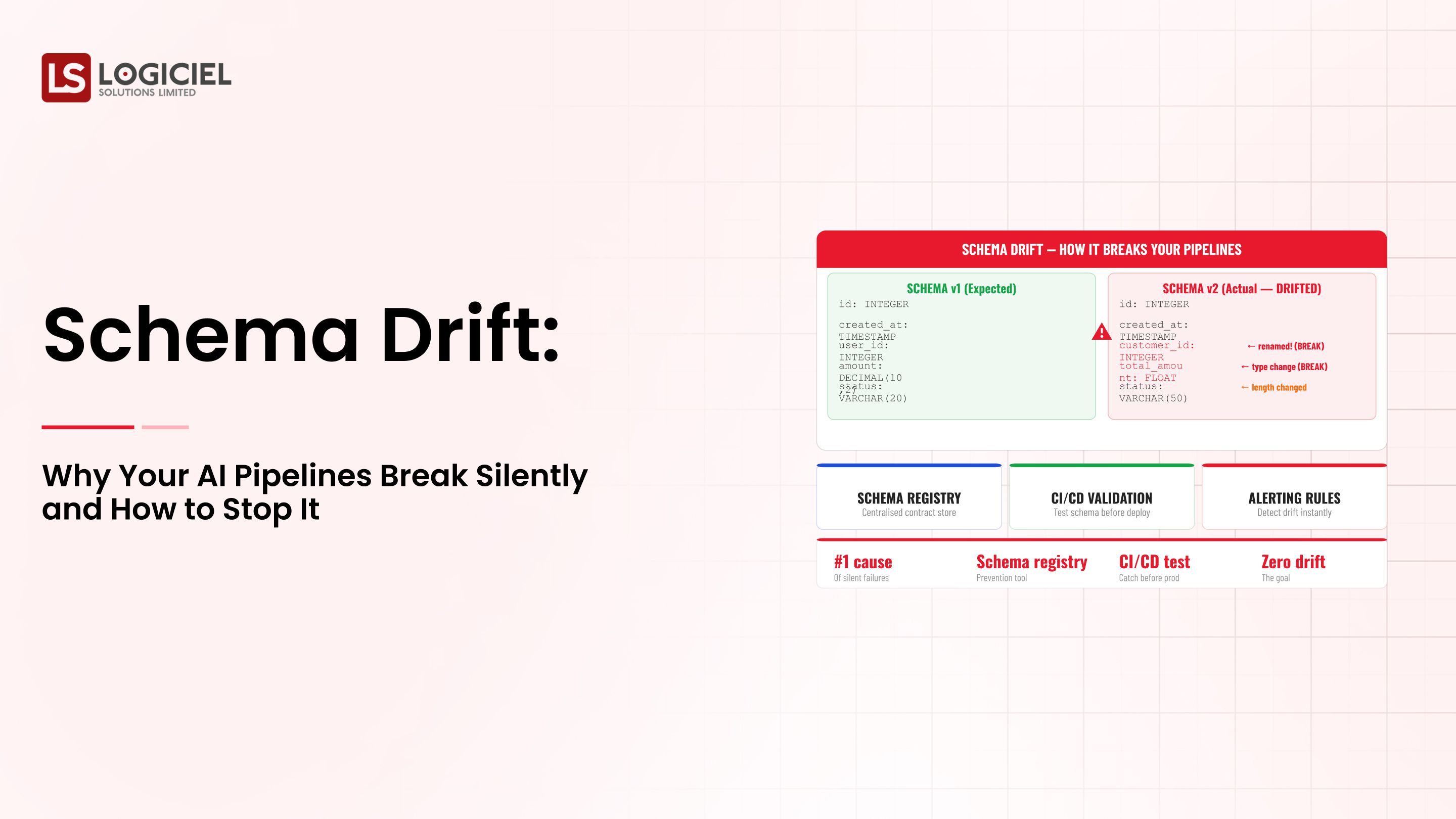

3. Schema Drift

Data changes over time:

- Fields change

- Types evolve

- Structures break

Without controls, this leads to silent failures.

4. Delayed Feedback Loops

Issues are detected:

- Hours later

- Days later

This increases cost and reduces trust.

5. Alert Fatigue

Too many alerts lead to:

- Ignored warnings

- Missed critical issues

Key takeaway: The biggest risk is not failure-it’s undetected failure.

The Hidden Cost of Poor Observability

1. Poor Business Decisions

Incorrect data leads to flawed strategies and lost revenue.

2. Reduced Engineering Productivity

Teams spend time debugging instead of building.

3. Loss of Data Trust

Teams stop relying on data and revert to manual processes.

Key takeaway: Observability is a business requirement, not just a technical feature.

Core Components of Observability

1. Data Quality Monitoring

Track:

- Missing values

- Anomalies

- Unexpected changes

2. Data Lineage

Understand:

- Where data comes from

- How it changes across pipelines

3. Pipeline Health Monitoring

Monitor:

- Latency

- Throughput

- Failures

4. Schema Management

Control schema changes and ensure compatibility.

5. Alerting and Incident Management

Send the right alerts to the right teams at the right time.

Key takeaway: Observability requires multiple layers working together.

Choosing the Right Platform

Modern observability relies on cloud-native systems:

- AWS: Scalable and flexible

- Google Cloud: Strong analytics capabilities

- Azure: Enterprise-grade governance

Key considerations:

- Integration with pipelines

- Real-time monitoring

- Scalability

Key takeaway: Implementation matters more than platform choice.

Cost vs Value of Observability

Costs include:

- Storage

- Monitoring tools

- Compute

Optimization strategies:

- Tiered storage

- Focus on critical pipelines

- Automate anomaly detection

Key takeaway: Poor data costs more than observability.

Security in Observability Systems

Observability systems handle sensitive data.

Best practices:

- Mask sensitive data

- Enforce access control

- Encrypt data in transit and at rest

Real-Time Observability

Real-time systems improve:

- Detection speed

- Response time

- Data accuracy

Key factors:

- Low latency

- Scalability

- Integration

Key takeaway: Faster detection leads to faster recovery.

The Role of Automation

Benefits:

- Faster issue detection

- Reduced manual effort

- Consistent monitoring

What to automate:

- Pipeline deployment (CI/CD)

- Anomaly detection

- Incident response

Key takeaway: Automation shifts teams from reactive to proactive.

AI in Observability

Modern tools use AI for:

- Anomaly detection

- Root cause analysis

- Predictive monitoring

Trade-offs:

- Higher cost

- Increased complexity

Key takeaway: AI improves speed but requires strong governance.

Architecture Impact on Observability

Data Warehouse:

- Easier to monitor

- Structured data

Lakehouse:

- More flexible

- Harder to monitor

Key takeaway: Choose architecture based on observability needs, not just scale.

How to Choose Observability Tools

Key criteria:

- End-to-end visibility

- Anomaly detection

- Integration capabilities

- Ease of use

Case Example: Silent Pipeline Failure

Problem:

An e-commerce company relies on real-time dashboards.

A schema change causes data loss-but the pipeline reports success.

Impact:

- Incorrect revenue reporting

- Poor business decisions

Solution:

- Implement observability

- Add schema validation

- Enable real-time alerts

Result:

- Faster issue detection

- Reduced business impact

- Increased trust in data

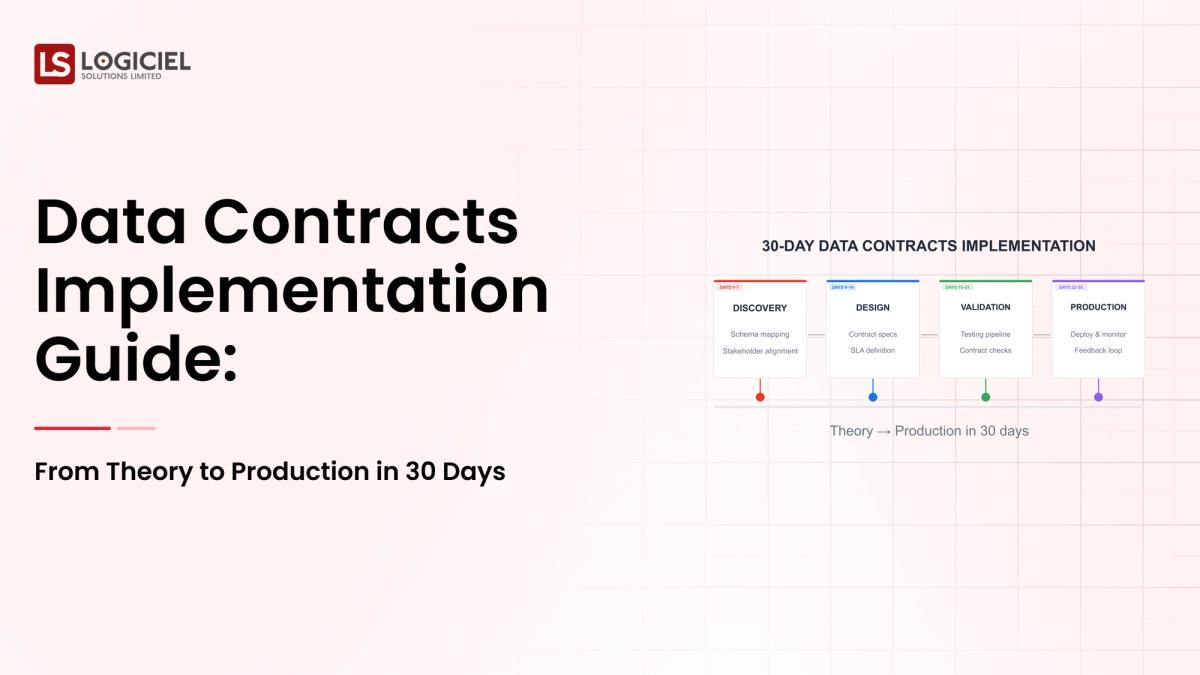

Future of Data Pipeline Observability

- AI-driven observability

- Unified data platforms

- Data contracts

- Self-healing systems

Key takeaway: Observability will become a core layer of data infrastructure.

Conclusion

Pipelines will fail. That’s inevitable.

What matters is whether you can detect and respond before the failure impacts the business.

Modern data teams must go beyond pipelines and dashboards. They must deliver:

- Visibility

- Trust

- Actionable insights

Logiciel POV

At Logiciel Solutions, we help organizations build AI-first observability systems that provide full visibility into data pipelines and prevent silent failures.

Our engineering teams design systems that ensure data reliability, reduce downtime, and enable confident decision-making at scale.

Discover how we can help you build observable, reliable data infrastructure.

AI Velocity Blueprint

Measure and multiply engineering velocity using AI-powered diagnostics and sprint-aligned teams.

Frequently Asked Questions

What is observability in data pipelines?

It is the ability to monitor data quality, detect anomalies, and understand pipeline behavior.

Why do pipelines fail silently?

Because systems track execution, not data correctness.

What is data infrastructure management?

Managing systems that move, store, and monitor data across pipelines.

How does observability improve data quality?

It detects issues early and ensures reliable data delivery.

What are observability tools?

Tools that track data flow, lineage, and anomalies across systems.